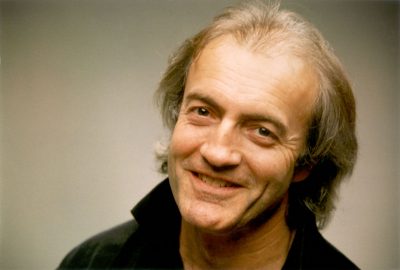

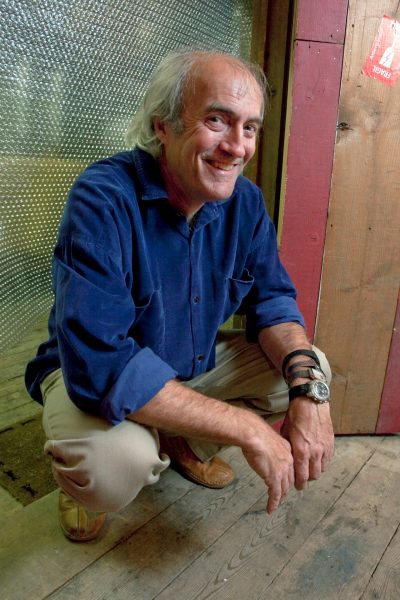

Bill Buxton has a rich history in human-computer interaction and computer graphics. Now a Principal Researcher at Microsoft Research, Buxton previously worked as Chief Scientist at Alias|Wavefront and was a leading contributor to both Maya and Sketchbook Pro. He’ll also be presenting this year at SIGGRAPH Asia, which is being held in Hong Kong from 12-15 December. fxguide had a wide-ranging conversation with Buxton about the relationship between computer graphics and interaction, his work on Maya and Sketchbook Pro, and the future of interactive techniques.

fxg: You’re speaking at SIGGRAPH Asia about interaction and graphics, and about how the emphasis on graphics has become more pronounced at the conference over the years. How did you come to that view?

Buxton: Well, I have a funny background, in that I’ve worked a great deal in computer graphics, but I’m actually not a graphics person. My specialty is the input side – getting things into the computer. The full name of SIGGRAPH is actually the ACM Special Interest Group on Computer Graphics and Interactive Techniques, and you can’t have interaction if all you have is a display feeding you images through pixels. Interactive imagery depends as much on how you manipulate things as on how you see the things being manipulated.

I think in the 80s the graphics side and the input side were equally represented at SIGGRAPH, and that’s changed over the years, so that now it’s probably far more about the graphical side. So at SIGGRAPH Asia I’m going to talk about the relationship between the input side and the output side, and look at some of the historical work from 1969 and even earlier.

fxg: What are some of these examples you’ll be showing?

Buxton: They range from the things we have now with game controllers and consoles all the way back to the trackball, which dates back to 1952. We think touch is a fancy-dancy new thing, but touchscreens have been used in commercial systems since 1965, and they were used in air traffic control systems in the UK in the 60s. They were also used with plasma screens, and by 1972 they were already in classrooms throughout the state of Illinois.

Also, multi-touch, which is the fancy thing on smartphones today, dates back to 1984. Even in computer graphics, a lot of the gestural techniques, where the gesture of a stroke controls an action, were demonstrated as early as 1969. I uncovered a film from that time of a CAD system that had radial menus. We also have some nice examples in a film from Lincoln Labs at MIT that I had digitized. It used early digitizing tablets and a stylus, and you could draw not just the characters being animated, and not just the paths the animated objects would follow, but you could even capture motion from the stroke itself. If I drew a ball and then drew a path for the ball to follow, the speed at which the ball moved along that path would directly match the dynamics of how I drew the curve. The strange thing is that these things aren’t available today, even in high-end graphics systems, yet they were already being demonstrated in 1968/69 on machines like the TX-2 at MIT.

– Above: The GENESYS system built by Ron Baecker at MIT’s Lincoln Labs as part of his 1969 PhD thesis. More videos are available on Bill Buxton’s YouTube channel.
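The GENESYS idea Buxton describes, where an object replays a drawn path at the speed the path was drawn, can be illustrated with a short sketch. This is not Baecker’s implementation: it is a minimal Python illustration that assumes the stylus stroke is captured as timestamped (time, x, y) samples, and the names `position_at` and `stroke` are hypothetical. Replaying the motion means looking up where the pen was at each moment and interpolating linearly between samples.

```python
from bisect import bisect_right


def position_at(stroke, t):
    """Return the (x, y) position along a recorded stroke at time t.

    stroke: list of (time, x, y) samples sorted by time, as captured from
    a stylus (hypothetical format). Because the samples keep their original
    timestamps, the object moves fast where the pen moved fast and slowly
    where the pen moved slowly.
    """
    # Before the stroke starts or after it ends, hold the end positions.
    if t <= stroke[0][0]:
        return stroke[0][1], stroke[0][2]
    if t >= stroke[-1][0]:
        return stroke[-1][1], stroke[-1][2]
    times = [sample[0] for sample in stroke]
    # Find the pair of samples that bracket t: times[i-1] <= t < times[i].
    i = bisect_right(times, t)
    t0, x0, y0 = stroke[i - 1]
    t1, x1, y1 = stroke[i]
    # Linear interpolation between the two bracketing samples.
    u = (t - t0) / (t1 - t0)
    return x0 + u * (x1 - x0), y0 + u * (y1 - y0)


# A stroke drawn quickly for its first 10 units and slowly for the next 10.
stroke = [(0.0, 0.0, 0.0), (1.0, 10.0, 0.0), (3.0, 20.0, 0.0)]
for frame in range(4):
    print(frame, position_at(stroke, float(frame)))
```

In this example the ball covers 10 units in the first second but only 5 units per second afterwards, mirroring how the curve was drawn.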

fxg: Given that some of these technologies have been around for a long time, and some still aren’t being used, what does that mean for their future use in computer graphics?

Buxton: Well, I’ve published a series of pieces on what I call ‘the long nose of innovation’. For any of these inventions, there are at least 20 years of development from first inception before they become a billion-dollar industry. The mouse took 30 years, for example. The notion that things take a long time is important, because it also changes our notion of what innovation is. From my perspective, it tells us that innovation is far less about inventing something brilliant out of the blue and more about prospecting: finding things that are right there in front of you, and refining them.

fxg: Where might that fit into the computer graphics and visual effects fields?

Buxton: I’m not sure just yet. I was the chief scientist at Alias for eight and a half years. I started a research group there, and my group did all of the interfaces for Maya; we shared a Scientific and Technical Academy Award for Maya. If you use the marking menus in Maya, they come directly from the 1969 work I mentioned, the system from Great Britain that used radial menus. There’s also Sketchbook Pro, now from Autodesk, which my team worked on at Alias. The marks and gestures it uses to make selections and trigger actions were mined from old work, brought up to date and put in a new context. So you get a very modern product based on older ideas.

As we move forward, we can now render almost anything: we have large data sets, spectacular GPUs, excellent algorithms and real-time computational fluid dynamics. What we learn from that is that the real value of being able to do such renderings quickly, with the back-end analysis behind them, lies in the question: ‘How do I explore that space to start to see the relationships?’ From that side of things, interaction becomes fundamental to reaping the potential value that the graphics brings.

I’d argue that the same thing is true of animation. Now that we can put complex 3D models on the screen and render them dynamically while grabbing IK handles and so on, you can ask yourself: ‘What’s the difference between animation and manipulation?’ I think the difference is that you now have a record pedal. You can start to do desktop motion capture and, taking a cue from what we did earlier in music, you can lay down tracks and refine the motion, grabbing the handles with whatever manipulation device is appropriate, and do desktop puppeteering. Rig the model, rough in the motion, refine it and just layer it.

I mocked all this kind of stuff up back in 1990 when we were designing Maya, but couldn’t get it into the software. I failed in that, and it’s actually one of my bigger regrets. I’ve got videotapes that show this stuff. The idea was that you can start to do performance animation without requiring a huge motion capture stage. If you want to wag the tail of a dinosaur, you just grab the tail and move it.
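Buxton’s ‘record pedal’ idea, roughing in a pass and then refining it in further passes the way a musician layers tracks, can be sketched as follows. This is a hypothetical illustration, not the Maya prototype he describes: it assumes each pass captures one animation channel (say, a tail joint’s angle) as a frame-to-value mapping, and that refinement passes are additive offsets on top of the base take. The names `record_take` and `layer_takes` are invented for the example.

```python
def record_take(sample_handle, frames):
    """Capture one pass while the 'record pedal' is held down.

    sample_handle(frame) stands in for reading the handle the animator is
    manipulating (hypothetical); the result maps frame -> value.
    """
    return {frame: sample_handle(frame) for frame in frames}


def layer_takes(base, *refinements):
    """Combine a base take with additive refinement takes, frame by frame.

    Frames absent from a refinement are left unchanged; frames absent from
    the base are treated as starting from 0.0.
    """
    result = dict(base)
    for take in refinements:
        for frame, delta in take.items():
            result[frame] = result.get(frame, 0.0) + delta
    return result


# Rough in a tail wag: the handle sweeps 10 degrees per frame.
base = record_take(lambda f: 10.0 * f, range(3))
# A second pass nudges only frame 1 by 2.5 degrees.
tweak = record_take(lambda f: 2.5 if f == 1 else 0.0, range(3))
print(layer_takes(base, tweak))
```

Additive layering is only one possible choice; a refinement pass could instead replace the base values over the frames it covers.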

fxg: What in your view is the current state of play with input devices?

Buxton: I remember at SIGGRAPH in 1982 I photographed every input device I could find on the show floor. To a large extent, there’s less variety and diversity now than there was in 1982. Everything has sort of congealed around the mouse or the tablet. You might now have a multi-touch screen or tablet, but the range is greatly impoverished, and I think that will change.

Where we use graphics, and especially interactive graphics, is going to change dramatically, because high-end displays with high-end display processors are appearing in a variety of new form factors. They range from large displays embedded in our environments, from our cars, homes and offices to billboards, to smaller ones in the things we wear and carry with us. The opportunities for computer graphics are going to go way beyond the video games, flight simulators, medical visualization, entertainment and computer-aided design that have dominated the field over the last 20 years. The space is opening up.

fxg: Can or should SIGGRAPH be a place for discussion about interaction and graphics again?

Buxton: What really happened with SIGGRAPH was that the interaction side kind of forked off and went to a separate conference, namely SIGCHI, the human-computer interaction community. So the input community kind of abandoned SIGGRAPH. My claim is that we will never get to where we need to be as long as we keep input and output as separate disciplines. For example, the work of Jeff Han at Perceptive Pixel and what we’ve done with Microsoft Surface show what happens when you couple pretty good graphics engines and knowledge of computer graphics with knowledge of novel input devices. And it’s significant to remember that the light pen, the mouse, the graphics tablet and touch screens all grew out of computer graphics labs, as did all the single-stroke shorthand techniques.

When I was hired at Alias to join the research group, four of the five people in the group were doctoral-level researchers, but only one of them was a graphics person. We had lots of enormously talented graphics people in the company. The way we were going to differentiate ourselves from Softimage and 3D Studio Max at the time was to make the interaction better. We made those marking menus, which gave the Alias products a huge advantage over those of the competitors. And no one succeeded in copying them, because they just didn’t understand them. There were attempts to copy the gesture menus and the hotbox in Maya, but they just didn’t get what made them work; they were really bad copies.

fxg: Can you tell me a little about the development of Sketchbook at Alias as well?

Buxton: When we started that product, there was no team and no idea what we were going to do, but we got a green light. I said there was a different way to do a product: I wanted to design it the same way you design something in industrial design. I knew that world because a large part of Alias’ business was with industrial design firms. So I put the team together on the 1st of July and the product was shipping on November 7th the same year. It was a virtual team, so not everyone was full time, but it was the equivalent of five full-time heads.

fxg: Does it interest you that Sketchbook Pro can now be used with finger gestures rather than a stylus, and has become such an incredibly popular app?

Buxton: When you’ve got a tool that has potential and captures the imagination, it always amazes me what hoops people are willing to jump through in order to do something interesting. Having said that, if people want to use touch on a program like Sketchbook Pro, that’s a testament to their talent, initiative and willingness to work hard and develop that technique. But I also think, why on God’s earth aren’t they using a stylus? As well as touch, not instead of it. I mean, Picasso finger-painted too sometimes.

I demonstrated a system a couple of years ago called Gustav, where I have a really nice stylus in one hand for painting. While holding the stylus, I can bring in my finger to smudge the paint, then come back with the brush and add more. But I can also hold the page and rotate and scale it, doing pinch-and-zoom kinds of things, with my other hand. That use of both hands is really fluent.

– Above: Excerpt of Bill Buxton’s MIX10 Keynote, where he demonstrates Project Gustav (developed at Microsoft Research).

I would argue that everything is best for something and worst for something else. I actually published the first paper on multi-touch in the literature in 1985, so I’m not against multi-touch – I helped invent it, and I’ve been doing it for over 25 years. But I do believe there are things that multi-touch is not the best solution for. Thin lines, for example, are really hard to do with a finger; there’s a reason we use a paint brush. But the space is still very exciting and still opening up. I think this is the most exciting time in the industry in over 30 years.