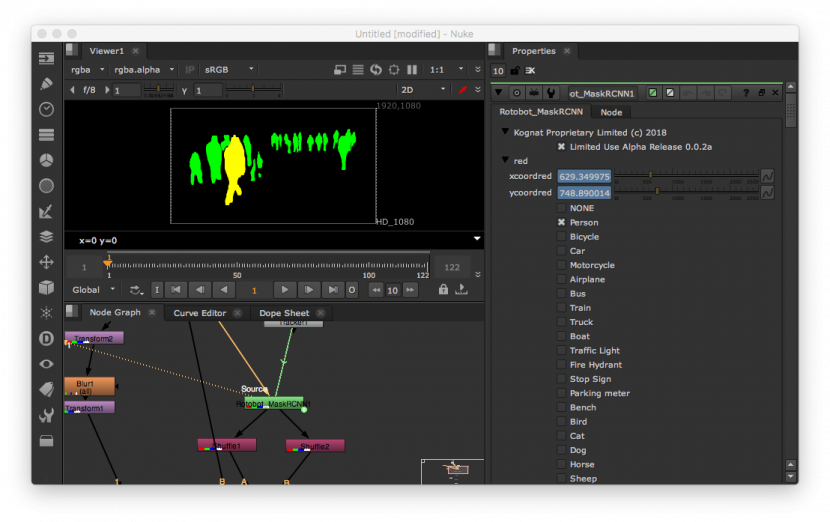

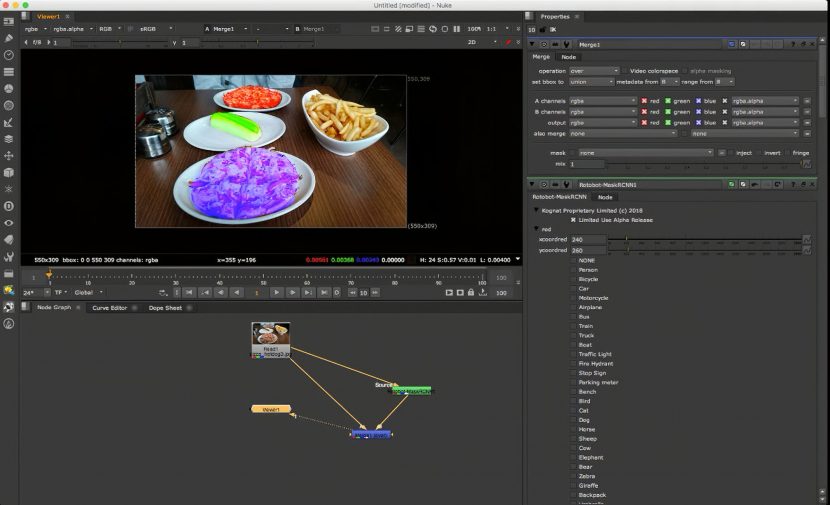

Rotobot is the first AI product for compositing packages that uses machine learning to generate mattes. Kognat Software have designed it as a plugin for Nuke, and while Rotobot’s results are still only rough, it points the way to machine-based automatic rotoscoping.

Rotobot is able to isolate instances of pixels that belong to ‘semantic’ classes of objects such as people, cars, etc. This means that by analysing an image with a recurrent convolutional neural network (CNN), the pixels that belong to one of 81 categories can be isolated. Up to 100 instances of each of these categories can be isolated at a time. This is useful to VFX as it provides a fast way to generate quick and dirty hold out mattes.
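To make the idea concrete, here is a minimal sketch of how per-instance masks could be merged into a single hold out matte for one class. It assumes the segmentation step yields a list of detections, each with a class label, a confidence score and a binary mask. That layout is typical of instance segmentation models, but it is an assumption here and not Rotobot's documented output. The names `Detection` and `holdout_matte`, and the `min_score` threshold, are hypothetical.

```python
from dataclasses import dataclass
from typing import List

Mask = List[List[int]]  # rows of 0/1 pixel values


@dataclass
class Detection:
    label: str    # semantic class, e.g. "person" or "car"
    score: float  # model confidence in [0, 1]
    mask: Mask    # binary mask with the same size as the frame


def holdout_matte(detections: List[Detection], label: str,
                  height: int, width: int,
                  min_score: float = 0.5,
                  max_instances: int = 100) -> Mask:
    """Union the masks of up to `max_instances` detections of one class."""
    matte = [[0] * width for _ in range(height)]
    # Keep the most confident instances of the requested class.
    chosen = sorted(
        (d for d in detections if d.label == label and d.score >= min_score),
        key=lambda d: d.score,
        reverse=True,
    )[:max_instances]
    for det in chosen:
        for y in range(height):
            for x in range(width):
                if det.mask[y][x]:
                    matte[y][x] = 1
    return matte


# Toy 2x3 frame with two people and one car (illustrative data only).
frame_detections = [
    Detection("person", 0.9, [[1, 0, 0], [1, 0, 0]]),
    Detection("person", 0.8, [[0, 0, 1], [0, 0, 0]]),
    Detection("car", 0.95, [[0, 1, 0], [0, 1, 0]]),
]
person_matte = holdout_matte(frame_detections, "person", height=2, width=3)
car_matte = holdout_matte(frame_detections, "car", height=2, width=3)
```

In this toy case the two person masks are merged into one matte and the car is left out, which is the behaviour a compositor would want from a per-class hold out.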

While the results are not yet good enough for final roto use, there are many situations that require a quick hold out matte. Normally, this would be done by performing quick roto manually or farming out the shot(s) offshore. But even using specialist houses in developing economies, where artists are only paid low wages, there is significant cost and time involved. “Even if you could find artists who only charged out a hundred US$ per day, one second of footage can take 24 minutes to process, meaning that in one day, an artist can process 20 seconds of footage,” commented Sam Hodge, founder of Kognat Software. “Thus, a few people in shot for twenty seconds would have a minimum turn around time of a day, costing a few hundred dollars and requiring a couple of trained artists to work concurrently. Plus there is the cost of production software such as Silhouette or something similar.”
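The arithmetic behind Hodge's figures can be checked with a short calculation. This sketch uses the quoted rate of 24 minutes of work per second of footage and the quoted day rate of US$100. The eight hour working day, and the idea that each person in shot needs a separate roto pass at that rate, are assumptions made here to reproduce his numbers. The function names are hypothetical.

```python
MINUTES_PER_FOOTAGE_SECOND = 24  # from the quote above
DAY_RATE_USD = 100               # from the quote above
WORKING_HOURS_PER_DAY = 8        # assumption: a standard working day


def seconds_of_footage_per_day(
        minutes_per_footage_second: float = MINUTES_PER_FOOTAGE_SECOND,
        working_hours: float = WORKING_HOURS_PER_DAY) -> float:
    """Seconds of footage one artist can roto in a working day."""
    return working_hours * 60 / minutes_per_footage_second


def manual_roto_cost(shot_seconds: float, elements: int,
                     day_rate_usd: float = DAY_RATE_USD):
    """Return (artist_days, cost_usd), assuming one roto pass per element."""
    artist_days = shot_seconds * elements / seconds_of_footage_per_day()
    return artist_days, artist_days * day_rate_usd


per_day = seconds_of_footage_per_day()                 # 20.0 seconds
artist_days, cost_usd = manual_roto_cost(20, elements=3)  # 3.0 days, 300.0 USD
```

Under these assumptions, three people in a twenty second shot come to three artist-days, or about US$300. Finishing within one calendar day means several artists working in parallel, in line with the quote.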

While there is great demand for high quality roto, Hodge points out that there is also a need for quick and simple roto, known as ‘bash roto’. This work allows artists to check or visualise work before committing to more costly and time consuming high quality roto. Bash roto is often used in ‘slap’ composites, which allow artists and supervisors alike to explore ideas and test approaches. These constructs are typically internal and are not shown outside of the VFX facility. “The cost of a bash roto can be as much as forty percent of the total cost of the final roto,” explains Hodge. As the bash roto often cannot be reused as the foundation for the finished masks, it is often considered throw away work. “The cost of bash roto for a typical VFX shot can be two to sixty hours of labour depending on the length and complexity of the shot and number of elements that need masks,” he adds.

Importantly, it is this time delay, as much as the dollar cost, that Rotobot aims to address. By its very nature, bash roto for slap comps needs to be turned around quickly, so automating it can represent a great saving. Turnaround time can be condensed from 24 hours to just a few minutes on a sufficiently scaled render farm. This allows downstream artists to start their tasks earlier, and more work can proceed in parallel.

The OpenFX Rotobot plugin can process footage 24 hours a day without breaks, and it is limited only by the number of licenses and hardware resources available. Rotobot can normally process a frame in as little as 12 seconds on a standard laptop (low quality setting) and can isolate up to 100 instances within a single calculation. No specialised GPU hardware is required. The software has been proven to work even on low end computers manufactured in 2007 with 2GB of RAM, albeit slowly. Rotobot will use as much CPU resource as it can, with no extra cost for multiple elements. Rotobot has been tested by a variety of professionals around the world on both Linux and macOS platforms. The license manager allows for floating licenses. Pricing is not public, but it is estimated to be under $500 a license.
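As a rough illustration of how these figures scale, the sketch below estimates turnaround for a shot when frames are split evenly across licensed machines. The 12 seconds per frame comes from the text. The 24 fps frame rate, the 48 machine farm, the assumption that every machine matches the laptop's speed, and the function name `farm_turnaround_minutes` are all assumptions for illustration. It also assumes frames can be processed independently of one another.

```python
import math


def farm_turnaround_minutes(shot_seconds: float, seconds_per_frame: float,
                            machines: int, fps: int = 24) -> float:
    """Wall-clock minutes to process a shot with frames split across machines."""
    frames = math.ceil(shot_seconds * fps)
    # The slowest machine determines the turnaround, so round up.
    frames_per_machine = math.ceil(frames / machines)
    return frames_per_machine * seconds_per_frame / 60


# A 20 second shot at the quoted low quality rate of 12 s per frame.
laptop_minutes = farm_turnaround_minutes(20, 12, machines=1)   # 96.0
farm_minutes = farm_turnaround_minutes(20, 12, machines=48)    # 2.0
```

On these assumptions a single laptop needs a little over an hour and a half for the shot, while a modest farm brings it down to a couple of minutes.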

Quality

The program is designed with different quality levels, from super fast and rough to a more refined version, but even the highest quality will only be roughly correct. The roto produced by Rotobot is not temporally stable, as it tends to flicker between frames. It produces a per frame solution, so there are no editable splines to refine or continue working with. Kognat is working to increase the fidelity of the result to a much higher standard. However, today it is aimed at slap comps only.

How it works

Kognat started on, “the 11th of November 2017. I know because that is when I posted on Facebook to see if anybody was already doing this and I have a lot of friends on Facebook, all at different studios around the world, and nobody responded, so I thought, ‘Ok let’s try this and see if it works,'” explains Hodge.

Kognat’s deep learning is based on publicly available models in Google’s TensorFlow, that have just been trained at a higher resolution than typical ‘toy’ data sets. “The computer vision research market is basically set up for toy data. So it’s often setup for just a single frame, a series of stills images typically 640 x 480,” explains Hodge. It was therefore key to make sure higher quality data sets were used for Kognat.

The backend of the product is a TensorFlow library from the Google Brain team. “I’ve experimented with other open source frameworks such as Caffe and PyTorch and MXNet and a few other things, and it turned out that TensorFlow was a good fit and fast,” outlines Hodge, who is also Lead Software Developer in Pipeline at Rising Sun Pictures in Adelaide. That background in production has made Hodge well aware of the need for speed in production workflows.

TensorFlow is a Google tool first released 3 years ago and written in C++ and Python, and is based on deep learning neural networks. It is rightly perceived to be one of the best open source libraries for Machine Learning. It is used by teams such as Google Translate, DeepMind, Uber, AirBnB, and Dropbox, according to Mateusz Opala of Netguru. TensorFlow is good for advanced projects, such as building multilayer neural networks like CNNs. It is widely used in voice/image recognition and computer vision segmentation. PyTorch, by comparison, offers a Python-first interface and is a primary competitor to TensorFlow. It was developed by Facebook and is used by Twitter, Salesforce, the University of Oxford, and many others. MXNet is an Apache deep learning framework which supports Python, C++, R, and JavaScript. It’s been used by Microsoft, Intel, and AWS (Amazon Web Services). Finally, Caffe and Caffe2 are C++ projects that also have a Python interface. While Caffe was not widely adopted, Caffe2, from Facebook, is used for mobile and large-scale deployments in production environments. It is one of the newest libraries and is currently widely used only by Facebook.

While the system has presets, it is possible to train new and custom items or even actors to automatically roto. “There’s no reason why we couldn’t do training on a particular actor. If we’re doing another say Wolverine movie or something similar…then we could potentially make a Hugh Jackman isolator. We could feed it a set of semantic masks of Hugh Jackman, and we could train the system to pick up Hugh Jackman under various lighting conditions,” explains Hodge. “I’ve got the ability to train this to whatever I like.”

At the moment Kognat is trained on large data sets that are available publicly and that define the current categories. Interestingly, Hodge points out that various facilities have large sets of shots they have done which would make excellent training data, should a major facility consider doing a sequel to a film that they had previously manually rotoscoped.

The program currently runs only on CPU, even though TensorFlow runs on both CPUs and GPUs. “I realised that most render farms are CPU based so I started there. The current estimate of a couple of minutes a frame per computer, at the highest quality, is CPU only, but once I get it running on a wider base of hardware, probably NVIDIA GPU first, it will come down to a fraction of that time. I am not willing to commit but it could be as much as a 1/20th of that time,” he explains.

Hodge is looking to hire additional developers, as at present he is the only one developing the code. On the roadmap for future work is the task of taking the pixel output and running it through a process to convert it to vector shapes, and hopefully provide temporal consistency. Of course, the whole process should improve with more training data and continued advances in Machine Learning.

Well, the author obviously kind of contradicts himself if he says at one point it is trained on a larger image dataset but at the same time says it is trained on a public dataset. Public datasets are simply smaller res, otherwise you would have to run batch sizes that are tiny and training would take forever. Also, this really smells like basically using Mask R-CNN to me.