John Berton, who worked on Men in Black and The Mummy, was overall visual effects supervisor for Charlotte’s Web. We talk to the teams at Digital Pictures Iloura, Rising Sun, Stan Winston Studio and FUEL about their involvement in the motion picture. UPDATE: VES nomination and detailed 3D passes.

Our online story this week covers both digital and practical characters created in the film. Stan Winston Studios was involved for on-set work, creating multiple models for several characters. In the digital realm most of the characters were handled by just one company. For example, Wilbur was digitally reproduced by Digital Pictures Iloura, Charlotte was handled by Rising Sun Pictures, and Charlotte’s children were by Fuel.

In addition to our written story, in this week’s podcast we talk to Digital Pictures/ Iloura about the animation and effects they did for the Wilbur character. Click on the link to the right or visit the digg podcast page to hear the interview.

***********************************************

UPDATE: Charlotte’s Web gets VES Nomination:

We asked Digital Pictures/ Iloura about the VES nomination:

“Charlotte’s Web marks a milestone in animation and visual effects for Iloura, and with the recent nomination for a VES award in the ‘Outstanding Animated Character in a Live Action Motion Picture’ category it has become even more special. Our role in the film was to create a believable full CG double for Wilbur the pig at his various stages of development, as well as Ike the horse. This enabled director Gary Winick to achieve shots beyond the realm of what the real animals were able, or willing to do. This included physical effects such as crashing through fences, fainting and splashing around in the mud as well as calmer moments where the shoot did not provide the performance desired.

The process involved much close observation, detailed sculpting, some very challenging animation as well as many technical challenges including generating skin, hair, water, mud and straw. As well as the Wilbur and Ike effects we also contributed several matte painting shots as well as general compositing effects, for example combining several takes of different animals shot separately into a single shot, making it appear as if they were all there at the same time.

We were lucky on this project to work with some of the most experienced people in the industry including Visual Effects supervisor John Berton Jr (I Robot, Men in Black, The Mummy). John was great to work with and brought a great deal of expertise and experience, as well as good humour, to the project which was fantastic. He went out of his way to make us feel at home amongst some of the biggest and best players in the industry.

We feel privileged to have worked on Charlotte’s Web, and are humbled to be nominated alongside such outstanding work as Tippett Studio’s Templeton (Charlotte’s Web), and ILM’s Davy Jones (Pirates of the Caribbean: Dead Man’s Chest).”

Now that the film is in release, we have managed to source examples of the many passes used by DP/Iloura to produce Wilbur.

***********************************************

Stan Winston Studios

On set there was a combination of live animals and animatronic puppets. Sometimes these were then combined with digital elements such as beaks or mouths. SWS provided Gully and Gussy (geese). These were done as two full hero rod puppets for each character, plus one insert head and neck for each goose. Multiple insert wings with the ability to flap/swat, and an insert body and performable feet for egg shots, were also made.

For Wilbur the pig, there were four phases of the pig at different ages. SWS provided different puppets and rigs for the different sized Wilburs:

Phase 1 : There was one full mechanical puppet

Phase 2 : Four puppets were used. One in the laying down position, one in the sit-down position, one standing hero puppet, and a sit-down stunt puppet.

Phase 3 : One standing hero puppet

Phase 4 : Had three puppets – one lay-down, one sit-down and one standing hero puppet.

In addition to this, an oversized mechanical snout was made for inserts and close-ups where Charlotte lands on Wilbur’s nose.

Rising Sun Pictures

Our discussion is with members of the team from Rising Sun Pictures: CG Supervisor Ben Paschke, Technical Director Sam Hodge, and Compositor Ben Warner, who is also one of the key people responsible for the 3D tracking done at RSP.

fxg: Can you discuss the design process for Charlotte?

Ben Paschke: We started the design process in August 2004 when we started the pitch piece for Charlotte’s Web. The design process went until almost the end of the project! We started off with many hand-drawn and photoshopped coloured sketches. Once we had some concepts that the clients were interested in we started mocking up some really rough 3d models.

The mouth really was one of the areas of highest contention. We knew that spiders’ faces aren’t built for human speech so we had to find a way to reconcile a good, solid spider design with the mechanics for speech. This was a very long process. We arrived at a design where the chelicerae (the big mandible/fangs at the front of the face) weren’t the mechanical parts making the sounds. We rationalised that she had actual mouth parts in behind the chelicerae which were articulating the speech, and the chelicerae were just reacting to the mouth moving underneath. This idea led to some pretty subdued ‘lip sync’.

Sam Hodge: The design process in preproduction explored a lot of different avenues. There was a problem trying to reach two objectives: one to keep her as a photorealistic spider, and the other to make her an endearing and lovable character that the audience can connect to. The two objectives pull you in different directions. The body and legs were fairly straightforward and taken as closely as possible from the barn spider Araneus cavaticus.

Modeling the facial features was more difficult, namely the eyes, the two fangs (chelicerae) and the little arms (pedipalps) around the mouth. There are two sets of eyes: the two major eyes, which blink and emote, and the six minor eyes, which act as eyebrows to some extent. At one stage these blinked too but it seemed weird and cartoony. The choice to have blinking was made early on so the character had a greater range of emotion, although real spiders have no eyelids. The mouth was attempted as a small humanoid mouth between the two fangs and this looked OK, but it seemed like she had a massive beard below her mouth. Then the talking was done via the fangs. They were able to be posed and rotated by a number of bones.

Other parts of the facial rig were driven by a combination of a few hundred morph targets using a combination sculpting rig and enveloping. Parts of the human anatomy were mapped to regions of the spider face, so animators were able to create human emotions on the spider face. The animation of the lip sync went through many rounds of improvement until animation supervisor Eric Leighton got the look he was after. As the fangs are covered in fur it took a leap of faith on the animators’ part to know how the final shot would look when covered in fur.

Charlotte was able to portray a huge range of emotions through the film, from being a young and sprightly spider to an expecting mother lumbered with the load of her engorged abdomen and then through to her pinnacle in the death scene, and she was able to do all this without a mouth. The challenge of creating a smile without lips made the animators use posture and other subtle features such as the eyes and “cheeks” to create the emotion. The challenge of a mouth-less character kept the animators on their toes throughout the production and enabled them to think outside the square.

fxg: Can you discuss the passes that moved from 3D to 2D – was this a multi-pass render or did 3D nail it and pass just a few mattes and passes to 2D?

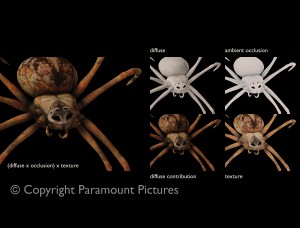

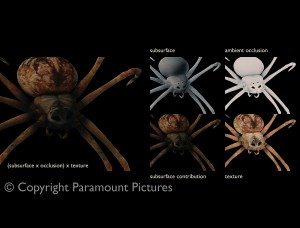

Ben Warner: At her most basic a typical Charlotte build would use 33 passes: 11 passes for the skin, 11 for the fur, 6 for the eyes and 5 for finishing features (3-head hairs for instance). However most shots required additional matte and control passes, and most composites usually had anywhere between 35 – 45 passes, and that was just for Charlotte, all at 2K float resolution. These passes meant that in comp we had the ability to control all aspects of Charlotte’s look that were needed to help integrate her into the scan plates as well as push her to meet the director’s and VFX supervisor’s vision.

Aspects in comp that could be controlled included separate fur and skin treatments, subsurface contributions, specular intensity and colour, diffuse lighting colour, occlusion control as well as general colour grading and other compositing effects. Other elements including webs and drag-lines were also multi pass renders, with typical web builds averaging anywhere between 10 – 20 passes. For the most part 3D would nail the lighting direction and colour, and the 2D department would push the grades, intensities, and contributions to make the final shot.
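The split Warner describes, where 3D supplies separate lighting passes and 2D pushes their contributions, can be illustrated with a minimal per-pixel sketch. The pass names, the gain controls and the recombination formula below are assumptions made for illustration; the interview does not describe RSP's actual comp template.

```python
# Hypothetical per-pixel recombination of lighting passes in comp.
# Pass names, gains and the formula are illustrative assumptions,
# not Rising Sun Pictures' actual template.

def rebuild_pixel(passes, gains, occlusion_mix=1.0):
    """Combine float RGB passes for one pixel.

    passes: dict of pass name -> (r, g, b) floats, plus a scalar "occlusion"
    gains: dict of pass name -> scalar gain chosen by the compositor
    occlusion_mix: 0.0 ignores the occlusion pass, 1.0 applies it fully
    """
    lit = [0.0, 0.0, 0.0]
    for name in ("diffuse", "specular", "subsurface"):
        gain = gains.get(name, 1.0)  # untouched passes keep unit gain
        for c in range(3):
            lit[c] += passes[name][c] * gain
    # Blend between "no occlusion" (1.0) and the rendered occlusion value.
    occ = 1.0 - occlusion_mix * (1.0 - passes["occlusion"])
    return tuple(v * occ for v in lit)


pixel = {
    "diffuse": (0.20, 0.15, 0.10),
    "specular": (0.05, 0.05, 0.05),
    "subsurface": (0.10, 0.04, 0.02),
    "occlusion": 0.5,
}
neutral = rebuild_pixel(pixel, {})                   # passes summed as rendered
hot_spec = rebuild_pixel(pixel, {"specular": 2.0})   # compositor doubles the spec
```

Because each contribution stays separate until this final sum, a note such as "more specular" becomes a gain change in comp rather than a re-render.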

Sam Hodge: The number of passes that Charlotte produced without any effect topped out at about 42 passes, and this was after a number of mattes were packed into the RGB channels of one pass. Of these about 16 were affected by lighting and the remainder were utility and matte passes. Of note were subsurface scattering, ambient occlusion and HDR environmental lookup; these all added to the visual richness of the final render. This was a choice to spend the time tweaking the look in comp rather than tweaking the look via shader parameters. It gave the compositors a lot of room to adjust things. The majority of these passes were rendered as float for greater accuracy.

The passes were built together into a template and then adjusted from there. There were two main parts to the build: the subsurface look that gave the spider a glowing look, and the ambient look, which was split again into furry and bald spider. These could be adjusted by the compositor to suit the conditions of the lighting and the direction of the client. Of course there was a large part of the compositing effort put into getting the eyes looking as powerful as they do. In hindsight there may have been more passes than were actually used, but it seemed easier to produce extra data and throw it away rather than having to go back to 3d to ask for more.
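Packing several mattes into the RGB channels of a single pass, as Hodge mentions, is a common way to keep the pass count down. The sketch below shows the general idea with plain Python lists; the matte names and the three-per-image grouping are assumptions for illustration, not RSP's actual layout.

```python
# Hypothetical sketch: pack single-channel mattes into RGB images, three per image.
# Matte names are invented for illustration.

def pack_mattes(mattes):
    """mattes: dict of name -> list of float values (one per pixel).

    Returns a list of (names, pixels), where pixels is a list of (r, g, b) tuples.
    """
    names = sorted(mattes)
    packed = []
    for i in range(0, len(names), 3):
        group = names[i:i + 3]
        n_pixels = len(mattes[group[0]])
        # Unused channels in the last group are filled with 0.0.
        channels = [mattes[n] for n in group] + [[0.0] * n_pixels] * (3 - len(group))
        packed.append((group, list(zip(*channels))))
    return packed


def unpack_matte(packed, name):
    """Recover one matte by reading its channel back out of the packed image."""
    for group, pixels in packed:
        if name in group:
            idx = group.index(name)
            return [p[idx] for p in pixels]
    raise KeyError(name)


mattes = {
    "eyes": [1.0, 0.0],
    "fangs": [0.0, 1.0],
    "abdomen": [0.5, 0.5],
    "legs": [0.25, 0.75],
}
packed = pack_mattes(mattes)          # four mattes fit in two RGB images
legs = unpack_matte(packed, "legs")
```

In a compositing package the unpack step is usually just a channel reorder, so the saving in files and render passes costs very little at comp time.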

fxg: Can you discuss the web – I assume the spec highlights were the key?

Ben Warner: The final look and play of light on webs was controlled completely in 2D. Compositors were given a number of noise, control and specular maps in which a combination of some or all could be brought together and the final web look achieved. The key to webs was the play of light and specular contributions, with regard to the diffuse and web thickness. The breakup and intensity of the speculars not only allowed the movement of the web to play through the light, but also defined the age of the web. The cleaner the threads, the newer the web, but with time and age the webs would become more diffuse, less specular and in many cases less fine and perfect. Having the ability to control all these aspects in 2D allowed both artists and supervisors to quickly achieve web looks that were believable, but also direct-able (for instance the glint of light highlighting words in the web at the correct time).

Sam Hodge: The web was a big challenge. There are many aspects to it beyond just the look. First of all we had to design the layout of the webs, one web for each word. Once the webs were built a number of properties were applied to them to define how Charlotte would interact with the web, and how the web would react dynamically to wind and the impact of Charlotte and other insects. An entire dynamics system for the web was written in house by TD Dan Wills that dealt with the motion of the web.

Once the model and motion of the web were complete, the shading and lighting of the web made use of the auxiliary data tags put into the web: thickness, distress, word, construction, gluey. The placement of the spec was also done via light, but a lot of the specular highlights could only be resolved in comp, by putting together a number of noise and ID passes to bring out the most of the twinkling nature of the web. Because of the thin nature of a silken strand the web was over-sampled heavily so that the renders wouldn’t twinkle more than we needed. On top of that we needed to add dew that would swing and slide on top of the web, all of these details

fxg: Can you discuss the hair on Charlotte – how were they done – how were they rendered – did they pose any special considerations?

Ben Paschke: Charlotte’s hair was created and groomed in XSI. The hair was exported to an intermediate database file format for storage and fast parallel reading. A RenderMan DSO then loaded the hairs from the file and converted them to RenderMan curves. Hair is a very difficult thing. We developed the shaders and grooming and technical logistics all while we were trying to lock down the style of the hair with the client.

A big consideration was that Charlotte is actually pretty small. Most of the 3d hair you see in films is on relatively large animals. We found we could really sell the size of Charlotte by paying close attention to how small, fine hairs shade and how they need to be lit.

Sam Hodge: The grooming of the hair was done out of the box using the fur in XSI, thanks to our chief fluffer Steve Evans. But because we were rendering with the RenderMan-compliant renderer 3delight, we needed to get the data out of XSI in a form that was compatible with the renderer. Matt Daw and Moritz Moller tried a number of approaches before settling on a system that minimized the memory footprint of the hair, leaving more memory free to be used for the shading of Charlotte.

The amount of hair on Charlotte required a half hour pre-calculation of the hair per frame, but this data could then be reused during lighting. The hair archives produced a sizable amount of data to be managed and wrangled. The hair also produced about a dozen hair specific passes. Because of the hair, deep shadow maps needed to be used to get the best look, and this had an effect on the size and generation time of shadow maps. We also broke the comp out into a skin and fur tree, and using 3delight’s exclusive pass feature we were able to render the skin behind the fur in one render. This was a real life saver rather than having to run the same render twice.

fxg: In many shots you were needing to indicate scale – was there much consideration to the blocking of the shots to indicate scale?

Ben Paschke: We would generally start laying out the shots with some kind of nearly realistic lens choice, but ultimately, these sequences are almost fantasy. They are kind of like dream sequences where the viewer enters a magical world for a moment. So we ended up moving off physically accurate lenses if the shot warranted it.

Sam Hodge: We never really needed to cheat the lenses that much to indicate scale, this was mostly done from how the plates were shot, there is usually reference from what Charlotte is standing on or the scale of the other animals in the barn. But indicators of scale were present, dust moving in the background, interacting with tiny pebbles, dirt and strands of hay, all of these details helped sell the scale of the object.

fxg: In a couple of shots with real actors Charlotte needed to still stand out (such as leaving for the fair). How did you help her stand out? Did the scale to the set always remain the same or was she cheated in size at all ?

Sam Hodge: Charlotte’s motion helps her stand out in a wide shot. If the shot is wide enough for her to be lost she is usually scampering around in a very instinctual spidery way. Her scale did vary a little from shot to shot. Sometimes there wasn’t enough survey information to do it numerically so it was eyeballed, but all within a fair tolerance. It’s also a bit tricky to judge as Wilbur the pig is growing but Charlotte’s size remains the same.

fxg: Did you use visual (video tape) reference of Julia Roberts’ performance at all to get the performance for Charlotte? Such as head tilts etc?

Ben Paschke: We had video reference for every bit of Julia’s ADR sessions, which was an invaluable source of inspiration and ideas. We could use them as solid directions for starting a shot, or we could just examine the fine nuances to help finish and polish a shot.

fxg: What resolution did you render at?

Ben Warner: Part of the Charlotte pipeline was understanding and allowing for lens distortion. Because we wanted to retain all lens distortion in the scan plates we needed to have the ability to distort the 3d renders to match. We used a Shake plugin called hype and were able to match the distortion of the scan plates onto the clean undistorted 3d plates. However, many times adding this distortion to the 3d would result in the edges being pulled in… in effect the lens distortion would actually capture additional image data that is outside the traditional 2048×1556 render size. To allow for this all the renders from 3D were in essence “over-scanned”, and instead of 2D receiving 2048×1556 renders, 2D was delivered 2196×1668 renders from 3D so that the distortion effects could be added. This was dubbed “pixel padded 2K” and with hype for Shake allowed believable real world lens distortion to be applied to the final 3D renders.
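The "pixel padded 2K" figures quoted above work out to roughly 7% of overscan on each axis. A short sketch of the arithmetic follows, assuming the padding is split evenly on all sides; the interview does not say how the extra pixels were distributed, and the function names are illustrative.

```python
# Arithmetic for the overscan figures quoted in the interview.
# Assumption: the padding is centred, i.e. split evenly left/right and top/bottom.

def overscan_padding(full, padded):
    """Return (horizontal, vertical) padding in pixels per side."""
    (fw, fh), (pw, ph) = full, padded
    return (pw - fw) // 2, (ph - fh) // 2


def crop_window(full, padded):
    """Return (x0, y0, x1, y1) of the full-aperture region inside the padded render."""
    pad_x, pad_y = overscan_padding(full, padded)
    return pad_x, pad_y, pad_x + full[0], pad_y + full[1]


FULL_AP = (2048, 1556)   # traditional 2K full-aperture render size
PADDED = (2196, 1668)    # "pixel padded 2K" delivered from 3D

pad = overscan_padding(FULL_AP, PADDED)      # (74, 56) pixels per side
window = crop_window(FULL_AP, PADDED)        # (74, 56, 2122, 1612)
scale_x = PADDED[0] / FULL_AP[0]             # about 1.072
scale_y = PADDED[1] / FULL_AP[1]             # about 1.072
```

Under that assumption, once the distortion has been applied to the padded render, cropping to `window` returns a 2048×1556 frame whose edges are filled with real rendered pixels rather than stretched or empty ones.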

fxg: Did you render OpenEXR files?

Ben Warner: All 3D was delivered as float EXR, but never more than 4 image channels were used in a single EXR image (shake didn’t natively support image file formats easily that had more than 4 colour channels of information).

The guys on set recorded a whole lot of grey-balls and mirror balls for most of the sets and for most of the shots. The mirror balls were multiple exposures. The most obvious example of our use of the resultant HDR maps is in the reflections in Charlotte’s eyes. But for lighting a shot, they were used more as references than actual sources modulating the lighting. The lighting artists would use them as another reference tool to set up their lights for the shot.

Sam Hodge: Yes, but primarily float RGBA rather than multilayer EXR. The format’s compression is pretty good, but not without the occasional crash due to a gremlin in the pipeline that we are still trying to identify the cause of.

fxg: How many animators worked on the character?

Sam Hodge: At the peak of production there were about 20 animators working on Charlotte and her web. They were cast into different types of roles, where some animators would focus on the face, other animators would be focussed on the more spidery-twitchy animation, and some on more technical work to do with Charlotte interacting with her environment. They really got through a lot of work.

fxg: What program did you mainly use for the 3D tracking?

Ben Warner: For the majority of tracks we used Boujou Bullet, and for the more difficult tracks we used Boujou 3. Most tracks needed small clean ups in XSI, but were generally pretty close. Most of our tracking requirements only required a camera solve, and the few occasions where an object required tracking were usually handled by combining camera track solutions from Boujou with hand tracking and traditional 2d tracking solutions using Shake.

fxg: What percentage (if any) of the shots had any non-prime lens (zoom) work?

Ben Warner: Fortunately zoom lenses when used for shots were left at a fixed focal length. Where we did have problems was when there was a focus rack that would trick the camera tracking into thinking it was a focal length shift. Fortunately Boujou enabled artists to specify locked focal lengths and adjust for these focus racks in the camera solves.

fxg: How did you deal with lens distortion did you estimate or extract a warp and then apply that to the 3D? Or was it not a factor?

Ben Warner: We used an application called Hype. Production provided footage of lens grids shot with all lenses used on Charlotte’s Web (about 67 individual lenses – including different focal lengths on zoom lenses). From these grids, distortion models were generated that could then be applied using hype to the footage to create undistorted footage, which in turn could be used to track. This undistortion process meant that our tracks, once imported into 3d scenes, would match the 3d geometry without the slippage and sliding usually associated with distorted footage being used for tracking solutions. (ed: Hype will be featured in an upcoming fxg story)

fxg: If you did use lens distortion calculations, did you export something to shake for the 3D to be warped to match or did you undistort the footage ?

Ben Warner: Our distortion pipeline was crucial in the line up and matching of the live action plates with the computer generated Charlotte. To maintain the integrity and “look” of the live action plates we maintained any lens distortion, chromatic aberration or other lens characteristics and applied these lens distortion and characteristics to our 3d cg elements. In essence our pipeline for 2d was a distorted environment for any final composites, and an undistorted environment for any tracking, match-moving or 3d pass compositing.

Our 3d pipeline was only ever an undistorted pipeline, with the final delivery from 3d to 2d being undistorted 3d passes. Any compositing of Charlotte or webs from 3d was undertaken in an undistorted environment and then before integration with live action plates the 3d elements would be distorted using hype using the associated lens model for the specific shot within Shake. Using this approach we were able to accurately track and place Charlotte into live action plates, and still retain the lens characteristics and distortion of the live action plates. Numerous custom tools were written for 3d and 2d to automate the association of lenses with shots and streamline the distortion pipeline as much as possible so as not to impact on the animation and compositing of shots.
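The round trip described here (undistort the plates for tracking, then re-distort the CG before the final comp) can be sketched with a simple one-coefficient radial model. This is only an illustration: the interview does not describe Hype's actual lens model, and the coefficient `k1`, the normalised coordinates and the function names below are all assumptions.

```python
# Illustrative one-coefficient radial lens model; NOT Hype's actual model.
# Coordinates are normalised so that (0, 0) is the optical centre.

def distort(x, y, k1):
    """Map an undistorted point to its distorted position.

    A negative k1 pulls points toward the centre (barrel distortion).
    """
    scale = 1.0 + k1 * (x * x + y * y)
    return x * scale, y * scale


def undistort(xd, yd, k1, iterations=20):
    """Invert distort() by fixed-point iteration, starting from the distorted point."""
    xu, yu = xd, yd
    for _ in range(iterations):
        scale = 1.0 + k1 * (xu * xu + yu * yu)
        xu, yu = xd / scale, yd / scale
    return xu, yu


K1 = -0.05                                    # hypothetical coefficient for one lens
cg_point = (0.8, 0.6)                         # a point in the clean, undistorted render
in_plate = distort(*cg_point, K1)             # where it lands once matched to the plate
round_trip = undistort(*in_plate, K1)         # back to the undistorted tracking space
```

In a setup like the one Warner describes, the equivalent of `undistort` would be applied to plates before tracking and the equivalent of `distort` to the rendered passes just before they meet the live action, with one calibrated model per lens and focal length.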

FUEL

FUEL was focused on the young spider babies and their first web flights. As with the primary webs in the rest of the film, the use of specular highlights played a huge role in defining the look of the ‘flying’ webs. Animators rendered out multiple spec passes with different frequencies so the compositors could isolate the correct specular amount for each shot as part of the compositing process.

Lighting the webs was also difficult since the amount of pings gained from a faithful reproduction of the actual lighting was not enough. The team ended up having to fly digital 3D lights around the 3D barn space to cause the spec highlights to move and glint enough to work.

As luck would have it, while in production on set — completely without planning or assistance — a real set of baby spiders took flight. Someone on the production suddenly noticed, and while the primary 35mm camera was locked off and unable to be used, an extensive video was made of the real spider flights. This video became the benchmark for both the director and visual effects supervisor. According to FUEL’s Visual effects supervisor Simon Maddison, FUEL saw their job as just matching as closely as they could to this real life footage captured spontaneously on set at the real barn location.

FUEL is based in Sydney. The team is extremely experienced in high-end compositing and contributed to several other shots besides the baby spiders. In brief, Fuel delivered 157 VFX shots for Charlotte’s Web and this included the Baby Spider sequence (the film’s finale) and the talking Geese. The crew is especially experienced using Shake.

Rhythm & Hues, Tippett Studio, and Digital Dimension were also heavily involved in the motion picture.

All images are © Copyright Paramount Pictures