Session talks

While the conference started today, Sunday, the main papers and talks really begin tomorrow. Still, there were some early presentations that were very interesting.

One of the first talks focused on ‘Pushing Production Data’, with presentations by DreamWorks Animation on the artistic rendering of feathers and on coherent out-of-core point-based global illumination and ambient occlusion used on ‘Kung Fu Panda 2’. The studio also demonstrated its artist-friendly simulation tools used to destroy a city in ‘Megamind’.

Weta Digital showed off the capabilities of PhotoSpace, its photogrammetry tool that allows for automated photo capture and digitization of props for feature film and visual effects use. Items are placed on a turntable and multiple photographs are taken, with the tool delivering a 3D point cloud that can then be meshed. The fidelity of the final 3D model comes from the feature detection undertaken on every single image. The tool also works with Mari to allow for very high-resolution re-projections. We saw some great examples of small but relatively complex props, like a backpack or a crumpled jacket, that were placed on the turntable and meshed into models without any artist intervention. Weta says it is continuing to work on PhotoSpace to improve its handling of shiny objects and objects with little surface detail and, in the future, to take it out of a controlled turntable environment.

Technical Papers Fast Forward

A highlight of every Siggraph is the Papers Fast Forward. It is both a brilliant chance to see an overview of every talk in the conference and a chance for many of the serious technical presenters to have some fun. Each paper is given a minute to summarize its work, mainly in the form of a speaker with slides. But as this is really a mini-advertisement to attract an audience to your paper, the results can be very funny and engaging. This year saw one paper presented by a speaker in a gorilla suit (for, yes, a ‘Rise of the Planet of the Apes’-based paper), several very funny rapid-fire gameshow-style graphics pitches, face-painted mime artists acting out character animation capture live, and even an old 1920s silent movie with a hand-cranked music score.

While a few papers were not included, presumably as their authors were yet to arrive for the conference, the Fast Forward did throw up some papers we’d missed when reviewing the paper schedule that we will aim to see, such as:

0019-Real-Time Eulerian Water Simulation Using a Restricted Tall Cell Grid

In this paper, GPU processing allows for real-time water simulation with user interaction. The work is impressive and shows a stunning new level of real-time GPU fluid simulation performance, extending beyond waves to spray and foam.

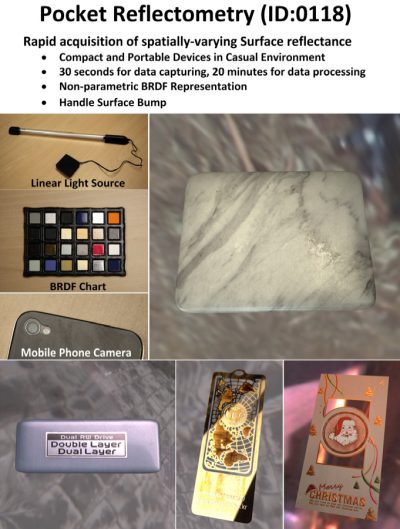

0118-Pocket Reflectometry

This small kit, literally pocket-sized, allows BRDF sampling of materials using an iPhone and, while not realtime, produces incredibly impressive results. As most BRDFs are just approximations and most professional rigs are in research labs with expensive lasers, this system could be made accessible to any material shader writer, and the results are stunning.

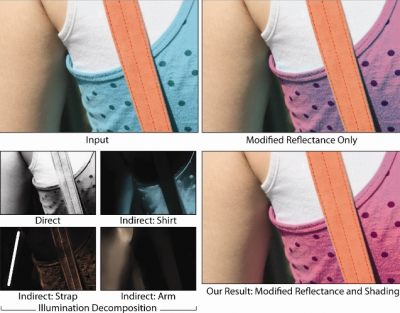

0494-Illumination Decomposition for Material Recoloring with Consistent Interreflections

This paper shows how to deal with color spill when re-coloring live action footage, so that when, say, a dress is changed in color, the subtle spill on the arm is also changed to match the correct radiosity spill or bounce effect.

0182-Realtime Performance-Based Facial Animation

It is hard to pick which facial animation paper to recommend but this one caught our eye as it will be demonstrated live during the talk. The authors aim to produce realtime facial animation from one camera so you can turn your Skype video conference into something a little more fun – all in realtime.

0284-Depixelizing Pixel Art

Image processing algorithms are a popular subject at any Siggraph. This paper seems to offer exceptional up-resing and aliasing repair. We will wait to see the paper but the results seem stunning.

0360-Microgeometry Capture using an Elastomeric Sensor

This is actually a returning favorite of fxguide. We covered an earlier version of this at Siggraph in New Orleans. At that time the sensor could scan the bumps on a fingerprint and produce a 3D model by pressing your finger on the elastomeric sensor. Now the system is 10 times as powerful and thus can read the bumps on a banknote from the printing and ink height differences. It can literally produce a model of the height field of the text on a $20 bill.

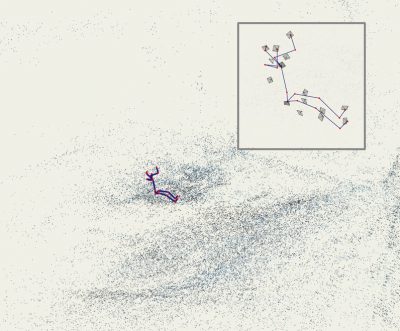

0426-Motion Capture from Body-Mounted Cameras

This motion capture solution aims to place the cameras on the subject – thus allowing motion capture in large exterior locations.

Instead of building a capture volume, multiple cameras are attached to the body and look outward, allowing complex ‘camera tracking style’ solutions to solve the position of all the body points.

The classic thinking-outside-the-box Siggraph paper we love to see!

One more…(that we missed seeing at the FF but is of real interest to many of us)…

0151-A Versatile HDR Video Production System

This paper presents an optical architecture for HDR imaging that allows simultaneous capture of high-, medium-, and low-exposure images, along with an HDR merging algorithm that avoids undesired artifacts. It explains the implementation of a prototype HDR video system that appears to use a beam splitter to acquire HDR video.
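For readers unfamiliar with the idea of merging exposures, here is a minimal Python sketch of a generic weighted-average HDR merge for a single pixel. It is not the paper's algorithm, which we have not yet seen in detail. The function names, the clipping thresholds `LO` and `HI`, and the relative exposure values are all our own illustrative assumptions.

```python
# Generic weighted HDR merge for one pixel (illustrative only, NOT the paper's method).
# Assumes linear sensor values in the range 0..1 and known relative exposures.

LO, HI = 0.05, 0.95  # assumed thresholds for "too dark" and "too bright"


def hat_weight(v):
    """Trust mid-range values most; give clipped values zero weight."""
    if v <= LO or v >= HI:
        return 0.0
    mid = 0.5 * (LO + HI)
    half = 0.5 * (HI - LO)
    return 1.0 - abs(v - mid) / half


def merge_exposures(pixels, exposures):
    """Estimate scene radiance from the same pixel seen at several exposures."""
    num = 0.0
    den = 0.0
    for v, t in zip(pixels, exposures):
        w = hat_weight(v)
        num += w * (v / t)  # dividing by exposure puts samples on a common scale
        den += w
    if den == 0.0:
        # Every sample is clipped: fall back to a single exposure.
        samples = list(zip(pixels, exposures))
        if all(v >= HI for v in pixels):
            v, t = min(samples, key=lambda s: s[1])  # shortest exposure
        else:
            v, t = max(samples, key=lambda s: s[1])  # longest exposure
        return v / t
    return num / den


# High, medium and low exposure of the same pixel (hypothetical values).
exposures = [4.0, 1.0, 0.25]
print(merge_exposures([0.8, 0.2, 0.05], exposures))
print(merge_exposures([1.0, 1.0, 0.5], exposures))
```

In the second call the high and medium exposures are saturated, so only the low exposure contributes. That is the basic reason for capturing several exposures at once.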

fxguide / fxphd user meet-up

Sunday night was also the annual Siggraph fxguide and fxphd meet-up, this year held at a pub near the convention centre. It was great to see so many members of fxguide insider and fxphd. Thanks to everyone who came. Between drinks, here are just a few pics of those who attended.