Ubisoft’s Assassin’s Creed IV: Black Flag is due for release in October this year. The announcement trailer featured one of the characters, the pirate Blackbeard. We talked to Digic Pictures about how they made the digital pirates, the oceans and the battle.

fxg: This trailer seems so informed by live action – can you talk about how it was planned, and shot – both in terms of mocap and any live action reference?

Peter Sved, project director: We made use of full-performance capture data on all of the Blackbeard shots. That is, the actor’s voice, facial gestures, and body movements were all recorded simultaneously, to ensure full coherence across the 3d character’s body animation and facial performance/lip-sync, as well as his intonation during his monologue.

A number of takes were recorded, with each take spanning the full two-and-a-half minute performance of the actor. Three additional actors were seated at the table, to aid the lead actor’s performance.

Two takes were selected to be used in the final shots of Blackbeard – one where the monologue’s beginning was preferred, and one where the performance’s second half was deemed to have more of the desired “chilling” effect.

The action sequences were recorded as body mocap only, as here the head-mounted cameras would’ve gotten in the way, and choreography took priority over facial performance (which can easily be animated by hand on such short, non-speaking shots.) We used our usual team of stuntmen, rehearsing for about a day and a half, then recording over about three days in total, including pick-ups.

fxg: What were some of the modeling challenges for people, as well as environments and the ships?

Tamas Varga, lead character modeler: The challenge with the cast was twofold: we had to build some highly detailed lead characters to be featured in close-ups, and a very large and diverse range of extras able to stand up to the leads – and all at the same time. Beyond the ‘usual’ hero Assassin, Edward, we also had the legendary pirate Blackbeard narrating the announcement trailer; we had Spanish navy soldiers and captains, courtesans naked and clothed, and we also needed to populate bar brawls and shipboard fights with pirates.

Over the years, we’ve developed a pipeline at Digic to create detailed hero characters, and thus we were able to rely on our previous experience to deal with the leads in Black Flag. The process starts with concept sculpts created in ZBrush and enhanced in Photoshop, in order to get feedback and approval from the client as quickly as possible. This includes the head, and sometimes we sculpt the clothing as well, creating the main folds and creases; but for softer fabrics we model in an inflated, smooth initial pose. Then we retopologize the sculpts to create high-res poly models, following all the details, ending up in the range of several hundred thousand polygons for the entire character. We’ve found that the higher density helps a lot with the deformations, and today’s computers are able to deal with the larger datasets.

Then we use reprojection to preserve as much detail from the concept sculpts as possible, but most models generally require a secondary sculpting pass as well. Smaller accessories and hard-surface objects are built by hand before undergoing a detail sculpting pass, and we paint all textures from scratch in BodyPaint and Photoshop.

Edward turntable.

Facial animation was created with a blendshape system based on FACS, and for Blackbeard we’ve relied on full performance capture data provided by the client. This was a highly iterative process, testing how far we should go in trying to match the actor’s characteristics on a completely different looking CG character, and constantly tweaking the base shapes and adding corrective shapes as required. The beard that occluded a lot of the face did not help much, but it was a far bigger challenge for the rigging department – see below for their comments.
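
For readers unfamiliar with the terms, the blendshape-plus-corrective idea mentioned above can be sketched in a few lines of Python. This is only an illustration, not Digic’s rig: the shape names, the `blend` function and the rule that a corrective shape is weighted by the product of its two driving weights are all assumptions made for the example.

```python
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]


def blend(base: List[Vec3],
          deltas: Dict[str, List[Vec3]],
          weights: Dict[str, float],
          correctives: Dict[Tuple[str, str], List[Vec3]]) -> List[Vec3]:
    """Return deformed vertices: base + weighted deltas + corrective deltas."""
    out = [list(v) for v in base]

    # Linear blendshape sum: each shape stores per-vertex offsets from the base.
    for name, delta in deltas.items():
        w = weights.get(name, 0.0)
        for i, d in enumerate(delta):
            for axis in range(3):
                out[i][axis] += w * d[axis]

    # A corrective shape only contributes when both driving shapes are active.
    # Using the product of the two weights is an assumed activation rule.
    for (a, b), delta in correctives.items():
        w = weights.get(a, 0.0) * weights.get(b, 0.0)
        for i, d in enumerate(delta):
            for axis in range(3):
                out[i][axis] += w * d[axis]

    return [tuple(v) for v in out]


# Hypothetical one-vertex "face" with two base shapes and one corrective.
base = [(0.0, 0.0, 0.0)]
deltas = {"jaw_open": [(0.0, -1.0, 0.0)], "lip_pucker": [(0.0, 0.0, 1.0)]}
correctives = {("jaw_open", "lip_pucker"): [(0.0, 0.5, 0.0)]}
posed = blend(base, deltas, {"jaw_open": 1.0, "lip_pucker": 0.5}, correctives)
```

In a setup like this, “tweaking the base shapes” means editing the entries in `deltas`, while “adding corrective shapes” means adding entries to `correctives` for combinations that look wrong when summed naively.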

The supporting cast was however a larger problem, because pirates (or courtesans) don’t wear uniforms – they all have unique clothing and accessories, built up over time from looting merchant ships and ports, grabbing whatever they can. We knew from the start that building a small set of clothing and dipping into our library of previous assets would not be sufficient, and that we would have to model a lot of completely individual characters – and stay within the budget and the schedule of the project. Our existing pipeline would not have allowed us to complete enough characters in time, so we finally had to introduce a long-dreaded approach: re-usable base meshes.

All the new secondary characters in this movie are using the same topology for the anatomy models (with separate male/female versions), the same starting set of blendshapes, and we have simplified the geometry of the clothing and accessories as much as possible, relying more on displacements instead. Additional excitement was provided by the fact that we had no time to fully test the new workflow and had to build final geometry on the fly, but in the end it all worked out and we’ve made the right calls. This new approach has allowed us to create a dozen new characters with dynamic hair and clothing in a much shorter amount of time; and because they’re all built on top of concept sculpts similar to the hero characters, they are also looking good enough to appear in mid shots right beside the leads. The quality of the facial animations and the cloth dynamics has suffered a bit, but this was a trade-off we were glad to make. Our modeling and sculpting team stood up to the challenge magnificently and we were able to create a colorful band of brigands and beauties from the Golden Age of piracy.

Frank turntable.

fxg: What approach did you take to cloth and sail simulations?

Andras Tarsoly, lead rigging TD: Cloth simulations were done using SyFlex. Due to the high number of assets involved, and for the sake of easier handling of shots, the simulations were baked into the hi-res geometries.

The motion seen on the sails and ropes was not done with cloth sim. Here, the rigging department was called upon by the set department to create various rope setups, which had to be as simple as possible, considering the high number of instances that were used to furnish certain sets. These could be used directly, for example to create complete mast-cordage set elements, together with their appropriate sails. These ropes could be fixed at either end as needed, and bent. The controller for each middle part had a “wind” parameter to take care of constant movement on the rope, allowing us to skip an extra simulation phase.
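
To make the idea of a procedural “wind” parameter concrete, here is a minimal Python sketch of a rope whose ends stay fixed while its middle sways over time, with no simulation step. It is not the Maya rig described above: the function name `rope_points`, the parabolic envelope, the sine-based sway and the `frequency` and `sag` parameters are all assumptions chosen for illustration.

```python
import math
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def rope_points(start: Vec3, end: Vec3, divisions: int, wind: float,
                time: float, frequency: float = 0.4,
                sag: float = 0.0) -> List[Vec3]:
    """Sample a rope between two fixed anchors with a procedural sway."""
    if divisions < 1:
        raise ValueError("divisions must be at least 1")

    points = []
    for i in range(divisions + 1):
        t = i / divisions
        # Straight-line interpolation between the two anchors.
        x, y, z = (s + (e - s) * t for s, e in zip(start, end))

        # Parabolic envelope: exactly 0 at both ends, 1 at the middle,
        # so the anchors never move and the middle moves the most.
        envelope = 4.0 * t * (1.0 - t)

        # Phase offset along the rope so it ripples instead of moving rigidly.
        phase = 2.0 * math.pi * t
        sway = wind * envelope * math.sin(
            2.0 * math.pi * frequency * time + phase)

        points.append((x, y - sag * envelope, z + sway))
    return points


# Evaluate the same rope at two times; only the interior points differ.
frame_a = rope_points((0.0, 5.0, 0.0), (10.0, 5.0, 0.0), 8, wind=0.3, time=0.0)
frame_b = rope_points((0.0, 5.0, 0.0), (10.0, 5.0, 0.0), 8, wind=0.3, time=1.0)
```

Because the shape is a pure function of time, any frame can be evaluated independently, which is the property that lets a setup like this skip a simulation pass across many instances.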

Blackbeard cloth sim test.

There was another important request for us to consider, from the render department: due to the large number of rope assets per shot, they had to have direct access to the displacement and subDiv parameters of the rope asset, as well as to its basic polygon parameters (number of divisions lengthwise and across). This way, we could use just a single asset for background ropes as well as ropes closer to camera. Also, since assets could be referenced within other assets, modifications to ropes only had to be handled at the necessary level.
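
One way to picture a single rope asset serving both background and close-up use is a small set of exposed render parameters chosen per shot. The Python sketch below is hypothetical throughout: the `RopeRenderParams` fields mirror the parameters named above, but the distance thresholds and the specific values are invented for illustration and are not Digic’s settings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RopeRenderParams:
    """Parameters a render department might want exposed on one rope asset."""
    length_divisions: int   # polygon divisions along the rope
    radial_divisions: int   # polygon divisions across the rope
    subdiv_level: int       # render-time subdivision iterations
    displacement: bool      # whether displacement is evaluated


def rope_params_for_distance(distance: float) -> RopeRenderParams:
    """Pick settings for one shared asset from its distance to camera.

    The thresholds (5 and 30 scene units) are assumed values.
    """
    if distance < 0.0:
        raise ValueError("distance must be non-negative")
    if distance < 5.0:
        # Close to camera: dense mesh, subdivision and displacement on.
        return RopeRenderParams(64, 12, 2, True)
    if distance < 30.0:
        # Mid-ground: moderate density, no displacement.
        return RopeRenderParams(24, 8, 1, False)
    # Background: as light as possible.
    return RopeRenderParams(8, 4, 0, False)


hero_rope = rope_params_for_distance(2.0)
background_rope = rope_params_for_distance(80.0)
```

The point of the design is that the geometry definition stays in one place, and only these few values change between a background instance and a foreground one.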

fxg: What were some of the specific tools and techniques you used for hair, and also for feathers, in the trailer?

Andras Tarsoly, lead rigging TD: Black Flag was the first project where we’ve used new tools for both the creation of hair and fur, as well as for simulating their movements. For hair and fur creation, we used a third party Maya plugin called Yeti, by Peregrine Labs. Thanks to the pirate theme, nearly all characters had multiple fur assets on them, the only exception being the soldiers. In addition to these, the hero characters also each had a peach-fuzz asset.

Blackbeard hair and fur.

The “hero” hair/beard assets (eg Blackbeard’s) had a complexity several times greater than that of our so-called “hi-res” characters’ (eg the bearded pirates.)

Upon completion of the look-development phase, there were two ways that the given hair/fur asset could take its final form: all short hair and short beard were done as static assets. In these cases, the rigging TD’s job was to prepare the asset, so that once it got placed into a shot, it would communicate with the other assets relevant to it, ie get the appropriate data from objects driving it, then pass the right data towards the renderer. In all other cases, the hair/beard had to be prepared for simulation. All such hair and beard were simulated using Maya’s nHair solver.

fxg: How did you accomplish water sims, including underwater?

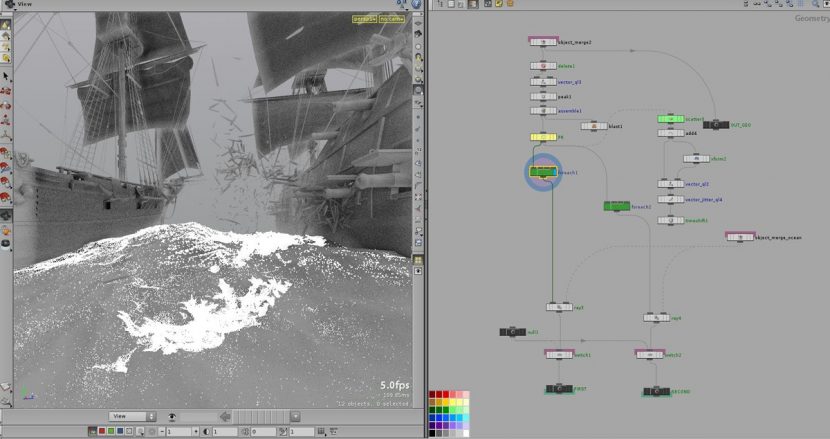

Daniel Bukovec, Imre Tuske, Ferenc Ugrai, Houdini FX TDs: We used HOT (Houdini Ocean Toolkit) to get the main body of the ocean. The effects that come with the ocean, like foam, mist and splashes, were simulated inside Houdini.

The most complex shot was an effects-intensive one with two ships firing at each other. The cannon shots destroy the hull of the ship, and the falling wooden debris generates splashes in the ocean. The falling debris pieces generated point groups where they intersected the ocean, and these points were given custom velocities; the point groups were then fed into the FLIP fluid simulation. This way we were able to control the initial velocities, thus controlling the shape of the splashes. Foam and mist were a simple particle system; the trick was getting nice initial velocities for the emission, especially at the intersection of the ocean and the ships.
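
The splash setup described above boils down to two steps: find where each debris piece crosses the water surface, then assign an art-directed velocity at that point before handing it to the fluid solver. The Python sketch below illustrates only that logic, outside of Houdini. The flat water plane, the function name `splash_seeds` and the `upward_gain` and `spread` controls are assumptions for the example, not the studio’s actual parameters.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def splash_seeds(prev_positions: List[Vec3],
                 positions: List[Vec3],
                 velocities: List[Vec3],
                 water_height: float = 0.0,
                 upward_gain: float = 0.5,
                 spread: float = 0.3) -> List[Tuple[Vec3, Vec3]]:
    """Return (position, velocity) seeds for debris that hit the water this frame."""
    seeds = []
    for p0, p1, v in zip(prev_positions, positions, velocities):
        # A piece "hits" when it was above the surface and is now at or below it.
        if p0[1] > water_height >= p1[1]:
            # Interpolate along the frame's motion to the crossing point.
            s = (p0[1] - water_height) / (p0[1] - p1[1])
            hit = (p0[0] + (p1[0] - p0[0]) * s,
                   water_height,
                   p0[2] + (p1[2] - p0[2]) * s)

            # Custom initial velocity: turn part of the downward speed into
            # an upward kick and keep a fraction of the lateral motion.
            seed_velocity = (v[0] * spread, -v[1] * upward_gain, v[2] * spread)
            seeds.append((hit, seed_velocity))
    return seeds


# One piece crossing the surface, one still in the air.
seeds = splash_seeds(
    prev_positions=[(0.0, 1.0, 0.0), (5.0, 3.0, 0.0)],
    positions=[(2.0, -1.0, 0.0), (5.0, 2.0, 0.0)],
    velocities=[(2.0, -4.0, 0.0), (0.0, -1.0, 0.0)],
)
```

Raising `upward_gain` in a sketch like this gives taller splashes and raising `spread` gives wider ones, which is the kind of shape control the initial velocities provide.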

The underwater feeling was achieved by simulating clothing which looks like it is underwater, with bubbles and “fog” added to enhance realism.

fxg: What were the effects tools you used for the battle, and also for things like dust, gunfire and rain?

Viktor Nemeth, lead FX TD (FumeFX, Houdini FX), and Daniel Bukovec and Imre Tuske (Houdini FX TDs): We began working on the effects as early as the animatic phase: we modified the proxy ships so that we could do tests on the exploding shrapnel and wooden chunks. The models were broken up in Houdini FX with a completely procedural workflow, so that when we received the final models (with vastly different geometries) not long before the film’s deadline, we could re-simulate all effects within about two days, based on the prototype that had already been approved over a month beforehand. We used the Alembic format to exchange data across the various programs in the pipeline.

The cannon-shots were done in FumeFX. Thanks to the new version, we could emit particle smoke of various colours, which added to the realism of these shots. We had 14 FumeFX layers in the shot’s final comp tree. We simulated each cannon-shot over 200 frames, synchronizing their beginning to when the shots were being fired, and using the sims’ ends to add atmosphere to the fight sequences taking place on deck. We used about three animated wind forces to achieve the right kind of turbulence for the smoke. The cache file for each such cannon-shot came to be about 100 GB in size (including temperature, velocity, and color channels.) All effects were rendered out from Maya using the Arnold renderer.

fxg: There’s such a step-up in terms of lighting. How did you approach lighting and rendering for daylight scenes, and also for, say, the candlelit scenes?

Balazs Horvath, lead lighting & compositing and Peter Hostyanszki, lighting & compositing artist: The interiors were generally much harder to light than the exteriors. For the daylight scenes, our approach was to try and achieve a realistic look and feel to the shots by focusing on adjusting the dynamic ranges of the shots, things like the over-exposed white areas of the sky, and also getting the light-wrapping around the characters to feel right when compositing.

For the night-time exterior shots at the end of the trailer, the main challenge was to achieve consistency for the lightning-flashes across the 3D characters and the backgrounds, since the latter were nearly all projected matte-paintings. For maximum control, all masks were created in Nuke to define the matte-paintings’ shadow areas on the lightning-lit frames.

For the interior shots of Blackbeard, we focused less on realism via physical accuracy. Instead, these shots were more about achieving the most appropriate artistic result. Nevertheless, we did make use of samples from the real world to help us: along with shooting live reference footage of a candle-lit actor, we also shot some video footage of a single candle, and used it to grab intensity values which then directly drove the 3d candle’s light on Blackbeard’s face, to achieve the appropriate flickering.
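
The candle trick described above, sampling brightness from reference video and using it to drive a CG light, can be sketched roughly as follows in Python. This is an illustration under stated assumptions rather than Digic’s tool: frames are taken to be plain lists of `(r, g, b)` tuples in the 0 to 1 range, brightness is measured with the standard Rec. 709 luma weights, and the function names and `base_intensity` parameter are made up for the example.

```python
from typing import Iterable, List, Sequence, Tuple

Pixel = Tuple[float, float, float]


def frame_luminance(pixels: Iterable[Pixel]) -> float:
    """Mean Rec. 709 luma of one frame's pixels (values in 0..1)."""
    total = 0.0
    count = 0
    for r, g, b in pixels:
        total += 0.2126 * r + 0.7152 * g + 0.0722 * b
        count += 1
    return total / count if count else 0.0


def flicker_curve(frames: Sequence[Iterable[Pixel]],
                  base_intensity: float = 1.0) -> List[float]:
    """One light-intensity value per frame, scaled so the brightest
    reference frame maps to base_intensity."""
    luminances = [frame_luminance(frame) for frame in frames]
    peak = max(luminances, default=0.0)
    if peak <= 0.0:
        return [0.0] * len(luminances)
    return [base_intensity * lum / peak for lum in luminances]


# Three tiny hypothetical "frames" of a flickering flame.
reference = [
    [(1.0, 0.8, 0.4), (0.9, 0.7, 0.3)],
    [(0.6, 0.5, 0.2), (0.5, 0.4, 0.2)],
    [(0.9, 0.7, 0.3), (0.8, 0.6, 0.3)],
]
intensities = flicker_curve(reference, base_intensity=2.0)
```

The resulting list could then be keyed onto a light’s intensity attribute frame by frame, so the flicker keeps the irregular rhythm of the real flame instead of a synthetic noise pattern.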

I am always hugely impressed by Digic’s work, they are brilliant artists. I did not see anywhere in the article where it talked about their schedule and deliverables, could you address that in the comments? How long did they have for this? Do they work in 1080P HD Resolution and output PRORES QuickTime or do they deliver final DPX frames to be graded by a finishing house? Did they do all the sound design/editing/finishing as well?

Coming from VFX I’d like to know more about the overall pipeline for something like this.

Thank you!

Hi Joe – I asked Digic about your questions and they have provided the following for you:

1. How long did they have for this?

This is always a tricky question to answer exactly, because there’s often a lengthy initial period during which time the script and animatic are crystallized, and during this time, only a limited number of people from a limited number of departments are working on the given project. You could call this “pre-production” but that wouldn’t really be true, because actual production can (and does) begin on certain assets during this time – those assets which are 100% sure to be in the film, eg. the hero character(s). So with this in mind, AC4 Black Flag took about two months of full-steam production after the animatic was approved.

2. Do they work in 1080P HD Resolution and output PRORES QuickTime or do they deliver final DPX frames to be graded by a finishing house?

We do work in full 1080p HD resolution during production. Our final deliverables can vary slightly from project to project, but usually a ProRes QuickTime is included. Final grading is usually done by us.

3. Did they do all the sound design/editing/finishing as well?

Final sound design was handled at the client’s end on this project; we worked with temp sound throughout production.
