In this in-depth look at Doug Liman’s time-splicing Edge of Tomorrow, we talk to Sony Pictures Imageworks, Framestore, Cinesite, MPC, ncam, The Third Floor and Prime Focus World about how they helped orchestrate some of the film’s biggest effects and its previs and stereo conversion – including complicated (and crazy) tentacled mimics, power exo-suits, the detailed beach attack, the training room and the final Paris raid. We explore newly written Maya plugins and the toolsets behind animation, destruction and water sims in the film, overseen by production visual effects supervisor Nick Davis.

Making the mimics

For the dangerous and frenetic tentacled mimics, Imageworks took early designs and developed a Maya plug-in that dealt with the complicated tentacles and interpenetration issues. These alien creatures were envisaged as vicious beasts that could quickly adapt their bodies, made up of fast-moving tentacles, to stage an attack. With the mimics, or ‘grunts’, appearing throughout Imageworks’ visual effects work in the film’s first two acts, the studio took initial designs created by Framestore, MPC and the production art department, plus previs by The Third Floor (see below), and brought them into its pipeline.

“One of the production artists came up with a design that was completely made out of tentacles and he made a little maquette out of clay,” recalls Imageworks visual effects supervisor Daniel Kramer. “There were no solid structures whatsoever. It was like he took a bowl of spaghetti and you just formed that spaghetti into limbs and a body. What that meant was that the creature could completely change shape – it could spawn a limb or retract a limb or grow new tentacles within a limb. They would writhe against each other and slide against each other – it just had tentacles coming out where the head might be.”

“The thought was that there’s really no up or down or left or right to this creature,” adds Kramer. “So if it were running along the beach and wanted to turn around to attack somebody, rather than actually turning around, it might just invert its body – just suck it in and grow a limb out the other side. It was a great idea, although it creates lots of challenges in terms of how do you actually do that!?”

Added to that issue was what the tentacled body would be made out of. “Doug didn’t want anything too organic or terrestrial,” says Kramer. “The idea then was that it was made out of stones or pieces of glass – its body was very sharp and it could slash and stab. So we came up with obsidian as the material that it would look like – basically a glass that could cut. It even looks mean when it’s put together.”

But by far the biggest challenge for the mimics came in wrangling the mass of tentacles as they moved and attacked – something that would normally be a daunting keyframe animation task. “We didn’t want animators spending all day animating hundreds of tentacles and trying to figure out how to prevent them from inter-penetrating,” notes Kramer. “We had to manage it and get through the shots. So one of our technical animators – Dan Sheerin – built a plug-in in Maya to build these tentacle limbs procedurally.”

That plugin became known as spTentacle, enabling artists to define a center spline curve as the basic mimic limb and then grow new curves that wrapped and twisted around that center core. “Along those curves we would instance those obsidian bits,” says Kramer. “The plug-in would know the radius of every bit as it went down the chain, and it would know where they went in space. So it would handle all of the collisions and inter-penetrations between all of those tentacles.”

“It would order softer shapes toward the base and more angular shapes toward the ends as well as some extra spike geometry for more weaponry,” continues Kramer. “That segment geometry could cycle up and down the limb like a conveyor belt or chain saw. All the splines would twist around the core based on a noise pattern and the plugin would push the splines away from each other given a radius that could vary along each spline. Plus it would detect what the direction of gravity was and we would let some of the looser tentacles hang down, or we could contract them to give it some more power.”

spTentacle also handled several levels of detail by copying different LOD geo or simply skinning a simple shape over the whole mimic body. “The plugin could handle two cores and satellite tentacles could wrap around both cores,” says Kramer, “or it could wrap around each individual core making a very complicated weave. We also had some bones at the very end to represent digits. They became targets when we turned on the finger controls and some of the tentacles would grow into those bones for really articulate posing when stepping or grabbing.”
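To make the idea concrete, here is a minimal Python sketch of the approach Kramer describes: satellite strands wound around a core curve, pushed apart wherever two strands come closer than the sum of their segment radii, and optionally sagged along gravity. It is not spTentacle’s code. The straight core, the sine wobble standing in for a noise pattern, and every function name are hypothetical, and collisions are only checked between samples at the same index along each strand.

```python
import math
import random
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass
class Strand:
    points: list[Vec3]  # sample positions along a satellite curve
    radius: float       # radius of the segments instanced along it


def straight_core(length: float, samples: int) -> list[Vec3]:
    """Hypothetical core curve: a straight line up +Y (samples >= 2)."""
    return [(0.0, length * i / (samples - 1), 0.0) for i in range(samples)]


def wrap_strands(core: list[Vec3], count: int, wrap_radius: float,
                 turns: float, seg_radius: float, seed: int = 0) -> list[Strand]:
    """Wind `count` strands helically around the core, each with its own wobble."""
    rng = random.Random(seed)
    last = len(core) - 1
    strands = []
    for s in range(count):
        phase = 2 * math.pi * s / count
        wobble = rng.uniform(-0.3, 0.3)  # stand-in for a per-strand noise pattern
        pts = []
        for i, (cx, cy, cz) in enumerate(core):
            t = i / last
            angle = phase + 2 * math.pi * turns * t + wobble * math.sin(6 * t)
            pts.append((cx + wrap_radius * math.cos(angle), cy,
                        cz + wrap_radius * math.sin(angle)))
        strands.append(Strand(pts, seg_radius))
    return strands


def resolve_collisions(strands: list[Strand], iterations: int = 20) -> None:
    """Push same-index samples apart until they are at least r_a + r_b apart."""
    for _ in range(iterations):
        for a in range(len(strands)):
            for b in range(a + 1, len(strands)):
                sa, sb = strands[a], strands[b]
                min_dist = sa.radius + sb.radius
                for i, (pa, pb) in enumerate(zip(sa.points, sb.points)):
                    d = [pb[k] - pa[k] for k in range(3)]
                    dist = math.sqrt(sum(c * c for c in d))
                    if 1e-9 < dist < min_dist:
                        # Move each point by half the overlap along the separation axis.
                        f = (min_dist - dist) / (2 * dist)
                        sa.points[i] = tuple(pa[k] - d[k] * f for k in range(3))
                        sb.points[i] = tuple(pb[k] + d[k] * f for k in range(3))


def droop(strand: Strand, gravity: Vec3, looseness: float) -> None:
    """Let a loose strand sag along gravity, more toward its tip (t**2 falloff)."""
    last = len(strand.points) - 1
    strand.points = [tuple(p[k] + gravity[k] * looseness * (i / last) ** 2 for k in range(3))
                     for i, p in enumerate(strand.points)]
```

A typical call chain would be `wrap_strands(straight_core(2.0, 16), count=6, wrap_radius=0.1, turns=1.5, seg_radius=0.06)` followed by `resolve_collisions`; instanced pieces would then be placed along each strand’s points.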

To help sell the frightening speed at which the mimics move – particularly in the beach scenes – Imageworks incorporated within the mass of tentacles an array of flying sand and debris. “What Nick Davis and I were worried about was how were we going to give that thing a sense of weight and how were we going to make it believable that it was there,” comments Kramer. “We found the solution was that if we just paired it with a massive amount of effects animation and we made sure that any time it was contacting the ground that it was throwing up tons of dirt and dust. We would even burst dirt and dust off of it as it was spinning around just to make sure it was always enshrouded.”

Imageworks’ typical pipeline for the mimics involved Maya, Katana and Arnold, with the studio relying on instancing to get large numbers of mimics into the scenes without overbearing render times. Says Kramer: “One thing we could leverage off quite nicely was that all of the limbs were rigid pieces that were basically bound to the limbs as just transforms, so there were no deformations going on on the limbs themselves. So even though those pieces were pretty detailed and we would have a lot of mimics in the scene, we only had a limited subset of technical pieces that would get instanced along those limbs.”

“And we could instance those in memory,” Kramer adds, “and we could use that through Arnold and Katana, and that kept the memory footprint down. It also made translating the scenes really light, because normally if you’re deforming geometry you generally have to translate all of those points out to disk per frame. But with those obsidian pieces, all we had to do was translate out a transformation matrix for each individual piece, so it made it really light to export.”
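The saving Kramer describes comes down to simple arithmetic: deforming geometry means writing every vertex position every frame, while rigid instances only need one transform per piece plus a shared mesh written once. The Python sketch below compares the two. The 32-bit float, 4x4-matrix storage is an assumption for illustration, not Imageworks’ actual cache format, and the function names are hypothetical.

```python
import math

FLOAT_BYTES = 4  # assume 32-bit floats in the cache


def deformed_bytes_per_frame(pieces: int, verts_per_piece: int) -> int:
    """Deforming geometry: every vertex (x, y, z) is written out every frame."""
    return pieces * verts_per_piece * 3 * FLOAT_BYTES


def instanced_bytes_per_frame(pieces: int, floats_per_transform: int = 16) -> int:
    """Rigid instances: one 4x4 transform per piece; the shared mesh is stored once."""
    return pieces * floats_per_transform * FLOAT_BYTES


def piece_transform(tx: float, ty: float, tz: float, yaw: float) -> list[list[float]]:
    """4x4 matrix placing a shared prototype: rotate about Y, then translate."""
    c, s = math.cos(yaw), math.sin(yaw)
    return [[c, 0.0, s, tx],
            [0.0, 1.0, 0.0, ty],
            [-s, 0.0, c, tz],
            [0.0, 0.0, 0.0, 1.0]]


def transform_point(m: list[list[float]], p: tuple[float, float, float]) -> tuple[float, ...]:
    """At render time, apply a piece's matrix to a vertex of the shared prototype."""
    x, y, z = p
    return tuple(row[0] * x + row[1] * y + row[2] * z + row[3] for row in m[:3])
```

For a made-up case of 10,000 pieces with 500 vertices each, `deformed_bytes_per_frame(10_000, 500)` returns 60,000,000 bytes per frame, while `instanced_bytes_per_frame(10_000)` returns 640,000.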

Larger mimics – alphas – also appear in the beach scenes, including one that spills its blood on Cage to trigger the reset. For these, Imageworks incorporated a definable head area since these mimics were designed to be more sentient. “We wanted them to be the generals on the battlefield that are controlling the time a little bit more,” says Kramer. “As a result they’re about 60 or 70 per cent bigger, but they share a lot of the same qualities. We ended up giving them a different color and a different head to make sure they were distinguishable. They also move a lot slower and are more confident and powerful. What’s cool about that was that all our work on the grunts was going by so quickly because they were so frenetic, but in the few alpha shots you really do get a sense of the complicated nature of how the limbs were constructed and how they grow and form.”

Suiting-up

Production built full-scale power exo-suits worn by the performers, which the visual effects teams then replicated and enhanced, including full digi-double takeovers. Production designer Oliver Scholl worked with special effects supervisor Pierre Bohanna on the suits, taking early Framestore designs and crafting practical versions for the actors to wear, which were further enhanced by costume designer Kate Hawley. The suits weighed between 85 and 130 pounds depending on the artillery installed on them, and were so heavy that in some shots they were suspended with wires and chains.

Imageworks led the creation of digital exo-suits and actors, since again these assets would appear in the early scenes. “On set we built a scanning booth where we would have actors go through different stages, for full body photography and FACS scanning of their faces,” describes Kramer. “Then we had a hand scanner to do detailed scans of the exo-suits themselves, then obviously lots of texture photography. Some of the pieces of the suits were sent back to Imageworks so the guys doing the lookdev could also see how the materials were responding.”

Imageworks also benefitted on Edge of Tomorrow by incorporating a library of scientifically measured materials into its shading pipeline, including for the suits. “The shader team had all of this measured data for plastic, metal and obsidian,” outlines Kramer, “and ended up doing a fit process where they had the response curve of the diffuse and the specular and all the properties and sub-surface. They went through and took all our basic Arnold materials and they tried to fit our materials by tweaking the properties to this curve. We had our basic Arnold materials, but they had been dialed through this genetic algorithm to mimic the real-world materials. I believe our show is the first show to use that. So when we were assigning materials to the guns – these ‘angel wings’ on the back of their suits – or the suit properties we could have a really great starting place for these measured materials.”
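The fitting step Kramer outlines can be illustrated with a toy genetic algorithm in Python. Everything here is an assumption for illustration: the two-parameter `model` (a cosine diffuse lobe plus a Schlick-style grazing term) stands in for Arnold’s much richer shader parameters, the “measured” samples are generated from known values, and the population size and mutation schedule are arbitrary.

```python
import math
import random


def model(params: tuple[float, float], theta: float) -> float:
    """Toy reflectance at incidence angle theta (radians): diffuse lobe plus grazing term."""
    diffuse, specular = params
    c = math.cos(theta)
    return diffuse * c + specular * (1.0 - c) ** 5


def mse(params: tuple[float, float], samples: list[tuple[float, float]]) -> float:
    """Mean squared error between the model and (theta, measured) samples."""
    return sum((model(params, th) - y) ** 2 for th, y in samples) / len(samples)


def clamp01(v: float) -> float:
    return min(1.0, max(0.0, v))


def fit_ga(samples, pop_size: int = 40, generations: int = 100, seed: int = 1):
    """Evolve (diffuse, specular) pairs toward the measured response curve."""
    rng = random.Random(seed)
    pop = [(rng.random(), rng.random()) for _ in range(pop_size)]
    for gen in range(generations):
        pop.sort(key=lambda p: mse(p, samples))
        parents = pop[: pop_size // 2]  # truncation selection; the best half survives
        sigma = 0.1 * (1 - gen / generations) + 0.005  # shrinking mutation size
        children = []
        while len(parents) + len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            # Blend crossover (average of two parents) plus Gaussian mutation.
            children.append(tuple(clamp01((x + y) / 2 + rng.gauss(0.0, sigma))
                                  for x, y in zip(a, b)))
        pop = parents + children
    best = min(pop, key=lambda p: mse(p, samples))
    return best, mse(best, samples)


# Synthetic "measurement" generated from known values (0.6, 0.3), 0 to 80 degrees.
ANGLES = [math.radians(d) for d in range(0, 81, 5)]
MEASURED = [(th, model((0.6, 0.3), th)) for th in ANGLES]
best, err = fit_ga(MEASURED)
```

Keeping the best half unchanged each generation (elitism) means the best error never gets worse; the shrinking mutation size trades early exploration for late refinement.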

Dropping in

A practical drop ship set was used for scenes of Major William Cage (Tom Cruise) and J-Squad’s flight into the beach, which Imageworks then enhanced with its CG ship as well as the surrounding environment and subsequent crash. Production designs for the drop ships – based largely on Osprey VTOL aircraft – led to a practical build for a crashed ship on the beachhead, which Imageworks matched in CG. “It was probably our heaviest model,” says Kramer, “because we had to build it at a really high tolerance. When they’re walking across the tarmac at Heathrow earlier I knew they would get very close to it, especially on individual wheels and landing gear. There’s also quite a few times it crashes into the ground – I wanted to make sure we would see panels separating and sliding against each other.”

Imageworks chose to develop the drop ships and other hard surface vehicles as 3D models with volumes rather than using displacement maps, in order to give them the necessary detail. “It meant that when we put things through our destruction pipeline, things were able to tear apart properly,” explains Kramer. “It made it very heavy and difficult to work with sometimes, but it was such a hero asset I felt that that’s what was needed. Again we worked with those measured materials but the texture and lookdev artists did amazing work. We used a lot of reference images of Osprey for panels and scratches.”

Cage and J-Squad’s approach to the beach was filmed on a large gimbal rig surrounded by bluescreen at Leavesden, with the actors almost literally hung from large hooks. “They could rock it back and forth so everybody inside could be dangling,” says Kramer. “I think it was kind of brutal for the actors. I know that the day we had to scan Bill Paxton, I think he was a little bit sick – he had spent the entire day inside that gimbal rig three stories up, hanging up, and walking up and down the center aisle. They were in there like sardines and it was hot with the lights which were rigged from below when the floor opens up to let in the sunlight.”

Imageworks generated 3D terrain for the scene below from tiles shot at Saunton Sands in southern England. The area also included a Houdini-built ocean rendered in Arnold, along with other drop ships, hovercrafts, landing vehicles and Massive infantry. Much of that is visible as Cage makes his own exit from the drop ship after it explodes. “Nick Davis previs’d it with The Third Floor in London (see below),” says Kramer. “Then once that was bought off our job was replicating that with live action and a few guys dangling, then filling out the world. We added in some pre-baked animation of troops and vehicles on the beach, and animation of the guys on the ropes swinging and the ships. That entire shot is pretty much CG – there’s a moment at the beginning when you’re with Tom and as soon as he leaves frame, from thereafter he’s CG.”

On the beach

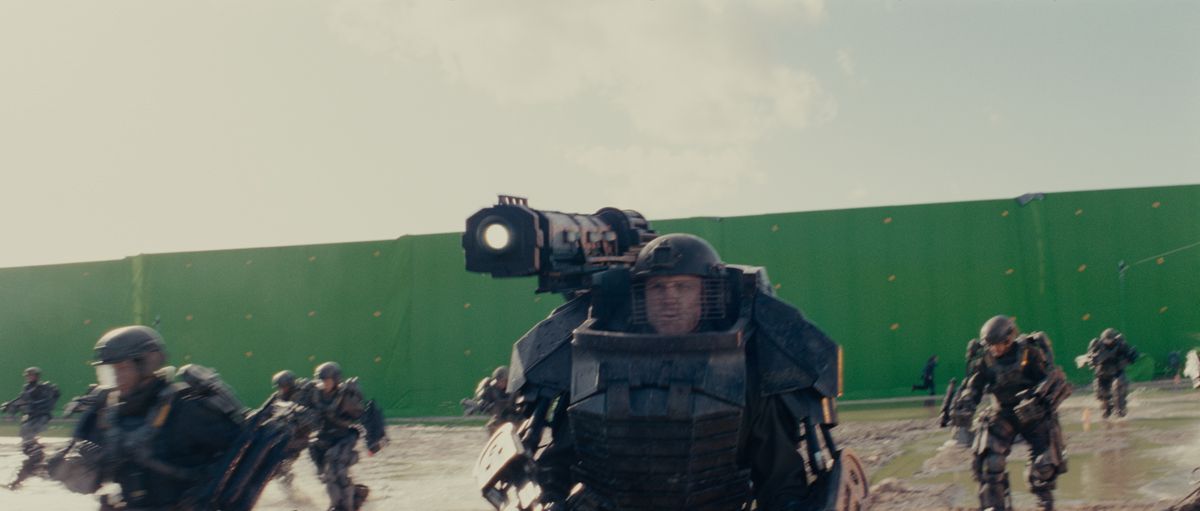

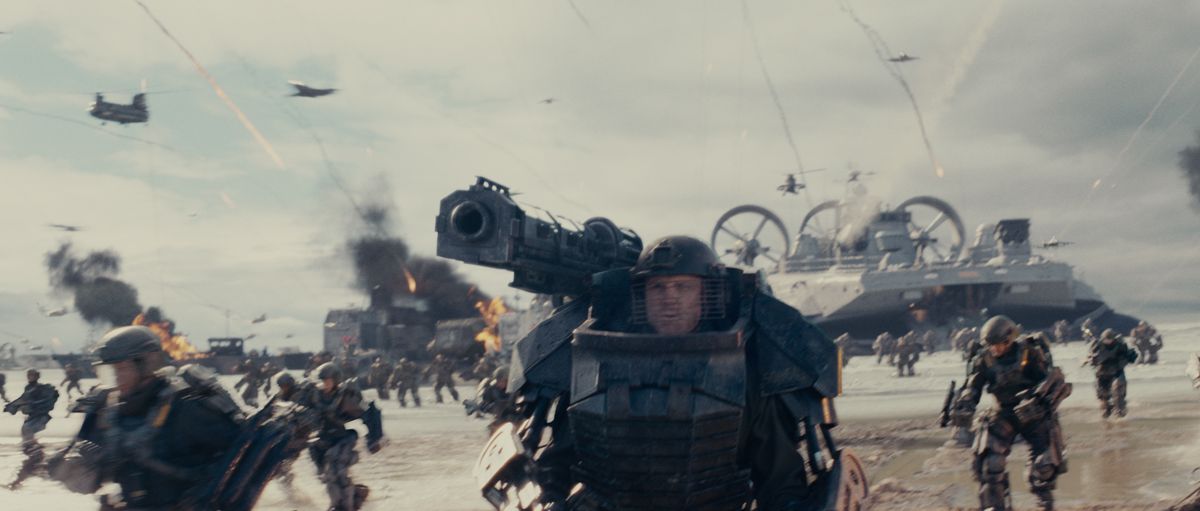

As the United Defense Forces arrive on the beach, they are inundated with a waiting force of mimics, mostly obliterating the army and its vehicles and artillery. An outdoor beach set surrounded by greenscreen at Leavesden served as the location for principal photography captured by DOP Dion Beebe on 35mm film, with Imageworks filling out scenes with extra CG vehicles, infantry, mimics and various levels of destruction. “When I arrived there the first day,” recalls Kramer, “it really felt like war already, without any visual effects! There were gas explosions, and dantes, which are diesel burning smoke columns.”

A LIDAR scan of the beach set was used for set extensions and for adding CG elements. “We could sometimes swap out the ground completely and do CG ground and it looked pretty good,” says Kramer. “But a couple of things made that difficult – if you push the camera way in or you pull the camera way out, and you go to a very different scale, that lookdev tended to fall apart. The beach was also so varied, depending on where you were on the beach. We also shot there for two months and it would rain some days – so there wasn’t one solution to figuring out how to render that beach.”

Ultimately, a CG beach render was done for just about every shot, with HDRI used to take the shot as far as possible. Compositors would then split in tiled backgrounds or enhance the shot where necessary. In fact, comp’ers were given fairly free rein to layer in elements to make shots work. “Sometimes the greenscreen or the pull was very difficult and they couldn’t layer something behind a practical explosion,” explains Kramer. “So they were free to stick their own explosion over it or a crashed jeep somewhere. This was really because the continuity could be very loose. We almost couldn’t go overboard – we just kept adding.”

The mimics fire javelin missiles at their foes, which appeared on screen with a double-helix trail of smoke. “Nick Davis was keen to make sure there were a lot of them,” states Kramer, “because for a large part of the shots you don’t actually see the mimics firing them. What are you fighting? Why is it so dangerous? Having those javelins up there made it like, anybody could die at any minute and it was really unpredictable where it could be.”

Imageworks artists studied tracer fire and rockets, with the final look intended to be actual segments of mimic tentacles that had been expelled from the creatures. “We were suggesting that they had a hot lava internal core,” says Kramer, “and they could fire one of these things out that was red hot and molten. Then we had this particulate soot pass that would come off the back and from that we burst this smoke. We initially did a straight pass that kind of wiggled, but Nick wanted them to be very unpredictable – if they hit the ground they would scrape along and take out four or five guys. So we started tumbling them end over end. Dave Davies, one of our effects leads, started spinning and tumbling them in a particular way which created a double helix pattern behind them.”

“We had so many javelins in the shots that the sims started getting really heavy,” notes Kramer. “So the effects team simulated a volume of javelins going in the air in Houdini – maybe a hundred of them in a particular section of the beach – and then we baked that out to disk. When the lighters would get a shot, they could literally grab this volume of javelins and rotate and place them in the shot. If they needed more they could just export it again and place it differently or offset the timings. So we could stamp down fields of javelins in the air. The lighters then had a lot of freedom to render pre-baked smoke columns or the javelins and it freed up our effects artists to do all the other custom sims.”
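The “stamping” workflow amounts to reusing one baked simulation with a different placement and timing each time. Here is a minimal Python sketch, assuming the bake is stored as a dictionary mapping cache frame to a list of javelin positions. That layout and the function names are hypothetical; a real Houdini cache would carry full transforms and the smoke volumes as well.

```python
import math

Vec3 = tuple[float, float, float]


def stamp(cache: dict[int, list[Vec3]], yaw: float, offset: Vec3, frame_offset: int):
    """Place one copy of a baked javelin volume: rotate about Y, translate, shift in time."""
    c, s = math.cos(yaw), math.sin(yaw)
    ox, oy, oz = offset

    def positions_at(shot_frame: int) -> list[Vec3]:
        # Look up the baked frame this shot frame maps to; empty outside the bake.
        baked = cache.get(shot_frame - frame_offset, [])
        return [(c * x + s * z + ox, y + oy, -s * x + c * z + oz) for x, y, z in baked]

    return positions_at


def field_at(stamps, shot_frame: int) -> list[Vec3]:
    """Combine several stamped copies into one field of javelins for a frame."""
    return [p for place in stamps for p in place(shot_frame)]
```

Each extra stamp costs only a rotation, an offset and a frame shift, so a lighter can lay down another field without asking for a new simulation.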

The javelins’ impacts wreck drop ships, hovercraft and other vehicles – CG creations that Imageworks destroyed with a combination of its existing RBD Bullet pipeline and a more recent implementation of Pixelux’s DMM API in Houdini. “The artists would assign different material properties to a jeep and say which parts are glass, which parts are stiff metal and soft metal and what can tear,” explains Kramer. “It basically breaks it down to a finite analysis with all these small tetrahedrons and you give them material properties. All the big crashes with the drop ships were done with DMM, too, and we also had Houdini water sims when they crashed into the shore.”
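As a rough illustration of giving tetrahedra material properties, the Python sketch below tags each element with a material and flags it when it deforms past that material’s limit. This is a deliberately simplified stand-in: a real finite element solver such as DMM tracks a full strain tensor per element, while this toy only checks volume change. The material names echo Kramer’s description, but the thresholds and all names in the code are invented.

```python
from dataclasses import dataclass

Vec3 = tuple[float, float, float]

# Hypothetical per-material limits on volumetric strain before an element fails.
MATERIALS = {"glass": 0.01, "stiff_metal": 0.05, "soft_metal": 0.30}


def tet_volume(a: Vec3, b: Vec3, c: Vec3, d: Vec3) -> float:
    """Volume of a tetrahedron: |det[b - a, c - a, d - a]| / 6."""
    u = [b[i] - a[i] for i in range(3)]
    v = [c[i] - a[i] for i in range(3)]
    w = [d[i] - a[i] for i in range(3)]
    det = (u[0] * (v[1] * w[2] - v[2] * w[1])
           - u[1] * (v[0] * w[2] - v[2] * w[0])
           + u[2] * (v[0] * w[1] - v[1] * w[0]))
    return abs(det) / 6.0


@dataclass
class Tet:
    rest: tuple[Vec3, Vec3, Vec3, Vec3]  # vertex positions before the crash
    material: str                        # key into MATERIALS

    def fails(self, deformed: tuple[Vec3, Vec3, Vec3, Vec3]) -> bool:
        """True if the element's relative volume change exceeds its material's limit."""
        v0 = tet_volume(*self.rest)
        strain = abs(tet_volume(*deformed) - v0) / v0
        return strain > MATERIALS[self.material]
```

Stretching a unit tetrahedron by 10 percent along one axis would break a “glass” element under these made-up limits but leave a “soft_metal” one intact.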

Although the filmmakers always knew there would be additional elements added in, they still incorporated smoke and fire into shot frames so that the actors would be aware of their surroundings. Some green triangles and eyeline poles were used for the mimics, but predominantly the actors performed against nothing. Stunt co-ordinator and second unit director Simon Crane made use of wire work for soldiers flying and also being grabbed and pulled by the mimics.

The action proved so chaotic that continuity from shot to shot was not as crucial as on some other shows. “It was disorienting and chaotic, which actually gave the artists a lot of freedom to come up with solves,” says Kramer. “So early on we had the effects department mimic what all of these practical effects were doing with our own gas explosions and CG smoke columns. Then we had our lighters render that out from various camera angles and lighting conditions. So we had the element library Nick Davis had shot with practical elements, but some broke frame or we needed different angles, so we copied the look of them as best we could, rendered out CG versions and kept adding those to our element library as 2D elements. We also kept those sims around in case we needed something specific later on.”

Roto and matchmoving of course proved to be a crucial solution to the chaotic shots. Imageworks’ India team handled roto in NUKE. “Just about everything was roto’d in the scene,” says Kramer, “characters, terrain, static objects, and even the greenscreen to help with the sky to greenscreen transition. Often the curve networks were so dense that keeping them live in NUKE was too complicated or slow. We ended up rendering out the shapes automatically when we’d get them back from India with, and without, motion blur to give the compositors some pre-baked mattes. We had the curves to pull in if we needed them though. Often we weren’t quite sure how a shot would come together as each shot was such a puzzle and we’d ask for extra roto quite late in the life of a shot once we were sure what was needed.”

Matchmoving was carried out for the beach shots by Yannix, which tracked anamorphic film plates and performed object tracks. “For each shot on set,” recounts Kramer, “we’d sprinkle the scene with orange ping-pong balls and of course we had the greenscreens with markers as well. We used a total station survey for each setup. Yannix would fit the beach LIDAR as best as they could to the plate. Even though the ground had changed most of the major features of the beach were similar enough that we could get a decent match. If we needed to put a CG object on a particular part of the beach we’d often have to have them touch that part up or ask our modeling department to build a patch of ground that matched closer if the topology had changed too much.”

Almost every shot with Emily Blunt required some object tracking, especially to enable her CG ‘Angel Wings’ guns on the back of her exo-suit. “You’ll notice in the film they are generally folded up on her back,” says Kramer, “but when deployed the guns look like they are attached to Steadicam arms (Tom has them too in later loops). The folded wings were too heavy and uncomfortable for Emily and it hindered her performance. As a result they were removed on set and we added them digitally in 95% of shots where they were visible.”

Trashing the trailer park

Eventually, Cage and his ‘reset’ confidante Rita (Emily Blunt) are able to exit the beach and move inland, making their way at first through a trailer park and commandeering a car with a trailer in tow. It, however, is hiding a mimic that Cage must dispatch. Imageworks incorporated a CG mimic and destruction effects into a practical car stunt for the sequence. “Special effects rigged up the trailer with all sorts of bangs to explode it and smash out the windows,” says Kramer. “There were about six or seven trailers, and then they would go to the next stage, hook up a trailer that was half eaten away and had bullet hits in it or be a little bit on fire. Then we also towed the same trailer with the camera car and shot some tiles so there was a rear view of the trailer swinging back and forth.”

For the times the mimic was in there, Imageworks replaced large parts of the trailer with its own version. “For each stage of the trailer we’d take many pictures,” says Kramer. “We hadn’t used a lot of photogrammetry in the past but we used Agisoft PhotoScan and we were able to get pretty good on-the-fly 3D models or good starts for the volumes, just by using photogrammetry. We replaced the whole front facing the van and some of the front quarter side of the caravan so the mimic could rip it apart. It was again a DMM sim so as the tentacles pushed through it ripped it apart. Then we added practical fire and smoke to get that to work.”

At the very end of the shot the mimic falls off the caravan and tumbles around. For this shot, Imageworks had to determine what a ‘deceased’ mimic would actually look like. “Is it a lifeless plate of spaghetti?” asks Kramer. “There was a notion that when they die they go into a rigor mortis and stiffen up, so we would stiffen it up and then do a rigid body dynamics sim and have all those tentacle pieces that were attached and allow them to break and crack apart as it rolls.”

Copter crash

The duo make it to a barn that houses a nearby helicopter, but they no longer have any power in their exo-suits. When Rita attempts to take off in the chopper, a mimic attacks and the helicopter crashes through the building, again a mix of practical effects and Imageworks digital artistry. “They built the barn on the backlot,” describes Kramer, “then built a gimbal arm to hold the helicopter, which was stripped down with no blades. Then they rigged the roof of the barn to break away.”

Imageworks then added in blades as the chopper pushes through the roof, while also painting out the rig holding the helicopter and adding in smoke and debris. “As the helicopter is flying around and swinging past Tom, they did a pass of that with the rig, but we painted that out and did our CG helicopter and CG Emily Blunt, plus smoke and dust, using DMM for the crash and simple blendshape work for crumbling of the metal,” says Kramer. “There was also one shot we didn’t have a plate for where the copter swings past camera and hits the far wall of the barn and bounces off. We used the LIDAR from the barn and set photography and reconstructed the plate – and then put in a CG helicopter.”

To Paris (but not for sightseeing)

Cage and Rita execute a plan to kill the controlling mimic – an omega – which has positioned itself inside the Louvre in Paris. For this sequence, Nick Davis called upon Framestore and visual effects supervisor Jonathan Fawkner to execute shots of J-Squad approaching war-torn Paris in a drop ship, being attacked by a horde of grunts and then crashing through the Louvre courtyard and the famous pyramid.

Imageworks shared a number of its key CG assets – including the drop ship, exo-suits, digi-doubles and mimics – with Framestore, which then re-purposed these assets for its needs. For the mimics and their tentacles, in particular, Framestore relied on its own instancing plugin called fShambles. “It was perfect for instancing the geo onto the wires of the creature,” says Fawkner. “It does make it hard to animate because the geometry doesn’t exist in a Maya scene – it really is just pulled together at render time into Arnold but it worked great. The same tech allowed us to place hundreds and hundreds of trees within the gardens, or 600 broken up cars.”

A major construction effort on Framestore’s part was that of Paris. Fawkner and a Framestore team visited the city for three days of surveying and photography. “We were on the ground photoscanning by photomodeling,” says Fawkner. “We modeled all the statues and the walls and gates – all of the details: the bins and the chairs, and some streets. We also made a collapsed Eiffel Tower. On one very snowy winter’s day I even went up an 80 meter crane that we parked in the Louvre courtyard – which went up and down while I took photographs freezing in the air! But it was great, it was such a great opportunity to be as high above Paris as possible since the area is a no-fly zone.”

The raid on the omega takes place at night, with Fawkner ensuring that visual effects enhancements of some of the practical photography – filmed at Leavesden – had a ‘night for night’ look. “Whenever you go out with movie cameras at nighttime you have to use a lot of light, but the audience accepts a certain fakeness of nighttime movie lighting,” he notes. “When we were trying to evoke the look of night I wanted to do it with fog and light atmosphere to show silhouettes as if the lighting crew had gone out and put all the big lights out there with smoke machines, and backlit monuments, so that it looked lit but in a movie lighting way.”

For shots of the drop ship that involved crashing through the Arc de Triomphe du Carrousel and also the Louvre pyramid, artists built around 15 ship variants with different cockpit and wing configurations that would be smashed off, plus aggregate damage as mimics interact with the ship. The archway and Louvre pyramid crashes made use of Framestore’s fBounce RBD toolset, with the glass shredding amid a steel frame – much like a steel net – also relying on Maya’s nCloth.

Framestore also had to implement large water sims for these shots as the ship skims over the flooded area. For that the studio used its own implementation of Naiad, called fLush, inside Maya. “We had a series of library mimic interactions and could instance fluid caches onto animated mimics and that meant we didn’t have to simulate a mile square volume of water,” explains Fawkner. “We could instance the splashes onto the mimics and it did a good job – there were the mimics with 50,000 lumps of geometry on them and they’ve got to swim through the water and interact with it. We kind of got lucky with the sims too – we had the camera really low to the water which played really well with the mimics thrashing through it, and the mimics are so fast and furious that all the water played up nicely to that.”
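Instancing library caches instead of simulating the whole flooded area needs a way to decide where and when each splash goes. One plausible approach, sketched in Python under stated assumptions: detect frames where a tracked point on a mimic (say, a tentacle tip) crosses down through a flat water plane, interpolate the entry point, and assign a pre-simulated cache to each event. This is not fLush’s actual method; the flat water level, the crossing test and the cache names are all hypothetical.

```python
Vec3 = tuple[float, float, float]


def splash_events(trajectory: list[Vec3], water_level: float = 0.0) -> list[tuple[int, Vec3]]:
    """Frames where a tracked point dips below the water plane, with the
    interpolated entry point where a library splash cache could be instanced."""
    events = []
    for frame in range(1, len(trajectory)):
        (x0, y0, z0), (x1, y1, z1) = trajectory[frame - 1], trajectory[frame]
        if y0 > water_level >= y1:  # crossed the surface going down
            t = (y0 - water_level) / (y0 - y1)
            events.append((frame, (x0 + t * (x1 - x0), water_level, z0 + t * (z1 - z0))))
    return events


def assign_caches(events: list[tuple[int, Vec3]], library: list[str]) -> list[tuple[int, Vec3, str]]:
    """Cycle through a library of pre-simulated splash caches, one per event."""
    return [(f, p, library[i % len(library)]) for i, (f, p) in enumerate(events)]
```

Cycling through the library keeps neighbouring splashes from looking identical; a production setup might instead pick caches by entry speed or size.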

Training day(s)

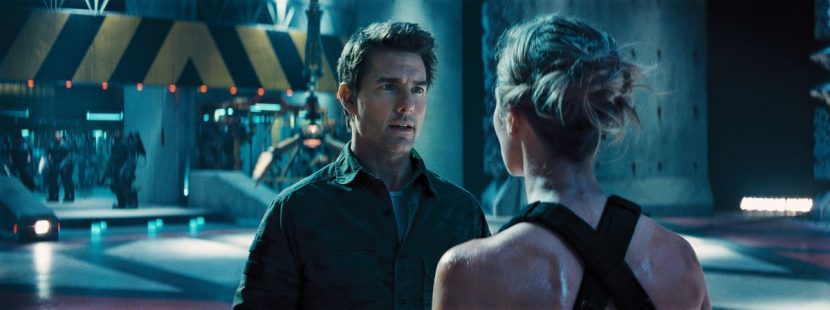

Once Cage learns of his reset abilities and meets Rita, she is able to train him to combat mimics with some mechanical stand-ins. Cinesite handled scenes involving both characters fighting the ‘training’ mimics.

“They were developed as if they were bolted together from spare parts,” recounts Cinesite visual effects supervisor Simon Stanley-Clamp. “They’re quite scrappy – the logos don’t match up, they’ve got bullet holes and scratches all over them. We also played with this in animation. When they’re spinning fast it’s useful to have some exposed metal so you can get streaks and nice highlights that show up.”

Production filmed scenes in a set at Leavesden in which the actors mostly mimed actions against the mechanical mimics. “Sometimes there’d be a guy with an air cannon just off camera blowing air on his face to get a nice reaction on his hair as a mimic whizzes by,” says Stanley-Clamp. “Or there’d be a few stuntmen in green with markers for eyelines on poles showing how close these mimics were going past. For the more physical stunts where Tom is getting thrown across the room, they had jerk rigs that could pull him really rapidly.”

Cinesite extended the area for certain shots and implemented a new roof with lighting. “We had even worked up the mechanics where these things travel around up there,” adds Stanley-Clamp. “It was like those printers that can only move x and y – so to get from one side of the room to the other it isn’t a straight line – it had to traverse across the room in a different way. It kind of un-hinged and re-attached itself to get about.”

Animation-wise, the meccas only hinted at a sly level of sentience as they powered up. “But they couldn’t be too clever,” acknowledges Stanley-Clamp. “We had to dial them down a bit and give them the appropriate mass and weight. Also, we often rendered them with different shutter angles so we could dial the motion blur in and out in comp and make them show up.”
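Rendering at several shutter angles works because the length of a motion-blur streak scales linearly with the fraction of each frame interval that the shutter is open. A short Python sketch of that relationship; the 48 pixels-per-frame speed used below is an arbitrary example value and the function names are hypothetical.

```python
def exposure_seconds(shutter_angle: float, fps: float = 24.0) -> float:
    """Exposure per frame: the shutter is open for shutter_angle / 360 of the frame interval."""
    return (shutter_angle / 360.0) / fps


def blur_length_px(speed_px_per_frame: float, shutter_angle: float) -> float:
    """Streak length for an object moving at a constant screen-space speed."""
    return speed_px_per_frame * shutter_angle / 360.0


# Passes a compositor could choose between for a mecca moving 48 px per frame.
passes = {angle: blur_length_px(48.0, angle) for angle in (90.0, 180.0, 270.0)}
```

At the conventional 180-degree shutter and 24 fps, exposure is 1/48 s and the 48 px/frame object smears across 24 px; the 90- and 270-degree passes give 12 px and 36 px.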

Artists added sparks and dust hits when the meccas collided with the characters, with Cage occasionally being realized as a Cinesite CG take-over. In one shot, Cage is hit so hard by the metal mimic that he is thrown into a wall and breaks his back. “They shot him against the wall for the final bump,” says Stanley-Clamp, “so there was a complete takeover there before that when he’s tossed in the air. There’s another shot I loved when he’s thrown across the floor and spins on his back like a turtle – the take-off was digital, then the landing is digital into a live action take-over of him spinning around with sparks and debris.”

Omega face-off

Cage makes his way to the location of the omega, which is hiding under the submerged Louvre car park. He eventually destroys the creature, even while being pursued by an alpha. MPC handled the VFX for this sequence, under visual effects supervisor Gary Brozenich.

Production filmed in an underwater tank at Leavesden, with MPC filling out environments and also contributing a CG Tom Cruise digi-double. The studio created the watery environment via Maya fluids and Flowline sims, adding in plankton, dust and debris and a disturbed water surface. For the omega explosion, MPC crafted the creature’s destruction using CG sims and practical elements that had been shot high speed in a glass tank with a Phantom camera. Cage interacts with some final alien ink to depict his final time loop – an effect sim’d with Maya fluids and formed also from a practical element shoot.

Making sense of the sets

With many of the sets for Edge of Tomorrow requiring digital extensions, the camera and visual effects teams on the film benefited from the use of the ncam camera tracking system to help in crafting shots and providing temp views both for the Heathrow sequences and those in Paris.

ncam is a multi-sensor bar mounted on the principal camera that relays real-time data to a separate server for tracking sets. It delivers camera position, rotation, focal length and focus information, which can then be fed to VR/AR graphics systems. On Edge, the filmmakers used ncam with MotionBuilder and ncam Reality software. As scenes were shot against greenscreen, a temporary digital set extension of the airport, along with moving military vehicles, was composited directly into the live image using the real-time tracking and comp software.
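For a CG set extension to line up with the plate, the virtual camera needs a matching field of view, which follows from the reported focal length and the width of the sensor or film gate: $\text{FOV}_h = 2\arctan\!\big(w / 2f\big)$. A minimal Python sketch; the per-frame record below is a hypothetical layout rather than ncam’s actual data format, and the anamorphic `squeeze` factor is an optional assumption for anamorphic lenses.

```python
import math
from dataclasses import dataclass


@dataclass
class CameraSample:
    """One frame of tracking data of the kind described above (field names are hypothetical)."""
    frame: int
    position: tuple[float, float, float]  # metres, in set coordinates
    rotation: tuple[float, float, float]  # pan, tilt, roll in degrees
    focal_length_mm: float
    focus_distance_m: float


def horizontal_fov_deg(focal_length_mm: float, sensor_width_mm: float,
                       squeeze: float = 1.0) -> float:
    """Horizontal field of view, 2 * atan(w / 2f), with w widened by any anamorphic squeeze."""
    width = sensor_width_mm * squeeze
    return math.degrees(2.0 * math.atan(width / (2.0 * focal_length_mm)))
```

With a 36 mm-wide gate and an 18 mm lens, `horizontal_fov_deg(18.0, 36.0)` gives 90 degrees; the same value results from a 36 mm lens with a 2x anamorphic squeeze.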

For the Paris sequences, the ncam camera bar was mounted underslung to the main film camera on a Libra stabilized remote head, and the ncam tether was rigged along the arm of a mobile 50ft Technocrane. Once the crane was positioned on the set, ncam instantly aligned the CG Paris to the practical set, by correlating geometry in the CG Paris model with visual tracking reference points from the video feed on the live camera.

During live filming, the Technocrane camera operators adjusted the camera’s position and orientation to frame the Louvre and the demolished Parisian skyline. The live composite signal was relayed back to the director, giving him visual information on screen direction, geography and eyelines for the actors. The tracking data was recorded in FBX format for use in post-production, and video village recorded the live composites, providing editorial with post-visualization material.

Envisioning Edge

As it has done on several blockbusters already this summer, The Third Floor contributed key previs and postvis services to the filmmakers to help explore certain scenes and guide principal photography and VFX. “Through previs it was possible to visualize how some scenes would play out if an idea was taken in a particular direction or, in some cases, as an alternate timeline of events,” outlines The Third Floor’s previs supervisor Albert Cheng. “It was also helpful to try out ideas in a potential location, or in a certain way before committing to that route and seeing what potential narrative and visual advantages were available in one location or another.”

The Third Floor worked on previs for the beach landing and its variants for each timeline, the training room, the Whitehall chase and the Paris landing, with postvis shots for many scenes in the film aiding the editorial process. The studio also created techvis diagrams for complex shots, aimed at planning camera movement and actor placement.

The drop ship beach sequence, in particular, was The Third Floor’s first outing on the film. “We started from well-drawn storyboards that began in the interior of the ship with J-Squad flying towards the beach,” says Cheng. “The idea from the beginning was that once everyone bails out after the impact, we would stay with Cage all the way until he hits the beach. The production team knew it would be the key thrill beat to see Cage’s point of view of the chaos throughout. All those beats, including Cage seeing the drop ship disintegrating, following him dropping out, getting yanked upwards as he hit the end of his line, and twirling with the rest of the squad, was all planned from the very beginning and stayed intact. So, as long as we could keep Cage as the focus of the entire shot, all the background chaos could play out around him and we understood what was happening.”

“Doug didn’t want crazy cameras or moves that would make a shot feel CG, so the camera is actually pretty straightforward,” adds Cheng. “Some of our initial previs versions had crazier moves, but it was ultimately about staying with the character, presumably as if shot from another tether, and that kept it more personal as well as readable. And one thing we knew going in was that Tom would be doing the actual stunt hanging from a cable, so that informed the choreography of the shot and being able to stay on him the whole time.”

Once on the beach, Cage’s movements and the battles against the mimics were also choreographed by The Third Floor, often with the aid of motion capture. “In battle scenes,” relates Cheng, “there tends to be a lot of background animation and fx so mocap really allowed us to focus on elements like the Mimic animation or stunts we couldn’t capture.” For the mimics, whose look and movement evolved throughout the film’s development, The Third Floor delivered several animation tests to show their ultra fast-motion and multi-directional attack capabilities. “Our first previs animation test illustrated this concept,” says Cheng, “only we took it to an R-rated level where the mimics would tear through a squad, impaling and eviscerating them. It looked cool and captured the deadliness of the creatures, but didn’t work for a PG-13 film. ‘Less blood’ was directed at us on more than a few occasions.”

Cheng says that the approved previs animation cycle for the mimics would show the creature churning up “dust and dirt or water when it moved because Doug wanted the tentacles to shred whatever surface they were touching. We did this using textured particle emitters with dirt, smoke and dust textures that emitted around their feet. Motion wise, I think what was discovered over the course of production was that even though moving the mimics super fast was deadly, they would then seem impossible for any human to kill. I think they struck a nice balance of speed and ferocity while being believable that you could actually engage them in combat.”

“During postvis,” says Cheng, “and in particular in J-Squad’s trench, we animated the mimic to be this twirling death machine with tentacles whipping out, slashing the soldiers so they’d be thrown around to match what had been done by the stunt team. The tentacle rigs were hand animated and attached onto the core as needed. To emphasize the mimics’ crystalline-like body structure, we also used some particle emitters to kick out dark debris chunks whenever a mimic was shot or hit.”

Stereo challenges

Filmed in mono, Edge of Tomorrow was converted in its entirety by Prime Focus World, with production stereo supervisor Chris Parks working with Prime’s SVP Production Matthew Bristowe and senior stereographer Richard Baker. For Edge, Prime Focus expanded on its use of geometry and head cyberscans that it had utilized on Gravity and WWZ. “We used the cyberscans we received from film production to create a rigged model for each of the main characters,” says Baker. “These models were tracked and animated for each of their mid and close up shots, which covered around 900 shots in the film. This ensured we had highly accurate and consistent faces for our main characters. We also used LIDAR scans of the sets to create models for the recurring scenes with highly structural environments, such as the training room scenes.”

For the beach invasion, Prime Focus had to deal with in-camera dirt hits, water spray and explosions for conversion. “We extracted the dust, dirt and water spray where possible through detailed clean plating and keying methods,” explains Baker, “but we wanted to get some extra layering and transparency as well. This led to us using our particle team to generate extra dirt hits, missiles, and spray – not just for the extreme foreground but to fill up some of the mid-ground space also, to make it more interesting in 3D. To create some standout 3D moments we also modelled some larger debris, to be aimed toward camera and around the existing action.”

“Another scene that was interesting from a technical standpoint was the hologram table in the bunker,” adds Baker. “We worked closely with VFX vendor Nvizible to set up a pipeline for creating these holograms in stereo so that they could retain all the internal layering, which would be very difficult to achieve with just the 2D element package normally provided for conversion. Whilst Nvizible were comping the 2D versions of these shots they passed us their mono camera and temp geometry for the holograms.”

“We integrated this into our geometry for the room then passed back a stereo camera for Nvizible to render their hologram through to create clean stereo elements that match the depth of the converted plates. These elements were then passed back to us for the final stereo comp with stereo holdouts where necessary in the foreground. Any hand to hologram interactions with lighting effects also needed stereo mattes generated by us, by converting their mono comp mattes. This technique utilized the stereo camera generation toolset that we developed during our work on Gravity.”
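The stereo camera passed back to Nvizible ultimately rests on parallel-rig geometry. The sketch below, assuming cameras that look down +Z with +X as camera-right, splits a mono camera into left and right eyes offset by half the interaxial distance, then shifts each image so that points at the convergence distance sit on the screen plane. It illustrates the general technique only and is not Prime Focus World’s Gravity-era toolset; `Eye`, `make_stereo_pair`, `project_u`, `parallax_px` and the sample values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Eye:
    """One camera of a stereo pair (hypothetical structure).

    Assumes every camera looks down +Z with +X as camera-right, which keeps
    the sketch to horizontal geometry only.
    """
    x: float          # world-space position in metres
    y: float
    z: float
    focal_px: float   # focal length expressed in pixels
    shift_px: float   # horizontal image shift applied after projection


def make_stereo_pair(mono: Eye, interaxial: float, convergence: float) -> tuple[Eye, Eye]:
    """Split a mono camera into a parallel left/right pair.

    Each eye sits interaxial/2 to one side of the mono position, and each image
    is shifted so that points at the convergence distance get zero parallax.
    """
    half = interaxial / 2
    shift = mono.focal_px * half / convergence
    left = Eye(mono.x - half, mono.y, mono.z, mono.focal_px, mono.shift_px - shift)
    right = Eye(mono.x + half, mono.y, mono.z, mono.focal_px, mono.shift_px + shift)
    return left, right


def project_u(eye: Eye, point: tuple[float, float, float], width_px: int = 1920) -> float:
    """Horizontal pixel coordinate of a world point seen through one eye."""
    px, _, pz = point
    depth = pz - eye.z
    if depth <= 0:
        raise ValueError("point is behind the camera")
    return width_px / 2 + eye.focal_px * (px - eye.x) / depth + eye.shift_px


def parallax_px(left: Eye, right: Eye, point: tuple[float, float, float]) -> float:
    """Right-eye minus left-eye image position: zero on the screen plane,
    positive behind it, negative in front of it."""
    return project_u(right, point) - project_u(left, point)
```

For instance, with an assumed 1,800 px focal length, 6.5 cm interaxial and 3 m convergence, a point 6 m away gets 1800 × 0.065 × (1/3 − 1/6) = 19.5 px of positive parallax, placing it behind the screen. Rendering CG elements through a pair built this way is what would let them sit at the same depth as converted plates that share the same convergence.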

All images and clips copyright © 2014 WARNER BROS. ENTERTAINMENT INC.