After many years as one of the world’s largest and most accomplished visual effects studios, Weta Digital began a research project that has evolved into its own full-blown, physically based production renderer: Manuka. The project encompasses more than the final renderer. As part of the work in the lead-up to the new Avatar films, the studio has also developed a pre-lighting tool called Gazebo, which is hardware based, very fast and also physically based, so imagery can move from one renderer to the other while remaining consistent and predictable to the artists at Weta.

Manuka is a huge accomplishment, given that it not only renders normal scenes beautifully and accurately, but it handles the vast (utterly vast) render complexity requirements of the company and it does so incredibly efficiently and quickly.

Weta Digital’s team recently won a Sci-Tech Oscar for their work with spherical harmonics and their PantaRay renderer, which has allowed, since the time of the first Avatar, highly complex scenes to be pre-processed as part of Weta’s RenderMan pipeline. Manuka improves upon both tools. The new renderer started life as a small R&D research platform and has grown rapidly into a hard-core, very serious production renderer.

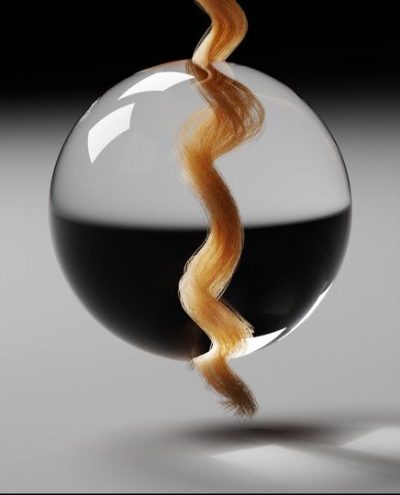

While the upcoming third Hobbit film will really be Weta Digital’s first major motion picture with Manuka as the primary renderer, some shots in The Desolation of Smaug and in Dawn of the Planet of the Apes were also rendered with Manuka. For example, in Dawn, when the apes ride into San Francisco and tell the humans to stay out of the forest in the “show of strength” scene, the vast numbers of apes – each with complex hair that would have taxed any renderer – were rendered in Manuka. For these scenes, the Dawn crew tested Manuka and found it significantly improved rendering, allowing all the complex apes to be rendered primarily in the same pass, something that could easily produce major memory problems with most other renderers.

In 1997, Eric Veach completed his Ph.D. thesis. For this and related articles he received the Sci-Tech Academy Plaque at this year’s Technical Oscars. His foundational research was on efficient Monte Carlo path tracing for image synthesis, and it can be said that Manuka is perhaps the most complete implementation of this approach to physically based production rendering that has yet been written. Veach’s work has transformed computer graphics lighting by more accurately simulating materials and lights, allowing digital artists to focus on cinematography rather than the intricacies of rendering, but many of the ideas were simply beyond what was practical in the late 90s. Still, Veach formalized the principles of Monte Carlo path tracing and introduced essential optimization techniques, such as multiple importance sampling, which make physically based rendering computationally feasible today.
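To make the idea of multiple importance sampling concrete, here is a minimal sketch, not Manuka's code, that combines two sampling strategies for a simple one-dimensional integral using the balance heuristic from Veach's thesis. The integrand, the two densities and all function names are illustrative assumptions chosen so the exact answer (1.0) is known.

```python
import math
import random


def balance_heuristic(pdf_a: float, pdf_b: float) -> float:
    """MIS weight for a sample drawn from strategy A (balance heuristic)."""
    return pdf_a / (pdf_a + pdf_b)


def power_heuristic(pdf_a: float, pdf_b: float, beta: float = 2.0) -> float:
    """MIS weight for a sample drawn from strategy A (power heuristic)."""
    a, b = pdf_a ** beta, pdf_b ** beta
    return a / (a + b)


def integrand(x: float) -> float:
    """Toy integrand (assumption): 3x^2 integrates to exactly 1 on [0, 1]."""
    return 3.0 * x * x


def pdf_uniform(x: float) -> float:
    return 1.0


def pdf_linear(x: float) -> float:
    return 2.0 * x


def mis_estimate(n: int, seed: int = 0) -> float:
    """Average n estimates, each using one sample from each strategy."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n):
        # Strategy 1: uniform sampling on [0, 1).
        x1 = rng.random()
        w1 = balance_heuristic(pdf_uniform(x1), pdf_linear(x1))
        total += w1 * integrand(x1) / pdf_uniform(x1)

        # Strategy 2: density 2x, sampled by inverting its CDF x^2.
        x2 = math.sqrt(rng.random())
        p2 = pdf_linear(x2)
        if p2 > 0.0:
            w2 = balance_heuristic(p2, pdf_uniform(x2))
            total += w2 * integrand(x2) / p2
    return total / n


if __name__ == "__main__":
    print(mis_estimate(20000))
```

Because the two weights at any point sum to one, the combined estimator stays unbiased while neither strategy's weak region dominates the noise. In a renderer the two strategies would typically be light sampling and BSDF sampling rather than these toy densities.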

Manuka started life as a technical validation of this approach. “The whole original experiment started as an exercise in being able to validate the models we had elsewhere in the rendering pipeline of the studio,” explained Luca Fascione, Rendering Research Supervisor at Weta Digital. “And so to do that we wanted to test the material models and the light transport algorithms, and so as part of that we followed fairly closely Veach’s thesis as it is the most unified treatment of the light transport algorithm if you are doing a path tracing approach.”

There are other very faithful implementations of path tracing and of the approach Weta has taken, Mitsuba for example. Mitsuba is a personal project of Dr Wenzel Jakob; it consists of over 150K lines of code and has been used in research projects at Cornell, MIT, University of Virginia, Columbia University, UC Berkeley, NYU, Berlin, TU Dresden, NVIDIA Research, Disney Research, Volvo Car Corporation, Square Enix and Weta Digital. Another key piece of work in this area is Matt Pharr and Greg Humphreys’ PBRT renderer, which accompanies their Sci-Tech Oscar awarded book Physically Based Rendering. (Mitsuba’s Jakob is a coauthor of the third edition of that book.) Manuka, coming in at well over 750K lines of code, is separate from PBRT and Mitsuba, and is completely production focused, whereas PBRT and Mitsuba are important research projects.

Weta Digital senior visual effects supervisor Joe Letteri discusses Manuka.

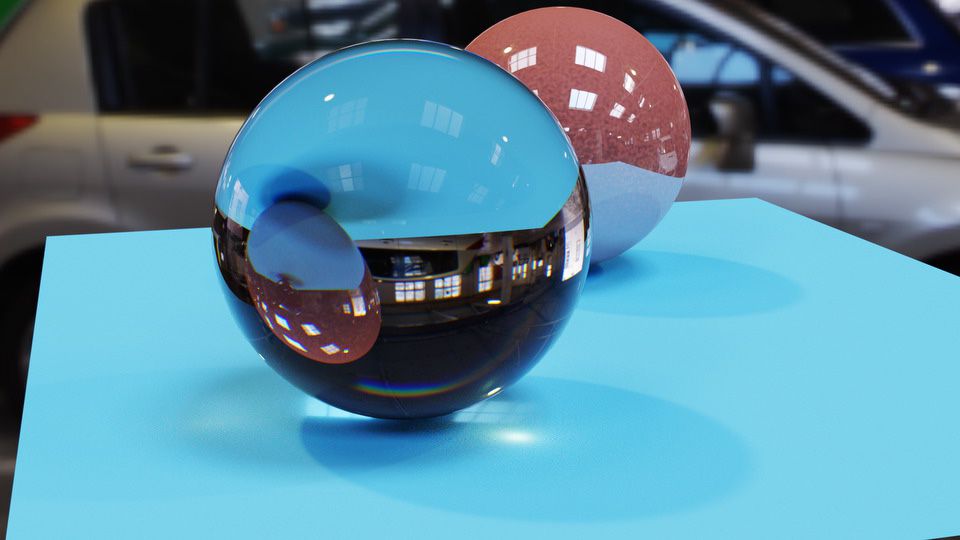

Manuka is focused on production, in terms of both the control a real world feature film production pipeline needs to achieve the director’s vision (and their deadline), as well as having a much more complex system internally that allows much greater model complexity to be rendered. Similarly, Gazebo started from work that was done in the lead up to the new Avatar films. What was key for Weta’s work in real-time or hardware GPU based rendering was that it gave artists an accurate preview of the final image. The lighting and responses to materials had to lead into Manuka evenly. Clearly Gazebo would not be able to handle the complexity that the large production renderer was aimed at, but if creative decisions were being made in Gazebo then it needed to be accurate and relevant to the final shots, or it would quickly become irrelevant. “Gazebo was our first foray into GPU rendering solutions that would improve the way we worked across the whole pipeline,” states Jedrzej Wojtowicz, head of the Shaders Department at Weta Digital. “We actually knew what Gazebo was ahead of the start of our rendering experiments in the offline world.”

This meant that the team wanted to pass along what had worked well, but also how they would need to modify Gazebo to bring it in line with Manuka, so that they would work together. “A lot of that came down to them rendering as much as possible with the same physical restrictions,” says Wojtowicz. “The main thing we were trying to do was make sure the shading and material description was up to the same standard in Gazebo.” Both Manuka and Gazebo would then use the same reflectance models so that the look development would translate from one renderer to the other. While the result is not perfect – especially as they have different use cases (in other words they are trying to achieve different things: realtime vs final ultimate quality) – most of the effort was already in the right direction as Gazebo had started from the outset as a physically plausible shader-driven renderer. The move has proven already to be a success. “The main thing to artists is that the light angle, the compositional aspects of a shot, all match as closely as possible,” says Wojtowicz.

Any normal path tracing ray tracer will work such that it produces an image, noisy at first and then progressively better. This allows Manuka to be used as a progressive refinement render preview tool, leveraging an artist’s ability to work with partially rendered images. In addition, “it is not too difficult to implement on top of a path tracer some kind of re-lighting, interactive rendering kind of approach, and most renderers (which are path tracers) do that,” comments Fascione.
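The progressive behaviour described above can be sketched as a running per-pixel average: each pass contributes one noisy estimate per pixel, and the displayed image is the mean of all passes so far. This is a generic illustration, not Manuka's implementation; `ProgressiveImage` and its methods are hypothetical names.

```python
from typing import List, Sequence


class ProgressiveImage:
    """Accumulates noisy per-pixel estimates into a running mean."""

    def __init__(self, pixel_count: int) -> None:
        self.passes = 0
        self.mean: List[float] = [0.0] * pixel_count

    def add_pass(self, samples: Sequence[float]) -> None:
        """Fold one pass (one new estimate per pixel) into the image."""
        if len(samples) != len(self.mean):
            raise ValueError("pass size must match the image size")
        self.passes += 1
        k = self.passes
        # Incremental mean update: no need to store earlier passes.
        self.mean = [m + (s - m) / k for m, s in zip(self.mean, samples)]


if __name__ == "__main__":
    image = ProgressiveImage(pixel_count=2)
    image.add_pass([1.0, 0.0])
    image.add_pass([0.0, 1.0])
    print(image.passes, image.mean)
```

The image is usable after the first pass and only gets cleaner, which is what lets an artist judge a shot from a partially converged render.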

Gazebo is central to blocking and quick interactive feedback across a whole variety of areas inside Weta Digital’s pipeline at a whole variety of frame rates, “where you can playblast your lighting, you can see how your lighting works in the context of the whole sequence,” adds Wojtowicz. “Then when you are happy with the broader blocking you can start using the re-lighting engine to help refine say key frames,” and get some of the indirect light transport that Gazebo does not necessarily do. Finally, explains Wojtowicz, there are the master final renders, which encompass all of the complexity and subtlety Manuka has to offer.

Manuka is both a unidirectional and bidirectional path tracer and encompasses multiple importance sampling (MIS). Interestingly, and importantly for production character skin work, it is the first major production renderer to incorporate spectral MIS in the form of a new ‘Hero Spectral Sampling’ technique, which was recently published at the Eurographics Symposium on Rendering 2014. The term ‘hero’ spectral samples comes from the film industry jargon of the ‘hero shot’ or ‘hero render’. The principle is that for, say, skin, the scattering is handled very differently for different wavelengths. One can deal with this in R, G and B or in a proper light spectrum. This is a non-trivial distinction, not only for the accuracy of the results (less noise for similar effort), but because for it to work the whole pipeline needs to be spectral, including materials and BSSRDFs. A hero wavelength is then the basis for an MIS-style directed spectrum.
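As a rough sketch of the wavelength selection step only, the snippet below picks one hero wavelength from a uniform random number and derives the remaining wavelengths by rotating it through the visible range in equal steps, which is how the published hero wavelength technique is usually summarised. The 380–780 nm range, the count of four and the function name are assumptions for illustration; the MIS combination of the per-wavelength results is not shown, and none of this is taken from Manuka's source.

```python
from typing import List


def hero_wavelengths(
    u: float,
    count: int = 4,            # assumed number of wavelengths per path
    lambda_min: float = 380.0, # assumed visible range in nanometres
    lambda_max: float = 780.0,
) -> List[float]:
    """Return the hero wavelength followed by count - 1 rotated companions."""
    if not 0.0 <= u < 1.0:
        raise ValueError("u must be in [0, 1)")
    if count < 1:
        raise ValueError("count must be at least 1")
    span = lambda_max - lambda_min
    hero = lambda_min + u * span
    # Shift the hero by j/count of the range and wrap back into the range.
    return [
        lambda_min + (hero - lambda_min + j * span / count) % span
        for j in range(count)
    ]


if __name__ == "__main__":
    print(hero_wavelengths(0.5))
```

The hero wavelength drives the directional decisions along the path, while the companion wavelengths ride along and reuse the same path, which is where the saving over tracing each wavelength separately would come from.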

Spectral rendering is only part of the solution. Weta can do everything from brute force Monte Carlo to quantized diffusion or the simpler dipole method; apart from anything else the Manuka team want to run everything so they can provide production with test comparisons and look at relative render time efficiency.

While forms of spectral rendering had previously been published, the technique was very expensive and only a few renderers such as Mitsuba supported it. The exception is Maxwell from Next Limit. The Maxwell software captures much more of the light interactions between the elements in a scene as all its lighting calculations are performed using spectral information and not RGB. A good example of this is the sharp caustics which can be rendered using the Maxwell bidirectional ray tracer with their Metropolis light transport (MLT) approach. “Maxwell uses spectral rendering from its first beta in 2004. It is the first commercial renderer that did that and actually some of the best kept secrets in our algorithms are related to this area, because rendering spectrally with a minimum impact in render times and memory consumption is a challenge”, explains Juan Canada, Head of Maxwell Render Technology.

Manuka is different from Maxwell in that it has an advanced new spectral multiple importance sampling solution. Maxwell uses a set of 12 wavelengths, worked out differently depending on the shot but fixed in number (Next Limit do make special builds with more for, say, lens design and scientific testing). These 12 are a spread of distributed wavelengths around the main primary wavelength. In contrast to existing spectral MIS approaches, the Manuka approach is directly usable with photon mapping techniques, which means that it integrates well into multi-pass global illumination techniques, which matters to Weta as it needs to be production flexible.

The spectral work also helps match computer generated objects to real ones, more closely matching colors and responses – making CG objects ever more difficult to pick from reality.

Weta Digital visual effects supervisor Erik Winquist talks about the use of Manuka.

One important part of adding production value and scene complexity is volumetrics. Much research has been done in this area. There are many solutions on offer, namely photon mapped volumes and beam solutions in addition to more brute force approaches. “Various different approaches have proven to provide other interesting speed/quality tradeoffs for us,” says Fascione. “One thing we always kept alive was the ability to do full volumetric scattering simulations, which has proven enormously useful in verifying and validating our other models and approximations. We’re certainly interested in following the research in this domain, as well as the various opportunities coming from the followups to the VCM paper.”

For a film like The Hobbit: The Battle of the Five Armies or any of the complex effects films of its type that Weta must face, artists work in a production environment with the most complex scattering – both volumetric and sub surface. “Haze on top of skin, on top of hair with complex material models and with cloth, all in the same picture, and they all have to land with the same level of quality, together, and this tends to not be what your typical research platform does,” points out Fascione.

On Dawn of the Planet of the Apes, the production team was particularly impressed with how Manuka handled fur, something it was able not only to render well, but to handle with hundreds of ape characters on screen at once. Other companies have bidirectional path tracing that works well for many scenes, but few claim to be incredibly efficient at vast numbers of separate hairy characters all rendered at once, not instanced, unique and at very high quality in stereo. Apart from anything else, the memory limitations should defeat Manuka, and clearly they do not. “From our algorithmic research,” notes Fascione, “we have learnt that there is a place in medium to large scale shots where our approach really shines. You can have 60 to 70 to 80 hero apes in a frame and it will render just fine, and before it used to be really hard to do a shot like that.”

In terms of the pipeline, everything rendered at Weta was already completely interwoven with their deep data pipeline. Manuka very much was written with deep data in mind. Here, Manuka does not so much extend the deep capabilities as fully match the extremely complex and powerful setup Weta Digital already enjoy with RenderMan. For example, an ape in a scene can be selected, its ID is available and a NUKE artist can then paint in 3D, say, a hand and part of the way up the neutral posed ape. This can then be dropped immediately back into the scene and now the hand has a traveling matte, based on deep data, even as the ape walks and moves, thus in no way maintaining the neutral limb extended pose used to define the matte. The ability to use deep to paint one neutral posed ape, and then avoid maybe hundreds of frames of roto as that hand moves behind and in front of other apes, is remarkably powerful. This ‘Deep-CompC’ tool was developed in RenderMan and now also fully runs in Manuka.
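To illustrate why deep data makes such mattes possible, here is a small generic sketch of a deep pixel: a list of samples, each with a depth, a premultiplied colour, an alpha and an object ID. Flattening composites them front to back, and an ID matte measures how much of one object remains visible after nearer samples occlude it. The data layout and function names are assumptions for illustration and are not the Deep-CompC tool or Weta's file format.

```python
from typing import Iterable, NamedTuple, Tuple


class DeepSample(NamedTuple):
    depth: float      # distance from camera
    color: float      # premultiplied, single channel for brevity
    alpha: float      # coverage/opacity of this sample
    object_id: int    # which object produced the sample


def flatten(samples: Iterable[DeepSample]) -> Tuple[float, float]:
    """Composite deep samples front to back with the 'over' operator."""
    color, alpha = 0.0, 0.0
    for s in sorted(samples, key=lambda s: s.depth):
        visible = 1.0 - alpha          # how much still shows through
        color += visible * s.color
        alpha += visible * s.alpha
    return color, alpha


def id_matte(samples: Iterable[DeepSample], object_id: int) -> float:
    """Visible coverage of one object, accounting for nearer occluders."""
    matte, alpha = 0.0, 0.0
    for s in sorted(samples, key=lambda s: s.depth):
        visible = 1.0 - alpha
        if s.object_id == object_id:
            matte += visible * s.alpha
        alpha += visible * s.alpha
    return matte


if __name__ == "__main__":
    pixel = [
        DeepSample(depth=2.0, color=1.0, alpha=1.0, object_id=7),
        DeepSample(depth=1.0, color=0.25, alpha=0.5, object_id=3),
    ]
    print(flatten(pixel), id_matte(pixel, 7))
```

Because occlusion is resolved per sample by depth, the matte for a given ID updates automatically as the object moves behind or in front of others, with no hand roto.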

In terms of camera models, Manuka currently offers orthographic and projection mapping only (simply because no one has asked for anything else yet, such as spherical or cylindrical projection rendering). Manuka has highly advanced depth of field and motion blur. It does not currently support rolling shutter simulator camera models, but Weta Digital will very soon be implementing new shutter modeling that will allow accurate rendering of these artifacts, if required. “Personally, it (camera modeling) is something I like a lot and I have done a fair amount of work and I have asked the team to implement these,” says Fascione. “One thing that we have already that not many renderers have is that we can offset the film back and also tilt it in both angles, so the orientation of the film back is independent of the orientation of the lens axis.”

In terms of AOVs, the studio approach is to succeed in the render rather than pass a multi-pass render to compositing. “We actually try and limit the number of AOVs so we have a limited number of arbitrary outputs by design – rather than limitation,” explains Wojtowicz. “The whole point of physically based rendering is that it does all adhere to the same principles.”

For lighting, the approach is based on area lights and IBL / environment lighting. Importantly, an arbitrary shape can be a light source so a fire simulation can be a light source. Any volume or shape can be a full area light emitter. “The renderer was always designed to handle a ridiculous amount of lights, more than say a million. And that allows you to do all sorts of things, suddenly volumes, multi-colored lights and textured lights, it all just becomes a non-issue – and that is cool,” outlines Fascione somewhat proudly.
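With very large light counts, a renderer cannot afford to evaluate every light at every shading point, so it usually picks one stochastically. The sketch below shows a common textbook approach, choosing a light with probability proportional to its power via a cumulative table and binary search. It is an assumption-laden illustration, not a description of how Manuka actually selects lights; `LightSampler` is a hypothetical name.

```python
import bisect
import itertools
from typing import List, Sequence, Tuple


class LightSampler:
    """Selects a light index with probability proportional to its power."""

    def __init__(self, powers: Sequence[float]) -> None:
        if not powers or any(p < 0.0 for p in powers) or sum(powers) <= 0.0:
            raise ValueError("need non-negative powers with a positive sum")
        self.powers: List[float] = list(powers)
        # Running totals form an unnormalised CDF over the lights.
        self.cdf: List[float] = list(itertools.accumulate(self.powers))
        self.total: float = self.cdf[-1]

    def sample(self, u: float) -> Tuple[int, float]:
        """Map u in [0, 1) to (light index, probability of choosing it)."""
        index = bisect.bisect_right(self.cdf, u * self.total)
        index = min(index, len(self.cdf) - 1)  # guard against rounding
        return index, self.powers[index] / self.total


if __name__ == "__main__":
    sampler = LightSampler([1.0, 3.0])
    print(sampler.sample(0.1), sampler.sample(0.5))
```

Each selection costs a binary search, so the per-sample cost grows only logarithmically with the number of lights. The returned probability is what the estimator divides by to stay unbiased.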

In terms of shaders, Weta Digital does not support Open Shading Language (OSL), as Manuka was designed to work with the studio’s current shader pipeline. “We really needed to make it a streamlined transition process – we did not have a TD go through for three days with a conversion tool or script to see anything,” says Wojtowicz. “In reality there was a limited amount of impact or rewriting of our shaders. Essentially both RenderMan and Manuka can run on the same shaders – with a very limited amount of additional Manuka specific code.”

The team supports both Ptex and UV mapping; the renderer forces no single approach on the production team moving forward. Older scenes and models can be handled without costly conversion.

In terms of instancing, Manuka does have powerful instancing support but it is different from most other production renderers. Weta believes their implementation is extremely efficient – but with certain limitations, and they are still investigating if those limitations will impact production. The area is still being researched. Today it works and works extremely well, but it does not aim to be all things to all people at the cost of render time. Weta is very much focused on rendering in their high end production environment. PantaRay is no longer required to keep their huge scenes within memory limits, but Manuka clearly does have some tricks in it that Weta is not ready to reveal. After all, they have been working on large scale rendering research for at least six years. “All I can say is, we have learnt a thing or two about storing polygons in memory!” jokes Fascione.

If a shot needs to be re-rendered and that shot was initially done with PantaRay, it can be rendered. There were lessons learnt in developing and maintaining that tool, but Manuka does not simply reuse that code. Manuka separately solves the problem that PantaRay was developed to solve, and it can still handle Weta’s legacy shots, whereas before, to render vast, complex scenes, the image had to be fractured to be rendered, pre-rendered, or broken down into components to get the final shot out the door. Data storage and data management have been simplified as a result. “If I was to take a scene from say Tintin now, I would be able to open it, make changes and – assuming the textures and images are still online – just press go and render it,” says Wojtowicz.

It is worth noting that it has only been in the last couple of years, perhaps three, that the experiment evolved into the idea of making Manuka Weta’s primary renderer. The first film that included a shot rendered with Manuka was last December’s The Desolation of Smaug. Dawn had a few shots and the next Hobbit film will be primarily rendered in Manuka. Given the long range planning of Weta generally, and the impressive slate of planned films, the Weta R&D team follows the general philosophy laid down by Joe Letteri of “going after research wholeheartedly.”

To make Manuka and Gazebo, Weta’s team has worked solidly for years doing hard-core rendering research, and they have had a load of talented contributors and interns working with them from around the world. They have chosen to make some unexpected and novel algorithmic choices, all with very specific production biases in mind. Fascione finishes by summarizing their approach to the realities of production rendering at the level Weta works at. In Wellington, at Weta, every night the farm burns away producing shots for dailies the next morning: “The reality is you have to make sure our shots can render and be there for the morning – no matter how complex – otherwise you can’t make a movie. You can do lots of other interesting things, but you can’t make movies.”

Simply amazing! I hope the other companies use this as a push to update their software. Often companies will hold back new tech because it’s not financially beneficial to give you something that’s not out, but now that they realize there is something of value out there, even if it may not be on the general market, we know what’s possible. So hopefully they will deliver. Hair is one of those issues where, in my own tests, I’m about 85% of the way to realistic looking hair out of Maya; it’s the last 15% that I’m having an issue with. A single frame is about 90%; the hard part is animating for a live sequence and having light and color data hold up through the same pass with body geo, with dynamic shadowing onto the body that looks realistic. I would love to see this up close and learn what system requirements it depends on.