SIGGRAPH 2012 has wrapped up in LA – here’s a look at some of the further highlights of the week, with news from Side Effects, the RenderMan user group, NVIDIA, Massive, Open Source, Dreamworks, and the Weta Virtual Production session.

Side Effects

Side Effects had several key announcements at the show: a new pricing structure and a cool new asset marketplace. The company has rebranded its flagship product, Houdini Master, as Houdini FX. This version includes all Houdini features with a focus on particles, fluids, Pyro FX, cloth, wire and rigid body dynamics.

With the new name comes a significant price reduction as well, with Houdini FX now available for $4,495 (savings of over $2,000). Houdini, formerly known as Houdini Escape, remains priced at $1,994. It’s become a popular choice for many studios with its comprehensive set of procedural modeling, rendering, and animation tools and its ability to open up and render scenes and assets created in Houdini FX. Customers now get their first year on the Annual Upgrade Plan for free — something that previously cost an additional $800.

One very cool announcement from the company was a brand new asset marketplace called Orbolt™. Artists can join Orbolt and become asset authors, creating Smart 3D assets using Houdini’s Digital Asset technology. Artists don’t even need a commercial version of Houdini to create and sell assets; they can be created in the non-commercial Houdini Apprentice version as well.

Artists set their own prices for the uploaded assets and Orbolt delivers them around the world to other artists. There is some curating by Side Effects, as all submissions from users are tested and verified before they are offered for sale. Creators of these smart assets receive 70% of the sale price, with Side Effects taking the remaining 30% as commission. The app store model seems fair in marketplaces such as this.

Houdini has had “digital asset” technology for some time. At a basic level — and this is admittedly an oversimplification for explanation’s sake — it allows users to group a network of operators/nodes and encapsulate them in a single, simple place. A custom UI for the asset can be made which exposes only the values one wishes to expose. Because of Houdini’s procedural nature, these truly open up a broad range of possibilities for artists.

In the past, if an artist created an asset for sharing, one drawback was that it could be broken apart and examined because the asset files were plain text. But the new smart assets system includes compiling of the code, which prevents users from seeing an asset’s contents. This also enables licensing, which lets artists make money from their creations. In addition, the new dedicated Orbolt site makes searching for the assets and sharing them much easier than it’s been in the past.

Digital assets might include characters, props and sets, effects, lighting and shading operators, and even custom tools and more. It’s much, much more than grouping a process tree or creating a gizmo in an app such as NUKE.

Some interesting examples from the new site include:

- A greebles generator that can be applied to a variety of geometry

- A stadium creator that can be customized for different applications

- A motion captured male with a variety of action cycles and clothes

If you’d like to know more about how to create digital assets, check out an overview video on the Side Effects site. The presentation was made a year ago, but it provides a good overview of the tech.

And it wouldn’t be SIGGRAPH without the annual Side Effects party. Celebrating the 25 year anniversary of the company, they took over the ESPN Zone on Tuesday evening with a huge party that lasted past midnight.

Pixar RenderMan User Group

Wednesday night was the Pixar RenderMan User Group, with a massive turnout. This is a chance for Pixar’s RenderMan team to lay out the upcoming roadmap and for users to present Stupid RAT (RenderMan tricks).

RenderMan had a huge release with version 16; so significant was this release that companies such as DNeg overhauled their whole rendering and lighting pipeline as a result (hear this week’s fxpodcast). The next release builds on this and improves performance. Gold mastering of the new RMS 4 and RPS 17 is coming very soon, with a ‘wide’ beta starting the week after SIGGRAPH and final gold mastering planned for the end of September. Interestingly, a 17.1 b1 release will follow not long after, around the 1st of November. This is part of the new rolling release schedule, which aims to get out any new features that Pixar “feels won’t get in the way of 17’s stability”.

Chris Harvey ran through RMS 4. The key features are:

- Scalability and speed

- Faster rib generation and better archiving

- Better lighting and shading

- Added modern area lights

- Blockers

Julian Fong then ran through RPS 17’s features including:

- Object instancing new implementation

- Memory saving

- Multiple avenues for variation

One of the biggest additions to 17 is object instancing, something already available in, say, Arnold. Fong gave a great demo of the advantages of instancing by building a tree and then populating a forest. Based on his morning walks through a park and a guess at tree density, he calculated he needed 76,227 trees for his scene. With instancing this fits in 284MB, but the same scene would require 48GB without instancing.
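To put Fong’s numbers in perspective, the short Python sketch below works through the arithmetic. It assumes the quoted sizes are binary megabytes and gigabytes and that the non-instanced memory is spread evenly across the trees; neither assumption comes from the talk, and the function name is purely illustrative.

```python
# Back-of-the-envelope arithmetic for the instancing figures quoted above.
# Assumptions (not from the talk): sizes are binary MB/GB, and the
# non-instanced memory is shared evenly by every tree in the forest.

TREES = 76_227
INSTANCED_MB = 284
FLAT_GB = 48


def instancing_savings(trees, instanced_mb, flat_gb):
    """Return the overall reduction factor and rough per-tree costs."""
    flat_mb = flat_gb * 1024
    return {
        "reduction_factor": flat_mb / instanced_mb,
        "flat_mb_per_tree": flat_mb / trees,
        "instanced_kb_per_tree": instanced_mb * 1024 / trees,
    }


if __name__ == "__main__":
    for name, value in instancing_savings(TREES, INSTANCED_MB, FLAT_GB).items():
        print(f"{name}: {value:.2f}")
```

Under these assumptions the instanced forest is roughly 170 times smaller, and each additional tree costs only a few kilobytes rather than more than half a megabyte.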

While there was an increase due to ray tracing over Reyes, Fong then built up his ray traced tree and forest. Just adding simple instanced leaves to his tree made no major difference, and adding more complex leaves helped, but it was as he worked through instancing branches (based on an earlier non-Pixar SIGGRAPH paper) that the file size dropped and dropped, until the tree was simplified but still remarkably similar to the direct approach. The results were stunning. While he was at pains to point out this had nothing to do with the forest of Brave, he then added various volume and backlit rays to the forest and maintained good render times. The source code is being added to the newly revamped Pixar RenderMan user site for users to download. Instancing allows for lower data sizes and faster rendering.

Pixar also indicated they were working on practical global illumination in volumes. And then Per Christensen showed improvements to Photon Mapping. While the technique has been around for almost a decade, Pixar had not revamped their Photon mapping recently and 17 changes that.

- Motion blurred photons so caustics get time interval blurred correctly

- Photon guided indirect diffusion

- Photon beams for volumes so motion blurs of say shadows in volumes are blurred correctly

- IBL lights improved, so they have less noise for the same render or can be done with fewer samples for the same noise level

- V16.6 saw compression of deep data: a test file that was 216MB at the old level would now be 40MB, for example, with no discernible visual difference.

- Deep compositing was fully supported by Pixar as was OpenEXR 2.0

- Deep Colour examples were shown that are now much smaller: 546MB in v16, now 184MB in 17.

Massive crowd sims

The last major release of Massive was version 4.0 in February 2010. Since then the company has restructured and is now back with a great new 5.0 release. The new 5.0 aims to address long-term user requests, new features and some interesting extensions, but it is how the product is being used by key customers such as Dreamworks, as presented at SIGGRAPH (DigiPro) that is of particular interest. We spoke to Stephen Regelous, founder of Massive software, who had a private suite off the show floor showing the newest work in Massive crowd simulation.

Massive runs rule-based agents that perform tasks and are varied through key procedural tools, so that massive crowds, traffic and other rule-based characters can be rendered from the crowd simulation with real production value. Massive itself does not final crowd shots; it works as part of a pipeline.

In terms of agents, one aspect that always seemed to work against the realism of large shots was the mathematical distribution of the agents. The agents could always vary in size, but they varied evenly. In other words, if the agents were sized between 5ft and 6ft tall, their heights would be spread evenly across this range. Now with Massive 5.0, the distribution can be a ‘normal’ distribution, one shaped with full editorial control, or even a manually controlled distribution.

This leads to not only a more normal looking crowd, but it introduces odd outliers and misfits just as we have in everyday life. “I noticed that before if there was a tall agent then there were a lot of tall agents,” explained Regelous. The new distribution modes and user interface solve this distribution problem.
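The difference between the two distributions is easy to see in a few lines of Python. This is only an illustrative sketch using the standard `random` module, not Massive’s own sampling code; the mean of 5.5ft, the spread of 0.15ft and the function names are assumptions chosen to fit the 5ft to 6ft example above.

```python
import random


def uniform_heights(count, low=5.0, high=6.0, seed=2012):
    """Old behaviour: every height in the range is equally likely."""
    rng = random.Random(seed)
    return [rng.uniform(low, high) for _ in range(count)]


def normal_heights(count, mean=5.5, spread=0.15, seed=2012):
    """'Normal' behaviour: most agents near the mean, a few outliers."""
    rng = random.Random(seed)
    return [rng.gauss(mean, spread) for _ in range(count)]


def share_taller_than(heights, threshold):
    """Fraction of agents whose height exceeds the threshold."""
    return sum(1 for h in heights if h > threshold) / len(heights)


if __name__ == "__main__":
    crowd_size = 10_000
    for label, heights in (
        ("uniform", uniform_heights(crowd_size)),
        ("normal", normal_heights(crowd_size)),
    ):
        print(label, f"{share_taller_than(heights, 5.9):.3%} taller than 5.9ft")
```

With the uniform sampler, roughly one agent in ten ends up taller than 5.9ft, which matches Regelous’ observation about seeing a lot of tall agents. With the normal sampler and these assumed parameters, well under one agent in a hundred does, so a tall agent reads as an outlier.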

When fxguide last spoke to Massive, the company had released its ‘lanes’ option which allowed for realistic traffic, something that came from work done for Peter Jackson’s King Kong. Originally roads required a special ‘paint’ down the middle for the agents to follow. The latest version of lanes allows for an important variant, which lets a car move or perhaps drift out of the fixed lanes and still be aware of the lanes and sensibly drive back to the primary road. This is akin to a car going off the track on a corner of a race and driving back on the track. Not only is this much better than the previous ‘lost driver’ option which had agents driving off completely, but it works well with the new 3D lanes that allow cars or agents to fly, yet still conform to 3D virtual lanes.

The user interface has been redesigned to allow many improvements in workflow. There is a new on screen controller that allows most common adjustments of scale, rotation or translation without having to hold down additional keys. The agents can now be previewed for positioning and there is a scrub feature that allows for previewing your work. But the changes run deeper. The program used to build variation based on the id of the agents, so if you adjusted the fundamentals of a simulation it could internally renumber the agents, and in so doing all the variations would change: an agent with a shield would now have the spear, the spear agent an axe, etc. Now the program assigns a unique id that will not change even if you alter the fundamentals, and thus maintains the current variants.
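Why a stable id matters can be shown with a small Python sketch. It is not Massive’s implementation: the hashing scheme, the agent names and the function names are all assumptions made for illustration. The first function ties variation to an agent’s position in the list, so removing one agent reshuffles everyone after it. The second derives variation from an id that never changes.

```python
import hashlib

WEAPONS = ["shield", "spear", "axe"]


def assign_by_index(agent_ids, options):
    """Variation keyed on list position: renumbering changes the result."""
    return {agent: options[i % len(options)] for i, agent in enumerate(agent_ids)}


def variant_for(stable_id, options):
    """Pick a variant from a hash of the agent's permanent id (illustrative)."""
    digest = hashlib.sha256(stable_id.encode("utf-8")).digest()
    return options[int.from_bytes(digest[:4], "big") % len(options)]


def assign_by_stable_id(agent_ids, options):
    """Variation keyed on the id itself: unaffected by other agents."""
    return {agent: variant_for(agent, options) for agent in agent_ids}


if __name__ == "__main__":
    agents = ["agent-a", "agent-b", "agent-c", "agent-d"]
    edited = agents[1:]  # the sim is adjusted and the first agent disappears

    print("by index, before:", assign_by_index(agents, WEAPONS))
    print("by index, after: ", assign_by_index(edited, WEAPONS))
    print("by id, before:   ", assign_by_stable_id(agents, WEAPONS))
    print("by id, after:    ", assign_by_stable_id(edited, WEAPONS))
```

In the index-based version, agent-b swaps its spear for a shield as soon as agent-a is removed. In the id-based version every remaining agent keeps whatever it had.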

One major addition to Massive at the application level is that the software now supports 3ds Max. There is a new Max plug-in that allows sim work to be done and rendered from inside 3ds Max, just like any other 3ds Max objects. With V-Ray, rendering is fully integrated, and Massive agents can be rendered in context with the other objects in the scene. The plug-in is expected to be released shortly after the release of Massive 5.0 (within 2-3 months).

Dreamworks and Massive

What is of even more interest is not just what Massive is releasing but how teams are using it. Dreamworks in particular presented a brilliant paper at DigiPro 2012 (a SIGGRAPH co-located event) on accessing the Massive sim output data directly and using it to improve the characters that the agents drive. The key is a high quality render time deformation (RTD) engine, where high quality character geometry is generated on the fly. This technique required significant changes to Dreamworks’ animation rigs. Character TDs created Multidimensional Rigs, where a character can run in a ‘service mode’, interfacing with the RTD server to deliver any model in a crowd for any pose as requested by the renderer.

High quality deformations allow crowd characters to be pushed closer to camera, where their acting performance is highlighted. This was first seen in Madagascar 3: Europe’s Most Wanted. Right from the beginning of production, all of their generic ‘people’ characters were designed so that their facial expression would read the same way when the same animation was applied, allowing the Crowds department to ‘cast’ generic characters with any head variation with no additional overhead.

With crowd characters much closer to camera, they also receive more director feedback. To allow direction of these performances, they developed a system called ‘Hero Promotion’, which promotes crowd characters to hero assets. Crowds and Character TDs can now ensure that the hero promoted animation keys match both Massive’s agent positioning for shot composition and the original hand-animated cycle for easy animation augmentation. An example that was shown involved two agents in a casino moving next to each other. It looked like these two characters, a man and a woman, were a couple, so the director could ask for the two to be promoted to hero characters and key framed to be holding hands, while retaining all the joint sim data from the original Massive sim.

Dreamworks’ paper on this was one of the most interesting character pipeline papers at this year’s SIGGRAPH event – full credit to its Dreamworks authors Nathaniel Dirksen, Justin Fischer, Jung-Hyun Kim, Kevin Vassey and Rob Vogt (who we paraphrase here).

By doing render time deformations, Dreamworks also makes the whole pipeline much faster. Even for a mid-sized crowd in Madagascar 3 this can mean a huge difference. In the Dreamworks pipeline, all crowd cycles are key-framed by animators (the base walk cycles etc). Massive is used to blend these cycles, outputting joint locations that drive their animation rigs, from which models are baked and ultimately rendered. For even mid-sized crowds, the disk-space and network bandwidth requirements make this approach unworkable if full resolution models are used. Baked out geometry consumes about 2 MB per-frame per-character. Several shots in the circus scenes of Madagascar have thousands of characters. Each shot would use upwards of 500 GB if all character geometry was baked to disk.

The new system bypasses the need to store these models on disk; the RTD server requires only the joint positions, animation curves and character variation, decreasing disk usage to one percent of the conventional method. Each animation file is about 20KB but models produced from it are about 2 MB. When there are 1000 characters on screen, the geometry could require 2GB of space per frame and it would take 8 seconds to transfer them for rendering. If the shot has 100 frames, just transferring data would take about 13 minutes. With motion blur, the amount of data will be doubled, and it would take 26 minutes just for crowd model data to transfer. Even worse, the network pipe for intercontinental transfers is more limited: transferring models for a render in India could take nearly 2 hours. Render-Time Deformations decrease the network load to less than 1% of the time for a full model transfer, which is as important as the improvement to disk usage.
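The figures in this paragraph can be checked with a few lines of Python. This sketch takes the quoted per-character sizes and the 8 second per-frame transfer time at face value and assumes decimal units (1 GB = 1000 MB); the function names are illustrative only. At 2 MB per character, 1000 characters come to about 2 GB of baked geometry per frame, which implies a network rate of about 250 MB per second.

```python
# Worked arithmetic for the crowd data figures quoted above.
# Assumption (not from the paper): decimal units, 1 GB = 1000 MB = 1,000,000 KB.

MODEL_MB = 2.0          # baked geometry per character per frame
ANIM_KB = 20.0          # animation file per character
CHARACTERS = 1000
FRAMES = 100
SECONDS_PER_FRAME = 8.0  # quoted time to transfer one frame of baked models


def per_frame_geometry_gb(characters, model_mb):
    """Baked geometry needed for a single frame, in GB."""
    return characters * model_mb / 1000


def shot_transfer_minutes(frames, seconds_per_frame, motion_blur=False):
    """Total transfer time; motion blur doubles the data per frame."""
    samples = 2 if motion_blur else 1
    return frames * seconds_per_frame * samples / 60


def animation_share(anim_kb, model_mb):
    """Size of the animation data relative to the baked model."""
    return anim_kb / (model_mb * 1000)


if __name__ == "__main__":
    frame_gb = per_frame_geometry_gb(CHARACTERS, MODEL_MB)
    print(f"geometry per frame: {frame_gb:.1f} GB")
    print(f"implied bandwidth: {frame_gb * 1000 / SECONDS_PER_FRAME:.0f} MB/s")
    print(f"shot transfer: {shot_transfer_minutes(FRAMES, SECONDS_PER_FRAME):.1f} min")
    print(f"with motion blur: {shot_transfer_minutes(FRAMES, SECONDS_PER_FRAME, True):.1f} min")
    print(f"animation vs model size: {animation_share(ANIM_KB, MODEL_MB):.0%}")
```

The 20KB animation file is one percent of the 2 MB model, which lines up with the one percent disk and network figures quoted from the paper.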

In the Dreamworks paper they also outlined how their facial animation system was improved to allow high quality faces for crowd members. Dreamworks already had methods for sending their facial animation through Massive. Each animation control is represented as a blend shape curve, allowing blended animation values to be read from Massive’s output, converted into Dreamworks’ own animation format, and fed into the character. With some small modifications to the character rig, Crowds could also pipe this animation on the fly to the RTD server.

Massive has always had a great large scale production background, from its birth during Lord of the Rings. These new innovations for producing high quality crowd animations from industry leaders such as Dreamworks, coupled with Massive’s new advances, mean that the product is continuing to advance large crowd, traffic and sim work, adding tremendous production value to films and projects at every level.

NVIDIA Announcements

This week, NVIDIA introduced their second generation workstation platform, Maximus, as well as new versions of their professional Quadro and Tesla cards. What’s Maximus? Maximus certified workstations (from HP, Dell, etc) contain two NVIDIA cards – a matched Quadro® GPU as well as a Tesla® GPU. This divides the tasks normally done with a single card; the Quadro® drives the display and the Tesla® card is used for computationally intensive tasks, such as fluid dynamics simulation, GPU rendering, and real-time color correction.

The new version is made possible because of the core parts: a new generation Kepler Quadro® K5000 paired with a new Kepler Tesla K20. NVIDIA also announced new versions of the Quadro® K5000M, Quadro® K4000M, Quadro® K3000M, Quadro® K2000M, Quadro® K1000M, and Quadro® K500M.

These new “Kepler” cards feature a substantial leap in performance over the previous Fermi generation cards, with expected speed increases hovering in the 35% range. Improvements will obviously vary depending upon the software packages, and developers need to update some aspects of their software in order to take advantage of the new features. This increase in performance, however, comes with a substantial reduction in power use. For instance, the new Quadro K5000 card has a maximum 122W power consumption, while the previous Quadro 5000 was rated at 152W. So it’s faster and uses less power.

The Quadro K5000 doubles frame buffer capacity to 4GB (over the Fermi 5000), has a next-generation PCIe-3 bus that increases throughput by 2x compared with PCIe-2, drives up to four displays simultaneously, and supports DisplayPort 1.2 for resolutions up to 3840×2160 @60Hz.

Many of the hardware and software optimizations on the new Kepler platform have to do with attempting to minimize some of the traditional bottlenecks of getting data on and off of the GPU. Quite a bit of time is spent swapping information back and forth between the CPU and GPU, time that could otherwise be spent on processing the actual data.

What are the advances that make the new Kepler cards significantly different from the previous generation? Effectively it breaks down into several key areas:

- Increased Frame Buffer and PCIe-3 bus

- Bindless Textures

- Dynamic Parallelism

- SMX

- Hardware H.264 Encoder

Doubling the frame buffer from 2GB to 4GB in the K5000 should help the traditional bottleneck of getting imagery on and off of the graphics card. The aforementioned PCIe-3 bus, which is the path between the CPU and GPU, increases throughput by two times so by default there will be gains simply from this hardware advance. But obviously not all systems have PCIe-3 busses, so Kepler has other improvements to increase efficiency.

One example of this is the new bindless textures, making over 1 million simultaneous textures available on the GPU for shaders. This means that textures can more efficiently be loaded once onto the GPU, instead of having to wait to load (and unload) textures on and off of the graphics card. This makes it much more efficient not only for cards that do GPU ray-traced rendering, but also for non-CUDA type applications such as The Foundry’s MARI.

With Dynamic Parallelism, the GPU can spawn new threads on its own without going back to the CPU. If all of the computation can be kept on the card without touching the system bus, processing efficiency increases dramatically. Take simulations for instance. If you break sim processing up into a large grid, apps won’t need to load data from the CPU as frequently. However, processing is coarse.

The opposite holds true if apps make the grid finer to achieve higher quality results — there is quite a bit of swapping that goes on between the GPU and CPU. And much of this swapping of small regions might not include much data. But with Dynamic Parallelism, the GPU can work with larger coarse areas when appropriate — and then on the GPU itself spawn finer grain processes when appropriate without going back to the CPU. This makes processing much faster and happens transparently under the hood for end users.
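The coarse-then-fine pattern described here can be illustrated with a small CPU-only Python sketch. To be clear, this is not CUDA and does not touch the GPU; it only shows the shape of the work that Dynamic Parallelism lets the card schedule for itself. The recursive call stands in for the GPU spawning finer grained work, and the `near_origin` test is a made-up stand-in for whatever tells a simulation that a region needs more detail.

```python
def refine(cell, needs_detail, max_depth, depth=0):
    """Return leaf cells (x, y, size), splitting a cell in four while it needs detail."""
    x, y, size = cell
    if depth == max_depth or not needs_detail(cell):
        return [cell]  # coarse cell is good enough, process it as-is
    half = size / 2
    leaves = []
    for dx in (0, half):
        for dy in (0, half):
            child = (x + dx, y + dy, half)
            leaves.extend(refine(child, needs_detail, max_depth, depth + 1))
    return leaves


def near_origin(cell):
    """Hypothetical detail test: only the cell in the corner at (0, 0) is 'busy'."""
    x, y, _size = cell
    return x == 0 and y == 0


if __name__ == "__main__":
    leaves = refine((0, 0, 8), near_origin, max_depth=3)
    print(f"{len(leaves)} adaptive cells instead of {8 * 8} uniform fine cells")
    for leaf in sorted(leaves, key=lambda c: c[2]):
        print(leaf)
```

In this toy case an 8 by 8 domain is covered by 10 cells of mixed sizes rather than 64 uniformly fine ones, with the fine cells only where the detail test asks for them.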

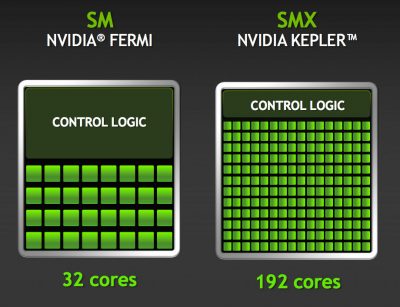

The new SMX module is an improvement in the core architecture that reduces the footprint of the control logic for the GPU. How does this help? If less space is taken up managing the overhead of control, more space can be used for cores that do actual processing.

This change leads to a substantial increase in the number of cores on a given GPU; for instance, the previous generation Fermi Quadro® 5000 had 352 CUDA cores. The new generation Quadro® K5000 has 1536 cores. However it is *very* important to know that this actual core number is not an apples to apples comparison; it does not mean the new card is five times faster. It’s faster — just not 5x.

What SMX does bring is a 3X increase in power efficiency on a core by core basis, which can be especially significant on mobile platforms for those of us who work remotely or on set. In addition to the improvements mentioned earlier regarding the K5000, there are portables such as the recently introduced retina MacBook Pro that contain a very energy efficient Kepler chip (the GeForce 650M) compared to the previous Fermi generation. The new mobile Quadro chips with the Kepler architecture see these power efficiency gains make it to the high end line.

Finally, the new Quadro® line contains an incredibly fast built-in hardware H.264 encoder. This can provide up to 8x realtime performance for 1080p30 video, effectively processing up to 240 frames per second. It supports Baseline, Main, High and Stereo H.264 profiles, resolutions up to 4K in hardware, and YUV 4:2:0 and planar 4:4:4.

The actual performance for sensible production settings will most likely be far below 8x. With high quality transcode settings, actual performance will be around 2x real time. That’s still an impressive number and we’re eager to try it out when manufacturers build support into their applications.

What does this all mean as far as real world performance gains for the new cards? At this point in time, hardware is just getting into the hands of software developers and it will take some time to support some of the new features in the Kepler architecture. So it’s the standard “too soon to know” for definitive, specific benchmarks. But overall, NVIDIA’s Greg Estes feels that a 35% increase in speed is a sensible benchmark.

At this point in time, the focus of NVIDIA for rendering and sims is not on replacing CPU render farms. That tech may come some day, but the reality of some of the tech issues (not enough memory, loading data on and off the card) for large scale render farms makes this a difficult challenge. So instead, it’s about being able to more quickly visualize particle sims or renders to better set up final renders. In this area, the cards excel.

For applications that use CUDA (Resolve and After Effects’ Ray Tracer), the additional cores will certainly increase performance, as will Dynamic Parallelism. But it’s not just CUDA apps that will see increases. Apps that use OpenGL and multiple/large textures such as MARI, Flame, NUKE, and others will benefit from the bindless textures, increased frame buffer memory, and (eventually with hardware upgrades) the PCIe 3 architecture.

Bottom line: It’s another very strong evolution in NVIDIA’s professional graphics line.

The new Quadro® K5000 is expected to ship in October with a suggested retail price of $2249. The Tesla K20 will be available in December with an MSRP of $3199.

Open Source

Both OpenEXR and Alembic had new releases at SIGGRAPH, and very well attended Birds of a Feather (BOF) user meetings. Plus there’s some news on OSL (Open Shading Language).

OpenEXR 2.0

Development of OpenEXR v2 has been undertaken in a collaborative environment comprised of Industrial Light & Magic, Weta Digital as well as a number of other contributors such as Pixar who have licensed some key code to the project.

Some of the new features included in the Beta.1 release of OpenEXR v2 are:

* Deep Data. Pixels can now store a variable length list of samples. The main rationale behind deep-images is to have multiple values at different depths for each pixel. OpenEXR v2 supports both hard surface and volumetric representation requirements for deep compositing workflows.

* Multi-part image files. With OpenEXR v2, files can now contain a number of separate, but related, images in one file. Access to any part is independent of the others; in particular, no access of data need take place for unrequested parts.

While a press release on a new version of a format might seem less than electric, the work shown by Weta using the new OpenEXR at the show was the exact opposite.

fxguide covered the film Abraham Lincoln: Vampire Hunter, in which Weta Digital employed its new deep compositing shadow solution called Shadowsling. At the DigiPro2012 conference they showed just how far they are already pushing deep image compositing.

The key to deep compositing is not only the savings from not having to render hold out mattes and the ease of layering or updating complex volumetric shots; it also promises to revolutionize what can be done during comp.

Deep Compositing is a relatively new technique. The process relies on rendering deep images, which can now be stored in OpenEXR 2.0. As Weta stated in their paper, Camera Space Volumetric Shadows, in these files each pixel stores an arbitrarily long list of depth-sorted samples. Elements rendered into separate deep images can be combined accurately to produce a single composited image of the entire scene, even if the images are interleaved in depth. This allows for great flexibility, since only elements which have changed need to be re-rendered, and the scene can be divided into elements without needing to consider how they will be combined. By comparison, in traditional compositing with regular images the scene must be divided into elements in such a way that they can be recombined without edge artefacts or depth order violations. Volumetric deep image pixels represent a volume as piecewise constant optical density and color as a function of depth. Volumetric renders of participating media are generally computationally intensive, so the ability to compute them independently and composite them correctly into the scene is highly advantageous.
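The idea of combining elements that are interleaved in depth can be sketched in a few lines of Python. This is a simplified illustration, not Weta’s code or the OpenEXR API: each deep pixel is treated as a plain list of point samples with premultiplied color, the names `Sample`, `merge` and `flatten` are invented for the example, and the volumetric case (samples that span a depth range and may need splitting) is left out.

```python
from typing import List, NamedTuple, Tuple


class Sample(NamedTuple):
    depth: float
    rgb: Tuple[float, float, float]  # premultiplied by alpha
    alpha: float


def merge(*pixels: List[Sample]) -> List[Sample]:
    """Combine deep pixels from separate renders into one depth-sorted list."""
    return sorted((s for pixel in pixels for s in pixel), key=lambda s: s.depth)


def flatten(samples: List[Sample]) -> Tuple[Tuple[float, float, float], float]:
    """Composite depth-sorted samples front to back with the 'over' operation."""
    r = g = b = a = 0.0
    for s in samples:
        visible = 1.0 - a  # how much of this sample is not yet covered
        r += visible * s.rgb[0]
        g += visible * s.rgb[1]
        b += visible * s.rgb[2]
        a += visible * s.alpha
    return (r, g, b), a


if __name__ == "__main__":
    # Two wisps of half-opaque grey smoke, rendered as one element...
    smoke = [Sample(1.0, (0.25, 0.25, 0.25), 0.5),
             Sample(3.0, (0.25, 0.25, 0.25), 0.5)]
    # ...and an opaque red character rendered separately, sitting between them.
    character = [Sample(2.0, (1.0, 0.0, 0.0), 1.0)]

    print(flatten(merge(smoke, character)))
```

Because the samples are merged by depth before flattening, the front wisp of smoke tints the character and the rear wisp is hidden behind it, with neither element rendered with a hold out matte for the other.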

For Abraham Lincoln, Weta separated the shadows and lighting from the volume. This allows for large amounts of flexibility during compositing.

Thanks to Weta we can now also release three videos showing some more of that work. In this first video below a demonstrative render shows inverted shadows (for clarity) on a static volume with an animated light source, demonstrating the shadow light shafts are consistent under motion.

In this second video, deep composite is shown with shadows and phase function of an animated light applied to static volumes, created by fx renders and procedurally within the Nuke plugin. The static environment is unlit (black) and is composited in depth to give silhouettes.

In this third video, deep composite is combining the shadows and phase function with a static environment lit by the same light source. The volumes are once again static and rendered without shadows and lighting, which is applied in the deep composite in Nuke – at Weta.

The paper went further to show how some work can be done directly in Nuke. Nuke produces a low frequency ‘fog’ which is added with deep compositing and controlled with both height fields and even roto, so a large amount of the base low frequency work was generated in Nuke. High frequency additional shadows were then added to this, allowing even faster workflows and user directed volumetrics with amazing results.

Alembic 1.1

Sony Pictures Imageworks (SPI) and Lucasfilm released Alembic 1.1, the newest version of their jointly developed open source animation transfer file format.

Joint development of Alembic was first announced at SIGGRAPH 2010 and Alembic 1.0 was released to the public at the 2011 ACM SIGGRAPH Conference in Vancouver. The software focuses on efficiently storing and sharing animation and visual effects scenes across multiple software applications. Since the software’s debut last year both companies have integrated the technology into their production pipelines, with ILM notably using the software for its work on the 2012 blockbuster The Avengers and Sony Pictures Imageworks on the 2012 worldwide hits Men in Black 3 and The Amazing Spider-Man, in addition to the upcoming animated feature Hotel Transylvania, scheduled for release September 28, 2012.

At the BOF Rob Bredow casually asked for a show of hands on the number of people who used Alembic and commented how happily surprised he was with the huge response. Alembic is one of the most rapid adoptions of open source software we have seen, being adopted perhaps quicker than even OpenEXR was in its earliest days of release.

Alembic 1.1’s updates include Python API bindings, support for Lights, Materials, and Collections, as well as core performance improvements and bug fixes. Feature updates to the Maya plug-in will also be included.

OSL

V-Ray (Chaos Group) commented at their 10 year user party that they were looking to adopt OSL. SPI released this open source shader language a year or so ago and so far only SPI use it. The principle of it seems incredibly powerful, to allow a uniform shader description to be used across a range of renderers. While SPI has OSL working with Arnold, the Solid Angle version of Arnold does not even support it. But at the show this seemed to be changing. fxguide sought out official confirmation and got it from V-Ray’s Vladimir Koylazov, one of the two founders and key developers from Chaos. He confirmed that not only was V-Ray going to support OSL in the next major release scheduled for roughly March next year, but that they already had it working.

Following on the heels of this, fxguide confirmed with Bill Collis and Andy Lomas of The Foundry that Katana would fully support OSL in any renderer, such as V-Ray, which supported it. Katana itself does not ship with shaders, but as OSL was already working with Katana inside Sony, The Foundry would fully support OSL on roughly the same timeline as V-Ray. It remains to be seen if other key renderers such as Mantra, Solid Angle’s Arnold and others will follow suit, but it certainly looks like OSL might be starting to gain momentum.

Weta Digital Virtual Production: Combining Animation, Visual Effects and Live Action

Four time Academy Award winner Joe Letteri talked about his experience of virtual productions at Weta Digital. He started his talk by saying that the idea of a virtual production workflow had pretty much crystalized with Weta’s work on Avatar (2009) but it had been a long road in getting there which started back in 2001 with Peter Jackson’s Lord of The Rings: The Fellowship of the Ring.

During the production of Lord of The Rings: The Fellowship of the Ring, Peter Jackson experimented with the concept of virtual production in the sequence where Legolas fights a Cave-Troll. For that sequence the troll was played by Randall William Cook, Animation Supervisor at Weta (recent Animation Supervisor on The Amazing Spider-Man at Sony Pictures Imageworks), and the performance was used for previs only.

Things really moved on when Andy Serkis came on board, initially just to play the voice role of the Witch King and of course Gollum, but quickly after he arrived in New Zealand it became clear that having him on stage was going to be a huge benefit to the actors, the Director of Photography (Andrew Lesnie, also D.P. on The Hobbit trilogy) and Jackson himself. Serkis’s interaction with the actors became a huge benefit and also helped tremendously with editing while the production was waiting for the VFX shots to come back from Weta.

Once the principal photography had wrapped, Serkis remained in New Zealand moving to the motion capture stages of Weta Digital where he had to remember what had been done on set and record those performances which would then be used as the basis for the animation of Gollum. At this point in time the motion capture setup was pretty basic and did not allow for a background plate to be visible during the capture process.

Letteri went on to explain that Weta later found a way to have Serkis’s performance played out with the background plate behind the animated character, making the shot much easier to visualize. At this point in time, Weta were only capturing the body performance. The face was recorded to video at the same time and was used as reference by the animation team to create the final animation. However, it was noticed that because each animator used a mirror as part of the animation process, their individual personalities were coming through in the animation of Gollum’s face. Although subtle, this was something Letteri wanted to avoid.

Once all three Lord of the Rings films had finished, Weta moved on to King Kong (2005), having completed work on both Van Helsing and I, Robot the year before (supervised by Joe Letteri). With Peter Jackson directing once again, Weta made use of a technique called the “Facial Action Coding System” or FACS. Originally developed by Paul Ekman, Wallace Friesen, and Richard Davidson in 1978, FACS is used to taxonomize human facial expressions. It encodes the movement of individual facial muscles from slight, momentary changes in facial appearance. The system is used not only by animators but also by psychologists, for example as a way of detecting whether a person is telling the truth or lying. It allowed Weta to analyze the expressions of the face, work out what the underlying muscles were doing, and then reapply those muscle activations to a new character, transferring the expression from the actor to the character.
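The retargeting idea described above can be illustrated with a small Python sketch. It is only an illustration under stated assumptions, not Weta's solver: an expression is represented as a set of FACS action unit activations (AU12, the lip corner puller, and AU4, the brow lowerer, are real FACS units), and the hypothetical `apply_action_units` function adds each unit's character-specific offset, scaled by its activation, to a neutral pose. The one-number-per-control face representation and all the values are made up for the example.

```python
def apply_action_units(neutral, au_deltas, activations):
    """Apply action unit activations to a character's neutral face.

    neutral:     list of control values for the neutral pose (hypothetical rig)
    au_deltas:   dict mapping an action unit name to that character's
                 per-control offset at full activation
    activations: dict mapping an action unit name to a strength in [0, 1],
                 as solved from the actor's captured face
    """
    result = list(neutral)
    for au, amount in activations.items():
        delta = au_deltas.get(au)
        if delta is None:
            # This character has no shape for the unit, so skip it.
            continue
        result = [r + amount * d for r, d in zip(result, delta)]
    return result


# Activations solved from the actor (illustrative values only).
actor_expression = {"AU12": 0.5, "AU4": 0.25}

# The same activations drive a differently shaped character.
ape_deltas = {"AU12": [1.0, 0.0], "AU4": [0.0, -2.0]}
print(apply_action_units([0.0, 0.0], ape_deltas, actor_expression))
```

Because the activations describe what the muscles are doing rather than where the markers moved, the same `actor_expression` can drive any character that supplies its own set of offsets.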

For the capture process, Serkis was recorded with a hundred or so markers attached to his face, and that data was then translated through the FACS system. Although extremely useful, the system did not allow Letteri and Weta to capture enough data for the lip movement, so this was again handed over to the animation team to add on top of the motion capture for the rest of the face.

Around the time King Kong was finishing up, Jon Landau (Producer on Avatar) called Weta to discuss the idea of applying the techniques they had been using on Kong to a virtual world that included characters. James Cameron, who had been keeping up with the production, had the idea of turning the process on its head. His idea was to stop thinking of the motion capture stage as one system used in making the film, and instead to think of the virtual production stage as the stage where you are making the film.

There were a couple of things that were needed in order to make this work. First you needed a bigger volume, and second a new system to record the face, as dots weren’t going to be practical. Cameron really liked the idea of mounting a video camera onto a head rig that sat in front of the actor’s face and recorded their performance. Also, the concept of a virtual camera became integral to the way Cameron wanted to work.

For the shoot on Avatar, Letteri added various basic props to the motion capture stage so the actors had something to work with. The data was captured and fed through Autodesk’s MotionBuilder software, then validated and put through layout. The animation was then brought into Maya and added to the face, which was partly created in Mudbox (then being developed by Tibor Madjar, Dave Cardwell, and Andrew Camenisch as an in-house tool). The data could then be used as is, replaced entirely with keyframe animation, or used as a mix of the two. The next step was to see whether Sam Worthington’s performance could be matched by that of his CG Avatar. Once the pipeline was in place, Letteri and the animators at Weta could mix and match performances from different takes of the motion capture data, ultimately giving Cameron maximum flexibility.

The look of the Na’vi was ultimately created via a mixture of Mari (again developed at Weta, by Jack Greasley, and now sold and further developed by The Foundry) and Pixar’s RenderMan. To create the realistic look of the skin, Weta created plaster molds of the actors’ faces. Latex was then used to reverse the mold, which was finally cut up and scanned into the computer. These images were then imported into Mari and used as the basis for the texture maps for the skin of the characters.

Once the texture maps had been created, they were exported out of Mari into Maya, where they were used as part of the overall RenderMan skin shader. The shaders also employed subsurface scattering techniques, which enable light to penetrate the skin surface, bounce or scatter about within, and then exit the surface at a different point.

Letteri then went on to explain that pre-Avatar, the animation of characters was done using cluster weights, a system of multiple control vertices set to animate at different percentages, so that as one part of the model moved, the deformation would tail off as the weighting reduced. This weighting was usually added by hand using a paint system, where white was 100% and black 0%.
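As a rough illustration of the cluster weight idea, the Python sketch below moves each point of a model by a single offset scaled by its painted weight, so fully weighted (white) points follow the move, unweighted (black) points stay put, and the deformation tails off in between. The function name `deform_with_cluster` and the sample data are hypothetical, chosen for the example rather than taken from any particular package.

```python
def deform_with_cluster(points, weights, offset):
    """Move each point by `offset`, scaled by its painted weight.

    points:  list of (x, y, z) tuples
    weights: one value per point; 1.0 is white (100%), 0.0 is black (0%)
    offset:  (dx, dy, dz) translation applied to the cluster
    """
    if len(points) != len(weights):
        raise ValueError("points and weights must have the same length")
    moved = []
    for (x, y, z), w in zip(points, weights):
        w = min(max(w, 0.0), 1.0)  # clamp to the paintable range
        moved.append((x + w * offset[0], y + w * offset[1], z + w * offset[2]))
    return moved


# Three points along a limb: fully weighted, half weighted, unweighted.
points = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
weights = [1.0, 0.5, 0.0]
print(deform_with_cluster(points, weights, (0.0, 2.0, 0.0)))
# prints [(0.0, 2.0, 0.0), (1.0, 1.0, 0.0), (2.0, 0.0, 0.0)]
```

The result depends entirely on how the weights were painted, which is one reason a purely weight-based approach can struggle to reproduce the way real muscle and skin move.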

However, for Avatar this system was not going to produce adequate results, so a new method needed to be employed. This came in the form of a finite analysis model for the muscles of the characters. Today, Weta have their own proprietary muscle system called “Tissue”.

In order to deal with the massive forest scenes Weta used a stochastic pruning system to reduce the geometry resolution of trees and plants in the distance of shots and spherical harmonics to help light the environments.
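The general idea behind stochastic pruning can be sketched in a few lines of Python. This is a simplified illustration, not Weta's implementation: elements such as leaves are put in a fixed random order, only a distance-dependent fraction from the front of that order is kept, and the survivors are scaled up so the plant covers roughly the same area. The function names, the linear falloff, and the `near`, `far` and `min_keep` values are all assumptions made for the example.

```python
import random


def keep_fraction(distance, near=10.0, far=100.0, min_keep=0.1):
    """Fraction of elements to keep: all of them up close, min_keep far away."""
    if distance <= near:
        return 1.0
    if distance >= far:
        return min_keep
    t = (distance - near) / (far - near)
    return 1.0 + t * (min_keep - 1.0)


def stochastic_prune(leaves, distance, seed=0):
    """Return the kept (leaf_id, area) pairs with areas scaled to compensate.

    The shuffle uses a fixed seed, so the order is the same at every
    distance. Leaves kept far away are therefore always a subset of those
    kept closer in, which avoids elements flickering in and out.
    """
    if not leaves:
        return []
    order = list(leaves)
    random.Random(seed).shuffle(order)
    count = max(1, round(keep_fraction(distance) * len(order)))
    scale = len(order) / count  # enlarge survivors to cover the lost area
    return [(leaf_id, area * scale) for leaf_id, area in order[:count]]


tree = [(i, 1.0) for i in range(100)]
for d in (5.0, 55.0, 200.0):
    kept = stochastic_prune(tree, d)
    print(d, len(kept), round(sum(area for _, area in kept), 3))
```

With equal-sized leaves the total area stays constant while the element count drops, which is where the saving in geometry comes from.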

From here, Letteri moved focus from live action films to Weta’s first animated feature, The Adventures of Tintin: The Secret of the Unicorn (2011). Letteri said Tintin was originally going to be a live action feature, but Peter Jackson, one of the three producers, suggested to Director Steven Spielberg that it might work better as an animated movie. James Cameron also thought this was a good idea and invited Spielberg and Jackson over to Giant Studios in Los Angeles, where the motion capture stage for Avatar was set up. Spielberg and Jackson were able to run a few test animations, which convinced them to shoot the whole movie that way. They later ended up shooting the whole film on the same soundstage that Cameron had used for Avatar, employing the camera-based face recording technology.

For the look of Tintin, Jackson and Spielberg wanted a realistic yet stylized feel. Letteri explained that Weta created a turntable animation of the character from various drawings in the comics. This enabled the modelers to see the character from multiple angles, which helped in the modeling process. From there Weta created a 3D model of the actor playing Tintin (Jamie Bell) along with a model of the comic book character. These were then merged into a new hybrid model, which was refined further until the model was finalized.

Letteri then moved on to talk about clothing. The team felt that working from drawings of the costumes would not be adequate for the production, so they hired people to create physical costumes as you would on a live action feature. This gave everyone a better sense of realism and enabled stand-in actors to perform various actions wearing the costumes, which the modelers, animators and TDs could then use as reference for the CG clothing. The same idea was used for environments: an art department was brought in and designed the sets as if for carpenters and plasterers, except that instead of being turned over to these more traditional, physical methods of set building, the plans went to the 3D modelers.

Just as Cameron had done, Spielberg also made use of virtual cameras, again using various props to help the actors visualize the CG environments and other objects. For some shots, the virtual camera volume was scaled so that Spielberg could physically move only a short distance but cover a much larger area in the 3D world.
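The scaling of the camera volume comes down to a simple mapping from stage space to world space, sketched below in Python. The function name `stage_to_world`, the choice of origins, and the scale factor are hypothetical values chosen for the example, not details from the production.

```python
def stage_to_world(stage_pos, stage_origin, world_origin, scale):
    """Map a tracked camera position on the stage into the 3D world.

    The camera's offset from a reference point on the stage is multiplied
    by `scale` and applied from a reference point in the virtual set, so a
    small physical move becomes a large virtual one.
    """
    return tuple(
        w + scale * (s - o)
        for s, o, w in zip(stage_pos, stage_origin, world_origin)
    )


# A one metre step on the stage covers twenty metres in the virtual set.
print(stage_to_world((1.0, 1.5, 0.0), (0.0, 0.0, 0.0), (100.0, 0.0, 50.0), 20.0))
# prints (120.0, 30.0, 50.0)
```

Only positions are scaled in this sketch; camera rotation would normally pass through unchanged, so pans and tilts still feel natural to the operator.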

Letteri moved on to discuss the lighting of the movie and how, in the last few years, Weta and other VFX companies had been trying to solve CG lighting in a more physically plausible way. These systems allow the lighters and TDs to approach things with a more live action based methodology. For lighting scenes in Tintin, the character was often lit first using an ambient light, a key light and various fill lights, followed by the lighting of the environment.

Moving on from Tintin, Letteri said that the next obvious step in the evolution of virtual production was to bring everything together: live environments, actors and virtual actors. They had tried this with The Lord of the Rings: The Return of the King (2003) but things hadn’t worked out very well. By the time Weta started work on Rise of the Planet of the Apes (2011), however, a pipeline was in place.

Weta changed their motion capture system from using reflective patches to active LEDs so they could shoot on a normal sound stage, or even outside, without worrying about the lighting. The motion capture cameras were added to the set along with the normal rigging and lighting setup. Andy Serkis was again employed as the main virtual actor, playing the ape Caesar both as a grown ape and as a baby. As before, he needed to be removed from the background plate so the CG character could be added later.

Finally, Letteri made it clear that although they try to capture as much data as possible for the virtual characters, in the end you still need animators and TDs to create the final character performance. He stated that Serkis’s performance was of course instrumental in creating the character’s personality, but the animators also played a key role, sometimes animating the character from scratch, based on Serkis’s performance, where no motion capture data was available.

Thanks to fxphd.com’s Matt Leonard for reporting from the session.
