At NVIDIA GTC 2026, Jensen Huang introduced DLSS 5 as the next major step in NVIDIA’s long arc of graphics innovation.

“Twenty-five years after NVIDIA invented the programmable shader, we are reinventing computer graphics once again, DLSS 5 is the GPT moment for graphics, blending handcrafted rendering with generative AI to deliver a dramatic leap in visual realism while preserving the control artists need for creative expression

Jensen Huang, founder and CEO of NVIDIA.

The headline framing and hype are ambitious, but beneath is something more grounded and, arguably, more interesting for the VFX and real-time virtual production community.

DLSS 5 represents a shift in how images are constructed, not just how they are accelerated.

For context, real-time rendering has always operated under tight constraints. Even with modern GPUs, a frame rendered in 16 milliseconds has only a fraction of the computational budget of a film frame, where render times can naturally extend to minutes or hours. Over the past decade, techniques such as hardware ray tracing and path tracing have narrowed that gap, but not closed it.

What DLSS 5 proposes is not to push further on simulation alone, but to combine it with learned ‘inference’.

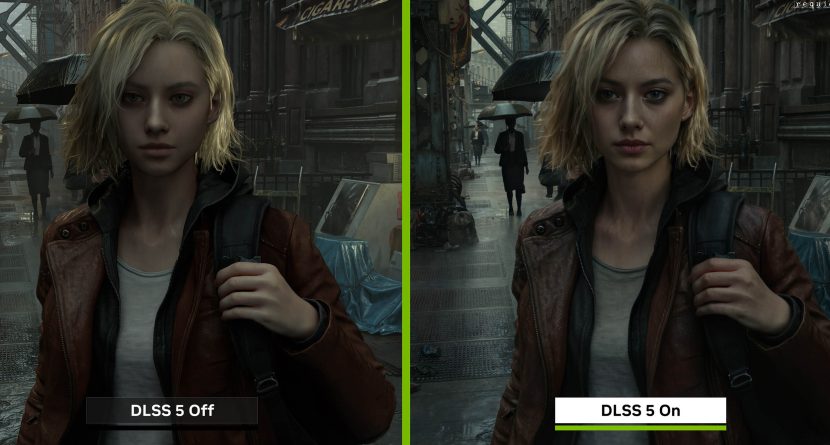

The system takes conventional rendering of the underlying 3D scene and passes it through a neural model that enhances lighting and material response. The key point is that this is not a post-process filter layered on top of an image. The model effectively participates in the formation of the final frame, using the structured data as a constraint and filling in detail in ways that align with learned or inferred representations of how light and materials behave.

This hybrid approach, structured 3D ground truth combined with probabilistic inference, is where DLSS 5 achieves controllable AI realism.

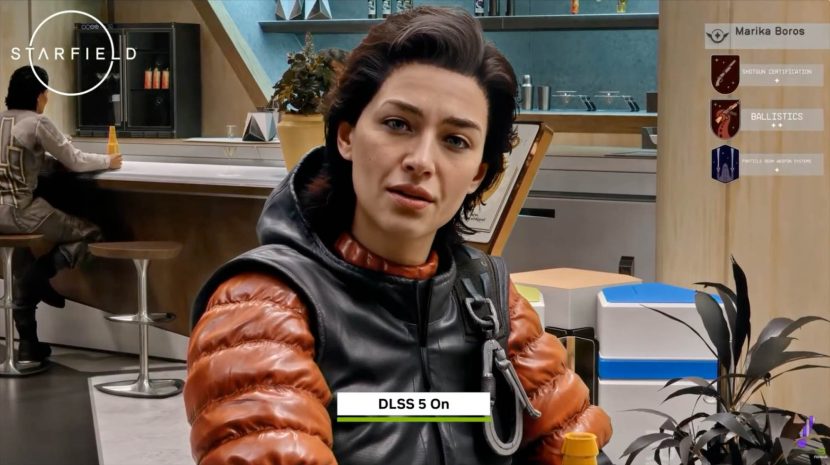

The system is based on a single, unified model. It is not trained per title, per asset, or per character. Instead, it generalises across content, recognising scene semantics such as skin, hair, fabric, and lighting conditions. That generalisation is important. In traditional pipelines, achieving believable results for digital humans often requires highly specialised shader work and careful tuning for each asset. DLSS 5 suggests a model that already encodes much of that understanding.

For digital characters, the implications are practical rather than speculative. Skin rendering, in particular, has always been sensitive to lighting conditions and difficult to maintain consistently in real time. Hair and fabric introduce further complexity. The demonstrations indicate that DLSS 5 can enhance these elements by introducing more plausible subsurface effects, material response, and light interaction, while remaining stable across frames.

Stability is also a critical point. Many generative systems can produce different results for the same input, DLSS 5 is deterministic and temporally consistent. It is anchored to the underlying scene data and respects motion and structure. For production use, whether in games, virtual production, or with interactive installations, that predictability is essential.

Equally important is that the system remains controllable. Developers are not presented with a binary choice. Instead, DLSS 5 provides a set of parameters that allow the effect to be adjusted spatially and artistically. Different materials or regions of a frame can be treated independently, and colour characteristics can be tuned to match a desired look. This keeps the system aligned with established workflows, rather than replacing them.

While NVIDIA positioned DLSS 5 primarily for characters in games, its broader relevance is clear. It works on the whole scene. In virtual production, the possibility of achieving more final-pixel quality lighting in real time could reduce the reliance on heavier offline re-rendering. For interactive digital humans or installations, training environments, or live performances, – the ability to deliver more convincing material and lighting response at interactive frame rates is significant.

It is also worth noting that DLSS has evolved considerably from its origins. Initially DLSS was released in 2018 as an AI technology to boost performance, first by upscaling resolution and then by generating entirely new frames. It has been integrated into over 750 games, becoming a standard for the industry. With DLSS 5, that role becomes explicit. The system is no longer just improving efficiency; it is contributing directly to visual fidelity.

For the VFX community, this does not replace existing rendering approaches, nor does it eliminate the need for high-end pipelines. However, it does point to a convergence. As neural rendering techniques mature, the distinction between real-time and offline image simulation or construction may become less about capability and more about context.

DLSS 5 is an early example of that shift. It combines deterministic rendering with learned models in a way that preserves control while extending realism. Whether it represents a fundamental turning point will depend on how broadly it is adopted and how it integrates into production workflows.

But it does suggest that the way we think about rendering, particularly with respect to the nature of ‘final pixels’, is starting to change.