An ant eye’s view of the world

Making the Ant-Man

Crafting Yellowjacket

It’s an ant, ant world

Ant-flight

Falcon fight

Pym raid

A brief case of flight

Off the rails

Quantum journey

Macro photography, digital youthification, hordes of ants and…Thomas the Tank Engine – these were just some of the visual effects challenges faced by the filmmakers in bringing Marvel’s newest cinematic universe film, Peyton Reed’s Ant-Man, to the screen.

fxguide talks to visual effects supervisor Jake Morrison and visual effects producer Diana Giorgiutti, plus supervisors from Double Negative on the macro photography and digital characters, Method Studios on their digital ant creations, Luma Pictures on staging key heist scenes, and Lola VFX about making Michael Douglas look just as he did in the 1980s.

Greed is not good, (but youth is)

Michael Douglas is gunning for an Ant-Man ‘prequel’ after seeing himself look all Romancing the Stone in the newest Marvel film to deploy Lola VFX aging and de-aging compositing. Lola VFX is perhaps most famous for Skinny Steve, a jaw-dropping body mass and facial transformation that allows the Captain America films so much creative and narrative freedom. Lola VFX aged and de-aged three characters at the beginning of Ant-Man, doing 28 extremely complex compositing shots, some of which have multiple characters aging in opposite directions.

The 70-year-old Michael Douglas appears in Ant-Man as Dr. Hank Pym, the genius who created the suit which enables Paul Rudd’s Scott Lang to shrink to the size of an insect. Audiences see the young Hank as Dr. Pym splits from S.H.I.E.L.D. in a scene set in 1989. The scene is remarkable not only for a Michael Douglas who looks 25 years younger but also for a younger Martin Donovan, playing Mitchell Carson, and an older Hayley Atwell as Peggy Carter.

As Donovan is younger than Douglas at only 57 (58 next month), it was easier to cover the range of ages his character required. So when Lola de-aged Michael Douglas back to his Wall Street prime – some 25 years younger than the actor is today – they only needed to notionally de-age Donovan a decade or so. No double was used; the work on Donovan focused on his eyes, neck and chin.

Atwell is actually 33, but her character, Margaret “Peggy” Carter, must have been in her 20s in the Second World War, and thus must be in her 60s in the flashback sequence. Lola had already aged Atwell for her stunning appearance in Captain America: The Winter Soldier. The shots of ‘Old Peggy’ in that film were realized by Lola VFX by transposing the facial features of an elderly actress onto the face of Atwell, who had performed her lines with no make-up and only a few tracking markers. For Ant-Man the team opted for a similar approach, but the story timeline meant a much less severe process. There were 9 Lola VFX shots with Atwell, and 13 each of the other two, although some of those were in the same shot. Atwell wore a wig for her performance and a very fine layer of latex makeup, a special foundation-style makeup that can give the skin a more leathery look (but no appliances or latex moldings and no ‘paint work’ such as laugh lines).

The actor Michael Douglas is reported to believe he looked more like a 1984 version of himself – thus he joked about being ready for a prequel to Romancing the Stone. “Seeing myself CGI-ed at the beginning of the movie 30 years younger was incredible, because it was one of the scenes we were looping and Peyton [Reed, director] said, ‘You’ll get a kick out of this,’ because they’d completed half of it so it’s a scene at the beginning of the movie and I had these little dots all over my face, and I’m looking at it and half way through the scene the picture it just appeared and there I was 30 years ago. Romancing the Stone!” Douglas marveled in a Cover Media interview. “I’m thinking I’m all for a prequel!”

Lola looked at films and imagery of Douglas a few years either side of Wall Street that “matched for lighting and angles, as close as we could find,” says Lola VFX visual effects supervisor Trent Claus. “It was pretty much what we had done before but hopefully gotten better each time. What was different on this de-aging vs say Benjamin Button is that the production hired a look-alike – a double, which they shot on set in the same lighting conditions. So they would have Mr Douglas do a take and then they would have the double do a take – in the exact same set, and that was very helpful not only to occasionally steal parts from but just as reference of how the younger skin reacts and moves with that particular dialogue.”

Lola also hired another actor themselves and shot them in their lighting and scanning rig in their LA offices. This allowed them to get very accurate facial patches or digital ‘skin grafts’ in precise lighting if they needed something they could not modify from Douglas himself or use from the on-set double. The majority of work was done via strict compositing in 2D based on the footage they received.

Douglas delivers a key line in the scene, issuing an ultimatum that while Pym is alive no one will have the Pym particles (required for shrinking). This shot was Lola’s test shot, and also one of the hardest given the close framing on the actor. If there was one harder shot for Lola, it was an almost identical shot a few edits earlier. Claus jokingly recalls watching the first offline rushes edit and the team muttering under their breath ‘Don’t turn your head, don’t turn your head…’ which is of course exactly what happens! “It makes it a great deal harder,” says Claus. “That always poses so many problems when they turn like that. That shot and the one where he smashes Carson’s head into the table were hard, just because there is so much going on and shaking his head back and forth.”

Hair

The hair we see in the de-aging sequence is from the live action plate. Adding digital hair, or even compositing on additional hair, is extremely complex, but as everyone knew this going in, the on-set makeup and hair team built up the actor’s hair to look very close to what is seen in the final shots. The other huge contribution from hair and makeup was removing Michael Douglas’ beard / goatee. “That would have added all sorts of additional problems in the de-aging,” says Claus, “so what you see in the final product is Michael Douglas’ hair, but just thickened up a little – especially around the hair line and darkened a bit.”

Neck

The neck on any character is one of the hardest parts to de-age. Not only is the skin very different on a 70-year-old man compared to a fit 45-year-old, but Lola has found that it is very important to have the neck move correctly with the head expressions and dialogue. The Adam’s apple needs to very closely match the actual actor, yet there are collars, ties, shadows and a range of other issues to work around. “First of all,” explains Claus, “any neck shows so much of one’s age, it tends to sag, it builds up around the Adam’s apple and loses elasticity, but then with the dialogue and acting it reacts totally different in that part of the head than a young neck would. It is one of the hardest areas to work on.”

While most people may believe they are looking at the actor’s face, if the neck is wrong, then the face seems wrong. “You get the bobble head look,” says Claus, “they become two separate elements, and that is something you always have to be aware of – that bizarre connection between the neck and the face. You think in your mind’s eye it would be easy – add a little shadow, add a little highlight – but it is never that simple.”

There are all sorts of intricacies with necks – the highlights, the shadows and the detail. “They are never quite where you think they are, so it’s a difficult area,” concludes Claus. “So the neck in the final film in some shots are just completely 2D composites on Michael’s neck and on some particular shots they are a sort of skin graft from the younger actor onto the most difficult area.” Claus points out that Lola tries to avoid this approach as much as possible because “then you have to animate it and match the movement, and then reaction to the environment, reflections from his chin or collar…all those sorts of things you have to deal with when using an additive element.”

Eyebrows

As one might expect, the eyes and the crow’s feet or bags under the eyes are targeted in a de-aging, but what is less well known is that eyebrows sag with age and, as a consequence, people lift their eyebrows more in everyday life. Your eyebrows sit on the top of the eye socket, but with age they start to come lower. This drift needs to be adjusted, but Michael Douglas, like any man his age, raises his eyebrows more today than he did during the filming of Wall Street. What Lola needed to determine was how much of this eyebrow lift is a performance decision and how much is a property of how anyone acts as their eyebrows drift down. The artists at Lola needed to lift the brows enough to de-age the actor but not so much as to change the performance, while allowing for lifting by the actor that was not so much an acting choice as a normal human compensation for age. “In the end,” states Claus, “the performance is the main thing, and that is why they (the studio) are going with us – to preserve that performance – so the ultimate goal is always: performance – performance – performance … it is definitely a fine line.”

Ears

As anyone ages, their ears and nose grow larger on the head, making those features key candidates for reduction. The nature of the growth is also very specific: ears grow up and down but mainly from the lobe, and “wrinkles in the cartilage get a little more pronounced as you get older,” notes Claus. “The texture of the ear itself is a little more glossy and a little more waxy, so we affect the texture of the skin and color. The sheen and hue of the skin becomes more blue as the amount of blood flowing is different, so we have to restore that youthful glow, and that is all done in color correction.” Ears are redder, shinier and smoother – especially the connection between the first bit of cartilage as it connects to the head, near the sideburns.

Nose

It is not just the shape of your nose or its size that changes with age. The blood vessels in one’s nose become more pronounced and the shape near the bridge changes. The bridge between the eyes, according to Claus, “hollows out a little bit, but the skin becomes stretched, so you get two harsher lines with darker areas beneath them, with the center of the bridge becoming more pronounced.” To make someone more youthful you have to make it a “little wider and give the appearance of more fat under the skin.”

While Lola did not do any additional photography or scanning of the actual actor, they did have a set of dots placed on the actor’s face for tracking. There was no special makeup, but 20 to 30 or so small dots were applied, and these are very important to Lola’s work.

Given Lola’s long history with Marvel, there is a lot of trust, cooperation and respect for their work on set, says Claus. The footage was shot on Alexa, and worked on in 3.2K by 11 artists at Lola in LA – on a very tight schedule. Lola’s painstaking work has once again appeared effortless, and the youthful Michael Douglas looks remarkably like Michael Douglas, an actor the audience knows well from the period.


An ant eye’s view of the world

When Scott Lang/Ant-Man shrinks to his half-inch size, the audience gets a whole new perspective on the world around him. Intent on creating the most photorealistic imagery possible when Ant-Man is ant-sized, the filmmakers had a dedicated macro unit acquire macro photography of partially re-created sets, with DNeg adopting a complex focus stacking and image stitching technique to achieve appropriate depth of field.

“We went through the reference of every milestone shrinking film that’s ever been done,” says overall visual effects supervisor Jake Morrison. “That’s from The Incredible Shrinking Man to Tom Thumb, The Borrowers, Fantastic Voyage, and Honey, I Shrunk the Kids. We dissected piece by piece what worked and didn’t work. The one thing that came out really strongly was that every single shrinking movie is without doubt the most technologically advanced thing that could have been done when it was released. The breakthrough stuff in the older pictures would be the large models, would be the split negatives, doing the set twice as much larger and splitting the negative.”

Having looked at these previous projects and their solutions, Morrison and the filmmakers realized they had two major requirements in telling a modern superhero shrinking story. The first was absolutely photorealistic environments, and the second was a photorealistic character. That second requirement was, relatively, an easier one to solve, since Paul Rudd could be filmed in a real set, with a digital Ant-Man built to match where necessary, and with motion capture used to achieve all the appropriate performance beats.

But placing Ant-Man convincingly in believable environments would be a far greater challenge. Having Ant-Man act amongst oversized props was one approach the production sought to avoid in shooting, since absolute photorealism and freedom of camera were going to be crucial to telling the superhero’s action adventure. “How could we make sure these digital sets had this reality to them?” asked Morrison. “It’s actually phenomenally simple when you think about it. The best way to do it is to assemble those sets and really ‘shoot’ them, a lot.”

And shoot them they did, via a dedicated ‘macro unit’. This unit would re-create elements of the main unit sets for the purpose of photographing for shots when Ant-Man was only half an inch tall. “What we would do,” explains Morrison, “is break off all of the sets we needed and actually build them separately much in the same way as you would approach doing a pack shot. We ended up with this very cool production line system where we had three and sometimes more of these sets being built. They were called macro sets but they were really about 6 or 8 feet. They were all built and supervised by our macro art director Jann Engel who was absolutely invaluable.”

Braving the bathtub

Scott steals the Ant-Man suit from Hank, and tries it on in a bathtub. When he ‘accidentally’ triggers the suit it shrinks Scott down into the bottom of the bathtub and leads to a series of vignettes where he must escape running water and several other large and, to him, new experiences. “Whenever we’re small with Ant-Man the world is always digital,” says DNeg’s Alex Wuttke. “So the water was a CG sim. We had a CG bathtub recreated from the photography. And then we did a digital sim of the water which effectively gave us full control of rendering, lighting and compositing. The water sim was massively detailed and pushed the limits of resolution we could simulate. It’s fully raytraced water refracting everything.”

Engel would take the plans and reference from the main unit sets and re-build them from scratch, at a higher level of detail. “They were still 1:1, not oversized,” says Morrison. “But say if it’s floorboards or carpet in a hallway, Jann would take those floorboards, and rather than doing just a normal set dressing paint job, she’d actually go out and find salvaged pieces. We have a moment where Ant-Man smacks his head on some rusty pipes – he falls through between two pieces of tile in a bathroom and bounces off some rusty pipes. Those pipes we knew we were going to go CG on. But rather than have the art department paint and try and create the pipes to look like they’re rusted, Jann went out to salvage yards and got real pipes that had been in action for 10 years and really had all the gak and the rust built into them. So there was immediately this level of realism to the sets that Jann built us.”

A single set in a pack shot configuration would be laid out on a raised platform. Then a dedicated macro unit DOP, Rebecca Baehler, would shoot the scenes, taking cues from main unit DOP Russell Carpenter. “We’d bring in a Milo moco system on a long track which could slide up and down between the macro sets,” describes Morrison. “We would mount a Frazier lens or a SKATER Scope for extreme depth of field on the Milo, and then effectively shoot previs’d or storyboarded moves over these tiny sets in incredible detail. Because it was moco they could do repeatable moves with different levels of SFX – if you had dust going through there or mini tumbleweed type things. Then you could also finally put in anything you needed as a tracking pass. We used casino dice as a method of making the tracking really easy. You could do your track on the tracking pass, but then apply your effects to the standard beauty passes we were shooting.”

Previs was crucial to planning these macro unit shots. “There was a lot of experimentation with lenses and framing to get Ant-Man’s scale right,” says The Third Floor previsualization supervisor Jim Baker. “If you had a medium shot of the character with a wide lens, he would appear too large in comparison to the environment. However, if you had him look very small in a relatively huge space – for example, pulling way back from within the bathroom to see a tiny guy in a gigantic canyon of a bathtub, that really worked to indicate size. Ultimately, close ups and long shots seemed to be best to sell his scale. We used very wide lenses for his POVs to emphasize the strangeness of his environment. We also implemented shallow depth of field and floating dust mites into our previs, which helped convey the miniature scale.”

https://youtu.be/sfsoi-iQtRY

The result was Alexa motion picture footage of the environment with correct lighting. But it was only one step towards creating these Ant-Man environments. The next wave involved the acquisition of stills, not just to aid in photogrammetric re-creations of the sets, but also re-creations at varying levels of focus via bracketing. It was at this stills stage that Double Negative and visual effects supervisor Alex Wuttke heavily weighed in on the macro unit approach. “We started with a test here at DNeg,” describes Wuttke, “where we set up a keyboard and a lot of gunk all over it and looked into ways of being able to capture the essence of that environment at a very small scale. Then the process we ended up following was to use a 100mm macro lens on a standard Canon 5D body and capture that set really like we would capture a full-sized set. We would take bracketed tile sets of the environment and then use a stitching tool to do a lot of re-projection work.”

However, the macro nature of the photography presented a problem – the depth of field was inevitably ‘super shallow’. “So we had to come up with new ideas,” says Wuttke. “To project the environment we wanted to have an infinitely deep sharp set of tiles that we could project to re-create the environment. We invested a little R&D in how we would go about doing this. We shot multiple exposure brackets for each little macro tile – and we also had to shoot multiple focus brackets. So effectively what we did was move the focal plane deep into the image with successive slices that we then had to stitch back together – all of the little focus steps and slices to re-create a single tile. Then you’d have to move to the next tile and repeat.”
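The slice-by-slice idea Wuttke describes is commonly known as focus stacking. The following is only a minimal sketch of the general technique, not DNeg's tooling: it assumes the focus brackets for one tile are already aligned grayscale arrays, and it uses a simple Laplacian as the sharpness measure. The function names `sharpness` and `focus_stack` are hypothetical.

```python
import numpy as np

def sharpness(img):
    """Per-pixel focus measure: absolute discrete Laplacian (borders replicated)."""
    p = np.pad(img, 1, mode="edge")
    lap = (p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:]
           - 4.0 * p[1:-1, 1:-1])
    return np.abs(lap)

def focus_stack(slices):
    """Merge aligned focus brackets (list of HxW arrays) into one all-in-focus tile.

    For every pixel, keep the value from whichever slice is locally sharpest.
    Returns the merged tile and the index map of the winning slice.
    """
    stack = np.stack([np.asarray(s, dtype=float) for s in slices])  # (N, H, W)
    scores = np.stack([sharpness(s) for s in stack])                # (N, H, W)
    best = np.argmax(scores, axis=0)                                # (H, W)
    merged = np.take_along_axis(stack, best[None], axis=0)[0]
    return merged, best
```

A production tool would also have to align the slices and blend across seams; this sketch skips both and simply picks the sharpest source per pixel.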

“So for an individual tile,” adds Wuttke, “if we were to capture an environment for these table top sets would be in the region of 1 and a half to two feet wide by 2 feet deep. To capture all of that you’d go through a huge amount of tile increments but also a huge amount of focus brackets within each of those tiles. So it was quite labor intensive.”

Any small deviation or movement of the camera body would render the whole tile defunct. So the macro unit invested in RODEON robotic pan and scan heads from Dr Clauss. “That comes with an open API so you can program the head yourself,” states Wuttke. “It’s a really nicely engineered bit of kit. It holds it very firmly and move in small increments and repeat those moves as well. We had a remote system with the 5D with 100mm macro lens mounted on one of these robotic heads, and we could program that and drop those in hard to reach spots as well – we could manoeuvre it into difficult to reach places and get fine degrees of tolerance and good image line-up.”

Ultimately, the macro unit would run alongside the main unit shoot in Atlanta. They captured the stills, LIDAR scans and motion control footage with the Alexa and Frazier lens setups in the macro environments, occasionally utilizing a Bolt moco Cinebot rig with a Phantom Flex 4K for high speed plates.

DNeg’s Jigsaw image stitching program was the next tool in the toolbox for bringing the macro unit photography together. “DNeg had a great automatic system,” explains Morrison. “It took the Ev brackets, concatenated those, did a frequency analysis on the focus brackets, and then did a concatenation there so you end up with a single tile which is infinitely in focus. And then it did a pattern analysis on the tiles and then stitched together all of the surrounding tiles to make effectively a macro panorama which was very high resolution but also infinitely in focus. So you have that now from three different angles – you have a photogrammatical solution and also the high resolution geometry to be able to project that and stick that onto. It was an all around system to be able to harvest the macro set that was then shared with the various vendors for their shots.”
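The first step Morrison mentions, combining the Ev brackets, can be illustrated with a generic exposure merge. This is a sketch under stated assumptions rather than a description of Jigsaw, whose internals are not given here: the inputs are assumed to be linear images normalized to the 0 to 1 range, the Ev offsets are assumed known, and `merge_ev_brackets` and its thresholds are hypothetical.

```python
import numpy as np

def merge_ev_brackets(images, ev_offsets, low=0.05, high=0.95):
    """Merge linear exposure brackets into one relative-radiance image.

    images     : list of HxW arrays with values in [0, 1]
    ev_offsets : exposure offset of each image in stops (0 = reference)
    Pixels that are crushed (<= low) or clipped (>= high) in a bracket are
    ignored for that bracket; the rest are averaged after undoing the exposure.
    """
    scaled = np.stack([np.asarray(img, dtype=float) / (2.0 ** ev)
                       for img, ev in zip(images, ev_offsets)])
    weights = np.stack([((np.asarray(img) > low) & (np.asarray(img) < high)).astype(float)
                        for img in images])
    total = weights.sum(axis=0)
    weighted = (weights * scaled).sum(axis=0) / np.maximum(total, 1.0)
    # If no bracket was usable at a pixel, fall back to the plain mean.
    return np.where(total > 0, weighted, scaled.mean(axis=0))
```

In the order Morrison describes, the merged exposure for each focus step would then go through the focus analysis, and the resulting in-focus tiles through stitching.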

The extensive CG environment and prop build moved from Jigsaw to Maya. “Here,” says DNeg CG supervisor Artemis Oikonomopoulou, “we would align cameras with the pictures and start building our models from there. You’d get 6 or 8 cameras in Maya with a traditional image plane and then do traditional modeling from all these angles. Then we also could project the plate because you had your camera with the image plane and you could project and get a first quick pass of textures. Then from that we could bake them down to as high a resolution as you could, and then start painting on top of that. We had to use a lot of displacement, but sometimes the general undulation of a surface you actually had to model it in because it was at that miniature scale. We added a lot of dressing on top, for example dust and dust motes floating around and minuscule particulate.”

DNeg rendered scenes using a Katana and PRMan 19 setup, with Houdini’s Mantra used for volumes. It was the studio’s first show completed with Katana. “Everyone picked up Katana so quickly and the lighters in the end just loved it,” says Oikonomopoulou.

Selling the scale shots involved not only the macro photography approach, but also layers of atmosphere. Incorporating dust motes became a signature of the small-scale scenes. “These were all the little tiny dust particles that are around us all the time,” says Morrison. “The paradigm for going into the macro world is a little bit like underwater, and we also riffed off the idea that insects effectively see the world at a higher frame rate than we do which meant the dust and other things played a little heavy or overcranked. At the end of the day 49 fps was the general feel. The nice thing with that is that it adds a nice soupiness to the environment, so layering on top of that we decided to use the motes, they added an underwater particulate vibe. It actually helped to pick up the atmosphere and make the air more jellylike and reinforce atmosphere.”
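To put a number on the overcranked feel, the slowdown is just the ratio of capture rate to playback rate. The small sketch below assumes standard 24 fps playback, which the interview does not state; the function names are hypothetical.

```python
def slowdown_factor(capture_fps, playback_fps=24.0):
    """How many times slower the action plays back than it happened."""
    return capture_fps / playback_fps

def screen_seconds(real_seconds, capture_fps, playback_fps=24.0):
    """Screen time taken by an event that lasted real_seconds in front of the camera."""
    return real_seconds * slowdown_factor(capture_fps, playback_fps)
```

Under that assumption, 49 fps material plays back at 49/24 of real duration, a little over twice as slow.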

Similarly, Morrison worked closely with DNeg to come up with a distinct look for the depth of field itself. “On Thor: The Dark World,” says Morrison, “we’d come up with Alex with a photographically correct circle of confusion bokeh treatment which has a slightly raised ring around the edge and then a kind of tissue paper texture for within. For this movie we pushed that a little bit further – if you have an image where everything is in focus all the time, and then you have a person standing in front of the camera, it’s almost impossible for you not to think that that person is anything other than a normal person. You can have big things in the background, but what effectively happens a lot of the time is that it looks like a rear projection. So you have to reinforce the depth of the field. But if you just go soft it looks even more rear projection. So then you’ve got a situation where it looks like an actor standing in front of a rear projection screen and you’ve just de-focused the projector.”

Macro aerials

“The one thing we found which was interesting in the macro unit,” says Morrison, “was that even though the Frazier lens was actually pretty small compared to a prime 35mm camera, they’re still not actually that small. Because Ant-Man is only half an inch tall, if you have a lens which has a 4 inch diameter and you split that in half and say your entrance pupil is 2 inches over the ground, because you’re scraping the ground with the barrel of the lens, for Ant-Man you’re effectively at 24 feet up like a crane. Even at the tightest you can get with the Frazier lens, you’re effectively shooting aerials – little macro aerial plates.”
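Morrison's figure follows from simple proportion: express the lens height in multiples of Ant-Man's height, then scale to a full-sized person. A minimal sketch, assuming a six-foot human as the reference height (implied by the 24 feet quoted, but not stated outright); the function name is hypothetical.

```python
def equivalent_camera_height_ft(pupil_height_in, subject_height_in, human_height_ft=6.0):
    """Camera height as it would feel to the shrunken subject, in human-scale feet."""
    heights_above_ground = pupil_height_in / subject_height_in  # in subject body heights
    return heights_above_ground * human_height_ft

# Entrance pupil 2 inches up, Ant-Man half an inch tall: four body heights,
# which for a six-foot person is 24 feet, i.e. crane height.
print(equivalent_camera_height_ft(2.0, 0.5))
```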

“The challenge,” adds Morrison, “became, ‘how do you make a de-focused image interesting?’, because you know you’re going to need to reinforce that macro scale. The cool thing about the bokeh profile we came up with was, because of this raised ring around the outside, it actually creates a huge amount of detail. So if you have a couple of bright things and a few dark things behind you, it adds a level of complexity to even defocused backgrounds. When you throw something completely out, it actually gets more complex visually, and that was a key part of the equation.”
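One way to picture a bokeh profile with a raised ring is as a disc-shaped blur kernel whose rim is brighter than its interior. The sketch below is an illustration of that idea only, not the production treatment: the ring width and gain are invented parameters, the "tissue paper" interior texture is not modeled, and both function names are hypothetical.

```python
import numpy as np

def ring_bokeh_kernel(radius, ring_width=1.5, ring_gain=2.0):
    """Disc kernel with a brighter rim, normalized so it sums to 1."""
    r = int(np.ceil(radius))
    y, x = np.mgrid[-r:r + 1, -r:r + 1]
    dist = np.hypot(x, y)
    kernel = (dist <= radius).astype(float)
    rim = (dist <= radius) & (dist > radius - ring_width)
    kernel[rim] *= ring_gain          # the raised ring around the edge
    return kernel / kernel.sum()

def defocus(image, kernel):
    """Spread every pixel into the kernel shape (zero-padded borders).

    The kernel is symmetric, so this shift-and-add correlation equals convolution.
    """
    image = np.asarray(image, dtype=float)
    kh, kw = kernel.shape
    padded = np.pad(image, ((kh // 2, kh // 2), (kw // 2, kw // 2)))
    out = np.zeros_like(image)
    for dy in range(kh):
        for dx in range(kw):
            out += kernel[dy, dx] * padded[dy:dy + image.shape[0], dx:dx + image.shape[1]]
    return out
```

With a kernel like this, a single bright point defocuses into a disc with a visible outline rather than a smooth blob, which is one reading of why a fully thrown-out background can carry more visual detail.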

A further cinematographic aspect aided in selling Ant-Man’s size – shooting him from the right angle. “It was always about making sure Ant-Man looked small all of the time,” outlines Morrison. “We discovered that actually craning the camera up a little bit and looking down on Ant-Man all the time is really the key way to go. This was the same as when you shoot children. If you want to make them feel more vulnerable in the scene, you always bring the camera up and tilt down slightly, just to reinforce the fact that we’re looming on them slightly. It worked amazingly well. There’s still normal coverage, but we definitely fell into two forms of camera coverage – where we’d either go very wide and shoot down on Ant-Man to reinforce his size. On those shots we could be quite deep with our depth of field to allow the audience to see all the detail.”


Making the Ant-Man

Scott as Ant-Man is seen in the film at both human and pint size, and was often required to carry out complex stunts and fighting. Paul Rudd and his stunt double performed Ant-Man in wardrobe, while also delivering performance and motion capture for his digital counterpart – at large and small scales – made by Double Negative.

Costume designer Sammy Sheldon and head specialty costume creator Ivo Cogney created Ant-Man’s suit, a retro-esque diving bell/motorcycle mashup. “Having that suit on set meant we could photograph it and get all that high level of texture information – the real details and apply that to the CG version of Paul when he’s tiny.”

That photography was handled using DNeg’s Photobooth photogrammetry approach. “We set up a big tent on one of the stages,” explains Wuttke. “We have it filled with digital SLR cameras in a fixed configuration and a series of polarized and unpolarized flash units. We bring in the actor in the suit into the volume, and photograph it from multiple angles. It gives us a geometry extraction, but also textures which are aligned with that geometry by virtue of being shot at the same time. Because it’s all cross-polarized we can extract specular and diffuse components from that as well. The other advantage of the cross-polarized photography is that when you extract the specular it also allows you, because you’re shooting from multiple angles, you can extract fine displacement detail on top of that.”
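The separation Wuttke mentions rests on a well-known property of polarized capture: a cross-polarized shot largely suppresses the mirror-like specular reflection, leaving mostly diffuse colour. A minimal sketch of that general principle follows. It assumes two aligned, exposure-matched linear images of the same pose and is not a description of DNeg's Photobooth pipeline; `separate_reflectance` is a hypothetical name.

```python
import numpy as np

def separate_reflectance(cross_polarized, unpolarized):
    """Split aligned, exposure-matched linear images into diffuse and specular parts.

    cross_polarized : image shot through a polarizer crossed with the flash polarizer
                      (specular highlights largely removed)
    unpolarized     : image of the same pose containing diffuse plus specular light
    """
    diffuse = np.asarray(cross_polarized, dtype=float)
    full = np.asarray(unpolarized, dtype=float)
    # Whatever the cross-polarized shot is missing is treated as specular;
    # negative differences (noise, misalignment) are clamped to zero.
    specular = np.clip(full - diffuse, 0.0, None)
    return diffuse, specular
```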

DNeg then modeled, textured and rigged the suit asset, ultimately sharing it amongst the vendors. Cloth sims were required for the leather outer and to deal with the fact that the suit was slightly baggy – a deliberate ‘vintage’ addition. For Morrison, this proved to be a factor in making the suit even more photorealistic. “Most CG suits that I’ve done tended to be pretty snug,” he says. “That’s cool and makes a good silhouette, but the one thing we always fight against – when you throw someone out a plane how do you show wind resistance and make the audience believe they are skydiving, falling or having some sort of interaction? Having a suit that was slightly looser meant we were able to run a lot of wind and cloth sims. As he runs there’s bunching. It allows you to get a lot more detail into the suit which takes the CG edge off it.”

Ant-Man’s extensive stunts, often parkour moves, made use of motion capture and looser, pseudo-mocap witness cam setups in Atlanta, along with individual vendor mocap additions. “We got some generic stuff of Paul running, his stunt guy landing and doing rolls,” says Morrison. “But we also got a huge amount of very specific actions where he was doing the classic performances of what he would be doing on a bluescreen or greenscreen normally where you’re saying ‘Right behind you there’s a massive rat the size of a skyscraper to you…’ We got Paul to act out all the key action sequences in the movie and we got that in the bag. Paul was in great shape and had done a lot of training too.”

Seeing Scott’s reaction to the predicaments he is placed in required close-ups on Rudd’s eyes. For that, and also for the eyes of Corey Stoll, the production embarked on a universal capture approach, filming the actors in various poses surrounded by multiple cameras and then compositing their eyes into the suit visor. “We match-moved that,” explains DNeg’s Oikonomopoulou, “and projected that back onto the body surface of the model – in comp they would bring back the eyes through the helmet with the expression.”

A signature effect when Ant-Man shrinks was the outline of his body, a graphic element that had been a feature of the comic books. DNeg worked on a look for the effect, which was developed further by ILM and adopted by all the vendors. “It’s like a little time echo,” describes Wuttke. “As Ant-Man shrinks in almost a stop motion way he would leave behind outlines of the poses he’d been in as he shrinks down. When the camera’s fairly locked you can get away with a more 2D approach, but when the camera’s in motion which more often than not it is, we’d have to go with a 3D approach. We’d have two CG cameras rendering the action from different points along the timeline with slightly different framings. One would be the main shot camera, the other would be a utility camera that would provide renders of static poses of Ant-Man at different points along the timeline. In the comp we could float in position and whoosh past the camera.”


Crafting Yellowjacket

Darren Cross’ (Corey Stoll) own attempts at inventing shrinking technology manifest themselves in a weaponized ‘Yellowjacket’ suit, which he uses to confront Ant-Man in several sequences. Double Negative built the suit as an entirely digital creation (a real suit was not on set) incorporating deadly ‘stingers’ and space age materials, also sharing the asset with other vendors.

For Yellowjacket sequences, Stoll wore a basic motion capture reference suit on set, but was always replaced with a CG character. Designs for Yellowjacket were aimed at demonstrating the suit’s advanced nature. “It had to be a fabric that didn’t exist in real life,” notes Morrison. “DNeg did some looks and we showed the studio. Then we took DNeg’s renders and passed them onto Marvel’s visdev department. They would paint on top of DNeg’s renders and we’d get something back that might not be easy to build geo-wise but did look ten times cooler. We’d give that to DNeg and they’d incorporate that. There’s a tendency when there’s a blank piece of paper to create fashion looks where the silhouettes are almost impossible to make in real life, but our process was more like 3D building blocks based on a person. You might bend the rules a little bit in terms of helmet size, but at least it was based on reality.”

“The yellow parts of the suit,” discusses Oikonomopoulou, “which were meant to be this ‘energy’ were the hardest things to conceptualize because it was meant to be something not of this world. We went for a paneling approach with gold threading. The animators also had to figure out the language of Yellowjacket’s movement, especially because he has a backpack with mechanical arms – do they drive him forward, or are they an extension of his suit? His chest was a bit more solid – it wouldn’t deform and bend. That was tricky to rig because he sometimes does superhero moves, so it’s hard to make these metallic panels not intersect. The only cloth part of him was some carbon fiber material around his arms and legs.”

Yellowjacket’s weapons were plasma cannons referred to as ‘stingers’. “They were like robotic appendages that are attached to his suit by a backpack that also has thrusters,” says Wuttke. “We went through a lot of animation iterations to figure out how they would move – the best way we could come up with to get what everyone was after, they almost had their own sense of AI in them and lock on to a subject.”

It’s an ant, ant world

Having learnt to communicate and control ants with the aid of technology from Hank, Scott finds the creatures to be helpful compatriots during his missions, even relying on one winged carpenter ant named Anthony for transport. Method Studios led the visual effects effort in creating several species of ants, which were then shared amongst the vendors. Luma, too, took on a number of ant swarming shots.

The ants of Ant-Man were based, of course, on real ants, but as crucial characters in the story that needed to essentially be heroes, they required a number of modifications. “When you look at macro photography of ants, they’re pretty terrifying,” notes Morrison. “But it was a big concern that the ants not be creepy or hairy. What we decided to do was take a cue from some Saharan silver ants that, in order to survive extreme desert temperatures, have developed a ‘silver fur coat’. If you look close enough it looks like combed hair or fur but actually when you step back a little bit it goes very shiny with an anisotropic profile to it – and it looks like steel or a beautiful suit of armor. Then we had four or five main ants that each had their own look.”

Those different ants included the carpenter ants, a flying version of the carpenter ant, bullet ants, fire ants and crazy ants – all of which Method sought reference on. Method modeled in Maya and ZBrush, with texturing in MARI and Maya and V-Ray for animation and rendering. “We found in our research that ants have mostly the same components,” says Method visual effects supervisor Greg Steele, “which helped in rigging because it meant we could mostly utilize the same character kit.”

The carpenter ants were realized as solid brown and black creatures, while the bullet, fire and crazy ants had more subsurface and translucent qualities. “The subsurface is totally dependent on what’s behind them – so it’s more than just subsurface, we combine it with refraction,” explains Steele. “Our turntables looked great on certain frames but horrible on others. So we created some vignettes to get the right look and did some early tests with macro photography tests of gears within a clock.”

Animating Anthony

“Anthony is a winged carpenter ant – he’s Ant-Man’s steed,” says Morrison. “The paradigm was that if this was Ant-Man’s steed, we’ll make the ant the size of a horse relative to Ant-Man. We had this big reveal where Anthony rescues Scott from outside the jail, and that was really conceived from reference of Black Hawk Down. The first time the winged ant arrives it’s a huge sound and emotional cue.”

Those vignettes also served as the starting point for what behavior each ant would exhibit. The bullet ants, for example, were determined to be the ‘muscle’ of the group. “They are giant tropical ants which are nasty and huge,” relates Morrison. “They can kill a person with a bite from their venom – they have a neuro toxin that can cause heart attacks. So we decided they would be the ‘Ray Winstones’ of the ant world – they were going to be the heavies. We took the real bullet ants and Method turned their hair into more like horns, not like rhino horns, but shrunk down to an eighth or tenth of the size, it starts looking like organic armor.”

Meanwhile, the fire ants were considered more like engineers – able to form rafts and bridges – and the crazy ants, the smallest of the group, were treated like puppies. “We tried to give them a playful goofy quality but they are actually quite menacing up close,” says Steele. “It’s funny, if you searched for ants working together on some websites it actually showed ants pulling other ants apart!”

Method’s main ant shots in the film occur as Scott is required to bond with the creatures in their backyard hives as he trains for the heist on Cross’ facility. Initially freaked out by the ants – resulting in several scenes of him bursting through the ground full-sized – Scott eventually learns to work with them.

A montage shows Scott, as a digital Ant-Man, running with the ants inside their tunnels. In general, Method approached animation (led by animation supervisor Keith Roberts) of the creatures by treating the ants less like insects and more like ‘noble beasts’. That meant slowing down their normal frenetic actions. “When Scott’s running with them,” relates Steele, “we had an almost Dances With Wolves shot with the swarms of ants, but we slowed them down to make them less insectoid and feel a little more relatable.”

For the hordes of ants, Method developed a component process to efficiently realize so much geometry on screen. “We broke out the different components of the body parts and those were normalized to zero,” says Steele. “Those were taken into Houdini and we set up a standard hierarchical rig inside Houdini that allowed them to re-parent all the pieces and create some standard rig motion. We created a two-part locomotion system. The first part was traversing the characters from point A to B, and it also had an AI system built in. If you threw 100 of them out there and say head to point A, some would slow down for each other or get out of each other’s way. The guys could introduce as much randomness in there as they wanted.”

“As the locomotion was solved,” continues Steele, “the second piece was the footwork that needed to happen. So after we solved the swarm, we applied the legs – it was all broken up and randomized. The feet would stick to the ground. Then we took all that information and we created a particle disk cache. Since we had normalized all the body parts out earlier, we were able to take that information and re-assemble the ants inside Maya at rendertime, and redistribute the ants across a surface and all their legs would be moving in the way they were in the Houdini file. It ended up being a really efficient way to do the work.”
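The two-part split Steele describes can be illustrated with a short Python sketch. This is not Method's Houdini setup: the function names, the "slow down if someone is close ahead" rule and every number here are assumptions chosen only to show the idea of solving root motion first and deriving footwork from it afterwards.

```python
import math

def step_swarm(positions, goal, speed=1.0, radius=0.5, dt=0.1):
    """Part 1: move each ant root toward the goal, slowing for ants ahead.

    Hypothetical rule: an ant halves its speed when another ant is both
    ahead of it (relative to the goal direction) and closer than `radius`.
    """
    new_positions = []
    for i, (x, y) in enumerate(positions):
        dx, dy = goal[0] - x, goal[1] - y
        dist = math.hypot(dx, dy)
        if dist < 1e-9:
            new_positions.append((x, y))  # already at the goal
            continue
        factor = 1.0
        for j, (ox, oy) in enumerate(positions):
            if j == i:
                continue
            ahead = (ox - x) * dx + (oy - y) * dy > 0
            if ahead and math.hypot(ox - x, oy - y) < radius:
                factor = 0.5
                break
        move = min(speed * factor * dt, dist)  # never overshoot the goal
        new_positions.append((x + dx / dist * move, y + dy / dist * move))
    return new_positions

def leg_phase(distance_travelled, stride=0.2, offset=0.0):
    """Part 2: turn distance covered into a 0..1 walk-cycle phase.

    Driving the cycle from distance (not time) is what keeps feet from
    sliding; `offset` is a per-ant random value that breaks up the crowd.
    """
    return (distance_travelled / stride + offset) % 1.0
```

In a setup like this, the per-ant position and phase values are the kind of compact data that could be written to a cache and used to re-assemble full ants at render time, which is the efficiency Steele points to.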

The tunnel environments were initially imagined – and actually shot – as real macro set pieces. “They built these huge models and structures and they shot motion control passes,” states Steele. “But because of story reasons and plot points, we couldn’t really use the footage, although it was still great reference.”

Method’s art department did further research of tunnel and soil reference, which had to be replicated in CG. “We started with using textures in the tunnels,” says Steele, “but even using vector displacement as high end as you go, but we knew we needed full geometry. We created a nice kit of stone and rocks and crystals and leaves and sticks. Then in Houdini we set up a system that allowed us to instance the geometry all over the surface so the artist could interactively determine size and placement and mixture and bias. Then we again wrote out a particle disk cache and that allowed us to instance all the rocks and geometry at rendertime in V-Ray.”
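As a rough illustration of that scatter-then-instance idea, the Python sketch below generates lightweight point records (position, asset name, scale) that a renderer could later swap for real geometry. It is only a sketch under stated assumptions: the record layout, the `scatter_instances` name and the weighting scheme are hypothetical, not Method's Houdini or V-Ray tooling.

```python
import random

def scatter_instances(count, kit, weights, scale_range=(0.5, 1.5), seed=0):
    """Scatter `count` instance records over a unit UV square.

    kit     -- names of the geometry pieces (rocks, leaves, sticks...)
    weights -- relative mixture of each piece; 0 removes it from the mix
    seed    -- fixed seed so the same layout is rebuilt at render time
    """
    rng = random.Random(seed)
    low, high = scale_range
    records = []
    for _ in range(count):
        records.append({
            "position": (rng.random(), rng.random()),  # UV on the surface
            "asset": rng.choices(kit, weights=weights, k=1)[0],
            "scale": rng.uniform(low, high),
        })
    return records

# Example: mostly rocks, a few sticks, no leaves.
cache = scatter_instances(200, ["rock", "leaf", "stick"], [3, 0, 1], seed=7)
```

The point of the design is that only these small records need to be stored and moved around; the heavy geometry is attached once, at the end.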

Root structures were also integral in selling the underground tunnels and also had the added benefit of, along with dust motes and atmosphere, producing dramatic lighting environments. “So we actually made those root structures available to the lighters,” notes Steele. “We had film noir reference for these shots, and the lighters could use the root structures to make interesting silhouettes and shapes.”

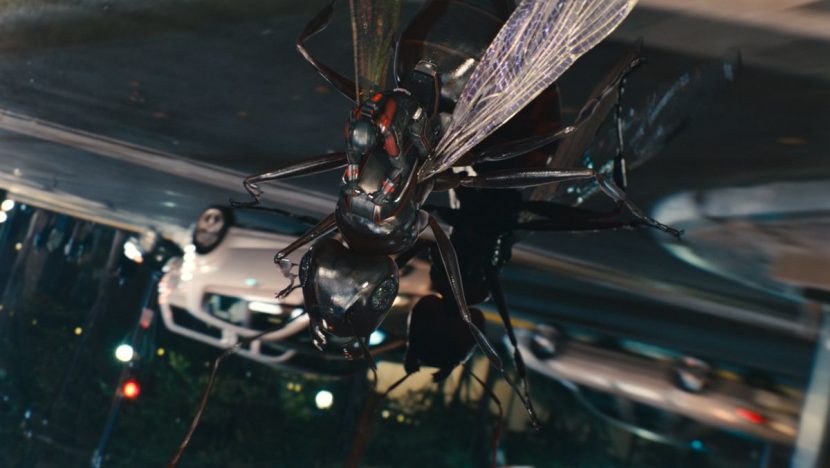

Ant-flight

Scott is arrested after trying to return the Ant-Man suit to Hank, but he is busted out of jail thanks to the ants bringing him the shrunk-down clothing. He eventually escapes on the back of Anthony. Double Negative utilized Method Studio ant assets to showcase Ant-Man riding Anthony through the streets of San Francisco, hanging onto police cars and other vehicles.

Live action background plates in San Francisco were acquired by shooting with a RED EPIC camera array on a Russian Arm vehicle. “That would provide driving plates that we could then freely move our 3D camera within,” states Wuttke. “The same camera vehicle also had a fisheye lens attached to an Alexa mounted on the side, which had enough range to give enough information to create a moving HDRI bubble within the same travel, so we could light our CG with that. Just to top off the range, we set it so it was effectively gathering low light up to the high end. For everything that clipped beyond that on the Alexa, we sent someone to shoot HDRI images of super bright light sources such as headlamps, lighting fixes, just to be able to augment our top end HDRI information with that.”

DNeg then built CG vehicles, police cars and a police light bar, while Ant-Man and Anthony were of course all-CG. “For this sequence,” adds Wuttke, “we had to play with the way that real world light sources like the light bar would behave if you’re only 5mm high. In terms of beauty lighting, a light source would absolutely wash all the way across you and it would be like being lit from stadium lights, even if it was just a light bulb. We also played around with chromatic aberration – the idea that a tiny lens viewing a bright light source, and how that would respond.”

Falcon fight

Conspiring to steal the Yellowjacket from Cross, Hank Pym and his daughter Hope (Evangeline Lilly) have Scott acquire a special device from Avengers headquarters. There, Scott encounters Sam Wilson or Falcon (Anthony Mackie) and the two engage in a tussle as Ant-Man evades the Avenger with his small size.

ILM was entrusted to pull off the effects for this sequence – having also delivered Falcon for Captain America: The Winter Soldier – in which Scott uses his small size to evade the winged Avenger. Live action was captured on stages at Pinewood in Atlanta as well as a roof section built in a parking lot surrounded by greenscreen. An open field nearby served as background plates and a location for some wire work, although many of the trees in these plates were replaced since they had been filmed in the fall and had no leaves.

Falcon, in an updated suit, uses his wings and a new visor to try and catch Ant-Man. The scenes required a combination of live action, digital take-overs and fully digital shots. “Legacy Effects built a new backpack and he had a new costume,” outlines ILM visual effects supervisor Russell Earl. “We had a scan of the actor in the costume and did a build from there.”

For fighting scenes, ILM tended to keep Ant-Man as a six foot character and then “scaled the world around him,” says Earl, “with the exception of when he’s interacting with Falcon, then we went with the half-inch size. Anthony Mackie did a great job of miming the fight. The actor would be told ‘he’s jumping on your shoulder, he’s hitting you in the chin’ and then we would take those beats and make them feel as if Ant-Man is seated in there.”

Pym raid

Ant-Man and his insect buddies infiltrate Pym Technologies while Cross is unveiling the Yellowjacket suit. They arrive via the water pipes, sabotage the servers and also evade security guards before successfully blowing up the building in a macro-like explosion.

Luma Pictures handled a number of sequences in the Pym raid, starting with Ant-Man’s ant raft as his team surfs through a pipe system. The studio first solved the look of the water. “Interestingly, water like that at a macro level is not interesting at all,” says Luma visual effects supervisor Vince Cirelli. “Because of the surface tension of water, you don’t get any detail, it looks smooth. It looks like a 1990s water simulation. That wasn’t going to fly for the movie, so we had to figure out a way to inject some interest into it but keep the macro feel. So we added a lot of particles and bubbles underneath the surface that get refracted and a lot of textural information to add detail.”

Once the sim of the water was done in the pipes, Luma then had to lay a crowd sim on top for the ants. For that they approached the crowd sim slightly differently than normal. “Usually,” notes Cirelli, “what happens with crowd sim software is you have a land-based territory and you place your AIs and paths on top of that. But in this instance we had a dynamically changing surface which was the water surface – it changes every frame. The ants are moving and crawling too. So on top of the water sim we had a target particle sim which defined the shape and volume of where the ants live and ride. The AI part – which we did in Houdini and Miarmy – targets those particles and tries to get to those particles. That’s the way we accommodate it – instead of paths, we make the ants just try to get to the particles on top of the water simulation. On top of that is Ant-Man who is hand-keyed over the top of everything.”
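A toy version of "chase the particles instead of following paths" might look like the Python below. Everything in it is an assumption made for illustration: the sine wave stands in for the water simulation, each ant is paired with one target particle, and the function names are invented. Luma's actual work was done in Houdini and Miarmy.

```python
import math

def water_height(x, t):
    """Stand-in for the water sim: a travelling sine wave (hypothetical)."""
    return 0.2 * math.sin(2.0 * x - 3.0 * t)

def target_particles(t, count=5, spacing=0.5):
    """Target points riding on the water surface at time t.

    These define where the ants are allowed to sit, and they move
    every frame because the surface underneath them moves.
    """
    return [(i * spacing, water_height(i * spacing, t)) for i in range(count)]

def chase_targets(ants, targets, max_step=0.1):
    """Move each ant toward its own target, at most max_step per frame."""
    moved = []
    for (ax, ay), (tx, ty) in zip(ants, targets):
        dx, dy = tx - ax, ty - ay
        dist = math.hypot(dx, dy)
        if dist <= max_step:
            moved.append((tx, ty))  # close enough: land on the target
        else:
            moved.append((ax + dx / dist * max_step, ay + dy / dist * max_step))
    return moved

# Run a few frames: targets are re-sampled each frame, ants keep chasing.
ants = [(i * 0.5, 1.0) for i in range(5)]
for frame in range(48):
    ants = chase_targets(ants, target_particles(frame / 24.0))
```

Because the ants only ever ask "where is my particle now?", nothing in the loop needs to know the surface is changing, which is the appeal of the approach Cirelli describes.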

Mounted on Anthony, Ant-Man and the other ants destroy parts of the Pym server room. Luma realized both wide shots at human-sized level and at macro scale, all in CG, pushing the sequence through their Arnold pipeline. “You’re building twice for everything – they are flying through the server room world but then landing on surfaces at macro level,” says Cirelli. “So the datasets are enormous. We had to build some advanced partition tools to be able to accommodate that and get that data through. We would make things hit just at render time through Arnold. We came up with some unique level of detail tools that worked on the realtime side and also worked on the rendertime side.”

Luma also developed its own method for cloth-sim’ing Ant-Man’s suit, an asset originally created by DNeg. “We came up with a tool called SuitUp,” says Cirelli. “It is a way to essentially pre-determine cloth simulation that’s triggered dynamically for every shot. The way it works is that you simulate starting out at the farthest extremities of the character how the cloth responds to different velocities and speeds and translations. You go through an entire huge library of this and create a huge dataset. Then based on the animation, the animation then runs and calculates the speed of certain translations and then pulls the pre-simulated cached out geometry with the wrinkles and folds with that specific action. What you get is something that’s pre-baked that you can put into every shot and basically the cloth sim’s done before you even enter the shot.”
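The core of a pre-baked approach like this is a lookup: measure how fast part of the character is moving, then fetch the nearest pre-simulated result. The Python sketch below shows only that lookup step. It is not SuitUp itself; the class, the cache names and the choice of a single speed value as the key are all hypothetical simplifications of what Cirelli describes.

```python
import math
from bisect import bisect_left

class PresimLibrary:
    """Maps sampled speeds to names of pre-simulated cloth caches."""

    def __init__(self):
        self._speeds = []   # kept sorted
        self._caches = []   # cache name for the speed at the same index

    def add(self, speed, cache_name):
        i = bisect_left(self._speeds, speed)
        self._speeds.insert(i, speed)
        self._caches.insert(i, cache_name)

    def lookup(self, speed):
        """Return the cache whose sampled speed is nearest to `speed`."""
        if not self._speeds:
            raise LookupError("library is empty")
        i = bisect_left(self._speeds, speed)
        candidates = [j for j in (i - 1, i) if 0 <= j < len(self._speeds)]
        best = min(candidates, key=lambda j: abs(self._speeds[j] - speed))
        return self._caches[best]

def joint_speed(p0, p1, dt):
    """Speed of a joint that moved from p0 to p1 (3D points) in dt seconds."""
    return math.sqrt(sum((b - a) ** 2 for a, b in zip(p0, p1))) / dt

# Hypothetical library: three pre-baked wrinkle sets for one limb.
library = PresimLibrary()
library.add(0.0, "arm_rest")
library.add(2.0, "arm_walk")
library.add(6.0, "arm_run")
chosen = library.lookup(joint_speed((0, 0, 0), (0.05, 0, 0), 1 / 24))
```

A production tool would key on far more than one number (direction, rotation, which extremity), but the trade is the same: spend simulation time once up front, then pay only a lookup per shot.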

SuitUp was used to animate Ant-Man, too, as he launches an attack on security guards who fire their guns at him while he escapes through an architectural model of the building located in a lab. A macro shot was required for one shot of Ant-Man parkour’ing onto the gun and running along its barrel. “We actually shot some of our mocap for that here at the studio,” states Cirelli. “We took the run cycle and blew away a lot of the noise on it, figuring out how to stretch the gait but still make it feel natural. We found on that we could stretch the gait a good 20 per cent and that was still within the realm of feeling like he’s running naturally. Beyond that it was hopping or skipping.”

As Ant-Man then runs through the architectural model it explodes in ‘mortar-like’ pieces around him due to the bullet fire. An actual miniature model was shot and destroyed during filming. To help plan the shoot, The Third Floor produced techvis to aid in how a Bolt high-speed Cinebot motion control rig equipped with a Phantom Flex 4K would capture the live action. “They needed to dig trenches in the model to allow for the cam and figure out how far it would be traveling since it would be shot at 1000 frames per second,” says The Third Floor’s Jim Baker, “so in techvis, we built schematics that helped with calculating camera speed and travel distance.”
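The arithmetic behind that kind of techvis calculation is simple enough to sketch in Python. The 1000 frames per second figure comes from Baker; the 24 fps playback rate and the example shot length and travel distance are assumptions for illustration, not numbers from the production.

```python
def highspeed_plan(screen_seconds, travel_m, capture_fps=1000.0, playback_fps=24.0):
    """Work out real-time duration and camera speed for a high-speed move.

    screen_seconds -- how long the shot should last on screen
    travel_m       -- how far the camera must travel during the shot
    """
    frames = screen_seconds * playback_fps   # frames needed for the shot
    real_seconds = frames / capture_fps      # how long the take actually lasts
    return {
        "frames": frames,
        "real_seconds": real_seconds,
        "camera_speed_m_per_s": travel_m / real_seconds,
        "slowdown": capture_fps / playback_fps,
    }

# Hypothetical shot: 5 seconds on screen, 0.6 m of camera travel.
plan = highspeed_plan(5.0, 0.6)
```

With those example values the take lasts only 0.12 seconds of real time, so the rig has to cover its 0.6 m at roughly 5 metres per second, which is why travel distance and trench length needed planning in advance.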

Luma took the real photography and also produced a digital version. “It was really hard to replicate the explosions practically especially at macro level,” says Cirelli. “The pieces visually move differently than what you’d expect, and adding things like the paper and foam core was a bear to make. In digital, we cheated it to be slightly faster than they would really be to be more dangerous, we added dust, and then the paper and foam core we had to match the buoyancy.”

https://youtu.be/y3iDwW9s1dY

Ant-Man and the ants successfully place miniature explosives in the particle containment chamber, which grow large and eventually destroy the building. Before that, Hank and Hope escape in a miniature tank that is made large, a digital Luma creation. The explosion – or implosion – of the building was devised as a multi-tier effects shot. “First we need to build these huge pieces and work out how they shrink,” says Cirelli. “Then we need another simulation where they crumble and fall apart as they’re coming in and scaling and changing shape. Then had to figure out the lighting effects and volumetrics that are happening at the same time. Fluids are great at expanding out, but trying to take a fluid and have it crushing into a tiny space is damn hard to do! The huge pieces are not trailing dust; the dust is preceding and pushing and pulling in to the center point.”

A brief case of flight

Cross attempts to escape the Pym building in a helicopter but is pursued by Ant-Man. The two fight aboard the helicopter but manage to be enclosed in a briefcase as it is flung outside the aircraft. Double Negative tackled the briefcase scene, making use of lighting from only specific sources such as a mobile phone screen.

The Third Floor previsualized the sequence, which needed to tell the story of the characters in freefall with a range of large objects tumbling around them. “Lighting was a big consideration as the only light sources we used were the cellphone and the lights on Ant-Man and Yellowjacket’s suits,” says Third Floor’s Jim Baker. “Having the phone as the major light source gave a great look that is similar to the sun in space, except our light was spinning all the time, which made for some nice lighting. When Yellowjacket destroys a lifesaver, we had a miniature asteroid field of debris flying around the briefcase. We ended up keyframing all of the action in the briefcase and added Trapcode shine in After Effects to certain shots in order to get the look of the props or characters throwing shadows through a dusty environment.”

In finalizing the all-CG shots, DNeg artists paid close attention to the detail of the objects inside the case. “We would get up close to a plastic USB key,” notes Wuttke, “so seeing the frayed edges of the plastic injection molding, fingerprints up close, different greasy specular response from that – that was important for scale cues, just to keep you rooted in being in a real environment.”

Lighting and light sources were also crucial. “You have to light your characters in different ways and decide on light sources,” states DNeg’s Oikonomopoulou. “There’s a mobile phone inside the briefcase and that was our main source of light. Everything is tumbling so you couldn’t have a basic setup that all your lighters would pick up to beauty light the characters – it was a constantly changing lighting environment. The animators were lending lighters a hand – they would try to frame all the props inside this case in such a way that it would be behind a character or it would be maybe out of shot but lighting the character.”

Off the rails

Catch a glimpse of the train sequence in this trailer.

Ant-Man faces Yellowjacket in his daughter Cassie’s bedroom, a sequence that involves them fighting as both large and small characters, and even a confrontation atop a Thomas the Tank Engine train set. Shots at macro level during the battle were orchestrated by Double Negative, while ILM handled the characters when they were full-sized.

Again, The Third Floor aided in imagining the sequence in previs form. “One big selling point,” notes Third Floor’s Jim Baker, “was that while in the macro world of the characters, the camerawork would be done just like if it had been a full-sized train. We used booms, helicopter shots, handheld on the roof of the train and tracking shots. For the shot of Ant-Man falling and shrinking we referenced paratrooper action, which was of course complicated by having him shrink in the shot. We really wanted to sell the scale of Thomas and give the feeling of jeopardy to the characters. Then, anytime the action would pop out to Cassie’s view of the situation, the action would play out with the physics of toys, which lent for some of the funniest shots in the movie, when Yellowjacket is hit by the train. One thing we used in this and other sequences was the snap zoom, which is normally reserved for finding high speed aircraft with long lenses. VFX supervisor Jake Morrison came up with the idea to use it to ‘find’ Ant-Man in a big environment.”

Double Negative’s work began with an extensive photo shoot of the bedroom set. “Main unit would be in there with the full-sized actors prior to them going small,” says Wuttke. “Then they’d go small – you’d still be in that environment but down a tiny scale and it would have to be CG. So we decided early on that we were going to need that set for the best part of two weeks to go in and photograph and scan. When we first walked onto the set once the production designer had put his finishing touches to it, the amount of detail in the room was astonishing. There’s toys everywhere, little trinkets, a bookshelf full of stuff.”

The macro unit employed the three 100mm Canon 5D rigs with the robotic heads for the shoot. Says Wuttke: “We’d position each of them at slightly different vantage points around a feature, photograph that – a region of a foot and a half across would give us a 20K image that was infinitely sharp once we stitched all the photo brackets. We’d get three of those for an area and move onto the next area, then repeat the process, so that we ended up with a 360 degree feature set around a specific area.”

B-roll from the bedroom fight.

“We cataloged each of the props, too, and took them into a blackout tent and shot cross polarized photography on little turntables. We would have the base environment and props and completely re-build that in CG. We could move the camera wherever we wanted to go and have a faithful reproduction of the actual room.”

Postvis

“On the set,” says The Third Floor’s Jim Baker, “Paul Rudd was shot without the faceplate of the mask and Corey Stoll’s Yellowjacket suit was also added in post. PFTrack and Boujou were used to track the shots and then the mask and suit were added in postvis. Yellowjacket’s mechanical arms were animated to interact with the action that was shot. We also tracked a background into the blue screen they had placed for the spot where Thomas the Train causes a hole in the room. We had previs’d the shots of the train flying out and crushing the police car and we postvis’d them after they were shot. Action Essentials was used to add debris effects in After Effects. Thomas’ weight was important to get across in the postvis animation. We also added the enlarged ant that runs down the stairs and out of the house, startling the characters.”

As had been foreshadowed in the previs, a large part of the final sequence in macro was achieved with virtual cinematography. “That’s really part of the charm of the sequence,” suggests Wuttke. “It’s an action sequence and you need to shoot it like an action sequence. One of the problems you have in a CG world is that you remove constraints from the camera, which can be a dangerous thing because you end up with camera moves that can be wholly unbelievable, and that detracts from the realism of the sequence you’re trying to portray. So wherever possible we had to mimic real cinematography techniques – when they’re fighting it out on top of the train set, we shot it like an aerial, as if a helicopter was flying over the top of the train inherent with all the rumble you get in the camera mount. Then down on the carpet and he’s leading his ants on the charge – well maybe we’ve got a motor bike with a pillion rider and a camera on his shoulder and he’s capturing the action.”

Explosions were an extra effect required for the Ant-Man/Yellowjacket battle – the result of Yellowjacket’s stinger blasts. An element shoot conducted in Atlanta helped serve as the basis for these explosions. “We tried to do it in a macro style,” says Wuttke. “We got the SFX guys to rig things with tiny little charges, and tried different styles of explosions. We shot that on a Phantom at high speed. So if we were going to blow up a car and it’s a toy car, we thought let’s not do the massive four foot bigature of a car with gasoline. Let’s get a little toy car and rig it up with some tiny little charges and blow it up – and shoot it in macro. It led to some things we didn’t expect to see – macro flames. It has a very different flavor to a petrol or pyro and we used that as reference for our FX teams to build these in simulations.”

Quantum journey

In order to defeat Yellowjacket, Ant-Man must shrink to a molecular size to penetrate Cross’ suit, but then Scott continues to shrink to subatomic levels. ILM delivered an array of microscopic and largely psychedelic imagery for the subatomic shrinking, taking advantage of procedural fractal rendering techniques the studio had utilized on Lucy.

To handle the journey Ant-Man takes, ILM kept the character the same size while growing the world around him. “One of the things we found,” notes ILM’s Russell Earl, “was that as soon as you start to shrink a character, they always feel like they’re falling. Initially it was more like he was floating or shrinking, then it was more like diving in a squirrel suit. We quickly came around at the end to somewhere in between. He’s not necessarily falling through space but the world is growing around him.”

The imagery included more traditional electron microscope-like material but soon became ‘trippier and stranger’, according to Earl. “Then we travel into the nucleus and for that we used a fractal tool that let us create pieces, but then we could freeze out that geo and instance the geo into that and tweak it. From that world we went into the kaleidoscope lens which was the ‘quantum mirror’ section. Then we get into the void – the last step through – which was a big open empty space.”

The fractal imagery made use of R&D done with fractal rendering for ‘inside the brain’ shots in Lucy, led by effects artist Florian Witzel (see our coverage here). “I was joking with Florian that he had to come up with a really cool name for the system that he built,” says Earl. “It got to the point where we had things like ‘the raw chicken and sprite’ look. We had found a bunch of images – I could find an image online that looked like raw chicken in sprite and some of the look ends up in the sequence. Florian could turn around in a day or two to that look. The tool could create it in a procedural way in infinite levels of detail.”

Ants on their minds

For Morrison and visual effects producer Diana Giorgiutti, Ant-Man proved to be an enormous undertaking. Apart from the many hundreds of shots, careful co-ordination was required amongst vendors which would be sharing detailed environments and assets across the globe. However, much of that was done seamlessly. “These days on big visual effects films you’ve got a bunch of big vendors and there’s normally a lot of shared assets and cross-pollination, but never have I seen as much as we have on this film,” says Giorgiutti.

Detailed research into macro photography and the lives of ants – arguably the silent stars of the film – also had an interesting impact on the visual effects team. “You will never want to tread on an ant again,” suggests Giorgiutti, who also recalled the moment Marvel executive producer and executive VP of visual effects & post-production Victoria Alonso exclaimed one morning, ‘You’ll never believe it, I found an ant in bathtub, and I saved it. I was talking to it!’

All images and clips copyright 2015 Marvel Studios.
