The opener trailer of the 16th Annual D.I.C.E. Awards has gained a lot of interest for its wonderful mix of action game recreations and visual effects humor. We take a look behind the scenes of how it was created.

The DICE opener 2013

The idea for the opener came from NoodleHaus (part of Hamagami / Carroll Inc), a 20-year-old creative agency whose web site explains that it makes “campaignable content utilizing remarkable creative that taps trigger-points deep in each consumer.” NoodleHaus has done many AAA game campaigns, from trailers, CGI and on-disc content to live shoots and interviews/word of mouth.

NoodleHaus Sizzle reel (as a company)

NoodleHaus, in turn, went to Motion Maven to realize the vision, which included an ambitious full live action recreation of an American Revolution battle sequence in the mountains of Simi Valley as a tribute to Assassin’s Creed 3. “Armed with a crew of amazing film production mavens, we charged into battle, the rain pelting our faces as we massacred redcoats one by one, until the sun dipped behind the undulating blood-soaked hills, and the assistant director called ‘Cut!’” Motion Maven produces everything from internal sizzle reels to documentaries and broadcast television campaigns, and is a long-time collaborator with NoodleHaus.

Behind the Scenes of the DICE 2013 opener

To produce the VFX, Motion Maven turned to the new Locktix in Venice Beach, LA. Locktix does a lot of 911 work, but for this project it was brought in early, one or two weeks before principal photography. “They needed some guidance on how to shoot this thing and how to push it, and I am so glad we were brought in early,” explains Gresham Lochner, owner of Locktix, to fxguide this week.

Founded in 2010, Locktix prides itself on VFX artistry and focusing on the fine details, and the company has a very strong heritage in compositing, its team having worked at companies such as MPC, R&H, DD and RSP on films like Harry Potter, Tron and Real Steel. It has only three full-time staff, hiring teams on a project basis.

Shooting took place in mid to late December 2012, with full turnover of the VFX plates in early January, which allowed about three weeks for VFX post. Locktix “did just about everything (VFX-wise) except for the heads-up displays – that was done by the ad agency themselves,” says Lochner.

The project is remarkable not least for merging the key character assets of some of the biggest games companies in the world. Even so, it ran incredibly smoothly, although each company was careful to guard the accuracy and fine detail of the ‘look’ of its hero game characters.

Lochner was on set supervising but says, “I was pleasantly surprised with the amount of VFX knowledge. Motion Maven had a very savvy DOP and director, which really made our job run smoothly.”

The project was shot on the Alexa in Log C with in camera recording at 1920×1080.

Half Life 2

The trailer begins with a Messenger and an Engineer, who represent the Academy, dispatching this year’s nominations.

The device that stamps the nominations was the first thing shot in production.

So, where is the Half Life reference? “Look closely at the Portal gun,” jokes Lochner. “Just an Easter egg for anyone who wants to freeze frame.”

Portal gun

Borrowing from Portal 2, of course, is the critical Portal gun.

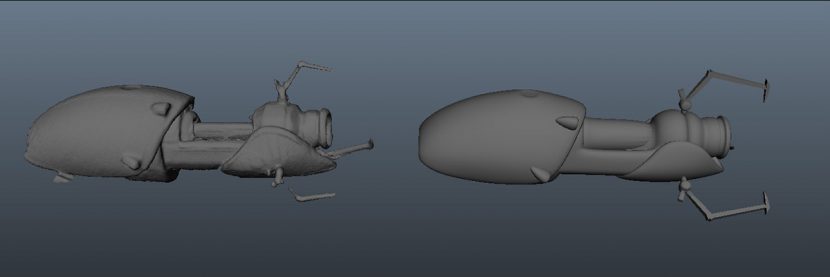

For the portal mist coming out of the gun, Locktix needed to object track the weapon and attach particle emitters to the front of it. “Rather than use photogrammetry or modeling off of reference photography, we wanted a more accurate model, faster,” says Lochner. “We’ve been looking at scanning solutions for a while and finally had an opportunity to explore it further. Unfortunately the solutions we found were all too slow and expensive for this particular situation. We knew we didn’t need a super detailed mesh so we looked at alternate solutions and finally settled with using an XBox Kinect for mesh generation. It was simple, fast and effective to capture our asset that way. The mesh comes in with quite a heavy poly count so we had an artist re-create a low poly version that was identical. This gave us accurate proportions much faster than having an artist guess with reference photography. It tracked perfectly.”

The on set team rigged the portal gun on a C-stand and manually scanned it. “We noticed that the Kinect was sensitive to certain types of reflected light angles,” notes Lochner. “We’ve also been looking into a more automated way of asset acquisition that wouldn’t rely on an operator manually scanning the object in. (Imagine a reversed lazy susan corkscrew design).”

For object tracking, like the camera tracking, the team used 3D Equalizer (3DE). Lochner speaks very highly of 3DE. The camera and tracking software is used by a killer list of high end facilities that as of this week included: Double Negative, Framestore, Cinesite, Weta Digital, Sony Pictures Imageworks, Prime Focus, MPC, Animal Logic, Image Engine, Laika, Hybride, Crazy Horse, Stereo D, Deluxe/Method Studios, Mokko, New Breed VFX, Baseblack, Reliance, Scanline VFX, RSP, WWFX, and game developer Ubisoft.

Much of the success of 3DE’s parent company comes from the very close relationship the developers have with the actual artists in these companies. Many equipment suppliers speak of working closely with customers; Science.D.Visions has made it almost its entire marketing approach. “Our “secret sauce” is customer support. I almost spend half of my time answering question, bug report and feature request emails, every day! Our turnaround times are typically quite fast – it’s our goal to reply to/solve a problem within 24 hours.

“Constantly being in close contact to the actual users at the forefront has a number of positive side effects. First, we learn about 3DE related problems and new developments in the scene very early (such as stereo, the renaissance of anamorphic, etc.). Second, I would consider a significant number of people, I learned through our business, to now be friends of mine,” says Rolf Schneider, CEO of Science.D.Visions, speaking to fxguide from Dortmund, Germany.

In addition, the developer and even the CEO do actual tracking jobs for a German friend on a regular basis – mostly quick commercial work – aiming to use 3DE on actual projects to learn about bugs and design flaws. The team has also done regular long-term engagements working from inside Weta Digital in New Zealand. “Same thing with my engagements at Weta. Last time I joined them was last September, where I worked on Hobbit and Man Of Steel for six weeks. I was able to collect a huge number of new ideas and found tons of bugs, which we already took care of (final R2), or will take care of in the future,” says Schneider.

Locktix uses the newer 3DE4, but R1 of the software. The move from release 3 to 4 brought major improvements, especially in speed. “3DE4’s calculation core is roughly 50-100 times faster than 3DE V3’s. With calculation core we refer to the solver which transforms 2D tracking data into a full 3D solve (camera/object animation and point cloud).”

Science.D.Visions sells two versions of the 3DE4 software: R1 and R2. Locktix uses R1, which is sold for under half the price of R2 and especially targets smaller companies like Locktix as the developer moves to widen its reach.

The major new features of the 64 bit R2 version that Weta, DNeg and others use are:

– calculation engine: calculation of sync’ed cameras (mocap and witness camera setups, for mono and stereo)

– an entirely new autotracking engine

– display of undistorted footage in realtime

– hidden line rendering of imported geometry (OBJ)

– motionblur display

– streamlined lineup controls

– comprehensive python interface update (incl. the ability to develop non blocking GUI “plugins”)

During its regular calculation process, 3DE4’s new calculation core applies a unique technique which automatically computes individual weighting curves for each point on a frame-by-frame basis. It allows the identification of so-called outliers on-the-fly (points of inconsistent or totally wrong 2D tracking) that can then be removed.

As most fxguide readers would know, almost every 3D tracking program has a confidence level that allows tracking outliers to be ignored – it is fairly standard in any 3D track. Actual tracks / mark tracks are often shown green / orange / red and have error margins or deltas allowing filtering. But this is a confidence on the 2D track itself. By increasing the “weighting” of specific tracking points, their 3D reconstruction and thus “visual” quality in respect to the underlying footage can be improved. This is an approach that the 3DE team introduced themselves about 15 years ago.

The new thing about 3DE4’s calculation core that worked so well for Locktix is that it is able to compute individual point weights on a frame-by-frame basis for each point – essentially doing an outlier analysis while computing a solve. This refers to the 2D -> 3D calculation process (not 2D tracking per se). “It is really fantastic,” says Lochner of 3DE. Locktix has pushed the software much further on other projects than it had to on the DICE opener.
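To make the idea of weighting points while solving more concrete, here is a minimal Python sketch. It is not 3DE4’s solver, whose internals the article does not describe. It swaps in a generic technique (iteratively re-weighted least squares with a Tukey biweight) and a toy one-frame “solve” that only estimates a 2D offset. The function names, the `min_scale` floor and the sample points are all assumptions made for illustration.

```python
from math import hypot
from statistics import median


def tukey_weight(residual, scale, c=4.685):
    """Tukey biweight: close to 1 for small residuals, exactly 0 beyond c * scale."""
    u = residual / (c * scale)
    return (1.0 - u * u) ** 2 if abs(u) < 1.0 else 0.0


def solve_frame_offset(observed, predicted, iterations=10, min_scale=0.1):
    """Toy one-frame 'solve' (an assumption, not 3DE's method): find the 2D
    offset mapping predicted points onto observed 2D tracks, recomputing a
    weight for every point on each pass so bad tracks fade out of the fit."""
    weights = [1.0] * len(observed)
    dx = dy = 0.0
    for _ in range(iterations):
        total = sum(weights)
        # Weighted least-squares estimate of the offset
        dx = sum(w * (o[0] - p[0]) for w, o, p in zip(weights, observed, predicted)) / total
        dy = sum(w * (o[1] - p[1]) for w, o, p in zip(weights, observed, predicted)) / total
        # Per-point residual in pixels under the current estimate
        residuals = [hypot(o[0] - p[0] - dx, o[1] - p[1] - dy)
                     for o, p in zip(observed, predicted)]
        # Median-based spread estimate, floored at min_scale pixels
        scale = max(1.4826 * median(residuals), min_scale)
        weights = [tukey_weight(r, scale) for r in residuals]
    return (dx, dy), weights


# Hypothetical data for one frame: five tracked points, one of them badly tracked
predicted = [(100.0, 50.0), (400.0, 80.0), (250.0, 300.0), (620.0, 410.0), (90.0, 380.0)]
observed = [(x + 2.0, y - 1.0) for x, y in predicted]
observed[3] = (predicted[3][0] + 30.0, predicted[3][1] + 20.0)  # the outlier

offset, weights = solve_frame_offset(observed, predicted)
print(offset, weights)
```

Running the same function once per frame would yield a weight per point per frame, which is the shape of result described above. A production solver would estimate camera and object motion rather than a simple offset.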

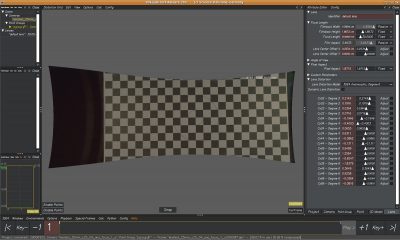

Locktix uses the Weta public domain 3DE lens distortion tools “which is really, really helpful”. The Weta Nuke Node is essentially an interface between Nuke and 3DE’s LDPK. “This means, it allows you to make any 3DE lens distortion plugin instantly available within Nuke (such as our standard models, as well as any 3rd party distortion plugin),” explains Schneider. An example of the LDPK is that the Arnold Lens Distort allows the lens distort worked out in 3DE to be rendered into outputs from Arnold with the 3DE lens distortion models. There is also a plugin for After Effects available.
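For readers unfamiliar with what a lens distortion model does, the following Python sketch shows a generic two-coefficient radial model and its numerical inverse. This is an illustration only: it does not use the LDPK, the Weta Nuke node or any 3DE distortion model, and the coefficient values and function names are assumptions chosen for the example.

```python
def distort(x, y, k1, k2=0.0):
    """Apply a simple radial distortion to a point in normalized image
    coordinates (origin at the lens center). Generic textbook model."""
    r2 = x * x + y * y
    s = 1.0 + k1 * r2 + k2 * r2 * r2
    return x * s, y * s


def undistort(xd, yd, k1, k2=0.0, iterations=20):
    """Invert distort() by fixed-point iteration. This converges for the
    moderate distortion assumed here; extreme lenses need a sturdier solver."""
    x, y = xd, yd
    for _ in range(iterations):
        r2 = x * x + y * y
        s = 1.0 + k1 * r2 + k2 * r2 * r2
        x, y = xd / s, yd / s
    return x, y


# Hypothetical coefficient: mild barrel distortion
k1 = -0.1
original = (0.8, 0.45)
warped = distort(original[0], original[1], k1)
recovered = undistort(warped[0], warped[1], k1)
print(warped, recovered)
```

Using one model for both directions is what lets CG be rendered undistorted, tracked against the undistorted plate and then re-distorted to sit correctly over the original photography.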

“Over the years I’ve done a lot of matchmoving,” says Lochner. “I started doing matchmoving at Giant Killer Robot. SynthEyes is good, a lot of packages are good, even Nuke’s tracker, but what we really like about 3DE is their lens distortion stuff, especially if you are doing say anamorphic crop plates. I’ve seen insane radial distortion. I don’t find the amount of control in any other software package that I do in 3DE. We are diehard fans of 3DE especially since Weta was generous enough to open source their lens tools for Nuke – we can’t use anything else.”

The portal

Getting the look of the portal itself took some doing. “There was that live action portal short done about a year ago, and up until that was done, no one had seen a live action portal before,” says Lochner. “But after that film, it was assumed ‘that’s what a portal should look like’. Which is all a bit unique – we were interpreting the game via the live action film, and they are slightly different, as we discovered as we really researched the game and how these portals work. So we ended up with a middle ground, something in between the live action version that had already been done and the actual game – and they were very happy with that.”

Above: the wonderful Portal: No Escape live action short by Dan Trachtenberg was referenced by the Locktix team. (VFX supervisor Jon Chesson).

Master Chief

The first major character to be seen is Master Chief. “Microsoft were very particular,” says Lochner. In fact, Microsoft recently set up a new company to expand the Halo universe not only into a new trilogy but also into ‘transmedia’, so it is naturally very protective of one of the most beloved CG games characters of all time: Master Chief. A character like this is considered a very real corporate asset by any large company such as a Disney or McDonald’s. In a move similar to how McDonald’s manages the live appearances and filming of Ronald McDonald, Master Chief could never be photographed with helmet off, or even seen behind the scenes. “We couldn’t interact with him directly – he had a handler and we could only talk to the handler and we couldn’t be there when he took off his face mask.” The actor inside the suit required some helmet-off time every 20 mins as the suit can apparently get very hot under studio lights. “We were not allowed to be around him so we couldn’t see Master Chief while he did not have his face (mask) on, since in the game he never takes his face off.”

In the cave set when the portal gun is first fired, Halo’s Master Chief is seen firing. Master Chief’s gun was built around what had been “some sort of paint gun that fired special zinc rounds that make a spark… we augmented that and added additional flashes etc,” says Lochner. Some of the explosion hits are real, some are fully digital.

Maya

Borderlands 2 from 2K Games/Gearbox Software was honored in the trailer with Maya literally dropping in from a barn fire fight through her portal gun shot.

Characters such as Maya have very distinctive colors, and interestingly the final grade of the project was done in Apple Color, by Motion Maven at the very end of the process. The director had gotten most of the looks he wanted in camera so the Locktix team just aimed to match ‘unity’ to the plates and then the combined shots were graded in Color. The project did not require heavy grading or stylistic work.

Lieutenant Commander Shepard

The Mass Effect 3 sequence in space was shot at the same time as the nominations factory, with a large on set greenscreen. This allowed Locktix to add the space shots and their lens flares as Maya gives Commander Shepard his nomination. BioWare were on set, too. “They went all in on getting it correct,” says Lochner. “They sent down the Mass Effect suit and we were all really trying to pay attention to details of the game to make sure it looks identical.”

Zombies (no, not Walking Dead…)

At first viewing, the zombie sequence was thought by many on the internet to reference The Walking Dead, but not so. For a start, the zombies are more like the ones in Resident Evil than those in The Walking Dead, and that game has very little gameplay where the protagonist fires a gun (The Walking Dead game is not a first person shooter). This was a point Lochner confirmed: “The zombies were just generic zombies since there were several zombie games released in the past year.” At one point there was discussion of giving the zombies red glowing eyes in post production, but for creative reasons this was dropped.

Jason Brody

The Far Cry live action sequence is one of the few that Locktix did not need to work on. In the quick sequence we see Jason Brody buried to the neck in sand, gun at his head as he gets his nomination. This was actually handled internally by NoodleHaus’ in-house designers.

Baldini number 22

A FIFA ref calls a nomination card on player 22, Baldini – which by the way just happens to be a FIFA version of director Tim Baldini. Commenting on the project the director stated that, “the overall concept was to make the most epic gamer / fan film we could make, obviously we went for the most popular games of the year.”

The director was very involved with the post process and this worked well with Locktix’s approach of showing work frequently and early to directors, unlike some other post or effects houses. Locktix is heavy on “client in attendance,” says Lochner. “We have the clients come in and we like to dial in things together. If you have a vision let’s get it happening right here right now.”

The FIFA sequence was one of the only sequences not shot with the Alexa. To get the locked in camera angle this was done with a wide angle GoPro on a rig, a technique Motion Maven had used before, with the rig removed in post later. “We made a carbon fiber rig that comes from the front (chest) and all the way around to the back – and that holds the GoPro and we had a second GoPro off to the side of the rig, so we were able to get coverage for the rigging to make the paint out easier,” explains Lochner.

‘Chen’ Desert traveler

Indie game Journey would go on to score big at this year’s DICE awards, especially in the categories of music and sound design but also casual game of the year and outstanding innovation in gaming. The game itself is beautiful and only available on Sony PlayStation. While Thatgamecompany won eight of its 11 categories, including game of the year, the project nearly bankrupted its developer, game designer and company co-founder Jenova Chen (ex-EA) said in an interview with Polygon. The game was honored in the opener with a brief shot in keeping with the enigmatic game itself, looking out over sand dunes to a distant figure.

Connor (Ratonhnhaké:ton)

The huge finale of the opener was reserved for Connor from Assassin’s Creed 3.

This was the largest live action location shoot. To expand the crowds of the battle sequence, Lochner used additional plate photography to pepper in all the extras – “all fun visual effects magic,” he jokes.

Arrows that kill people clearly were added in post, but the engineer fired his own real arrows in the ‘factory’ location. The team shot a set of fx elements such as smoke and atmosphere to be able to add to shots.

The arrows and blood were composited in Nuke. The company has a complete Linux pipeline. Locktix built a solid linear color space, Python/Linux pipeline, and is careful to source projects that work with that pipeline so it doesn’t have to spend time on building tools and can instead just focus on “the artistry”.
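Since the footage was recorded in Log C and the pipeline works in linear color space, plates have to be linearized before compositing. The Python sketch below shows what that conversion looks like using ARRI’s published LogC (v3) curve. The article does not say which exposure index was used or how Locktix performed the conversion, so the EI 800 constants and the function names here are assumptions.

```python
from math import log10

# ARRI LogC (v3) curve parameters for EI 800 (assumed; EI is not given in the article)
CUT = 0.010591
A = 5.555556
B = 0.052272
C = 0.247190
D = 0.385537
E = 5.367655
F = 0.092809


def linear_to_logc(x):
    """Encode a scene-linear value as a LogC signal value."""
    return C * log10(A * x + B) + D if x > CUT else E * x + F


def logc_to_linear(t):
    """Decode a LogC signal value back to scene-linear."""
    if t > E * CUT + F:
        return (10.0 ** ((t - D) / C) - B) / A
    return (t - F) / E


# 18% grey lands at roughly 39% signal on this curve
grey_signal = linear_to_logc(0.18)
grey_linear = logc_to_linear(grey_signal)
print(grey_signal, grey_linear)
```

In practice this step is normally handled by a colorspace setting or LUT in the compositing package rather than by hand-written code; the sketch only shows the math that such a setting applies.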