Epic Games has released version 4.20 of Unreal Engine 4 (UE4), its popular game engine. 4.20 has been in beta for some time now, and the new release includes some impressive new features, including:

- Rendering updates, such as a new Depth of Field and area lights

- Digital human improvements

- New Niagara particle effects

- Animation and ProxyLOD improvements

- Various video, compositing and audio improvements for virtual production

In the enterprise space, Unreal Studio 4.20 includes upgrades to the UE4 Datasmith plugin suite, such as SketchUp support, which make it even easier to get CAD data prepped, imported and working in Unreal Engine. These improvements are key to photorealistic real-time visualization across set design, automotive design, architectural design, manufacturing, and more.

Rendering Updates:

One of the strengths of UE4 has been its continuing improvements in render quality. Game engine output, once very noticeable and ‘gamey’, now looks very close to that of offline renderers. We are yet to see full ray tracing as standard, but this latest round of improvements once again raises the bar. As a result, engines like UE4 are of increasing interest to visual effects and animation companies, and virtual production with real-time engines is growing as production looks and real-time looks converge.

Cinematic Depth of Field (DoF).

Depth of Field is greatly improved in 4.20. Older DoF approaches could produce a slight band or edge around defocused objects, especially in the foreground. 4.20 has a brand new implementation of Depth of Field, designed to achieve cinematic quality, which replaces the Circle DoF method. “Our new Cinematic Depth of Field is, in my opinion, the best in the industry,” commented Kim Libreri, CTO of Epic Games. “The team have done a great job, and it is fully controllable.”

The new implementation of DoF is faster and more natural, and has alpha channel support. It supports dynamic resolution stability, the ability to scale down, and procedural bokeh simulation. The procedural bokeh simulation allows artists to configure the number of blades in the ‘lens’, adjust the diaphragm and configure the curvature of the blades.
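To make the blade controls concrete, here is a minimal Python sketch of how a procedural bokeh shape could be described. It is not Epic's shader: it assumes a unit-radius aperture, models straight blades as a regular polygon, and treats the hypothetical `curvature` parameter as a simple blend between that polygon and a circle.

```python
import math


def aperture_radius(theta: float, blades: int, curvature: float,
                    rotation: float = 0.0) -> float:
    """Radius of a unit-size aperture outline at angle theta (radians).

    blades:    number of diaphragm blades (at least 3)
    curvature: 0.0 gives straight blades (a regular polygon),
               1.0 gives a perfect circle (hypothetical parameterization)
    rotation:  angle of the first polygon vertex
    """
    if blades < 3:
        raise ValueError("need at least 3 blades")
    sector = 2.0 * math.pi / blades
    # Local angle within one blade: 0 at the middle of an edge,
    # +/- sector/2 at the polygon vertices.
    local = (theta - rotation) % sector - sector / 2.0
    # Distance to the straight edge of a polygon inscribed in the unit circle.
    polygon_r = math.cos(sector / 2.0) / math.cos(local)
    # Linear blend toward the circle (an approximation of curved blades).
    return (1.0 - curvature) * polygon_r + curvature * 1.0


def inside_bokeh(x: float, y: float, blades: int, curvature: float,
                 rotation: float = 0.0) -> bool:
    """True if the point (x, y) lies inside the aperture shape."""
    r = math.hypot(x, y)
    theta = math.atan2(y, x)
    return r <= aperture_radius(theta, blades, curvature, rotation)
```

A DoF pass could use a test like `inside_bokeh` to shape each defocused highlight. Blending the radius linearly does not produce exact circular arcs, but it shows how a single curvature value can move smoothly from a hexagonal (six-blade) bokeh to a round one; the real implementation runs on the GPU and also scales the shape with the diaphragm setting.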

Digital Humans Improvements

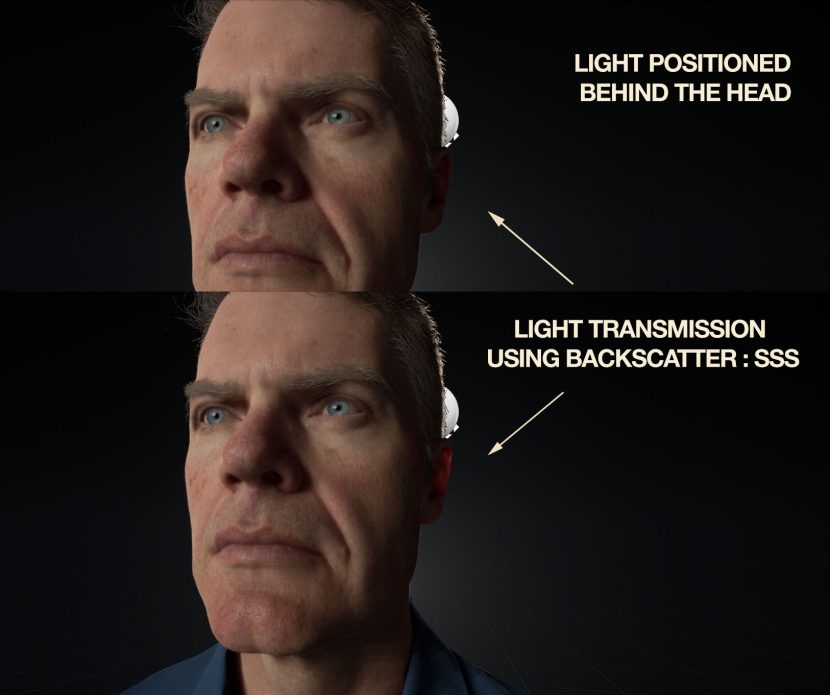

4.20 includes a set of improvements targeted at both skin and eye rendering, including dual lobe specular for skin, backscatter subsurface scattering (SSS), an improved eye material model and more.

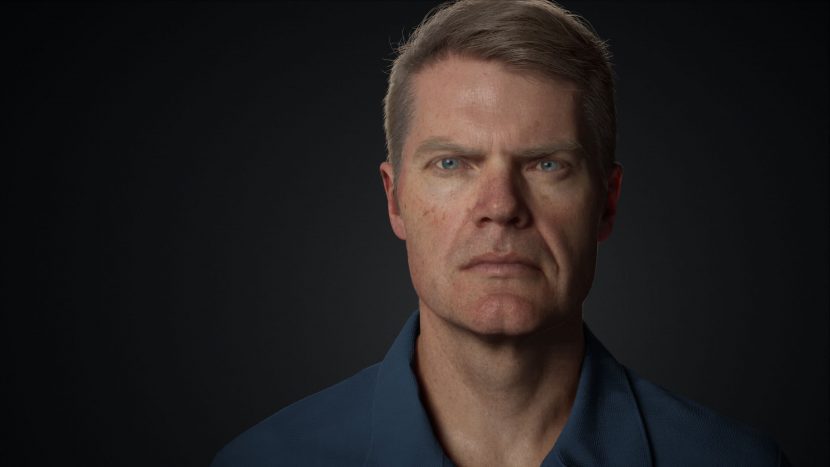

We are proud to say that shipping with version 4.20 is a copy of our own Mike Seymour. Digital MIKE uses these new SSS and skin solutions. This static version of Mike is complete with hair and eye shaders. This is based on last year’s SIGGRAPH MEET MIKE.

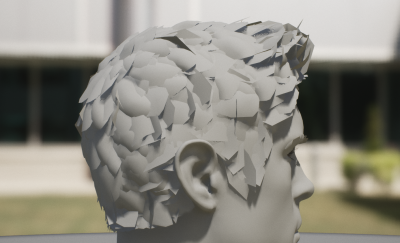

MEET MIKE was a WikiHuman project for SIGGRAPH 2017 and was followed up with the SIREN project. This free version of digital MIKE is based on last year’s MIKE, with some minor improvements. The original version ran in a custom build of UE4; it now runs in 4.20, with some additional new features. For example, this version of MIKE has peach fuzz venus hair (see above).

New features include:

- Added a new Specular model with the Double Beckman Dual Lobe method

- Light Transmission using Backscatter for SSS Profiles.

- Better contact shadowing for SSS with Boundary Bleed Color.

- Short distance dynamic irradiance, through Post Process Materials in this version.

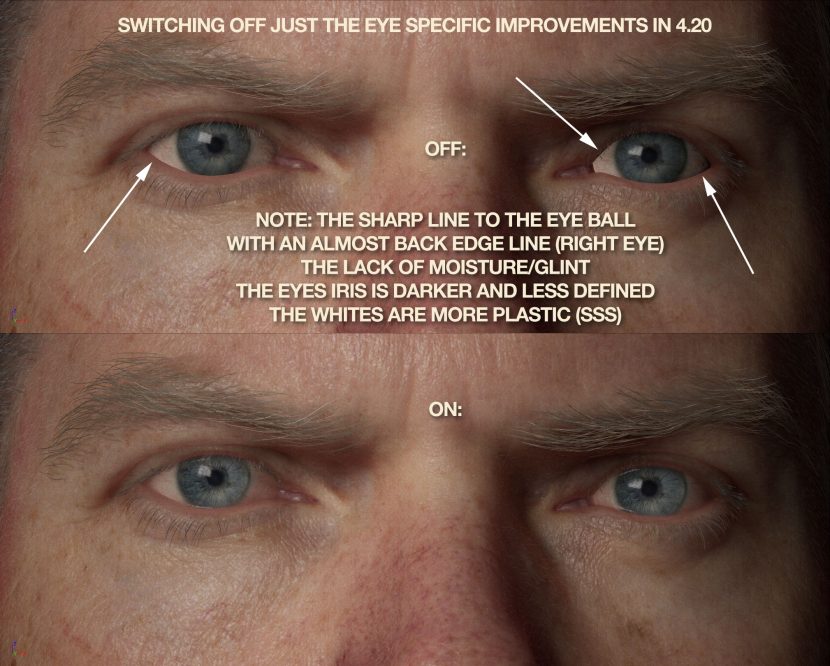

- Added detail for eyes using a separate normal map for the Iris.

All users now have access to the same tools used on Epic’s “Siren” and “Digital Andy Serkis” demos shown at this year’s Game Developers Conference.

As MIKE in 4.20 is not a rigged head, the peach fuzz in the sample MIKE is static rather than articulated. But however subtle it appears on MIKE, on SIREN at GDC (a woman who clearly does not shave), the animated skin was strongly improved by having venus hair on her face.

We spoke to Peter Sumanaseni, Senior Technical Artist at Epic Games, about his work. Prior to joining Epic Games in January last year (2017), Sumanaseni was at PIXAR for 16 years, where he was Lead Lighting Technical Director on films such as Finding Dory, Monsters University, UP and Cars. He was also instrumental in PIXAR’s transition to doing lighting and look development in the Foundry’s Katana. In 2013 he won the VES award for Outstanding Animated Character in an Animated Feature Motion Picture, for Brave.

Sumanaseni explains that boundary bleed color matters on a digital face wherever two surfaces meet at some sort of deep cavity, for example between the mouth and the teeth or around the eye sockets. “We want to scatter light, but with just our standard screen space scatter, it kind of bleeds out too much light from one object to the other,” Sumanaseni explains. “I had an example where we scattered the light around the teeth and the lips, but you ended up seeing this kind of ‘halo’ on the lips. That halo was from the light kind of scattering from the lips to the teeth. But in reality you have this deep cavity, so you want to sort of ‘scatter shadow’ instead of light. We don’t have that concept of scattering a shadow, so we came up with this idea of defining the color that one object bleeds into another, and you could define it as a darker bleed color.” In essence, you are defining the shadow color that is scattered from inside the lips onto the teeth. Similarly, one could define the shadow scattered from inside the eye socket onto the eye.
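The idea can be illustrated with a deliberately simplified 1D screen-space scatter in Python. This is only a sketch of the concept Sumanaseni describes, not UE4's SSS shader: values are grayscale, each subsurface profile is assumed to carry a single scalar bleed tint, and light arriving from a pixel with a different profile is multiplied by the receiving profile's tint.

```python
from typing import Dict, List, Sequence


def scatter_with_boundary_bleed(radiance: Sequence[float],
                                profile: Sequence[int],
                                kernel: Dict[int, float],
                                bleed: Dict[int, float]) -> List[float]:
    """1D screen-space scatter with a per-profile boundary bleed tint.

    radiance: lit value per pixel (grayscale)
    profile:  subsurface profile id per pixel (e.g. 0 = lips, 1 = teeth)
    kernel:   pixel offset -> weight (weights should sum to 1)
    bleed:    profile id -> tint in [0, 1] applied to light arriving
              from pixels that use a *different* profile (hypothetical)
    """
    n = len(radiance)
    out = []
    for i in range(n):
        total = 0.0
        for offset, weight in kernel.items():
            j = min(max(i + offset, 0), n - 1)  # clamp at the image edges
            sample = radiance[j]
            if profile[j] != profile[i]:
                # Light crossing a material boundary is tinted by the
                # receiving profile's bleed color (darker = less halo).
                sample *= bleed[profile[i]]
            total += weight * sample
        out.append(total)
    return out


# Bright lips (profile 0) next to darker teeth (profile 1).
radiance = [1.0, 1.0, 0.2, 0.2]
profile = [0, 0, 1, 1]
kernel = {-1: 0.25, 0: 0.5, 1: 0.25}
neutral = scatter_with_boundary_bleed(radiance, profile, kernel, {0: 1.0, 1: 1.0})
darker = scatter_with_boundary_bleed(radiance, profile, kernel, {0: 1.0, 1: 0.2})
```

With a neutral tint the first tooth pixel rises from 0.2 to 0.4 because lip light bleeds into it; with a tint of 0.2 it stays at 0.2, which is closer to ‘scattering shadow’ across the cavity.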

One of the things that makes the skin of MIKE, and also of Epic’s GDC Siren, look so good is the use of a specular model with the Double Beckman Dual Lobe method. This is now available for anyone to use. “That’s new to our subsurface profile model and it sort of mimics some of the key skin properties. There is a lot of detail in the roughness of skin. We can’t really render with a regular micro face scan and we can’t really capture all that roughness with just normals,” comments Sumanaseni. “With this you get all these fine details, you get these sharper highlights and also the broader highlights from the skin.” Without this, digital skin can look a little more like “plastic or rubber and it just doesn’t look as organic as what the dual lobe look gives us”.
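For readers who want to see what a dual lobe specular looks like mathematically, a common formulation blends two Beckmann microfacet distributions with different roughness values. The Python sketch below uses the standard Beckmann normal distribution function, D(h) = exp(-tan²θ/α²) / (π α² cos⁴θ); the parameter names and the simple linear mix are assumptions, and UE4's shader may remap roughness or weight the lobes differently.

```python
import math


def beckmann_ndf(cos_theta_h: float, alpha: float) -> float:
    """Beckmann normal distribution D(h).

    cos_theta_h: dot(surface normal, half vector)
    alpha:       roughness (RMS microfacet slope)
    """
    if cos_theta_h <= 0.0:
        return 0.0
    c2 = cos_theta_h * cos_theta_h
    tan2 = (1.0 - c2) / c2
    a2 = alpha * alpha
    return math.exp(-tan2 / a2) / (math.pi * a2 * c2 * c2)


def dual_lobe_ndf(cos_theta_h: float, alpha_sharp: float,
                  alpha_broad: float, mix: float) -> float:
    """Blend of a sharp and a broad Beckmann lobe.

    mix is the weight of the broad lobe (hypothetical parameterization).
    """
    return ((1.0 - mix) * beckmann_ndf(cos_theta_h, alpha_sharp)
            + mix * beckmann_ndf(cos_theta_h, alpha_broad))
```

The sharp lobe (small alpha) produces the tight highlights and the broad lobe the softer sheen Sumanaseni describes. Because each Beckmann lobe is normalized over projected area, any convex mix of the two stays normalized, so adding the second lobe reshapes the highlight without adding energy.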

Generally speaking, UE4 has no dynamic global illumination. While the engineers at Epic work on a more general solution for some time down the track, 4.20 provides Short Distance Dynamic Irradiance through Post Process Materials. “In the meantime, there’s a post material that kind of does the same thing. It samples a color from the final image and does some distance and normal calculations to figure out how much light is bouncing around. In that sense it kind of does a similar thing.”
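The post-process approach described here (sample nearby colors from the final image, then weight them by distance and normals) can be sketched as a small gather loop. The following Python is illustrative only: the two cosine terms and the linear distance falloff are assumptions, not the weights used in the MIKE post process material.

```python
import math
from typing import Iterable, Tuple

Vec3 = Tuple[float, float, float]


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def short_range_bounce(p: Vec3, n: Vec3,
                       samples: Iterable[Tuple[Vec3, Vec3, float]],
                       max_dist: float) -> float:
    """Approximate light bounced onto point p (unit normal n) from nearby pixels.

    samples: (position, unit normal, color) for neighbouring pixels, as if
    reconstructed from the depth, normal and final color buffers.
    Color is a single grayscale value to keep the sketch short.
    """
    total, count = 0.0, 0
    for q, nq, color in samples:
        count += 1
        d = (q[0] - p[0], q[1] - p[1], q[2] - p[2])
        dist = math.sqrt(_dot(d, d))
        if dist == 0.0 or dist > max_dist:
            continue  # same point, or too far away to contribute
        w = (d[0] / dist, d[1] / dist, d[2] / dist)
        cos_receiver = _dot(n, w)   # does p face the sample?
        cos_sender = -_dot(nq, w)   # does the sample face p?
        if cos_receiver <= 0.0 or cos_sender <= 0.0:
            continue
        falloff = 1.0 - dist / max_dist  # fade to zero at max_dist
        total += color * cos_receiver * cos_sender * falloff
    return total / count if count else 0.0
```

In the nose example, `p` would be a point on the side of the nose and the samples would come from the lit cheek facing it; light from surfaces that face away, or that lie beyond `max_dist`, is ignored.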

Without short distance dynamic irradiance, faces look considerably less believable. “It really helps with the CG grays, and without it you tend to have a lot of harder edges which are dark and gray. For example, things like the edge of the nose become really dark and contrasty, whereas in reality, light would bounce from your cheek to your nose and fill that in. Without indirect bounce it’s really hard to capture the natural values of the skin,” explains Sumanaseni. In the downloadable MIKE demo, this exists as a post process.

Compared with the previous sample Digital Human, Twinblast, released with UE4.15, there are a lot of little improvements that all add up. These aspects “take you away from it looking like a CGI character that’s rendered in real time, and you start feeling that this could be matching an offline software renderer,” he comments.

The Twinblast example also had hair that was done with cards, and the eyes were much less detailed.

On closer inspection, Sumanaseni feels this new MIKE character could be “a cinematic, a full CG character, instead of just a ‘video game’ character in an older video game. That’s essentially what we’re trying to do”.

For comparison, below we have turned off the 4.20 enhancements to the eyes, to show how much the eyes have improved with the UE 4.20 series of small improvements.

Rectangular Area Lights.

There is now support for Textured Area Lights, accessible from the Modes panel alongside the other light types. They do not cast ray-traced shadows yet; they use more traditional rasterized shadows.

- They act mostly like a Point Light, except that they have a Source Width and a Source Height to control the area emitting light.

- Static and Stationary mobility shadowing works like an area light source, while Movable dynamic shadowing currently works more like a point light, with no area.
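One way to see why a rect light behaves ‘mostly like a Point Light’ is to approximate it as a grid of point lights spread over its Source Width and Source Height. The Python sketch below does exactly that; it is a brute-force illustration, not how UE4 evaluates rect lights, and the one-sided Lambertian emitter and the grid resolution are assumptions.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def _cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def point_irradiance(p: Vec3, n: Vec3, light_pos: Vec3, intensity: float) -> float:
    """Irradiance at p (unit normal n) from an isotropic point light (inverse square)."""
    d = _sub(light_pos, p)
    dist2 = _dot(d, d)
    cos_r = max(_dot(n, d) / math.sqrt(dist2), 0.0)
    return intensity * cos_r / dist2


def rect_irradiance(p: Vec3, n: Vec3, center: Vec3, axis_u: Vec3, axis_v: Vec3,
                    width: float, height: float, intensity: float,
                    steps: int = 16) -> float:
    """Brute-force rect light: average of steps*steps point lights over the rectangle.

    axis_u, axis_v: unit vectors along the width and height; the light emits only
    on the cross(axis_u, axis_v) side, with a cosine (Lambertian) falloff.
    """
    light_n = _cross(axis_u, axis_v)
    total = 0.0
    for i in range(steps):
        for j in range(steps):
            su = (i + 0.5) / steps - 0.5  # cell-centred offset in [-0.5, 0.5]
            sv = (j + 0.5) / steps - 0.5
            q = tuple(c + su * width * u + sv * height * v
                      for c, u, v in zip(center, axis_u, axis_v))
            to_p = _sub(p, q)
            cos_e = max(_dot(light_n, to_p) / math.sqrt(_dot(to_p, to_p)), 0.0)
            total += point_irradiance(p, n, q, intensity) * cos_e
    return total / (steps * steps)
```

With both dimensions at zero, every sample collapses onto the center and a receiver facing the light gets exactly the point light result; growing the rectangle spreads the same intensity over more distant, more oblique samples, which softens the lighting.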

Niagara Effects system (early access)

Niagara is Epic’s next-generation node-based simulation system for real-time VFX. It replaces Cascade, which has been a part of Unreal for almost ten years. It is an advanced particle system, new in 4.20. In UE4 the particle emitters are modified by Modules.

- Via a data interface, it is a lot easier to get data into Niagara than into Cascade, both from inside UE4 and from arbitrary external data. Every aspect of the particles is exposed and can be mapped.

- It is now much better for GPU and CPU simulation.

- Cascade’s ability to add behaviors, even if you are non-technical, is enhanced in Niagara.

- Unlike with Cascade, it is much easier to add new features to Niagara (without coding).

- Niagara can even simulate particles that only feed or affect other particle simulations; these don’t need to be rendered.

- Niagara promotes modular effects that are good for reuse and sharing.

- More state-driven transitions on particles.

- All of Niagara’s modules have been updated or rewritten to support commonly used behaviors in building effects.

“I think it is going to change things for our games customers, and awesome for our new entertainment customers,” commented Kim Libreri, CTO of Epic Games.

What will be of great interest to the effects community is the Houdini support, which allows offline simulation in Houdini that can then be exported in a number of ways, such as CSV format, and brought into Niagara. Houdini calculates all the split points and impact points of, say, a destruction sequence, and all that information (velocities, normals etc.) can then be accessed in UE4. Some of this data could be imported from Houdini before, but the extent of the data is now much more complete. For example, if an object shatters in Houdini, the points where the parts hit the floor and all the split points on the object can be exported, along with vectors for every piece of the falling object, say every ten frames. These data points can then trigger particle emitters in UE4. The Houdini plugin ships with 4.20.
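As a rough illustration of the data flow, the Python sketch below reads a small destruction export and picks out the events that should trigger emitters on a given frame. The column layout (`frame`, `piece`, `event`, position and velocity columns) and the sample values are hypothetical; the actual Houdini export and the Niagara data interface define their own formats.

```python
import csv
import io
from collections import defaultdict
from typing import Dict, List

# Hypothetical export: one row per split or impact event.
SAMPLE_CSV = """frame,piece,event,px,py,pz,vx,vy,vz
10,0,split,0.0,0.0,2.0,0.5,0.0,-1.0
10,1,split,0.1,0.0,2.0,-0.5,0.0,-1.0
24,0,impact,0.4,0.0,0.0,0.2,0.0,0.0
24,1,impact,-0.3,0.0,0.0,-0.1,0.0,0.0
30,2,impact,0.0,0.2,0.0,0.0,0.0,0.0
"""


def load_events(text: str) -> Dict[int, List[dict]]:
    """Group exported rows by frame so playback can look them up quickly."""
    by_frame: Dict[int, List[dict]] = defaultdict(list)
    for row in csv.DictReader(io.StringIO(text)):
        by_frame[int(row["frame"])].append({
            "piece": int(row["piece"]),
            "event": row["event"],
            "position": (float(row["px"]), float(row["py"]), float(row["pz"])),
            "velocity": (float(row["vx"]), float(row["vy"]), float(row["vz"])),
        })
    return by_frame


def spawn_requests(by_frame: Dict[int, List[dict]], frame: int, kind: str) -> List[dict]:
    """Events of one kind on one frame, e.g. impacts that should trigger dust."""
    return [e for e in by_frame.get(frame, []) if e["event"] == kind]
```

During playback, each frame's `spawn_requests(events, frame, "impact")` would supply the positions and velocities used to spawn debris or dust at the points Houdini calculated.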

Wyeth Johnson, Epic Games’s Lead Technical Artist from the Special Projects team, explained the difference between Cascade and Niagara. “Cascade is entirely fixed function, so if you want a new behavior, you basically have to get into the C++ code and fundamentally change those behaviors at the ground level.” The second issue was performance: adding to a simulation in Cascade would often “bloat the entire simulation. The simulation got slower as you add complexity,” he adds. One of Epic’s primary aims in this new version was to unwind the effects pipeline and take the need to program (to maintain performance) out of the equation. Content creators can now create functionality directly, without needing to get into the source code, and still get simulations with impressive performance.
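The contrast Johnson draws between fixed-function code and composable behaviors can be sketched in a few lines of Python. This is a conceptual analogy, not Niagara's API: each hypothetical ‘module’ is just a function applied to every particle in stack order, so a new behavior is added by appending a module rather than by changing the emitter itself.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Particle:
    pos: List[float]
    vel: List[float]
    age: float = 0.0
    alive: bool = True


# A "module" here is any function that updates one particle for a time step.
Module = Callable[[Particle, float], None]


def gravity(g: float = -9.8) -> Module:
    def apply(p: Particle, dt: float) -> None:
        p.vel[2] += g * dt  # accelerate along z
    return apply


def integrate(p: Particle, dt: float) -> None:
    p.pos = [x + v * dt for x, v in zip(p.pos, p.vel)]
    p.age += dt


def kill_after(lifetime: float) -> Module:
    def apply(p: Particle, dt: float) -> None:
        if p.age >= lifetime:
            p.alive = False
    return apply


@dataclass
class Emitter:
    update_stack: List[Module] = field(default_factory=list)
    particles: List[Particle] = field(default_factory=list)

    def tick(self, dt: float) -> None:
        # Run every module, in stack order, on every particle.
        for p in self.particles:
            for module in self.update_stack:
                module(p, dt)
        # Remove particles that a module has marked dead.
        self.particles = [p for p in self.particles if p.alive]
```

For example, `Emitter(update_stack=[gravity(), integrate, kill_after(1.0)], particles=[Particle([0.0, 0.0, 0.0], [1.0, 0.0, 0.0])])` drops its particle after two half-second ticks. In Niagara itself, modules are authored as node graphs and compiled for CPU or GPU simulation, which is where the performance Johnson describes comes from; the sketch only shows the composition idea.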

VR/AR Updates:

With this release of Unreal Engine, the Unified Unreal Augmented Reality Framework provides a rich, unified framework for building Augmented Reality (AR) apps for both Apple and Google handheld platforms, with both platforms targeted from a single code path. This release also includes a host of bug fixes aimed solely at AR and VR development, and it is now compatible with ARKit 2.0.

We have a separate fxguide story focused just on ARKit and 4.20.

The Unified Unreal AR Framework includes functions supporting:

- Alignment,

- Light Estimation,

- Pinning,

- Session State,

- Trace Results, and

- Face Tracking

Also new is the Blueprint template HandheldAR, which provides a complete example project demonstrating the new functionality.

There is also native mixed reality capture for VR, for simple green screen mixed reality composites; you can do more with the Composure plugin, which was released with v4.17.

Animation/Physics Updates:

ProxyLOD Improvements.

The new proxy LOD tool can be used in a Level of Detail (LOD) system. This tool lets you replace a group of meshes with a single simplified mesh requiring only a single draw call. It’s most useful when viewing a detailed area such as a city or village from afar.

ProxyLOD has been critical to allowing the Switch and mobile versions of Fortnite to run as smoothly as they do. “It is very useful and really good,… it is the key reason that Fortnite can run on the iPhone or mobile,” states Libreri.

The system is a built-in replacement for the licensed Simplygon. It was shown as an early look in UE4.19. Prior to that, UE had an LOD system that took a single mesh and produced a lower poly version. ProxyLOD instead takes a large number of meshes and builds a single mesh to represent them, with baked-down materials, simplifying away faces that aren’t seen, etc. Typically, in an open world game, if you had a building made up of hundreds of meshes, ProxyLOD would simplify it down to one small mesh that is used in the distance.

For example, for buildings you can remove all the internal geometry seen through windows. This can dramatically reduce an asset’s size.
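The draw-call saving comes from replacing many meshes with one. The Python sketch below shows only the simplest part of that, concatenating several meshes into a single vertex and index buffer, plus a crude, hypothetical `hidden_box` filter standing in for removing interior geometry. The real ProxyLOD tool goes much further, rebuilding the surface and baking down materials.

```python
from typing import List, Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]
Tri = Tuple[int, int, int]
Box = Tuple[Vec3, Vec3]  # (min corner, max corner)


def _inside(p: Vec3, box: Box) -> bool:
    lo, hi = box
    return all(l < c < h for c, l, h in zip(p, lo, hi))


def merge_meshes(meshes: Sequence[Tuple[List[Vec3], List[Tri], Vec3]],
                 hidden_box: Optional[Box] = None) -> Tuple[List[Vec3], List[Tri]]:
    """Merge (vertices, triangles, offset) meshes into one vertex/index buffer.

    If hidden_box is given, triangles whose centroid lies strictly inside it
    are dropped (a crude stand-in for culling unseen interior faces).
    Vertices are kept as-is, so culled triangles may leave unused vertices.
    """
    verts: List[Vec3] = []
    tris: List[Tri] = []
    for vertices, triangles, offset in meshes:
        base = len(verts)  # indices of this mesh start after earlier meshes
        placed = [tuple(c + o for c, o in zip(v, offset)) for v in vertices]
        verts.extend(placed)
        for a, b, c in triangles:
            centroid = tuple(sum(axis) / 3.0 for axis in zip(placed[a], placed[b], placed[c]))
            if hidden_box is not None and _inside(centroid, hidden_box):
                continue
            tris.append((a + base, b + base, c + base))
    return verts, tris
```

Drawing the merged `tris` list in one call replaces one draw call per source mesh, which is where the benefit for distant buildings comes from.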

- Shared SkeletalMesh LOD Setting. Implemented SkeletalMesh LOD Setting Asset that can be shared between SkeletalMeshes

- Streaming GeomCache and Improved Alembic Importer. Geometry cache has been completely overhauled.

- The Alembic importer has been changed to iteratively import frames rather than importing all frames in bulk.

- Scripted Extensions for Actor and Content Browser Context Menu. The context menus for actors and content browser items can now be extended.

- RigidBody Anim Node Improvements.

- Clothing Tapered Capsule Collision. Added support for tapered capsules to physics assets.

- Copy Vertex Colors To Clothing Param. Added tool to copy SkeletalMesh vertex colors to any selected clothing parameter mask

Virtual Production Updates:

- There is now Shotgun integration, “if you are a studio that uses Shotgun you can now have network communications between Shotgun and the Unreal Engine, that helps with asset management, along with notes etc”, explained Libreri.

- There is now video I/O support: HD SDI input can be genlocked into the engine using (initially) AJA video cards. Libreri adds that “the Engine understands timecode now, so one can sequence events, and slave to video. This means if you are building, say, a small video production green screen stage, you can time everything perfectly.”

- The engine can record the audio output or any submix’s output to a .wav file or a soundwave asset.

- Steam Authentication has been added.

- Final Cut Pro 7 XML Import/Export. Sequencer movie scene data can now be exported to and imported from the Final Cut Pro 7 XML format. This can be used to roundtrip data to Adobe Premiere Pro and other editing software that supports FCP 7 XML.

- Sequencer Updates:

- Frame Accuracy. Sequencer now stores all internal time data as integers, allowing for robust support of frame-accuracy in situations where this is a necessity.

- Media Track. Sequencer has a new track for playing media sources. It is like the audio track, but for movies.

- Sequence Recorder Improvements and Track Improvements. Tracks, Actors and Folders can now be reordered in Sequencer and UMG animations.
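Why store time as integers? Floating-point seconds accumulate rounding error (in Python, `sum([0.1] * 10)` is `0.9999999999999999`, not `1.0`), while an integer frame number paired with an exact rational frame rate does not. The Python sketch below uses `fractions.Fraction` for the rate and also converts frames to non-drop-frame timecode of the kind used when slaving to video; the helper names are our own illustration, not Sequencer's API, and drop-frame timecode is not handled.

```python
import math
from fractions import Fraction

NTSC_30 = Fraction(30000, 1001)  # 29.97 fps, stored exactly


def frame_to_seconds(frame: int, rate: Fraction) -> Fraction:
    """Exact time of an integer frame at a rational frame rate."""
    return Fraction(frame) / rate


def seconds_to_frame(seconds: Fraction, rate: Fraction) -> int:
    """Frame containing the given exact time (floor, so negatives work too)."""
    return math.floor(seconds * rate)


def frame_to_timecode(frame: int, fps: int) -> str:
    """Non-drop-frame HH:MM:SS:FF timecode for an integer frame rate."""
    ff = frame % fps
    total_seconds = frame // fps
    hh, rem = divmod(total_seconds, 3600)
    mm, ss = divmod(rem, 60)
    return f"{hh:02d}:{mm:02d}:{ss:02d}:{ff:02d}"
```

Because every conversion is exact, `seconds_to_frame(frame_to_seconds(f, rate), rate)` always returns `f`, so cuts and events land on the same frame no matter how long the sequence runs.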

Epic is one of the most successful games companies in the world right now. Fortnite is a mega hit, and Epic has been working hard on engine improvements to help Fortnite, then bringing those improvements into the main engine for all to use. This is especially the case for mobile UE4 environments.

Mobile Updates:

- A set of the mobile improvements and optimizations made for Fortnite on iOS and Android

- Improved Android Debugging

- Mobile Landscape Improvements

- RHI Thread on Android

- Occlusion Queries on Mobile

- Software Occlusion Culling

- Platform Material Stats

- Improved Mobile Specular Lighting Model