Mars methodology

Stereo, GoPros – filming challenges

When your red planet is just too blue

Seeing through the visor VFX

Into the storm

Life on Hermes

Snagging the Martian

Monitoring the situation

Earthly effects

On Ridley Scott’s 2012 space adventure, Prometheus, the visual effects team had a post schedule of 34 weeks. By contrast, the director’s newest film with a space theme, The Martian, had only 24 weeks for post after the film’s original release date was brought forward. This tight turnaround, in a native stereo movie that would call for complicated ‘science-fact’ Mars surface scenes of astronaut Mark Watney (Matt Damon) stranded on the planet, as well as high action in-space sequences, required some innovative thinking from visual effects supervisor Richard Stammers.

Indeed, Stammers, who had also worked with Scott on Prometheus, would adopt several techniques on-set and in post to get through the effects work efficiently but without compromising the results. These included relying on tablet AR tools and techvis and simulcam solutions for virtual production during the shoot, an approach Scott had not utilized before. GoPro footage, used to capture close-in action that would normally be hard to achieve, would also form key final shots in the film alongside the primary native stereo RED camera footage. Principal vendor MPC developed a special compositing tool to quickly deal with the blue skies of Jordan and turn them into the appropriate Mars landscapes. And Framestore capitalized on its Gravity experience to deliver photoreal CG spacecraft and characters.

In this article, brought together from interviews by Mike Seymour and Ian Failes, fxguide delves into the effects work with the key players, including Stammers, who was the overall visual effects supervisor and was aided on the production by VFX shoot supervisor Matt Sloan. MPC handled Mars surface shots, Framestore worked on the space sequences and The Senate was responsible for NASA scenes and earth-bound shots. Previs was delivered by Argon, with The Third Floor contributing techvis and simulcam solutions. Territory Studio produced hundreds of on-set monitor animations for playback. ILM, Atomic Arts and Milk VFX also came on board to complete the film’s visual effects.

Above: Mike Seymour takes us through MPC’s Mars effects in this new DesignFX video with exclusive breakdowns, thanks to our partners at WIRED.

Mars methodology

Watney’s life on Mars would be captured via a clear shooting methodology devised by the filmmakers. Most scenes – predominantly Watney’s ‘Hab’ (habitat) base – were filmed at the massive soundstages at Korda Studios in Budapest against greenscreen. Some shots were acquired in the Wadi Rum desert in Jordan – a place deemed incredibly well-suited to the arid Mars environment. The location was also the source of additional VFX plate photography and reference. That would leave MPC with the challenge of matching Jordan footage with studio footage and crafting close-up and wide Mars exteriors.

Stammers considered early on just how the filmmakers would depict the correct ‘color’ of Mars. “There’s still a lot of debate about this,” he says. “The images that NASA has produced have more of a neutral color balance on them, that is, they’re in Earth color tones. And there’s also been different cameras used to take Mars photos with different lenses and treatments, so you don’t really know for sure. So what I did was analyze all these and at the end we always knew we were going to be shooting in Jordan which of course already has a Mars-like appearance. So we took all the images we liked, color balanced them all to be in the same ballpark as each other, and put that in the same color tone or white balance as the scout images of Wadi Rum.”

Added to that were the rock formations, craters, volcanoes, distant dust storms and even dust devils, all based on real phenomena on Mars. “Mars isn’t just this amazingly beautiful place, it’s also dangerous,” notes Stammers. “Every step of the way there’s always something challenging Watney – anything could go wrong at any point, which made Mars a real character in the film.”

Shooting began in Budapest on the greenscreen stage – a proposition that immediately posed the issue of light source. “Dariusz Wolski our DP wanted to go with just one single light source,” recounts Stammers, “just to keep the shadow direction consistent, and just have a singular shadow. But with the huge size of the space – even with a massive bright light source you do get an inconsistency in the exposure – if you were really close to the light the ground got really bright, further off into the corner got really dark. That became the most complex part of balancing our plates to any of the location work.”

In the final film, scenes regularly intercut between the greenscreen stage and Jordan plates – a scene might, say, finish on a shot of the rover driving away that was filmed back on location in Jordan. “So there was a really big emphasis on making sure our greenscreen work really matched,” says Stammers. “Because we were doing that all upfront, one of the things I made sure we did was know what our lighting would be in terms of time of day. During our initial scout, before we started shooting, when we decided on location of the Hab site, it was really important that Dariusz and I agreed on what the time of day was. We went scouting the location together at different times of day, and agreed there was a sweet spot, which basically meant the sun was low in the sky and we had nice beautiful warm light, and the angle of the sun could fit with the angle of the sun in our stage. It meant we couldn’t pick a time of day where the sun was too high in the sky because we couldn’t get our lights higher than the stage ceiling.”

The filmmakers found that 8.30am was a beautiful time of day to shoot, so a full 360 degree texture shoot of the whole environment was carried out for that time. Half-hour intervals for the rest of the day were also captured, but the hero environment for the greenscreen extensions was one single time of day, which matched what could be achieved in the stage space later. “We pre-built that environment as best we could,” adds Stammers, “so that when we started shooting on greenscreen we had that available as a full 360 panoramic to view so we could look at the quality of the light, and also as a framing reference.”

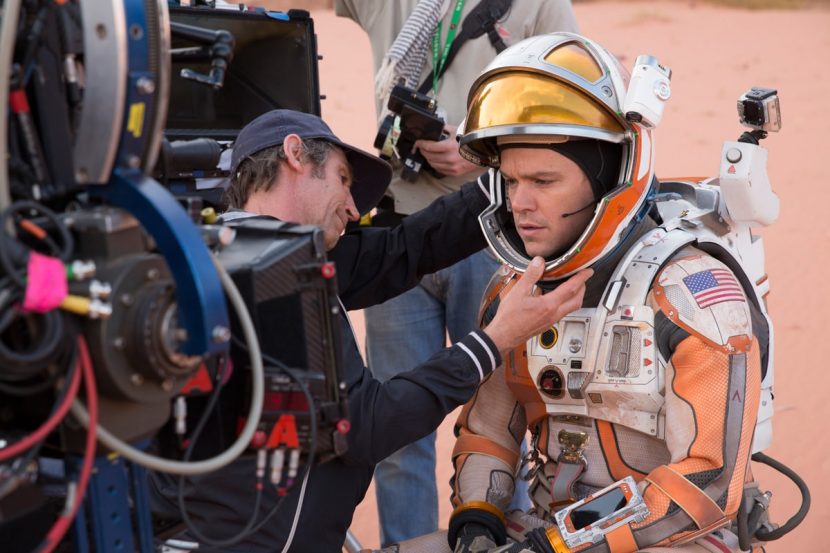

On the greenscreen stage in Budapest.

Having the environment pre-built also enabled the use of some augmented reality (AR) tech on phones and tablets for quick on-set previews. “We could use the accelerometers in the devices to look around at any point on the set,” describes Stammers. “I could go up to Ridley with an iPad and say, beyond the greenscreen this is where this mountain is, and if you panned over there you’d see that. We could pan to the exact positions and it had a really good realtime preview of what everyone expected to see. And then we might say, well what does that look like on a 50mm lens? We built a lens scale grid into the floor of our 360 panoramic, so I could just point the iPad down at the ground and do a pinch zoom into a lens scale grid that would line up to a 50 mm lens and pan back up.”
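The lens scale grid Stammers describes relies on the relationship between focal length and field of view. The production's actual tool is not documented here, so the following is only a minimal sketch of that relationship for an ideal rectilinear lens. The sensor width of 25.6mm is an assumed placeholder value, and the function names are hypothetical.

```python
import math

# Assumed sensor width for illustration only; not a figure from the production.
SENSOR_WIDTH_MM = 25.6


def horizontal_fov_deg(focal_mm: float, sensor_width_mm: float) -> float:
    """Horizontal field of view of an ideal rectilinear lens, in degrees."""
    return math.degrees(2.0 * math.atan(sensor_width_mm / (2.0 * focal_mm)))


def zoom_factor(from_focal_mm: float, to_focal_mm: float) -> float:
    """Image magnification when switching from one focal length to another."""
    return to_focal_mm / from_focal_mm


if __name__ == "__main__":
    for focal in (25, 35, 50, 85):
        fov = horizontal_fov_deg(focal, SENSOR_WIDTH_MM)
        print(f"{focal}mm lens: {fov:.1f} degrees horizontal")
    # Pinch-zooming a panorama previewed at 25mm to match a 50mm lens
    print("zoom 25mm -> 50mm:", zoom_factor(25, 50))
```

Under these assumptions, doubling the focal length doubles the on-screen magnification, which is why a fixed grid of lens marks can be read off with a pinch zoom.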

The AR tech was a mix of off-the-shelf tools that made use of pre-built domes of the environments. “MPC’s supe Anders Langlands also wrote a version of the software where he could move the camera position to anywhere in the set and we could move the set props around,” says Stammers. “So, for example, we had those solar panels and the rover and we could move them to match the physical props on the stage and key that on the greenscreen stage and see that as a live preview of a key.”

Plates from Jordan, including helicopter aerials, were used as reference for both generating CG geometry and as real elements to be incorporated into final shots directly or via photogrammetry. “We sent people out there before the shoot happened and during the shoot,” notes MPC visual effects supervisor Tim Ledbury, who shared duties with Langlands. “We were shooting hundreds of rocks for photogrammetry, scanning was done by the on-set data guys, which gave us a big library of rocks and photographs. We had a few favorite rocks we put in, and there were some sets of mountains and volcanoes. We had some CG craters which were partly artistic and partly based on Mars.”

“Many of the plates we shot in Jordan were replaced in some way, except the very middle strip that were the amazing mountains,” adds Stammers. “In the background we’d add additional mountains that were even further scaled. Most notable is in the area of the Hab site where the main mission is taking place, there’s one really distant volcano which is a nod to Olympus Mons, Mars’ largest volcano at 59,000 feet. Ridley wanted something epic in the background to dwarf even the big mountains that are there at Wadi Rum. Then we added other ones that were half that size but much bigger than the ones present in our plate photography.”

Interestingly, even plates filmed in Jordan required extra augmentation since a large amount of bush and scrub was present. “You couldn’t stand anywhere without seeing a bush,” says Stammers. “Even our main site, even though there wasn’t too much vegetation, you had to replace the ground plane. That gave us the opportunity to replace things with rocks and craters.”

Ledbury suggests also that the benefit of having a distinctive shooting location such as Jordan for the Mars scenes meant that there was a great starting point for the VFX work. “There’s a shot I love where Watney’s going across a dried river bed and there are these dust devils twisting around – that shot looks beautiful, but the starting point was beautiful. Working with real location footage is almost a rare thing these days. Some of the comp’ers had been working on all CG shots for years it seems and they were glad to get their hands on some high quality cinematography.”

Physical stunts

At one point a section of the Hab blows up and flips in the air, destroying Watney’s beloved crop of potato plants. “Special effects supervisor Neil Corbould did a great practical flip for that,” says Stammers. “They built a really cool catapult underneath the tubular section of the airlock that flipped it up into the air and sent it 60 feet across the stage, together with all the gas escaping and debris. We enhanced that with the environment, additional smoke and escaping gas sim.”

As if MPC did not have enough complicated work already for the Mars surface (and a required fast turnaround), the studio was also called upon to deal with a subtle ‘lower gravity’ effect. “Anytime you see Matt Damon walking on Mars,” recounts Stammers, “Ridley wanted to give a slightly floatier feeling to the walk. This came up on day one of photography. Now, the ideal frame rate for this would be 32 or 36 fps so Ridley had Matt walk faster to compensate for that. But where it shot us in the foot was we couldn’t shoot 32 or 36 in stereo – we couldn’t sync the cameras together. So everything was shot 48 and we had a massive amount of stereo re-timing to do which was frankly incredibly painful and was a real challenge in our schedule.”

Ultimately, MPC realized around 160 of these stereo re-times, which it did before creating any of the effects. “What swung the decision to do it upfront was the many layers of dust we had to put in,” says Stammers. “If there was another layer of parallax we were worried it would cause even more confusion and work. So the matchmoves for these shots were done at 48 fps original speed and the cameras were re-timed to match the re-timed plate once that happened.”
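To see why re-timing 48 fps footage to a 32 or 36 fps look is harder than simply dropping frames, it helps to work out which source frames each output frame would sample. This is a small arithmetic sketch, not MPC's re-timing process; the function names are hypothetical and 24 fps playback is assumed.

```python
from fractions import Fraction


def source_positions(num_output_frames, shot_fps=48, target_fps=32):
    """Source-frame position sampled by each output frame.

    Footage shot at shot_fps is resampled so it looks as if it had been
    shot at target_fps, then played back at the normal projection rate.
    """
    step = Fraction(shot_fps, target_fps)  # source frames per output frame
    return [i * step for i in range(num_output_frames)]


def needs_interpolation(position):
    """True when the output frame falls between two captured frames."""
    return position.denominator != 1


def apparent_speed(target_fps, playback_fps=24):
    """Speed of the action on screen relative to real time."""
    return Fraction(playback_fps, target_fps)


if __name__ == "__main__":
    for target in (24, 32, 36):
        positions = source_positions(6, 48, target)
        print(target, "fps look:", [str(p) for p in positions],
              "speed", apparent_speed(target))
```

A 24 fps look from 48 fps only ever lands on whole source frames, whereas a 32 fps look lands halfway between captured frames on every second output frame, and those in-between frames have to be synthesized for both eyes.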

RED stereo and GoPros – filming challenges

DOP Dariusz Wolski filmed The Martian on RED DRAGONs in 5K, shot in native stereo on 3Ality rigs (the film finished effectively in 2K). “We had five stereo rigs on set,” says Stammers. “We shot with three on most setups with a spare or handheld or a Steadicam on standby. We also had second unit running which had one stereo camera, one mono camera. And our helicopter unit in Jordan at the end of the shoot was also in mono, so we had some conversion work to do.”

Central image processing and management systems for the film were delivered by Fluent Image, led by Jon Ferguy and Kate Morrison-Lyons. “We worked with the production to choose debayering and image scaling algorithms, and designing the colour pipeline and image naming schemes for the feature,” outlines Ferguy. “The shoot took us to Budapest and then on to Jordan where we produced dailies and archived camera-original material.”

Back in London, Fluent Image created the OpenEXR visual effects and stereo turnovers with VFX editorial that would go out to vendors. “Submissions from vendors were delivered back through our systems, went through an automated data QC and were automatically transferred into 2 review suites ready for the VFX and Stereo teams to review,” says Ferguy. “Towards the end of post, we generated DI pre-conform packages from the Avid EDLs provided by editorial. These are a combination of regenerated left and right EDLs with additional colour and stereo metadata embedded, and OpenEXR media tuned for realtime playback in DI.”

B-roll from Jordan.

In addition to the RED footage, an interesting challenge was the GoPro cameras and their resulting images. These cameras served both as props worn by the characters and as a source of actual final footage (captured in mono) that would later need to be incorporated into the film. Incorporating GoPros into the shoot allowed for some in-close views and extremely convenient shooting scenarios. “I have to say,” admits Stammers, however, “that I was very much in denial about the thought of having to use GoPros going in. The plan was that the GoPros would feature as practical props that were filming like security camera footage relayed onto a monitor that would be captured by the REDs. But as we did in Prometheus where we had shoulder mounted cameras on the astronauts, those featured in the movie. We did some designs for graphic overlays, and gave them stereo separation, so we could have a mono background slightly split to give it positive depth with the overlay in negative space.”

“During setup for principal photography,” adds Ferguy, “it looked like there might not be a large amount of GoPro footage. However the feedback from editorial about how well the initial material was working meant we had to reevaluate that very quickly – within a few days the CDL based on-set grading workflow we were using for the Red Dragon was adapted for use with GoPro as well. We ended up with around 135 hours of GoPro footage across 16 GoPro cameras. One thing that was made clear to us early on was that the GoPro material was always intended to have its own look in comparison to the RED material – that made the decisions on colour workflow easier – we were not trying to make the GoPro material look exactly like the RED material all the way through the pipeline – just maintain as high quality an image as possible through to DI where the final look would be set.”

GoPro stats from Fluent Image

– 11.7m frames of GoPro / 135 hours

– 25.1m frames of RED / 290 hours (counting stereo frames only once)

– 1,828 GoPro clips

– 12,024 RED Clips (stereo clips counted once)

The 8bit GoPro footage had to be handled very carefully. “We knew that a conversion from 8bit YCbCr to 8bit RGB could easily introduce additional quantisation errors,” says Ferguy, “causing banding on some of the lovely shallow gradients in the Wadi Rum skies. We decided to adapt our in-house image processing tools to handle this part of the conversion – going straight to 16bit EXR and avoiding any low bit depth intermediate stage. Using our own tools meant that we were also able to extract the metadata tucked away in the GoPro headers – not just timecode, but also all the Protune settings for that clip, field of view and camera serial number – this meant we were able to do the same level of tracking we do with cameras like the RED DRAGON – this additional information is incredibly useful when trying to track down an issue to a particular setup change or camera body.”
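The quantisation concern Ferguy raises can be illustrated with a few lines of arithmetic. The sketch below is not Fluent Image's tooling: it assumes full-range BT.709 coefficients (the actual GoPro encoding matrix is not stated in the article), uses made-up sky values, and emulates a 16bit half-float EXR channel with Python's `struct` half-precision format.

```python
import struct


def ycbcr_to_rgb(y, cb, cr):
    """8-bit YCbCr codes -> float RGB, assuming full-range BT.709."""
    yf = y / 255.0
    cbf = (cb - 128) / 255.0
    crf = (cr - 128) / 255.0
    r = yf + 1.5748 * crf
    g = yf - 0.1873 * cbf - 0.4681 * crf
    b = yf + 1.8556 * cbf
    return (r, g, b)


def to_8bit(value):
    """Round to the nearest 8-bit code, returned on a 0..1 scale."""
    clamped = min(max(value, 0.0), 1.0)
    return round(clamped * 255) / 255.0


def to_half(value):
    """Round to 16-bit half float, as stored in a half-float EXR channel."""
    return struct.unpack("e", struct.pack("e", value))[0]


def max_error(store):
    """Worst-case storage error over a hypothetical shallow sky gradient."""
    worst = 0.0
    for y in range(150, 201):  # slowly brightening, slightly blue sky
        for channel in ycbcr_to_rgb(y, cb=140, cr=120):
            worst = max(worst, abs(store(channel) - channel))
    return worst


if __name__ == "__main__":
    print("max error via 8-bit RGB :", max_error(to_8bit))
    print("max error via half float:", max_error(to_half))
```

Under these assumptions the 8-bit intermediate adds a rounding error several times larger than the half-float path, which is the kind of extra error that can show up as banding in smooth gradients.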

MPC’s Ledbury notes two particular sequences captured on GoPros that required special attention. “One was for one of the journeys Watney takes where there’s a shot looking right into the sun. That was interesting in terms of highlight clipping and we had to re-master that to get a better range in there. We had to luma key the highlights and put as much dynamic range into it as possible. The shot I’m most proud of as a GoPro shot was where he was setting up the Pathfinder. We’re right on Watney’s shoulder and he’s looking around and we had to put on a CG visor onto the helmet. The distortion on the GoPro is massive and also the quality we had to inject back into it turned out really well, and you would think they may have just stuck a RED camera on his shoulder for that shot.”

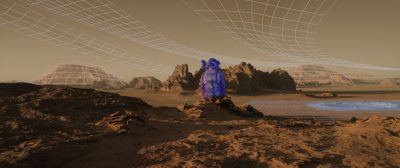

When your red planet is just too blue

Having solved the complex CG builds for Mars, having incorporated the desired aspects of Jordan into the shots, and having dealt with GoPro and stereo issues, MPC still had another mountain to climb – Jordan plate photography that featured rich blue skies. That would normally have involved extensive roto work, but instead the studio devised a NUKE tool dubbed EarthToMars or ETM to effectively remove the blue. “My 2D supe called it ‘the most awesome de-spill in the world,’” laughs Ledbury, referring to compositing supervisor Lev Kolobov who devised ETM. “It seemed like a fairly simple thing just to get rid of the blue, but it kills all of the other colors. So ETM was a way of picking out the depth hazing and the blue and bounce light and being able to control that.”

Kolobov came up with ETM after realizing that controlling the blue would certainly be possible but would be incredibly time consuming. “All of the conventional tools like hue correct, keying or blue spill didn’t give us enough control,” he says, “and didn’t perform well around the edges, and were not easy to implement on a large amount of shots without a lot of additional rotoscoping or tweaking.”

Filming in Jordan.

“Most blue spill tools we tested were based on ‘IF’ statements,” adds Kolobov. “For example, ‘IF specific pixel has bigger value for Blue than Red and Green,’ it meant it needed to be changed. The problem with these tools was the edges and getting a nice fall off, so we had to write a special tool for NUKE. I came up with an idea for how it could work, and MPC senior 2D lead Alex Cernogorods then created the code and suggested how we would control it.”

So how does ETM work? Kolobov, again: “The tool detects any traces of blue and using a proprietary algorithm removes it. We are able to control what color it will change to and how much it changes. ETM also exports an alpha channel of the change for additional colour correction. It does a much better job than the current standard conventional tools and saved us vast amounts of rotoscoping time which would have otherwise been necessary. The tool produced such a great result that we also built our own green spill tool based on the same algorithm and use it for all our greenscreen shots on The Martian.”
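ETM itself is proprietary and its algorithm is not described beyond the quotes above, so the following is not a reconstruction of it. It is only a generic sketch contrasting the ‘IF’ style test Kolobov mentions with a weighted blend that has a gradual fall-off and also returns the amount of change as an alpha value. All names, the `softness` parameter and the target color are hypothetical.

```python
def blue_excess(r, g, b):
    """How far blue rises above the larger of red and green (0 if not at all)."""
    return max(0.0, b - max(r, g))


def hard_if_shift(pixel, target):
    """'IF' style test: any blue-dominant pixel is replaced outright."""
    r, g, b = pixel
    if b > r and b > g:
        return target, 1.0
    return pixel, 0.0


def soft_shift(pixel, target, softness=0.25):
    """Blend toward the target in proportion to the blue excess.

    Returns the new pixel and the blend weight, which can serve as an
    alpha of the change for later colour correction.
    """
    r, g, b = pixel
    weight = min(1.0, blue_excess(r, g, b) / softness)
    blended = tuple((1.0 - weight) * c + weight * t for c, t in zip(pixel, target))
    return blended, weight


if __name__ == "__main__":
    butterscotch = (0.80, 0.60, 0.40)   # hypothetical Mars sky tint
    edge_pixel = (0.50, 0.50, 0.51)     # only marginally blue
    print("hard:", hard_if_shift(edge_pixel, butterscotch))
    print("soft:", soft_shift(edge_pixel, butterscotch))
```

The marginally blue edge pixel flips completely under the hard test but moves only a few percent under the weighted version, which illustrates the edge and fall-off problem described in the quotes.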

ETM then became part of several steps, including DMP and effects work to add clouds, streaks and other atmosphere and color, in the process of dealing with the Martian skies through to DI. “All of the plates shot in Jordan were first balance graded and then using our ETM tool, the Martian skies were created,” describes Kolobov. “We used ETM on almost every plate from Jordan before sending to the client for DI testing. Once the client’s notes were addressed and the sky’s look was approved, the look for each individual shot was released and picked up by the compositors working on the shots. This workflow ensured that the look of the skies was consistent, created quickly and could then be approved by the client as early as possible.”

Seeing through the visor VFX

Films featuring characters with visors or helmets often, ahem, face the problem of unwanted crew and environment reflections, or not being able to clearly see your lead cast members. The Martian had that issue too, requiring MPC to carry out extensive Mars-based visor VFX.

“Given we shot a lot of the surface scenes in a studio,” says Stammers, “the visors were inherently reflecting our greenscreens and individual lights, bounce curtains and black silks. It was basically a mirror to the whole crew and stage. We had very few shots where we actually kept the visor in. Even in Jordan we took the visor out because we were seeing reflections of the crew.”

Prop visors served as incredible reference in restoring the glass look, but an added complexity came from the fact that the actions of the astronauts themselves – the movement of their arms and feet, for example – needed to be reflected in the helmets. “The crew are doing experiments and banging things with hammers and twisting in drills, say, and each of the visor replacements had to have a reflection of what they were doing because they were looking down,” explains Stammers. “So we had to do a full digi-double roto-animation of their performance to get a CG reflection of their arms and legs and whatever they were doing reflected in the visor.”

Watch a scene from the film.

Environments and buildings also needed to be reflected. “We had built all the environments for the Hab so we had all of that to use, which was pretty good resolution,” notes Ledbury. “So we’d combine those with the roto-anim’d performances, sometimes from witness cameras. When you first stick the reflections on as they should be, it does cover a lot of the faces up, so we had to do a lot of pulling back the reflection or deciding where the reflections would come – mainly avoiding the eyes.”

Since the actor’s head had to be appropriately positioned inside the transparent helmet, MPC rendered deep color AOVs of the visors for deep compositing in NUKE. Says Stammers: “In a profile shot you’ve got the back part of the visor behind their face, the front part of the visor in front of their face and so we had to do that with extensive deep renders – about 14 passes for each visor.”
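The profile-shot problem Stammers describes, with glass both in front of and behind the face, is what depth-sorted compositing addresses. The sketch below is a simplified, single-pixel illustration of that idea and not MPC's or NUKE's implementation; the sample values are invented and colors are assumed to be premultiplied.

```python
def deep_over(samples):
    """Composite deep samples for one pixel, nearest first.

    Each sample is (depth, (r, g, b), alpha) with premultiplied colour.
    Samples may arrive in any order; they are sorted by depth here.
    """
    out_rgb = [0.0, 0.0, 0.0]
    out_alpha = 0.0
    for _depth, rgb, alpha in sorted(samples, key=lambda s: s[0]):
        remaining = 1.0 - out_alpha  # how much is still visible behind
        for i in range(3):
            out_rgb[i] += remaining * rgb[i]
        out_alpha += remaining * alpha
    return tuple(out_rgb), out_alpha


if __name__ == "__main__":
    # Hypothetical profile shot: glass, then the face, then glass again.
    visor_front = (1.0, (0.05, 0.06, 0.08), 0.2)
    face = (2.0, (0.80, 0.60, 0.50), 1.0)
    visor_back = (3.0, (0.05, 0.06, 0.08), 0.2)
    print(deep_over([visor_back, face, visor_front]))
```

Because each sample carries its own depth, the front glass tints the face while the back glass is hidden behind it, without the compositor having to split the visor into separate hand-ordered layers.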

Into the storm

Watney’s isolation on Mars begins when he and his fellow crew members attempt to leave the planet during a severe sandstorm. Shot on the greenscreen soundstage at Korda, the storm involved significant practical effects orchestrated by Neil Corbould’s team that included dust and dirt propelled by giant fans at the actors, who were often held back by wires to have something to push against. MPC then carried out several levels of digital augmentation.

In previs, Argon was able to contribute directly to ideas for the storm. “For instance,” says Argon’s previs supervisor Jason McDonald, “originally during the storm, once the order to evacuate is given, all 6 astronauts make their way together to the MAV (the rocket). This made things complicated once Watney was taken out because Martinez, the pilot, had to go in one direction to ready the MAV and the others go searching the other way. It was much cleaner to have Martinez already in the MAV before the order to evacuate came in, which would also give us something to cut to for his reactions as we begin to realize Watney is gone. It also added more texture to the scene to be able to cut out of the noise and darkness of the storm into a quieter, brighter interior.”

Watch b-roll from the storm shoot.

Views of the approaching storm were created in Flowline and Houdini by MPC, but as the crew is enveloped, much of what the audience sees is practical. “We fogged up the stage and made it nighttime, turned the greenscreen off and fired as much smoke and particles at the actors as we could,” explains Stammers. “We shot that at 48 fps and went back to 24 fps without doing any interpolation. Ridley liked the idea of the particles being really sharp, so they have reduced motion blur because of that. That was our starting point and we shot additional dust and particle elements to layer on top too.”
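The reduced motion blur follows from the exposure time per frame. The shutter angle used on the shoot is not given in the article, so the sketch below assumes a 180 degree shutter purely for illustration; the function names are hypothetical.

```python
def exposure_seconds(fps, shutter_angle_deg):
    """Time the shutter is open for each frame."""
    return (shutter_angle_deg / 360.0) / fps


def effective_shutter_angle(exposure_s, playback_fps):
    """Shutter angle that would give the same blur if shot at playback_fps."""
    return 360.0 * exposure_s * playback_fps


if __name__ == "__main__":
    ASSUMED_SHUTTER_DEG = 180.0  # assumption, not a figure from the production
    shot = exposure_seconds(48, ASSUMED_SHUTTER_DEG)
    print("exposure per frame at 48 fps:", shot)
    print("equivalent shutter at 24 fps:", effective_shutter_angle(shot, 24))
```

Under that assumption, keeping every second 48 fps frame without interpolation leaves each frame with half the blur of a native 24 fps exposure, equivalent to a 90 degree shutter, so fast particles read as sharper.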

Further CG dust and particles were rendered by MPC and then placed into the shots along with the practical elements. “We helped with the stereo depth by putting more and more layers closer to the lens,” says Stammers, “trying to maintain that perception of depth all the way to background.”

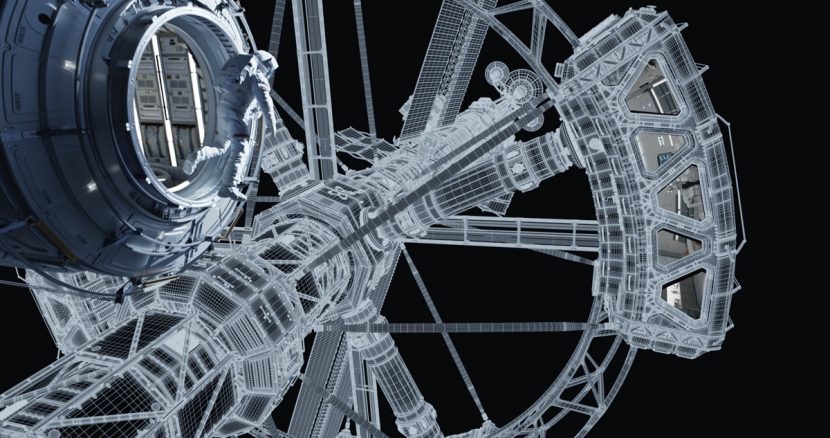

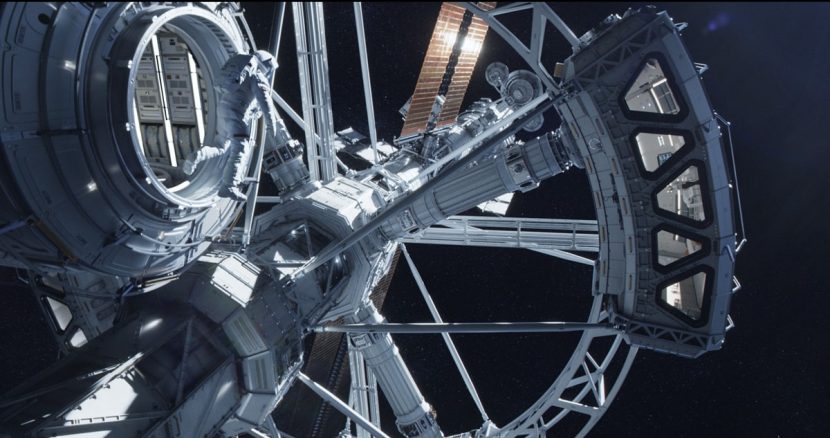

Life on Hermes

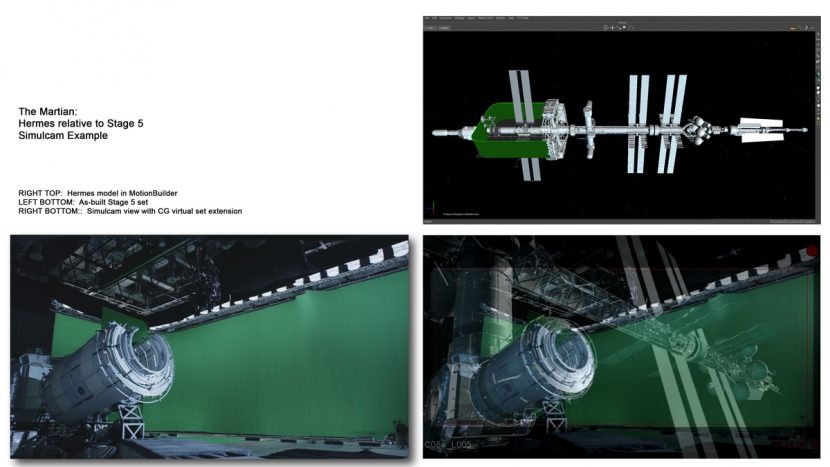

Watney perseveres on Mars while his crew head home in the Hermes, a spacecraft outfitted for the long journey to and from the red planet and featuring a rotating section to deliver artificial gravity. Based on Arthur Max’s art department concepts, Framestore handled sequences involving the craft using partial set-build live action plates and fully CG exteriors and interiors. Previs from Argon and techvis and simulcam services by The Third Floor aided significantly in crafting shots and orchestrating zero gravity wire work.

Framestore launched into a full-scale build of the Hermes during principal photography. “We tried to keep it very authentic to what NASA would have built,” outlines Framestore visual effects supervisor Chris Lawrence. “Arthur Max went to great lengths to talk to NASA about what they would really do. There was a story behind it before we even picked it up.”

Utilizing vast experience in building spacecraft from Gravity, the team at Framestore directly referenced detail from the International Space Station (ISS). “For example, we had looked at the large solar array wings for Gravity,” says Lawrence. “Unfortunately we never built them clean, because the ISS was always damaged in Gravity, so we had to completely re-build them! But they were very familiar to us and we had great details and reference already. We’d match the lighting and photos and do side-by-sides. We’d add greebles and lights for extra details, so hopefully the result is born from authenticity while not representative of a specific thing.”

Lighting the Hermes, and later lighting the characters out in space, involved subtle reference to the red planet below (a Framestore creation, as was the Earth). “The near-Mars shots were a tricky one to light because if you’re in Mars’ orbit a browny orange bounce light isn’t necessarily going to look fantastic,” suggests Lawrence. “So we played around with different hues and amounts of bounce. We had to match the DOP’s lighting on set where he’d used a fairly neutral bounce. In the end we added some Mars bounce pass to the plate photography in NUKE – that was essentially de-spilling the green and red spilling instead – to give a very subtle cue to tie things together.”

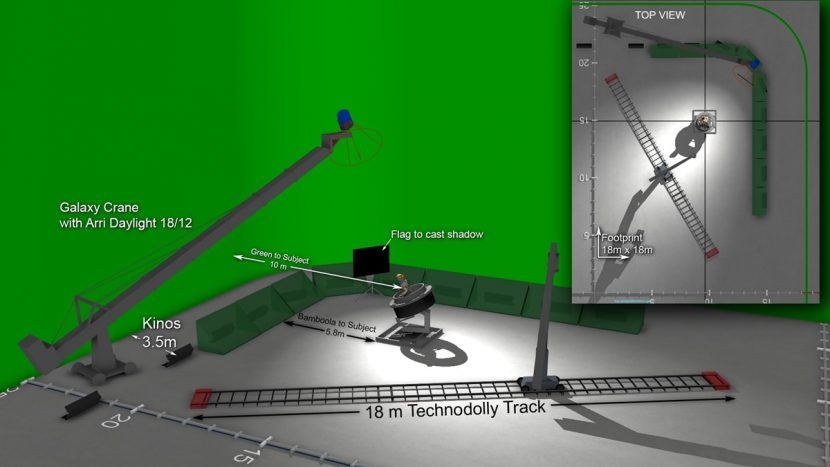

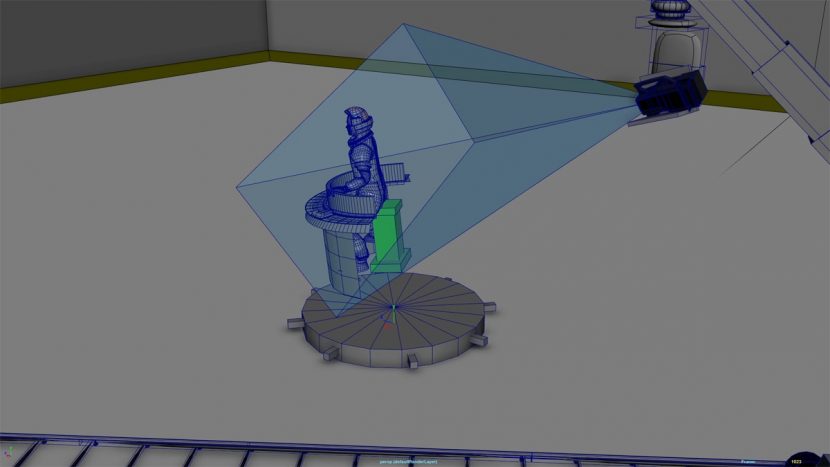

Shooting the live action for the space scenes utilized techvis from The Third Floor – the first time on a Ridley Scott film. “Casey Schatz, our virtual production supervisor from The Third Floor, took the previs that Argon had been doing and turned it into a really good techvis sequence,” outlines Stammers. “That meant we could utilize a Technodolly and a previs and give Ridley a live output of what the background would be. So whilst we had very small set pieces of the Hermes airlocks, say, he could frame up on the shots and see the full extension of the Hermes in the background, Mars in the background, et cetera. Ridley’s always struggled with greenscreen and he was blown away by how much we could show him. We could just move the camera six inches to the right and you could see the whole ship!”

Argon also worked closely with The Third Floor to deliver specs. “For example,” says Argon’s McDonald, “there is a scene where Lewis floats through a corridor in the Hermes in zero G. We created a digital wire rig and Technodolly inside the Art Department’s model of the corridor and animated Lewis and Martinez’s characters throughout the small scene which was approved by Ridley. This animation was then used by Casey to begin to set up the shoot on set and drive the truss system that carried the actors and the Technodolly that carried the camera.”

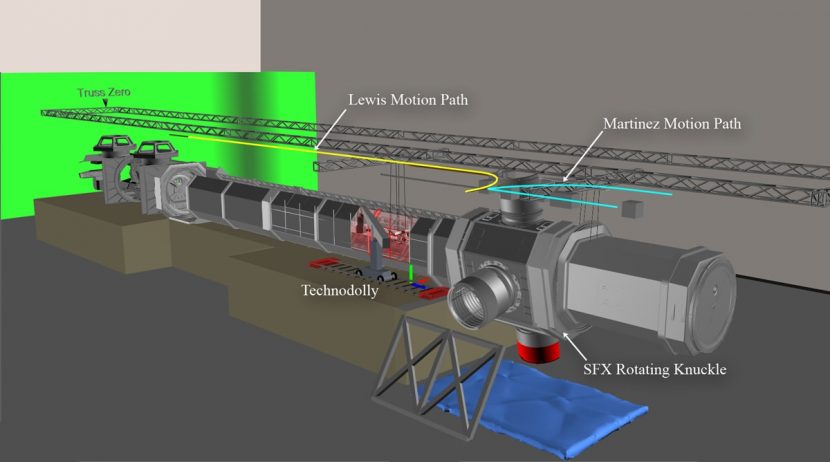

One particular sequence that Third Floor’s techvis was used for was the Hermes Open Message scene. Here, Lewis (Jessica Chastain) floats down the corridor past the lens, joining another astronaut as they go down a ladder. “The Hermes Open Message scene was all about timing and being able to figure out how to position and move the actors, cameras and rigs in a way that would maintain the cinematic illusion that the action was happening in zero gravity,” explains Schatz. “We used a Technodolly, which was small enough to sneak through an opening we made in the corridor and could also be controlled precisely enough to get close to the set. Then, working with the stunt department, we wrote a system to control the motion of the actors on an 80-foot 2D truss system. Additionally we were outputting the camera curves to the Technodolly and the rotation curves to the rotating SFX spinning section.”

The techvis was able to control the wire rig with some programming from The Third Floor. “Using Python,” explains Schatz, “I wrote a tool that allowed us to select an astronaut and send it to the truss rig in a way that did not accelerate too quickly and replicated the look of true zero gravity. We could animate the move in Maya, use the curve editor to soften it out, and then send it to the rig on stage. Once the actors were moving through space correctly, we also output animation to the camera and to the rotating ‘gravity knuckle,’ so that everything was in sync and stemmed from the same Maya file.”
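Schatz's tool is not published, so the following is only a rough sketch of the kind of check he describes: sampling a move per frame and confirming that it does not accelerate too quickly before it is sent to a rig. The smoothstep ease stands in for softening a curve in an animation editor, and the distance, duration and limit values are invented for illustration.

```python
def linear_move(distance_m, duration_s, fps=24):
    """Constant-speed move with one held frame at each end."""
    n = int(round(duration_s * fps))
    curve = [distance_m * i / n for i in range(n + 1)]
    return [0.0] + curve + [distance_m]


def smoothstep_move(distance_m, duration_s, fps=24):
    """Ease-in/ease-out move with one held frame at each end."""
    n = int(round(duration_s * fps))
    curve = [distance_m * (3 * (i / n) ** 2 - 2 * (i / n) ** 3) for i in range(n + 1)]
    return [0.0] + curve + [distance_m]


def peak_acceleration(positions, fps=24):
    """Largest per-frame acceleration (m/s^2) via second differences."""
    dt = 1.0 / fps
    return max(
        abs(positions[i - 1] - 2 * positions[i] + positions[i + 1]) / dt ** 2
        for i in range(1, len(positions) - 1)
    )


def is_safe(positions, limit_ms2, fps=24):
    """True when the move stays under the given acceleration limit."""
    return peak_acceleration(positions, fps) <= limit_ms2


if __name__ == "__main__":
    LIMIT = 3.0  # hypothetical limit in m/s^2
    for name, move in (("linear", linear_move(10, 5)),
                       ("eased", smoothstep_move(10, 5))):
        print(name, round(peak_acceleration(move), 2), is_safe(move, LIMIT))
```

In this sketch the un-eased move jolts from rest to full speed in a single frame and fails the check, while the eased version of the same move stays well under the limit.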

Filming on set.

“The techvis included stage dimensions, camera information and a variety of other information that I would synthesize from around the production,” explains Schatz. “This way, we could essentially ‘practice’ the shoot in Maya before the actual shoot day. As part of the techvis, we were able to help determine what would be built and what would be extended digitally for these space sequences. There were a number of shots we helped develop also that required precise control over the motion of actors on rigs and trusses and being able to seamlessly transition from animation to live action.”

“For the Hermes spaceship,” adds Schatz, “we used geometry straight from the art department that was constantly updated to reflect evolving design changes. For views looking down to the surface of Mars, we used high-res NASA textures. The camera rigs were the SuperTechno 50 and the Technodolly, which we modeled in detail per specifications from Panavision. Both rigs adhered to their real-world counterparts not just in dimensional accuracy but the velocity characteristics as well. The character rigs were The Third Floor’s standard auto rigs.”

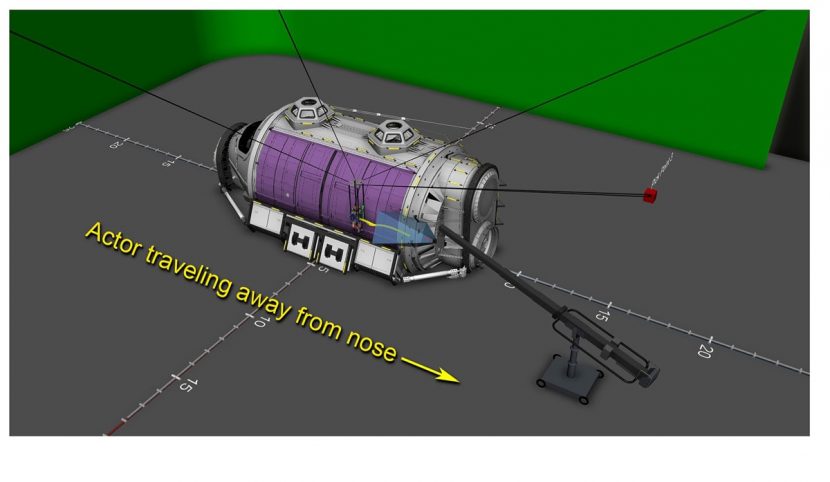

In addition to techvis, The Third Floor utilized the Technodolly’s ability to stream to MotionBuilder and developed a simulcam setup so Ridley and the crew could see CG extensions of the space station set while shooting. The simulcam also came in handy for the zero gravity scenes. “There were these sequences where Ridley wanted to show the relationship between the length of Hermes on the inside and the spinning part of the ship where you have this rotating junction – the knuckle – which went off to the four pods of the gravity wheel,” says Stammers.

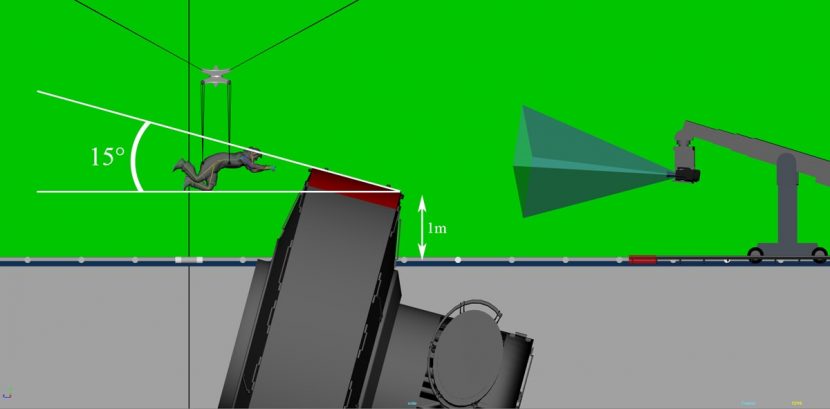

Importantly, Schatz had spent considerable time consulting and collaborating with the various departments to orchestrate the techvis. “My typical approach would start by grouping the shots according to shooting technique (crane, dolly, handheld, interior, exterior, etc.) and other information like lighting direction or types of set pieces being used. We would then interact with different departments, the art department, visual effects, stunts, special effects, showing printouts and QuickTimes and gathering their input about what needed to happen on the stage. Was there sufficient greenscreen or did they need to fly in additional coverage from above? Was the planned camera ‘legal’ in the distance it would cover and how fast it would accelerate? Was there enough room for performance of the stunts? We would take this feedback, make adjustments and output the techvis again. Certain things became evident looking at the scene in the computer, such as the fact that a specific rig, if rotated too high, would place the actor too close to the ceiling at 15 degrees but would be OK at 10 degrees. By bringing together all of these details and making it accurate to stage and camera dimensions, everyone was able to get a confident idea about what would work on the shooting day.”

The approach was also used for the Airlock Bomb Strategy scene in which Beck traverses the exterior of the Hermes. “We did this on Stage 5 at Korda Studios in Budapest where the stunt department (led by stunt co-ordinator Rob Inch) set up a 4-way bridle system,” describes Schatz, “providing the ability to fly the actors in three axes as opposed to the linear run that was needed for the corridor. Sections of the Hermes were built, and Beck’s motion had to be carefully plotted relative to the set pieces. We were able to give curve animation to this winch system as well, so the techvis helped inform the layout in addition to the actual movement of the astronauts.”

Framestore had built an interior digital set of the Hermes for such shots that could be combined with the ceiling-less set. “We roto’d the talent off the background plates, and then stabilized the actors and removed their wires,” says Lawrence. “There were a few shots where they enter the knuckle of the gravity wheel as it’s moving around, so we had to do this choreographed dance of zero G wire work to the set movements. The knuckle itself ended up being a complete CG build since the set was only half built.”

The zero gravity shots of the actors in full spacesuits were often a hybrid of live action and CG doubles. “We collaborated with the costume department to get details on the helmet that were in the same place as we’d have tracking markers, so we’d have as few tracking markers as possible,” states Lawrence. “There were lots of other nice things the costume department would do for us in terms of how the cloth moved that meant we could blend seamlessly between the two and have a live action and a CG spacesuit right next to each other in the cut without you noticing the difference.”

Framestore’s digital spacesuits again made use of technical expertise in CG wardrobe creation pioneered on Gravity. “The best results come when you can use photogrammetry as a starting point,” says Lawrence. “We had the NASA physical suits and we used Clear Angle to scan them. We got the talent in the suit so we could see what their proportions were, see where all their joints were and get them doing funny poses, bending arms and legs and standing on one leg – helps us get the rigs correct with the right pivots.”

“We then created high res models which followed the live action and had some of the creasing built-in,” adds Lawrence. “Because it’s a cloth model it’s done in such a way that the UVs are all uniform and topology is uniform, so it creases nicely when it’s simulated. All of that displacement detail is actually in the model and that’s really nice because it means you can simulate through it and the shape of all of the wrinkles and bits of cloth will change as the actors move around. The CFX team always get squeezed the most but they were able to do these suit simulations really fast.”

More behind the scenes from the set.

Occasionally the precise dynamic behaviors of the actors could not be captured on set because of the complex wire work, “but it would at least get you shots that they could cut with,” says Lawrence. “That would then inform a locked cut that we could take over either by replacing faces or cutting off the waists and doing CG legs. That was a very shrewd move on Richard’s part to allow us to do that because it really gave a skeleton to the sequences we were working on that we could flesh out.”

Like MPC, Framestore also delivered digital visor work, this time for the space scenes. “We had real visors in some shots which meant we had beautifully lit reference of exactly what the visor should look like,” acknowledges Lawrence. “In turn, it meant we actually pushed our visors further than anything we’d done before, because we had to introduce new layers and get the warping right on the edge of the visor glass – things we may not have factored in without having the realistic reference.”

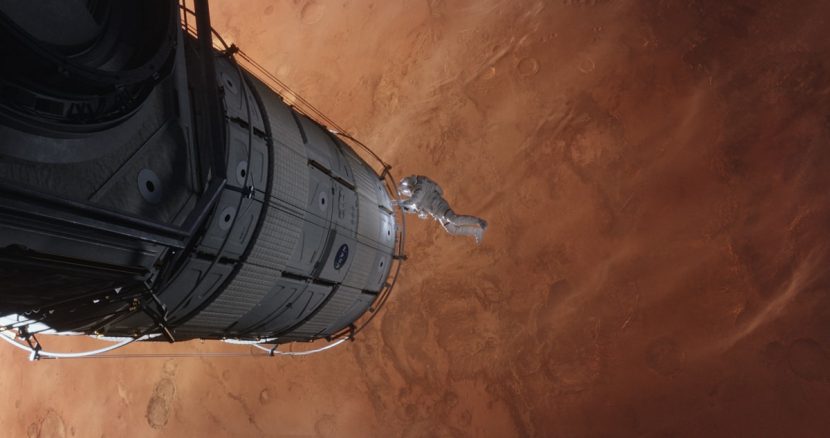

Snagging the Martian

The crew of the Hermes return to Mars as Watney blasts off from the surface in a Mars Ascent Vehicle (MAV) that had been intended for a future Ares mission. Using Argon previs and techvis by The Third Floor, Framestore delivered shots of the MAV as well as Watney’s ultimate daring snagging by the crew.

Shots of Watney tumbling during the space capture were techvis’d by The Third Floor. “Working together with SFX supervisor Steve Warner,” says Casey Schatz, “we devised a rig that could pitch Watney to make the camera appear to be above him, then animate that axis so we appeared to be underneath him at the end of the shot. In conjunction with knowing the height the Technodolly could take the camera, we found the right ‘mixture’ of camera and subject movement such that the net result gives the impression of Watney tumbling through space.”

Damon was filmed inside a set-piece of the MAV, which in the film has pieces removed to reduce its weight and allow Watney to (almost) make it to the Hermes. For the unstable flight of the MAV, Framestore then worked on shots of the craft spinning, sometimes only keeping Damon’s face from the plate, as well as adding floating nuts and bolts and exterior views of the planet. “One shot that was always in the previs but for some reason they didn’t shoot was a POV of the interior MAV, and it’s Watney looking around trying to work out what’s going on,” describes Lawrence. “They didn’t shoot it so we had to build the interior MAV and do this fully CG interior for one shot, which turned out great.”

Filming Matt Damon's space tumble.

Leaving the Hermes, Lewis tries unsuccessfully to reach Watney, who then stuns the crew by slashing his spacesuit and using the exhaust pressure to leave the MAV and manoeuvre through space. Several challenges for Framestore existed here – they had to preserve and augment live action pieces filmed on specialized rigs and add in a floating tether, all while maintaining the intensity of the scene.

“Matt was on a truss mounted with a rotational gimbal in the middle and they were dangling off that with trapeze wires,” says Lawrence, describing how the live action was filmed. “It was great because it gave a physicality to their performances – the fact that there was centrifugal force pulling them out and they had to pull against it really helped the scene feel more visceral. The hardest part for actors can be having them imagine what’s around them in this huge greenscreen set.”

“Still, it was the toughest technical animation we had to do on the show,” states Lawrence. “The tether, for example, well, it’s almost impossible to create a live action floaty tether so that had to be CG. We also had to interact with it because they were pulling each other on it. Ultimately what we did for the whole sequence was have a mix of live action and CG characters. There’s two quite similar shots which are close in the cut – shots of them coming together. One was done live action and the other one which was a similar angle and action was done as CG digi doubles with plate faces. That came down to what was working best motion wise in the cuts.”

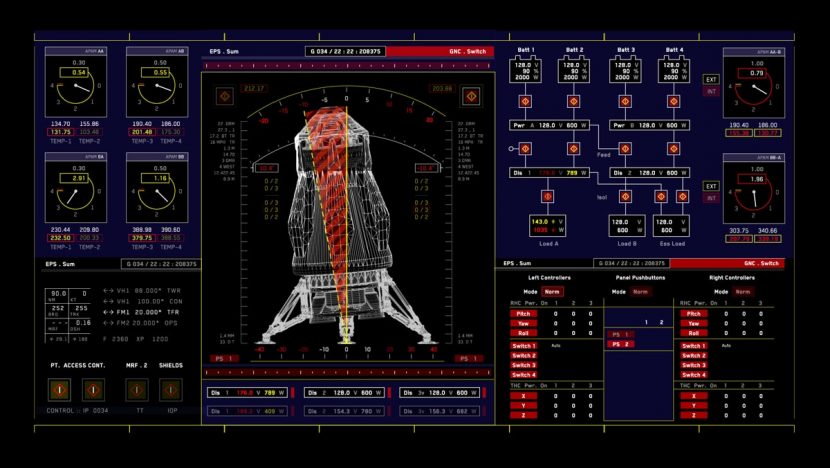

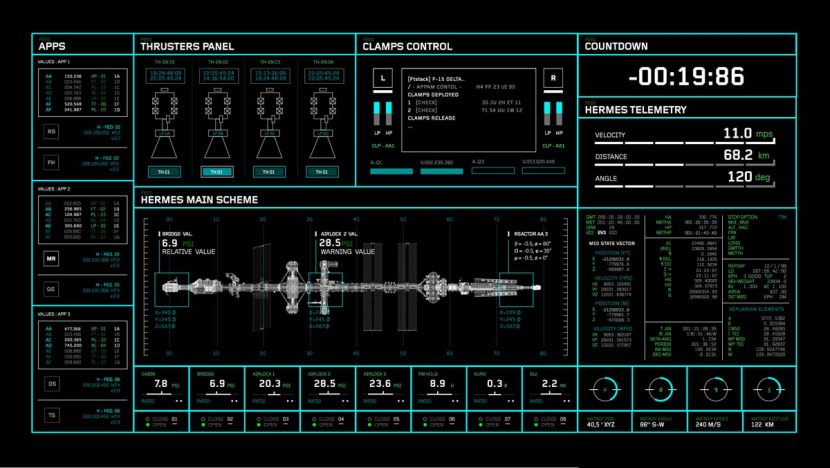

Monitoring the situation

With scenes taking place between Mars, the Hermes and Mission Control, much of the film’s story is also told with the aid of monitors and displays. Territory Studio, in collaboration with production designer Arthur Max and UI art director Felicity Hickson, was responsible for realizing screen content via motion graphics, largely piped in on set for the actors to interact with directly.

“The biggest challenge,” says Territory creative director David Sheldon-Hicks, “was to find the best ways to represent complex scientific information in a very clear way. That involved a steep learning curve for the whole team as we actually had to understand or at least appreciate the science of what we were trying to represent on screen before we tie it to the dialogue and distill it. This process really stretched us, but it was satisfying to be able to add a level of real depth to the graphic elements.”

The studio crafted design languages for each of the key sets – Mission Control, the Hermes spaceship, NASA Jet Propulsion Laboratory (JPL), Mars Ascent Vehicle (M.A.V.), HABitat facility on Mars, Mars Rovers, NASA offices, Pathfinder, Space Suit Arm Computers and crew personal laptops. This resulted in around 400 shots of mostly animated graphics for on-set playback.

Real reference was always key, notes Sheldon-Hicks. “Ridley invited NASA and the European Space Agency to advise on every aspect of the project and Dave Lavery at NASA was our prime contact. He supplied us with every conceivable reference material, from old mission plans and data, probe images, mission control photos, engineering schematics, and satellite imagery of the surface of Mars, types of work stations and software they use, to daily schedules and reports. At NASA control and JPL they use keyboards and mice like everyone else so we stayed true to this. It would have strayed too far from reality if we started creating unnecessarily complex interactive controls. As an example, a lot of the interaction with systems in space is through physical controls, so our graphics function as displays rather than user interfaces.”

Mission Control proved to be the most challenging UI work, since it involved around 100 screens that included a bank of 18m x 6m LED monitors. The look and feel of the screens came from NASA research and reference. “Each screen has a real purpose in that context and we needed to make sure that we reflected that,” outlines Territory art director and lead animator Marti Romances. “It was important to give a unique identity to the set, which features a lot of information, including realistic video feeds and telemetry data that the actors react to and interact with. I focused on creating a visual language to wrap those realistic elements in. Out of the possible routes we suggested, Arthur Max chose the combination of blue gradients and fine white and red linework. Once the final screens were programmed by Compuhire, our on-set playback partners, they really brought that set to life.”

Screens on the Hermes, a slightly futuristic craft, involved a different approach. Territory referenced NASA screens as well as what SpaceX were doing. “We ended up with a good set using different dark tones on the backgrounds, dark blues, purples and greens,” says Romances, “and very vivid and bright colours – mainly whites and bright screens – for the data and buttons on those screens.”

Territory designed user interfaces first in Adobe Illustrator and then animated windows and widgets in After Effects. “Building the graphics in a non-destructible mode (being able to scale it up and down without losing pixel quality) was key,” notes Romances, “as we knew we were going to be repurposing lots of the graphics in different aspect ratios. NASA’s incredible transparency and their huge image library helped us for reference purposes, and featured as content elements in some of the screens. When we were not using NASA imagery for the Mars surface, our Head of 3D, Peter Eszenyi, was creating satellite versions of Mars, the HAB, the rovers and other elements that were key to story points.”

“Most of the requests for the interactive screens, such as those in Mission Control, were image sequences for Compuhire to program,” adds Romances. “The screens had to be programmed to display images refreshing in a realistic time frame, and our animations and simulations were usually reduced to an image every 3 seconds. Other screens were programmed to simulate typing scenes from the crew laptops or Mission Control computers. So no matter what the actors were typing, the right message was appearing on screen.”
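The two playback tricks Romances mentions, thinning an animation down to one image every few seconds and making any keystroke reveal the scripted text, can be sketched in a few lines of Python. This is an illustration only and not Compuhire’s actual software; the `ScriptedTyping` class, the `decimate_frames` function and the frame rate passed to it are assumptions made for the example.

```python
class ScriptedTyping:
    """Reveal a predetermined message one character per keypress,
    ignoring which key the actor actually hit."""

    def __init__(self, message):
        self.message = message
        self.shown = 0  # number of characters currently visible

    def key_pressed(self, _key):
        # Any key advances the script by one character until it is complete.
        if self.shown < len(self.message):
            self.shown += 1
        return self.message[:self.shown]


def decimate_frames(frames, fps, seconds_per_image):
    """Keep one frame per refresh interval from a rendered animation."""
    step = max(1, round(fps * seconds_per_image))
    return frames[::step]
```

With an assumed 24 fps render and a 3 second refresh, `decimate_frames` keeps every 72nd frame, while `ScriptedTyping` shows the next scripted character regardless of the key that was pressed.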

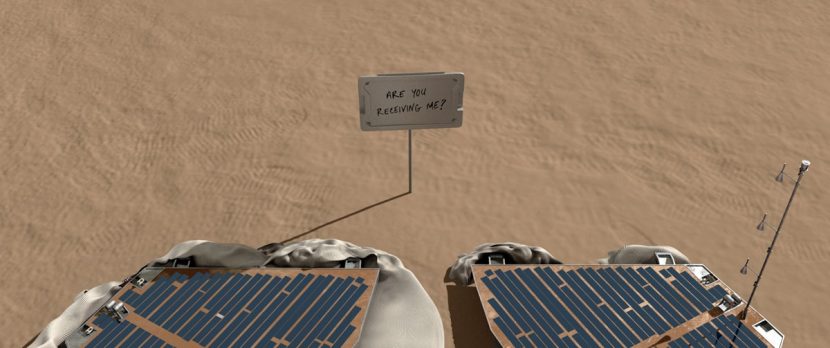

One crucial monitor scene involved the beaming back of Watney’s handwritten message from Mars via the Pathfinder spacecraft. “This is something that most people categorise as an easy shot, just a backplate photocomped onto another photo,” describes Territory head of 3D Peter Eszenyi. “But the way we approached it was that it needed to be a photoreal, fully flexible, 3D scene, so if there is any change regarding the camera angle, the time of the day, different lighting setup or a wider lens, we could respond very quickly.”

“To achieve the level of detail I needed to create the shot,” continues Eszenyi, “I gathered all the information available about the Pathfinder’s optical systems, figuring out the exact distances between the panel that Watney places in front of the camera and the lens. I designed a few versions of the environment, and we ended up using a photo from the actual shooting location camera projected onto 3D geometry as the base. We received references about the panel format and materials and Matt Damon’s handwritten sentence to be put on it. I created different versions of the Pathfinder solar array and instruments as well as the deflated cushioning to make sure we could deliver wider renders if the production needed them.”

On-set playback was challenging for scenes directly on Mars, too, as Romances explains in relation to a scene of Watney dealing with some mechanical changes inside of the MAV: “With just a few adjustments to screen buttons, he sorts out the problem. This screen was fully functional for the on-set performance, but to visually represent what was happening to the spacecraft required several steps. We had to isolate the interactive areas and buttons from our looping animations, and then trigger new animations when the buttons were pressed.”

That also applied to the screens Romances designed for the Hermes simulator, when Martinez is controlling the Iris probe or the MAV remotely. “For example,” he says, “the MAV simulator that astronaut Martinez controls from the Hermes was designed in Illustrator and featured some interactive buttons that had to be pressed on set following a predetermined sequence. We isolated all the interactive buttons to be programmed for the on-set performance. In the middle of that screen we had to render a visualisation for each stage of that remote controlled probe. So we animated and rendered each of the different stages of the lift off of the MAV in a way that could be triggered on set connected with those buttons. The MAV 3D renders were done in Cinema 4D using a wireframe pass to achieve a more realistic look. We prefer doing things that actually work on-set, not only to help the actors marry their performances to the action, but also to have those exact transitions when they interact with our screens. All the code typing by Watney in the Rover communicating with Mission Control was fully functional. And the same for all the messaging sequences.”
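The button-driven screens described here behave like a small state machine: a looping animation plays until the expected button is pressed, which then triggers the clip for the next stage. The Python sketch below illustrates that pattern under stated assumptions; it is not Territory’s or Compuhire’s code, and the `TriggeredScreen` class, the button names and the clip names are all hypothetical.

```python
class TriggeredScreen:
    """Hold a looping clip until the expected button is pressed,
    then switch to the clip for the next stage of the sequence."""

    def __init__(self, stages, idle_clip="idle_loop"):
        # stages: ordered list of (button_name, clip_name) pairs
        self.stages = stages
        self.index = 0
        self.current_clip = idle_clip

    def press(self, button):
        # Once every stage has played, keep showing the final clip.
        if self.index >= len(self.stages):
            return self.current_clip
        expected_button, clip = self.stages[self.index]
        # Only the next button in the predetermined sequence has an effect.
        if button == expected_button:
            self.current_clip = clip
            self.index += 1
        return self.current_clip
```

Ignoring out-of-order presses is one possible design choice; it keeps the on-screen animation tied to the planned sequence even if a button is hit early during a take.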

Earthly effects

Plenty of the action in The Martian takes place on Earth, mostly in and around NASA and JPL facilities. The Senate handled 180 shots for these parts of the story, featuring set extensions, greenscreen comps, DMP work, crowds, screen replacements, CG rocket additions and split screens.

For NASA office shots, The Senate produced a 3D matte painting of the space agency’s grounds to be composited into greenscreen areas of the plates. “As it was set in the future, we did not have to recreate Johnson Space Center exactly as seen, but were allowed to work with Richard Stammers and Ridley Scott to create something a bit different,” says Senate visual effects producer Paula Pope. “However, we did use reference photography from the different NASA facilities to assist with creating the look.”

Skinny Watney

Framestore also delivered several shots for a one-off scene of an emaciated Watney preparing for his departure from Mars. A body double stood in for Damon for a view of the character from behind, with close-up shots in front of a mirror involving some digital thinning. “Matt is really quite built up and muscly,” says Chris Lawrence. “So we had to slim him down which involved re-creating the background and warping the edges in. It was quite time consuming detailed comp work.”

“There were some other establishing shots of NASA’s Johnson Space Center that required a separate 3D digital matte painting asset to be used in both day and night shots,” adds Pope. “All shots had geometry built so that we could generate depth information into the plate. We also had to add crowds to these scenes to make otherwise empty areas appear full using elements and CG. This sometimes proved challenging to make work in stereo and a lot of roto and cleanup was required. We also added crowds, DMPs, CG jumbotron structures and screen content to scenes involving Trafalgar Square and Beijing.”

The screen content and replacements were often complicated by the fact they had to be achieved in stereo. Richard Stammers calls out one particular shot by The Senate that involved moving from a monitor view of Jeff Daniels’ character at a press conference into a final view of him full screen. “It was a really nice transition done by The Senate and they did a great job of compressing the stereo aspect of it so it could stay flat until the point of the transition, and then you introduce the stereo depth to it, as it transitions from a mono flat textured 2D screen to a live action stereo view.”

All images and clips copyright 2015 Twentieth Century Fox.