The state of play

See you at the party, Dream Quest

The curse of mocap

Seeing double

The age of miniatures

Next stop, Mars

Open your mind…

Give those people air!

The magic of matte paintings

The brilliant Rob Bottin

Recall: looking back

On 1st June, 1990 TriStar Pictures and Carolco Pictures released Paul Verhoeven’s Total Recall. The film, based on Philip K. Dick’s story ‘We Can Remember It for You Wholesale’, was a box office success and praised as intelligent sci-fi satire, cementing star Arnold Schwarzenegger as a major action hero. The film was also praised for its innovative use of practical stunts, special effects make-up, miniatures, optical compositing and CG rendering – culminating in a visual effects Special Achievement Academy Award for Eric Brevig (visual effects supervisor), Alex Funke (director of miniature photography), Tim McGovern (x-ray skeleton sequence) and Rob Bottin (character visual effects).

For the film’s 25th anniversary, fxguide has gathered members of the Oscar-winning effects team to share their thoughts and memories on the seminal pic – from the almost disastrous x-ray scene, to the ‘smokey mattes’ approach for shooting miniatures and the final reactor shots.

The state of play

ERIC BREVIG (visual effects supervisor): Because it was very early days in CG, it wasn’t really an option to do things that were fully shaded, photoreal and textured. All of our imagery, like the Mars exteriors, was organic. There was a spaceship shot and I guess that could have been attempted, but miniatures and motion control were such a mature technology at that time.

ALEX FUNKE (director of miniature photography): It was in that era when pretty much everything you needed had to be invented from scratch – a piece of equipment or a technique. Visually it was a really exciting film to make. We knew very little about the rest of the film while we were shooting some of the miniatures, so we said, ‘Right, we’re going to make this the most convincing martian landscape that we can.’ And as it turned out it fit in really well.

TIM McGOVERN (x-ray skeleton sequence): Mocap got sold for the x-ray sequence on the basis that no one could do an x-ray. If they shot a stop motion skeleton mimicking Arnold’s motion, they couldn’t make it look like an x-ray. And that was part of it because it had to look real-time. But if we did CG we had to figure out how to animate it convincingly enough – we showed mocap tests and it looked very fluid. It was supposed to be easy and give you data and then it would be fine. It was hard at the time, but it would be easy today.

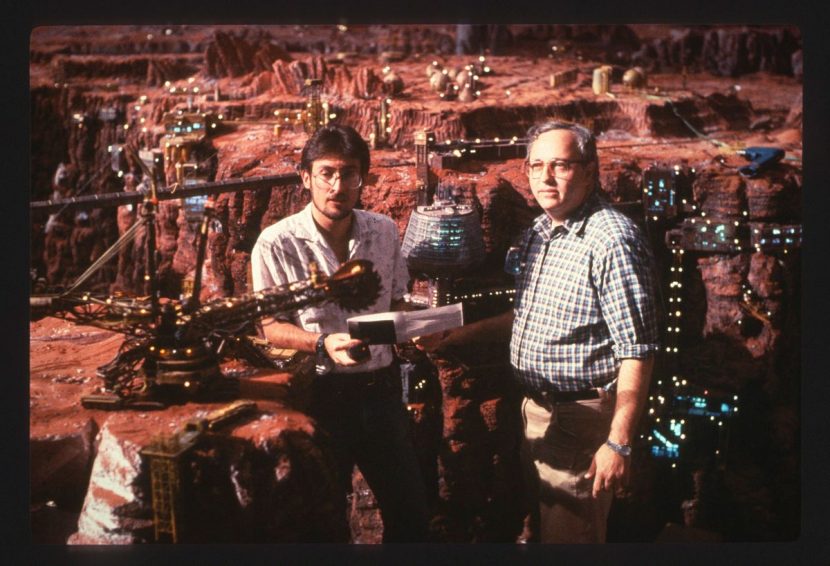

See you at the party, Dream Quest

BREVIG: Dream Quest Images was the primary vendor and we hired Stetson Visual Services, headed by Mark Stetson, to create the miniatures. They were built in his shop in LA and delivered to be assembled where Dream Quest was. Mark arrived with the pieces and assembled them on the stages at DQ. Then MetroLight handled the x-ray sequence.

At Dream Quest, we had an industrial space with several large areas that were set up so that they could be used as motion control stages and smoke stages. We had set this up with electronics that could sense the smoke density in the room and maintain it over very long exposures. Most of the miniatures were shot at very long exposure times for believable depth of field, so that a five second shot or each pass would take hours to shoot.

Because of that, everything shot this way took an incredibly long time to produce. So they had to prepare and start shooting the miniatures while we were still in production and I was in Mexico. Using very simplistic tools like video, lipstick cameras and mock-ups, we would do the equivalent of previs. I would have those down in Mexico where we were filming principal photography, and be shooting to try and fit the pieces in, and they would be preparing to shoot miniatures for things that did not require tie-ins or for what I’d shot. The two units dovetailed until I returned.

I think Paul Verhoeven was very dubious the entire time we were shooting. It was an interesting progression. On the set I could tell he was suspicious that I did not know what I was doing and none of the things would work. Bordering on actually saying so to me! As the shoot continued we had a talk saying his name’s on the whole movie, mine’s just on the effects, I’m going to make sure they’re really good – don’t worry. It was only actually after he started to see some of the tests in post that he really got excited. He didn’t anticipate the degree of precision and complexity we were going to put into the work. He was just delighted.

The curse (and the blessing) of mocap

BREVIG: The script called for our characters to, in one long dolly shot, be seen walking and disappear behind an x-ray screen. And we thought this might be a good opportunity to use computer graphics and motion capture. MetroLight did CG and it was a perfect opportunity to do something that at that time would have had to be done with stop motion. And then if it didn’t work we could always bail out and use traditional animation techniques. Well, it didn’t work exactly as planned…

McGOVERN: Mocap was first being used to analyze golf swings at the time. The golf industry was a multi-billion dollar business so the analysis of swings was something they could charge a lot of money for and show them how to do it better. Jim Rygiel was at MetroLight then and was involved in the tests for the movie originally, but he then moved to Boss Film. I was head of production at MetroLight and the senior technical director at the time, so I took on the sequence.

We did tests to see what the look of the thing could be. We had to figure out how to do opacity mapping. That’s what it took to give us a texture that looked like the striation of bone – it just couldn’t be like a solid bone with no veining. In an x-ray you see all that detail in the shadows and that’s what makes it look real. We were able to write a new shader in our renderer. In those days everyone pretty much had to write their own software. By this time there was Wavefront that had a choreography program called Power Animator – we used that but then had our own renderer and modeler and compositing software.

BREVIG: For the shoot in Mexico City, I had them build the set with these giant panes of tempered glass that were painted black. We shot the actors walking behind the glass and the initial idea was that we would motion capture them after the fact.

McGOVERN: We had a representative from a motion capture company down there in Mexico City. You had to calibrate the space that the action was going to take place in. You put all your cameras around that – six or eight I think. There was a length of this x-ray device. We put the calibration bars on either side of this length – so you have two posts at either end, you run the calibration and you see it from all of the cameras. In theory you tune the total resolution of the system to just the pixels in that area. We calibrated and ran tests and this guy says, ‘Everything’s great.’

BREVIG: I was concerned that the actors might perform differently since we were seeing them in one shot walk behind it and then we’d see their skeletons continuing. So I had some holes cut in the back of the set behind the glass and put some normal 4-perf cameras with extremely wide angle lenses on it. So I actually photographed the back side of the glass with the actors continuing their work – that way I knew what they were doing when they disappeared from view from the main camera. That turned out to be the key, because even though there were semi-successful attempts to capture the motion of the actors, it never matched up.

McGOVERN: Arnold showed up on the first day we were calibrating. He’s walking around smoking a cigar on a big sound stage we’re using for motion capture. He walks up and says, ‘Is there anything you need me to do?’ And we said, ‘Yes, could you take this sensor and put it on top of your head?’ He looked at us and it looked like he thought, ‘These guys are fucking with me. I’ve never heard of putting a bar on top of my head.’ But he does it and we’re able to see it’s within the calibrated space.

When it came time for the real deal, we had asked if Arnold would wear a black gym outfit – long shorts and a tight t-shirt. And then we had to put these balls on him which were much bigger back then with front projection material all over them so that you could have a light at the front of each of the cameras and it would always show a spot to every camera. We needed to see these balls really well so that’s why we wanted him to wear black. But Arnold shows up wearing white – white shorts, white top. I said to Arnold, ‘You need to go back to the trailer and change – you need to wear black.’ He looks at me and says, ‘No, no, no, I’m not going back to the trailer. You talk to wardrobe.’

So here I am with the biggest star of the time. So I figured we’d have to shoot all this and hoped it didn’t screw us. We shoot everything, Paul Verhoeven directs him with all the action. Our motion capture guy tells us that it’s the best data we ever had. But then over the next few weeks we had to keep chasing him up and then we sent one of our own guys up to their facility and he goes, ‘It’s not working.’ I said, ‘What do you mean it’s not working?’ I rang the company up and they said, ‘I think we can give you a cycle of the motion.’ I said, ‘But guys, it’s not a cyclical motion – he doesn’t do anything in a row that’s the same. It’s changing all the time.’

Really what the problem was, was that for the size of Arnold in the frame that was being captured there were too many reflective balls in too small of an area. They didn’t actually merge with each other, but it was too small and too close for their software to figure it out at the time.

BREVIG: I told the MetroLight guys that I had this footage of exactly what was going on there – and they loved it. They used it and could frame by frame adjust the CG skeletons to be precisely what the actors were doing – so it lined up perfectly.

McGOVERN: With the witness camera we could see when Arnold goes in from the front camera that motion and everybody else’s motion from the back side of the screen. So there was at least the ability to project this and extract that to match visually at keyframes. Then you try to interpolate it and hope it goes through and have enough keyframes to not overshoot and undershoot.
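
The overshoot McGovern mentions is a general property of smooth interpolation between sparse keys. A minimal Python sketch, assuming Catmull-Rom splines purely for illustration (the interviews don't say what interpolation MetroLight's software used, and the key values below are hypothetical):

```python
def catmull_rom(p0, p1, p2, p3, t):
    """Interpolate between keys p1 and p2 (t in 0..1), using p0 and p3 as neighbours."""
    return 0.5 * (
        2.0 * p1
        + (p2 - p0) * t
        + (2.0 * p0 - 5.0 * p1 + 4.0 * p2 - p3) * t * t
        + (3.0 * p1 - p0 - 3.0 * p2 + p3) * t * t * t
    )

# Hypothetical joint value keyed at four frames: it rises from 0 to 1, then holds at 1.
sparse_keys = [0.0, 1.0, 1.0, 1.0]

# Halfway between the two middle keys the curve should hold at 1.0,
# but the rising neighbour key drags the spline past it.
overshoot = catmull_rom(*sparse_keys, 0.5)

# Adding one more matched key just before the hold gives flat neighbours,
# so the same segment now stays exactly on the matched value.
held = catmull_rom(1.0, 1.0, 1.0, 1.0, 0.5)

print(overshoot, held)
```

The curve always passes through the keys themselves; the error only shows up in between, which is why more matched keyframes were the remedy.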

But for the other shots they didn’t shoot that reference footage. However, I thought, ‘Wait a minute, we did have footage of the little balls flying around.’ So on realizing we had all these cameras there, I thought maybe I can turn this back into something that I can have on the screen at the same time with the image from the Silicon Graphics workstation with the skeleton so I can match it up and somehow from video.

Because I had done some TV commercials – Sexy Robot and some CG ones also for Benson & Hedges – I was really comfortable with doing key frames. And this meant we were able to salvage it. At the time there wasn’t much else to fall back on when the motion capture didn’t work out. For the skeletons, you could get a plastic human skeleton that was made from the mold of a human skeleton – you could buy one of those and take it apart – we had a 3D digitizer which was made by…it had a pedestal and a 2x2x2 cube that you could have something inside of and you’d register that point and you had to connect those points. But the dog skeleton was a Timber Wolf – we got a skeleton from a museum, the LA County Museum’s next to the tar pits in LA – to digitize and then give it back. We weren’t really sure it was going to work because we were really trying to match a real human as soon as it went behind the screen. I was completely convinced this was the only way to proceed because the bridge had been burned.

I went and processed all the footage, selected the best angles, and then I went to Image Transform which was known for being able to do wizardry at that time. They were able to get us the sharpest, cleanest image and be able to transfer all this stuff to laserdisc. Then I called my good friend Ray Feeney. I said, ‘Ray I need to have the image on the Silicon Graphics workstation and the image that’s on this laserdisc somehow overlapping each other.’ What we figured out was you could shine a video camera at the Silicon Graphics machine. You could plug both the source of the laserdisc and the source coming from that camera looking at the SGI image only which was just vectors, and there was a way of merging them that Ray had figured out – those two signals colliding and they actually overlapped, as if one was keyed over the other. To this day all I remember is that I had to plug this box into that box and then on another monitor that was smaller – a video monitor – I could look at the two images combined and hand that over to a technical director.

I got a team of guys together – George Karl, Rich Cowen, Tom Hutchinson – who were hands-on dealing with the film or the video we had and giving me tests constantly to look at. We broke it up into shots and numbers of characters. Luckily the piece that we had the film for had the most characters in it. So multiple guys could be doing those characters and getting that shot done. The guys were doing take after take after take and then what we had to do to see it in the cut, was we had to take the whole sequence, take it over to another camera that had a light box on it, and basically you’d bipack a black and white version of the shot – the whole sequence cut together.

You’d take that – it’s called a printback really – you’d expose that to the light so you’re actually making a contact print onto raw stock from the negative of the image, so that you get a positive of the edit of the whole sequence. You hold that latent in the magazine with a zero point that’s 100 frames out from the first frame, and you take it and load it onto the SGI and you shoot the skeleton wireframe sync’d perfectly per shot so that you’re doing a second exposure over the 11 shots.

Then you take that back and put it through a processor and then after you’ve done that – which takes about 30 minutes – you’re able to have a dry piece of black and white film that has the level of animation for each of those shots for each of those characters burned into the same piece of film as the footage that was the sequence. So you could play that back on a Moviola and see how you were doing. You’d have a black skeleton running across into an x-ray looking reverse image of the live action footage. We did between 15 and 21 tests.

Once we had the animation finalled we’d render every shot, the look we had gotten with Paul – the right blue/green color which made it look like a screen x-ray. That negative was processed and taken onto Dream Quest. They would do a film optical, and we’d see it in full final quality.

BREVIG: The elements they gave us which we then optically composited into the footage were exactly the performances that the actors were doing that were hidden from camera.

McGOVERN: The thing is, to get the final images you have to run a final optical maybe four or five times through the optical printer. With opticals the more times you do another take, the more dirt they pick up or they get scratched and that shows up in the effect. The shots were ready to be done and my producer George Merkert saw it and we realized we needed to make new elements. But the budget had really been blown by that point. George and I heard between us it was going to be $5,000 to run a new optical again, so we both came up to the VFX producer of the movie, B.J. Rack, and said we would pitch in and split the optical and we’ll pay for it. She looked at us, and she was kind of moved by that. But she managed to make it work and we didn’t have to pay for it. They ran it again and it’s beautiful.

BREVIG: Because I had them give us just the lit images on a black background it made the compositing, other than rotoscoping foreground characters, extremely clean. So we were just adding glows to it and so forth. So it was re-creating exactly what happens if – the idea was that it was a giant cathode ray tube or x-ray machine in which you would only expect to see these glowing outlines projected on the black screen. The trick was trying to make it all look like it was in camera. They gave us the images and we perspectivized it and tracked it to the dollying cameras when we composited it.

Seeing double

BREVIG: Although the equipment was still fairly primitive, we were able to use a real-time motion control dolly rig that Dream Quest had built which gave us the ability to shoot Arnold as a hologram in a moving camera shot, and then shoot repeat passes for an empty background as well as him playing a second character in the same shot.

The equipment performed perfectly, but I remember that the video assist person, whose workspace was not on the set with us, had a hard time figuring out how to trigger the camera halfway through one very long shot. We were in an abandoned cement factory location and we were shooting at about three in the morning, and the system went down for several minutes. With a very unhappy Arnold glaring at me for holding up his shot, it seemed more like several hours until we got the word he had solved his problem. Luckily we got the shot quickly and Arnold was fine about it after that.

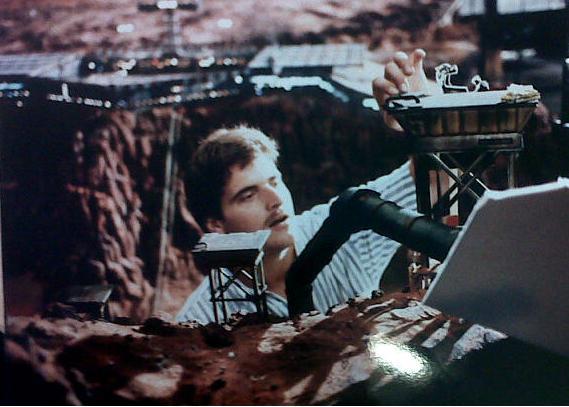

The age of miniatures and ‘smokey mattes’

FUNKE: The motion control rig had been completely designed at Dream Quest. It was beautifully accurate. To move it they used a ladder belt, which I think nobody else seems to use anymore, but it was a precision steel belt encased in plastic. That was tensioned in the track and then it ran into a thing called the Omega driver which was basically a big sprocket that it runs over, which was attached to the dolly. The cameras were standard Mitchells or converted reflex Mitchells.

Our visual effects plates were shot in VistaVision. That was because as you go through the optical process, at every step you lose and lose and lose quality – and it’s unavoidable. So the best thing to do is start with the biggest possible negative. Even today working on projects – some of the guys with roots in this technology say, ‘Man if only we could shoot this in VistaVision!’

BREVIG: What we did in particular for the miniatures was we spent a lot of time figuring out new techniques for how to use photographic passes – different exposures – to give the miniature work the look of something that was more sophisticated than just a bunch of models shot in smoke.

FUNKE: The issue we had to deal with is how do you create this sense of great distance even though the back wall of the set is only 20 feet away? Normally for an Earth-bound miniature you shoot in smoke. But of course since there’s no atmosphere on Mars, you can’t just shoot in smoke. So we thought about that for a while and we thought, ‘Why don’t we shoot the landscape miniature in clean air and then shoot a matte for the back edge?’ – which you would do anyway. Then shoot a smoke pass and a matte pass of the smoke – this was the trick.

BREVIG: These ‘smokey mattes’ gave us completely authentic fall-off, natural graduation from near to far of what the atmosphere in the room was doing. Essentially it’s a z-depth pass done photographically. We could then take the elements of the background, which were usually matte paintings, and use that essentially as a dynamic hold-out. And that way the look would be photographic although it was a composite, but there was no sense that there was a matte line or a hard edge because it was an organically designed matte pass.

FUNKE: If you shoot a matte pass of the smoke, essentially it has a built in soft edge. So effectively you can have the foreground image as sharp as it should be, but where it starts to peter out at the back edge you can use this smokey matte to slightly diffuse everything that’s behind the matte. So you effectively create a kind of aerial perspective even though there’s no actual air to create. It worked extremely well – there’s still a struggle in the optical department because there’s a balance you have to reach between the hard edge matte and the smokey matte. But they had a terrific optical department and they pulled that off.
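
In digital terms, the smokey matte behaves like a per-pixel blend weight that grows with distance. The following is a minimal Python sketch of that idea, not a description of Dream Quest's optical line-up: the function names, the 0-to-1 value range and the way the two mattes are multiplied together are all assumptions made for illustration.

```python
def composite_pixel(fg, bg, hard_matte, smoke_matte):
    """Blend one pixel of miniature (fg) over background painting (bg).

    All values are floats in 0..1 (assumed convention):
      hard_matte  - 1 where the miniature covers the pixel, 0 past its back edge
      smoke_matte - 0 near the camera, rising toward 1 with distance,
                    standing in for the density seen in the smoke matte pass
    """
    # The miniature keeps full strength up close and fades with "distance";
    # past the hard back edge only the background shows.
    alpha = hard_matte * (1.0 - smoke_matte)
    return fg * alpha + bg * (1.0 - alpha)


def composite_row(fg_row, bg_row, hard_row, smoke_row):
    """Apply composite_pixel across one scanline of values."""
    return [
        composite_pixel(f, b, h, s)
        for f, b, h, s in zip(fg_row, bg_row, hard_row, smoke_row)
    ]


# Hypothetical scanline: a bright miniature over a dark sky,
# with smoke density rising toward the back of the set.
row = composite_row(
    fg_row=[1.0, 1.0, 1.0, 1.0],
    bg_row=[0.0, 0.0, 0.0, 0.0],
    hard_row=[1.0, 1.0, 1.0, 0.0],
    smoke_row=[0.0, 0.25, 0.5, 0.5],
)
print(row)
```

Because the soft weight reaches the hard edge already partly faded, the transition into the background has no visible line, which is the effect Brevig and Funke describe.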

BREVIG: I had to pitch it to the compositing team, and they just looked at me saying, ‘Are you out of your mind?’ I said, ‘No, no, let’s try it.’ They tried it and they got it and thought it was great. We then did that on everything and it also gave us tricky ways to deal with blue spill and reflections on the sets. I brought giant rear lit bluescreens down to Mexico where we shot. We put them behind the live action sets. What I didn’t know was the production design was going to be black, glossy surfaces everywhere. So for a while there I was really concerned that we were just going to be buried in unwanted reflections of all the bluescreens. So we tried a new idea which was to take essentially the blue record and fabricate a color difference matte that gave us just the reflections as a separate layer. Then by putting the color or imagery from the background elements through those, they very nicely showed up every place that reflections should have showed up. So we essentially turned an unwanted blue spill into a desirable martian red spill.
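
The reflection trick can be approximated in a few lines of Python. This is a hedged sketch using the textbook color difference formula (blue minus the larger of red and green); the exact optical recipe Dream Quest used is not given in the interview, and the pixel values and function names here are hypothetical.

```python
def reflection_matte(r, g, b):
    """Color difference matte: how much blue exceeds the other two records."""
    return max(0.0, b - max(r, g))


def replace_spill(pixel, background):
    """Swap unwanted blue reflection for background color in one RGB pixel.

    pixel and background are (r, g, b) tuples of floats in 0..1.
    """
    r, g, b = pixel
    matte = reflection_matte(r, g, b)
    # Suppress the excess blue so the glossy surface returns to neutral.
    b_clean = min(b, max(r, g))
    bg_r, bg_g, bg_b = background
    # Add the background imagery back in through the reflection matte.
    return (
        min(1.0, r + bg_r * matte),
        min(1.0, g + bg_g * matte),
        min(1.0, b_clean + bg_b * matte),
    )


# Hypothetical glossy black set surface picking up bluescreen spill,
# and a hypothetical martian red from the background element.
spill_pixel = (0.1, 0.1, 0.6)
mars_red = (0.8, 0.2, 0.1)
fixed = replace_spill(spill_pixel, mars_red)
print(fixed)
```

A pixel with no excess blue produces a zero matte and passes through unchanged, so only the reflective areas pick up the red.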

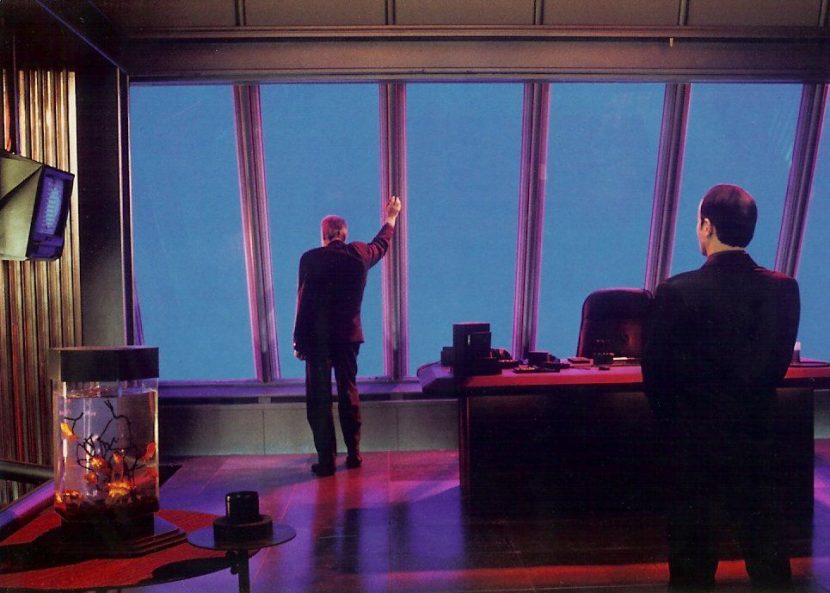

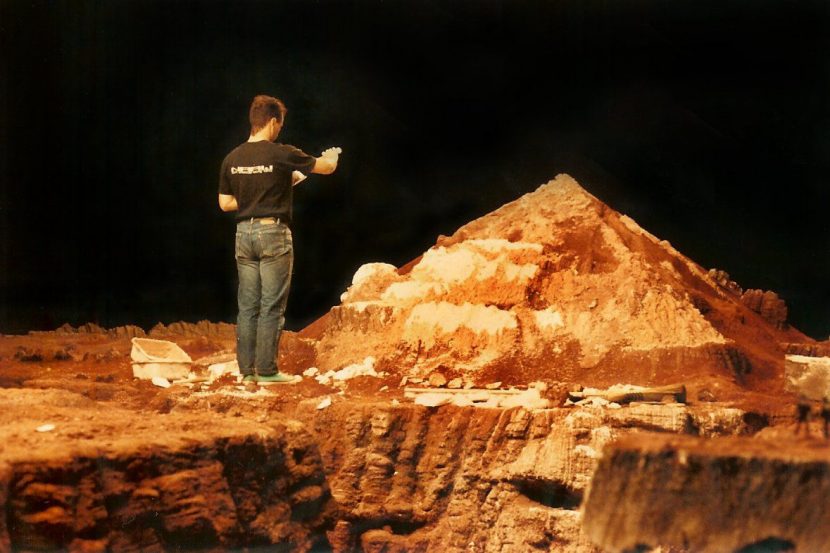

Next stop, Mars

FUNKE: This was shot DQ43 – I remember it well. It even has number 43 on the side as an in-joke. It starts with Arnold and the burly miner in the window and then we pull out even further, even wider as the train goes into the tunnel. Then we follow this and go up over the cliff to reveal the landscape.

BREVIG: That shot was insane! What made it possible was us not knowing not to try it. It was storyboarded and then we just divided it into the equivalent of multiplane layers. We knew we could use progressively smaller and smaller miniatures as you moved away from the camera, as long as you layered them in. For example, for our foreground miniature – the train you see – I shot Arnold and some extras in a train set, and then we rear projected that into a little model car using a miniature projector created for The Abyss to put the characters in the subs.

FUNKE: Because the train’s moving, we couldn’t make the shot long enough, there wasn’t enough train track. So we decided to put some things along the side of the track – some telephone poles, basically, and objects. We’ll put them on a separate motion control track – so here we are on the close shot of Arnold and the miner in the window and pull back a little bit. The things next to the track go by zip zip zip – nothing’s moving, the train’s not moving, the camera’s pulling back but these things are zipping by as if they’re moving. Now we start the train and camera moving – you’ve created the illusion you’re moving when you weren’t and now you can do the move.

BREVIG: For the furthest mountains the camera’s barely crawling as it’s dollying left to right in that shot, whereas for the closest model layer the camera is really zipping along. We really had to put a precisely located mountain that blocks the lens for a moment so we could transition to another scale.

– In this segment from Berton Pierce’s ‘Sense of Scale’ doco, hear from modelmakers Mark Stetson, Tom Griep and Ian Hunter.

FUNKE: So we follow the train into the tunnel. There’s a weird thing – a slide there because we’re moving forward but somehow we’ve got to get 90 degrees to look over the landscape. It goes over an edge of the cliff and then it goes up to an intermediate cliff that we built – it’s a three layer thing. We go off an edge of that into the landscape, the reason being that the scales are all different. The scale of the landscape is way smaller than the scale of the train. So you have to keep the scales all isolated, which you do by filming a foreground piece of the matte. And then you have to match all these camera moves!

BREVIG: We knew the techniques would all work individually, but I think what was gutsy was putting them all into one shot that just went on and on and on, especially because it was all photography and mechanically created, you don’t have the luxury of what we do now and going back and tweaking the first piece of a scene to make the end of the shot look better. You have to commit as you go and hope that by the time you’re done shooting that maybe three weeks later when you shoot a live action element that it’s all going to fit with your cunning plan from the beginning.

FUNKE: I think it was one of the most successful shots in the movie because you’re creating the illusion of motion in the movie at that point. If you were shooting it for real, would you keep the camera still? No. That shot was actually based on the last shot in the film In the Heat of the Night with Sidney Poitier where you start on a close-up of Sidney looking through the window of the train, and then it pulls back and cranes up. It’s one of my favorite shots in all of cinema.

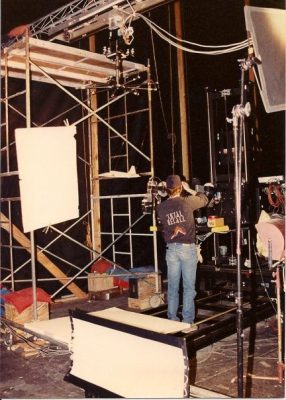

Open your mind…to four different miniatures

– Watch the ‘Open your mind’ clip.

FUNKE: Kuato says to Arnold’s character, ‘Open your mind’ and reads Arnold’s mind and then tells him about the alien reactor. You’re at a high angle and you come rapidly down to a big excavation and back up to the bridge where the bad guys are walking.

BREVIG: The idea was the camera had to be able to fly around untethered and then we would incorporate that into the footage of the backgrounds.

FUNKE: There’s actually about four miniatures and one live action piece all put together. After you push into Arnold’s eye, then you’ve got this bay and then a really big miniature built on its side – it was Mark Stetson’s idea to do that so we can get more height. It’s tricky to do because with these long columns, no matter how strong they are, you’ve still got to keep them from sagging. You push in through the long shot – we had to build a special Mitchell camera with nothing sticking out – it had a probe lens to get between the columns.

It wasn’t in the original story but we had to do a hookup because we had the dive down and up again, but there was no ceiling, so we had to dive down to that big excavation and then as it’s down in that excavation, we do a transition to a whole brand new set which is a set with a ceiling. Now we’re pushing up past the reactor rods, and then that’s coming up to the bridge which is another scale miniature. We had this larger bridge that was shot separately and we were rushing up towards that, and that hooks up to a live action shot of a camera moving along with the guys walking on it and that was shot in Mexico City.

BREVIG: We had to communicate the vast scale of this completely fantasy concept. It’s as much a problem today as it was then. When you have a fantastic concept – meaning unbelievable, it’s a fantasy, audiences are initially not going to want to believe that it’s real. So what I always do, is put something in the shot to overcompensate and make it believable. That meant having a live action component so you could see little human beings in that environment which helps make it credible and understand the scale.

These are buildings the size of the Empire State Building in rows of 8×8 supposedly moving down into the subterranean ice. The whole idea is so far fetched that you have to go out of your way to say, ‘No, look at the tiny people.’ The other thing I would do is try and keep the camera moving because parallax allows you to judge the scale of things. If you have characters in the foreground looking at something and the camera’s not moving, you don’t know if that’s wallpaper six feet in front of them, or a distant mountain. So similarly by always having the camera, even if just creeping sideways, you can understand the scale and the distance of what’s in the shot.

FUNKE: This was a major thing to line up, but the Dream Quest motion control software was quite good at doing scale adjustments. You could say, ‘We’re going from a 200th scale miniature of the actual interior of the mine to a 24th scale of the bridge,’ and it was able to quite effectively do the conversions of the speed. But it was still a hard thing to stitch together! These days you could tweak it and push it around but back then it had to fit together the first time. Well, not the first time, but probably the 20th time anyway…
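
The scale conversion Funke describes comes down to keeping the full-size move constant while the miniature changes. A rough Python sketch under stated assumptions: the position values and function names are hypothetical, the scales are the ones quoted above, and the real Dream Quest software is not documented here.

```python
def rescale_move(positions, from_denominator, to_denominator):
    """Convert camera positions planned for a 1:from_denominator miniature
    into positions for a 1:to_denominator miniature, so that both represent
    the same full-size camera move."""
    factor = from_denominator / to_denominator
    return [p * factor for p in positions]


def per_frame_travel(positions):
    """Distance the camera covers between consecutive frames."""
    return [b - a for a, b in zip(positions, positions[1:])]


# Hypothetical track positions in inches, one per frame, on the 1:200 mine.
mine_move = [0.0, 0.6, 1.2, 1.8]

# The same move on the 1:24 bridge needs 200/24 times more travel per frame.
bridge_move = rescale_move(mine_move, 200, 24)

print(per_frame_travel(mine_move))
print(per_frame_travel(bridge_move))
```

In this example 0.6 inches per frame at 1:200 stands for 10 feet of full-size travel, which becomes about 5 inches per frame at 1:24, so the larger miniature needs a much faster rig move to hook up.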

BREVIG: To matchmove a shot like that today, you would have guides in there and track it in post and make the world CG around it and it would all fit together nicely. But because we were tying in miniatures, we had to have some way of matching the two photographic elements together. A motion control system didn’t exist that would allow you to do big crane shots. So what I did was I figured out if we used witness cameras at various orthographic positions on the sound stage and I put retro reflective markers on the movie camera and basically made a grid on the floor for the downward looking witness camera and on the wall for the sideways looking camera, that both of those if we photographed in sync with the film camera, would give us the two axes of motion of our movie camera while it was in photography. And then we could fairly easily extract, i.e. matchmove, by analyzing the footage from the two witness cameras.
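
One way to picture the witness camera extraction is as two flat views combined into a single path. The Python sketch below is an idealized illustration only: it assumes perfectly orthographic views, a linear pixel-to-grid calibration, a top view that reads floor position (x, y) and a side view that reads (x, z). None of those details, nor the sample numbers, come from the production.

```python
def pixel_to_grid(pixel, origin_pixel, units_per_pixel):
    """Map a marker's image position to grid units, assuming a linear scale."""
    return (
        (pixel[0] - origin_pixel[0]) * units_per_pixel,
        (pixel[1] - origin_pixel[1]) * units_per_pixel,
    )


def camera_path(top_track, side_track, top_cal, side_cal):
    """Combine per-frame marker positions from two synced witness views.

    top_track / side_track: lists of (px, py) marker positions, one per frame.
    top_cal / side_cal: (origin_pixel, units_per_pixel) for each view.
    Returns a list of (x, y, z) positions for the movie camera.
    """
    path = []
    for top_px, side_px in zip(top_track, side_track):
        x_top, y = pixel_to_grid(top_px, *top_cal)
        x_side, z = pixel_to_grid(side_px, *side_cal)
        # Both views see travel along x, so average the two readings.
        path.append(((x_top + x_side) / 2.0, y, z))
    return path


# Hypothetical calibration: grid origin in each image, and 0.5 feet per pixel.
top_cal = ((100, 100), 0.5)
side_cal = ((100, 200), 0.5)

path = camera_path(
    top_track=[(100, 100), (120, 110)],
    side_track=[(100, 200), (120, 220)],
    top_cal=top_cal,
    side_cal=side_cal,
)
print(path)
```

The floor and wall grids play the role of the calibration here: they give each witness view a known scale to measure the marker against.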

FUNKE: Tracking shots were always a challenge. Mostly they were motion control moves, but the only way to test it is to shoot on black and white high contrast film and then bipack it into the Moviola to see if the miniature is tracking with the live action. Not even video assist was available then. We forget how many advantages we have now doing this kind of thing.

So every time you thought what you got was a good line up – it also involved cutting clips out of a print and putting them into the viewfinder to check line-ups – but because you’re looking at the clips you can’t see very well. We called it RAR because there was a type of Kodak film called Rapid Access Recording which was designed for photographing explosions. We could develop it right away in a Kodak Prostar machine. You load up a RAR, you shoot a pass, then you put it through the Prostar and then you bipack it with the print and see if it was working. You then go in and tweak the motion control until you finally got a match. It was a very tedious process.

Give those people air!

FUNKE: We did the reactor activation shots all practically at different miniature scales. The rods crash through the ice and it breaks it and all the steam comes out. That was a row of sheet metal ducting. That had lots of lights inside of it, because we had to really overcrank it. It had lots of holes cut in it and then it was wrapped in a shell to create that strange mottled look that the rods have, so the light could be dialed in. That’s shot realtime overcranked. And then it’s liquid nitrogen pumped in there. For several weeks we were the largest users of liquid nitrogen in Los Angeles County. Every day a truck with new flasks of liquid nitrogen would come out. When you’re shooting with that it’s like, open the valve and it blasts out! That’ll empty one of those flasks in about a minute.

There were model guys all around it pushing strings and levers to make it happen. In order to do the longer shots to see the rods pushing down, that had to be shot high speed but we couldn’t get them bright enough at the scale that the rods had to be built. So I fooled around a bit with Scotchlite. I figured that we could make the holes – you dress the columns with a rusty corroded look, then have ragged edge pieces of Scotchlite placed on the outside – then by projecting into a half-silvered mirror with a very powerful projector we could project the color onto it. So effectively the hot light that’s supposedly in these columns, that’s actually a reflection in the Scotchlite of the color pack we had in the projector – the biggest Xenon stills projector we could get. There are numerous flaws in that particular shot – for good reason, because the ones that are in front are brighter than the ones in the back so we had to put thin strips of neutral density on the beam splitter – the half silvered mirror. It’s the voodoo you have to do to make it work!

Under each of the places that the rods are pushing in there’s half a dozen spot par lights – a thousand watt pars shining up through gels, then there’s all the shots of steam and nitrogen blasting in. Everything happens at once – it’s very exciting because the camera’s running high speed and it’s go! And everything comes crashing down. The timing was tricky because the impact has to initiate the smoke, but you have to lead the impact slightly so the nitrogen has time to get there.

Nitrogen is wonderful stuff but it’s not very directable. If you want to make a flow of nitrogen it’s great, but the moment it has to appear, it doesn’t like that. That made it more like a performance piece where you have a lot of people having to operate and work together to get an accurate timing.

We did a count – it was a trick I use a lot. You record it – so you say 1 and 2 and 3 and 4. Then you play it back so that everybody hears the same count and knows between the 2 and 3 that I need to open this valve, for example. It really works because everyone’s on the same timeline.

For the final mountain exploding shot, we had the actual miniature mountain – which was huge – about 30 feet high – but in order to shoot it we had to go to the Agricultural Hall no 5 at the Ventura County fairground. It had been a blimp hangar, and they somehow motor’d there. It was enormous! We had to go there so we had enough height to put a backing up and room for the blast coming out the top. Bob Primes shot that for me.

It was loaded up with detonating cord and mortars. The det cord is laced in and shatters the top at the same time the air mortars loaded with gravel fire. If you put a high explosive in there, all you do is blow it out. So you need to have the control in there to have the direction and aim of all the chunks. And the det cord lets you cut the thing exactly where you want it. Because it was really blowing up, it always had a very visceral quality to it which is hard to achieve any other way than blowing up a real mountain.

They also used A and B smoke which was a combined smoke chemical. The two liquids are inert until they come together and then they instantly produce a gigantic volume of smoke. We put one of the liquid components on a lot of the stuff that would get blown out and the other component on areas of the mountain. So as these were blown out, boom! there would be a blast of smoke. It’s horrible stuff to work with so all the guys from Stetsons had made these samurai capes – the stuff was so nasty so they had taken black plastic polyethylene sheeting and built these extra protective coats.

– Watch a clip from the final sequence of the film.

You can see a live action element of Arnold and Rachel Ticotin lying on the side of the mountain and behind them the jets are blasting. We did that by projection – we did a high speed shot of the blasting of the nitrogen, then a motion control shot using – we put a little piece of white water color paper, another trick we used a lot – into the set and then onto that piece as a projection screen we projected the live action plate.

We did the same thing when all the air is established and the people walk out through the broken window and stare up at the sky. After we shot the landscape miniature, we put in a little projection screen made of very flat water color paper – which is almost a perfect diffuse reflector, it’s not directional at all – so essentially when you’re projecting on it, even though you’re projecting onto the little piece of card, you can look at an angle and you don’t lose any brightness. That’s the whole trick.

The magic of matte paintings

BREVIG: We had great matte artists on this show, including Jesse Silver and Bob Scifo. There are two tricks to matte paintings which apply as much now as they did then. Use them in a smart way – don’t make them carry the weight of the entire shot. Help them out with live action elements, atmospheric elements, things like that. We’d photograph a scene knowing there would be a matte painting, but we’d also shoot little elements – tiny people doing something, smoke elements, lights blinking – it will look less like a painting if you see things moving. Those are the things that help keep them from being perceived as somewhat artificial. The way humans identify what they’re looking at is they think back to what does it look like that I’ve already seen before that looked like that. The trick is to not make matte paintings look like a painting. Make them look like a photographic record of something that’s real. We’d try and do that in every shot.

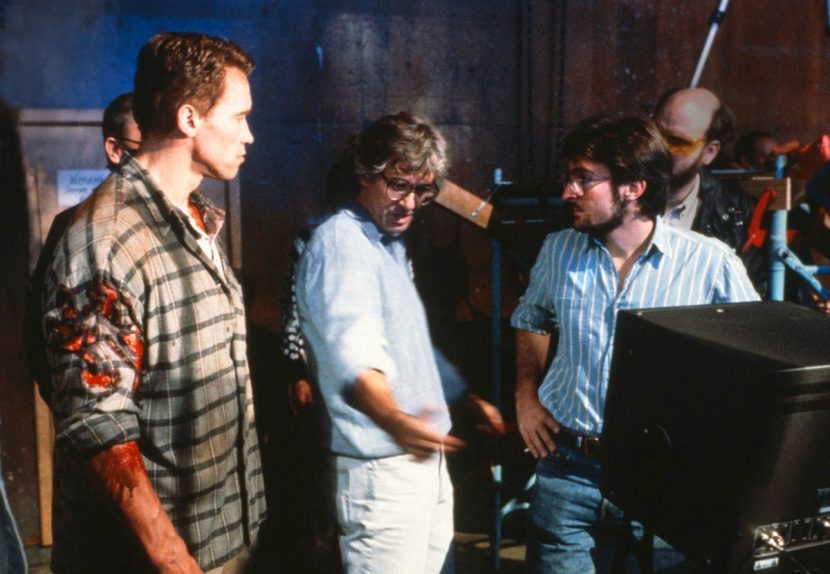

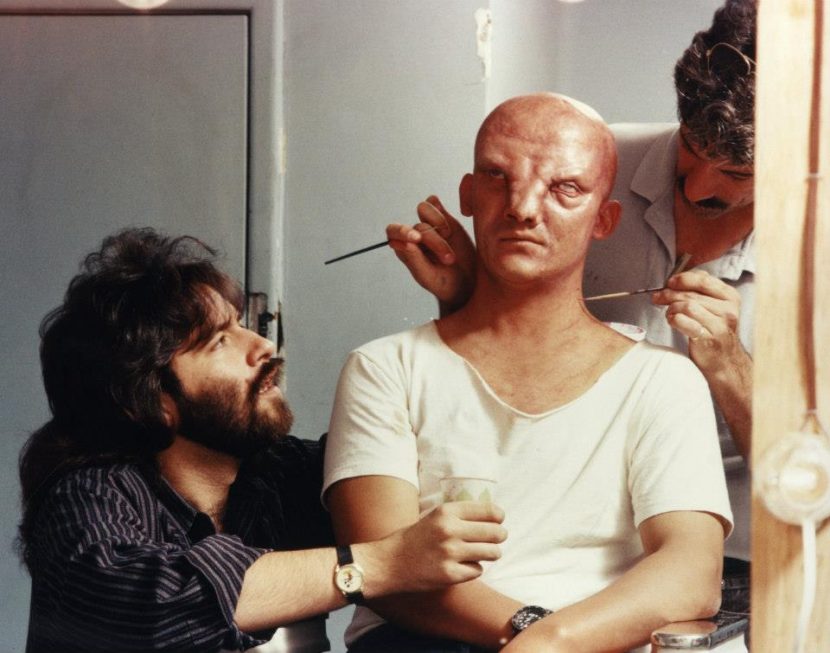

The brilliant Rob Bottin

BREVIG: We worked with Rob in that there were a lot of scenes where there were shared techniques. If we were having actors falling out into the Mars atmosphere and ballooning up we were doing the photography with the actors and environments and then the close-ups of the prosthetics would be done as inserts. One great thing about Rob’s work was that it didn’t need post fixing. He just worked with the tools he had until it looked great, and it was essentially an insert shoot when it was photographed. So we didn’t have a lot of hands-on participation in terms of what he was doing, except that we both collaborated where we would take it.

Recall: looking back

BREVIG: At that time the tools didn’t exist in the computer, so we had to work out real-world solutions. It’s very easy to just default to a piece of software to solve a problem. I really enjoy working with other people who are still open to sweetening shots using photographic elements. I think that you can still tell. Actual photography really does look good and I think it’s important to keep that in mind. I think you can sense it. It’s a really subtle difference, because there is awesome CG work being done. Probably the best thing is when you actually can’t tell how it’s done, then it usually means you’re on the right track.

FUNKE: At that time when we were doing films this way, if you were still doing commercials, you’d go into the video effects suite and do all this cool stuff and say, ‘Man I wish we could do this in film!’ That’s what probably drove part of the digital revolution because the film guys were green with envy that the video guys could do all the color correction, re-positioning and everything. But I’m very proud of the work and the Oscar we won.

BREVIG: Winning the Oscar was extremely surprising. They announced it in advance of the awards show that year – I didn’t know that ever happened! It meant that we could prepare emotionally for the award. I knew at the Bake Off we had a good reel. There were awesome other films that year but we were fortunate to win the award.

McGOVERN: I remember I got home from MetroLight and I get a call from my mother-in-law who said the Academy called and you won. I go, ‘You can’t win! You get nominated.’ She said, ‘No, it’s some sort of special award.’ So when everyone got an Oscar nomination, we got an IOU for an Oscar – I still have it.

Whatever happened to Rob Bottin?