It can be easy when looking at MODO to focus on its modeling tools and lose sight of how cutting edge the included MODO 901 renderer is and how it has developed over time.

Before MODO was with The Foundry, and before it was the core of Luxology, Allen Hastings in 1988 started developing the LightWave 3D renderer. It went on to become very successful in VFX for television and film (Babylon 5, Titanic, and Battlestar Galactica). So when Stuart Ferguson, Brad Peebler and Hastings founded Luxology in 2002, their original plan was to develop a new renderer based on the same approach as LightWave, an accumulation buffer algorithm but with some enhancements such as using buckets for better multithreading.

However, this plan changed in July 2003 “after Brad and I visited DNA Studios in Dallas,” recalls Hastings. “They had used LightWave on Jimmy Neutron but were not confident in its ability to deal with their next film project, The Ant Bully. In particular they needed micropolygon displacement mapping, improved anti-aliasing and motion blur, and very large scene handling, and they were leaning toward switching to Pixar’s PRMan. Before doing so they asked if we thought a ‘moon shot’ effort on our part could make our new renderer a viable alternative.”

This required a whole new approach and Hastings spent the next few months reading SIGGRAPH papers and brainstorming new ideas, and by October of 2003 he had a brand new renderer working that included many of the capabilities DNA had requested. “At that time it was not yet complete enough to use in their film, but it gave Luxology a great foundation for the renderer that was eventually released as part of MODO.”

The newest version of this renderer has just been released as part of MODO 901. The renderer is bundled with the software, but it is not an add-on afterthought: major innovations have made it both high quality and significantly faster. MODO has, in fact, always pushed the rendering envelope for its customers.

While it was more than three years after that initial ‘moon shot’, MODO was demonstrated at SIGGRAPH 2004 and released in September of the same year. The most unusual aspect of the new renderer was a deferred shading architecture that allowed it to compute anti-aliasing and shading at different rates, one of the strengths of PRMan, but something that few other renderers could do. REYES-based renderers do this by first dicing geometry into micropolygons, then shading at their vertices, and finally computing visibility at sample points within each pixel.

To avoid the need to dice everything and the wasted effort of shading points that might not turn out to be visible, the then-new MODO renderer inverted the process, first sampling visibility (without shading) at densely spaced points within each pixel for high quality anti-aliasing, motion blur, and depth of field. After all pixels in a bucket had been processed, a second phase identified sets of related samples (i.e., having the same material and similar surface normals and positions) and shaded them together. Depending on a user-specified shading rate, shading might only need to be computed once per pixel no matter how many visibility samples were within the pixel. Several years later this “decoupled sampling” idea was independently proposed by other researchers, who were probably not aware that the commercially available MODO renderer had already been using it for a few years.
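The two-phase idea can be illustrated with a small Python sketch. It is only a toy under stated assumptions, not MODO's code: the function name `render_bucket`, the `(pixel, material, normal)` sample layout, the `normal_step` quantization and the grayscale shader callback are all hypothetical, and positions are ignored for brevity.

```python
from collections import defaultdict

def render_bucket(samples, shade, normal_step=0.5):
    """Toy decoupled shading: many visibility samples, few shader calls.

    samples: unshaded visibility hits as (pixel, material, normal) tuples
             (hypothetical layout; phase 1 is assumed to have produced them).
    shade:   callback (material, normal) -> grayscale value.
    """
    # Group related samples: same pixel, same material, similar normal.
    groups = defaultdict(list)
    for pixel, material, normal in samples:
        quantized = tuple(round(c / normal_step) for c in normal)
        groups[(pixel, material, quantized)].append(normal)

    # Phase 2: shade once per group and weight by how many samples it covers.
    sums = defaultdict(float)
    counts = defaultdict(int)
    shader_calls = 0
    for (pixel, material, _), normals in groups.items():
        value = shade(material, normals[0])  # one representative sample
        shader_calls += 1
        sums[pixel] += value * len(normals)
        counts[pixel] += len(normals)

    image = {pixel: sums[pixel] / counts[pixel] for pixel in sums}
    return image, shader_calls
```

With 16 visibility samples in a pixel that cover two materials, the shader runs twice rather than 16 times, while the edge between the materials is still anti-aliased by the sample counts.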

The initial visibility samples within each pixel can be generated in various ways. “At first,” explains Hastings, “traditional polygon scan conversion was used for speed, but as ray tracing became more efficient it took over as the method of choice, providing new capabilities such as lens distortion and alternate camera projections. UV-space scan conversion (and in MODO 901, UV-space ray tracing) is also supported for baking texture maps.”

The same deferred shading mechanism worked for all these “front ends”, although there is also an interactive preview front end to the renderer that computes shading immediately rather than deferring it.

To handle scenes larger than could fit in memory, the MODO renderer supported geometry caching. At the start of rendering, all objects were represented as empty bounding boxes, and only when one of these boxes was hit by a ray did it get filled with geometry. Each object was divided into smaller parts called segments, and if displacement mapping was applied, these segments were diced into micropolygons when their boxes were first hit by a ray. If a user-specified memory limit was reached during the render, older segments could be flushed to make room for newly visible geometry.
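A minimal Python sketch of this kind of on-demand cache follows. The class name `SegmentCache`, the `load_segment` callback and the oldest-loaded-first flushing order are assumptions made for illustration; the article does not say how MODO chooses which segments to flush.

```python
from collections import OrderedDict

class SegmentCache:
    """Toy geometry cache: segments are filled on first hit, oldest flushed first."""

    def __init__(self, memory_limit, load_segment):
        self.memory_limit = memory_limit
        # Hypothetical callback: segment id -> (geometry, size in memory units).
        # Dicing into micropolygons would happen inside it.
        self.load_segment = load_segment
        self.segments = OrderedDict()  # id -> (geometry, size), oldest first
        self.used = 0

    def hit(self, segment_id):
        """Called when a ray hits the segment's bounding box."""
        if segment_id in self.segments:
            return self.segments[segment_id][0]
        geometry, size = self.load_segment(segment_id)
        # Flush the oldest segments until the new one fits under the limit.
        while self.segments and self.used + size > self.memory_limit:
            _, (_, old_size) = self.segments.popitem(last=False)
            self.used -= old_size
        self.segments[segment_id] = (geometry, size)
        self.used += size
        return geometry
```

A flushed segment is simply rebuilt if a later ray hits its box again, so the memory limit trades render time for the ability to handle scenes that do not fit in memory.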

“Other features supporting large scenes included manual instancing, automatic instancing (‘Replicators’), and multiple levels of detail for each object. Huge resolutions (up to 100K x 100K pixels) were supported by not allocating a full size frame buffer, but instead writing buckets to disk and then reading them back a row at a time to save the final image,” recalls Hastings, discussing the impact of the original version of MODO’s renderer.

Another trend that shaped the early evolution of the Luxology renderer was the introduction of more physically accurate renderers like Maxwell. “Although this seemingly contradicted our original goal of competing with PRMan, it was decided that the Luxology renderer should have such capabilities too,” says Hastings. “As a result it was designed to use radiometric units both internally and for specifying light sources, and a physically plausible BRDF with Fresnel was offered.” Other related features included absorption (obeying Beer’s Law) and subsurface scattering, and a choice of two global illumination methods (brute force and irradiance caching).

After the first public release of the renderer as part of modo 201 in 2006, new capabilities continued to be added. Some of the major ones over the years have been multiple render passes, volumetrics, caustics, hair and fur, photometric lights, stereoscopic rendering, network rendering, image processing, and support for plug-in shaders.

Probably the most significant “under the hood” change that affected users came with MODO 501, released in 2010. “First the ray tracing code was greatly accelerated by taking advantage of SIMD processing – similar to what Intel later offered in their Embree libraries,” notes Hastings. “Then a new sampling method was implemented that provided most of the desirable properties of low discrepancy sequences (such as faster convergence), but avoided noise regularity artifacts and QMC patent issues. MODO uses the 2D version of this method for effects like depth of field, soft shadows, and global illumination, and a 1D version is used for motion blur and dispersion (spectral sampling).”

MODO 901

The newest version of MODO’s renderer in 901 continues adding features, particularly in the area of materials and lighting.

A new physically based material shading model has been added, inspired by the “Disney Principled BRDF” presented by Brent Burley in 2012. It has actually always been ‘possible’ to perform physically based rendering in MODO, but users had to change several settings to do so. Turning on the Conserve Energy option, for example, caused MODO to switch from a traditional Blinn-Phong BRDF to the Ashikhmin-Shirley BRDF, which “can be thought of as a precursor to the Disney work in that it had the same goals of using simple parameters and not violating physical laws,” explains Hastings. In MODO’s implementation, some aspects of energy conservation were enforced, but users still had to specify matching reflection and specular amounts, reduce transparency to account for specular reflection, and manually enable blurry reflection.

The new shading model handles all these details automatically, and also provides a more natural specular profile known as GGX (or Trowbridge-Reitz) as well as a diffuse retro-reflection effect as roughness increases. Disney researchers found that these features allow the model to better match the appearance of real measured materials.
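For readers who want to see the GGX profile itself, the standard Trowbridge-Reitz normal distribution function is short enough to write out in Python. The parameter `alpha` is the usual width parameter of that published formula; how MODO maps its roughness control onto it is not stated here, so treat the mapping as unknown.

```python
import math

def ggx_ndf(n_dot_h, alpha):
    """GGX / Trowbridge-Reitz normal distribution function D(h).

    n_dot_h: cosine of the angle between the surface normal and half vector.
    alpha:   width parameter (larger means a rougher, broader highlight).
    """
    a2 = alpha * alpha
    d = n_dot_h * n_dot_h * (a2 - 1.0) + 1.0
    return a2 / (math.pi * d * d)
```

Compared with a Blinn-Phong lobe, this function falls off more slowly away from the peak, which is what gives GGX highlights their characteristic long tail.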

In 801 there was a separate diffuse roughness control and “we’d use that to cross fade between pure Lambertian diffuse and the Oren-Nayar diffuse shader model,” says Hastings. “For the newer 901 we have simply replaced the Oren-Nayar with the Disney version. Basically the new model just looks better, so it is now the default in MODO 901.”

Other material-related additions are the ability to control the glossiness of the clearcoat effect, and how much it should be affected by bump maps. Setting the latter to zero can be used to simulate smooth varnish on a bumpy tabletop, for example.

On the subject of lighting, one of the improvements is support for MIS (Multiple Importance Sampling), which is a way of combining different sampling strategies with the goal of reducing noise in shading. In this case the two strategies being combined are light-based sampling and BRDF-based sampling. (MODO 801 had offered MIS for environment lighting, but in 901 it is now available for direct lighting as well.)

The traditional way of sampling a light source is to choose random points on the surface of the light, determine how much of the light reaches the surface point being shaded, and multiply by the BRDF of the material. The BRDF is simply a function that describes how much light from a particular incoming direction (such as from a direct light source) reflects in a particular outgoing direction (such as toward the camera). This direct or light-based sampling works well in many situations, but has problems when the material is shiny and the light source is large, because it’s unlikely that a random point chosen on the light will fall within the narrow specular lobe of the BRDF. When that rare event does happen, the result can be a bright speck or “firefly”.

Another way to compute shading is to choose random directions proportional to the BRDF, and fire rays to see how bright the scene is in that direction. This indirect sampling is used for reflections and global illumination, but it can also be used for direct lighting if geometry (such as luminous polygons) is created to represent light sources. BRDF-based sampling works especially well when the material is shiny, but poorly when the material is rough and the light source is small, because it’s unlikely that a ray fired in a random direction within a broad specular lobe will hit a small bright light. Again fireflies can result when that happens.

The strengths and weaknesses of the two sampling strategies thus complement each other very well, so it makes sense to use both and blend the results, which is exactly what MIS does. In MIS, which comes from the work of Eric Veach (who won a Sci-Tech award in 2014 for his work on this, amongst other things), the results of each method are weighted in a way that avoids double-counting the lighting, and in a way that automatically suppresses rare events that cause fireflies (because it knows that the other method will work better in that situation). Since indirect rays were probably going to be fired anyway (for reflections or global illumination), enabling MIS doesn’t really cost much render time, and the benefit is often a significant reduction in shading noise. The creation of geometry to represent lights is also automatically handled whenever MIS is enabled, so it’s very easy to use. A nice byproduct is a new option to make direct light sources visible to the camera.
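The weighting can be shown on a toy problem in Python. This is a sketch of Veach's balance heuristic applied to a one-dimensional integral, not MODO's implementation: the integrand `f`, the two densities `pdf_a` and `pdf_b`, and the choice of the balance heuristic rather than another of Veach's weightings are all assumptions made for illustration.

```python
import math
import random

def f(x):
    """Integrand standing in for 'incoming light times BRDF'; integral on [0, 1] is 1/3."""
    return x * x

def pdf_a(x):
    """Strategy A: uniform sampling on (0, 1]."""
    return 1.0

def pdf_b(x):
    """Strategy B: density 2x on (0, 1], sampled as sqrt(u)."""
    return 2.0 * x

def mis_estimate(n, rng):
    total = 0.0
    for _ in range(n):
        # 1 - random() lies in (0, 1], which keeps pdf_b strictly positive.
        xa = 1.0 - rng.random()
        xb = math.sqrt(1.0 - rng.random())
        # Balance heuristic: each strategy is weighted by its share of the
        # combined density at the point it sampled.
        wa = pdf_a(xa) / (pdf_a(xa) + pdf_b(xa))
        wb = pdf_b(xb) / (pdf_a(xb) + pdf_b(xb))
        total += wa * f(xa) / pdf_a(xa) + wb * f(xb) / pdf_b(xb)
    return total / n
```

Because the two weights sum to one at every point, the lighting is not counted twice, and a sample that one strategy was very unlikely to produce receives a small weight instead of turning into a firefly.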

MIS produces much better and more accurate results, close to the best that can be achieved. In 901 it has been implemented for both diffuse and specular shading, in step with the move to area light sources, and in both cases it can greatly reduce visible noise in MODO renders. In earlier releases, the shading was computed by choosing “random sample points on the light source, determining if they are visible by firing shadow rays, then multiplying the incoming light by the surface’s BRDF,” commented Shane Griffith, MODO’s Product Marketing Manager. “Or, if the user enabled the light’s Visible to Reflection Rays option, its specular shading was handled as part of computing blurry reflections, which involves choosing random directions according to the BRDF and seeing if rays fired in those directions hit the light source.”

As fxguide first reported in April, both of these approaches are likely to be noisy compared to the same scene rendered with the new MIS approach for the same render effort. Users can activate MIS using the Direct Light MIS channel of the render item, which specifies whether to use MIS for diffuse shading, specular shading, both, or neither. As the renderer was already not slow, comparatively speaking, this new solution will be welcomed by users for both its speed and improved noise performance.

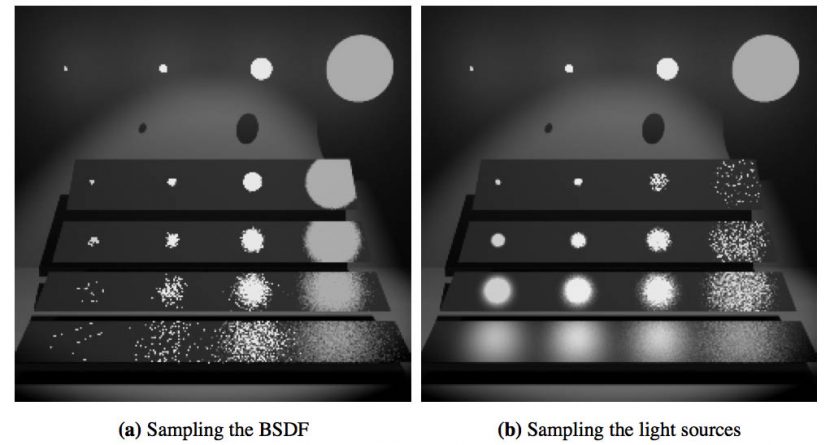

This image, from Veach’s 1997 thesis and from a paper he co-published just prior (Veach & Guibas, 1995), shows the problem and its solution via MIS. In the 1997 black and white original there are four area lights, varying in size by a factor of three from their neighbors (and varying in radiance by a factor of nine so that they each emit the same total energy).

(Note how in the Veach example, sampling the BSDF works for area lights and not tiny spots, and sampling favoring the lights does the opposite.)

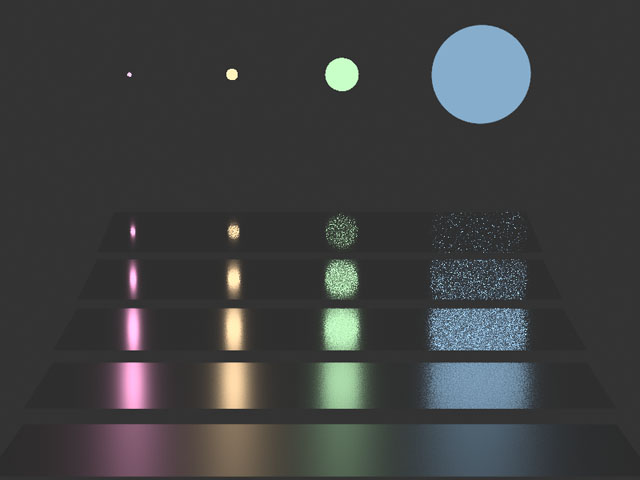

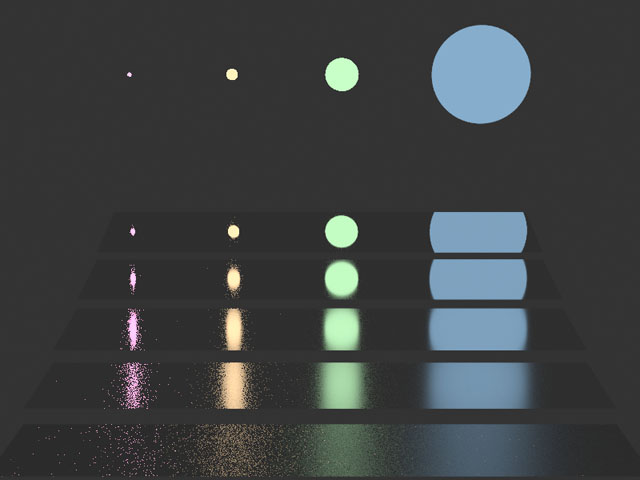

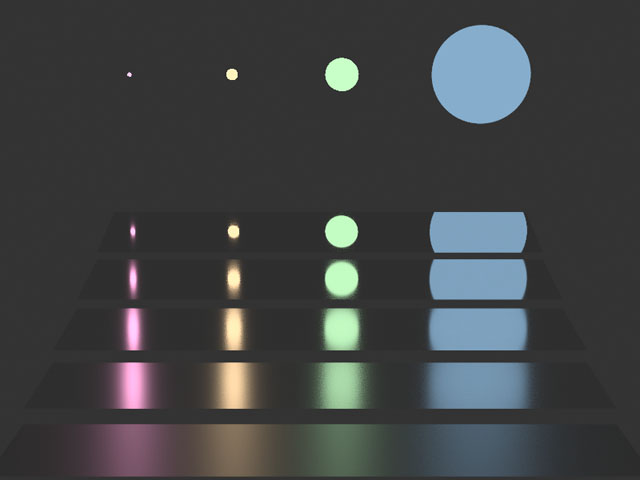

Below is MODO’s replication of this classic shot in three images each showing five rectangles using a highly specular GTR material with roughness settings of 2%, 4%, 8%, 16%, and 32%.

The first image uses traditional area light source sampling. This does not work well when the light is large and the roughness is low, because the chances are very small that a random sample on a large light source will fall within the very narrow specular lobe of a smooth, mirror-like BRDF. When such a rare event does happen, the result is an unnatural bright speck.

The second image shows what happens when the reverse is the case (again non-MIS): the lights are specified to be visible to reflection rays, direct specular shading is turned off and blurry reflections are used instead. This does not work well when the lights are small points and the roughness is high, as you can see below. This is because it’s unlikely that a random direction within the broad specular lobe of a rough BRDF will hit a tiny light source.

The MIS solution covers both cases. All three images were rendered in 901 and, interestingly, the render times are roughly the same for all three.

While the results are less dramatic for diffuse, MIS is also used for 901’s diffuse shading.

Another lighting improvement in 901 is a new way of determining how many direct samples are chosen on each light. Previously, users specified a separate sample count for each light source in the scene. These counts would be the same for every pixel, and although they would scale with the importance of the shading evaluation, there would never be fewer than one sample per light, limiting render speed in scenes with large numbers of lights.

The new method is controlled by a single light sample count for the entire scene. This “pool” of samples is adaptively allocated to the various lights with probabilities based on a quick estimate of their importance, which can vary for every shading evaluation. The estimate for each light accounts for factors like brightness, distance, and incidence angle, but not shadowing (since that requires firing rays, which is what we are trying to minimize). The sample count can actually be less than the number of lights while still maintaining unbiased results, because every light that can potentially contribute will have a nonzero probability of being sampled. The new adaptive sample allocation can greatly speed up scenes with large numbers of lights while at the same time reducing noise, and it improves ease of use by replacing many settings with just one.
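The reason fewer samples than lights can still be unbiased is easiest to see in code. The Python sketch below is a generic importance-sampling estimator, not MODO's implementation; the function name `sample_lights` and its arguments are hypothetical, and `contributions[i]` stands in for the expensive, shadow-ray-based evaluation of light `i`.

```python
import random

def sample_lights(contributions, importance, n_samples, rng):
    """Estimate the summed lighting from a shared pool of n_samples.

    contributions: true (expensive) contribution of each light at this point.
    importance:    cheap positive estimate per light (brightness, distance...).
    """
    total = sum(importance)
    probs = [w / total for w in importance]
    picks = rng.choices(range(len(probs)), weights=probs, k=n_samples)
    # Dividing by the selection probability keeps the estimate unbiased:
    # rarely chosen lights count for more on the occasions they are chosen.
    return sum(contributions[i] / probs[i] for i in picks) / n_samples
```

When the cheap importance estimate is proportional to the true contribution, every sample returns exactly the total, so even one or two samples give a noise-free answer. The worse the estimate (for example because of shadowing it cannot see), the more noise remains, but the average stays correct.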

In addition to the material and lighting improvements, two image processing features have been added. One is the ability to determine the exposure of the final image using the camera’s f-stop and shutter speed controls together with a new ISO setting. This is useful when trying to match live action: setting up the camera this way gives a base for matching the results of the footage from on set. Setting the white level now “is just so easy,” says Hastings, “you can interactively drag a slider and you are not affecting your motion blur or depth of field.”
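How the three controls combine can be sketched with the usual photographic relation, in which exposure scales with shutter time and ISO and inversely with the square of the f-number. The Python below assumes that relation and uses hypothetical function names; the article does not describe how MODO converts these settings into a white level.

```python
import math

def exposure_scale(f_stop, shutter_seconds, iso):
    """Relative image exposure under the standard photographic relation."""
    return shutter_seconds * iso / (f_stop * f_stop)

def stops_between(scale_a, scale_b):
    """Difference in stops between two exposures (positive: a is brighter)."""
    return math.log2(scale_a / scale_b)
```

For example, going from ISO 100 to ISO 200 at the same f-stop and shutter speed doubles the exposure, a one-stop change that leaves depth of field and motion blur untouched.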

The other change is the option to apply Reinhard tone mapping to the individual RGB components of each pixel, which some say gives a more natural look than the previous method of tone mapping the overall luminance of each pixel. Some tone mappings produce very unnatural colors compared to traditional photography. The new model avoids the sickly oversaturated ‘at the window’ look of many tone mapped solutions.
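The difference between the two modes can be seen with the simplest form of the Reinhard operator, x / (1 + x). The Python sketch below is an illustration only: the function names are hypothetical, the Rec. 709 luminance weights are an assumption, and MODO's exact operator and parameters are not given in this article.

```python
def reinhard(x):
    """Simplest Reinhard curve: compresses [0, inf) into [0, 1)."""
    return x / (1.0 + x)

def tone_map_per_channel(rgb):
    """Apply the curve to each RGB component independently."""
    return tuple(reinhard(c) for c in rgb)

def tone_map_luminance(rgb):
    """Apply the curve to luminance and rescale the color to match."""
    r, g, b = rgb
    lum = 0.2126 * r + 0.7152 * g + 0.0722 * b  # Rec. 709 weights (assumed)
    if lum == 0.0:
        return (0.0, 0.0, 0.0)
    scale = reinhard(lum) / lum
    return (r * scale, g * scale, b * scale)
```

For a bright, saturated pixel such as (4.0, 1.0, 0.25), the per-channel mode keeps every component below 1, while the luminance mode preserves the color ratios and can leave the red channel above 1, where it then has to be clipped.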

The new 901 also has Adaptive Light Sample Allocation. “In 901,” explained Brad Peebler, The Foundry’s President of the Americas, “the ALSA (adaptive light sample allocation) direct illumination method adaptively allocates samples from a global count to the lights in a scene based on its estimated contribution. This can result in faster renders with less noise and requires significantly less user input. Faster, cleaner and easier to use. ALSA is a triple threat feature.”

ALSA matters because of the number of lights often required in production shots. Massive numbers of lights slow the render, but with this new adaptive approach you can ignore the traditional per-light sample counts when there are multiple lights, reducing render time dramatically. Instead, each light’s probability of being sampled varies at every shading evaluation depending on factors such as the light’s brightness and distance. The main benefit becomes apparent with large numbers of direct light sources, where you can expect a very real reduction in both render time and noise. Hastings points to one customer whose car tunnel animation rendered ten times faster thanks to ALSA.

Gregory Duquesne, MODO product manager at The Foundry, points out that one of the big issues addressed in 901 rendering is scalability and the ease of rendering very large scenes. In earlier versions, MODO had some issues with large geometry sets and with very large textures. The new Deferred Mesh item lets you offload assets to disk, loading them only at render time; simple proxies or bounding boxes represent the item in the scene with minimal memory impact. Other complexity-related improvements are the ability to mass-convert scene image clips to tiled OpenEXRs, and better ways to expose only selected inputs in assemblies. The new OpenEXR support thus allows very high resolution textures to be rendered. On a related point, you can also render vector logos directly for use as decals or textures. When a badge or logo is added as a vector art texture element, “you have infinite resolution” in a very lightweight asset, points out Duquesne, “you can zoom in and out as much as you want and it is resolved at rendertime.” The vector artwork can be created in Adobe Illustrator or converted from a CAD file.

Progressive rendering improvements in 901 go well beyond the Preview that was possible in earlier versions of MODO. The new Viewport in 901 is hardware-accelerated and is used to manipulate geometry and materials in a real-time setting that accurately displays lighting and shadows, BRDF materials, reflections, gloss, screen-space ambient occlusion, high-quality transparency, anti-aliasing, supersampling and a number of 2D post-processing effects. The GPU functionality comes from The Foundry’s MARI team and is called Clear technology. It is not even in the current MARI GPU libraries yet, but will be in the next version of MARI.

What’s next? The team is very tight-lipped, but they are investigating specialist cameras for applications such as VR stereoscopic rendering. The whole Oculus Rift momentum has placed pressure on customers around the world to find good stereo VR solutions.

The Foundry will be showing the new MODO 901 at SIGGRAPH 2015 in LA from August 11th.