“Last year a new Japanese celebrity burst onto the scene. But ‘Saya’ was a different kind of star, because she is the product of a Tokyo computer lab,” wrote the BBC, discussing the virtual human Saya.

Saya is an evolving digital human project built primarily with off-the-shelf tools. Fxguide was keen to learn more about Saya, especially with the release last week of her video for Miss iD, an audition competition for real-world models. We spoke via a translator to ‘her parents’ (certainly her creators), the CG artists Teru and Yuka in Japan. The husband-and-wife duo work professionally as TELYUKA.

FXG: You initially began the Saya project to create a short movie. Is that film still happening?

TELYUKA: It initially started as a short movie project, but we are now thinking about different avenues for it. If there is an opportunity, we would definitely be interested in making Saya into a movie. But for now, we are focusing on raising Saya’s level of perfection. We recently released her PR movie.

This is the PR movie for Saya at the semi-finals of a project called “Miss iD”.

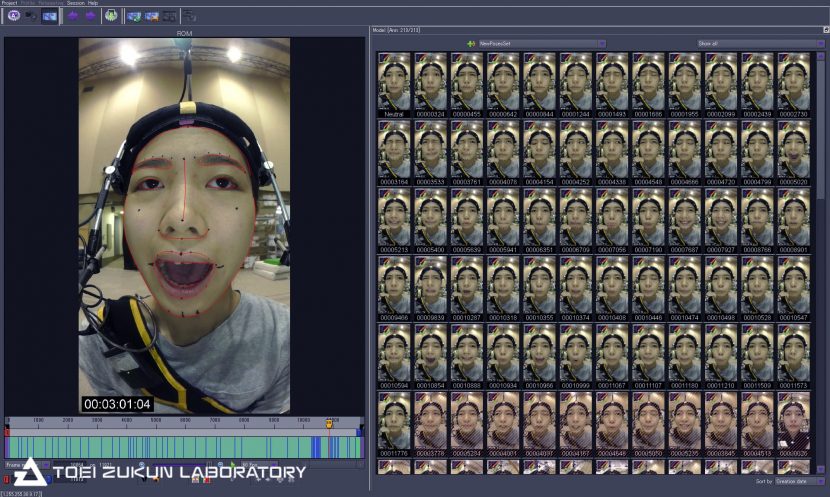

We model the assets and handle scene set-up, rendering and compositing for Saya. We are partnered with Toei Zukun Lab, who assist with performance capture, and with Logoscope, who handle color management and technical support.

FXG: Can you discuss the research that was happening at the Tokyo University of Agriculture and Technology Communications & Signal Processing Lab?

TELYUKA: Initially, we started working on Saya to enhance our own techniques, but when we published her still image, we received many offers of collaboration from a variety of industries. At that point we realized that we could potentially apply Saya’s expression in various fields.

Professor Sugiura of KEIO MEDIA DESIGN gave us valuable advice on Saya’s role and future development, helping us find a direction for that development using state-of-the-art technology.

One such example is the next generation “digital signage” utilizing Saya.

The professor advised us, saying, “Although touch-screen-based digital signage is used to help tourists search for destinations, it often seems uninteresting because it looks like a static box of information. It would be nice if even older people, foreigners and people who are not used to technology could use it easily by means of Saya, as if they were asking someone they know.”

During Fall 2016, we embarked on a challenge to render Saya on a SHARP 8K monitor. We collaborated with Professor Sugiura to see how realistically Saya can be displayed on an 8K monitor. As a future goal, we are aiming to enable the audience to communicate interactively with Saya. Right now we are researching the possibility of integrating Saya’s identity into society. We took part in “Miss iD” to examine people’s reactions when they see her alongside real girls. Can they catch the change in her mood? Can they imagine her in daily life? How much of people’s imagination does Saya trigger when they see her? We think these things are very important in creating a realistic human character.

FXG: Are there still plans to have her interact in VR? Or in a live engine such as Unity or UE4?

TELYUKA: We have been receiving requests to use Saya in various interactive projects. We would like her to interact not only through head-mounted displays in VR but also in AR and on other real-time platforms and devices. However, we are still researching engines, so we haven’t decided which one to use yet.

FXG: What is the current workflow for Saya?

TELYUKA: With Maya as our main platform, we also use ZBrush for modeling and MARI / Photoshop / Quixel for textures. As you can see, we use standard industry tools. (Please see the workflow below.)

FXG: Did you scan an actual girl or do photogrammetry?

TELYUKA: We did not use scanning or photogrammetric techniques. Saya is not a living person; she is a created character. Using existing photos as reference, we created her from the artists’ viewpoint, aiming for a teenager and a uniquely Japanese “kawaii” (cute) girl.

FXG: Is she fully rigged and, if so, is this a FACS rig?

TELYUKA: She is rigged to be able to perform a range of movements. Regarding FACS, during her initial conception we referred to the basic elements of its theory. However, after pursuing this further, we built the rigs using our own interpretation, so although it could be said to be similar in nature, we wouldn’t call it a complete FACS rig. Basic movements such as closing the eyes and lifting the eyebrows are created with a set-up using SkinBind and Joints. Additional elements such as folds and wrinkles are then made by copying the base object and applying additional effects, and finally adding these as blend shape targets.
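The layering described here, a base deformation with blend shape targets added on top for folds and wrinkles, can be illustrated with a minimal sketch. It is not TELYUKA's rig: the function name `apply_blend_shapes`, the target name `brow_wrinkle` and the two-vertex mesh are hypothetical, and the code only shows the standard additive blend shape formula in plain Python rather than in Maya.

```python
# Hypothetical illustration of additive blend shapes, not TELYUKA's set-up.
# Each vertex is an (x, y, z) tuple. Every target stores the full sculpted
# position of each vertex, and contributes weight * (target - base).

def apply_blend_shapes(base, targets, weights):
    """Return the base mesh with each weighted target offset added on top."""
    result = [list(point) for point in base]
    for name, weight in weights.items():
        target = targets[name]
        if len(target) != len(base):
            raise ValueError(f"target {name!r} does not match the base mesh")
        for i, (b, t) in enumerate(zip(base, target)):
            for axis in range(3):
                result[i][axis] += weight * (t[axis] - b[axis])
    return [tuple(point) for point in result]


# Tiny stand-in for a base mesh (in a real rig this would be the
# joint-deformed surface) and one sculpted wrinkle target.
base_mesh = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
wrinkle_targets = {"brow_wrinkle": [(0.0, 1.0, 0.0), (1.0, 0.0, 0.0)]}

half_wrinkle = apply_blend_shapes(base_mesh, wrinkle_targets, {"brow_wrinkle": 0.5})
print(half_wrinkle)
```

With a weight of 0.5, only the first vertex moves, and it moves halfway toward its sculpted position, which is why targets of this kind suit local detail such as wrinkles.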

We used 104 joints for the base deformation and 21 for the additional deformations. We did not plan on this number of joints from the beginning; they were added bit by bit as needed.

At first, we started working on Saya with her eyes closed, and then we moved on to eyebrows, cheeks and her mouth. The most difficult part was around the mouth. Since the mouth performs such varied actions (stretching, puckering up, rolling and so on), the biggest challenge remains how to accomplish these expressions in a realistic manner.

Since the shape of the head is not completely symmetric, adjusting the skin weights becomes a tedious process. However, by doing the basic facial set-up in SkinBind, we were able to make these adjustments and add the accompanying changes to the shape of the whole head.

Besides that, LipSticky is added as a special effect for the region around the mouth. It expresses the movement in the soft contact area of the lips, when the inner surfaces of the upper and lower lips slowly pop apart as a dry mouth opens. Currently, we use WireDeformer to trigger this automatically, based on distance.
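The idea of a distance-triggered sticky-lip effect can be sketched in a few lines. This is only an illustration under assumptions, not the WireDeformer set-up described here: the function name `sticky_weight`, the `release_gap` threshold and the smoothstep falloff are all hypothetical choices.

```python
# Hypothetical sketch of a distance-driven "sticky lips" weight.
# gap:         current distance between matching upper and lower lip points
# release_gap: assumed distance at which the lips have fully separated

def sticky_weight(gap, release_gap=0.5):
    """Return 1.0 while the lips touch, easing down to 0.0 at release_gap."""
    if release_gap <= 0:
        raise ValueError("release_gap must be positive")
    t = min(max(gap / release_gap, 0.0), 1.0)  # normalise and clamp to [0, 1]
    smooth = t * t * (3.0 - 2.0 * t)           # smoothstep easing
    return 1.0 - smooth


for gap in (0.0, 0.25, 0.5, 1.0):
    print(gap, sticky_weight(gap))
```

A weight like this could drive how strongly the lip surfaces are held together: full strength while the mouth is closed, fading out as the gap approaches the release distance, and zero beyond it.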

Regarding the tongue, although it is not very visible from the front, it is the most flexible part of the head. It required various expressions such as stretching, shrinking, twisting and rolling up. We set up SkinBind and Joints for the tongue too, in order to sort out what actions will be required.

FXG: What SSS are you using for her skin inside V-Ray? And roughly, how long is the render time?

TELYUKA: Initially we used “VrayFastSSS2” and “VraySkinMtl”, but we are currently using “AlSurface”.

Rendering the head at HD resolution takes about 4 to 5 minutes.

FXG: Can you discuss the resolution of her skin? Is there an anisotropic specular response with respect to expression wrinkles?

TELYUKA: From the forehead to the jaw we apply a texture equivalent to 8K resolution. For the whole head, we apply a texture equivalent to 16K, but split it into 4K tiles. We did not use anisotropy. Regarding expression wrinkles, this is a topic we need to work on next.
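As a rough check of the tiling arithmetic, here is a minimal sketch. It assumes that “16K” means 16,384 pixels per side, that “4K” means 4,096, and that the tiles are square. The UDIM-style numbering is also an assumption, since the interview does not say which tile naming scheme is used, and the function names are hypothetical.

```python
# Hypothetical sketch: how many 4K tiles cover a 16K-equivalent texture.
K = 1024  # assumption: "K" is counted in multiples of 1024 pixels


def tile_grid(total_res, tile_res):
    """Return (tiles per side, total tile count) for square tiles."""
    if total_res % tile_res != 0:
        raise ValueError("total resolution must be a multiple of the tile size")
    per_side = total_res // tile_res
    return per_side, per_side * per_side


def udim_ids(per_side):
    """List UDIM-style tile numbers (1001 + u + 10 * v) for a square grid."""
    if per_side > 10:
        raise ValueError("UDIM rows hold at most 10 tiles")
    return [1001 + u + 10 * v for v in range(per_side) for u in range(per_side)]


per_side, count = tile_grid(16 * K, 4 * K)
print(per_side, count)
print(udim_ids(per_side))
```

Under these assumptions the whole-head texture works out to a 4 x 4 grid, or 16 tiles of 4K each.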

FXG: How are you animating her? Do you have an HMC input pipeline? I have seen a still that looks like you do. If so, which solver are you using?

TELYUKA: For Saya’s facial animation, we use monocular image-based facial capture with an HMC that Toei Zukun Lab developed in-house. For the solver, we use Dynamixyz Performer2SV.

FXG: What do you do as your primary jobs? I understand this is a side project?

TELYUKA: We had previously worked on movies, visual images/videos, games and so on, but we are currently working on Saya’s development as our primary job. We have established our company to research and produce virtual humans, and to do related work with clients.

FXG: Thanks so much for taking the time to talk to me. Is there anything else you would like to add?

TELYUKA: We watched “MEETMIKE” from SIGGRAPH 2017. The range of technologies integrated in it is amazing and a source of envy. Compared to that, in terms of technology, we are not doing something extraordinary, so we don’t have any other specifics to mention here.

As artists, we are aiming to create characters that the audience can feel an affinity with, and we believe that is what we should be engaged in. As a result, we sometimes treat the academic or technical aspects perfunctorily. Collaborating with those who have academic and technical knowledge is very exciting, and we think it is a very important factor in creating better work, so we would like to take up as many such opportunities as possible when they arise.

Sugoi!