Jon Favreau’s Golem Creations, in partnership with Industrial Light & Magic (ILM), collaborated with Epic Games to bring The Mandalorian to life using a new form of virtual production. Here in Part 1, we talk to Richard Bluff, Visual Effects Supervisor, about the lessons learned in mounting The Mandalorian. Together with production technology partners Fuse, Lux Machina, Profile Studios, NVIDIA, and ARRI, ILM developed a new real-time surround stage. In Part 2 of this piece, we will discuss some of the history and key technology required to build such a stage, with the team from Epic Games.

The new virtual production stage and workflow allow filmmakers to capture a significant amount of complex visual effects shots in-camera using real-time game engine technology and surrounding LED screens. This approach allows dynamic photo-real digital landscapes and sets to be live while filming, which dramatically reduces the need for greenscreen and produces the closest thing we have seen to a working ‘Holo-deck’ style of technology. The process works because the camera films in mono, so the background can be dynamically updated to match the perspective and parallax a camera would record in real life. To do this, the LED stage needs to work in combination with a motion capture volume that is aware of where the camera is and how it is moving. While this technology is producing stunning visuals for The Mandalorian on Disney+, ILM is making its new end-to-end virtual production solution, ILM StageCraft, available for use by filmmakers, agencies, and showrunners worldwide.

Over 50 percent of The Mandalorian Season 1 was filmed using this new methodology, eliminating the need for location shoots entirely. Instead, actors in The Mandalorian performed in an immersive and massive 20’ high by 270-degree semicircular LED video wall and ceiling with a 75’-diameter performance space, where the practical set pieces were combined with digital extensions on the screens. Digital 3D environments created by ILM played back interactively on the LED walls and could be edited in real-time during the shoot, which allowed for pixel-accurate tracking and perspective-correct 3D imagery rendered at high resolution via systems powered by NVIDIA GPUs. The environments were lit and rendered from the perspective of the camera to provide parallax in real-time, with accurate interactive light from the LED screens falling on the actors and practical sets inside the stage.
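To make the idea of camera-perspective rendering concrete, here is a minimal sketch of the underlying geometry. It is not ILM's or Epic's code: it assumes a flat wall at a fixed depth (the real stage is curved), and the function name `wall_position` and the coordinate layout are hypothetical. A virtual object that sits behind the wall has to be drawn where the line from the camera to that object crosses the wall, so the drawn position shifts whenever the tracked camera moves.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def wall_position(camera: Vec3, virtual_point: Vec3, wall_z: float) -> Tuple[float, float]:
    """Return the (x, y) spot on a flat wall at z = wall_z where a virtual
    point must be drawn so that it lines up correctly from the camera.

    Assumption: the wall is a flat plane facing the camera. Units are
    arbitrary but must be consistent (feet, metres, ...).
    """
    cx, cy, cz = camera
    px, py, pz = virtual_point
    if not (cz < wall_z <= pz):
        raise ValueError("expected the camera in front of the wall and the point on or behind it")
    # Fraction of the way from the camera to the virtual point at which
    # the sight line crosses the wall plane.
    t = (wall_z - cz) / (pz - cz)
    return (cx + t * (px - cx), cy + t * (py - cy))


# A virtual rock 20 units away, seen through a wall 10 units away.
centred = wall_position((0.0, 0.0, 0.0), (0.0, 0.0, 20.0), 10.0)
# Move the camera 2 units sideways: the rock must be redrawn 1 unit over.
shifted = wall_position((2.0, 0.0, 0.0), (0.0, 0.0, 20.0), 10.0)
```

In this toy case the drawn position moves by half the camera's sideways move, because the wall is halfway between the camera and the virtual rock. Objects notionally further away shift more on the wall, which is the parallax cue the tracked camera sees.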

Jon Favreau and cinematographer Greig Fraser helped develop the system and then shot the pilot. The remaining episodes then had Barry “Baz” Idoine as the DOP. Along with the impressive list of episodic directors, the production changed its workflow to make many more decisions up-front and then have the ability to make concrete creative choices for visual effects during photography, achieving real-time in-camera composites, with the game engine providing ‘final pixels’. When it was not possible to shoot the final backgrounds in-camera, the ILM team would replace the live LED sections with traditional 3D, while still avoiding many of the issues of green screen, such as green spill and poor contact lighting on the characters.

Compared to a traditional green screen stage, the LED walls provided the correct highlights, reflections, and pings on the Mandalorian’s reflective suit. These would have been completely absent from principal photography if shot the old-fashioned way.

The technology and workflow required to make in-camera compositing and effects practical for on-set use combined the talents of partners such as Golem Creations, Fuse, Lux Machina, Profile Studios, and ARRI together with ILM’s StageCraft virtual production filmmaking platform and the real-time interactivity of the Unreal Engine (UE4) platform.

“We’ve been experimenting with these technologies on my past projects and were finally able to bring a group together with different perspectives to synergize film and gaming advances and test the limits of real-time, in-camera rendering,” explained Jon Favreau adding, “We are proud of what was achieved and feel that the system we built was the most efficient way to bring The Mandalorian to life.”

“Merging our efforts in the space with what Jon Favreau has been working towards using virtual reality and game engine technology in his filmmaking finally gave us the chance to execute the vision,” said Rob Bredow, Executive Creative Director and Head of ILM. “StageCraft has grown out of the culmination of over a decade of innovation in the virtual production space at ILM. Seeing our digital sets fully integrated, in real-time on stage providing the kind of in-camera shots we’ve always dreamed of while also providing the majority of the lighting was really a dream come true.”

Richard Bluff, Visual Effects Supervisor, commented, “Working with Kim Libreri and his Unreal team, Golem Creations, and the ILM StageCraft team has opened new avenues to both the filmmakers and my fellow key creatives on The Mandalorian, allowing us to shoot principal photography on photoreal, virtual sets that are indistinguishable from their physical counterparts while incorporating physical set pieces and props as needed for interaction. It’s truly a game-changer.”

Lessons learned

Richard Bluff and the ILM team learned many lessons when filming with this new approach. fxguide sat down with him to gain an insight into this new form of virtual production.

Set testing

For each ‘location’ there was a walkthrough on set. Even before the sets were made production-ready and optimised for real-time performance, ILM would render out a Lat-Long 360 and put that up in the LED set. During these lunchtime test sessions, the production design team would also bring in practical pieces that needed to be matched. This would then be followed up with a more precise lighting adjustment on the day that set would be used for final imagery and real takes.

Practical Sets: match real and virtual sets

During this pre-shoot period, many digital and practical adjustments were needed. The first combined set builds allowed not only for blocking but also gave the practical art department time to re-paint props if needed. Adjusting colors was complex. As the practical sets were lit by the virtual LED set, matching colors was almost a chicken and egg problem. If part of a ship was real and part digital, adjusting the digital color would affect the light being thrown onto the real partial ship. If the colors did not match, it was sometimes easier to repaint the set, knowing how it would look bathed in the light of the digital version, than to adjust the color of the digital set and thus also alter the lighting falling on the practical section. Just adjusting the digital could contaminate the light falling on the props and/or actors. “Let me give an example,” says Bluff. “Imagine an interior where you’ve got skylight coming in. Say the sky/day light has got a bit of a blue cast to it, and yet it falls on a warm colored wall,” he adds. “If all of a sudden our practical wall is lighter and we start brightening up a matching digital wall to match, then we could be breaking the illusion that there’s sky light coming and that it is the sky that is providing that lighting”.

Volumetric 3D color correction

The UE4 team early on developed a new form of color correction which can best be thought of like a 3D spherical ‘power window’. Inside the spherical volume, anything could be adjusted. The system works with spheres instead of circles, as it works in the full three dimensions of the UE4 world. The volume can also be feathered or softened to ease or graduate the color correction effect in and out of the desired space. This volumetric color correction does not need to be an exact sphere, and it could be interactively controlled from an iPad by someone in the LED volume. For example, if a section of mid-ground rocks needed to be more saturated, the operator could select the objects, or move the color correction volume back over that section of 3D and any part of any object in the volume space could be graded.
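The feathered spherical volume can be illustrated with a short sketch. This is not the UE4 tool itself: the function names, the smoothstep falloff and the choice of a saturation adjustment with Rec. 709 luma weights are all assumptions made for illustration. The idea shown is only that a grade is applied at full strength inside a sphere in 3D space and eased out to nothing across a feather band.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]
RGB = Tuple[float, float, float]


def volume_weight(point: Vec3, center: Vec3, inner_radius: float, feather: float) -> float:
    """1.0 inside inner_radius, easing to 0.0 at inner_radius + feather."""
    d = math.dist(point, center)
    if d <= inner_radius:
        return 1.0
    if feather <= 0.0 or d >= inner_radius + feather:
        return 0.0
    x = 1.0 - (d - inner_radius) / feather
    return x * x * (3.0 - 2.0 * x)  # smoothstep falloff (an assumed curve)


def saturate(rgb: RGB, amount: float) -> RGB:
    """Scale saturation around luma; amount > 1 boosts, < 1 mutes."""
    r, g, b = rgb
    luma = 0.2126 * r + 0.7152 * g + 0.0722 * b  # Rec. 709 weights
    return tuple(luma + (c - luma) * amount for c in rgb)


def grade(rgb: RGB, point: Vec3, center: Vec3,
          inner_radius: float, feather: float, saturation: float) -> RGB:
    """Blend the graded color in according to the point's volume weight."""
    w = volume_weight(point, center, inner_radius, feather)
    graded = saturate(rgb, saturation)
    return tuple(o + (g - o) * w for o, g in zip(rgb, graded))


# A mid-ground rock inside the volume gets the full boost; one far
# outside it is left untouched.
rock_inside = grade((0.5, 0.4, 0.3), (0.0, 0.0, 0.0), (0.0, 0.0, 0.0), 1.0, 1.0, 1.5)
rock_outside = grade((0.5, 0.4, 0.3), (10.0, 0.0, 0.0), (0.0, 0.0, 0.0), 1.0, 1.0, 1.5)
```

Because the weight depends only on a 3D position, moving the `center` is enough to slide the correction over a different part of the environment, which matches the way the article describes the operator repositioning the volume.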

Practical Set Dressing: Props

Inside the stage, there were many real props. Sometimes these continued into the virtual world of the screens, or similar items also existed on the LED walls. In addition to being able to color correct the objects, the crew had to account for the 2D notion of them as ‘bounce light’ objects. Imagine a line of bright yellow posts each a fair distance apart, with one on set and the rest stretching into the distance.

In the Mos Eisley hangar scene, there was practical sand across the entire practical floor, which then also continued in the virtual ‘set’, giving the illusion of a much bigger hangar. “On the practical set, we had these yellow and red canisters for set dressing,” explained Bluff. “And we also had the same red and yellow canisters on the digital wall that are supposed to be 30 feet beyond where the practical sand ended. Of course, those are seen with the correct perspective from the camera’s position, and therefore those red and yellow canisters appear smaller on the screen. But in reality, when you’re not looking through the lens of the camera, those red and yellow canisters are literally 12 inches off of the sand on the screens because the screens are butted up right against the sandy floor,” he explains. “As the LED screens are providing all the lighting onto the practical set, you end up with this red and yellow light source that is directly lighting up the sand in front of it.” In the camera’s reality, the canisters are many yards away from that sand, but on set they are only six to twelve inches away. The advantage of the LED set is that it is the lighting source for the show, rather than C stands with Kinoflos and reflectors, but “it also is a problem because it’s a lighting source,” Bluff points out. The problem here was accentuated because the shot was set on Tatooine, where the sand was bright and reflected colors from the screen. The Mandalorian team did not have this problem so noticeably when filming with dark tones on the floor, for example in the lava flows of Nevarro.

(Taika Waititi, Gina Carano, and Carl Weathers.)

Lighting back into 3D

Just as the real sets were lit by the LED walls, the ILM team had to take into account the way the 3D world would be lit up by light bouncing off the practical set items. A large object on the practical set should always be bouncing light back at parts of the digital set. A real on-set yellow canister should bounce yellow light onto the digital assets near it, and this had to be accounted for by the 3D lighting team. Hence ILM always needed to work in close sync with the art department. This was very evident in the Mos Eisley Cantina, partly because it was an iconic set in the Star Wars world and partly because of the complex internal props. “We had to fully build out everything that appeared, in the Cantina itself, which included the bar itself, all the trim, the drinks, the dispensers, everything in the middle of the bar, because they were all incredibly reflective surfaces” explained Bluff. “Additionally, all the tables, the chairs, the booths and everything within the environment was fully built out and then the team at ILM in San Francisco started selectively turned off to the digital camera the elements that we didn’t need to see visibly, but yet still needed to cast indirect light and shadows.”

High Dynamic Range Imagery (HDRs)

HDRs captured with SLRs are a cornerstone of any on-set VFX team’s day-to-day work. There was no sense in the ILM 3D lighting teams getting HDRs from the Mandalorian set, as the set was lit by the 3D team’s own environments. ILM had to use substitute HDRs rather than rely on HDRs captured on set.

Practical Sets Staging

Many of the sets were built with raised ground planes and props to hide the corner point at the bottom of the LED screens where the panels would otherwise meet the floor. Similarly, grass or practical rocks were placed in the midground to blend the transition and hide the seam line at the bottom of the set.

Exterior Lighting and Shadows

When filming exterior scenes, the LED set provided an excellent skydome that could be repositioned interactively using an iPad, but the LED screens act much like a large softbox rather than a point source of illumination. Even at their peak brightness of 1,800 nits, they could not give the actors the strong exterior lighting profile that produces the sharp shadows typical of parallel light from the sun. As such, the stage was made so that LED panels could be selectively lifted out and additional traditional spotlights could be added.

Alternatively, the production actually filmed outside on a location near the Manhattan Beach Studios stages, where the art department built various Mandalorian sets.

Cameras and Colour Response

ILM’s Matthias Scharfenberg and his team tested the capabilities of the ROE Black Pearl BP2 LEDs and matched them with the color sensitivity and reproduction of the Alexa LF. This means that The Mandalorian pipeline was camera-specific to the Alexa LF.

Audio

Due to the curved shape, hard surfaces and LED ceiling, the stage was very loud in terms of audio. Standing in certain points in the space, the natural acoustics would allow conversations meters away to be easily heard. Thus the set had to be run quietly without extraneous noise or chatter off camera.

Depth of field

To reduce moire patterns, the background was often shot slightly soft, which reduced the chance of a pattern that would give away the digital wall. This can be easily achieved with a combination of lens choices and light levels. The inverse, however, is not possible: there is no way to remove defocus. If the wall is out of focus because it is too close to the camera, while the digital imagery on it is notionally much further away and should therefore be in focus, there is no way to bring that imagery back into focus. “There is no magic trick that helps this focus problem, it just came down to the choices that Greig Fraser and Barry Baz Idoine made with the lens packages,” comments Bluff. In this case, the only option is to replace the digital LED footage with sharp, in-focus material in post-production.
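A rough thin-lens calculation shows why a slightly soft wall hides the LED grid. This is a sketch under stated assumptions, not the production's method: it treats the lens as an ideal spherical thin lens (the show used anamorphics), uses the T-stop as a stand-in for the f-number, and the example focus and wall distances are hypothetical. Only the 2.84mm pixel pitch comes from the stage specification. For a lens focused at distance s, a point at distance d is smeared over a disc of diameter A·|d − s|/s at that point's own distance, where A is the aperture diameter (focal length divided by f-number).

```python
def blur_on_wall_mm(focal_length_mm: float, f_number: float,
                    focus_distance_mm: float, wall_distance_mm: float) -> float:
    """Diameter of the defocus disc measured at the wall, thin-lens model."""
    aperture_mm = focal_length_mm / f_number
    return aperture_mm * abs(wall_distance_mm - focus_distance_mm) / focus_distance_mm


def blur_in_led_pixels(focal_length_mm: float, f_number: float,
                       focus_distance_mm: float, wall_distance_mm: float,
                       pixel_pitch_mm: float = 2.84) -> float:
    """How many LED pixels the defocus disc spans across its diameter."""
    blur = blur_on_wall_mm(focal_length_mm, f_number,
                           focus_distance_mm, wall_distance_mm)
    return blur / pixel_pitch_mm


# Hypothetical setup: 75mm lens at T2.8, actor at 3 m, wall at 6 m.
pixels_spanned = blur_in_led_pixels(75.0, 2.8, 3000.0, 6000.0)
```

With these example numbers each point on the wall is smeared across roughly nine LED pixels, so the regular pixel grid that causes moire is averaged away. The same formula also shows the limit Bluff describes: the disc only shrinks to zero when the focus distance equals the physical wall distance, regardless of how far away the imagery is meant to be.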

Moire Patterns

Jon Favreau wanted to mimic the original Star Wars movies, Episode IV: A New Hope in particular. Thus the intent was never to produce overly sharp imagery. Favreau and Fraser wanted “the creamy look that you get from the Anamorphic lens that we saw back in the 70s on our show,” says Bluff. As a result, the focus fall-off was going to be greater, which was always going to help with any potential moire issues that one might see with the LED screens.

Capture Volume sensors

The Mandalorian’s camera and any props could be tracked by the Profile Studios’ motion-capture system of infrared (IR) cameras. These surrounded the top of the LED walls and monitored the IR markers mounted to the production camera. These physical profile sensors needed to be visible so they could track the camera. There was no way to hide them in the ‘sky’ and thus they had to be removed in post-production.

Latency and Lag

From the time Profile’s system received camera-position information to Unreal’s rendering of the new position on the LED wall, there was a delay of about 8 frames. This delay could be slightly longer if the signal also had to be fed back to, say, a Steadicam operator. To allow for this, when the team was rendering high-resolution, camera-specific patches behind the actor (showing the correct parallax from the camera’s point of view), it would actually render an oversized patch, 40% larger than needed. This additional error margin gave a camera operator room to pan or tilt and not see the edge of the patch before the system could catch up with the correct field of view.
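The arithmetic behind the oversized patch can be sketched as follows. The 8-frame delay and the 40% figure come from the text; everything else is an assumption made for illustration: a 24 fps frame rate, a patch that is 40% larger in each linear dimension and centred on the camera's view, and hypothetical function names.

```python
def latency_seconds(delay_frames: float = 8.0, fps: float = 24.0) -> float:
    """Tracking-to-wall delay expressed in seconds."""
    return delay_frames / fps


def margin_per_side(oversize: float = 0.40) -> float:
    """Spare patch on each side, as a fraction of the frame width.

    Assumes the extra 40% is split evenly left and right of the frame.
    """
    return oversize / 2.0


def max_pan_rate(oversize: float = 0.40, delay_frames: float = 8.0,
                 fps: float = 24.0) -> float:
    """Fastest steady pan, in frame widths per second, that stays inside
    the patch until the render catches up."""
    return margin_per_side(oversize) / latency_seconds(delay_frames, fps)


delay = latency_seconds()   # one third of a second at 24 fps
pan_limit = max_pan_rate()  # about 0.6 frame widths per second
```

Under these assumptions the operator can sweep the frame sideways by about 60% of its width per second before the edge of the patch would enter shot. A larger oversize buys faster moves at the cost of rendering more pixels at full quality.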

Real backgrounds & Miniatures

The purpose of visual effects, live or post, is to make the world seem real, and as such, Bluff had planned from the outset that whenever possible real footage should be used in the backgrounds. “I went through the archives here at ILM and grabbed any images or photographs from Tunisia, or any other real locations where Star Wars had been shot, such as Greenland,” recalls Bluff. ILM then built a Mandalorian library of these for use by the team. To add to these, the team built a full photogrammetry reconstruction of the interior of a disused and rundown concrete building on Angel Island in the Bay Area of Northern California.

ILM shot hundreds of shots and built a highly detailed and texture heavy 3D environment which was exported to UE4. “Once we threw that on the LED screens and we could dolly the camera back and have the character walking with the camera and effectively moving through doorways and we could see all the correct light shifts, well that was kind of our ‘Eureka’ moment,” says Bluff. This extended to building miniatures and building pano-spheres after photographing them. “And that for me was when I started having a conversation with Jon about forgetting the fact that this was using a game engine…let’s even build a miniature knowing it’s going to be photo-real. Let’s do full photogrammetry on the miniature and then put that into the game engine as well.”

Matching Renders

While 50% of the shots could be done in-camera, that still left a lot of shots that had to be done with a more traditional ILM environment pipeline. The in-camera environments were rendered in UE4, but ILM rendered the standard pipeline environments in V-Ray rather than UE4, so the two had to match not only in visual complexity but also in color, texture and focus. ILM’s pipeline was to model in 3ds Max or Maya and then to ray trace the imagery in V-Ray.

VR scouting

As the sets had to be built ahead of time digitally and accurately, this allowed the filmmakers to use the assets for VR camera scouting. Jon Favreau was of course very comfortable doing this having used VR so extensively on The Lion King. This also allowed very quick set dressing and lighting tests to polish the details with the virtual art department under the supervision of Andrew L. Jones.

Tech Background

• The curved wall was built from ROE Black Pearl BP2 LED screens. These have a maximum brightness of 1,800 nits, which is extremely bright. At peak brightness, the wall could create an intensity of about 168 footcandles, the equivalent of an f/8 3⁄4 at 800 ISO, assuming the camera is shooting at 24 fps with a standard 180-degree shutter. This meant that light levels were not a problem, and oftentimes the production would have the LEDs turned down significantly, well below their maximum brightness.

• The Mandalorian used Panavision’s full-frame Ultra Vista 1.65x anamorphic lenses. The anamorphic squeeze allowed for full utilization of the 1.44:1 aspect ratio of the LF to create a 2.37:1 native aspect ratio, which was only slightly cropped to 2.39:1 for the final output (see American Cinematographer Feb 2020).

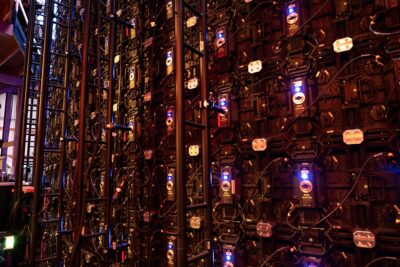

• The stage was curved and 20ft high. The LED video wall had a circumference of about 180ft and comprised 1,326 individual LED screens.

• The main LED screen was a 270-degree semicircular background complete with an LED video ceiling. This provided a 75′-diameter performance space for the actors and the props.

• The opening in the stage was required for access and filming, but two hanging 18′ x 20′ flat panels, made up of 132 LED screens, could be lowered into place to complete the 360-degree effect.

• The panels used a linear colorspace to be as neutral as possible.

• The screens had a 2.84mm pixel pitch. This is important as the pitch affects the moire pattern that can occur when filming an LED screen in a shot. The T2.3 of the Panavision Ultra Vista lenses is similar to a T0.8 in Super 35. This produces a very shallow depth of field, so the LED screens were often defocused. The production typically used longer lenses, normally 50mm, 65mm, 75mm, 100mm, 135mm, 150mm or 180mm Ultra Vistas, which ranged from T2 to T2.8, and the DOPs exposed the shots around T2.5-T3.5.

• On set there was a ‘Brain Bar’, a line of workstations and artists from ILM, Unreal and Profile that controlled the set and the motion capture volume.
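Two of the figures in the list above can be sanity-checked with a few lines of arithmetic. The sketch below is not from the production; it assumes the common cinematographer's rule of thumb for incident light, footcandles = 25 × N² / (t × ISO), where N is the f-number and t the exposure time in seconds. The calibration constant of 25 and the function names are assumptions for illustration.

```python
import math


def f_number_from_footcandles(footcandles: float, iso: float,
                              fps: float = 24.0,
                              shutter_angle_deg: float = 180.0,
                              calibration: float = 25.0) -> float:
    """f-number for correct exposure under a given incident light level."""
    exposure_time = (shutter_angle_deg / 360.0) / fps  # 1/48 s at 24 fps, 180 degrees
    return math.sqrt(footcandles * exposure_time * iso / calibration)


def stops_above(f_number: float, reference: float = 8.0) -> float:
    """Number of stops by which f_number is closed down past the reference stop."""
    return 2.0 * math.log2(f_number / reference)


def desqueezed_aspect(sensor_aspect: float, squeeze: float) -> float:
    """Aspect ratio after an anamorphic image is stretched back out."""
    return sensor_aspect * squeeze


wall_stop = f_number_from_footcandles(168.0, 800.0)  # about f/10.6
extra_stops = stops_above(wall_stop)                 # about 0.8 stop past f/8
native_aspect = desqueezed_aspect(1.44, 1.65)        # about 2.376
```

Under that rule of thumb, 168 footcandles at 800 ISO works out to roughly f/10.6, about 0.8 of a stop past f/8, which is in line with the quoted f/8 3⁄4. The second check multiplies the 1.44:1 sensor aspect by the 1.65x squeeze to give about 2.376:1, matching the quoted 2.37:1 native ratio.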