For ‘Ashes to Ashes’, a trailer for Epic Games’ 2011 Xbox 360 release Gears of War 3, Digital Domain utilised Unreal Engine to give a cinematic glimpse of some of the game’s characters and plotlines. Director Vernon R. Wilbert Jr., also the trailer’s visual effects supervisor, talks to fxguide about how it was realised.

fxg: The trailer seems to have both a sombre and heroic mood – what was your brief for the piece?

Wilbert Jr.: One story stood out strongly to the agency, agencytwofifteen: the idea of Char. There was actually a level in the game at the time called ‘Char’. I remember them telling me that it was pretty standard for games out there to have a snow level, so if Gears of War had a snow level, this would be it. It was basically a city that had been destroyed by its own government using the Hammer of Dawn, a weapon fired from satellites that move into position to wipe out an enemy. So they’ve destroyed sections of the city and burned its inhabitants alive. It leaves a strikingly sombre place, almost a sacred place.

When I started seeing the concept art, I kept seeing these people in these poses and it kept bringing up the idea of Pompeii. The idea was that the character Dom (Dominic Santiago) would be running through this city being shot up. At one point he was going to be reunited with his team: he’d jump over a barrier, his whole team would be there and he’d be fighting this fight. After seeing these images and how heart-wrenching they were, I wanted to continue the story Dom has that starts in other Gears pieces, ‘Last Day’ and ‘Rendezvous’. The story is that he has an inner conflict that he has to overcome. There’s something inside of him that is stronger than the enemies on the outside. His wife had been infected and he had to kill her, and his two children were killed as well. So there’s a lot going on with him. Marcus (Fenix), being the leader, also has to deal with that. So we thought it was a stronger story to have Dom go off on a scouting expedition, end up engaging a platoon of enemy soldiers and then run back towards Ground Zero. As he’s running back he starts to lose his way, because the fog and the ash from all these people are all around him. It’s a visual interpretation of what’s going on in his mind – his fog of doubt. He’s lost everything that he cherishes to this enemy. Finally, when the enemy wounds him, he sees what we’ve come to call the Madonna: a Michelangelo Pietà-type sculpture holding two children, with a nondescript face so that the viewer can project Dom’s lost family onto it.

At that point, Dom gives up and falls into that defocused, nothing state. Then this Demon comes in front of him and is about to kill him, but the one who comes in to be with him is the person who is the whole reason for being there and carrying on: Marcus. I’ve heard from people who are in the military now that it’s not really about the politics and it’s not about who’s right and who’s wrong – it’s about the man to your left and the man to your right. You’re fighting for those guys. Dom is lying there, and to his right is Marcus, who saves him. To his left is Anya, who hands him his weapon and tells him to get to work. Then the rest of his team enters, and they get to work. Even though we leave them in a desperate situation, as we typically do in these pieces, it’s not really about giving up; it’s about fighting for the man to your left and right. And that’s really the whole theme I wanted to bring to this piece.

fxg: How was the trailer conceptualised? Was it storyboarded and previsualised?

Wilbert Jr.: Well, the agency had a brief and I started off with a treatment from that idea. Then we went into boarding. I was lucky enough to get Dwayne Turner, who has boarded all the Gears of War pieces with us, and we boarded together to work out the shots. From there we go into previs: we take the boards and cut them to a track that we think fits. In this case we chose ‘Heron Blue’ by Sun Kil Moon. We’d previs the shots to get the angles and timing right. Then we moved into motion capture, which was important to me in this piece, and not necessarily because mocap is a quick way of getting animation. What I love about the motion capture process is the ability to bring a human actor, and a human presence, into something that typically isn’t seen that way. I think the Gears pieces are a great example of taking something that has nothing to do with what you’d call ‘acting’. It’s big characters and polygons, and they shoot and kill people, but what’s great is that we bring pathos and ethos to these characters. I think people have really loved that, from ‘Mad World’ on, we’ve been able to take these characters and give them a presence and a state of mind.

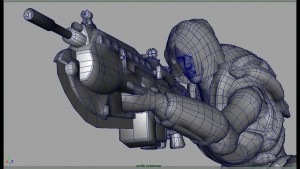

For the motion capture process, I wanted to use people who had actually done the real deal, so we brought in Special Forces people. One of them, the lady who played Anya, had actually been awarded a Bronze Star. I had them act out one of those last scenes together as a group, and I gave them a scenario: how would you act as a small elite team in this situation? They did what they had to do in that moment, and we did that multiple times. From there we take the mocap as a base, bring our animation team in and make the necessary adjustments to polish it. We import that into the game engine itself. That’s the most important part, because what you see is what you’re going to get. We use the actual game assets from Epic and the Unreal Engine 3 itself. We essentially become a side group of Epic: we have everything they have, and we’re basically using their tools to shoot a virtual film. It’s a true machinima piece. From there we do frame dumps of what we shoot, cut them together, apply a slight grade and we’re done.

fxg: How does the game engine plug into DD’s existing pipeline?

Wilbert Jr.: That’s the great part about it. When we were first approached by Microsoft Xbox for the original E3 trailer for Epic, when they first announced this game, the idea of shooting something using a game engine was exciting, but how do we do it? A game engine is not built to do that; it’s built to make games. What a lot of people have done in the past is hotwire their game engines to make films, as with Red vs. Blue right at the beginning. At Digital Domain we have particular pipelines that we use to make films and to make commercials, and they make us successful because one helps the other. I also wanted to draw on talent from our film and commercial divisions, and they work in Maya or Houdini or other packages. So I had to work out where the game engine fit in the existing pipelines, and I figured it fit best as a Maya-to-RenderMan type scenario, where you do a lot of the work in Maya and then do all the lighting and rendering through RenderMan. If we used the engine that way, we could get the most bang for our buck. So we created a separate pipe for the engine so that it existed in its own world and no one else could access it. Because we were using exactly the same engine Epic was using to make the game, we had to make sure the software was protected. Each artist had their own Gears box. It’s not too different from how most companies work when they’re creating games: they have a games box and a standard PC.

Once we had the animation we needed, we ported it over using tools in the Unreal engine that take animation out of Maya and into the engine. We also had our own technical directors write specialty code so that we could control parts of the engine that we couldn’t control before. In particular, we wrote code to bring in all of our own camera tools, so cameras set up outside of the engine can be brought in. The cameras inside the engine are designed for games, so everything’s on a really wide lens and they move around in a certain way. They’re not built to look like you’re on a Fisher dolly or a crane, or even a Steadicam. So when we’re animating for real-world situations, we want to make sure we have the right camera and lens kit. We also wrote a tool to export frames out of the engine itself. The engine’s export is hard-coded to a 30p standard, so we wrote code that would pause the engine every 30th of a second, dump a frame, and then continue. We also wrote code to output double-HD images, almost 4K, in floating point. So when we get the images out, we can colour correct them and we have all the important information in an uncompressed format.
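The step-and-dump export described above can be sketched as an offline render loop with a fixed time step. This is a minimal illustration, not Digital Domain’s code: `ToyEngine`, `tick` and `render` are hypothetical stand-ins for the engine, which here only tracks simulated time. The point is that the simulation advances by exactly 1/30 s per frame and is held while each frame is written, so a slow render or disk write cannot drop or duplicate frames.

```python
from dataclasses import dataclass
from fractions import Fraction

FRAME_DT = Fraction(1, 30)  # one frame at the engine's hard-coded 30p rate


@dataclass
class ToyEngine:
    """Hypothetical stand-in for the game engine; it only tracks simulated time."""
    sim_time: Fraction = Fraction(0)

    def tick(self, dt: Fraction) -> None:
        # Advance animation, effects and AI by exactly dt of simulated time.
        self.sim_time += dt

    def render(self) -> dict:
        # A real engine would return pixels; here we record when the frame was taken.
        return {"time": self.sim_time}


def capture(engine: ToyEngine, num_frames: int) -> list:
    """Step-and-dump: render the held state, save it, then advance exactly 1/30 s.

    Simulated time only moves inside tick(), so however long render() or the
    file write takes in wall-clock time, each frame lands exactly one frame
    interval after the previous one.
    """
    frames = []
    for _ in range(num_frames):
        frames.append(engine.render())  # world is 'paused' while the frame is dumped
        engine.tick(FRAME_DT)           # then continue to the next 30th of a second
    return frames


frames = capture(ToyEngine(), 90)  # three seconds of footage
print(len(frames), frames[-1]["time"])
```

Using `Fraction` rather than floats keeps the frame times exact, so thousands of frames never drift off the 30p grid.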

What’s also interesting about the engine is that because it acts like a virtual set, there’s no idea of compositing and no sense of alpha channels. When you need to know which frame is frame one, or the last frame, you can’t tell. In live action, when you’re doing motion control, say, you can use a bloop light: when you run a move and need to know the first and last frames, the bloop light tells you that this is the frame before the first frame, and the footage after it is exactly your move. So we had to create a similar system in the engine, where we flashed a red frame to indicate the frame before the animation and the camera move begin. It’s pretty interesting to see everything in there working – the animation, the clouds moving, smoke dancing and fire burning – and then, bloop, our red frame goes off and the action starts, and then the bloop light goes off again and the action is done.
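A red-frame ‘bloop’ like the one described can be located automatically in a dumped sequence. The sketch below assumes frames arrive as lists of linear RGB pixels in [0, 1] and that a marker frame is a full-screen saturated red; the thresholds and the function names `is_red_marker` and `trim_to_take` are hypothetical.

```python
from statistics import fmean
from typing import List, Tuple

Pixel = Tuple[float, float, float]  # linear RGB, each channel in [0, 1]
Frame = List[Pixel]


def is_red_marker(frame: Frame, hi: float = 0.8, lo: float = 0.2) -> bool:
    """True if the frame's average colour is a saturated red 'bloop' flash."""
    r = fmean(p[0] for p in frame)
    g = fmean(p[1] for p in frame)
    b = fmean(p[2] for p in frame)
    return r >= hi and g <= lo and b <= lo


def trim_to_take(frames: List[Frame]) -> List[Frame]:
    """Keep only the frames between the first two red marker frames.

    The first marker is the frame before frame one of the move and the
    second marks the end, so neither marker is part of the returned take.
    """
    markers = [i for i, frame in enumerate(frames) if is_red_marker(frame)]
    if len(markers) < 2:
        raise ValueError("expected a start marker and an end marker")
    start, end = markers[0], markers[1]
    return frames[start + 1:end]


grey = [(0.5, 0.5, 0.5)] * 4
red = [(1.0, 0.0, 0.0)] * 4
take = trim_to_take([grey, grey, red, grey, grey, grey, red, grey])
print(len(take))
```

Averaging the whole frame, rather than sampling a single pixel, keeps the test robust if fog or a lens effect bleeds into part of the marker frame.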

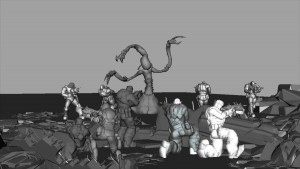

fxg: How did you approach the animation in general, especially for the tentacled creature near the end?

Wilbert Jr.: The reveal of the tentacled creature, the lambent, was very important to Epic. I always look at this piece as a great introduction piece: just about every shot introduces something new that they want to highlight to the viewer. There are two forms of the lambent: the tall tentacled creature, and the polyps, the spider-like creatures emanating from the base of the lambents. Then there are these tall, vine-like beanstalks coming from behind the lambents, which were the delivery device for them. The stalks have bulging pustules on their sides, and out of them bursts the lambent. The animation of the creatures was basically hand-keyed. I wanted the best we had, so I brought in animators who work on our major films. I gave them the premise that this particular character needs to attack in a certain way and rear up at the lens in a certain way. The animators would sometimes give feedback about the shape and size of the neck so that it could make a more successful and powerful move. So it was very collaborative dealing with those creatures. For the motion-captured material, we made a fair few adjustments to the facial animation and timings to hit certain beats in the story and to correspond with the music.

fxg: Can you talk about porting animation from Maya into the game engine?

Wilbert Jr.: The motion capture was brought in through Maya to the game engine, as were all the keyframes. Animation of the lambent was done in Maya and ported directly over. The polyps were completely AI-driven and done entirely inside the engine. Epic is actually creating the game as we speak, so this was very early on for them in the creation cycle. They were actually very far along, which was great, but we did run into a scenario where the polyps wouldn’t go up and down surfaces, so we had to do things to make that happen. It was funny: at one point we really wanted one of the polyps to be on top of one of the stalks, jump down and have Anya take it out. But the polyp wouldn’t come down! Well, we figured he was afraid. That was just because the code was constantly being upgraded, and we had to work with Epic. I liken it to going to buy the latest Porsche, taking a test drive, and having them put the wheels on while you’re driving.

fxg: How were the shots of the ashen people being blown apart accomplished?

Wilbert Jr.: The reason why there’s fog everywhere, as disgusting as this may sound, is that it’s burnt human flesh dissipating through the air. The piece is set at 6.30 in the morning. I called it Christmas morning. When you look at the first shot, you get the feeling of Christmas morning, with light going through the beautiful clouds. It’s cool and blue and cold and happy. But that’s only for the first 30th of a second, because after that you get amazing doom. What you’re watching is the most horrific thing you’ve seen. The way the ash people break needed to tell that story. They are frozen in a moment that asks: if you only had 30 seconds to figure out what to do, what would you do? We wanted to show these scenes of what people do. Also, Epic was still developing the ashen people, but we needed something final. So we produced some concept work and showed Epic, and they loved it. So we modelled and textured them and brought that asset back into the game engine.

We open up on a scene at the base of our crane tilt-down. I always call it our senior couple. Those two are embraced in their last moments, knowing that something’s coming down the street. Then we have people hiding in doorways, and we come to our last man, who didn’t know what to do. He’s locked in almost a Munch-type scream and Dom runs through him. He’s trying not to run through him, but he clips him and the man turns into this ash cloud. It doesn’t so much fall as continue to contribute to the fog-type atmosphere. We worked in the game engine using their ash structure and tried to manipulate it to serve the story. So when we hit the first guy we get a sense of mass, but it’s almost as if he’s made of air. Then we have a sister shot, the Madonna shot. A Locust comes in and swipes at the woman. There’s a strong dichotomy in what’s going on there. We had to make sure our effects had a sense of weightlessness. The Unreal engine has an amazing set of tools for effects, so we’d try different things, like using a kit of parts. Sometimes they’d break completely and sometimes we’d get success. It’d make people who do games freak out, but when we get a good result, they’re happy.

fxg: Were other effects like gunfire, trails, dust and smoke done in a similar way?

Wilbert Jr.: Well, it goes right back to the treatment I wrote. I remember driving around on Christmas morning with my parents when it was really foggy, and you’d see Christmas lights blinking, especially at night but sometimes in the early morning. If it was foggy you could only see five feet in front of you, so it was very scary, but with a beautiful soft bokeh effect. I felt that the trailer could have the same thing, so the gunfire muzzle flashes are the Christmas lights. They should be beautiful in a beautiful fog, so we made sure they were prominent. They’re very bright and are going off behind the Madonna and everywhere. There’s chaos happening, but there’s a beauty to it.

Wilbert Jr.: It’s great because we’re starting from all the work done by Epic: their textures, their characters and their buildings. What we do is build almost a digital backlot. We do a tech scout through the engine to find where the scene is going to take place. We actually look at different levels and find one we like, say a street where you will actually play in the game. We picked two major areas. Lighting-wise, the tools in Unreal are very similar to what you’d find in any third-party package: you have spotlights, point lights and directionals. Then you also have fire and smoke. Because we’re familiar with all of that, we get our guys in to light the scene like a film set, and they do a beautiful job. But at that point we want to punch it a little bit more. So, with Epic’s permission, we might add some sky, put some more clouds there or paint in a sky matte, for example.

From that point on, we were very particular about lensing. Because this is an early morning piece, I wanted to make sure we stayed on particular primes. I used a lot of 35s and 50s, and here and there a 100, but typically it was an 80. I wanted to make sure that if the sun was at a certain point, we’d be at a particular f-stop. So a lot of this was shot with f/2.8 in mind, and sometimes f/1.8, each giving a particular depth of field. With Dom, the way he reacts when he’s knocked down, I wanted to make sure there was a certain depth of field in that shot. We thought that if we kept it as if we shot it for real, the audience would have an easier time accepting the characters as real. Then for colours, we made sure our palettes reflected the sunlight, bounced a certain way and had that natural feel. We treated this as a negative. Having a digital file was similar to what you might get if you shot on RED. So we had this ‘negative’ file and would then do some colour correction to get the actual positive we needed out of it. It had a particular look to it: a little bit flat and brown, but with all the information we needed. Then we just applied a grade to it and cooled it off a little, so that the highlights were a little warmer and the cool tones were more bluey-green.
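The lensing choices above translate into numbers a virtual camera can use. The sketch below assumes a Super 35 gate (24.89 mm wide), a 0.025 mm circle of confusion and an engine camera specified by horizontal field of view; none of these values come from the interview. Depth of field uses the standard thin-lens hyperfocal-distance formulas.

```python
import math

SENSOR_WIDTH_MM = 24.89  # assumed Super 35 gate width
COC_MM = 0.025           # assumed circle of confusion for that gate


def horizontal_fov_deg(focal_mm: float, sensor_w_mm: float = SENSOR_WIDTH_MM) -> float:
    """Horizontal field of view that makes an engine camera match a real prime."""
    return math.degrees(2 * math.atan(sensor_w_mm / (2 * focal_mm)))


def depth_of_field(focal_mm: float, f_number: float, subject_mm: float,
                   coc_mm: float = COC_MM) -> tuple:
    """Near and far limits of acceptable focus, in mm (thin-lens approximation)."""
    hyperfocal = focal_mm ** 2 / (f_number * coc_mm) + focal_mm
    near = subject_mm * (hyperfocal - focal_mm) / (hyperfocal + subject_mm - 2 * focal_mm)
    if subject_mm >= hyperfocal:
        far = math.inf  # focused at or beyond hyperfocal: sharp out to infinity
    else:
        far = subject_mm * (hyperfocal - focal_mm) / (hyperfocal - subject_mm)
    return near, far


# The primes mentioned in the interview, expressed as engine FOVs.
for focal in (35, 50, 80, 100):
    print(f"{focal} mm -> {horizontal_fov_deg(focal):.1f} deg horizontal FOV")

# Depth of field for a subject 3 m from a 50 mm lens at f/2.8 and f/1.8.
for stop in (2.8, 1.8):
    near, far = depth_of_field(50, stop, 3000)
    print(f"f/{stop}: {near / 1000:.2f} m to {far / 1000:.2f} m")
```

Under these assumptions, a subject 3 m from a 50 mm lens has roughly 0.50 m of acceptable focus at f/2.8 and roughly 0.32 m at f/1.8, which is the kind of trade-off that decides how softly the background falls away behind a character.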

fxg: One of the nice effects is the interactive lighting from the weapons fire.

Wilbert Jr.: We got really lucky with the system Epic had built into the engine, so weapon and tracer fire was already set up to some extent. What we would do for the weapon fire is animate a point light to give the lighting effect. In filmmaking, with the new DSLRs and RED sensors coming out, you now have the ability to shoot using found light, use that to add drama to your scene, and not have to make sure you have enough light to get the right exposure. That was the concept we brought into this trailer: what is the light in the scene if you were there as a photographer doing your thing? I thought there would be some light, but it would be too dark for certain things, so we were very particular about the muzzle flashes as lighting sources. Fire and explosions going off were also very important.
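Animating a point light for each muzzle flash, as described above, amounts to keyframing a short intensity spike per shot. The sketch below is hypothetical: the exponential decay, the peak value and the 20 ms time constant are illustrative assumptions, and the output is simply (frame, intensity) pairs at the engine’s 30 fps that could be keyed onto a light.

```python
import math

FPS = 30  # the engine's export rate


def muzzle_flash_intensity(t: float, shot_times, peak: float = 50.0,
                           decay_s: float = 0.02) -> float:
    """Point-light brightness at time t (seconds).

    Each shot jumps the light to `peak` and then decays exponentially;
    overlapping shots simply add together.
    """
    total = 0.0
    for shot in shot_times:
        if t >= shot:
            total += peak * math.exp(-(t - shot) / decay_s)
    return total


def bake_keys(shot_times, duration_s: float, fps: int = FPS, **params):
    """Sample the envelope once per exported frame as (frame, intensity) keys."""
    num_frames = round(duration_s * fps)
    return [(i, muzzle_flash_intensity(i / fps, shot_times, **params))
            for i in range(num_frames)]


# A short burst: three rounds 0.1 s apart, over half a second of screen time.
keys = bake_keys([0.0, 0.1, 0.2], duration_s=0.5)
for frame, intensity in keys[:8]:
    print(frame, round(intensity, 2))
```

With a decay much shorter than one 1/30 s frame, each flash reads as a one-frame pop with a faint tail on the next frame, close to how a real muzzle flash registers on camera.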

fxg: Finally, how did you use the editing and music to tell the story?

Wilbert Jr.: We stayed close to the storyboards and previs. We spend a lot of time at the head of these pieces planning them. That can sometimes be difficult for those involved, because often we need to get to the next stage of production to finalise shots. But we spent the appropriate time, because the previs is where we select our track and define our piece. That’s the system we’ve set up here. It was extremely important that we walk out of editorial with the previs as essentially our production, with our music and our imagery: basically what I call my shooting bible. Everyone can then understand what the piece is before we go into production.

Credits

Gears of War 3 “Darkest Hour” (AKA “Ashes to Ashes”)

Directed by: Vernon R. Wilbert Jr.

Agency: agencytwofifteen, San Francisco

Scott Duchon: ECD

John Patroulis: ECD

Paul Caiozzo: Creative Director

Aramis Israel: Art Director

Rey Andrade: Art Director

Tom Wright: Sr. Producer

Peter Goldstein: Group Account Director

Production, Animation, Editorial & Visual Effects by: DIGITAL DOMAIN, INC.

Ed Ulbrich: President, Commercial Division; Executive Vice President

Karen Anderson: Executive Producer

Vernon R. Wilbert Jr.: Director; Visual Effects Supervisor

Tim Jones: CG Supervisor

Melanie La Rue: Sr. Producer

William Lemmon: Coordinator

Dwayne Turner: Storyboard Artist

Russ Glasgow: Editor

Niles Heckman: Previs Artist

Ryan Vance: Technical Director

Mårten Larsson: Technical Director

Erich Hauptmann Jr.: Technical Director

Derek Crosby: Technical Director, Character Rigger

Adrian Dimond: Character Rigger

George Saavedra: Character Rigger

Rick Glenn: Lead Animator

Marc Perrera: Animator

Tim Ranck: Animator

William R. Wright: Animator

Margaret Bright-Ryan: Lighter

Jon Gourley: Lighter

Daisuke Nagae: Lighter

Matthew Bell: Modeler, Lighter

Trisha McNamara: Modeler

Daniel Thron: Matte Painter

Christopher DeCristo: Lead Flame Compositor

Lisa Tomei: Flame Compositor

Les Umberger: Flame Compositor

Production Company: Anonymous Content

Joseph Kosinski: Creative Director

Jeff Baron: Executive Producer