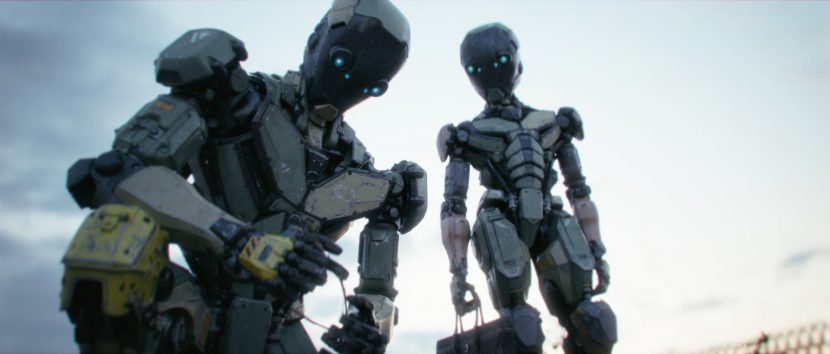

When robot rebels go after you and your family, what do you do? Take ‘em out. Robot Style.

Director Kevin Margo worked on this film in the winter of 2013; it served as both a creative exploration and a testing ground for virtual production. “At the end of 2013, I proposed the short film, and a virtual production prototype pipeline to the Chaos Group and then we hit the ground running in January 2014”, Margo told fxguide.

Now, some four years later, the team are announcing that Construct is in feature film development with production company Automatik, helmed by Brian Kavanaugh-Jones (Midnight Special, Operation Finale) and Fred Berger (La La Land). Alexander & Lee’s Brandon Millan will produce the script penned by Marcos Gabriel, with Nightfox financing development. The feature version will build on the story of working man Aiden Connolly, whose robotic son is kidnapped by a desperate father working with a rogue group of AIs in a world where robots represent the lowest socio-economic class.

Construct, the short film, runs 12 minutes and pits a gentle android against the murderous robots that mean to do him harm. The virtual production pipeline that Margo first suggested in 2013/2014 did not have the advances of today’s real-time ray tracing, but it did use a form of ray tracing together with a stills solution nicknamed the ‘polaroid’. The system showed a noisy image in real time; clicking a ‘digital polaroid’ then produced a still that quickly converged to a much cleaner image.
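The noisy-preview-then-clean-still behaviour described above is typical of progressive rendering, where each extra pass adds another noisy sample per pixel and the running average settles toward the true value. The following is a minimal sketch of that idea for a single pixel, not the V-Ray implementation; the function names, the Gaussian noise model and the pass counts are all assumptions made for illustration.

```python
import random


def noisy_sample(true_value, noise, rng):
    """One noisy brightness estimate for a pixel (stand-in for one render pass)."""
    return true_value + rng.gauss(0.0, noise)


def progressive_estimate(true_value, passes, noise=0.5, seed=0):
    """Running mean of `passes` noisy samples of the same pixel."""
    rng = random.Random(seed)
    mean = 0.0
    for n in range(1, passes + 1):
        sample = noisy_sample(true_value, noise, rng)
        mean += (sample - mean) / n  # incremental average, no sample history kept
    return mean


def rms_error(true_value, passes, trials=200, noise=0.5):
    """Root-mean-square error of the estimate over many independent trials."""
    errors = [
        progressive_estimate(true_value, passes, noise, seed=t) - true_value
        for t in range(trials)
    ]
    return (sum(e * e for e in errors) / trials) ** 0.5


# Hypothetical settings: 1 pass for the live view, 256 passes for the still.
live_error = rms_error(0.6, passes=1)
polaroid_error = rms_error(0.6, passes=256)
print(f"live view error:  {live_error:.3f}")
print(f"polaroid error:   {polaroid_error:.3f}")
```

Under this noise model the error shrinks roughly with the square root of the number of passes, which is why a still left to refine for a moment looks far cleaner than the live view.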

“We did six or seven mocap shoots in January and February 2014 and at the same time Vlado and some of the V-Ray team were prototyping, plugging V-Ray via the SDK into MotionBuilder,” explained Margo. “February 2014 was when we were in the motion capture volume starting to prototype all of these things layered on top of each other. We had my lead actor in a mocap suit, we had a virtual camera on the stage, and we had the first alpha builds of this MotionBuilder pipeline running.” This first capture session provided all the footage seen in the Part One behind-the-scenes video above.

Created by Kevin Margo and a team of artist friends, Construct has used the latest advances in technology to turn in a feature-grade film, without the budget or resources of a major studio.

Kevin Margo is a filmmaker, director and VFX/CG supervisor, who has worked on everything from the Batman Arkham cinematics to the infamous leaked Deadpool test footage that earned the film a greenlight. Since 2011, Kevin has created two short films: the surreal sci-fi viral hit Grounded, and Construct, which has been a test bed for virtual production technology.

The Construct team has grown over the years, utilizing a wide range of talents, from Blur Studio artists (where Margo has directed and supervised VFX) to the stunt choreographer for Daredevil and Deadpool. Liam Neeson’s stunt double even got involved, providing movements for the film’s antagonistic foreman. In total, 75 artists and performers have contributed to the short, helping Margo realize 210 all-CG shots, often out of his 300-square-foot apartment in Venice.

Designed for realism, Construct’s run-and-gun, “handheld” shooting method was so immersive that the short began fooling other artists, who assumed that Margo had tracked the digital robots atop live-action plates. “Everybody wanted to know what camera I was using,” said Margo. “When in reality, our team was just churning out well-lit, photoreal CG backgrounds using ‘natural light’ and V-Ray.”

The second behind-the-scenes video (above) was from 2015, one year later. It was also presented at GTC that year. For this GTC presentation, Margo partnered with NVIDIA and Chaos Group, gaining access to the emerging tech that would help him reach his goals.

“Nvidia invited us to use their GPU farm cluster in Santa Clara, it’s like 150 GPUs,” he explains. “So then we wound up shooting that second part of the behind the scenes in Culver City. We had a little makeshift MoCap setup and we were streaming essentially the transformed data of the robot skeleton up to Santa Clara.”

The team had V-Ray scene files sitting on the Nvidia GPUs in the cluster, and these received the transformed data for 150 robot parts in real time. “Actually, we only wound up using 32 GPUs. Each of the GPUs was assigned a little part of the overall frame. Each GPU was running on a little tile and then, very quickly, it would get stitched together into a single image and then compressed.” This was then streamed in the background to Culver City so that it could be displayed on a virtual production monitor that Margo was viewing. “This was all being captured in tandem with the performer and their data being recorded.”
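The tile-per-GPU approach Margo describes can be sketched as a split, render and stitch loop. This is an illustration only: the 8 x 4 grid (giving 32 tiles), the 960 x 540 frame size and every function name here are assumptions, and `render_tile` is a placeholder that fills each tile with its worker number rather than ray tracing anything.

```python
def split_into_tiles(width, height, cols, rows):
    """Return (x0, y0, x1, y1) boxes that cover the frame without gaps or overlap."""
    tiles = []
    for r in range(rows):
        for c in range(cols):
            x0 = c * width // cols
            x1 = (c + 1) * width // cols
            y0 = r * height // rows
            y1 = (r + 1) * height // rows
            tiles.append((x0, y0, x1, y1))
    return tiles


def render_tile(box, worker_id):
    """Hypothetical stand-in for one GPU: fill the tile with the worker's id."""
    x0, y0, x1, y1 = box
    return [[worker_id] * (x1 - x0) for _ in range(y1 - y0)]


def stitch(width, height, tiles, results):
    """Copy each rendered tile back into its place in a single full frame."""
    frame = [[None] * width for _ in range(height)]
    for (x0, y0, x1, y1), pixels in zip(tiles, results):
        for dy, row in enumerate(pixels):
            frame[y0 + dy][x0:x1] = row
    return frame


WIDTH, HEIGHT = 960, 540  # assumed preview resolution, not from the article
tiles = split_into_tiles(WIDTH, HEIGHT, cols=8, rows=4)  # 32 tiles, one per GPU
results = [render_tile(box, i) for i, box in enumerate(tiles)]
frame = stitch(WIDTH, HEIGHT, tiles, results)
print(len(tiles), "tiles stitched into a", len(frame[0]), "x", len(frame), "frame")
```

In a real cluster the `render_tile` calls would run in parallel on separate machines, and the stitched frame would then be compressed before streaming; both steps are left out here to keep the sketch short.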

This system of rendering at a different location raises the issue of latency. For the system to work effectively, there needed to be very little lag between any move by the DOP and Margo seeing the result returned from the Nvidia GPU farm to his monitor right next to the DOP. The first tests at 2:00 AM were hugely impressive. “At that time in the early morning, we would see maybe a 70 to 100 millisecond delay, which is maybe a two or three frame lag,” Margo recalls. “But then we came back and shot the video at 9:00 PM the next day – which is peak Netflix download time! All of a sudden we were seeing a 10-15 frame lag. It’s just fascinating those kinds of issues that arise when you’re working with the public internet.”
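To relate the millisecond and frame figures in Margo's account, the conversion is simply latency multiplied by frame rate. The article does not state the frame rate of the monitor feed, so the 24 and 30 fps values below are assumptions, as are the helper names.

```python
def latency_to_frames(latency_ms, fps=24.0):
    """Number of frames that elapse during a delay of `latency_ms`."""
    return latency_ms * fps / 1000.0


def frames_to_latency_ms(frames, fps=24.0):
    """Delay in milliseconds that corresponds to a lag of `frames` frames."""
    return frames * 1000.0 / fps


for fps in (24.0, 30.0):  # assumed frame rates
    low = latency_to_frames(70, fps)
    high = latency_to_frames(100, fps)
    print(f"{fps:.0f} fps: 70-100 ms is about {low:.1f} to {high:.1f} frames")

# The evening lag of 10 to 15 frames, expressed as a delay at an assumed 24 fps.
print(f"10 frames = {frames_to_latency_ms(10):.0f} ms")
print(f"15 frames = {frames_to_latency_ms(15):.0f} ms")
```

At either assumed rate, the early-morning delay works out to roughly two to three frames, while a 10 to 15 frame lag means the picture trails the camera move by around half a second.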

Construct is no stranger to surprise. When the first clips were released at GTC 2014, the one-minute teaser and accompanying behind-the-scenes video wowed artists and press with their innovative use of virtual production techniques, leading to appearances on CNET, Indiewire, Creators and Kotaku. In fact, an operating idea behind Construct was to speed up the CG workflow, so Margo could start to mirror live-action productions.

“Our story isn’t typical, in that we were in this situation where two massive companies were either giving us expensive hardware or building prototype tech just for us,” said Margo. “They really put the wind at our back, so we could explore GPU rendering and virtual production at the highest levels, and we put those creative benefits back into the short. At the time, we were doing things that had never been done before. No one had thought to ray trace while motion capturing.”

After all the capture was complete, the team started working on the data. “The short itself wound up taking about 20 months – almost two years – to essentially produce the total 12 minutes.” In the time since finishing it, Margo has been working on the pitch for the feature film version.

For a computationally heavy production like Construct, the application of GPU rendering, a style known for speed, seemed like the fastest path to finishing. Through NVIDIA, Margo was given access to their Visual Computing Appliance (VCA) system, which stacks eight high-end GPUs together. Its computational power was so remarkable that Construct’s total rendering time dropped from 480 to 60 days, giving artists even more reasons to add elaborate details.

Chaos Group provided a direct line to their development team, who created a brand-new rendering technology for Margo that linked V-Ray RT (now V-Ray GPU) to Autodesk’s MotionBuilder. Motion capture performances could now be applied to ray-traced characters and environments in real time, providing Margo with the type of information needed to give directives or make decisions on the spot.

“Being able to compose the shots with all my color, lights and 3D assets in the monitor was life-changing for me,” said Margo. “When you are in the moment, the last thing you want to do is stop and reassess. Real-time ray tracing gives you the freedom to be fluid and experimental, mirroring the feeling of live-action cinematography.”

The Construct team has continued its experiments since then, connecting their work to V-Ray Cloud / Google Cloud.

Understandably, Margo is very interested in new developments such as the Nvidia RTX real-time ray tracing card announced at SIGGRAPH 2018. He is still a major advocate of the benefits of virtual production. “My aspirational goal is to replicate the live action filmmaking process as closely as possible in a production setting. It’s really about trying to get everything happening concurrently,” he explains, outlining his view of the road ahead. “It creates the conditions for experimentation, exploration, dynamic thinking, and happy accidents to emerge organically in a way that I think that the current animation pipeline and process just does not facilitate.”

Margo points out that his lighting and rendering team often have to reverse engineer good compositions to camera and animation that had been locked down months earlier. “And the only artistic element that was considered when compositions were being established early in the process (on set) was just more or less form silhouette because the fidelity of the viewport rendering was not good enough that you could consider reflection, depth of field, naturalistic and physically based lighting and materials or volumetrics.” He wants (virtual) cinematographers embracing all the potential reality of what an offline render can do, while “at the same time you’ve got the director, the key department heads and the performers on set – so everybody can be riffing off each other and you kind of walk off the day set with so much more creative clarity and commitment.”

“We are moving full steam ahead, developing the feature and meeting with creative/production partners that are as passionate about this world and the future of virtual production as we are,” said Margo. “Small teams are rewriting the map from their living rooms, and from what we can tell, Hollywood is starting to take notice.”