Project DreamSpace is a three-year, EU-funded project that aims to combine the work of universities, industry and professionals to produce new and innovative workflows. It is especially focused on virtual production and on moving technology processes earlier in the production, such as on set. It is as ambitious as it is broad, and the team recently presented its progress at FMX in Germany. Some of the technology impressed, some showed promise, but perhaps the most impressive aspect is the power of mixing academics, industry researchers and actual practitioners together with a reasonable planning horizon. Too often the technology must serve the immediate project, the shot that is directly in front of one, and newer unexplored approaches are left as pipe dreams or as after-hours hobbies. Not so with DreamSpace.

From an industry standpoint alone, there are two very powerful and significant players, The Foundry and Stargate Studios Germany, both experts at real-world visual effects, but the other partners in the project also carry serious intellectual and academic force. Some of the less well-known partners have literally dozens of PhD researchers and teams of people developing core technologies that aim to give this project some really solid innovation. The brilliance of the alliance is that, without real-world industry direction, the pure research may be ‘good in principle’ but unable to work on a real-world set; combined with the project’s industry partners, DreamSpace stands a real chance of benefiting not only European productions but global pipelines as well.

What will be interesting to see is how these ideas advance over the coming 12 to 18 months. On paper the plans for several of the projects are extremely promising, but not all the technology is fully working yet and some is still more theoretical, which is not something the project wants. The team is very keen to have DreamSpace tools used in production. As the team put it, “the only lasting reason production will use new technology is to save time, money and increase creative flexibility”.

The companies presenting recently as part of the European DreamSpace project were:

- The Foundry

- iMinds

- Stargate Studios Germany

- Saarland University

- Ncam

- Filmakademie Baden-Wuerttemberg

Stages in virtual production

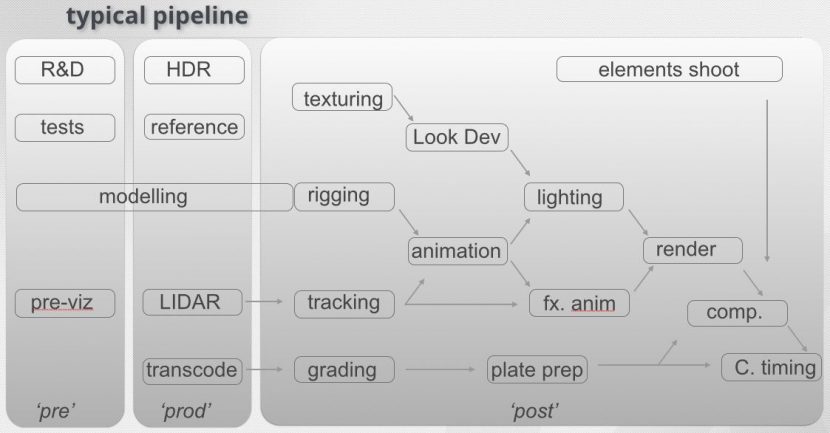

To fully see how the companies fit together one has to consider a traditional production model and then compare it to the new or virtual production model.

Traditionally work is done to prepare for the shoot, but primarily it is about the old notion of pre-production, then production with data gathering and second unit, all feeding into a major post-production phase. The DreamSpace team do not pretend that they alone have advocated this transition to a more virtual production model, but they are embracing it and exploring it for any creative opportunities or financial benefits.

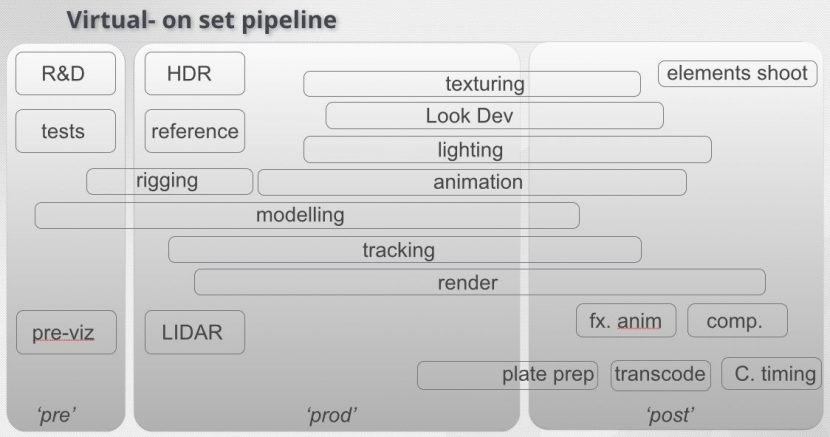

The new model blends the old roles and the new. This first view shows a simplified version of traditional post.

This second diagram shows how these lines are blurring and work is moving earlier in the process.


In the new approach things are blended: physical production design leads into virtual production design and set modeling on computer, since CG set construction and optimization are vastly faster and easier before any actual physical set construction is done. It also allows for the boundaries between set and set extension to be discussed, explored and agreed upon before shooting.

For studio work, the stage is calibrated, and any on-set testing and alignment is done. This may involve some prelight and live camera and compositing testing in the studio.

Principal photography and on-set comping can allow for a much higher shooting pace, with the filmed page count being as high as 6 to 30 pages a day. In traditional feature work, 2 pages a day would not be an unreasonable expectation.

Dailies can now become ‘hourlies’ as shots can be reviewed during the day and groups of dailies distributed widely from the on set technical village even as shooting continues.

Referring to the diagrams above, Nic Hatch, CEO of Ncam, points out that “there is no single workflow which can be defined, whereby everyone can or will follow”, adding that keying is an important part and is achievable during the shoot if the setup is done correctly, although here again not everything is of the same difficulty or complexity. “For example, a ‘simple’ green screen car shoot setup might be achievable with a good camera track, a decent key, good LED lighting based on pre-shot plates and some good colour correction tools, all at TV episodic quality…this to be achievable with relative ease. However, full CG transformers and exploding buildings would present a significantly more challenging proposition. And there is, of course, a lot of middle ground”.

Even today, he adds, previs rarely stops at the end of pre-prod. “Postvis is becoming more important due to audience test screenings. I would say that today, previs continues throughout the production process in terms of feeding the virtual production assets. Postvis is therefore hopefully part of the output of the virtual production process. Or do we call it on set vis or prodvis!”

The nature of such generalizations is that one size rarely fits all, and here this is especially the case. The Foundry’s Jon Starck adds, “I would suggest that rigging, animation, texturing, look-dev would happen up-front so that it is available on-set. Creative decisions on layout, lighting, timing could then happen on-set through to post”.

Edit and picture lock on a 120 minute program can translate to processing and editing some 180,000 frames, which in turn could be 12,000 actual six second cuts or edits. Making sure the metadata is carried forward with the transcode can be vital: any saving multiplied more than 10,000 times makes a huge difference(!) Making the editing process as automated and as integrated with project management tools as possible is key, especially when episodic work has multiple overlapping episodes: one episode is being prepared while another is being shot, another is being edited, and yet another is in sound, VFX or grading, or all at once.

To help with getting things right in the first place, it is desirable not only to previz shots and see the green screen replaced by the virtual set; any effort to get the virtual set lit correctly, so that the CGI components and the live action share the same lighting plan, is also helpful. Here virtual production offers the chance to relight the CGI or the set based on each other. With real-time match-moving of any camera, high-quality data means not having to start from scratch on each new shot or scene when actual post-production starts.

As post starts producing final shots, it is key to make sure these final comps, from say Nuke, can be effortlessly seen in context and dropped into the final sequence and/or dovetailed with the color grade.

The end game of the work DreamSpace is exploring is really that all processes span the production chain, and that for different types of productions the load would fall at different parts of that chain. “For example in high-end episodic production today there is front loading of the preparation so that shots can be delivered with a fast turn-around. With Virtual Production the focus can be on the creative decisions on-set feeding through to finalizing in post,” explains Starck, who believes these are exciting times for production. He adds, “the story for us has been the general shift and merging of what is considered ‘pre’, ‘prod’ and ‘post’ with digital pipelines, for example with pre-viz on-set and the potential to deliver final composites from set”.

While some of this may seem like just common sense, as many artists know there is a real gap between what could be possible and what actually happens. Lens data is not recorded, metadata is lost in transcoding, match moving is hard due to lack of reference, lighting fails to match plates and assets need to be rebuilt, while previz shots seem to have been ignored or forgotten altogether in the chaos of a live action shoot. Such a production falls into “fixing in post” rather than using tools from post to get it right on set.

Immersive Experiences

One aspect of the DreamSpace project that does not neatly fall into this model is the immersive experiences work, which is a cross between VR and art piece. Many of the DreamSpace technologies are relevant to VR and newer applications of viewer experiences, and the DreamSpace team would be wrong to ignore such areas, but the actual VR work the DreamSpace project is exploring is more tangential to the processes discussed above than linearly included in them.

Their work here is almost avant-garde: art pieces are being developed that explore new and innovative audience experiences, particularly with respect to art, culture and immersion. The avant-garde pushes the boundaries of what is accepted and expected in the entertainment realm, and why not, but this work seems to cut across most of the areas without directly extending the current approaches in a technical sense.

Stargate Studios Germany

Having been in production for over 25 years, starting in the USA, Stargate Studios is the ideal production partner to be in DreamSpace. The company is already a global leader in virtual stages and virtual backlots. In addition to Los Angeles, the company has offices in Vancouver, Toronto and Malta, with more than 200 artists and VFX supervisors. In Germany it has facilities in Berlin and Cologne. It is the German team that is working as part of DreamSpace.

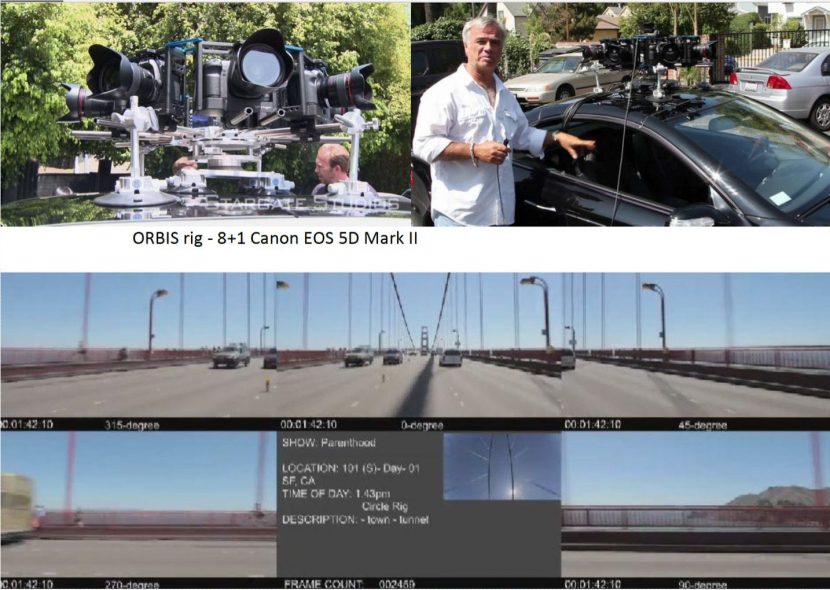

Stargate Studios Germany has long experimented with, and then implemented, virtual car rigs and live-action, real-time composited virtual stages. It is easy to see how Stargate Studios’ real-world work, such as 180 or wide field of view location capture, is a natural fit with the work of iMinds, Ncam and others in the DreamSpace team.

Stargate Studios Germany is perfectly placed not only to use the technology as it is being developed by the other European partners but also to specify for them the needs of the broader community, feeding back into the research initiative’s goals.

The Foundry

The Foundry provides not only the serious image research of its extensive R&D team but also a global perspective on best practice. While it is not the intent of The Foundry team to directly produce a new single product based on their involvement, the team from the UK are very much exploring ways of taking Foundry products on set and making better use of their tools away from normal ‘post-production’. This is currently most evident in finding better uses for Nuke and Katana on set. As of FMX, there was little evidence of the Modo team being involved (this may be due to the Euro-focus of the project vs. the primarily US Modo heritage, or it might just be the nature of the projects or problems being explored). Much of the real-time lighting is Katana focused. Katana does not have a particularly strong reputation in real-time compared to Modo, Mari or any of the other Foundry products, but at last year’s Siggraph Pixar did show a GPU Katana prototype that was remarkable for its real-time RenderMan environment work. While the work was very much under investigation last year, … real-time set lighting was shown first, being an order of magnitude easier to do in real time than full Pixar character work. The EU DreamSpace work is not built on Pixar’s work, but it shows that more than one group sees Katana as a good tool in this area of real-time lighting.

An example of how The Foundry’s work may cross over is the investigation into VR and AR/MR tools. When working with 360 degree spherical imagery, the image is often displayed as a Lat-Long projection. Much like a normal world map, when an image is flattened in this way, any area near the top or bottom of the frame is stretched. This is why Greenland seems so big compared to Africa. (A Mercator projection is even worse than a normal Lat-Long, making the two seem similar in size when Greenland is a fraction of the size, about 1/14th the size of Africa.) In compositing terms, this means a blur kernel or oval paint brush shape would need to dynamically change size, growing dramatically as it moves up a Lat-Long image, compared with the same stroke or kernel at the ‘equator’.
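The stretching described above can be put into numbers. On a Lat-Long (equirectangular) image every row spans the full 360 degrees of longitude, so the horizontal stretch relative to the equator is 1/cos(latitude). The following is only an illustrative sketch, not The Foundry's implementation: the function names are hypothetical, and the image is assumed to run from +90 degrees at the top row to -90 degrees at the bottom.

```python
import math


def horizontal_stretch(latitude_deg: float) -> float:
    """Horizontal stretch of a Lat-Long image at this latitude, relative to the equator."""
    cos_lat = math.cos(math.radians(latitude_deg))
    if cos_lat < 1e-6:
        # The top and bottom rows each represent a single point (the pole).
        raise ValueError("stretch is unbounded at the poles")
    return 1.0 / cos_lat


def row_to_latitude(row: int, image_height: int) -> float:
    """Latitude in degrees at the centre of a pixel row (row 0 is the top)."""
    return 90.0 - (row + 0.5) * 180.0 / image_height


def kernel_width_px(equator_width_px: float, row: int, image_height: int) -> float:
    """Width a blur kernel or brush needs on this row to match its footprint at the equator."""
    return equator_width_px * horizontal_stretch(row_to_latitude(row, image_height))


if __name__ == "__main__":
    # A 10 pixel kernel sampled every 10 degrees of latitude on a 2048 pixel tall image.
    height = 2048
    for lat in (0, 10, 20, 30, 40, 50, 60, 70, 80):
        row = int((90.0 - lat) / 180.0 * height)
        print(lat, round(kernel_width_px(10.0, row, height), 2))
```

At 60 degrees of latitude the kernel is already about twice as wide as at the equator, and it keeps growing rapidly towards the poles.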

“As VR & AR continue to command attention from content creators, our main challenge remains finding ways in which complex, highly technical content can be created faster, with more flexibility and creativity” explained Jon Starck, Head of Research at The Foundry and technical coordinator for Project DreamSpace.

iMinds Omni-Directional Stereo Video

The Flemish government started a research unit: the Expertise Centre Digital Media, which is now a part of DreamSpace.

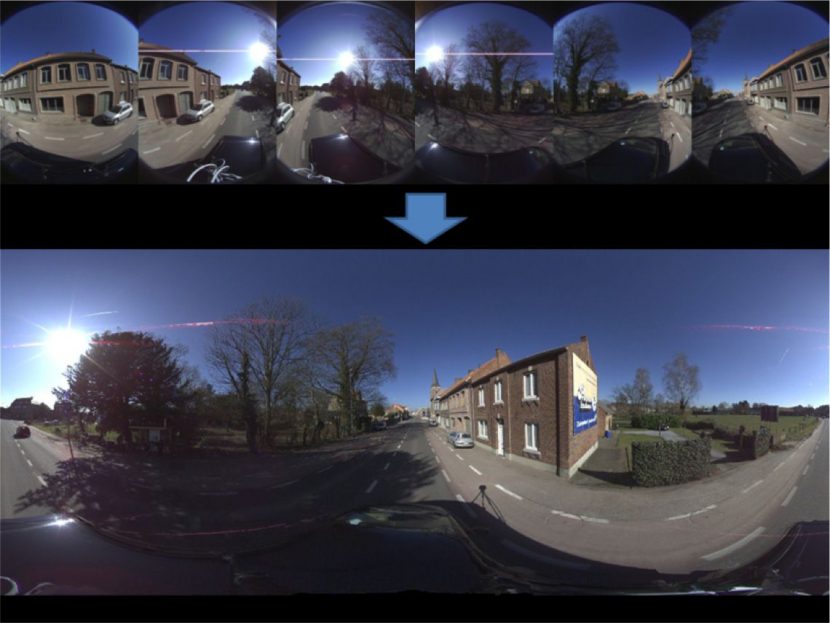

One of the key projects was a 360 ‘ODV’ plate shooting rig which could capture an environment in stereo. The first rig is able to film in all directions at once and stitch the pairs of images into two offset stereo plates. The stereo is important for its own sake and for the ability to use the stereo offset to work out depth information which, in turn, can be used for location or set reconstruction.
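As a rough illustration of how a stereo offset yields depth, the textbook relation for a conventional rectified stereo pair is depth = focal length × baseline / disparity. This is an assumption made for illustration only: it is not iMinds' method, and stitched omni-directional stereo plates need a more involved geometry. The function name and the numbers are hypothetical.

```python
def depth_from_disparity(focal_length_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth in metres for a rectified pinhole stereo pair (textbook relation)."""
    if disparity_px <= 0:
        # Zero disparity corresponds to a point at infinity.
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Hypothetical values: 1000 px focal length, 6.5 cm between the two views.
    for disparity in (5.0, 10.0, 20.0):
        print(disparity, depth_from_disparity(1000.0, 0.065, disparity))
```

The relation shows why small offsets matter: halving the disparity doubles the estimated depth, so distant parts of a location are the hardest to reconstruct accurately.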

At FMX the first of these rigs was on display. It was built from 2 Megapixel cameras, each operating at 720p and shooting at 25 fps. While this is valid, it is only the first step; the new rig currently being built represents a major leap in production quality and thus usefulness.

The next rig is being made with 12 Megapixel sensors, which are also used in some production Blackmagic cameras. These new sensors not only allow 4K resolution but also offer a much wider 12-13 stops of dynamic range and can run at much higher speeds, capturing up to 180 fps.

The complexity of the rig lies not only in being portable but in making sure the 360 stereo alignment holds, since simple stereo fixes such as an ‘x translation’ of one eye, valid in normal stereo post-production, are simply not viable when the images are 360 stitched moving plates.

The team is both highly dedicated and very aware of real-world production’s need for fine accuracy and precision. It is easy to see how some of the virtual set work will integrate and mesh with this key capture technology.

Universitaet des Saarlandes

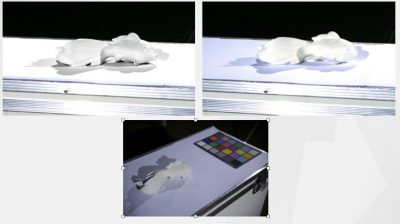

Universitaet des Saarlandes is a huge research institution with some 30 professors and 300 Ph.D. students spread across three campuses in Germany. At FMX their primary project showed a complex, bi-directional relationship between real-world lighting and virtual lighting, something that might typically end up in a real-time Katana system but for now is a stand-alone process.

To achieve the on-set lighting ‘feedback loop’ to virtual lighting, the team does a series of light probes on set, sampling the light at various points in the stage volume. The system discussed at FMX was a closed system that had control of the lights on set via a computerized, DMX-controlled lighting desk, together with a synced system that allowed one to place the virtual object back in the set with the same lighting. The key to the innovation was that as the lighting changed on the set, or the position of the virtual object changed in the volume, the virtual lighting changed to match. Furthermore, the opposite was also true: a virtual light could be adjusted, and there is no reason this would not also feed the studio lights controlled by the real-world lighting desk.

The light capture estimates, from a number of light probes, a CGI light setting that can be imported into a renderer. This provides an accurate directional and positional light setting that preserves the fine details lighting directors need, e.g. to light faces in a dramatic way. This goes beyond the usual HDR light-probe illumination, which gives no fine detail in the close range of objects. (As of FMX the system was using just point lights, but the team seemed eager to explore area lights as well.)

Changes to the lights are tracked in real time and transferred directly to a real-time raytracer, giving the creative team the means to make on-set decisions about the lighting of both the real and virtual scene components.
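The two-way link between virtual lights and a DMX-controlled desk can be sketched in a few lines. This is a minimal illustration, not the Saarland team's system: the `Light` class and the function names are hypothetical, and a linear dimmer curve is assumed (real fixtures often use non-linear curves). The only protocol facts relied on are that a DMX512 universe carries up to 512 channels and that each channel holds an 8-bit level.

```python
from dataclasses import dataclass
from typing import Dict, List

DMX_MAX_LEVEL = 255   # DMX512 channel levels are 8-bit (0-255)
DMX_CHANNELS = 512    # channels available in one DMX512 universe


@dataclass
class Light:
    """Hypothetical light shared by the virtual scene and the lighting desk."""
    name: str
    channel: int       # DMX channel, 1-512
    intensity: float   # normalised 0.0-1.0 in the virtual scene


def to_dmx_level(intensity: float) -> int:
    """Convert a normalised intensity to an 8-bit DMX level (linear curve assumed)."""
    clamped = min(1.0, max(0.0, intensity))
    return round(clamped * DMX_MAX_LEVEL)


def from_dmx_level(level: int) -> float:
    """Convert an 8-bit DMX level back to a normalised intensity."""
    if not 0 <= level <= DMX_MAX_LEVEL:
        raise ValueError("DMX level must be in the range 0-255")
    return level / DMX_MAX_LEVEL


def virtual_to_desk(lights: List[Light]) -> Dict[int, int]:
    """Build channel -> level values to send to the desk from the virtual lights."""
    frame: Dict[int, int] = {}
    for light in lights:
        if not 1 <= light.channel <= DMX_CHANNELS:
            raise ValueError(f"{light.name}: channel out of range")
        frame[light.channel] = to_dmx_level(light.intensity)
    return frame


def desk_to_virtual(frame: Dict[int, int], lights: List[Light]) -> None:
    """Update the virtual lights in place from levels read back from the desk."""
    for light in lights:
        if light.channel in frame:
            light.intensity = from_dmx_level(frame[light.channel])


if __name__ == "__main__":
    rig = [Light("key", 1, 1.0), Light("fill", 2, 0.2)]
    print(virtual_to_desk(rig))     # virtual scene drives the studio lights
    desk_to_virtual({2: 255}, rig)  # desk operator pushes the fill to full
    print(rig)
```

Keeping both directions as small pure conversions means either side, the desk or the renderer, can act as the source of a change.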

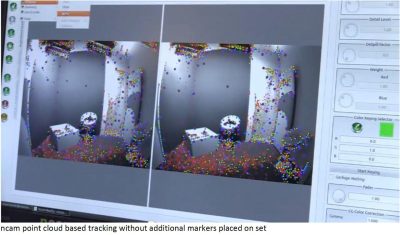

Ncam

Ncam is a real-world markerless camera tracking system with depth keying that is working today. The system interfaces with Autodesk MotionBuilder, allowing control of:

- vertices

- textures

- normals

- transparency

- animation

- caches

The system also interfaces with real-time renderers. The Ncam rig at FMX allowed one to stand in front of a green screen and be composited in real time into a virtual set, with both the virtual set and all the camera work matching what is happening live on the studio camera. Furthermore, as the system has depth information, if the virtual set has a shop front or window, the live-action actor would be inside the window but in front of, say, the shop counter, provided their position in the real world matched the layout of the virtual world. Rendering parts of the scene in front of and simultaneously behind the actor, in real time and based on their stage position, is vastly more helpful to directing a scene than just a slap comp of the actors on top of any type of background. The system we tested produces a planar map of the actor, so one cannot turn side-on to camera and have one’s right hand in front of the window and the left hand still behind; one is either all in or all out. Still, this automatic use of depth is more than a gimmick, and in concert with the ease of matching camera and lens movement, the speed and low latency of the system make it highly desirable in many virtual set applications.
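The ‘all in or all out’ behaviour follows naturally from using a single depth value for the whole actor. The sketch below illustrates that idea on one row of pixels; it is an assumption-laden toy, not Ncam's implementation. The function name, the boolean matte and the per-pixel depth list for the virtual set are all hypothetical.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # simple 8-bit RGB triple


def depth_composite(
    set_rgb: List[Pixel],
    set_depth_m: List[float],
    actor_rgb: List[Pixel],
    actor_matte: List[bool],
    actor_depth_m: float,
) -> List[Pixel]:
    """Composite a keyed actor into a virtual set using one planar actor depth.

    The actor shows only where the key kept them (matte is True) and where the
    virtual set at that pixel is further from camera than the actor's plane.
    """
    if not (len(set_rgb) == len(set_depth_m) == len(actor_rgb) == len(actor_matte)):
        raise ValueError("all per-pixel inputs must have the same length")
    out: List[Pixel] = []
    for cg, cg_depth, live, keyed_in in zip(set_rgb, set_depth_m, actor_rgb, actor_matte):
        actor_visible = keyed_in and actor_depth_m < cg_depth
        out.append(live if actor_visible else cg)
    return out


if __name__ == "__main__":
    FRAME, COUNTER, ACTOR, GREEN = (90, 60, 30), (200, 200, 200), (220, 170, 140), (0, 255, 0)
    # Three pixels: window frame at 2 m, shop counter at 6 m, counter again.
    set_rgb = [FRAME, COUNTER, COUNTER]
    set_depth = [2.0, 6.0, 6.0]
    actor_rgb = [ACTOR, ACTOR, GREEN]
    matte = [True, True, False]  # third pixel is green screen, keyed out
    # Actor standing 4 m from camera: behind the frame, in front of the counter.
    print(depth_composite(set_rgb, set_depth, actor_rgb, matte, 4.0))
```

Because `actor_depth_m` is one number rather than a per-pixel map, moving the actor plane in front of or behind a set element flips every actor pixel over that element at once, which matches the limitation described above.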


Ncam is a proven technology, a key tool and a real asset to the DreamSpace project overall.

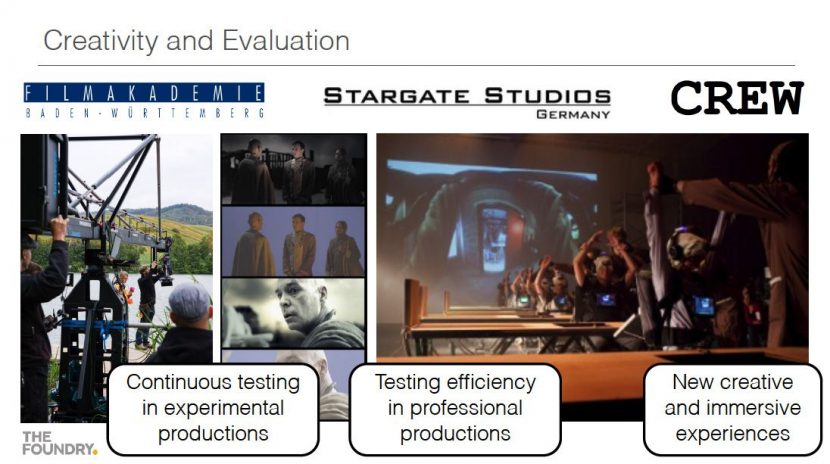

Filmakademie Baden-Wuerttemberg

Filmakademie Baden-Wuerttemberg is the final member of the team presenting at stage one of the DreamSpace project, and their mandate is to allow creative control of the technology and environments that have been discussed.

“Our major goal is to develop an intuitive approach to editing set, light and animation parameters – no expert skills should be required”

The team have executed a series of tests, including evaluations using:

- Oculus (with camera attached for AR view)

- Leap Motion for gesture recognition

- the Meta Space Glasses

At the moment the most effective tool seems to be a tablet editing tool: its simple yet powerful interface allows one to be mobile on set but still light, animate and edit parameters in a way that is open and accessible to anyone.

It is not just the notion of using the tablets as control surfaces: the team advocate allowing the tablet to be a device that one can ‘see through’, and thus see the principal camera (tracked via Ncam) in the virtual space. Where on set one may see the actor on green, on the tablet, as you control the comp, you see the live actor in the virtual set, lit and moving as one.

The light capture and “harmonization tablets” are connected to a DMX controller adjusting real and virtual light parameters at the same time.

Helmet and VR solutions are not off the table, but the challenges in using them in the real world and on set are non-trivial. The team will no doubt be exploring the much less isolating technology of HoloLens and Magic Leap further once they are more developed, but straight VR helmets isolate a creative from the other collaborators on set and can even be dangerous given the nature of sets, cranes, cables and props. For now, the tablet solutions are both effective and accessible, and lessons gained here can easily be applied to other technologies as the DreamSpace project moves forward.

In Summary

While technologies can be developed without formal projects like DreamSpace, it is unwise not to seek a wider collaboration between academic researchers and industry practitioners. One cannot predict what will come from such efforts, and if the research is too prescriptive there is no chance of unexpected developments. Short-term, immediate research is often little more than incremental problem solving. With budgets under pressure and timelines ever tighter, as an industry we would benefit from more three- or four-year research initiatives. Virtual production is barely an infant compared to the decades that normal production has had to evolve work practices and techniques. It will be interesting to see what comes from the concluding stage of DreamSpace.

For more information see the DreamSpace web site.