Pav Grochola is an Effects Supervisor at Sony Pictures Imageworks (SPI) and was co-effects supervisor on the Oscar-winning Spider-Man: Into the Spider-Verse (along with Ian Farnsworth). He was tasked with working out how to produce natural-looking line work for the film. (Check out our previous story on the whole film here).

A critical visual component for successfully achieving the comic book illustrative style in CGI was the creation of line work or “ink lines”. In testing, SPI discovered that any approach that created ink lines based on procedural “rules” (for example, toon shaders) was ineffective in achieving the natural look that was wanted. The fundamental problem is that artists simply do not draw based on limited “rule sets” or guidelines. SPI needed a much more robust solution to the problem of creating artistic-looking, hand-drawn line work, and a way to stick these drawings onto the animation and adjust them.

Pav Grochola joins as a guest contributor to explain how SPI used Machine Learning (ML) to solve the problem.

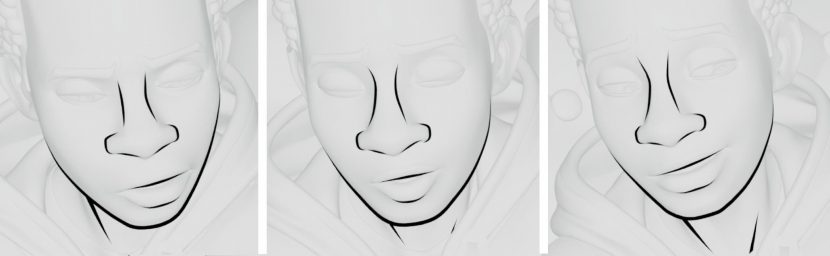

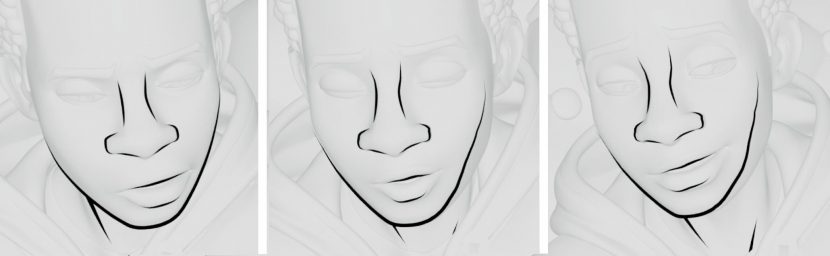

Above is an image to illustrate the challenge of creating hand-drawn ink lines on top of animated characters. This shows a typical example of using a “toon shader” and the undesirable result it produces. There is an overall lack of hand-drawn design and the resulting look is procedural. SPI needed a better way to capture the complex artistic decisions made when drawing.

In the next image above, the ink lines are drawn with the desired design on the left panel, but they are not adjusted as the character moves. The ink lines look good on the left panel, yet they quickly fall apart when Miles turns his head. “The line work no longer matches up correctly, particularly the jawline. We would get this result if we tried to create ink lines as a simple texture map. We needed a way of easily adjusting the drawings so they maintained their correct design throughout the animation,” explained Grochola.

In the bottom figure above, the ML system correctly adjusts the drawing as Miles turns his head.

SPI method

The team investigated other ink line techniques, for example the one used on the “Paperman” short by Disney. This method created screen-space velocity fields from the animation data and advected lines with these fields. “Though successful, we found dealing with velocity fields cumbersome. They were particularly problematic when there was overlapping geometry – for example if a hand moved across a face. Splitting out multiple velocity fields became impractical,” says Grochola. The Disney Paperman approach also did not lend itself to storing drawing solutions from one shot to another, an efficiency that the team was keen to have, given their feature film shot count. After consideration, Grochola explains that they agreed, “we wanted to create a system that leveraged machine learning”.

The SPI ink line tool has two modes, each addressing one of the two main technical challenges in creating a realistic hand-drawn set of ink lines. The ink line “manual mode” tool was created to adhere ink lines (curve geometry) onto 3D animated geometry, with easy controls for artists to modify the drawings. The ink lines needed constant adjustment to maintain their correct 2D-inspired design and to avoid appearing like a standard texture map instead of a dynamic drawing. The second technical challenge was capturing and cataloguing these artistic adjustments so that machine learning could speed up the workflow. This “machine learning mode” let artists produce ink lines in a much more predictable fashion and with greater efficiency.

Manual Mode

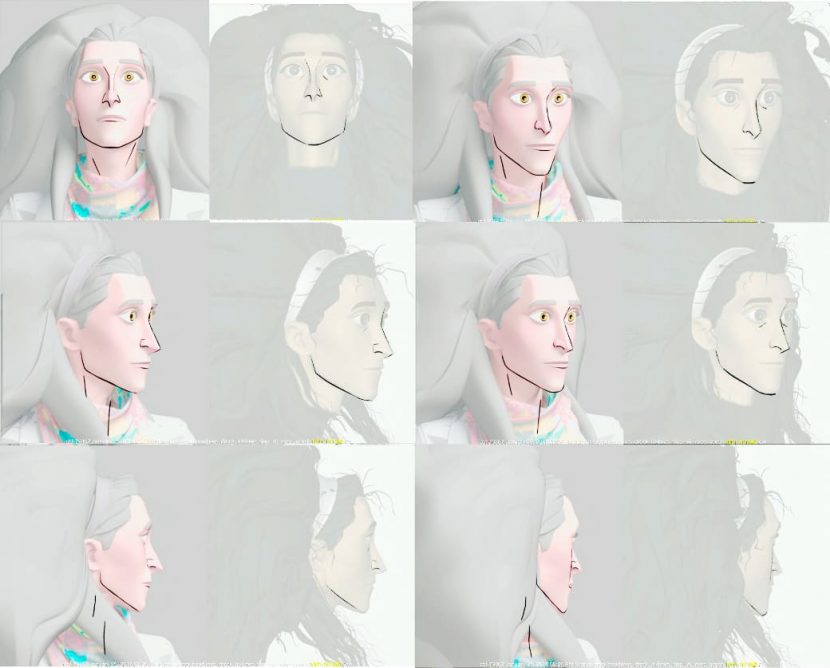

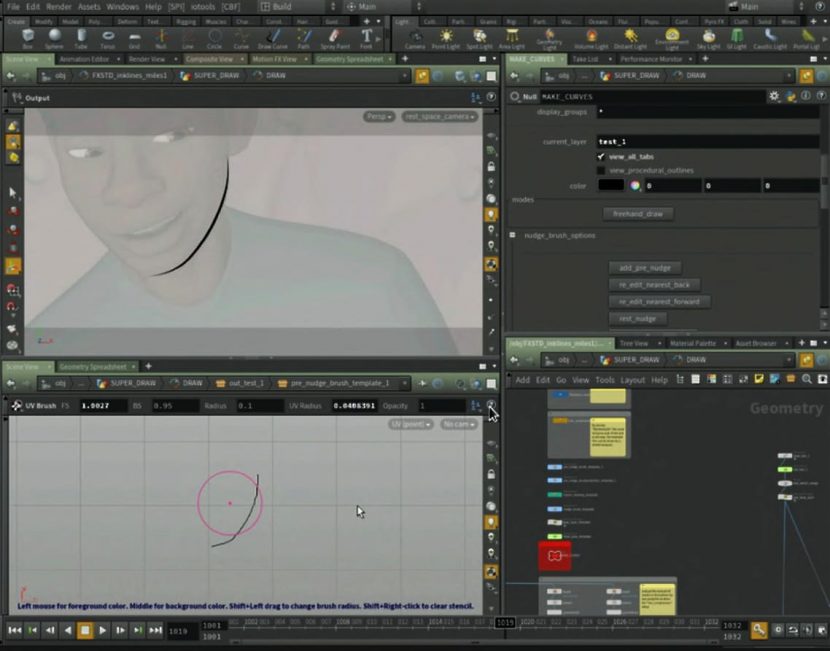

For the film, artists drew directly onto the character to get the correct line for a specific pose. After drawing ink lines, artists used a nudging “brush” to quickly and easily adjust their drawings. Nudges were automatically keyed and interpolated by the tool. “We projected the curves directly onto the geometry and interpolated the nudges in screen-space or an arbitrary UV space (in case of machine learning interpolations). Having artists work in 2D screen-space was critical, as this is the most intuitive space for artists to draw and nudge to get the result they were after,” recalls Grochola. The translation back to 3D space happened automatically, saving the artists the time and effort of making these adjustments. (See the image below). The artists could work in 2D space and use a soft brush to nudge the drawing.
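
As a rough illustration of what “keyed and interpolated” nudges could look like, the sketch below stores a 2D screen-space offset at a few key frames and blends between them. This is not SPI's tool: the function names, the pixel units, and the choice of linear interpolation are all assumptions made for the example.

```python
from bisect import bisect_right


def interpolate_nudge(keys, frame):
    """Return the (dx, dy) screen-space offset at `frame`.

    `keys` maps a key frame number to a (dx, dy) offset in pixels.
    Linear interpolation is an assumption; the article does not say
    which interpolation the production tool used.
    """
    frames = sorted(keys)
    # Hold the first/last key outside the keyed range.
    if frame <= frames[0]:
        return keys[frames[0]]
    if frame >= frames[-1]:
        return keys[frames[-1]]
    # Find the pair of keys that bracket `frame`.
    i = bisect_right(frames, frame)
    f0, f1 = frames[i - 1], frames[i]
    t = (frame - f0) / (f1 - f0)
    (x0, y0), (x1, y1) = keys[f0], keys[f1]
    return (x0 + t * (x1 - x0), y0 + t * (y1 - y0))


def nudged_point(projected_xy, keys, frame):
    """Apply the interpolated nudge to a curve point already projected to 2D."""
    dx, dy = interpolate_nudge(keys, frame)
    return (projected_xy[0] + dx, projected_xy[1] + dy)


# Hypothetical keys: no nudge on frame 1, a 10 px right / 4 px up nudge on frame 11.
keys = {1: (0.0, 0.0), 11: (10.0, -4.0)}
print(nudged_point((100.0, 50.0), keys, 6))  # halfway between the two keys
```

In a real pipeline the nudged 2D point would then be mapped back onto the 3D geometry, a step the article says happened automatically and which is left out here.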

Machine Learning Mode

There is a complex relationship between the adjustments artists made to ink lines and the character's position relative to the view angle, as well as the character's expression. Using the manual tool, the team would make these adjustments by hand. “We determined that in order to speed up artists' productivity over the course of the project we would utilize machine learning to help our animators get an initial predicted result that would give them a reasonable first pass of creating ink lines on the characters,” explained Grochola. The image below shows a machine learning prediction. Using the standard “nudging” workflow, the artist could still make adjustments to correct the drawing.

Machine Learning Training

For machine learning training, the team moved their drawings into an arbitrary UV space. Drawings would move around in this space based on artistic adjustment. The movement of these points in this space became the “target” data for machine learning. When predictions were called, they were called in this space and then “transformed” back into 3D space. Machine learning attempts to answer the question: what is the relationship between drawings in this space and the input features, such as relative camera angle and relative deformation? See below.

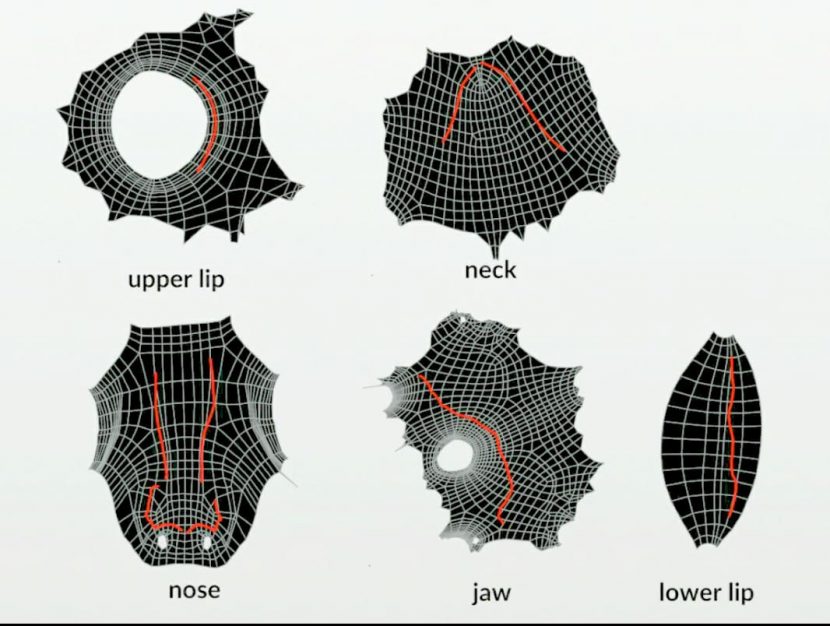
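
To make the “features” and “targets” concrete, here is a minimal sketch of how one training sample might be laid out. The function names, the two-value feature vector, and the idea of storing targets as per-point displacements from a rest drawing are assumptions for illustration; the article only states that point movement in UV space was the target and that relative camera angle and deformation were features.

```python
def make_targets(rest_uv, adjusted_uv):
    """Per-point (du, dv) movement of the adjusted drawing from the rest drawing."""
    return [(ua - ur, va - vr) for (ur, vr), (ua, va) in zip(rest_uv, adjusted_uv)]


def make_sample(camera_angle_deg, expression_weight, rest_uv, adjusted_uv):
    """Build one (features, targets) pair for a single frame.

    Features: hypothetical relative camera angle and expression weight.
    Targets: flattened UV displacements, [du0, dv0, du1, dv1, ...].
    """
    features = [camera_angle_deg, expression_weight]
    targets = [c for offset in make_targets(rest_uv, adjusted_uv) for c in offset]
    return features, targets


def apply_prediction(rest_uv, predicted_targets):
    """Turn a flat list of predicted displacements back into UV positions."""
    pairs = zip(predicted_targets[0::2], predicted_targets[1::2])
    return [(u + du, v + dv) for (u, v), (du, dv) in zip(rest_uv, pairs)]


# Two curve points, before and after a hypothetical artist adjustment.
rest = [(0.25, 0.5), (0.75, 0.5)]
adjusted = [(0.5, 0.25), (0.75, 0.75)]
features, targets = make_sample(30.0, 0.5, rest, adjusted)
print(features, targets)
print(apply_prediction(rest, targets))  # recovers the adjusted drawing
```

The final step of transforming the predicted UV positions back into 3D space depends on the character's geometry and is not shown.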

For training data, “we created arbitrary turntable animation from different angles, along with expression changes. Using the ink line tool in “Manual Mode,” we had artists create drawings for these angles and expressions” (as seen in the final images below). The team then moved these animated curves into an arbitrary UV space for machine learning training. This became the “target” of a first-generation set of training data (around 600 frames per character). The “features” were relative camera angle and expression changes. “We then trained a model using scikit-learn (specifically GradientBoostingRegressor) directly in Houdini. Using Python and scikit-learn we then loaded these newly created learning models into Houdini and could call predictions for new animations,” says Grochola. “We went through these steps one more time on another set of arbitrary animation. This time we used the machine learning prediction from the first-generation training data and nudged them using the tool in machine learning mode.” This became the second-generation training data, reinforcing the correct solutions and adjusting the incorrect ones. The final trained model used both the first and second sets of training data and showed a definite improvement over the first.
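
The two-generation training loop described above can be sketched with scikit-learn on synthetic data. Everything here other than the use of `GradientBoostingRegressor` is an assumption: the data is fabricated by a stand-in function, the hyperparameters are arbitrary, the artist correction is simulated, and `MultiOutputRegressor` is used because `GradientBoostingRegressor` predicts a single value per model (the article does not say how SPI handled multiple outputs). The Houdini integration is omitted.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.multioutput import MultiOutputRegressor

rng = np.random.default_rng(0)


def desired_offsets(X):
    """Stand-in for what an artist would draw: features -> (du, dv) offset."""
    return np.column_stack([0.1 * np.sin(3 * X[:, 0]), 0.05 * X[:, 1]])


def random_features(n_frames):
    """Hypothetical features per frame: [relative camera angle, expression]."""
    return rng.uniform(-1.0, 1.0, size=(n_frames, 2))


def train(X, Y):
    """Fit one gradient-boosted regressor per output column."""
    base = GradientBoostingRegressor(n_estimators=50, random_state=0)
    return MultiOutputRegressor(base).fit(X, Y)


# First generation: drawings made by hand in manual mode (~600 frames).
X1 = random_features(600)
Y1 = desired_offsets(X1)
gen1 = train(X1, Y1)

# Second generation: start from gen1 predictions on new animation,
# then simulate the artist nudging them to the desired result.
X2 = random_features(200)
first_pass = gen1.predict(X2)
Y2 = first_pass + (desired_offsets(X2) - first_pass)  # simulated nudges

# Final model: trained on both generations together.
X_all = np.vstack([X1, X2])
Y_all = np.vstack([Y1, Y2])
final = train(X_all, Y_all)

# Predict a UV offset for a new, unseen frame.
new_frame = np.array([[0.2, -0.4]])
print(final.predict(new_frame))
```

In this toy setup the second generation simply adds more corrected samples; in production its value would come from artists fixing the specific poses where the first model predicted poorly.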