With deadlines for the film’s worldwide release looming, I, Robot visual effects supervisor John Nelson spoke to Fxguide on a busy Sunday afternoon in Los Angeles. We also caught up with Andy Jones and Erik Nash of lead visual effects house Digital Domain, and Weta’s Joe Letteri, on the push to finish one of the summer’s biggest effects films.

The potential for artificial intelligence to backfire on its human creators has long been a preoccupation of science fiction. It is the central theme of Isaac Asimov’s pioneering collection of short stories, I, Robot, adapted into a major feature film by Australian director Alex Proyas (Dark City, The Crow).

Digital Domain in Venice, California handled the bulk of the visual effects work, more than 500 shots, its largest project to date, much larger than even Titanic.

Weta Digital in New Zealand contributed an additional 300 shots alongside Modern Videofilm (150 shots) and Rainmaker (75).

Typical of major international effects features, the sun never sets on production of I, Robot in the weeks before completion. The model shoot was in Toronto, Canada, three hours ahead of LA while Weta is 20 hours ahead.

John Nelson’s entire day is structured around the constant flow of temps, approvals and notes to the three separate effects teams. “I have friends at ILM that say that when films are digitally released – we’ll be changing them right until the day before it opens,” said Nelson. “With the digital intermediate (DI) revolution and fast transfer between NZ and London, the sun never sets on people working on a show like this,” explains Nelson.

For each shot, a 1K file is FTP’d to Nelson in LA, where he looks at it and then takes it to the Avid to see it in the cut. Nelson then decides what to prep to show Proyas. He then talks to Proyas, “wherever Alex is”, and, in his words, “I try and include whoever produced that particular shot in the call. So for example with Weta, we have lookup tables (LUTs) of what frame we are looking at, that way the system is secure – as the actual frames aren’t sent (just the metadata)”. A remote player developed by Weta for its work on Lord of the Rings is used for this task. The system runs on a Mac, with simultaneous viewers, cursors and control at both ends. On LOTR Weta used a custom video conferencing tool, although this has since been replaced by iChat.
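The idea of sending only metadata, never frames, during a remote review can be sketched roughly as follows. This is an illustrative guess at the general approach, not Weta's actual player: the message fields, the `RemoteViewer` class and the shot name `dd_0420` are all hypothetical, and it assumes each end already holds its own local copy of the frames.

```python
import json


def encode_sync(shot_id, frame, cursor_xy):
    """Build the small message sent over the wire: identifiers only, no pixels."""
    return json.dumps({"shot": shot_id, "frame": frame, "cursor": list(cursor_xy)})


class RemoteViewer:
    """One end of a review session, holding its own local copy of the frames."""

    def __init__(self, local_frames):
        # Maps (shot_id, frame_number) to a file that already exists on this machine.
        self.local_frames = local_frames
        self.current = None
        self.cursor = None

    def apply(self, message):
        """Jump to the frame named in the message and move the shared cursor."""
        state = json.loads(message)
        key = (state["shot"], state["frame"])
        if key not in self.local_frames:
            raise KeyError(f"frame {key} has not been delivered to this site yet")
        self.current = self.local_frames[key]
        self.cursor = tuple(state["cursor"])
        return self.current


# Hypothetical usage: the supervisor's end scrubs to frame 101 of one shot.
viewer = RemoteViewer({("dd_0420", 101): "/local/cache/dd_0420.0101.tif"})
message = encode_sync("dd_0420", 101, (640, 360))
shown = viewer.apply(message)
```

Because the message carries only a shot name, a frame number and a cursor position, intercepting it reveals nothing about the images themselves, which matches the security point Nelson makes.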

When we spoke to Nelson there were only nine weeks to go until the film’s delivery, yet shots were still being requested by the filmmakers. Rough animation for one final plate, shot the previous week, had just been approved, and the Digital Domain team was moving quickly to complete the shot.

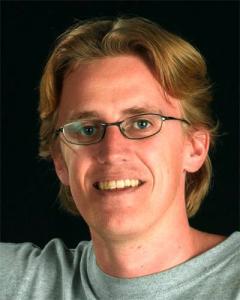

Winner of the Visual Effects Oscar for Gladiator in 2001, Nelson has said he likes “epic visions”. He joined ILM at the end of The Abyss and worked on T2 when, as he says, “you had to write the software to do the show, after T2 you still had to write some but it started the trend of how we work today”.

In 2002, Nelson was called in by John Gaeta to go to the aid of Centropolis when the facility got into difficulty with its shots for the Matrix sequels. Nelson worked to sort out the problems until the company folded. While Nelson is well known and respected at Sony Imageworks (SPI), he did not follow the shots over to the new facility when Centropolis shut, but his friend Jim Berney took over, which eased the decision. “I am still very proud of the work we did on those shots (for the Matrix),” he adds.

One of the processes that sped up the production of I, Robot on set was the use of encoded heads and realtime previsualisation. The system aligns the camera on set and allows the video split to be composited in realtime with a virtual set. It allows the DOP and director to see set extensions live on the stage and frame the shot accordingly.

For I, Robot, Nelson worked to reduce the amount of time it took to align the system. Prior to production, the process was taking well over 30 minutes a shot. By the start of principal photography, the team had reduced this to eight minutes. I, Robot is the first major feature film to use this particular system. Nelson said, “We did a real world system, where we encode everything then put it through the software. The first day was nail-biting – I thought I’m going to get killed if I slow down 1st Unit, so I forced everyone to come in over a holiday weekend and get their setup time down, it was vital”.

The system triangulates the position of the camera using established points on the set and the on-set computer. It then digitally relays the information to the video split system placed next to the director for him to view the live compositing. The realtime previsualisation team was headed by Joe Lewis of General Lift. One major benefit the production found in working this way was that the temp comps of the film were completed while shooting the live action plates.

All the CG shots were recorded and given to each of the vendors, saving weeks of time that would have otherwise been spent waiting for the first batch of temp shots to appear from the various vendors.

Digital Domain’s Andy Jones was tasked with the job of supervising the animation of Sonny, a renegade NS-5 domestic assistant. The NS-5 is no ordinary robot, boasting 6kg of processing circuitry, a revolutionary Positronic Brain and almost one mile of aluminium wiring. In the film it is fully responsive to over 20,000 voice commands and loaded with a supposedly ultra-reliable Teresa 2.1.2 Operating System. The animation team faced a challenge in not wanting to animate the robots too well, with apparent human motion, or they would not appear as robots. The robots were meant to have agility and strength beyond a normal person, but still needed to look believable.

“It was hard at first to get the balance right,” said Jones, whose credits include Animatrix: Final Flight of the Osiris (2003), and animation supervision on Final Fantasy, Godzilla and Titanic.

The production used quite a bit of motion capture, but as the objective was to produce ‘robotic movement’, not human, the mocap data was altered to make the shoulder and hip movement more mechanical. To train the motion capture actors, a ‘Robot School’ was set up with actor and dancer Paul Mercurio (Strictly Ballroom). Systems developed by Jones for the Animatrix were used to clean up the data.

“We built a tool that transfers the motion capture data to puppet-like controls that the animators can work with,” said Jones.

“We really wanted to avoid the weightless properties that some digital characters have – that was the challenge. The team worked hard to make the robots look heavy”.

Proyas designed the look of the Nestor Class 5 (NS-5) robots in collaboration with designer Patrick Tatopoulos (Dark City, Godzilla, Underworld, Alien vs Predator, Chronicles of Riddick). The animation team changed the basic robot design only slightly to make the chest and shoulders more restricted in movement. Tatopoulos and Proyas had worked out the look of the NS-5 a year before filming.

Getting the right emotional performance for Sonny was the major challenge for Nelson. Sonny is a character that needs to carry an enormous amount of the story. There was a great deal of debate before the decision was made to rely on CGI. “I came from being a cameraman,” said Nelson, “and I know that to make CG look real takes a lot of work. My attitude is if you are going to do 3D then do it with a lot of 2D material”. For Nelson, ending up with CGI sets or characters is “at the end of my logical set of choices, it’s hard and expensive, but the only way to get certain things like an emotional performance from a see-through robot”.

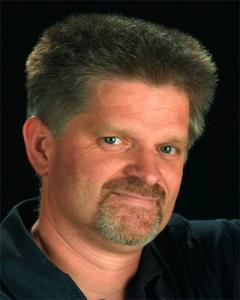

A posable full-scale stand-in robot was used on set as lighting reference. “At first glance it looks very similar but on closer inspection the way the joints and waist work is more mechanical,” comments Erik Nash, Digital Domain’s VFX supervisor. Nash worked on the original Star Trek: The Next Generation. At Digital Domain his credits include Apollo 13, Titanic, Time Machine and xXx.

As the robots were to be CGI, the production developed a system to capture the on-set lighting reference. A special fisheye digital still camera was built into a robotic pan-and-tilt head to capture four fish-eye image positions.

Multiple exposures from an automated rig produced a high dynamic range (HDR) map, which could be used as a starting point for lighting and reflection maps.
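How bracketed exposures combine into one HDR value per pixel can be sketched as below. This is a minimal illustration, not the production's tool: it assumes the pixel values are already linear and normalised to the range 0 to 1, and the function names and the simple triangular weighting are choices made for this example.

```python
def hat_weight(value):
    """Trust mid-range values most; values near 0 or 1 (clipped) get little or no weight."""
    return max(0.0, 1.0 - abs(2.0 * value - 1.0))


def merge_exposures(exposures):
    """Merge bracketed exposures into relative radiance values.

    exposures: list of (exposure_time_seconds, pixels), where pixels is a flat
    list of linear values in [0, 1] and every list has the same length.
    """
    pixel_count = len(exposures[0][1])
    radiance = []
    for i in range(pixel_count):
        weighted_sum = 0.0
        weight_total = 0.0
        for exposure_time, pixels in exposures:
            value = pixels[i]
            weight = hat_weight(value)
            # Dividing by exposure time puts every bracket on the same radiance scale.
            weighted_sum += weight * value / exposure_time
            weight_total += weight
        if weight_total > 0.0:
            radiance.append(weighted_sum / weight_total)
        else:
            # Every bracket is clipped at this pixel: fall back to the shortest exposure.
            exposure_time, pixels = min(exposures, key=lambda e: e[0])
            radiance.append(pixels[i] / exposure_time)
    return radiance


# Two brackets of a two-pixel image: the second pixel is blown out in the long exposure.
long_exposure = (1.0, [0.5, 1.0])
short_exposure = (0.1, [0.05, 0.8])
hdr = merge_exposures([long_exposure, short_exposure])
```

In this example the bright second pixel is recovered entirely from the short exposure, which is why a map built this way can hold light sources that a single photograph would clip.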

Each shot containing the robots required the following elements to be shot:

* a rehearsal with the proxy standing in for the robot;

* the actors with the proxy;

* the actors without the proxy;

* a clean plate;

* a 2m reference robot for lighting;

* a chrome ball and 18 % grey ball; and

* an automated HDR pass.

Digital Domain’s digital pipeline was Maya for animation, with lighting in RenderMan. Some sets were created in LightWave. The 3D department output highly complex floating-point EXR files for each aspect of the 3D. (OpenEXR is the high dynamic range (HDR) image file format developed by Industrial Light & Magic.)

These were bound into one file and then handled by a special plug-in developed for Digital Domain’s own Nuke compositing application. Known as MAKEBOT, this plug-in allowed compositors to adjust almost every aspect of the 3D render. Virtually no models were used in the production of the film apart from one scene showing the destruction of a house.

“We really only had to commit (at the 3D stage) to the direction and relative lighting ratios of the scene,” commented Nash, as the embedded files include all reflections, transparencies, colours etc. These are all able to be individually adjusted during the composite.
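The benefit of keeping render passes separate until the composite can be illustrated with a small sketch. It is not MAKEBOT itself, whose internals are not described here: the pass names, the purely additive recombination and the `recombine` function are assumptions made for this example.

```python
def recombine(passes, gains):
    """Sum per-pixel render passes, scaling each by a compositor-chosen gain.

    passes: dict mapping a pass name to a list of (r, g, b) tuples, all the same length
    gains:  dict mapping a pass name to a float; passes not listed keep a gain of 1.0
    """
    names = list(passes)
    pixel_count = len(passes[names[0]])
    result = []
    for i in range(pixel_count):
        r = g = b = 0.0
        for name in names:
            gain = gains.get(name, 1.0)
            pass_r, pass_g, pass_b = passes[name][i]
            r += gain * pass_r
            g += gain * pass_g
            b += gain * pass_b
        result.append((r, g, b))
    return result


# Hypothetical one-pixel passes: halve the reflections without re-rendering anything.
passes = {
    "diffuse": [(0.2, 0.2, 0.2)],
    "reflection": [(0.1, 0.1, 0.3)],
}
adjusted = recombine(passes, {"reflection": 0.5})
```

Changing a gain here costs one cheap recombination rather than a new 3D render, which is the practical point of Nash's comment about committing only to lighting direction and ratios.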

This approach contrasts with Weta’s pipeline on Gollum, who was virtually fully rendered before the files were passed to compositing.

Digital Domain really worked back to front for each of its 500 shots.

“We would clean the background, add shadows, add back of the guts, mid of the guts, front guts of the robot, add the skin inner then the skin outer, then highlights and then reflections,” said Nash. For half of the scenes, the team needed to paint out the proxy and use that plate. In the other half, the clean plate or actor without proxy could be used. In spite of this, there is only one motion control shot in the film; the rest is tracked.
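The back-to-front layering Nash describes can be sketched with the standard "over" operation on premultiplied RGBA values. This is a simplified illustration under stated assumptions: every layer is treated as a plain "over" (highlights and reflections are often added rather than laid over in practice), and the layer values below are invented for the example.

```python
def over(foreground, background):
    """Lay a premultiplied RGBA pixel over another premultiplied RGBA pixel."""
    remaining = 1.0 - foreground[3]  # how much of the background still shows through
    return tuple(f + b * remaining for f, b in zip(foreground, background))


def composite_back_to_front(layers):
    """Stack layers in order, from the rearmost layer to the frontmost."""
    result = (0.0, 0.0, 0.0, 0.0)  # start from a fully transparent pixel
    for layer in layers:
        result = over(layer, result)
    return result


# Hypothetical single-pixel layers, listed rear to front as in Nash's description.
cleaned_background = (0.2, 0.2, 0.2, 1.0)  # opaque plate
outer_skin = (0.5, 0.0, 0.0, 0.5)          # half-transparent shell, premultiplied
pixel = composite_back_to_front([cleaned_background, outer_skin])
```

Because the robot's shell is see-through, the order matters: the inner mechanics have to be in place before the semi-transparent skin is laid over them.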

Digital Domain staffing reached a peak of approximately 180 people working on I, Robot, of which 30 to 40 were Nuke compositors.

Nash points out that although Digital Domain has a large group of staff compositors, “there is a large community of both Nuke and Shake freelance compositors in Los Angeles to draw from”.

Final composites are output as Cineon files so that Modern Videofilm in Burbank can grade them for the Digital Intermediate. Modern debuted its DI service in 2002 with Jim Cameron’s Ghosts of the Abyss, and its system is built around the Quantel iQ and da Vinci 2K colour timing desk.

No mattes or components were passed to Modern – only the finished 10-bit log files.
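For readers unfamiliar with 10-bit log files, the relationship between a Cineon code value and linear light can be sketched as follows. The constants are the conventional Kodak reference points (white at code 685, black at code 95, 0.002 density per code value, negative gamma 0.6), assumed here for illustration; nothing in the interviews says which settings the facilities actually used.

```python
WHITE_CODE = 685          # conventional reference white
BLACK_CODE = 95           # conventional reference black
DENSITY_PER_CODE = 0.002  # printing density step per code value
NEGATIVE_GAMMA = 0.6      # conventional film negative gamma


def cineon_to_linear(code):
    """Convert a 10-bit log code value (0-1023) to linear light, with white at 1.0."""
    step = DENSITY_PER_CODE / NEGATIVE_GAMMA
    black_offset = 10.0 ** ((BLACK_CODE - WHITE_CODE) * step)
    raw = 10.0 ** ((code - WHITE_CODE) * step)
    # Rescale so reference black maps to 0.0 and reference white to 1.0.
    return (raw - black_offset) / (1.0 - black_offset)


white = cineon_to_linear(685)
black = cineon_to_linear(95)
brightest = cineon_to_linear(1023)
```

Code values above 685 map to linear values greater than 1.0, which is how a 10-bit log file keeps highlight detail for the grade.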

Nelson comments that the production got great advice from Peter Doyle (the man behind the digital grading of Lord of the Rings) and chose Modern Videofilm because of its new Northlight scanner, which Nelson calls a “beautiful machine”.

Among its 300 shots, Weta Digital produced set extensions showing a futuristic version of Chicago in 2035. The process began with huge amounts of reference stills of the Windy City, together with LIDAR scans of key buildings and areas.

A complex car chase through the city and a long futuristic tunnel was achieved by Weta as 100% CG, incorporating a digital version of Will Smith created by Digital Domain.

Weta’s pipeline is floating point, although it does not employ the EXR file format as used by Digital Domain. “EXR was something I worked on before I left ILM,” explains Letteri, who joined Weta Digital in 2001 as Visual Effects Supervisor on The Lord of the Rings: The Two Towers and The Return of the King. Previously, he had been at ILM since 1991, and became a VFX Supervisor on Mission: Impossible in 1995.

Letteri is the winner of two Academy Awards for the Visual Effects of The Two Towers in 2002 and Return of the King in 2003. He is also the recipient of the Academy’s Technical Achievement Award for co-developing the subsurface scattering technique that was used to bring Gollum to life.

As Weta’s pipeline is already floating point, Letteri claims it already enjoys many of the advantages of EXR, although the Kiwi company is looking at adopting EXR for King Kong.

Robot crowd scenes were handled by Joe Letteri and his 120 staff at Weta Digital using Weta’s famous Massive software. Weta also handled many set extensions, as well as the tunnel chase sequence with Sonny.

Together with Twentieth Century Fox, Audi developed a spectacular concept car for I, Robot. The film is set in Chicago in the year 2035, where the police department drive a concept car created by Audi engineers, based on special features suggested by director Proyas.

The RSQ is a mid-engined sports car that races through the Chicago of the future on spheres instead of wheels. Its two doors are rear-hinged to the C-posts of the body and open according to the butterfly principle. In addition to the RSQ concept car, Audi supplied further series production cars which appear — in disguised shapes — in the movie’s traffic scenes.