With the announcement this week that Oscar-winning filmmaker, director and producer Steven Spielberg will executive produce an original “Halo” live-action television series with exclusive interactive Xbox One content, created in partnership with 343 Industries, fxguide looks at the recent, highly successful CG animated series Halo 4: Spartan Ops, which finished season one not long ago after 10 episodes. The series followed the plight of the UNSC Infinity after the events of Halo 4. Axis was the lead production house behind Spartan Ops, in collaboration with 343 Industries. Other studios to contribute included BlackGinger and Realise Studio. fxguide goes behind the scenes of the Halo episodic adventures.

Axis

Axis is known, to many people, for the amazing animation they completed a year or so ago on the Dead Island trailer, work that broke through to the popular press (LA Times and Variety). The trailer worked on many levels, but fundamentally was a very good storytelling device that connected with its target audience. Both engaging and disturbing, the trailer was a remarkable piece of communication.

https://player.vimeo.com/video/64745418

– Above: watch a behind the scenes look at Axis’ work for Halo 4: Spartan Ops.

Axis Co-Founder and Spartan Ops Director Stuart Aitken points out, in relation to game trailers, that “there are amazingly executed trailers out there but some – not all, but some – leave me a bit cold, because the emotion isn’t there. That constant focus on showing your characters just doing some cool move. I feel maybe we have seen it before. It is getting to the point that we are saturated with that, that it has lost some of its impact. Dead Island was a reaction to that. Interestingly we have clients now coming to us asking, how can we make this trailer more interesting, and it is a challenge as we also need to represent something about the game whilst looking for a unique edge.”

While the company had already been talking to Microsoft and 343 Industries, the Dead Island work “definitely helped keep us on their radar,” says Aitken. When the Axis team was first approached it was not to just produce something cool. Yes, there are some great set pieces but the aim was always more than that; it was to show the audience the relationships and backstory of the characters, and to then dovetail in with a more meaningful multiplayer experience. It was always story focused from the first briefing.

Spartan Ops is more than a trailer, and more than cut scenes. With Spartan Ops, the linear storytelling element informs the gameplay, as the linear story of one leads into the game extension of the other. This was actually something missing in Dead Island. While that piece was a massive success in one sense, if there was a criticism it’s that the trailer did not reflect the game and there was “a difference between the trailer and what the game turned out to be,” notes Aitken. With Spartan Ops the two elements blend thematically better. The linear storytelling still renders at a very different level from the game, but one picks up from the other, rather than cutting across audience expectations. As such, Spartan Ops has been a huge success.

Axis was founded in 2000 by a small group of artists and animators to create world-class animation. Currently, the company has about 70 people working in the Glasgow studio, located in the center of the city. The company has close, professional, working relationships with other key UK companies recently featured here at fxguide, Ten24 and Di3D, both of whom have worked with Axis on recent projects, such as Dead Island and Spartan Ops.

Axis was initially approached to come up with ideas to deliver 10 episodic stories, but the style of how that would appear was wide open. Internally, Axis discussed everything from live action to 2-D motion-comic approaches or more graphic solutions. “The story element was the biggest thing we were approached to deliver,” recalls Aitken. “That was the thing, how can we tell a story over initially 10 episodes. As a director, it was really refreshing to not resort to a box of tricks to make Spartan Ops work, but rather use the more traditional routes of good dialogue and good characterization.”

“Spartan Ops was designed to appeal to a broader audience,” adds fellow Axis Co-Founder and Executive Producer Richard Scott. “Some Halo players have been known for a sort of hardcore experience yet there are a lot of players who do play for the story, who do play to explore the narrative, and who are less into the hardcore first-person shooter, yet still enjoy a multiplayer experience. They can still enjoy that experience here and I think this is a real attempt to cater to that wider audience – respect the hardcore players but also allow other people to enjoy multiplayer, too. There is a sense in the industry that there can be barriers to entry on the multiplayer side and because of that sense you have to be hardcore.”

The final style of the animation is aimed to fall somewhere between the quality of the game, which is great but needs to function inside a real-time game engine, and feature film photoreal CGI. Spartan Ops, like many trailers and cut-scene sequences, needed to deliver impressive visuals but avoid the uncanny valley and keep a stylized look. “At some point you could ask – why not just do this live action?” says Aitken. “After all, the budget may not be a million miles off, but I think there is an accepted principle that realising the characters in CG does have a closer link to the gameplay than if one had chosen to shoot live action.” The narrative sections inform a gamer of the ‘quality’ of the game. A great narrative animation in the same world as the game sends a quality clue to what the game will be like, both in terms of look and story.

Even though Spartan Ops was always intended to be very high quality, the Axis team deliberately wanted to steer away from the desaturated palette of ‘TV commercials’ and embrace the colors and palette of the actual game. “I am a big fan of the commercials that Neill Blomkamp has done for Halo over the years,” says Aitken, “but one thing I notice is I don’t think they feel like playing the game. They are almost set in a parallel universe that isn’t quite Halo. The game world has always been quite colorful, and those commercials can be desaturated, and gritty – great but different.”

Avatar is another interesting reference for Axis. When the team first saw it they joked, ‘Cameron is doing what we do but with $500 million dollars.’ Aitken adds to that another well-known director: “I think even Peter Jackson is getting more stylized. Effects are making up so much of the frame. There are just so many interesting ways this can develop as the consoles improve.”

As a result of years of experience, Axis brought to Spartan Ops a great balance of remaining connected to the game and also producing exciting new elements that were outside the game. The main story was written by Brian Reid of 343 Industries, but 343 embraced Aitken and invited the Axis creatives into the process to contribute to the script, something Aitken really appreciated.

The scope of the project was defined by the characters and dialogue, with the “wow” factor eye-candy coming in to support that. Key to that were the environments and the ‘stages’ that the characters had to inhabit. It was difficult to fully map out all the environments at the very start, so the team entered the project knowing there was some uncertainty on the final environment count, and certainly Axis signed on before there were final scripts. “If we are in this type of situation again we might need to come up with ways that we are able to scope out that complexity more – earlier on,” acknowledges Aitken.

From start to finish, the project spanned one year. The team aimed to have more content locked down as one script moved through the pipeline. For example, the edits and cuts would be fully locked down before lighting, thus avoiding unnecessary rendering, but earlier in the process the team allowed themselves some flexibility to find the best story and craft the best script. At motion capture, which came right after storyboards, they had a week in rehearsal and a week on the Giant Studios motion capture stages. The scripts are completed for motion capture, but it is after the capture in the layout stage that an “enormous amount of editing is done,” says Aitken. He also says Axis tries to not deal with locked coverage in motion capture: “I don’t like to see our storyboard force the motion capture to be ‘set in stone.’ It is nice to let those performances come out in the capture sessions.”

Giant provided raw motion capture, and this rough mo-cap is placed in Maya with proxy sets. At this stage the assets are only stand-ins, there to tell the story. It is not until the blocking, staging and performances are agreed on that the team commits to detailed models, environments and props.

Now the team will adopt a multi-camera, live action approach to ‘film’ or visualize those hero performances from a number of different camera angles inside Maya – something that is very easy with the now virtual performances. And it is this coverage that is edited for the final story arcs.

The sequences are XML-exported from Maya into Adobe Premiere (the team also has an XML Premiere back to Maya capability). Aitken comments: “I actually ended up using Premiere more than any other application, personally, in this project. With the editing process, everything crystallizes.”

Now that the sequences are cut, the team reviews each episode and decides what needs to be cut and what needs to be there to tell the story.

The final story elements can now be locked down and moved through to final lighting and rendering.

In 2012, Axis expanded but knew it would want to farm out some sequences to help finish the project. Two other companies helped Axis with Spartan Ops under the direction of Aitken. The key to this was making sure that the companies that joined the process could also finish the shots in Mantra, Houdini’s native renderer. Axis relies almost exclusively on Mantra, and not just vanilla Mantra but one with a lot of Axis’ own pipeline customizations. The companies would be picking up the shots at this last stage, so being able to integrate seamlessly with Axis was vital. Thankfully, Axis found two companies exceptionally well-placed to help. Of the 1,400 shots in the final series, BlackGinger and Realise Studio were tasked to take on about 100 shots each. “It is a credit to them both for being able to pick up our shots really quickly,” says Richard Scott.

BlackGinger

Based in Cape Town, the seven-year-old South African company mainly services commercials, but also works on series and some film projects. BlackGinger uses the latest technology and software and, more importantly, has a very strong Houdini lighting pipeline into Mantra. fxguide spoke to Jason Slabber, VFX and lighting lead at BlackGinger. The company has a staff of about 40, with a crew of about five Houdini artists who do VFX and lighting, and a similar number of Nuke compositors.

Because the company was responding quickly, BlackGinger adopted the shot lighting approaches developed in the UK. “Sergio from Axis came out here to South Africa the week before we started,” says Slabber. “We integrated their tools into our pipeline, but all the assets and caches were provided by Axis.”

Normally the company uses XSI and Houdini, with some custom exporters, but in all cases the company focused rendering in Mantra. “We mainly worked on lighting sequences that were in the Librarian room and a few space shots. We’ve done around 120 shots for Axis, since the beginning of October up until now.”

For each sequence, director Stuart Aitken would provide a lighting brief and give the team a direction on where to go. BlackGinger would then light the scene and the director would do paint-overs, a process of drawing over the render extensively in Photoshop to directly show how the final lighting should look and what changes were wanted. “This was great,” adds Slabber, “the guy is incredibly talented. His lighting cues were really nice. This meant only a two or three stage revision process. It was quite fast. The paint overs and lighting direction we got from Stuart were so specific we could really achieve a lot. He enabled that and made it really easy for us. You can get used to directors not knowing what they want or over lighting things, but Stuart wanted dramatic things at times and a cinematic kind of lighting. It was really nice.”

“I am quite hands on,” explains Aitken, “and no discredit to any of the artists, but I had not had a chance to work with BlackGinger and Realise. It is much quicker to get the first lighting passes back based on the color scripts, and it can be 80% there, but that last 20% is really important. So often times I would go into Photoshop, adding stuff on top and it really helps. Especially since, as a director, you are faced with a lot of questions and the thing most people don’t realize is that you haven’t had a chance to think about that thing at all before then. Until that person asks you, there is no way you can think ahead of everything and every detail. So a lot of this (paint over) is about my own thought process. I might know there is something I want to improve and that paint over process helps me get more precise about what should be done, to what is needed.”

In lighting the characters, Slabber opted to use a lot of ‘set style’ lighting, with big bounce cards in the scene. These would affect just specific characters, adding extra rim light and filling out the fill side of the characters while keeping them apart from the background. While this film style lighting is technically incorrect, only in so far as the characters have their own light, it produces a very cinematic look, “and flows really nicely from shot to shot,” says Slabber. “The important thing was for the character to look good – frame to frame.”

Morne Chamberlain, the R&D programmer who integrated the Axis tools into BlackGinger, discusses how they were so easily able to integrate all the Axis shading and lighting setups (HDAs) at his studio: “Basically they gave us a Python script that they use on their side to set up the environment variables. I had to integrate that into our pipeline tools, and where applicable change the paths/values that the environment variables were set to, in order to conform with our pipeline and directory structure. I set it up in such a way that it is only used on Spartan Ops, and not on other projects.”
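The per-project environment override Chamberlain describes might look something like the minimal sketch below. Neither studio's script was published, so the project names, paths and the `project_environment` helper are all hypothetical. `HOUDINI_OTLSCAN_PATH` is the Houdini variable that controls where digital assets are searched for, but which variables Axis actually set is an assumption.

```python
def project_environment(base_env, project, overrides):
    """Return a copy of base_env with per-project overrides applied.

    'overrides' maps a project name to {variable: value}; projects that are
    not listed get the base environment back unchanged.
    """
    env = dict(base_env)
    env.update(overrides.get(project, {}))
    return env


# Hypothetical studio paths; only the 'spartan_ops' project is remapped.
OVERRIDES = {
    "spartan_ops": {
        # Assumed usage: ";" separates entries and "&" keeps Houdini's default path.
        "HOUDINI_OTLSCAN_PATH": "/projects/spartan_ops/hda;&",
        "JOB": "/projects/spartan_ops",
    }
}

env = project_environment({"JOB": "/projects/default"}, "spartan_ops", OVERRIDES)
other = project_environment({"JOB": "/projects/default"}, "commercial_x", OVERRIDES)
```

Keeping the overrides keyed by project is one simple way to make sure a visiting studio's settings never leak into other jobs.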

The initial set of assets from Axis was models and their textures. Part of this was setting up the paths and directories in which Houdini looks for HDAs. That had to be set up so that Houdini loads all of Axis’ assets from the directories where BlackGinger was keeping them. Axis’ directory structure was also slightly different from BlackGinger’s, as Axis had separated out shots by scene, whereas BlackGinger had all the shots in an episode together. “So I wrote a Python script to add an extra directory for each scene with symlinks to the shots, so that the mapping from their directory structure to ours worked properly,” explains Chamberlain.
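A symlink remapping of this kind can be sketched in a few lines of Python. This is an illustration only, not BlackGinger's script: the `scene_of` naming rule (a shot called `sc010_sh020` belongs to scene `sc010`) and the directory layout are assumptions made for the example.

```python
import os
from pathlib import Path


def scene_of(shot_name: str) -> str:
    """Hypothetical naming rule: 'sc010_sh020' belongs to scene 'sc010'."""
    return shot_name.split("_")[0]


def link_shots_by_scene(episode_dir: Path, scenes_root: Path) -> dict:
    """Create scenes_root/<scene>/<shot> symlinks pointing at episode_dir/<shot>.

    Returns a mapping of scene name to the shot names linked under it.
    """
    mapping = {}
    for shot in sorted(p for p in episode_dir.iterdir() if p.is_dir()):
        scene_dir = scenes_root / scene_of(shot.name)
        scene_dir.mkdir(parents=True, exist_ok=True)
        link = scene_dir / shot.name
        # Leave existing links alone so the script can be re-run safely.
        if not link.is_symlink() and not link.exists():
            os.symlink(shot.resolve(), link, target_is_directory=True)
        mapping.setdefault(scene_dir.name, []).append(shot.name)
    return mapping
```

Because the per-scene folders hold only links, the shots themselves stay where the host pipeline expects them, and the extra layer can be removed at the end of the job without touching any data.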

BlackGinger also wrote a Python script that copied the animation caches the team downloaded into the correct shot directories, automating the process. In situations like these, fast and accurate asset and directory structures are key. After the initial disk drive of data was hand-carried over, the team still downloaded half a terabyte of additional data and animation caches. This is particularly interesting as a lot of the scuffing and weathering was built directly into the shaders.
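As a rough idea of what such an automation step involves, the sketch below distributes downloaded cache files into per-shot folders. The file naming convention (`<shot>__<asset>.<ext>`), the `caches` subfolder and the function name are hypothetical; the real script and layout were not described in detail.

```python
import shutil
from pathlib import Path


def distribute_caches(download_dir: Path, shots_root: Path, sep: str = "__") -> list:
    """Copy each downloaded cache into shots_root/<shot>/caches/.

    Assumes (hypothetically) that files are named '<shot><sep><asset>.<ext>'.
    Files whose shot directory does not exist are skipped rather than guessed at.
    """
    copied = []
    for cache in sorted(download_dir.iterdir()):
        if not cache.is_file() or sep not in cache.name:
            continue
        shot = cache.name.split(sep, 1)[0]
        shot_dir = shots_root / shot
        if not shot_dir.is_dir():
            continue  # no matching shot directory
        dest_dir = shot_dir / "caches"
        dest_dir.mkdir(exist_ok=True)
        dest = dest_dir / cache.name
        shutil.copy2(cache, dest)  # copy2 also preserves timestamps
        copied.append(dest)
    return copied
```

Skipping unknown shots instead of creating folders for them keeps a mistyped file name from silently producing a new, empty shot.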

“For the Mantra node, the most important things were really just to match their settings and their AOVs/output planes,” says Chamberlain. “I got some ideas about how to improve our node from the way theirs was set up, but the primary thing was matching the settings. For the MDD procedural, they provided the source code but there were a couple of minor syntax changes I had to make because it didn’t compile with GCC (basically the standard compiler on Linux).”

The Nuke team composited each character from its own individual render, delivered as a set of render passes with a scripted precomp from the pipeline. Each of the 25 passes per character was rendered out as an individual file (not multi-layered EXRs) and then comped with lens flares, glows and glints. Ash Ryan headed up the Nuke comp team, which had to deal with a certain amount of ‘grain’ in the render (due to anti-aliasing reflection sampling settings); this required the use of a filter Axis had developed. The team found it was faster to degrain-filter, say, a reflection pass than to wind up all the samples on all passes, especially when the pass in question is just the reflection pass. All the motion blur and depth of field was added in comp. The final resolution was 720p for mastering.

Realise Studio

Realise, a boutique production house, was another of Axis’ close collaborators on Spartan Ops. Knowing that Spaniard VFX supervisor Jordi Bares had moved there from almost 10 years at the Mill, and knowing how brilliant Bares is with lighting and rendering, we assumed he was behind the great work at Realise. Not so, pointed out Bares: “Although I would love to take the credit for it, the fact is that the work was done by Paul (Simpson) and his team. I only helped in the first weeks, with visualizing the look dev of the space sequences, defining a lighting style, and as a creative reference but nothing technical. Truly, Paul is your man here and deservedly so, he is a genius.”

Keen to find out more, we spoke to Paul and his team about Realise’s work on the series.

fxg: What was the scope of the work Realise contributed to Spartan Ops? Which episodes and sequences did you work on?

Realise: Working closely with director Stuart Aitken, we supplied a combination of special effects, lighting, compositing and rendering work on four episodes, for around 130 shots. With Stuart’s direction and reference materials, we also designed or developed significant elements such as the ‘slipspace’ faster-than-light travel device and the maw portal, both used throughout the episodes. It was an exciting project to be involved in. We’ve been aware of Stuart and his work at Axis for many years but have never managed to work together before now.

– Above: see some of Realise’s work for Spartan Ops.

fxg: Can you talk a little about how your pipeline was used to work with assets from Axis and other studios?

Realise: We were working directly with Axis, retrofitting their Windows-based pipeline to work efficiently and reliably with our Linux system. At the start of the job, we created a mirror of their asset system and before working on any sequences, we’d synchronize all the elements, ensuring that we shared the latest versions of every detail within the shot. It took between half a day and a day to set up each shot.

fxg: What was Realise’s typical approach to a character animation shot in terms of what assets you received, and the steps from animation through to final render?

Realise: Axis provided the character animation, which we would incorporate into the sequences we were working on. Having laid out all the scenes, we’d work on the lighting, either using the existing set-up that we’d made for the scene or creating a bespoke lighting rig, according to the director’s instructions and particular demands of the shot.

fxg: Can you talk about one of the challenging spaceship shots in particular, such as a hyperspace shot, and how it came together?

Realise: The space sequences were intense, with the amount of detail and elements within every shot and the complex geometry of the spacecraft. For example, the huge Infinity spaceship used around 16 gigabytes of memory.

During the main battle sequence, there were many craft firing and interacting, creating challenges in lighting, movement and consistency. We often couldn’t render every element simultaneously because it used up too much memory. But actually separating the elements helped with the compositing process. We also developed certain effects like smoke trails and explosions using a combination of 2-D and 3-D solutions.

The ‘slipspace’ feature was also quite challenging because there’s nothing real to emulate. Using the director’s comments and his reference materials, we designed and developed the look and feel, creating a few iterations before we were all happy. Our own bespoke volume rendering set-ups were crucial and we used some in-house compositing techniques to enhance the effects.

Bonus Q&A with Axis

Axis CG supervisor Sergio Caires and pipeline supervisor Nicholas Pliatsikas discuss some of the technical requirements of Halo 4: Spartan Ops.

fxg: Did you have to work with a large volume of data?

Caires: We have not come across any renderer (and we have been through a few big ones) that can handle anything like the amount of data that we push through Houdini’s Mantra so effortlessly and then have the audacity to ray trace against it. Its reliability and robustness gives us supreme confidence in its ability. For example, take the UNSC Infinity ship modeled by Ansel Hsiao (truly a spaceship modeling god). He asked the question “What’s your polygon budget?” I already knew he could model unique detail at a rate unachievable by mere mortals. I still replied with words to the effect of “go nuts on it” and we ended up with an amazingly detailed looking model with around 26 million polygons. Mantra has an amazing success rate for renders completed.

Pliatsikas: We really did have a huge amount of assets on board with Spartan Ops. This does of course create large volumes of file IO, so the flexibility that Houdini gave us was paramount. We rebuilt our asset system in Houdini to the extent that it was pretty much as automated as you can get bar maybe a few small missing features.

Our asset system was able to generate the Houdini assets automatically, along with the shader templates all hooked together with Houdini’s digital assets that have user editable artist areas allowing for procedural modification pre and post deformation.

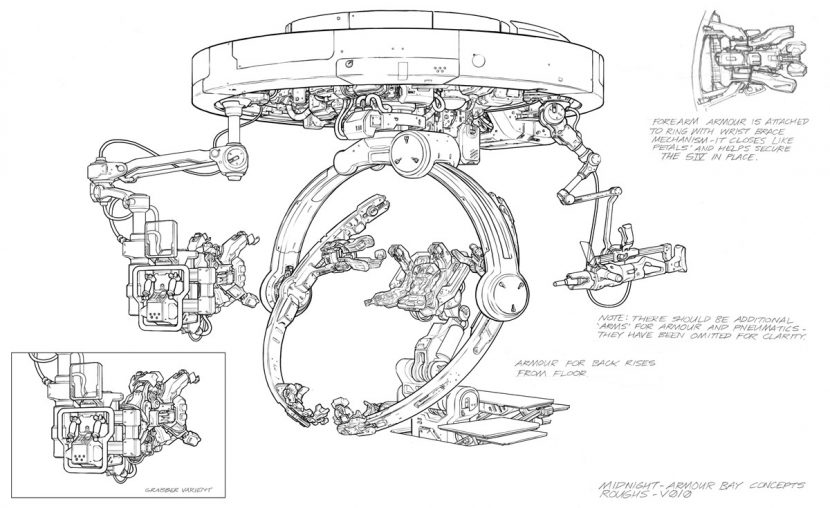

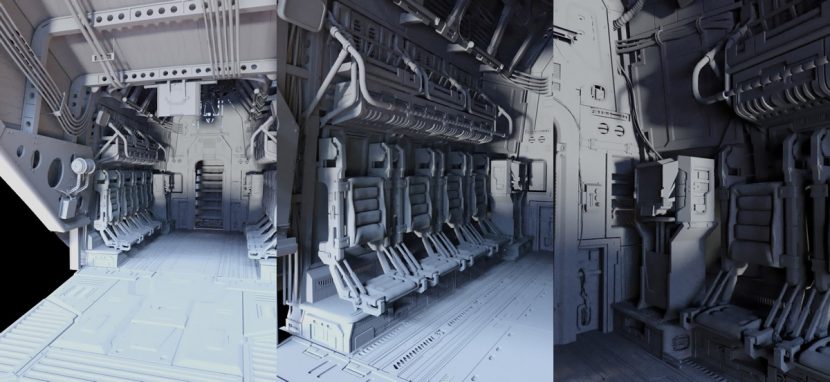

Even the integration of our own custom render time delayed load procedural, which loads cache data directly from our animation software Maya, was completely seamless. Houdini is open enough that we could even use CVEX to modify our geometry cache data through the delayed load at render time. The latest geo core rebuild in H12 really was perfect timing for us to be able to handle such massive amounts of data. The new instancing system was important when rendering the armoury in Spartan Ops: Episode 1, when the Spartans get suited up. Those Gyro Bays were pretty complex objects.

We also found that material IFDs were a major requirement when dealing with large amounts of assets, especially when utilized with our UberShader. In terms of set-ups, material IFDs are great in concept and really do help resolve IFD generation times. I’d like to see them more widely supported, not just at geometric and render time procedural level but at an object level.

fxg: Could you tell us more about any customizations you may have made to Mantra?

Caires: I wouldn’t say we have customized Mantra, but we have customized almost everything that feeds into it. This is one of the great things about Houdini, someone else has done the really hard work of designing software so impressively flexible and well thought out that it can easily be customized by anyone to suit their particular needs.

There are a couple things of note that we did to help us optimise renders. We have our own irradiance caching methodology, so that we can do indirect light lookups in a similar way to how Houdini’s photon maps work but with an evenly distributed point cloud instead.

These were a pain to generate but we have now figured out how to generate them using the same mechanisms and hooks that Mantra uses to generate nice evenly distributed point clouds with baked irradiance for its SSS shader. You have to try really hard to find a black box in Houdini that you can’t open to tweak or re-purpose!

We also created an invaluable little SOP that lets you assign property SHOPs to polygons outside the camera frustum so that we can very aggressively take down dicing quality in directly unseen parts of the scene. We would love it if Mantra had a button for this!
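The idea behind that SOP can be illustrated outside Houdini with a simple frustum test. The sketch below is plain Python, not Axis' tool and not the Houdini API: the camera convention (looking down -Z), the field of view, and the `visible`/`hidden` quality values are assumptions chosen for the example.

```python
import math


def in_frustum(p, fov_deg=60.0, aspect=16 / 9, near=0.1, far=1000.0):
    """True if camera-space point p lies inside a symmetric perspective frustum.

    Assumes the camera looks down -Z and fov_deg is the horizontal field of view.
    """
    x, y, z = p
    depth = -z
    if not (near <= depth <= far):
        return False
    half_w = depth * math.tan(math.radians(fov_deg) / 2.0)
    half_h = half_w / aspect
    return abs(x) <= half_w and abs(y) <= half_h


def dicing_quality(polygon, visible=1.0, hidden=0.1):
    """Full quality if any vertex is in view, a reduced value otherwise."""
    return visible if any(in_frustum(v) for v in polygon) else hidden


in_view = dicing_quality([(0.0, 0.0, -5.0), (1.0, 0.0, -5.0), (0.0, 1.0, -5.0)])
behind = dicing_quality([(0.0, 0.0, 5.0), (1.0, 0.0, 5.0), (0.0, 1.0, 5.0)])
```

A per-vertex test like this would misclassify a large polygon that spans the view while all of its vertices sit outside it, so a production tool would need a more conservative check. Off-screen geometry still matters for reflections and indirect light, which is why the quality is lowered rather than the geometry removed.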

We have a really neat procedural shader that lets you add things like dirt in crevices and dripping from under overhangs, and add wear and tear such as chipped paint on edges and scratches. This was used on pretty much everything from the UNSC Infinity to the Spartan armour.

Pliatsikas: The main customisation we did for Mantra was the introduction of our custom Delayed Procedural. This gave us quite a few features that really helped, mainly to remove the bottleneck of another geometry caching stage.

I suppose the set up we had with the automatic HDA system is similar to the current Alembic workflow in Houdini, but on steroids. We wanted to push this further and have the animation data from Maya actually rendered directly in Mantra. This comes with a few requirements: the ability to do certain edits to the source geometry post deformation on the delayed load itself would be beneficial.

With the ability to embed the point order as attribute within the Houdini GEO format, we could then alter the point order/ delete faces (etc) and our delayed load would still apply the correct deformation to the geometry and not scramble the mesh as it would in other applications.
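Why an embedded point order keeps the deformation intact can be shown with a toy example. The sketch below uses plain Python lists and dicts rather than Houdini geometry, and the `orig_id` attribute name is hypothetical. Each point carries its original index, so the cache lookup still lands on the right row after points are deleted or reordered.

```python
def tag_point_order(positions):
    """Attach the original index to each point as an 'orig_id' attribute."""
    return [{"orig_id": i, "P": p} for i, p in enumerate(positions)]


def apply_cache(points, cached_positions):
    """Deform points by looking up the cache via 'orig_id', not list position."""
    return [{"orig_id": pt["orig_id"], "P": cached_positions[pt["orig_id"]]}
            for pt in points]


rest = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0), (3.0, 0.0, 0.0)]
cache_frame = [(0.0, 1.0, 0.0), (1.0, 2.0, 0.0), (2.0, 3.0, 0.0), (3.0, 4.0, 0.0)]

points = tag_point_order(rest)
# Edit the mesh: delete point 1 and reverse the order of what remains.
edited = [pt for pt in points if pt["orig_id"] != 1][::-1]

deformed = apply_cache(edited, cache_frame)
# Indexing by list position instead pairs points with the wrong cache rows.
naive = [cache_frame[i] for i in range(len(edited))]
```

In the naive version the first remaining point (originally point 3) receives the cached position of point 0, which is the scrambled mesh Pliatsikas refers to.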

We also found that with the scales and distances we had to work at, we hit certain floating-point calculation problems. We solved this by creating an offset system where we moved all data to the origin centralised around the camera itself. This had to happen on component space, due to world space caches being utilised, so again we were able to use the custom delayed load with the same offset data as the rest of the scene based off the new camera position at origin.
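The precision problem, and why recentring on the camera helps, can be demonstrated with a short sketch. The distances and units below are illustrative assumptions, not figures from the production, and the example works on simple world-space points rather than the component-space caches Pliatsikas describes. It rounds values to 32-bit floats to mimic single-precision storage.

```python
import struct


def to_float32(x: float) -> float:
    """Round a Python float (64-bit) to the nearest 32-bit float value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def recenter(points, camera_pos):
    """Subtract the camera position in double precision, then store as float32."""
    return [tuple(to_float32(p - c) for p, c in zip(pt, camera_pos))
            for pt in points]


# Two points 1 mm apart, 10,000 km from the world origin (units: metres).
camera = (10_000_000.0, 0.0, 0.0)
a = (10_000_000.000, 0.0, 0.0)
b = (10_000_000.001, 0.0, 0.0)

# Stored directly as float32, the 1 mm gap is lost: both round to the same value.
naive_gap = to_float32(b[0]) - to_float32(a[0])

# Recentred on the camera first, the gap survives the conversion to float32.
ra, rb = recenter([a, b], camera)
offset_gap = rb[0] - ra[0]
```

At that distance adjacent 32-bit floats are a full metre apart, so fine detail collapses. Near the origin the same format resolves far smaller differences, which is the motivation for moving the data so the camera sits at the origin.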

fxg: What was the hardest shot to work on?

Caires: Probably the reveal sequence for the hangar bay in Episode 1. This was really hard because it had a large number of unique animated assets that had to be done before the shot could be completed; not to mention the environment itself was very complex, so there were a lot of dependencies that needed to be resolved in parallel to make it happen on time.

Actually every shot in Episode 1 was hard to pull off on time due to all the interdependencies that needed resolving since a great deal of assets that appeared in all the episodes had to appear in the first.

Pliatsikas: I agree, Episode 1 had the heaviest content both render wise and dependency wise. I think it’s partially to do with Episode 1 being the “teething” episode too. We had to iron out all the production kinks. This could be just fixing issues and bugs in new tools, or simply noticing content that needs to be tweaked, refined and so forth.