Watch our WIRED DesignFx piece with our media partners WIRED magazine.

Roland Emmerich

Watch Mike’s interview with Roland Emmerich, exclusively for fxinsider members.

Volker Engel

VFX Supervisor Volker Engel also has a co-producer credit on the film. On the film 2012, both Engel and Marc Weigert became co-producers (Weigert joined this film, Independence Day: Resurgence, midway through). Since then, Engel has both produced and vfx supervised, although his connection to director Roland Emmerich goes back even further, to their start in the industry in Germany many years before. “I’m happy to say, it was actually Roland who convinced them (Sony Pictures) that the people who are responsible for the visual effects on these gigantic projects deserve another credit,” explained Engel.

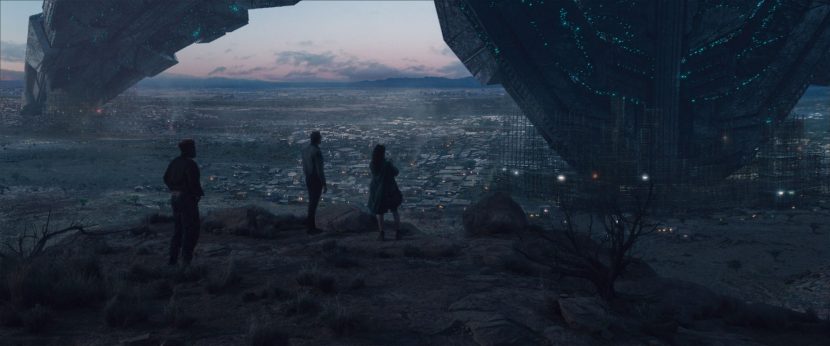

With Engel co-producing, it meant that he started very early with the project. This was key as Emmerich decided to start the process by making a series of approximately 20 key images that would indicate the look and feel of the film. “At the same time, Fox had received the screenplay. Roland explained to me it would be very beneficial for them to also see a slate of images,” said Engel.

In October 2014, Engel started working with visual effects company Trixter in Santa Monica to create these key iconic images that would be the visual template for the film. “At that time, they had an art director called Luis Guggenberger,” explained Engel. “Simone Kraus, one of the owners of Trixter and supervisors, together with Luis, myself, and Roland, we were the four people banging out these images.”

Guggenberger hired five or six other artists from around the world that he had worked with and it “became a bit of an undertaking that spanned all over the globe”. Engel met with Emmerich every second day to go through the latest versions of these images and discuss them. In between, he would sit together with the team at Trixter and discuss what the artists had done and “I would second-guess what Roland would want, which I guess I can do with my now 25 or 26 year relationship with Roland,” joked Engel.

It was this artwork that Engel believes helped green light the new film, and the team put the style frames in the production offices to serve as inspiration. These iconic images translate more or less directly to actual shots that the team made for the film. One example is when the mothership approaches the moon and you see Jake and David being propelled upwards by all these rocks coming down. “As well as the big foot of the mothership hitting the water close to Galveston and creating this big tidal wave,” commented Engel. They also included a similar shot of the foot coming down in Washington, DC, and the destruction in Singapore, when the mothership flies over the city state and this gravity/antigravity sequence plays out.

If the first film had a signature, it was the destruction of key landmarks around the world. And that was reflected again in this film as various key landmarks fell in the path of the oncoming invasion. “Yeah, they love to get the landmarks. That’s Roland. That’s just fantastic, he’s kind of poking fun at himself. Of course you could say why did this building have to fall onto the Tower Bridge and not right next to it? But that is less fun,” laughingly remarks Engel.

A hallmark of the first Independence Day’s landmark destructions was the incredible vfx miniatures. Unfortunately, while a vfx professional can have a romanticised view about miniatures, in a modern film they are hard to justify. “There’s certain scenes where I still wish we could do it and it would make sense. Yes, you get a certain quality out of it… I tried it,” Engel comments.

Emmerich had considered it might be a great move to have an opening in this film which was very similar to the first film. Engel thought that this might be a great place for a miniature, but in the end the scene was cut and no miniatures were used in any part of the new film. “It would have been a one-off, which meant that, even at that point, it actually didn’t make any sense when we compared to creating it digitally, which was pretty much less than half of the budget,” Engel comments.

What did work very well in the new film was the collaboration with the art department, which was particularly tight. “We were lucky enough on this movie to have our visual effects setup and the art department only being about 50 metres away from each other on set,” Engel explained. Even with this tight collaborative loop, Engel would like to see the two departments merge. “I’ll tell you my ultimate dream is that we have one department and it is just one big space and we’re all working out of that space. We’re sharing the same assets,” said Engel. On Independence Day: Resurgence, the team did take the step of having a digital asset manager in the art department, working very closely together with VFX. Then, once the film was in post-production, Uncharted Territory became the data hub for all the various VFX companies who worked on the film.

Uncharted Territory

The Uncharted Territory team did 268 shots, the largest number by shot count, focusing on the Interior mothership, EMP blast / inside the Area 51 hangar, flooded Austin, and created hundreds of planes. Uncharted Territory ran the post-production and effects teams with Shotgun custom tools and a vendor transfer tool called VTT.

Many of the vendors commented on how effective and efficient this setup was for co-ordinating so many assets and shots. “All of the 15 or 16 vendors that we eventually worked on the film came together via VTT,” said Engel. “First of all, it could be accessed by all the vendors, so it had the whole library of the ships and planes and everything that we did. It also had the drawings that were done by the art department. Everybody had access to everything through this central server that we had set up.” VTT was built by two of the programmers at Uncharted Territory and combines custom code with off-the-shelf tools such as Shotgun.

Uncharted Territory is run by Engel with Marc Weigert, who was only able to join this production during post-production because he was busy doing something else before that. In terms of shot count, Uncharted did the most shots, but not necessarily the most complex ones, if you compare it to, say, Scanline.

They were also the hub for the on set work with N-Cam.

N-Cam on set

Emmerich made extensive use of N-Cam on set. The director worked very closely with the system on a daily basis with DFX Supervisor Marion Spates. It is estimated that the N-Cam was used for about 90% of the VFX shoot. “We actually used it extensively already on White House Down,” commented Engel. “So we decided immediately we want to do this again. It had also made quite some leaps in technology.”

On Independence Day, the system was used to show animations on set. “Let’s say we’re inside Area 51, inside the big hangar,” Engel related. “We have dozens of jet fighters lifting off. Roland wants to pan off one of the faces of his actors and pan with one of the jets flying out of the hangar. He actually saw that right there on his monitor.” Spates was responsible for this part of the on-set supervision, together with a two-person team from N-Cam.

This ability to generate animation on set meant that if Emmerich came up with a new idea, he would simply say what he wanted to see and the “team would have about 15 minutes to come up with the sequence and shooting would start using the new assets,” said Engel. This allowed the director to frame his shots more accurately and also show the cast what would be in the shots come post production.

Working with MotionBuilder, Spates would set the key frames and show Emmerich, who could see the elements on his video village monitor split. They were able to use two cameras, so their A and B cameras would both be running at the same time with N-Cam. The team had used N-Cam less widely on White House Down and everyone was keen to exploit it even more on this film. “Roland just loves the fact that he can see what it’s going to look like at the end and what he can do with it,” says Engel.

The shoot was monoscopic, with a stereo conversion later. The cameras were primarily Red Dragons shooting 6K anamorphically. “I would say anamorphic made everything 20 percent more difficult,” says Engel. There were some issues with tracking and yet, “I have to say that Roland and his DP Markus, were looking for a very specific look, which was sometimes a bit of an old-fashioned – so they were okay if there was some vignetting happening on the edges of frame or got a little bit more out of focus on the edges and so on” explained Engel. “That was actually part of the look.”

Scanline

Scanline was the first of the external vfx companies to join the project after the initial work by Trixter. “I can’t say enough about what Scanline did,” remarks Engel. “Obviously, they’re known for their water work. But going back to these images we talked about for the studio presentation…there were two specific images from that initial 20 that they did. One is with Julius’s boat, burning oil rigs around him and tankers capsizing and so on. Then there’s the super-wide shot where you see the wave with the foot on the right side and the city of Galveston on the left side”. Engel explains that both of these iconic shots look remarkably like the concept art specifically due to the work Scanline managed to pull off.

“As Jeff Goldblum says in the first trailer – it’s much bigger than the last one”

For Engel, one of the biggest challenges was the opening ship arrival. The script called for the new spaceship to be about 3,000 miles in diameter. “Starting with it on the Moon when it’s scraping over the moon’s surface (so what MPC did there) and then it went on to enter the Earth atmosphere, extending its legs (vfx by Scanline),” comments Engel. “You’ve seen re-entry sequences in other movies but this is not just one pod that does a re-entry. This ship is just so gigantic.”

Scanline’s team was headed by Bryan Grill as VFX supervisor, and one problem he had to deal with immediately was an unexpected one: the Earth’s curvature. The 3,000-mile-wide ship extended way beyond the horizon line. “In normal life the horizon is roughly 12 miles away, so the ship was always going to be cut off” as it wrapped around the planet.
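The horizon geometry behind that quote can be sketched with the standard line-of-sight formula for an observer at height h above a sphere of radius R: d = sqrt(2Rh + h²). The snippet below is only an illustration, not anything from Scanline’s pipeline. The function name, the mean Earth radius of 6,371 km and the sample viewing heights are assumptions chosen for the example, and atmospheric refraction is ignored.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # assumed mean Earth radius in metres
METERS_PER_MILE = 1609.344


def horizon_distance_m(height_m: float, radius_m: float = EARTH_RADIUS_M) -> float:
    """Straight-line distance from an observer to the geometric horizon."""
    return math.sqrt(2.0 * radius_m * height_m + height_m ** 2)


if __name__ == "__main__":
    # Sample heights are illustrative: eye level, a tall vantage point, cruising altitude.
    for height_m in (1.7, 30.0, 10_000.0):
        miles = horizon_distance_m(height_m) / METERS_PER_MILE
        print(f"height {height_m:>8.1f} m -> horizon about {miles:6.1f} miles")
```

Under these assumptions a viewpoint roughly 30 m up gives a horizon close to the 12 miles quoted, and even from 10 km up it is only a couple of hundred miles away, which is why a 3,000-mile-wide object can never sit fully in front of the horizon.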

The team struggled initially with what would be seen of the mothership. In space, where there is no atmosphere, one should be able to see the whole ship. But seeing the whole ship makes it hard to indicate scale. By contrast, as the ship breaks into Earth’s atmosphere, the team had to juggle the concepts of size and how much glow to add, how much smoke or vapour would be generated, and thus if one would actually be able to see it at all. “Those were shots I was particularly happy with when I saw those coming together. The touching down on the planet, because, just as Jeff Goldblum says in the first trailer, it’s much bigger than the last one” jokes Engel.

The City Destroyer Ship seen in the African desert is the same design as the main ship in the first film. In the story line it is one of the only ships not destroyed, but rather beaten in ground combat. The problem was to find out how to digitally replicate it. There were no plans or blueprints for the ship, only reference from the first film, and even in that film the ship sometimes changed between shots for creative reasons.

Grill did ask if perhaps there was a model from the original film in a museum somewhere, and it transpired that there had possibly been one in a Hard Rock Cafe in Florida. While Grill offered to fly himself down to photograph the model, it turned out it had either been sold or moved somewhere unknown. In the end, the best world expert on the first ship was Volker himself. “He spent more time with it, and knew it the best,” explained Grill.

Grill estimates that in terms of staff on this film, about 20% were in LA and 80% were in Scanline Vancouver. “Mostly compositing and lighting was in LA, Vancouver did the main pipeline,” he commented. The structure of Scanline is such that there is no need to allocate shots between LA and Vancouver, as the company is set up with a dedicated gigabit connection between the offices and all the PCs are virtualized (PC-over-IP). Mohsen Mousavi, the VFX/DFX supervisor for Scanline in Vancouver, joked that he started work at Scanline in 2013 and it took him almost six months before he discovered his personal PC was not actually in Vancouver. Even with two 2K monitors on his desk, “I just did not know the machine wasn’t there – you see no lag,” he jokes.

Mousavi explains that one of the remarkable aspects of the Scanline Flowline software is that it can handle such vast simulations in one pass. “Honestly, if you were trying to do this in other packages like Houdini or fumeFX, you’d need to do the sim with a 1000 containers,” says Mousavi. “Flowline can do it in one simulation. It gets distributed over the farm, but it is still one simulation and if something goes wrong, it is easier to send the whole simulation to the farm again to get the fix, than to try and do just the 5% fix and somehow composite a solution.”

When you have a vast object in terms of scale and then a smaller but detailed object closer to camera, you can get precision error. You get it with any software – Maya or Max or whatever – but the precision error means numbers get effectively rounded, and that is bad for simulations. For example, this would be an issue in a shot with a small detailed fishing boat in front of a vast tidal wave.
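The rounding described here can be illustrated with 32-bit floating-point numbers, where the gap between representable values grows with the size of the number. This is a minimal sketch rather than a description of any particular package: the use of 32-bit floats, the 22 km offset and the half-millimetre detail size are all assumptions picked for the example, and the helper names are hypothetical.

```python
import struct


def to_float32(value: float) -> float:
    """Round a Python float (64-bit) to the nearest 32-bit float."""
    return struct.unpack("<f", struct.pack("<f", value))[0]


def float32_spacing(value: float) -> float:
    """Gap between a positive float32 value and the next float32 above it."""
    bits = struct.unpack("<I", struct.pack("<f", value))[0]
    next_up = struct.unpack("<f", struct.pack("<I", bits + 1))[0]
    return next_up - to_float32(value)


if __name__ == "__main__":
    wave_offset_m = 22_000.0  # hypothetical: geometry 22 km from the scene origin
    boat_detail_m = 0.0005    # hypothetical: half a millimetre of boat detail

    print("spacing near 1 m:  ", float32_spacing(1.0))
    print("spacing near 22 km:", float32_spacing(wave_offset_m))

    # Added to the large coordinate, the small detail is rounded away entirely.
    moved = to_float32(wave_offset_m + boat_detail_m)
    print("detail survives at 22 km:", moved != to_float32(wave_offset_m))
    print("detail survives at 1 m:  ", to_float32(1.0 + boat_detail_m) != to_float32(1.0))
```

In this sketch the same half-millimetre offset is preserved near the origin but lost 22 km away, which is the kind of rounding that makes mixing vast and tiny objects in one coordinate range awkward.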

The solution that the team came up with was to publish the scene out of layout in one scale (say 1:1) and then as they are building the next component (say a simulation), one can import it at a different scale. Once the simulation is done the camera can be published at a different scale and this means you can have multiple different ‘scales’ in the same scene. This is not in reference to the objects looking to an audience a different scale but rather the computer seeing their data at closer scales. Creatively, just for effect, the team could then also simulate at a different scale to the scene scale.

This went further, as the mothership was so vast the asset was itself scaled between shots. An example of this would be when a foot of the ship comes down in the water in the boat sequence. “The toe that comes down in Galveston at 1:1 scale was about 22 km wide,” explains Mousavi. “If you put that toe down on Washington, one toe covers most of the city and so you couldn’t put all three toes down inside Washington.”

The solution was to creatively vary the scale for the shot or the composition. While this alone is not a problem, it does affect many of the procedural aspects, procedural textures, displacement maps, and shading detailing and those need to be corrected. Scanline introduced a metadata tag to allow for an inverse compensation to procedural items if the model scale was varied for creative or blocking reasons. The simulations were also scaled appropriately.
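The idea of an inverse compensation can be sketched as follows. This is an assumption-laden illustration, not Scanline’s implementation: the `creative_scale` tag name, the `ProceduralSettings` fields and the toy stripe pattern are all hypothetical. It assumes a pattern evaluated in scene space, so that when a model is uniformly scaled by s the pattern frequency is divided by s and the displacement height is multiplied by s to keep the detail locked to the model.

```python
import math
from dataclasses import dataclass


@dataclass
class ProceduralSettings:
    frequency: float     # pattern cycles per scene unit
    displacement: float  # displacement height in scene units


def compensate_for_scale(settings: ProceduralSettings, model_scale: float) -> ProceduralSettings:
    """Return settings that keep the pattern locked to a uniformly rescaled model."""
    if model_scale <= 0:
        raise ValueError("model_scale must be positive")
    return ProceduralSettings(
        frequency=settings.frequency / model_scale,        # inverse of the model scale
        displacement=settings.displacement * model_scale,  # follows the model scale
    )


def stripe_pattern(position: float, settings: ProceduralSettings) -> float:
    """Toy 1D procedural: a sine stripe evaluated at a scene-space position."""
    return math.sin(2.0 * math.pi * settings.frequency * position)


if __name__ == "__main__":
    base = ProceduralSettings(frequency=4.0, displacement=0.5)
    asset_metadata = {"creative_scale": 0.25}  # hypothetical tag name and value
    scaled = compensate_for_scale(base, asset_metadata["creative_scale"])

    point_on_model = 0.3  # a position on the 1:1 model
    same_point_scaled = point_on_model * asset_metadata["creative_scale"]
    # The two values agree up to floating-point rounding.
    print(stripe_pattern(point_on_model, base))
    print(stripe_pattern(same_point_scaled, scaled))
```

Storing the scale as metadata on the asset, rather than baking it into each shader, means one lookup can drive every procedural that needs correcting.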

The Scanline pipeline was also a deep pipeline. Scanline built a lot of custom exporters to be able to work efficiently with V-Ray. “We were able to do a lot of deep compositing with the volumetrics,” says Mousavi. “We were a bit sceptical at first but it worked really well.”

The deep pipeline meant that the company had to manage huge sim caches and vast deep data caches, which need to be maintained until compositing. This is where the shared infrastructure between the two offices really pays off. For example, in a shot with the Mothership landing with smoke, Mousavi said that one overnight iteration of a simulation would be 400 terabytes of data. That’s for only one iteration, and Scanline would nearly always need more than one. In fact, the team could be sending six or seven simulation iterations to their farm at once over a weekend.

The beam in the ocean plasma drilling sequence was referenced from the beam that destroyed various landmarks in the first film. The issue in this film was how it would interact with the water. In the end the problem was partially solved, as most of the drilling was only seen on monitors in Area 51. “We originally had a whole 800 frame drilling sequence that starts with first hitting the water,” says Grill. “That got cut up and used on monitors and so we just went back and only did the bits that were required.”

For more on Scanline’s work on Independence Day: Resurgence, listen to our fxpodcast with Bryan Grill and Mohsen Mousavi.

Weta

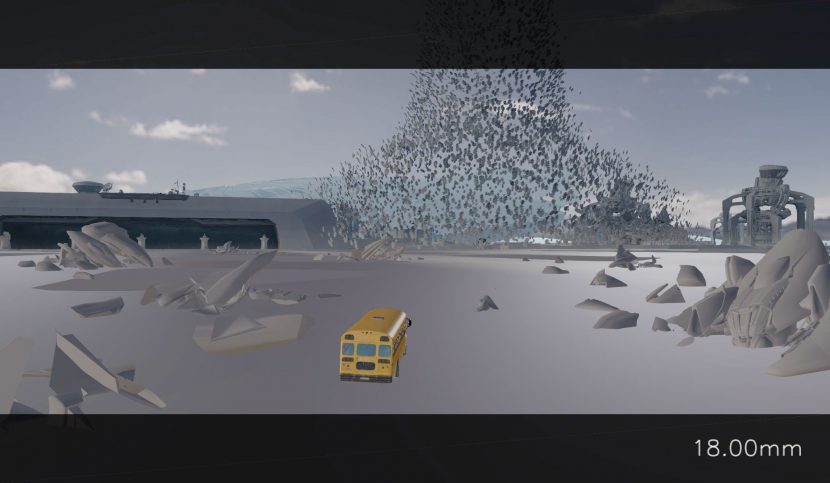

One of the standout sequences in the film is the Queen Alien attacking Area 51 and chasing down a bus load of kids as our heroes try to escape across the desert salt flats. All of the shots of the Queen Alien were handled by Weta Digital in Wellington.

Emmerich worked for the first time with Weta Digital on this film and he told fxguide “I will again.” He was not only extremely pleased with the end result but went to great lengths to explain how Weta’s team creatively contributed to the film by suggesting, contributing and collaborating in a way that has clearly impressed him and Engel, who commented: “I have to say and congratulate Weta also for the work that they did – to take what we had planned and make it even better”.

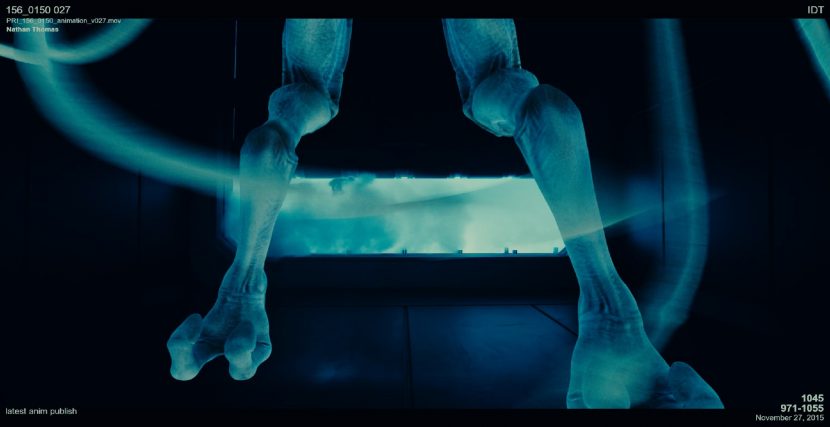

Matt Aitken was Weta’s Visual Effects Supervisor. He and the team produced some 230 shots including the Queen’s chamber, the Queen protected by vortex in Area 51, her subsequent stealing of the A.I. sphere leading to her death, and of course the Queen & bus chase. Aitken credits Dave Clayton and the animation department at Weta with re-previsualising the sequence and extensively changing the camera framing in ways that really appealed to Emmerich and Engel.

Part of the reason the previz work needed extending was that the bus sequence was one of the first things shot, so the standard previz team had not had as long to work on it as on some of the later principal photography. For the bus chase, Engel remembers trying to figure it out in previz at the beginning of 2015, and he was reminded of something similar he did in White House Down, where there is a chase over the White House lawn with the President’s limousine.

Principal photography shot the salt flats for about three days. The team first shot plates and then a lot of still photography for the lake and the surrounding mountains. There were also a lot of live-action passes shot from a helicopter with a real bus. The team put out orange cones all over the salt lake for tracking markers.

Initially it was expected that more of the live action bus would be used and that while Weta would add the alien mother they would also be removing tire tracks. In the end, a vast amount of these shots became fully digital shots, with the alien, the bus and all of the environment replaced. “We’re only half-jokingly saying it’s great reference, because it’s amazing reference for what the bus is doing and Weta got a lot of material to match to for their shots” explained Engel.

Editorially the production cut and edited the live action to get the scene closely blocked out before Weta replaced most of the shots with matching fully digital shots. There were cases where the Weta team did keep the real bus and then added the queen to it.

Aaron Sims was the creature designer for the Queen Alien and the other new aliens such as the soldiers. For a couple of weeks he was also joined by Patrick Tatopoulos (who created the alien for the first film) to offer some additional ideas. Sims and his team met with production designer Barry Chusid and the director about twice a week even when the production was already shooting in Albuquerque. “That was a very detailed process. That was all hammered out in advance,” comments Engel.

There are effectively two Queen Aliens: her exoskeleton suit and then the Queen Alien herself. Aaron Sims’ team handed off medium resolution geometry with some ZBrush model work. Once Emmerich was happy with the Queen’s shape and basic design, Weta took over and did the fine detailing and all the texturing.

Aitken and his team did show various options using the original photography but then showed alternative options with the fully CG approach and the new flexibility that the fully CG shots allowed. “Roland signed on.. and so in that sequence there is almost no live action photography in that exterior sequence that is plate based, the bus is almost completely CG in that sequence,” explains Aitken. Weta was initially going to contribute on a different set of shots and this changed to focusing on the Queen, so while Aitken was on set he ended up not being on the set for the bus salt lake shoot.

Paul Harris at Weta produced a very powerful shader for the salt flat floor, which worked for big wide shots and right down to closeups near the wheel. “He encapsulated everything in this one procedural shader and it became a real workhorse for us,” says Aitken. “I think it looks great.. it looked just like the real plates but without the camera truck tire tracks.”

What made the shot more difficult was staging the sequence in full broad daylight. Once the production accepted a fully CG solution for almost every exterior bus shot, the team could position the sun to best effect. While Aitken comments that the contrast range was a fairly standard 3 stops between key and fill, being able to place the Queen and the bus at the best angle to the sun really helped in making the shots work individually and as an edited sequence.

Getting the background to look real relied on some new technology that Weta is only just now incorporating in their Manuka renderer. “Manuka now incorporates a physically based sun and sky light, for this light you get the disc of the sun and the whole sky dome, and you just dial in an azimuth and turbidity (cloudiness or haziness caused by particles that are generally invisible to the naked eye, similar to smoke in air). For example, is it alpine air or Beijing on a bad day,” Aitken explains.
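How an azimuth control maps to a light direction can be sketched with basic spherical coordinates. This is not Manuka’s interface, which the article does not describe. The function name, the Y-up axis convention, azimuth measured clockwise from north and the extra elevation parameter are all assumptions for illustration, and turbidity, which would shape the sky colour and brightness rather than the sun’s direction, is not modelled.

```python
import math
from typing import Tuple


def sun_direction(azimuth_deg: float, elevation_deg: float) -> Tuple[float, float, float]:
    """Unit vector pointing toward the sun.

    Assumed convention: x = east, y = up, z = north;
    azimuth is clockwise from north, elevation is above the horizon.
    """
    azimuth = math.radians(azimuth_deg)
    elevation = math.radians(elevation_deg)
    horizontal = math.cos(elevation)  # length of the projection onto the ground plane
    return (
        horizontal * math.sin(azimuth),
        math.sin(elevation),
        horizontal * math.cos(azimuth),
    )


if __name__ == "__main__":
    # Hypothetical settings: sun in the south-west, 35 degrees above the horizon.
    x, y, z = sun_direction(azimuth_deg=225.0, elevation_deg=35.0)
    print(f"sun direction: ({x:.3f}, {y:.3f}, {z:.3f})")
```

With a single direction like this driving both the sun disc and the sky dome, every object in the shot is lit from the same consistent source.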

This new light did not produce the actual sky in the shot (it has no clouds, etc), but it did give the correct exposure for the sky dome and is physically accurate to all the environment. “That gave us skies much brighter than what we had done in our matte paintings, our matte paintings were too saturated and blue.. the moment we graded our skies to match, – the shots suddenly looked photographic” explained Aitken.

This model, of course, lit the bus and Queen as it lit the sky and the environment in one consistent, energy conserving way. Weta could not simply just match the CG to the plate shots as the principal photography had been shot over 3 days and throughout the day, so they were vastly variable. “By contrast the new lighting model was consistent and photographic across all the shots in the sequence,” says Aitken.

Regarding the animation, this was the first time the audience had seen either a Queen or a running sequence. Weta did a lot of exploration to find the right run cycle. Aitken relates that “once we got Roland’s approval on that run cycle, that became a critical piece of reference for us because the sequence is essentially a chase.”

There were several options for the ending, but in the final version, one of the challenges for Weta was the ooze and gel-like substance that surrounds the actual Queen. Weta solved this with two advanced simulations. “There is one which is a surface based simulation, that covers her and the inside of the suit,” says Aitken. “Then there is a sim which we shaded the same way but it sloshes around and spills out…I was very proud of how that came out.”

Interestingly, a shot was added where the windshield of the bus is covered in the same ooze. “That was one of the trickiest of all of those sim shots believe it or not. I knew it worked when I saw the render looking into the bus with David, Julius and all the kids, which was them on a ‘card’ and ray traced through the fluid sims with diffusion etc. The audience needed to know what they were looking at before the wipers started for the gag to work,” he explains.

Image Engine

Image Engine were tasked with modelling and animating all the aliens in the film other than the main Queen Alien. The team did about 165 shots. Of those, 96 shots included characters.

The three main locations were in the holding prison, up inside the alien ship after the failed attack, and the foliage sequence in the harvest area. Martyn Culpitt served as the VFX Supervisor for Image Engine with Jason Snyman as Animation Supervisor, Mark Wendell as CG Supervisor, and Keegen Douglas as Comp Supervisor.

In this film there were at least three very distinctive aliens: the Queen Alien at the end of the film (handled by Weta), and then the Colonist and Soldier aliens. The Colonists were the only aliens that had been seen in the first film. Thus, the Soldier aliens allowed Image Engine more flexibility in terms of design under the supervision of lead character designer Aaron Sims. The Colonists are about 12 feet tall and the Soldiers much larger and more aggressive, and they have their armor more built in. They also have distinctively new forms for their legs. “The main thing is the size – they are a lot more intimidating and the colonist had a rifle and now the soldiers have more of a cannon,” explained Culpitt.

From a CGI asset and look-dev point of view, however, there is an extra ‘alien’ since there is “the colonist suited and then the colonist that falls out of the suit” explains Mathias Lautour, Image Engine Look Development Lead.

In the first film the aliens were puppets and in this film, especially in the mothership, the team needed to develop a free standing walk cycle. Image Engine was selected for their outstanding character work in films such as District 9 and Chappie amongst others.

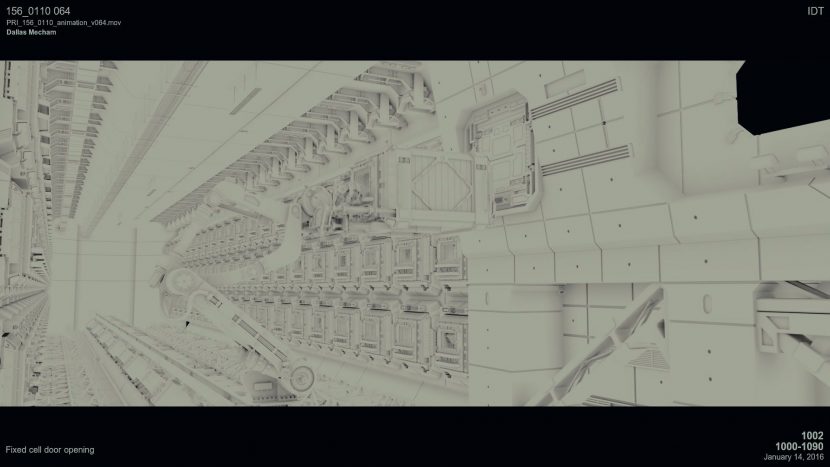

The Prison set

Image Engine created the alien prison inside Area 51 and everything that happens with the aliens inside Area 51 where Dikembe Umbutu uses his machete and stabs an alien to death. Importantly, Image Engine also had to animate the little alien creature which emerges out of it.

The initial design for the Colonist aliens was very much from the first film. The production actually provided a model from the original 1996 Independence Day, held in a museum in Berlin, which was scanned for reference. “Unfortunately it was pretty old…it was dusty and the colour was washed out,” explains Lautour. Emmerich was keen for the digital model to match this model as closely as possible.

The prison set was a vast multi layered set with a large amount of geometry, lights, and a population of annoyed and pissed off captured aliens.

Most of the aliens in the holding prison were Colonists and they had to be animated in a huge variety of states, from subdued to wildly excited. A lot of effort was placed into exploring their tentacles and how these might be used by the creatures. The team enjoyed exploring the creature logic, fleshing out the reasons for their behaviours and working out how to express the correct tone of the characters at that point in the story through the alien form. Image Engine felt very much like this was a collaborative effort where the film makers allowed them to explore animation and contribute major design/character points.

The alien that comes out in the prison is a Colonist alien based on the alien seen in the first Independence Day. The shader and look dev was quite complex and made with large amounts of sub-surface scattering (SSS) and there is actually an internal illumination under their skin. The key was to try and make it work in the shot when the set was very dark, and very blue. The alien was also covered with layers of slime fx animation and this somewhat also made reading the alien itself difficult. Of course, the set was dark for a reason and so the team needed to balance having the aliens in the darks and menacing while also making them stand out just enough to allow the audience to understand what was occurring.

The animation started with what Animation Supervisor Jason Snyman describes as a “layer of mo-cap.” The team used the Xsens motion capture system and blocked out some spaces in their office. “We got some chairs, measured it out and scaled it to the size different and then acted things out,” says Snyman. “We did a few variations of what ‘crazy’ would be versus a bit more calm.”

This initial mo-cap, used almost as a reference, led into complex keyframe animation. The tentacles were used in part for balance, but also as a way of expressing their motivation. The team spent more than three months developing the look and feel of the tentacles. The original tentacles were done with guys flicking ropes around off screen next to a physical suit, so the question became how much like the original the new tentacles should look.

In the end, the team took the approach of trying to recreate the ‘rope flicking’ completely. They developed a simulation that had that movement from the original concept but then took into account the body movement of the new digital character for a combined result. The concept that the team worked to, in terms of the intent of the tentacles, was not dissimilar to a peacock. The aliens do use the tentacles to do things and grab objects but they also developed the thrashing around tentacle behaviour to intimidate. One reference was to think of the tentacles as the mane of a lion.

From Image Engine’s point of view, the animators spent a lot of time considering the motivation of the aliens and how that could be represented. The team actually animated “a bunch of clips that did not make it into the movie of the Prison aliens sitting around feeling sorry for themselves in their cells, kicking the ground, feeling sorry, and also we tried the Hive mind approach of having them move in unison.”

As there were a lot of captive aliens in the cells, the team took time to explore types of emotion. One of the original directives was that the aliens aren’t monsters, they are still ‘people’ in effect. How they would move in the cells was extensively explored including the colonists moving more like insects, crawling on the ceilings, into corners, or looking out and being curious. It was a wide range of motion to present to the VFX supervisor and the director.

While on set, Culpitt took an HDR (see image to the right). He so liked the look of the way the light played that he showed it to DOP Markus Förderer, who decided to include this as a new shot. Below is a screen grab from the video monitor split showing the RED camera monitoring of the same shot during set up (hence the last-minute cleaning).

This became a shot in the final film when the attack of Area 51 happens and the alien colonists escape.

Above are 1) the output from the actual plate photography, 2) the alien CG blocking and then the 3) final frame.

As can be seen above, the team shot the plate photography as 5:1 RC compression at 5600K white balance at 6K resolution but rated at only 500 ISO, which allows a third of a stop extra in the highlights as the camera is rated for 800. Image Engine found the blue screen shots like the one shown below to be clean and very workable for keying.

To recreate the lens flares from the anamorphic lenses, the team worked hard to match digital flares to the real flares off set. They had lens flares shot on set and these were matched using the standard tools in Nuke, and the Optical Flares plugin from Video Copilot.

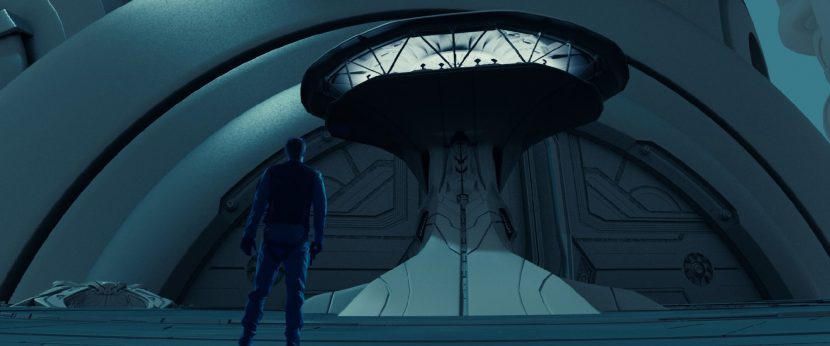

The Mothership set

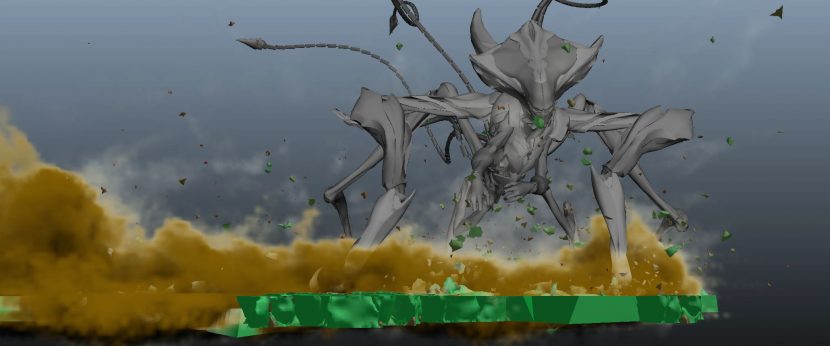

When our heroes fly into the Mothership and the attack fails, Image Engine had to create a series of unusual environments as well as a host of new soldier aliens.

The pipeline at Image Engine is Maya-based with Mari used for UV texturing the aliens, along with some Photoshop texture work for the platform. The team used 3Delight for rendering. The rendering and shading environment is based on Image Engine’s open-source node-based framework Gaffer, embedded inside Maya using Caribou, their host-agnostic Look Development & Lighting engine. All the compositing was done in Nuke. The simulation work was done in Houdini and then exported to Caribou.

Image Engine finds 3Delight to be an excellent solution for both their rigid body and character work. With Gaffer and Caribou, they are able to drive 3Delight using a great layer-based approach to shading, which allows considerable artistic flexibility using their now extensive shader library.

For the platform our heroes find themselves on inside the alien ship, the floor was replaced digitally. In the original film, the floor of the alien ship was tin foil crunched up and painted black. Once again for this film, the art department painstakingly replicated this and fully detailed the floor of the set. But the night before the shoot, the production, under Roland Emmerich’s supervision, decided to paint the entire set, in particular the floor, completely blue. This apparently was the right decision, as Emmerich loved the new digital floor, telling the Image Engine team, half-jokingly, “Great…now I never need to make another set!”

On set, the art department told Image Engine that this film was only matched by Emmerich’s own Godzilla in terms of square feet of blue screen. There were six full stages of complete full blue screen coverage.

The harvest set

In total, the team did 165 shots, and 28 of them were on the incredibly complex harvest set. This ‘food bowl’ for the aliens was complex to develop in terms of look-dev and also required the largest number of nearly fully CG shots for the team. Mathias Lautour, Look Development Lead, commented that this sequence required by far the most look-dev, a huge amount given the relatively small number of shots in the film. “It was the biggest part of our workflow – it was pretty epic actually,” Lautour relates.

The tentacles of the soldiers were made to be more like those of an octopus, moving in much more of a stealth mode than the colonists’ tentacles. This gave the soldiers a more menacing rather than hostile animation.

The actual environment was all CG; apart from the blue screen, there was only a water tank on set. The intent was to have the actors move through the water, but Image Engine replaced almost all the water, apart from some splashes.

Keegen Douglas, Comp Supervisor, and Martyn Culpitt were on set for the plant sequence. The art department had built large twisting plants, but again these were dispensed with, since it was decided that anything filmed on set could not be animated to interact with the digital alien soldiers. There were some stumps and surface kelp, but again this was all replaced or comped into the digital environment around the actors’ legs. The only live action alien elements in the whole film were a couple of alien feet that are seen as they step into the water from the POV of our heroes, who are submerged.

In the largest of the giant harvest shots in the alien mothership, there are half a million plants, or 47 billion polygons of plant geometry. These were built up in 3Delight by instancing three basic heights of alien plants and two different variations of plant type.
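The figures quoted above imply an average of roughly 94,000 polygons per plant, which is why instancing matters: the heavy geometry is stored once per prototype, and each plant in the shot is only a lightweight reference plus a transform. The following is a minimal Python sketch of that idea, not Image Engine's actual setup. The height and type labels, the `Instance` class and the assumption that the three heights and two types combine into six prototypes are all hypothetical, chosen only for illustration.

```python
import random
from dataclasses import dataclass
from itertools import product

# Figures quoted in the article.
TOTAL_PLANTS = 500_000
TOTAL_POLYGONS = 47_000_000_000

# Hypothetical labels: the article only says "three basic heights"
# and "two different variations of plant types".
HEIGHTS = ("short", "medium", "tall")
PLANT_TYPES = ("type_a", "type_b")


@dataclass(frozen=True)
class Instance:
    """A lightweight reference to a shared prototype plus a transform."""
    prototype: tuple      # (height, plant_type) key into the prototype set
    position: tuple       # (x, z) placement on the ground plane
    rotation_deg: float   # rotation about the vertical axis


def build_prototypes():
    """Assumption: every height exists for every plant type (3 x 2 = 6)."""
    return list(product(HEIGHTS, PLANT_TYPES))


def scatter(count, extent=1000.0, seed=0):
    """Place `count` instances at random; geometry is never duplicated."""
    rng = random.Random(seed)
    prototypes = build_prototypes()
    return [
        Instance(
            prototype=rng.choice(prototypes),
            position=(rng.uniform(0.0, extent), rng.uniform(0.0, extent)),
            rotation_deg=rng.uniform(0.0, 360.0),
        )
        for _ in range(count)
    ]


def average_polygons_per_plant():
    """Average implied by the article's totals."""
    return TOTAL_POLYGONS // TOTAL_PLANTS


if __name__ == "__main__":
    plants = scatter(1000)
    print(len(build_prototypes()), "prototypes,", len(plants), "instances")
    print("average polygons per plant:", average_polygons_per_plant())
```

In this sketch the renderer would only ever need to hold six full-resolution plant meshes in memory, however many instances are scattered.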

MPC

MPC worked primarily on the moon sequence, with a global team of over 300 artists delivering some 219 shots, 65 of which were fully CG. One of the challenging aspects for MPC on the film was the number and scope of the digital assets that were required.

MPC opened the movie with a ten-minute, action-packed, 200-shot sequence based on the moon. It starts with the arrival of a new weapon at the Earth Space Defense Station. It finishes with the alien mothership destroying the Moon Cannon and most of the moon on its way to the earth to harvest its core.

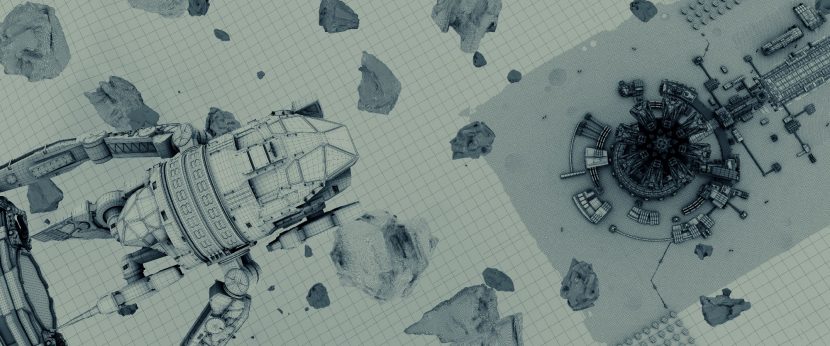

MPC built the lunar environment using NASA reference stills and art department concepts. “We invested heavily in well-made assets; we knew we would reuse these models in multiple shots,” explained MPC visual effects supervisor Sue Rowe. In total there were 26 major assets that had to be built, from the moon base and its Cannon to the moon tug, the mothership, and of course the wreckage it created.

For more distant views or one-off angles, MPC took the asset and projected 3D digital matte paintings onto the geometry. The most complex asset was the moon Cannon itself. It is over 300 metres tall (the height of the Eiffel Tower) and features heavily in the opening of the film. In addition, MPC built the mothership, numerous CG lunar vehicles ranging from the Moon tug (a nifty flying machine with mechanical arms for lunar cannon maintenance) to smaller moon buggies, and fighter jets. They also created CG digital doubles of David (Jeff Goldblum) and Jake (Liam Hemsworth).

“However, the majority of our work was creating the Lunar surface,” explained Rowe. “We had 2 main sites on the moon, the ‘Sea of Tranquility’ and the ‘Van de Graaff Crater’ on the far side of the moon.” Bringing the moon to life was fascinating according to Rowe, who said “we studied the properties of the moon surface to make sure our simulations would be geologically representative. The production supplied us with some great looking concepts of the Moon and Base, from this we built the many hundreds of smaller buildings in a modular fashion, so we could reuse them economically.”

The team studied the previz to allow them to focus their time on modeling relevant detail. MPC added lunar dust particles and lunar buggies to the moon base to give it life, as well as little landing lights which flashed on and off, referencing more earthbound industrial sites such as oil rigs and airports for inspiration.

“One of the fun things we did was add multiple layers of tire tracks and footprints to the powdery surface. This made it look like a working military base,” explains Rowe. The sheer scale of the moon base was a task in itself; MPC took four months to build it and another four months to destroy it.

“We thought that asset was big, then we started on the mothership,” joked Rowe. In this film, the aliens return in a massive 4,500 km (3000 mile) wide vessel. The ship dwarfs the moon and the audience once again sees the huge shadow travel over the lunar surface, evocative of ID4. Ultimately, the humans and the aliens fire on each other but the mothership demolishes the moon base with its iconic green laser, synonymous with the first movie.

MPC used Maya to build assets, lighting was done in Katana, and comps were done in Nuke. Matte paintings were rendered in Photoshop and re-projected in Nuke. The rigid body destruction was done using MPC’s proprietary Kali software. “It’s a powerful tool,” says Rowe. “Once set up, the attributes of the materials are defined and then the physics of the explosion are added. It creates really great predictable, repeatable results.”

MPC researched the geology of the moon surface in great detail. It was important to the team that the destruction of the organic surfaces looked realistic. “We used RealFlow and Houdini for the iconic alien green beam from ID4,” Rowe explains. Emmerich wanted a “homage” to the 1996 beam, but with some minor added enhancements. MPC also created the shield hits and the beam in multiple other shots and shared this with the other vendors.

When we first see the mothership as it heads towards the earth via the Van de Graaff Crater, our heroes are in full space suits investigating the alien crash site. The mothership ploughs into the surface of the moon creating a meteor storm of debris, which creates a tsunami of wreckage and lunar soil heading straight for our heroes. The moon tug sweeps in at the last minute and saves them.

What ensues is a “cat n mouse trip” as the moon tug tries to stay ahead of the mothership’s wave of destruction. For this sequence, live action plates were shot in a studio against blue screen. “We had partial sets for the lunar surface, our actors were on wires to simulate the differences in gravity,” explains Rowe. “We simply added glass to the helmets to tie them into the Virtual world they were immersed in.” As soon as the explosions start, MPC switched to full CG digital doubles. All the actors were scanned and photographed in detail to digitally replicate them in the CG suits.

After the beam decimates the moon base, the mothership heads past the destroyed moon on its way to earth. “Our final shot is of the Mothership detonating 8 of the ‘Orbital Defense Satellites’ in one enormous blast. The shot is full CG and was the last shot to complete on deadline day. All that action and it is only ten minutes into the start of the film!” Rowe laughingly adds.

Cinesite

Cinesite in Montreal & London worked on the exterior Area 51 shots, pretty much everything without the Queen, according to Engel. “There’s specifically a big piece in the movie where the queen ship flies towards Area 51 and then starts attacking it,” he explains. Cinesite joined the project a little later than some, beginning in December 2015, working on the project for five months in total. They did not get the final layout of the Area 51 assets handed over to them until March 2016.

The team at Cinesite, led by Holger Voss, completed the majority of their 141 shots in just two months. Prior to that they used dummy geo. The team got a non-destructed version of Area 51 from Weta Digital. Weta had developed the assets for the Queen’s attack for the orb; Cinesite did the earlier Area 51 sequence, when the Queenship comes over the mountain and attacks.

Cinesite was a company that really needed to make full use of the Uncharted Territory Hub. Not only did it get the Area 51 geo from Weta via the Hub, but also all of the fighters that attacked and the main Queenship. Keeping up with the current versions of so many multi-vendor assets is a process that can cause major problems, but it worked remarkably well using the VTT system. Area 51 was a fully CG build that could be viewed from any angle, while the surrounding environment was projected matte painting work.

The only major asset that Cinesite had to create themselves was the QueenShip. This was done based on a proxy completed at Uncharted Territory, and in part based on the City Destroyer. As with the other assets, they passed that asset back into the VTT and it was then used by Digital Domain.

Cinesite rendered using Arnold. The company moved to Arnold about one and a half years ago, and the team finds it produces great images and is easy to get started with. The biggest issue is “noise in the indirect, so that was tricky to keep the render times under control,” explains Voss. Arnold will converge to a very low noise solution, but it may take some time to do so. Voss found the main issue was therefore balancing lower noise vs higher render times on the vast, complex and asset-rich shots. Voss feels “everyone can get started really easily and you can get good looking results within a few days. But then the synaptic part of getting render times and noise ratios into a good level still requires senior people to be involved.”
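The trade-off Voss describes follows from a general property of Monte Carlo rendering rather than anything specific to Cinesite's settings: noise falls with the square root of the number of samples, so halving the noise costs about four times the samples. A rough Python sketch of that rule of thumb is below. The function names are hypothetical, and the assumption that render time scales linearly with sample count is a simplification made for illustration.

```python
import math


def samples_for_target_noise(current_samples, current_noise, target_noise):
    """Estimate the samples needed to reach `target_noise`.

    Uses the standard Monte Carlo relationship noise ~ 1 / sqrt(samples).
    The noise values can be in any consistent unit (e.g. standard deviation).
    """
    if current_samples <= 0 or current_noise <= 0 or target_noise <= 0:
        raise ValueError("samples and noise levels must be positive")
    ratio = current_noise / target_noise
    return math.ceil(current_samples * ratio ** 2)


def relative_render_time(noise_reduction_factor):
    """Approximate render-time multiplier for a given noise reduction.

    Assumption: render time grows linearly with sample count, which
    ignores fixed costs such as scene loading.
    """
    if noise_reduction_factor <= 0:
        raise ValueError("noise reduction factor must be positive")
    return noise_reduction_factor ** 2


if __name__ == "__main__":
    # Halving the noise from a 16-sample render.
    print(samples_for_target_noise(16, 0.5, 0.25))
    # Cutting noise to a third of its current level.
    print(relative_render_time(3))
```

This is why the last bit of noise in indirect lighting is so expensive to remove, and why deciding where to spend samples tends to need experienced eyes.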

LUXX Studios

LUXX Studios executed five different sequences which had very different types of VFX. The team was headed up by Chris Haas, VFX Supervisor for LUXX Studios, and Andrea Block, VFX Supervisor (art-direction) and VFX producer, LUXX Studios.

One of the most interesting sequences was to show a futuristic version of Washington, DC that could exist in present times. In the film, Washington has been rebuilt with the benefits of alien technology such as anti-gravity engines. This meant it needed to look different from today and display the new designs and advances the alien technology had allowed, but not be so fantastic as to detach from the audience’s everyday perceptions.

Layouts for the shots were done at LUXX Studios in close cooperation with Engel according to Emmerich’s vision. “The setup of the full CG shots was intricate since Roland likes the freedom of full control in CG space and pays very much attention to detail,” explains Haas. “He has very keen eyes and made fantastic choices by trying out many iterations for setting the right framing, timing and movement of camera.”

The team worked on the project for six to eight months and it was both challenging and rewarding for Haas and his team. “We greatly enjoyed to be guided by such an experienced and knowledgeable team of VFX Supervisors like Volker Engel and Doug Smith with Marc Weigert at their side. As well as our VFX Producer always keeping control and overview on this immense work amount and VFX shot count”.

Futuristic Design and build of Washington DC

In October, LUXX received the first concept sketches and city plans by Vienna Architects showing the New Washington City Core. These showed the New Forum now flanking the Mall, a bigger New Capitol at its head in the East, and an immensely huge Washington Memorial in its Center commemorating the victims in the War of ‘96. Only the basic structure and locations of the key buildings were saved, as the rest of the city was redesigned after destruction. Only the White House stayed exactly the same in scale and look.

LUXX designed new high-rise business districts, created futuristic, modern skyscrapers and apartment buildings and public park spaces for new city elements behind the Capitol, along New Forum and White House area. Andrea Block recalls that “many concept ideas were inspired by a research trip to World Exhibition in Milan 2015 but we found out that Roland was looking for a much more present day copy of a futuristic Washington believable in 2016”.

At the start of the design process LUXX expected to reuse much of the Washington model that they had built for White House Down two years earlier. But eventually, Emmerich wanted to add so many new buildings, shapes, materials and set elements that almost all of the inner core of Washington was rebuilt.

Alex Hupperich, 3D lead, pointed out that “the vast scale, amounts of assets and level of details were stretching the limits of 3DS Max to be handled within one scene. Even though we used V-Ray proxies, rail-clone (parametric modelling plugin) and forest builds, we had to split up the environments for shot angles.”

The huge stretch of grass called for different sorts of vegetation, extra textures for color, dryness and walking paths for more variation. The modeling and shading of metal surfaces, especially of the Marine 3, was demanding on reflection, modeled patches, dents and bolts plus the magnetic engine bust and blinking navigation lights. “Our 3D Team did an excellent job on rebuilding what the aliens had destroyed in ‘96,” adds Hupperich.

Modelling Alien Technology Gear

In addition, LUXX added some futuristic elements showing the advanced technology of the new Era, such as the magnetic elevated light rail and new devices like floating ultra big screens or Jumbotrons with a specially designed light pattern on their surfaces for their on and off state. They also created floating construction platforms, used for transport and equipped with camera cranes for TV coverage, and flying moon tugs which serve as airborne construction vehicles for all types of construction tasks. “Those were sent to us as models or concept art and we were redressing or rebuilding them for extra details, designing textures with fine tuning for shading,” explains Haas.

LUXX modeled three different types of airplanes: AWACS, Marine 3 and Air Force One. These inhabited three different sets of airfields: Area 51 Nevada, Andrews Airbase Washington and the Cheyenne Mountain Complex with military base and nuclear bunker in Colorado.

Generating Audience for President’s speech on Independence Day

Another big task was the animated audience on the bleachers surrounding the President during the Independence Day speech. “Many hundreds of animated CG people were animated by using motion capture of our team members,” explained Block. “In order to get most natural behavior during listening, [we created] small interaction between neighbors showing curiosity, impatience, compassion, joy, excitement, surprise, and anxiety. Idle motion, cheering and clapping, sitting, standing up, standing all mixed with different emotions called for by the content and continuity of the shots in this sequence.”

Over two hundred different people were produced to form the full crowd. The people were hand selected from a library to show an average crowd of Caucasian, Afro-American, Asian and Hispanic population. The actions were recorded by TD Martin Wellstein with a custom tailored Kinect motion studio for sitting, clapping, idle motion, standing up, taking pictures with future mobiles and many more. All actions were performed by LUXX team members who acted according to special VFX unit requests.

Distribution of single people on bleachers, VIP sections and main grounds was executed using the Forest Pro Software tool. Special attention was paid to motion and time offsets in order to avoid patterns and obvious repetition, and to form clusters that create the most believable motion as a crowd. Later, Emmerich asked for more people directly behind the president in the inner Court bleachers of the Capitol. For this setup the CG people were too close to camera and needed facial movements, which was not doable within the fixed time frame. Therefore, Marc Weigert set up a plan with LUXX VFX Supervisor Chris Haas for an additional VFX footage shoot. This was used for most close-up crowds, to be supplemented by CG elements in the mid-ground and background.
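The idea of using motion and time offsets to avoid visible repetition can be illustrated with a small Python sketch. This is not LUXX's actual tooling: the clip names are taken loosely from the actions listed above, while the function names, the clip length and the rule that adjacent seats never share an identical clip and offset are assumptions made for the example.

```python
import random

# Action names loosely based on the motions described in the article.
CLIPS = ("sitting", "clapping", "idle", "standing_up", "taking_pictures")


def assign_crowd_motion(num_agents, clips=CLIPS, clip_length=240, seed=0):
    """Give each crowd agent a motion clip and a random start offset.

    Assumption for this sketch: agents are listed in seating order, and two
    neighbours must never play the same clip with the same offset, since
    that is what reads as an obvious pattern.
    """
    if len(clips) * clip_length < 2:
        raise ValueError("need at least two distinct clip/offset combinations")
    rng = random.Random(seed)
    assignments = []
    for _ in range(num_agents):
        choice = (rng.choice(clips), rng.randrange(clip_length))
        # Re-draw if this agent would move in lockstep with the previous seat.
        while assignments and choice == assignments[-1]:
            choice = (rng.choice(clips), rng.randrange(clip_length))
        assignments.append(choice)
    return assignments


def frame_for(agent, global_frame, clip_length=240):
    """Return the clip and local frame an agent plays at `global_frame`."""
    clip, offset = agent
    return clip, (global_frame + offset) % clip_length


if __name__ == "__main__":
    crowd = assign_crowd_motion(200)
    for agent in crowd[:5]:
        print(agent, "->", frame_for(agent, global_frame=100))
```

Because each agent loops its clip from a different starting point, even agents that share a clip are unlikely to hit the same pose on the same frame.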

Compositing

There were about 68 shots that LUXX set up for layouts; some 13 were cut and replaced by 26 new shots during editing. Live action footage was shot anamorphic with mostly zoom lenses that showed a lot of lens aberrations and distortions towards frame edges. This was very challenging for the LUXX team in terms of tracking, keying and matching the look of live action plates with CG crowds and sets.

Special effort was needed for integrating light reflections, retouching optical refractions on keyed foreground elements, as well as painting props out of reflections and adding shadows of CG elements on plates. Extra plates were prepared for inserts on Jumbotrons. These were treated with a light pattern and special look-dev for the screen surface.

The opening CG shots, including epic camera moves of wide cityscapes and the big crowds of audience, raised the bar on complexity for LUXX. “Our great head of Comp Artists led by Tobias Gerdts put a lot of effort into light treatment, extra details and depth perception of each subsequence,” commented Haas. “Especially on the immense challenge of massaging the crowds and creating fine details on the metal surfaces of the airplanes and highly reflective facades of modern Washington.”

BUF, Crazy Horse and others

BUF in France created the opening titles, which is a single one-minute shot flying through different galaxies and past a planet that had been destroyed by the aliens. The sequence ends up on the master alien mothership.

Digital Domain executed the whole dogfight around the mothership. Our heroes fly towards the area where the Queen ship is actually attached to the mothership, being shot at before the alien fighters eventually appear. It’s “a little bit an homage to the first movie” explains Engel.

Crazy Horse created the cockpit displays. The team had just over 30 shots. “Almost each time you’re inside, you also have visual effects because you have these holographic targeting controls. Basically, you have an alien heads-up display, so that’s what Crazy Horse did for us” explained Engel.

Trixter not only did the early concept images at the beginning of the production but also did everything when the big AI ship appears out of the wormhole towards the beginning of the movie, when all the moon dust is being sucked up and creates the wormhole the ship appears from. Later in the film, the company also did the research hangar, where the sphere starts telling its own story of how its makers were themselves attacked by the aliens, all told in holographic images.

Summary of shots

Uncharted Territory – 268 shots

Scanline (Bryan Grill) 248 shots

Weta (Matt Aitken) 230 shots

MPC, Vancouver (Sue Rowe) 212 shots

Image Engine (Martyn Culpitt) 165 shots

Cinesite, Montreal & London (Holger Voss) 141 shots

In House (Opticals) 140 shots

Trixter, Munich (Dominik Zimmerle) 122 shots

Digital Domain, Vancouver (Dave Hodgins) 95 shots

LUXX (Christian Haas, Andrea Block) 66 shots

Crazy Horse Effects (Paul Graff) 33 shots

28 more shots from smaller vendors such as BUF in France.