Virtual Production is a hot topic. While it could be argued that it is simply an extension of how we have long worked, building on pre-viz and adding new toys such as VR, there is another argument to be made: that it will change the way films are made, and the very nature of what a ‘film’ is. This is not a VR discussion, but a much bigger issue that looks to make narrative production and storytelling real time. It’s true it encompasses VR, but it is much more than 360 video or new camera tech. The real time revolution offers the chance to displace major entrenched players. Suddenly Unity and UE4 are entering rendering discussions previously dominated by Arnold, RenderMan and V-Ray. And in turn V-Ray is connecting with Epic Games to explore content being built not in Maya but in UE4 itself. Motion capture suits are falling in price, and digital cinematography has reached a level where it is hard to perceive the difference between something shot on 8K vs 6K vs 4K, raising the question: where to next?

Autodesk is one of several companies facing the prospect of either being disrupted or being a disruptor.

Hilmar Koch is spearheading Autodesk’s efforts to explore virtual production more aggressively.

Koch has not been hired to make a product or work in a specific team. He has been hired to put together a crack team that will help Autodesk navigate the evolving landscape of real time virtual production. It is a blue sky job with a very open brief. And Koch has just the sort of track record that Autodesk needs.

Hilmar Koch joined Industrial Light & Magic in 1998 as a Senior Technical Director. He was one of the co-recipients of the SciTech Academy award (certificate) for developing Ambient Occlusion, which was first used in Pearl Harbor in 2000.

He started his career with a degree in Mathematics from the Technical University in Munich, followed up by studying arts and computer graphics at Columbia College Chicago. Before ILM, he was a Digital Effects Supervisor for Blue Sky Studios in New York and worked on the Academy Award-winning short, Bunny.

Before Koch left ILM, his job title was Director of the Advanced Development Group, and just prior to that he was the Director of Virtual Production, which at ILM meant he helped guide advances in capture technology and digital tools, work that dovetailed with the ILMxLAB. He also collaborated with the Walt Disney Imagineering team, Disney Research, and other studios in the Disney Technical Empire. “I worked in what is called offline computer graphics, so not immediately on productions, but taking the time to develop the imagery… After I felt I had explored offline computer graphics, I got really interested in exploring things with immediacy… which gave me personally a new way of creating things,” he explains. Koch set himself the task of providing immediate feedback for others and working on new ways of both experiencing and creating visual stories. This is when he moved from head of R&D to Director of Virtual Production.

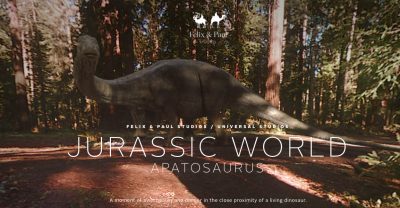

In addition to all the films he worked on, such as Avatar, Star Trek and Transformers, he was also involved with many of the key VR and AR projects that the ILMxLAB explored, such as the Jurassic World VR project, which was produced with Felix & Paul Studios in Montreal. “That was two years ago and I may have heard a rumour that there is the world’s first VR sequel coming!” Koch joked, referring to an as-yet-unannounced VR follow-up to help market Jurassic World: Fallen Kingdom, both of which are expected in 2018. “While the original was not unlike 360 video it was visual effects in Virtual Reality… I oversaw ILM’s production and I got to work with Felix and Paul, both wonderful directors.” What marked the first VR experience was that, far from being a VR chase sequence, viewers got to experience what it would be like to be in a forest with an Apatosaurus. The format was very non-traditional and non-linear, more experiential with less story. Koch was also on the Holo-Cinema team. Holo-Cinema has been in operation in various forms as an experimental lab piece at ILMxLAB and was shown at Sundance in 2016.

His new official title at Autodesk is R&D Director, The Future of Storytelling. “The role is looking at how storytelling will evolve. It is an unusual new role as it was created thinking really far into the future, and not just about the problems we have today,” he explains. “The mission is to find out as much as I can about storytellers, find their ideas, how they develop them, what keeps them inspired… and then eventually find new experiences or tools that can empower them to give them a new way of working.”

So what is virtual production? It spans a range of approaches and technologies but they all share common traits to varying degrees:

- real time

- interactive

- it spans Motion Capture to Virtual Reality and Augmented Reality

For most people Autodesk’s strongest card in this space is MotionBuilder. Originally named Filmbox when it was first created by Kaydara in Canada, it was acquired by Alias, and renamed MotionBuilder. Alias was acquired by Autodesk.

MotionBuilder has

- Real time display and animation tools

- Facial and skeletal animation

- Best-of-class support for motion capture devices

- Ability to derive skeletal animation from trajectories tracked by motion capture systems

- Ragdoll physics

- Inverse kinematics

- Plus an easy path into both Maya and 3ds Max

It is also designed to be used on set or on a MoCap stage. MotionBuilder allows motion capture and live input data to be recorded in the program’s non-linear editor so artists can record multiple takes in rapid sequence; actors can act out their scenes uninterrupted and stage crew can work instantly with Editorial to build and refine shots.


MotionBuilder has helped a lot of people over the years and it has served the community really well. In the real time space it has been used for virtual production, “however the media landscape is changing,” Koch explains, “and we are seeing all these companies working on these fantastic universes of stories, it is not confined to one movie or one episode… so the tools of the past are somewhat confining. Autodesk has given itself the challenge to really think all inclusively about the media spectrum and trying to be closer to the people who ideate, closer to the source of the creative process… you want to be there at the start with support, technology, methodologies and leveraging new technology such as machine learning.”

Autodesk already has deep learning and new AI technology on its radar. In this area, Koch feels that “generative methods which are a result of machine learning are already in Autodesk’s current arsenal of methods. It has already been used very effectively in designing things, from architecture to design… and I would like to take some of the edge off the fear people have about machine learning,” he comments. “I feel I have a personal responsibility to translate that power into something that is supportive, helpful and enjoyable for artists and creatives.” Koch does not subscribe to the view that evil machines will replace us, nor that this is something we need to be worried about, as “the human experience and our point of view still matters at the end of the day more than anything else”. He sees his job as helping to make these tools something that literally “makes the world a better place.”

At a technical staffing level, Autodesk is competing not just with rival media and entertainment companies but with every major Silicon Valley tech giant for the best engineers and systems designers. Apple, Facebook and other tech giants are increasingly looking to enter the creative storytelling space, and they are spending literally billions of dollars. Furthermore, the startup community is far from the image of backyard, garage operations. According to a recent WIRED story, AR-MR startup Magic Leap could be valued at $6–8 billion in its next (Series D) round of funding. “Once again, the Chinese e-commerce giant Alibaba will lead. (Alibaba’s executive chairman Joe Tsai sits on the board along with Google’s Sundar Pichai.) Just 15 months ago, the Florida-based mixed reality company raised $793.5 million, adding to the $592 million it had previously raised and earning a valuation at $4.5 billion.” (WIRED). Magic Leap has yet to sell anything or even release a product. If Magic Leap is valued at $6–8 billion, what is Autodesk worth in comparison? $22 billion (market capitalisation). Significantly larger of course, but if a deep learning programmer gets in early on a startup and sees it explode into a Tech Sector Unicorn (a valuation of $1 billion or more), then they stand to make a lot of money from stock options, whereas few people expect Autodesk’s share price to skyrocket, as a large established company’s share price rarely does.

There is no doubt that while talking to customers is key, providing the underlying technical innovation, especially in the area of Deep Learning, will make an enormous difference in this part of the entertainment technology sector. At SIGGRAPH fxguide itself is involved with a special project that deploys multiple deep learning solutions in one VR experience. We will no doubt not be alone at SIGGRAPH in this respect. Given these new advances and AI approaches, especially in machine learning, computer vision, tracking and image reconstruction, productions will end up deploying multiple AI solutions throughout a pipeline within a very short time.

Koch is aiming to connect in the realm of pre-viz, new media, real-time technology, immersive and linear experiences, to leverage mobile and other technologies in the creative process. “Stories have to live in a far richer context now. Take the Marvel Studio – it is making so many movies and they have to be coherent… and then this offers so many other opportunities to go in and extend further… you don’t start with nothing anymore… you build out from context, which is both a challenge and an opportunity.”

What does this mean for an Artist?

It is hard to position one’s career for success, and even harder to bet on the right technology. Many in the VFX community are exploring these real time tools. At fxguide and fxphd we have been covering these issues and will continue to do so. Learning MotionBuilder is one such option, and Maya 3D and animation skills are always relevant, but increasingly members of the Effects community have been looking at Game Engines.

Autodesk has Stingray, which blends fairly easily with 3ds Max. The Stingray Game Engine started life as Bitsquid, the engine used by the Swedish game studio Fatshark. Autodesk acquired Bitsquid in 2014, and Stingray was first released as an Autodesk product in 2015. The engine has been used for games such as Magicka: Wizard Wars and Warhammer: End Times - Vermintide, as well as for VR and other visualisations. But Stingray is a distant competitor to the two big game engines that Autodesk would need to match or connect with: Unreal and Unity.

ILM and Peter Jackson’s Wingnut AR (ex Weta Digital) were both on stage at Apple’s developer conference, WWDC, showing Unreal (UE4) solutions. John Knoll introduced a VR Star Wars staging and blocking demonstration, and the Wingnut team showed an AR battle on a desktop with the new ARKit from Apple. ILM even rendered 4 shots for the last Star Wars film in UE4. Anecdotally, UE4 artists are in huge demand and working on a range of projects outside traditional games.

Unity, like UE4, is virtually free to use for startups and student programs. This easy access, coupled with outstanding technical innovation, has led to wide-scale adoption. There are other powerful and key Game Engines used directly for AAA development, such as CryEngine and Frostbite, and other user-friendly tools such as Amazon’s Lumberyard and GameMaker, but Unity and UE4 are the clear market leaders and ‘go-to’ tools for many VFX professionals working away from direct game titles.

Kenneth Bonde is one such senior artist who is moving beyond VFX to real time, and he is perhaps typical of the type of person who is radically reframing their tool set. Until recently he was VFX supervisor on a major animated Lego TV series in Europe. In this role he supervised a team doing typical Maya, render and Nuke style pipeline work. After facing another round of scheduling issues caused by having to re-render a whole sequence and wait overnight to continue, he started to wonder if there was a more immediate way of working. When that project concluded, he set about exploring whether real time game technology might be an option. He decided that Unity, UE4 and CryEngine were viable candidates and settled on Unity, “as it was developed originally in Denmark.” Bonde guessed he might have an easier time with a product that came from his part of the world.

This week he became a certified Unity Developer, but he isn’t looking to deploy this technology on game projects. He will be using these tools on projects that, until recently, would never have been considered candidates for a Game Engine. “I am not looking to work in Games or be hired on a AAA title,” he explains, “but this technology has come so far.” Bonde is a highly skilled VFX supervisor but, like an increasingly large number of artists, he is shifting his focus in a way that has huge implications for the industry. He has no intention of turning his back on traditional VFX tools, but is looking to work in a radically new pipeline and in a way that companies such as Autodesk are keen to understand and address.

For Bonde, the move to fast virtual production is not an “either-or” proposition. Rather, he expects AI, deep learning and many other technologies to continue disrupting our industry. “That stuff is just moving so fast,” he points out, “but I am really keen to learn and deploy these new technologies.” People often argue that new real time AI machines will replace artists, but it is much more likely that artists will only be replaced by other artists who know how to use these new tools.