In the second of our reports from GTC, we cover HFR with Douglas Trumbull, ZOIC’s ZEUS pipeline, Rhythm & Hues’ Crom VFX platform, as well as a few more details about Octane’s cloud solution and the GRID VCA.

Our fxphdod this week includes a report from GTC, covering more details about the GRID VCA and Otoy cloud renderer from our first report from GTC. In addition, we have an interview with Jascha Wetzel from Jawset about their GPU-based renderer TurbulenceFD for Cinema 4D and Lightwave. He covers its features as well as some of the performance improvements that can be made in the future due to the announcements that were made here at GTC this week. Listen to the podcast over at fxphd.

All in all, GTC is an impressive conference. If you’re a tech-inclined artist, there are lots of interesting presentations applicable to work in our field — as well as cool ones outside our arena.

The Impact of High Frame Rate Stereo Production on Cinema

There was a series of presentations one afternoon at GTC covering High Frame Rate (HFR) and stereoscopic considerations. Doug Trumbull kicked it off with an overview of the work he’s been doing to bring his vision of HFR to reality. It’s best to watch his entire presentation at Ustream, but some of the key points are summarized here.

His current concentration is Showscan Digital, where he’s developing a 120fps system. He is shooting and finishing a short film at his facility as a proof of concept for both the creative and the tech.

One of the biggest issues in creating this environment is working towards a high brightness screen. His initial Showscan used 65mm negative filmed at 60 frames per second with 70mm prints projected at 60 frames per second. Even in the late ’70s this was being projected at 30 foot-lamberts of brightness onto giant screens, which is much brighter than the standard theater setup today.

A big issue with maintaining brightness is falloff of light from the screen. His new system utilizes reflective fabric stretched over a convex hemispheric frame, pulled tight via fans, to achieve the shape. This redirects light back at the audience as opposed to off to the sides, ground, and ceiling.

Tech has certainly changed since his initial foray into Showscan, with cameras now readily available to shoot at high frame rates. His short is being filmed using Canon C500s shooting 4K at 120fps, using a 3ality TS-5 rig for stereoscopic.

Timothy Huber & Steve Roberts

After Trumbull’s talk, Timothy Huber (Managing Partner of Theory Consulting LLC) and Steve Roberts (founder of Eyeon) spoke in another session about some of the production concerns regarding Trumbull’s current project.

Huber has been working on the infrastructure for Trumbull’s HFR Near-Time Projected review theater on-set. The goal of the setup is to work through the technology needed as well as to service the actual production of the short film. In the end, 120fps 4K stereo is the goal, but for this project they are reviewing work at 60fps (per eye) 2K stereo due to various current realities in getting the data to the projectors. They can review shots in the specially constructed “Near-Time Projected Review Theater” within minutes.

They are filming with a Canon C500 at 4K and 120fps, using the 3ality TS-5 rig. According to Huber’s presentation, the 4K RAW file is 17MB per frame, which works out to be 4,020 MB/s for the stereo pair. The right eye camera output is also synced to their virtual reality software, which allows reference to previs for monitoring. The full RAW data is saved and archived, and an uncompressed 2K image de-bayered from the original is provided for monitoring.
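
The stereo data rate can be checked with some quick arithmetic. The sketch below uses only the figures quoted above (17MB per frame, 120fps, two eyes) and assumes decimal megabytes; the function name is purely illustrative. Using the rounded 17MB figure gives 4,080 MB/s, slightly above the quoted 4,020 MB/s, which suggests the per-frame size in the presentation was rounded up from roughly 16.75MB.

```python
def stereo_data_rate_mb_s(frame_mb: float, fps: int, eyes: int = 2) -> float:
    """Sustained data rate in MB/s for `eyes` cameras recording at `fps`."""
    return frame_mb * fps * eyes


# Figures quoted in the presentation: 17MB per 4K RAW frame, 120fps, two eyes.
rate_rounded = stereo_data_rate_mb_s(17, 120)

# Per-frame size implied by the quoted 4,020 MB/s total (an inference, not a quoted figure).
implied_frame_mb = 4020 / (120 * 2)

print(rate_rounded)      # 4080
print(implied_frame_mb)  # 16.75
```

Either way, the stream is on the order of 4 GB/s sustained, which is the figure that matters for the storage choices described next.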

Due to the tremendous amount of data throughput, performance concerns make using hard disk drives impractical. One major reason is that the translation from the hard drive to the SAS protocol to PCIe 3.0 is simply not efficient enough for their needs. So they have ended up using Virident FlashMAX II PCIe Flash cards. These skip the SAS bottleneck (since they are PCIe cards) and they can feed directly to the GPU and the projector. The edit, comp, and projector workstations are connected via 56Gb InfiniBand, which provides the necessary bandwidth.

With Eyeon Fusion celebrating its 25th year, founder Steve Roberts has been spending a good deal of time in production working on Douglas Trumbull’s project. Roberts has enjoyed the process of getting back in the trenches, as he feels it is the best way to help build a tool that can be used in production. His time on the project has been invaluable.

More specifically, Eyeon’s Generation is effectively managing the entire pipeline, keeping track of versions, work in progress, and farming shots out for compositing in Fusion. They’re also using Eyeon Connection to integrate with the Avid system, providing a simple way of getting footage to and from edit and comp.

The ZEUS Pipeline at Zoic

It was great to see another presentation by Mike Romey, who is Head of Pipeline at ZOIC. We’ve always been impressed with their pipeline, which sets a high bar in the production of visual effects for episodic television. He gave an outstanding overview of ZEUS, short for Zoic Environmental Unification System, which is a full service production pipeline from prep to delivery.

He started by giving some interesting numbers regarding production at ZOIC:

- 2-3 weeks to deliver a typical episode

- 150-450 shots per episode

- 800-1000 versions published per episode

- 2,500-3,500 notes per episode

- 15,000-30,000 frames rendered per episode

A typical virtual production day at ZOIC is about 16 hours — and talent is on set for a great part of that time. They can cover approximately 100 camera takes per day, since the virtual set makes it much faster and they can burn through the script.

For season one of the ABC network series Once Upon a Time, they had 33 days of green screen. Season two is in production and they project having 78 green screen days. Due to the nature of TV production, they have lots of different directors and actors and it takes some time to get them used to shooting virtually. So much of their pipeline at the start is meant to help make things easier for them.

One interesting aspect about the pipeline is the prep of the environments. The artists at ZOIC begin the process by taking the production concept art and building and texturing 3D assets. Because of the realities of the tight production schedule, artists utilize VRay RT for interactively finalizing the look of lighting. They then bake the lighting into the textures, automatically building everything from hi-res to low-res versions for use on various devices and even on-set during production. This process is effectively the same as is used in gaming, helping speed interactivity and responsiveness.

At the same time artists are building these assets, they are also baking very hi-res texture panoramas. These panoramas are much like an HDR one would photograph. A strong addition is that the result also has an embedded position pass that can be used later for compositing in NUKE.

Another asset that is created is a Unity gaming asset which is used in ZOIC’s iPad app. This app allows a director to browse various sets which have been created for the series and then load them as the current project. They can then survey the scene with a built-in camera. The user can control the lens type, pan and zoom, track, dolly, and animate the camera and more. They can measure distances on the set, make annotations, and add items to the set such as chairs, tables, and people. Once the director is happy, the app takes a screen grab of all the current project items and sends them via email.

In the second quarter, ZOIC aims to release a version of this app for the iPad that they plan on calling “Tech Scout”. The app will come preloaded with various assets so that users can experiment. Romey had a beta version of the app at GTC and it’s quite compelling. Moving forward, the team at ZOIC plans to gauge industry reactions to the app and decide whether to expand it with more features such as allowing custom scenes for users. At the very least, it’s incredibly interesting to get hands-on with a piece of tech which is used in the virtual production environment of ZOIC.

Once in post, the artists go from using the baked assets to the pre-baked assets. Much of the interactive work on the CGI is done with VRay RT. Romey feels that GPU renders seem to be workable in the HD resolution they’re working at for episodic television.

But several issues are problematic. One major issue is pulling out the various passes from a GPU render: they aren’t currently available, since the GPU render produces only the beauty render. Also, most GPU rendering is about rigid body resources and not fluid sims such as Phoenix or fur renderings from Shave and a Haircut. That being said, ZOIC just started building a GPU render farm with 20 blade machines using NVIDIA Titan cards. This install will be used for the commercials department, where After Effects and Cinema 4D are used.

Crom: CPU/GPU Hybrid Computation Platform for Visual Effects (Rhythm & Hues)

Nathan Cournia gave a presentation covering Rhythm & Hues’ VFX platform called Crom. Crom was developed in house at R&H and includes look dev, scene lighting, compositing, and miscellaneous tools such as information management.

The software platform was developed to update their original in-house software, which was created 25 years ago. They needed to support improvements and technological changes such as multi-CPU, multi-GPU, the cloud, and their varied international locations. It needed to seamlessly interact with Shotgun, Mantra, and their other in-house R&H tools. They wanted to unify the user experience to create a modern pipeline. They wanted to make it extendable with Python as well as provide easy to use tools for the artists to create their own custom nodes.

Cournia focused on the VFX compositor for the talk. It is a hybrid GPU/CPU compositor which means it can run most nodes transparently on either the CPU or GPU. So when an artist needs to work on a shot interactively, they’ll work using the GPU. But when working on the render farm, it is converted to OpenCL to process in the background on the CPU.

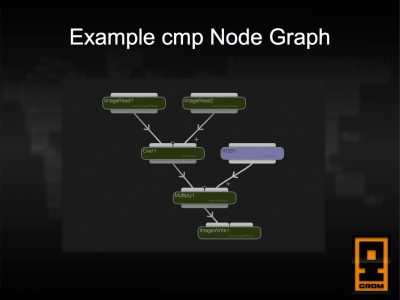

An artist builds a process tree in very much the way they would do it in NUKE, Shake, or Flame’s Batch. The software actually has very few “built-in” nodes, and they basically define very low level operations such as Add, Translate, Crop, Text, etc. The power and strength of the software is actually through the use of user-defined macro nodes, which will be discussed later.

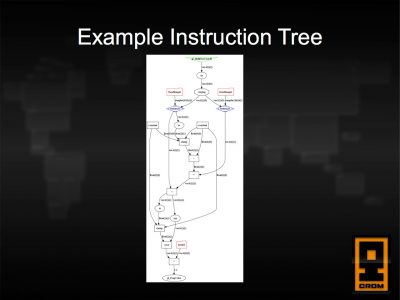

Under the hood, the VFX compositor graph is a very low-level instruction tree. Basically, it looks like a process tree an artist might view, but the operations are incredibly granular and are actually the specific tasks that the software executes to create the comp. This would be things such as reading an image off the file system, converting that image data into a 2D texture, adding two textures together, clamping colors, etc. Instruction tree nodes can also be created from Crom’s expression language, creating very fast per-pixel expressions.
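
To make the idea of a granular instruction tree concrete, here is a minimal sketch in Python. It is not Crom’s actual implementation: the `Instruction` class, the helper functions, and the use of flat lists as stand-ins for textures are all assumptions made for illustration. It only shows how operations like read, add, and clamp can be chained as a tree and evaluated from the leaves up.

```python
from dataclasses import dataclass, field
from typing import Callable, List

Image = List[float]  # stand-in for a 2D texture: a flat list of pixel values


@dataclass
class Instruction:
    """One granular operation plus the instructions that feed it."""
    name: str
    op: Callable[..., Image]
    inputs: List["Instruction"] = field(default_factory=list)

    def evaluate(self) -> Image:
        # Evaluate upstream instructions first, then apply this operation.
        return self.op(*(node.evaluate() for node in self.inputs))


def read(pixels: Image) -> Instruction:
    # Stands in for "read an image off disk and convert it to a texture".
    return Instruction("read", lambda: list(pixels))


def add(a: Instruction, b: Instruction) -> Instruction:
    return Instruction("add", lambda x, y: [p + q for p, q in zip(x, y)], [a, b])


def clamp(src: Instruction, lo: float = 0.0, hi: float = 1.0) -> Instruction:
    return Instruction("clamp", lambda x: [min(max(p, lo), hi) for p in x], [src])


tree = clamp(add(read([0.25, 0.5, 0.75]), read([0.5, 0.5, 0.5])))
result = tree.evaluate()
print(result)  # [0.75, 1.0, 1.0]
```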

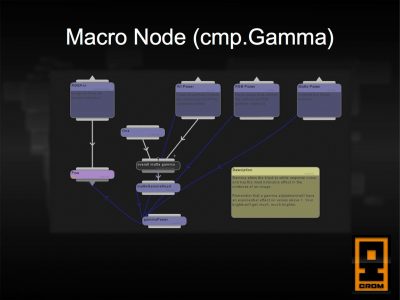

From an artist’s perspective, the real power is from the macro nodes. A large number of useful nodes has been built up by R&H artists, which form the basis of most operations in the software. These macro nodes can contain other macro nodes. A typical comp might have well over 250,000 underlying nodes, but these are effectively hidden from the end user through the use of macro nodes, which make the presentation to the user much simpler.
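
The way nested macros hide a large number of underlying nodes can be sketched as follows. This is an illustration under stated assumptions rather than Crom’s data model: the `Node` class and the macro names (`Grade`, `Glow`, `Comp`) are hypothetical, and the counts are tiny compared with a production comp.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Node:
    """A primitive node, or a macro when `children` is non-empty."""
    name: str
    children: List["Node"] = field(default_factory=list)

    def primitive_count(self) -> int:
        # A node with no children is a primitive; a macro counts what it wraps.
        if not self.children:
            return 1
        return sum(child.primitive_count() for child in self.children)


# Hypothetical macros; the node names are illustrative only.
grade = Node("Grade", [Node("Multiply"), Node("Add"), Node("Clamp")])
glow = Node("Glow", [Node("Blur"), grade, Node("Add")])
comp = Node("Comp", [glow, glow, Node("Crop")])

print(len(comp.children))      # 3 nodes visible at the top level
print(comp.primitive_count())  # 11 primitives underneath
```

The artist sees three nodes at the top level while the software executes eleven primitives; the same nesting, repeated at scale, is how a comp can reach hundreds of thousands of underlying nodes.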

The software includes a graphical interface to operations, so an artist doesn’t need to know C++ or Python to program very powerful nodes. They can also create custom interfaces for the nodes, which look just like the interface of the overall program.

The real key with the macro nodes, as well as the visual editor to create them, is that they can be just as fast as the built-in nodes. There isn’t a different language that is being used that needs to be translated…and those translations take time. The nitty gritty of building the macros through the granular instructions is hidden to the user, yet they get the efficiency of something programmed directly.

Since the presentation was at GTC, Cournia mentioned that one of the problems with GPU processing was that it is very easy to saturate the GPU. The software cannot send cancel commands to the GPU to stop processing and this can cause the perception of hanging. Consider the situation of an artist scrubbing back and forth, sending data for the various frames to be processed. Without a cancel, this can quickly back things up from a processing perspective.

So they ended up creating a dispatch queue for the GPU, queuing commands in the order they need to be executed. By delaying the dispatch very slightly (imperceptibly to the user), they can cancel operations that are unneeded before they reach the GPU. It’s just one example of the hurdles that need to be overcome when dealing with a hybrid GPU/CPU environment.
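
A delayed dispatch queue of this kind might look something like the sketch below. The talk did not describe the actual design, so everything here is an assumption: the class name, the 20ms delay, and in particular the cancellation policy, which simply treats any newer frame request as superseding the ones still waiting. Timestamps are passed in explicitly so the example is deterministic.

```python
from collections import deque
from dataclasses import dataclass
from typing import Deque, List


@dataclass
class Request:
    frame: int
    submitted_at: float
    cancelled: bool = False


class DelayedDispatchQueue:
    """Holds GPU work briefly so stale requests can be dropped before dispatch."""

    def __init__(self, delay: float) -> None:
        self.delay = delay
        self.pending: Deque[Request] = deque()

    def submit(self, frame: int, now: float) -> None:
        # Assumed policy: a newer request makes every waiting request obsolete.
        for request in self.pending:
            request.cancelled = True
        self.pending.append(Request(frame, now))

    def dispatch_ready(self, now: float) -> List[int]:
        """Return frames whose delay has elapsed and that were never cancelled."""
        sent: List[int] = []
        while self.pending and now - self.pending[0].submitted_at >= self.delay:
            request = self.pending.popleft()
            if not request.cancelled:
                sent.append(request.frame)  # a real system would hand this to the GPU
        return sent


queue = DelayedDispatchQueue(delay=0.02)
# An artist scrubs across frames 10-13 faster than the delay window.
for i, frame in enumerate([10, 11, 12, 13]):
    queue.submit(frame, now=i * 0.005)
dispatched = queue.dispatch_ready(now=0.05)
print(dispatched)  # [13]
```

Only the last frame the artist landed on reaches the GPU; the three intermediate requests are discarded while still on the CPU side, where cancelling is cheap.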

More information from the keynote: Octane cloud + GRID VCA

Otoy & Octane Cloud

After our first report from GTC, we were able to glean a bit more information about the Octane cloud rendering setup as well as the GRID VCA and its customization capabilities.

The Otoy demo that was part of the keynote was a bit confusing because it was during the section on the newly announced GRID VCA. What was actually shown was Otoy’s GRID system, which uses the NVIDIA GRID tech. Jules Urbach, OTOY chief executive officer, was running a virtual session connected to their servers in the Los Angeles area, but since it had 122 graphics cards accessible for rendering, it was something that could not be done using the VCA.

Another question we had was how the cloud offering would work. Well, pretty much the way it was demoed on stage. If an artist wanted to use the service, let’s say for 3ds Max, they would upload their 3ds Max scene file to servers accessible to Otoy (specific server details to come later). The user would then launch a virtual session, running the app on the Otoy server. This way, they can utilize the hundreds of GPUs that will be available in the racks. They could then either render the scene or interactively work on the scene via the virtual session and then render. If they made any changes in the scene file, they would need to download the file to their local machine to use at a later date.

GRID VCA

We had several questions regarding customization of the GRID VCA. In the initial release, the appliance will be configured to assign one GPU per remote session. This means it won’t be possible to assign multiple GPUs to a single user. CPU use will also be pre-configured. NVIDIA is certainly interested in providing more flexibility in the future, but for the 1.0 release they wanted the install and use of the appliance to be truly plug and play, as well as bulletproof.