To bring general world-ending mayhem to his latest disaster film, 2012, director Roland Emmerich relied on a contingent of effects vendors under the supervision of Volker Engel and Marc Weigert. In this article, we focus on the work of two of those vendors: Digital Domain and Sony Pictures Imageworks. In this week’s fxpodcast, we discuss Double Negative’s work on the film.

Podcast

In this week’s fxpodcast we speak to Alex Wuttke (Quantum of Solace, 10,000 BC, Batman Begins), visual effects supervisor at Double Negative (DNeg), about the two main sequences of 2012 completed at DNeg in London.

Escape from LA (by plane)

One of the major disaster sequences in 2012 features struggling author Jackson Curtis (John Cusack) and his entourage flying through a destructive Los Angeles earthquake. For the sequence, Digital Domain created crumbling buildings, highways and ravines and, ultimately, huge land masses disappearing into the ocean. The general chaos and art directed destruction brought upon LA necessitated some new software developments. “We knew from the start,” recalled Digital Domain visual effects supervisor Mohen Leo, “that the biggest challenge would be the effects simulations and that there would be enormous rigid body simulations to do. We looked at the current state of off-the-shelf solutions for that, but none of them were really up to it. Internally, some people were interested in basing a new system on an open source project called Bullet. Bullet is very simple, but it’s fast, stable and, because it’s open source, you can build on top of it.”

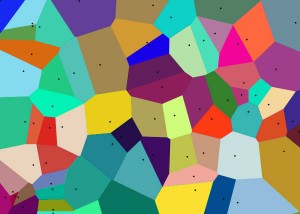

Effects supervisor David Stephens managed the development of a new shattering pipeline and rigid body dynamics tool based on Bullet, which became known as Drop. “Our shattering pipeline essentially used a Voronoi diagram* shatter algorithm. So you take the volume and subdivide the surface and turn your volume into polygonal cells. At that point you have your individual bodies, but going into the simulation you want to define relationships between those bodies, in terms of how one piece joins to the next, the strength of it and the axis of freedom those pieces will have to move against each other. Most of these things were built as plug-ins to Houdini. For Drop, we wrote a series of Houdini plug-ins and built OTL networks which would start from the shattering and move to rigging up the joint constraints between the pieces, defining their strengths and various things. Eventually all that data would be marshalled together and sent to the Drop plug-in to do the actual simulation.”
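For readers who want to see the idea in miniature, here is a rough Python sketch of the two steps Stephens describes: cutting a volume into Voronoi cells, then recording a breakable joint wherever two cells touch. It is not Drop or the Houdini tools. It approximates the cells on a coarse voxel grid rather than building polygonal cells, and the function names, the seed positions and the rule that joint strength scales with shared contact area are all assumptions made for illustration.

```python
from itertools import product

def voronoi_shatter(size, seeds):
    """Label every voxel of a size^3 grid with the index of its nearest seed.

    Each label is one Voronoi cell, i.e. one future rigid body.
    """
    labels = {}
    for voxel in product(range(size), repeat=3):
        labels[voxel] = min(
            range(len(seeds)),
            key=lambda i: sum((v - s) ** 2 for v, s in zip(voxel, seeds[i])),
        )
    return labels

def build_joints(labels, strength_per_face=1.0):
    """Create one joint per pair of cells that share at least one voxel face."""
    joints = {}
    for (x, y, z), cell in labels.items():
        # Looking only in the +x, +y, +z directions visits each face once.
        for neighbour in ((x + 1, y, z), (x, y + 1, z), (x, y, z + 1)):
            other = labels.get(neighbour)
            if other is not None and other != cell:
                pair = (min(cell, other), max(cell, other))
                # Assumed rule: more shared faces means a stronger joint.
                joints[pair] = joints.get(pair, 0.0) + strength_per_face
    return joints

# Hypothetical seed points inside an 8 x 8 x 8 block.
seeds = [(1, 1, 1), (6, 6, 6), (1, 6, 1), (6, 1, 6)]
labels = voronoi_shatter(8, seeds)
joints = build_joints(labels)

for (a, b), strength in sorted(joints.items()):
    print(f"cell {a} <-> cell {b}: strength {strength}")
```

In a production setup the joint table would also carry the allowed axes of motion, and a solver would break a joint once the force across it exceeded its strength.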

Drop allowed artists to simulate everything from rock walls to collapsing buildings to tumbling cars and even small pieces of gravel. “The really good thing about Drop,” noted Leo, “was that, despite all the simulation, it was really, really fast. Some of the collapsing office buildings are made up of thousands of pieces, but for say a 100 frame shot, you could run a simulation in a matter of an hour or two. This meant that artists could perform a number of iterations and variations and work more creatively, as opposed to waiting overnight to see if it looked OK.”

To begin work on the fly-through sequence, Leo referenced production artwork and previs from German effects outfit Pixomondo. “One of the important things for Roland for the sequence was that it had a recognizable LA feel to it. So he wanted to make sure we got all the elements into the shot that make you immediately think of LA, from the palm trees to the telephone poles, with a mix of art deco buildings right next to modern office blocks.” Artists from Digital Domain shot reference of Los Angeles streets and other assets, sending them to the director for approvals. “Even though, in the end, Los Angeles was re-arranged into something that worked for the sequence, all the buildings are based on what is there in the downtown area. We even went up in an airplane to get some aerial reference of LA to lay out the really wide shots at the end of LA sliding into the ocean.”

A largest-to-smallest methodology was adopted to work through the layers of destruction seen as the plane takes off and flies through the crumbling city. “What we would do,” said Leo, “was start off with the biggest sections of rock shattering and show the simulation to Roland and Volker and Marc and get a buy-off on the overall timing. Then we’d go to the next level with buildings and boulders and cars added, and so on, until we were adding the smallest levels of detail like dust and sparks and smoke. So often there would be four or five levels of rigid body simulations building on top of each other.”

Falling skyscrapers were hand-animated for their initial rocking motion, before the simulation and rigid body system took over. “The system would be set up so that the rigid bodies would be parented onto the initial hand animation during the first phase,” said Stephens. “Then as it got closer to the actual impact the simulation would go live, taking up that velocity and orientation – so that it was continuing the right motion – but now with an actual live body. Then you would relax the constraints that held the building all together so it would break apart properly. In addition to the technology there was a lot of sleight of hand – stacking the deck in your favour if you will – with the simulations, making sure it would hit certain marks and behave in certain ways.”
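The hand-off Stephens describes, from keyframed motion to a live simulation that inherits its velocity, can be sketched in a few lines of Python. This is only an illustration under simplifying assumptions: each body is treated as a point, so orientation and angular velocity are ignored, velocity is estimated from the last two animated frames, and the only force is gravity. The function names and the numbers in the example are hypothetical.

```python
GRAVITY = (0.0, -9.81, 0.0)

def handoff_state(keyframes, frame, fps=24.0):
    """Return position and velocity at `frame`, estimated from the hand animation."""
    prev, curr = keyframes[frame - 1], keyframes[frame]
    # Finite difference between consecutive frames gives units per second.
    velocity = tuple((c - p) * fps for c, p in zip(curr, prev))
    return curr, velocity

def simulate(position, velocity, steps, fps=24.0):
    """Advance a free body under gravity, one step per frame."""
    dt = 1.0 / fps
    path = []
    for _ in range(steps):
        velocity = tuple(v + g * dt for v, g in zip(velocity, GRAVITY))
        position = tuple(p + v * dt for p, v in zip(position, velocity))
        path.append(position)
    return path

def play(keyframes, handoff_frame, total_frames, fps=24.0):
    """Follow the hand animation up to the hand-off frame, then go live."""
    position, velocity = handoff_state(keyframes, handoff_frame, fps)
    live = simulate(position, velocity, total_frames - handoff_frame - 1, fps)
    return keyframes[: handoff_frame + 1] + live

# Hypothetical animation: a chunk drifting sideways at 0.5 units per frame.
keyframes = [(0.5 * f, 100.0, 0.0) for f in range(10)]
path = play(keyframes, handoff_frame=9, total_frames=20)
```

Because the simulated body starts with the animated velocity, the sideways drift carries on unbroken across the hand-off while gravity begins to pull the piece down.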

Artists built skyscrapers and office towers modularly by modelling office floors and stacking them on top of one another. Noted Leo: “It was also important for the large office buildings to not just contain the outer shell, but to have structural walls and floors and internal things modelled as part of the building so that when they simulated the effects it would look like how a real building would collapse.”

For the falling rock walls in the ravine, Digital Domain did not model the entire area. “We took a rock wall we built to about 100 yards long,” explained Stephens, “and then we would go through and carve off large chunks of that for our shattering destruction process. This gave us a library where you had one nice area of earth that would crumble and fall apart in a pleasing way over about a few hundred frames. We then made about eight of these libraries and were able to populate the ravine by staggering and off-setting the animation of them throughout the shots. That meant we could push an enormous amount of data through but still have something we could choreograph fairly well.”
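As a rough illustration of that staggering, the Python sketch below assigns each stretch of ravine wall one of a handful of cached clips and a delayed start frame. The eight libraries come from Stephens’ description. The slot count, the 300-frame clip length, the 15-frame stagger and the function names are assumptions for the example, not production values.

```python
def place_clips(num_libraries, slots, stagger):
    """Assign each wall slot a cached library clip and a start-frame offset."""
    return [
        {"slot": slot, "library": slot % num_libraries, "start": slot * stagger}
        for slot in range(slots)
    ]

def local_frame(placement, shot_frame, clip_length):
    """Map a shot frame to a frame of the cached clip.

    Returns None before the clip starts and holds the last frame once it ends.
    """
    t = shot_frame - placement["start"]
    if t < 0:
        return None
    return min(t, clip_length - 1)

# Eight libraries reused across 20 hypothetical wall slots, 15 frames apart.
placements = place_clips(num_libraries=8, slots=20, stagger=15)

for p in placements[:3]:
    print(p["slot"], p["library"], local_frame(p, shot_frame=40, clip_length=300))
```

The heavy simulation runs only once per library. Each shot then just looks up which cached frame every slot should display, which keeps the choreography easy to adjust.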

Wide shots of pieces of land mass entering the ocean were hand animated. “We had proxy buildings on the land,” said Stephens, “then we’d animate the entire block, and effects would start cracking off and breaking up the edges, crumbling individual buildings and doing water interactions.”

Many of the shots required the addition of dust and smoke, as well as pyro effects for things such as gas main explosions. Compositors relied upon either Digital Domain’s library of practical effects or rendered volumetric smoke and dust effects. “The dust was a long creative process with Roland,” recalled Leo. “The whole look initially started out a little more rocky, with chunks and light dust, and then evolved into having a more earthy, soil feel to it. That became a technical challenge for us because there was an enormous amount of volume rendering there. All of that was done with Storm, our proprietary volume rendering system. Some of those simulations would take a long time – you have long rock walls on both sides of the canyon and all of them had huge amounts of earth just continuously billowing out. It had to interact and be motivated by the breaking of the rock wall.”

Using Nuke, compositors worked with multiple render passes and additional control passes to control the final look of the shots. “Nuke was a really big part of the success of this sequence because it let us continue to have access to the camera and the lightweight geometry and to place in additional atmospheric elements and both CG and practical explosions.”

Digital Domain’s close proximity to the area of destruction – the plane takes off from Santa Monica airport – meant that not only was reference material easily accessible, but that artists also had an excellent local knowledge of how the sequence should look. “I wanted to get a picture of the whole effects team at a very nice restaurant I know along the airport,” joked Stephens. “Unfortunately, they didn’t end up using the building in the sequence, so I was thwarted, but I was really hoping to get it in there.”

“It was definitely a fun sequence to work on,” concluded Leo. “It’s also the kind of project that is so unashamedly over the top and self-indulgent with the destruction that there was never a moment where somebody would go: ‘There are too many collapsing buildings in this shot.’ That made it more creative as well, because the effects artists could come up with their own ideas for where to add in elements.”

(Editor’s note: Voronoi diagram*: a Voronoi diagram is also used in computer graphics to procedurally generate some kinds of organic-looking textures. It can be 2D or 3D, and it has been applied to a huge variety of maths problems, from disease control to agricultural science. In 2D it can be thought of as dividing a space into regions according to distance from a specified discrete set of points. Note that in this example the dividing line, or crack line, lies equally distant between the two nearest dots.)

How to build an ark

Interior

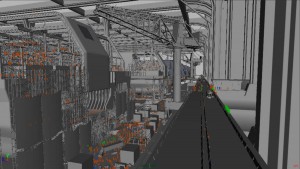

A glimmer of hope for the future of humanity rests in massive industrial cruise ships, or arks, being built in the Himalayas. Shots of the enormous arks under construction in an enormous hangar were delivered by Sony Pictures Imageworks. “It’s a huge space and probably the biggest thing I’ve ever been involved with in CG,” recalled Imageworks visual effects supervisor Peter Nofz. Working from production artwork and previs by Pixomondo, Nofz’s team showed the 800 metre-long arks in various stages of completion as hundreds of workers scramble to finish them on time.

Live action was confined to a soundstage shoot in Vancouver, where production shot actors on a passenger terminal set. “There are several minutes in the movie where people are arriving at the site on a helipad and then taking buses into the inside of the mountain. We had to add the arks, highways going into the arks, construction vehicles, gantries and just all manner of other equipment in this enormous area.”

Nofz utilized a significant amount of added atmosphere to help sell the size of the hangar space. “Marc Weigert was very keen on figuring out how much atmosphere was necessary to communicate how big things were. We tried to add in atmo at the compositing stage, but realized we really needed to see movement, so a lot of it was rendered as volumetrics.”

After constructing a massive CG environment, SPI blocked out the crowd choreography for the shot, placing hundreds of virtual workers and soldiers in the scene. Crowd agents used sound stimuli to avoid animated vehicles and construction equipment in the scene. An additional crowd simulation was used for the agents riding inside the CG bus. The SPI team placed lights throughout the all-CG ship construction area and loading dock to simulate the lighting design of a large warehouse.

The final composite shows off the high level of detail SPI added to the all-CG shot, using models, animation, textures, shading, lighting, effects and crowd simulation techniques to create a vast, photorealistic environment.

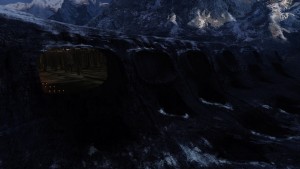

Exterior

Imageworks also completed exterior shots of the arks travelling from the inside of the hangar to the outside on Y-shaped towers, with clamps holding the ships in place. “The idea was that, when the water arrives, the clamps hold the ships in place for the first big wave, and once the water calms down, the ships can be released. Scanline VFX was responsible for that part of the sequence.”

To enable assets to be passed on to Scanline, Nofz decided to rely less on Imageworks’ proprietary systems than would normally be the case. “We went for a more brute force approach and modelled everything in Maya. Then we looked at the sequence as it was coming out of editorial and realized we didn’t need to do everything, especially in the interior where we could use matte paintings for the background. At rendering time, we were also able to turn off half of the ark geometry in the distance because nobody would have seen those anyway.”

For a scene in which waiting passengers make a desperate bid to reach one of the arks, production shot running and scrambling extras on set. Imageworks then intercut the real actors with fully CG crowds rendered in Massive which follow the ark on its way out of the hangar. “We went to the set and took lots of photos of the extras there and then modeled our CG people off of those. For the main characters, we had scans from Eyetronics.”

Final rendering for the ark sequence utilized Imageworks’ in-house Arnold system, with Shake used for compositing. Nofz felt that the scale of the ark building scenes somewhat matched the end-of-days feeling in 2012. “A space like this does not exist anywhere, so no one knows what it actually looks like. They’d send us hundreds of photos of shipyards, but then that was only a tenth of the scale of what we were building. It ultimately became an aesthetic call, because there was nothing to compare it to.”

SPI used a matte painting, projected onto the 3D CG valley geometry, to add scale and detail to the shot. An all CG 3D interior environment was modeled and shaded, then placed behind the projected matte painting.

SPI added CG arks, emerging from the 3D interior and rolling out into the CG valley. Environment accent lighting was added to highlight the transition between the interior and exterior spaces. The final composite showcases a combination of CG techniques used to integrate the valley and ships, transitioning from the interior loading dock out into the expanse of the Himalayan valley.