In this week’s podcast we have a huge treat: an hour-long, detailed discussion of the visual effects in Tim Burton’s smash hit Charlie and the Chocolate Factory. fxguide talks to all three major effects houses: MPC, Cinesite and Framestore CFC. In the podcast we get an overview and then break down one sequence in detail; in this written story we discuss the relationship between physical models and VFX.

We begin this week’s podcast with a chat with visual effects supervisor Chas Jarrett, whose company MPC handled the majority of the visual effects for Charlie and the Chocolate Factory, and then explore one sequence in detail.

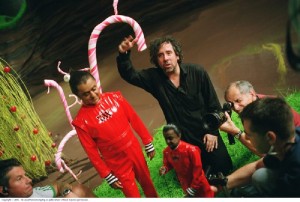

Bringing the Oompas to life was one of the primary tasks awarded to MPC. In shots with anywhere from 5 to 20 Oompa-Loompas, actor Deep Roy played every one of them. Shooting separate takes from different marks, he acted out each part in multiple motion capture passes. “I think of it as doing nineteen second takes,” offers Roy, whose extensive training for the roles included daily Pilates sessions and dance classes. “The most challenging part was trying to remember my position from one performance to the next, counting in my head and remembering at what point to turn or where to look. It was a lot of rehearsing.”

As if that were not complex enough, the Oompa-Loompas are only two and a half feet tall, so Deep Roy’s image had to be scaled down. This was particularly tricky because the Oompas often interact with other live-action actors.

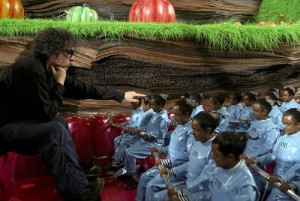

In the second half of the podcast we speak with Andrew Chapman and Andrew Whitehurst, two of the artists who worked under Framestore’s VFX supervisor Jon Thum on the team that tackled some 88 VFX digital squirrels. The Nut Room sequence is so complex that it was left out of the 1971 Gene Wilder version of Roald Dahl’s famous children’s book. Tim Burton set out to film as much of it in camera as possible with live, trained squirrels. “When I found out what was wanted it was a bit overwhelming,” says senior animal trainer Mike Alexander of Birds & Animals Unlimited. Alexander had worked with Burton before as chimpanzee wrangler on Planet of the Apes, but even he admits “squirrels can be very tough, and training 100 of them was inconceivable”. After 19 weeks, the on-screen solution was a carefully crafted combination of 40 real (and very curious) squirrels, animatronics and CG squirrels.

We continue our coverage of Charlie and the Chocolate Factory here in print with a separate discussion with Cinesite about the role of physical effects and VFX. Cinesite was awarded the interior and exterior shots of the factory itself. Half of its 160 shots were accomplished with models and the other half with computer animation. The model division is managed by Cinesite’s Model Unit Supervisor Jose Granell, who supervised the construction of a massive 1/24-scale miniature of the factory and townscape, as well as smaller models of Fudge Mountain and the hospital wing. The factory and townscape were a major undertaking in their own right: at 120 feet long, 80 feet wide and 25 feet tall, the miniature contained 1,500 individual houses with 12,000 windows and thousands upon thousands of fibre-optic lights. Each shot was filmed in several passes and then sent on to Cinesite Soho, where Visual Effects Supervisor Sue Rowe oversaw the project.
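The quoted dimensions make a quick worked example of what a 1/24-scale miniature represents. This is a minimal sketch assuming the 1/24 scale applies uniformly to length, width and height; the article gives only the miniature’s size, and the full-size equivalents below are simply computed from it.

```python
# Full-size equivalents of the Wonkaville miniature, assuming a uniform 1/24 scale.
SCALE = 24  # one foot on the miniature stands for 24 feet full size
FEET_PER_MILE = 5280

miniature_ft = {"length": 120, "width": 80, "height": 25}
full_size_ft = {axis: size * SCALE for axis, size in miniature_ft.items()}

for axis, size in miniature_ft.items():
    print(f"{axis}: {size} ft miniature -> {full_size_ft[axis]} ft full size")

# The full-size length works out at a little over half a mile.
print(f"length in miles: {full_size_ft['length'] / FEET_PER_MILE:.2f}")
```

Read the other way, every foot of camera travel over the miniature corresponds to 24 feet over the imagined full-size town.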

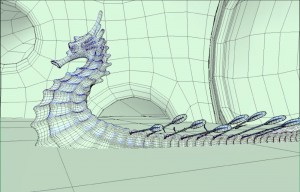

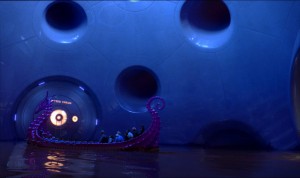

Maya was used to build 3D cars, cats and smoke, and CG people were added with REACT, a crowd simulation package Cinesite originally developed for King Arthur. The majority of the digital work, however, focused on creating the interior environments of the factory, with the glass elevator as the centrepiece. Wonka takes his guests into a magical elevator that can move in any direction. The elevator was the only real element in these shots; the rest was built entirely in the computer. About half of the CG environments were derived from photo references, and the other half were created directly in Maya and rendered with RenderMan.

We spoke to Antony Hunt (Model Unit Executive Producer / MD Cinesite) about the model shots and Sue Rowe (Cinesite VFX Supervisor) about Cinesite’s workflow.

Exterior model shoot:

fxguide: What role did the production designer and the art department play in the designs for the exterior models in the film?

AH: The production designer and art department sent basic hand-drawn plans of the factory and town layouts, followed by plans of individual houses and a detailed plan of the factory. We then built the models from these drawings; the model department used no CAD.

fxguide: What role did previz have, and at what stage was the previz?

AH: The previz was instrumental from day one. Rather than starting with a completely blank canvas, we were able to establish the size of Wonkaville and of the factory, which helped us determine how big the miniature would need to be, subject to camera angles etc. The previz came after we budgeted; however, after meetings between Jose, Nick Davis and myself we had a good view of what the requirements and shots would be. Further into pre-production we were also able to take the previz and check that all the camera moves could be achieved on the motion control camera, determining camera heights and the distances from the camera to certain positions on the model.

fxguide: When quoting a project like this, how final is the script? Large-scale models require long lead times, and production design may require lengthy pre-production, so it must be hard at the bidding or quoting stage to have an accurate idea of exactly how complex the models will need to be?

AH: After consultation between Nick Davis, Jose and myself, it was clear from a very early stage which shots would require a miniature. Tim Burton was very clear that he wanted to use a miniature and not a 3D model or matte paintings. Apart from the wide establishing shots of both the factory and the town, many shots required the model as a background: when Charlie, Grandpa Joe and Willy Wonka were flying round the exterior of the factory, and Wonkaville for the flying elevator sequence. We had a fairly clear plan when we budgeted the miniature unit, so from those original meetings most problems arising from the creative process of film-making were accommodated within the original budget.

Sue Rowe adds:

SR: In answer to your question, I would say that some shots from the bid ended up a little different from the final shots, but this is often the case; the design process is an organic one.

At the time of the elevator bluescreen shoot, Alex McDowell and Tim Burton had an idea of what they were after, but it definitely progressed a long way during post. Alex spent time here at Cinesite with John Roberts-Cox, our 3D supervisor, designing shots in Maya. Together we created designs for the shots of the Nut Room, the TV Room and the enormous refrigeration room which housed Fudge Mountain.

Inspiration came from 1950s American fridges! We added dry ice elements over its surface for added scale.

The sheep station was great fun; it is pure Burton. Only the sheep and the Oompas were real; the candy floss maker and glass storage vats were all created in 3D.

fxguide: You used REACT for the crowds. Can you explain it, and how does it differ from, say, Massive?

SR: REACT is our proprietary software, originally written for the crowds on King Arthur.

R. – Reasonable

E. – Embedded

A. – Agent

C. – Crowd

T. – Tasks

Alexander Savenko, who used REACT on Charlie, says it consists of several Maya and RenderMan plugins. The core of the system is an AI plugin that lets you create agents, specify their behaviour and appearance, add collision objects, draw agent paths and so on. Animating is a two-stage process. First, the AI engine simulates the agents’ motion; then a motion-blending engine creates the final high-quality animation by looping and blending motion clips together. A RenderMan plugin reads the baked animation and generates geometry at render time. There are also specialised RenderMan shadeop plugins optimised to compute ambient occlusion and do shadow testing for crowd scenes. I would say our AI engine is “simpler” than Massive, but our whole system is very well integrated into our pipeline, and of course we wrote it, so it will evolve with our film requirements!
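The two-stage process described above (simulate first, then build the final animation by looping and blending clips) can be illustrated with a toy example. This is not REACT’s code; it is a minimal sketch assuming each motion clip is a hypothetical list of one joint value per frame, and that the simulation stage has already decided when each agent switches clips.

```python
def loop_clip(clip, start_frame, frame):
    """Sample a looping clip at an absolute frame, counting from start_frame."""
    return clip[(frame - start_frame) % len(clip)]


def blend(a, b, t):
    """Linear blend between two poses: t=0 gives a, t=1 gives b."""
    return a + (b - a) * t


def animate(segments, total_frames, fade=4):
    """Stage 2: build the final animation from the simulation's clip choices.

    segments: (start_frame, clip) pairs chosen by the simulation stage,
    sorted by start_frame, with the first starting at frame 0.
    Each clip switch is cross-faded over `fade` frames.
    """
    if not segments or segments[0][0] != 0:
        raise ValueError("the first segment must start at frame 0")
    poses = []
    for f in range(total_frames):
        # Active segment = the last one that has already started.
        idx = max(i for i, (start, _) in enumerate(segments) if start <= f)
        start, clip = segments[idx]
        pose = loop_clip(clip, start, f)
        if idx > 0 and f - start < fade:
            # Inside the transition: mix in the previous clip, still looping it.
            prev_start, prev_clip = segments[idx - 1]
            t = (f - start + 1) / (fade + 1)
            pose = blend(loop_clip(prev_clip, prev_start, f), pose, t)
        poses.append(pose)
    return poses


# Stage 1 stand-in: the "simulation" decided this agent walks, then runs from frame 8.
walk = [0.0, 10.0, 20.0, 10.0]
run = [0.0, 30.0, 60.0, 30.0]
poses = animate([(0, walk), (8, run)], total_frames=16)
```

Keeping the simulation output to "which clip, from when" is what lets the blending stage produce smooth, high-quality motion without the AI engine having to reason about individual joints.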

fxguide: Did the 3D department use camera moves provided by the motion control rig, or just solutions from a 3D tracker?

SR: We have a great tracking department here, headed up by Jon Miller. He worked closely with the model unit, sending data back and forth to get moves everyone was happy with. Where the shots were real, like the outside of the factory, we took the live-action plates, tracked them in boujou, MatchMover and 3DE, and then set them up in Maya using the previz model of the factory for line-up.

When the shot had been checked, the camera data was exported as an .xsi file to the set, where it was used in Softimage to create the motion control data for the rig. Jose would then shoot the multiple model passes based on these moves.
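The interview does not describe how a full-size tracked move was converted for the miniature, but the underlying geometry is simple: camera translations shrink with the model, while rotations and lens stay the same. A minimal sketch, assuming the live-action world and the 1/24 miniature share one origin and set of axes; `CameraKey` and `to_miniature` are hypothetical names, and the actual .xsi/Softimage hand-off is not reproduced.

```python
from dataclasses import dataclass


@dataclass
class CameraKey:
    frame: int
    position: tuple  # (x, y, z) in feet
    rotation: tuple  # (pan, tilt, roll) in degrees


def to_miniature(keys, scale=24.0):
    """Convert a full-size camera move to miniature scale.

    Translations shrink by 1/scale about the shared origin; rotations are
    unchanged, and so is the lens, because scaling the camera path and the
    set by the same factor preserves every angle and therefore the framing.
    """
    return [
        CameraKey(k.frame, tuple(c / scale for c in k.position), k.rotation)
        for k in keys
    ]


# Hypothetical keys from a tracked full-size move (feet, degrees).
full_size = [
    CameraKey(1, (0.0, 120.0, -480.0), (0.0, -5.0, 0.0)),
    CameraKey(48, (240.0, 96.0, -360.0), (20.0, -8.0, 0.0)),
]
mini = to_miniature(full_size)
for key in mini:
    print(key.frame, key.position, key.rotation)
```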

The Glass elevator sequence had been blocked out in previs so we knew the kind of moves to expect. In some cases the motion control data would give us the starting point but in some shots we did end up having to track the plates by hand.

Tim wanted the elevator to travel at breakneck speed through the set, so we took the bluescreen footage and retimed it to give us a more dynamic move. Often the original tracked cameras were replaced with new ones, and digital doubles replaced the actors.

fxguide: After 3D, what is the pipeline?

SR: We have developed a proprietary 3D lighting pipeline named Kik. It involves a GUI in Maya and a single “all-in-one” RenderMan shader, which extracts surface properties directly from the 3D objects and then renders multiple images for every frame. The final image is automatically reconstructed through a custom “Kik-beauty” node in Shake, which then acts as a real-time mixing desk for balancing all the lighting properties of the CG over the live-action plate.
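The “mixing desk” idea works because light contributions add in linear colour space: if each render pass holds one lighting component, the beauty is their sum, and a gain per pass rebalances the look without re-rendering. The sketch below is a hypothetical illustration of that principle, not Kik’s implementation; the pass names and the single-channel, three-pixel images are assumptions.

```python
# Hypothetical lighting passes for a 3-pixel, single-channel image.
passes = {
    "diffuse": [0.20, 0.40, 0.10],
    "specular": [0.05, 0.30, 0.00],
    "reflection": [0.10, 0.00, 0.20],
}


def rebuild_beauty(passes, gains=None):
    """Sum per-pass pixel values, each scaled by its fader gain (default 1.0)."""
    gains = gains or {}
    width = len(next(iter(passes.values())))
    beauty = [0.0] * width
    for name, pixels in passes.items():
        gain = gains.get(name, 1.0)
        for i, value in enumerate(pixels):
            beauty[i] += gain * value
    return beauty


neutral = rebuild_beauty(passes)  # all faders at unity: the straight sum
graded = rebuild_beauty(passes, {"specular": 2.0, "reflection": 0.5})
```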

The compositing issues were interesting. For the exterior factory shots we worked on integrating the models into live action and making that believable. We used multiple smoke passes to add depth and scale to the factory, and added live-action plates of people walking, cats and birds, plus some matte-painted set extensions, to create Wonkaville.

Inside the factory it was the opposite: everything was “hyper real” and the colours intense, so the challenge was to make something surreal look real. Again we relied on well-shot live-action FX plates for added realism.

The highly reflective glass elevator did give us a few problems with spill and unwanted highlights from the studio lights, but the benefits of shooting a real elevator clearly outweigh the negatives. Actors with real reflections and multiple refractions are always better than relying on a computer-generated environment map.

fxguide: Was there a second camera filming from the side to get a side reflection pass?

SR: As explained above, the reflections were mostly real, slightly enhanced. We did use the light refraction and reflections to our advantage and made the glass look more glassy in some shots.

In some cases, where no reflections were visible, we added reflections of our CG characters in the glass. About 40% of the shots were completely computer generated, and the characters’ action was motion captured.

These CG characters were used for the mid and distance shots. In fact, much of the firing range scene was totally computer generated. The fireworks were initially animated using Maya Dynamics according to the VFX Supervisor’s direction. These were then used as templates within a procedural system that let us efficiently render large numbers of simultaneous bursts. Volumetric smoke plumes illuminated by the fireworks were also added using this system.

The launch of the fireworks was triggered by simple controls on the Oompa guns, making aiming, animating and rendering a matter of a few mouse clicks. The Oompas and guns were rigged to be driven by the same controls, so that when a gun was told to fire, the Oompa animation clips would play back at the correct frames.
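Driving the gun and the Oompa from one control means a single “fire” key can expand into every downstream event. A hypothetical sketch of that idea: one list of fire frames per gun generates the Oompa clip start, the launch and the burst. The timing constants, event names and `Gun` type are invented for illustration; the interview does not give them.

```python
from dataclasses import dataclass

ANTICIPATION = 6  # hypothetical: the Oompa's firing clip starts 6 frames before launch
FLIGHT_TIME = 18  # hypothetical: frames from launch to burst


@dataclass
class Gun:
    name: str
    fire_frames: list  # frames on which the animator tells this gun to fire


def schedule(guns):
    """Expand each 'fire' control into every event it drives, sorted by frame."""
    events = []
    for gun in guns:
        for f in gun.fire_frames:
            events.append((f - ANTICIPATION, gun.name, "oompa_fire_clip"))
            events.append((f, gun.name, "launch"))
            events.append((f + FLIGHT_TIME, gun.name, "burst"))
    return sorted(events)


guns = [Gun("gun_a", [10, 40]), Gun("gun_b", [25])]
for event in schedule(guns):
    print(event)
```

Moving a fire frame then retimes the character animation, the launch and the burst together, which is the convenience the interview describes.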

fxguide: Were HDR image probes shot of the set for 3D?

SR: Simon Maddox was our lead lighter on this sequence. He did use HDR sources for the outdoor reflections; these were usually composites of the model set and photographed skies, because we needed a complete environment rather than the model with its studio and greenscreen surround. For indoor scenes we often made a simplified 3D proxy of the environment and either ray-traced it or created environment maps from it. We used floating-point renders so that comps generated in Shake could become HDR environment maps. For example, the hospital windows modelled in 3D had a luminosity greater than 1, so we could get bright reflections that would motion blur correctly without too much falloff.
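Why luminosity above 1 matters for motion blur can be shown with a single toy pixel. Motion blur averages what a pixel sees while the shutter is open; if a bright window is clamped to 1.0 before that averaging, its streak is dimmed. A minimal sketch, assuming a simple box-filtered shutter with four samples and a hypothetical window value of 8.0.

```python
def motion_blur(samples):
    """Box-filter motion blur: average the values a pixel sees while the shutter is open."""
    return sum(samples) / len(samples)


def clamp(value, lo=0.0, hi=1.0):
    """Clip a value to the displayable range."""
    return max(lo, min(hi, value))


# Hypothetical pixel: a bright window passes across it for 1 of 4 shutter samples.
window_hdr = 8.0  # luminosity well above 1.0
samples = [window_hdr, 0.0, 0.0, 0.0]

hdr_then_clamp = clamp(motion_blur(samples))             # streak stays at full brightness
ldr_then_blur = motion_blur([clamp(s) for s in samples])  # streak fades to a quarter
```

Keeping the renders and comps in floating point defers the clamp until after the blur, which is what preserves the bright streak.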

The pipeline included tools in Maya that visualised the area of the 3D set visible in the glass elevator wall reflections. They drew lines from the camera to the elevator, then drew the continuation of those lines as they would be reflected from the planes of the elevator walls, so we could see which parts of the 3D model would show up in the reflection, because they fell within the volume defined by those reflected lines. This meant we could contrive to have objects of interest, usually chimneys, visible in the shot and nicely composed in the glass reflections.
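The tool described above rests on standard planar-mirror geometry: find where a camera ray meets the wall plane, then continue it along the reflected direction r = d - 2(d·n)n, where n is the wall’s unit normal. A minimal sketch, assuming flat, infinite wall planes and plain 3-tuples; it is not Cinesite’s Maya tool, and every function name here is hypothetical.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def add(a, b):
    return tuple(x + y for x, y in zip(a, b))


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def scale(a, s):
    return tuple(x * s for x in a)


def reflect(d, n):
    """Mirror direction d in a plane with unit normal n: r = d - 2(d.n)n."""
    return sub(d, scale(n, 2 * dot(d, n)))


def hit_plane(origin, direction, plane_point, normal):
    """Intersect a ray with a plane; None if parallel or behind the origin."""
    denom = dot(direction, normal)
    if abs(denom) < 1e-9:
        return None
    t = dot(sub(plane_point, origin), normal) / denom
    return add(origin, scale(direction, t)) if t > 0 else None


def reflected_line(camera, through, wall_point, wall_normal):
    """Line from the camera through a point on the wall, plus its reflected continuation."""
    direction = sub(through, camera)
    hit = hit_plane(camera, direction, wall_point, wall_normal)
    if hit is None:
        return None
    return hit, reflect(direction, wall_normal)


# Hypothetical set-up: camera at the origin, an elevator wall in the plane x = 5.
hit, bounce = reflected_line((0, 0, 0), (5, 1, 0), (5, 0, 0), (-1, 0, 0))
```

Running `reflected_line` through each corner of a wall panel gives the edges of the reflected volume; anything in the 3D set inside that volume, a chimney for instance, will appear in that panel.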

fxguide: Were the matte paintings just layered Photoshop files, or camera-mapped digital environments?

SR: The approach to the matte painting is always defined by the shot. Resolutions varied from the usual 2K up to a 16K plate for the sky dome.

The skies were sourced by David Early, who heads our matte painting department. He took the photographic stills and stitched them together, enhancing the light and transparency of the clouds in Photoshop. The result was then taken into a 3D virtual environment, which gave us the freedom to spin the camera 360 degrees if we needed to. The elevator shoots out of the factory chimney and suddenly plummets from the sky back down to factory level; for this we used the sky dome and multiple layers of clouds which whip past camera, and to add a more dynamic feel we added loads of camera shake.
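Once the stitched sky sits on a dome, panning the virtual camera amounts to a lookup by view direction. A minimal sketch, assuming the dome is stored as an equirectangular (lat-long) image with y up and -z forward; the interview mentions a 16K plate, but the 16384 x 8192 resolution and axis conventions here are assumptions.

```python
import math

WIDTH, HEIGHT = 16384, 8192  # hypothetical 16K x 8K lat-long sky dome


def direction_to_pixel(d, width=WIDTH, height=HEIGHT):
    """Map a unit view direction (x, y, z), y up and -z forward, to (u, v) pixels."""
    x, y, z = d
    lon = math.atan2(x, -z)                  # -pi..pi around the horizon
    lat = math.asin(max(-1.0, min(1.0, y)))  # -pi/2 (down) .. pi/2 (up)
    u = (lon / (2 * math.pi) + 0.5) * width  # wraps as the camera pans 360 degrees
    v = (0.5 - lat / math.pi) * height       # row 0 is straight up
    return u, v


print(f"pixels per degree of pan: {WIDTH / 360:.1f}")
```

Because `u` simply wraps around the horizon, a full dome lets the camera pan in any direction without running off the edge of the painting.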

The flying glass elevator shots demonstrate the problems we had with some of the original live-action footage. It was shot using motion control, but the limits of the rig and the actors’ safety requirements meant that the elevator could not go as fast as Tim wanted. We found that retiming the film plates and accentuating some of the actors’ movements gave us the look Tim was after.
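The interview does not say how the plates were retimed. The simplest approach is frame blending: sample the source at `speed` times the original rate and mix the two nearest frames; optical-flow retimers are the usual higher-quality alternative. A minimal sketch of frame blending, using single numbers as stand-ins for images.

```python
def retime(frames, speed):
    """Resample a clip at `speed`x by blending the two nearest source frames.

    frames: per-frame values standing in for images (anything supporting
    + and * by a float would work the same way).
    """
    last = len(frames) - 1
    out = []
    k = 0
    while k * speed <= last:
        t = k * speed  # source position for output frame k
        i = int(t)
        frac = t - i
        if i >= last:
            out.append(frames[last])
        else:
            out.append(frames[i] * (1 - frac) + frames[i + 1] * frac)
        k += 1
    return out


source = [0, 10, 20, 30, 40, 50, 60, 70, 80]  # 9 frames of a steady move
faster = retime(source, 2.0)  # twice as fast: every other frame
eased = retime(source, 1.5)   # 1.5x: in-between frames are blended
```

At `speed=2.0` the result simply keeps every other frame; non-integer speeds need the blend, which is where visible ghosting can creep in on fast action.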

In some cases we did away completely with the real actors in the elevator, replacing the elevator with a CG one and remapping the actors into our virtual elevator.

All images Copyright, Warners 2005, All rights reserved.

This article cannot be reproduced without permission.