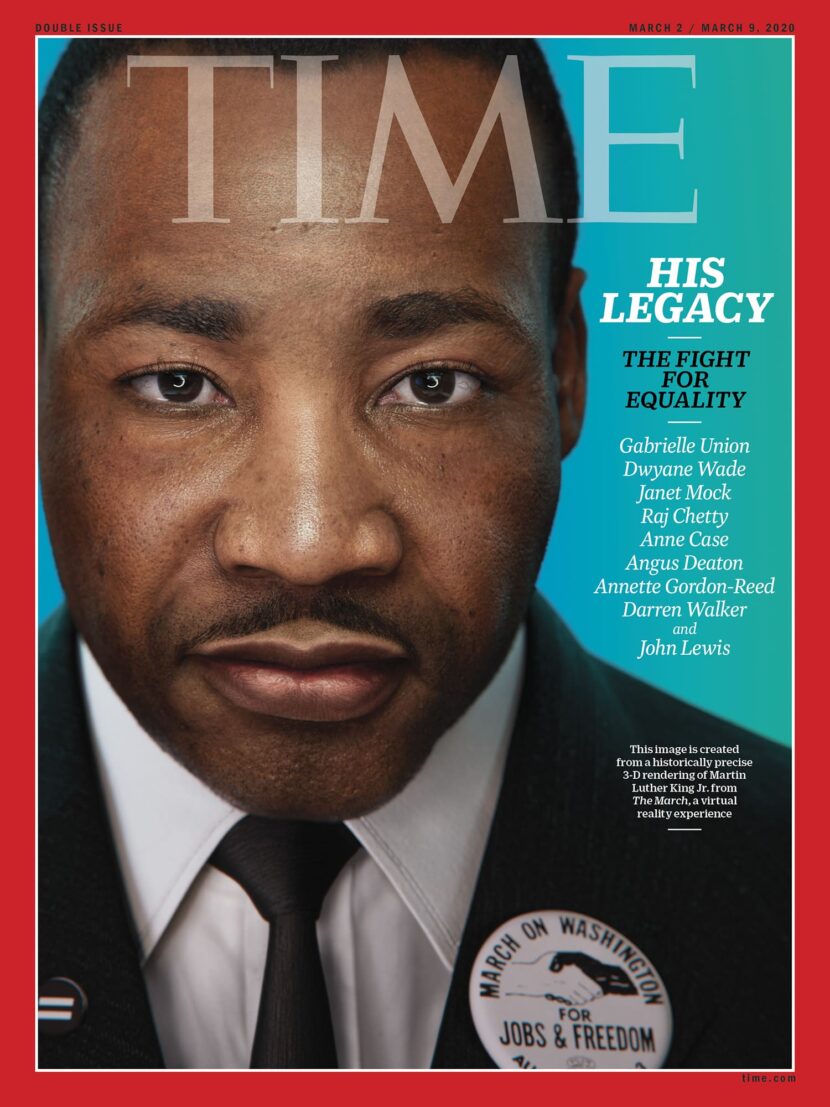

TIME and Digital Domain undertook an exceedingly ambitious project to produce a 3D recreation of Dr. Martin Luther King Jr. as part of a new VR experience called The March. This first-of-its-kind production allows visitors to step back in time and witness one of the most important moments in American history: Dr. Martin Luther King Jr.’s “I Have a Dream” speech. The project was designed so that participants could walk with other marchers while hearing the real audio from the crowd, experience a 3D recreation of the National Mall in Washington, and finally stand five feet away from Dr. King himself.

To bring the 1963 March on Washington for Jobs and Freedom to life in virtual reality (VR), the TIME team embarked on a three-year journey to virtualize Martin Luther King Jr. and his iconic “I Have a Dream” speech. Digital Domain joined the project after its first year, and the production itself took approximately 10 months.

TIME Studios debuted The March as an experiential exhibit in February at the DuSable Museum of African American History in Chicago, IL. DuSable was the first independent African American history museum in the country.

Facial Reconstruction

Dr. King was created with Digital Domain’s Masquerade system, which combines high-end performance capture, machine learning and proprietary software to build the 3D base model. The facial performance was captured at the same time as the body motion capture. From there, artists used Autodesk Maya and Houdini to perfect Dr. King’s body and face before placing the model in a historically accurate reconstruction of the National Mall built in UE4.

Facial Performance Capture

Digital Domain began by casting a performer of similar size and build to Dr. King as he was in August 1963 to recreate his body movement. The King estate suggested its official orator, Stephon Ferguson, who has publicly re-enacted the “I Have a Dream” speech for the past 10 years, since he matched these characteristics and could provide a realistic reference for Dr. King’s gestures during the speech.

Ferguson’s performance was recorded at Digital Domain using Masquerade, a motion capture system designed to track minute details of a subject’s body and face. Facial scans of another person, combined with machine learning (ML), were used to build pore-level detail into the base model. Using these bridge scans, the team compared eye position, skull shape, cheekbone and nose ridge position, skin tone and blood flow against the current build of Dr. King. The results were then handed to Digital Domain artists, who took the model (approximately 50% complete) and began adding Dr. King’s distinctive visual traits.

Artists assembled a large reference library of images and video from the speech and from the week of the ’63 march to aid in this process. Because people’s bodies and faces change from month to month, this was the only way to accurately portray Dr. King on that particular day. Beyond covering different angles, the team sourced as many color photos as possible: much old footage is black and white, but the VR experience is in full color. The result is a historically accurate recreation that matches Dr. King’s features and mannerisms in real time.

Performance

At the start of the project, an actor was scanned in a Light Stage at USC-ICT. This was a scan of the stand-in actor, not of Dr. King. The Light Stage session gave the team detailed facial pore data and showed how similar skin would look under a range of lighting conditions. Because the Light Stage captures polarized lighting data, the team could also build blood flow maps. There were no 4D (time-based) samples with continuous correlation, but the expression snapshots capture how hemoglobin is redistributed for each key expression, and the Digital Domain team interpolated between them to produce Dr. King’s facial blood flow.
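The article does not say how the expression snapshots were combined. A minimal sketch, assuming each key-expression capture yields a per-texel hemoglobin map and the current expression is described by blend weights between 0 and 1, is a blendshape-style delta blend: each snapshot contributes its offset from the neutral map, scaled by its weight. The function name, the map layout and the linear blending scheme are all hypothetical, not Digital Domain’s method.

```python
from typing import List, Sequence


def blend_blood_flow(
    neutral: Sequence[float],
    expression_maps: Sequence[Sequence[float]],
    weights: Sequence[float],
) -> List[float]:
    """Blend per-texel hemoglobin maps captured for key expressions.

    Hypothetical sketch: the result starts from the neutral map and adds each
    expression map's offset from neutral, scaled by that expression's weight.
    """
    if len(expression_maps) != len(weights):
        raise ValueError("one weight per expression map is required")
    result = list(neutral)
    for emap, w in zip(expression_maps, weights):
        if len(emap) != len(neutral):
            raise ValueError("all maps must have the same texel count")
        for i, value in enumerate(emap):
            # Linear interpolation toward this expression's blood-flow state.
            result[i] += w * (value - neutral[i])
    return result


# Three-texel maps: a neutral face plus "smile" and "brow raise" snapshots.
neutral_map = [0.50, 0.50, 0.50]
smile = [0.70, 0.55, 0.50]
brow_raise = [0.50, 0.50, 0.80]
blended = blend_blood_flow(neutral_map, [smile, brow_raise], [0.5, 0.25])
print(blended)
```

Blending offsets rather than absolute maps means an expression at weight 0 leaves the neutral redness untouched, and several partially active expressions can combine without washing each other out.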

Digital Domain used Dimensional Imaging (DI4D) to capture time-based facial data of Ferguson performing Dr. King’s speech. This allowed Digital Domain to track how Ferguson’s face and eyes moved as he formed particular words. To help, Ferguson was also given standard sentences to recite that are designed to cover the full range of visemes (the visible mouth shapes of speech), pushing the mouth around and activating all the muscles in his face.

After the DI4D session, Ferguson returned to the Digital Domain Performance Capture Stage (DD-PCS), where a dot marker set was drawn on his face and he was fitted with a Head-Mounted Camera (HMC). This marker set, specific to Digital Domain, comprises roughly 190 dots applied to the face, and the HMC recorded at 60 fps. The team used the DI4D time-based data and the actual MoCap performance on the DD-PCS to drive the Dr. King face built from the Light Stage data.

At the DD-PCS, Ferguson gave the same performance he had given at DI4D. With both the HMC and DI4D performances feeding into software written by DD’s machine learning (ML) team, the team could correlate the 2D video data with the 3D model data quickly and with a high degree of accuracy.

Ferguson was then outfitted with a motion capture suit and asked to recite the full “I Have a Dream” speech. Working from video reference, Ferguson performed the entire speech, both physically and vocally. Using this facial and body capture, DD synchronized and mapped his facial performance onto Dr. King’s current digital likeness, while the animation team vetted and adjusted the performance to match. The ML software gave the animators a strong starting point, so they spent most of their time adding human nuance to the digital Dr. King. The FX team then used this body and face performance to add secondary animation to the cloth and face, giving the final build some procedural motion.
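The article does not describe how the FX team generated secondary motion. One common, generic way to get procedural follow-through (for example, soft tissue lagging behind a head move) is a damped spring that chases the animated position. The sketch below shows that technique only; the class name, the stiffness and damping values, and the assumed 1/60 s time step are hypothetical and not taken from the production.

```python
from dataclasses import dataclass


@dataclass
class JiggleSpring:
    """Damped spring that makes a secondary point lag behind its animated target.

    Hypothetical illustration of procedural secondary motion; the default
    stiffness and damping are arbitrary example values.
    """

    stiffness: float = 120.0  # pull toward the animated target (1/s^2)
    damping: float = 12.0  # velocity damping (1/s)
    position: float = 0.0
    velocity: float = 0.0

    def step(self, target: float, dt: float) -> float:
        # Spring acceleration toward the target, minus damping on velocity.
        accel = self.stiffness * (target - self.position) - self.damping * self.velocity
        # Semi-implicit Euler: update velocity first, then position.
        self.velocity += accel * dt
        self.position += self.velocity * dt
        return self.position


# The animated target jumps from 0 to 1 (say, a sudden head nod); the secondary
# point overshoots slightly, wobbles and settles, which reads as "jiggle".
spring = JiggleSpring()
samples = [spring.step(target=1.0, dt=1 / 60) for _ in range(120)]
```

Because the spring is underdamped with these values, it overshoots before settling. Baking such a simulation offline, as the team did with its pre-simulated facial jiggle, avoids paying for it at runtime.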

Tech Specs of the Experience

The installation used VIVE Focus Plus headsets. The Focus Plus has 1440 x 1600 pixels per eye (2880 x 1600 combined), a pixel density of 615 PPI and a 110° field of view. The 10.5-minute VR experience runs on a customized build of Unreal Engine 4. It combines seven scenes with high-fidelity environments, running at 75 frames per second (75 Hz) with six degrees of freedom.
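The headset figures above set a hard rendering budget. The short calculation below derives the per-frame time and raw pixel throughput from the stated resolution and refresh rate. It counts only the native panel pixels and ignores the larger eye buffers that VR runtimes typically render before lens-distortion correction, so the real workload is higher.

```python
# Per-frame budget and pixel throughput implied by the Focus Plus figures.
PER_EYE_W, PER_EYE_H = 1440, 1600  # panel resolution per eye
EYES = 2
REFRESH_HZ = 75  # frame rate of the experience

frame_budget_ms = 1000 / REFRESH_HZ  # time available to render both eyes
pixels_per_frame = PER_EYE_W * PER_EYE_H * EYES  # 2880 x 1600 combined
pixels_per_second = pixels_per_frame * REFRESH_HZ

print(f"{frame_budget_ms:.2f} ms per frame")  # 13.33 ms per frame
print(f"{pixels_per_frame:,} pixels per frame")  # 4,608,000 pixels per frame
print(f"{pixels_per_second / 1e6:.1f} million pixels per second")  # 345.6 million
```

Everything that draws Dr. King, the crowd and the environment has to fit inside roughly 13.3 ms on every frame. That is why the real-time work below focuses on a single GPU and on avoiding added latency.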

Early on, the team had hoped to use multi-GPU rendering. When they discovered that multi-GPU rendering in VR was not possible, they drew on lessons from DD’s Digital Human team, which had produced Digital Doug in VR for SIGGRAPH, and applied that R&D to reach the performance needed for Dr. King at the installation. The geometry data for Dr. King is very similar to the geometry data Digital Domain uses for its pre-rendered assets. Luckily, Dr. King had short hair. Hair is one of the most demanding aspects of rendering digital humans in real time. The team pre-simulated additional facial movement, such as facial jiggle, as well as the cloth, using the company’s feature-film pipeline. The Dr. King team then optimized for the project’s very specific real-time target hardware. One huge advantage was that the project did not need to run on a range of hardware, only on the specific Focus Plus headsets used at the museum.

Usually, a VR experience such as this would require viewers to be tethered to a PC or to wear a backpack PC. The team decided that would impede movement and might discourage viewers who were wary of VR. To solve this, Digital Domain created custom tools that allowed the entire experience to run wirelessly on a single graphics card (GPU). This let attendees see Dr. King at the highest possible resolution while still exploring the 10 x 15 ft play space freely. Real-time tools ran in the background of the location-based experience, so viewers saw the most realistic version of Dr. King on a single GPU without noticeable latency. However important image quality was, the process could not add significant latency or lag, because that would risk giving visitors motion sickness.

Environment

Digital Domain’s Integration and Environments team spent a week at the National Mall in Washington D.C., gathering onsite measurements of Constitution Avenue, the Reflecting Pool and everything leading up to the Lincoln Memorial. Using a combination of LIDAR and high-resolution texture scans, they recreated the key locations in great detail. Historical photos and maps from that day in 1963 helped artists model the rest.

The TIME magazine cover used the same data and modeling pipeline but was rendered at Digital Domain in V-Ray. The V-Ray version used 50 UDIM texture tiles. As part of the real-time optimization, this dropped to 4 for the UE4 version, which ran on a custom build of UE 4.23. Another difference is that the cover version includes peach fuzz, or vellus hair. The real-time VR experience never lets the viewer get as close as the TIME cover does, so the fuzz was left out: in VR, no one can get closer than about 9 ft from Dr. King.
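UDIM is the texturing convention in which UV space is split into unit tiles numbered from 1001, ten tiles per row. Each tile usually gets its own texture image, so going from 50 tiles to 4 cuts the number of texture maps per channel sharply. The sketch below shows the standard tile-numbering rule. The helper that lists tiles for a given count assumes the tiles were packed row by row from 1001. The article does not say how Dr. King’s tiles were actually laid out.

```python
import math
from typing import List


def udim_tile(u: float, v: float) -> int:
    """Return the UDIM tile number for a UV coordinate.

    Standard UDIM rule: tile = 1001 + floor(u) + 10 * floor(v),
    valid for 0 <= u < 10 and v >= 0.
    """
    if not (0 <= u < 10) or v < 0:
        raise ValueError("UDIM expects 0 <= u < 10 and v >= 0")
    return 1001 + math.floor(u) + 10 * math.floor(v)


def tiles_for_count(count: int) -> List[int]:
    """Tile numbers for `count` tiles packed row by row (hypothetical layout)."""
    return [1001 + (i % 10) + 10 * (i // 10) for i in range(count)]


print(udim_tile(0.5, 0.5))  # first tile: 1001
print(udim_tile(3.2, 1.7))  # fourth column, second row: 1014
print(tiles_for_count(4))  # real-time layout: [1001, 1002, 1003, 1004]
print(tiles_for_count(50)[-1])  # last of 50 packed tiles: 1050
```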