Adobe delivered one of the most impressive papers at this year’s SIGGRAPH technical papers. The essence of the paper is to build on previous research to provide Stylized Facial Animations based off an arbitrary example style, such as a painting. This paper is the basis of Jakub Fišer PhD at the Czech Technical University in Prague. This work is so impressive, it seems almost like magic, but in reality their clever solution takes advantage of some special aspects of faces, some core Adobe Photoshop tech and the previously published research that the team has been working on for some time.

We spoke to Dave Simons, Senior Principal Scientist at Adobe Research, and he has been with Adobe since Aldus Corporation was purchased by Adobe in 1994. He is one of the authors on the Adobe SIGGRAPH PAPER called Example-Based Synthesis of Stylized Facial Animations.

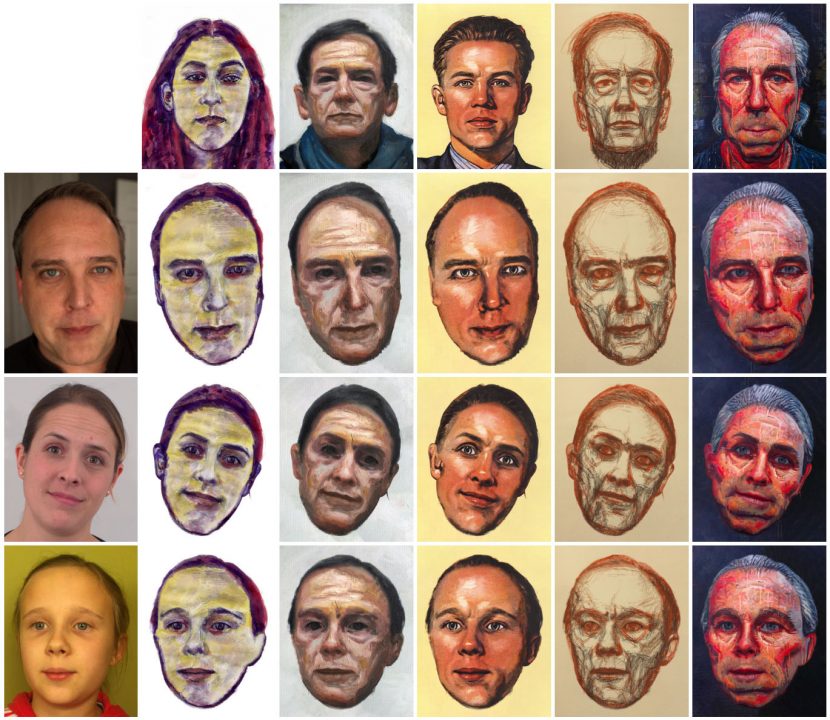

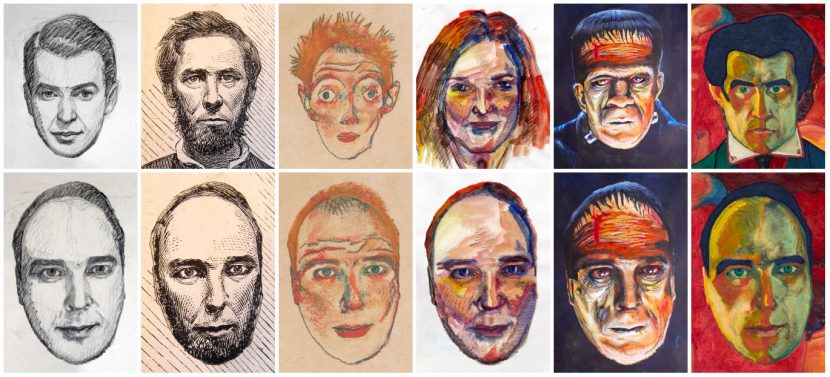

The videos they have produced show the borrowing of styles from portrait paintings and then converting a clip into a rich similar style that looks not only alike but appears over time to resemble hand-painted animation, where every frame was created independently. The approach does not soften the style into a blurred version of the source style, nor does it look like a 3D face with textures projected on. The solution is both brilliant and incredibly effective. It is also a very specific approach. In it’s current form, it would not work if applied to say a car for a Superbowl commercial. However for faces, it defines best of class in a strong research area that has had many teams targeting a similar goal.

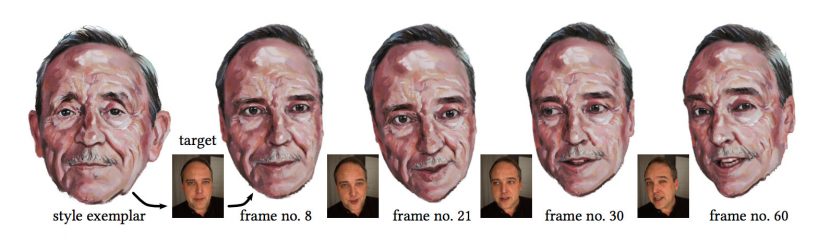

Unlike some other approaches that use a neural style transfer [Selim et al. 2016], their method performs non-parametric texture synthesis that retains a lot of local details and paint stroke characteristics of the example input. It also has an adjustable level of coherence over time, to produce the illusion of each frame being drawn from scratch.

Background

Guided Texture Synthesis is the core concept of the paper – the idea that the texture from one drawn face can be applied to a video of another face. The process importantly makes the new synthetic face still seem a flat drawing or painting, and not a 3D face with textures tracked or projected onto it. Furthermore the artistic style can even be of a real bronze statute, it does not need to be a painted texture.

How they do it

While Simons only joined this team for the 2017 paper, most of the authors were together on a key 2016 SIGGRAPH paper which is a great starting point for understanding how this new work is so effective.

2016

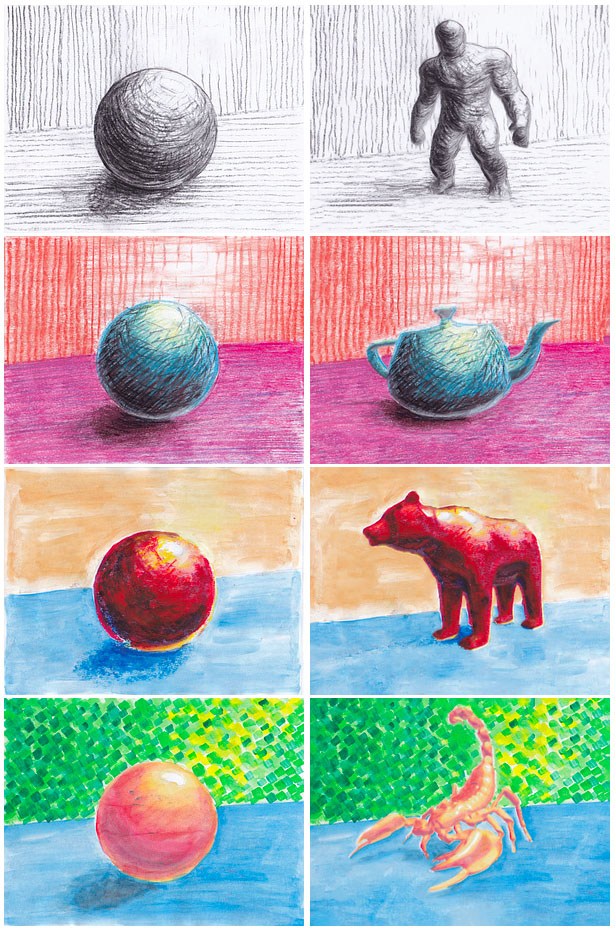

The key paper and research prior to this was the 2016 paper: StyLit: Illumination-Guided Example-Based Stylization of 3D Renderings. In this paper, the drawing style was transferred to a known targeted 3D model, but with the correct stylised artistic hand drawn look. Unlike the 2017 Face system which takes any painted portrait and applies it to any facial video, this system took hand drawn styles and applied it to a previously understood 3D scene. This is a key point. In the 2016 case the program knew the surface normals of the target object because it had AVOs from the 3D. In the 2017 case it knows how to apply the painterly style as it is a face, and all faces have roughly similar shapes and normals.

In the 2016 example if one shaded in a circle to make it look like a ball, the program can lift certain assumptions from the ‘ball’ and apply it to the 3D figure. For example if the ball is shaded lightly top right and shadowed bottom left, this defines how a reproduced 3D figure should be shaded, as it looks like it is lit from the top right, since the 3D figure has know surface normals. In the simplest form, if the normals on the shoulder face the light, then the style is applied from part of the ball that faces the light. If the normals inside the leg face away, then they are darkly shaded the way the bottom of the Ball was shaded. It assumes the ball is shaded sensibly and so it uses the same logic on the figure. Of course, if you artistically paint the shading unrealistically, then the figure is also similarly shaded, but then that is the look the artist wanted.

In the image above of the 3D ball is painted with a pencil style such as seen here, then the 3D model is shaded with the same logic and looks like the image on the far right.

Adobe MAX demo:

Based on the SIGGRAPH 2016 work, there was a demonstration at Adobe MAX 2016 and a download version released of the 2016 approach. In that demo people were invited to paint or draw over a printed stencil and see their unique style to be transferred, in real-time, onto a variety of 3D models. In addition to live video input, people could also use Screen Captured material. In this way people could display the stencil on the screen and modify it using tools such as Adobe Photoshop.

It is really important to note that the input same image can’t be anything, it has to be this sample ball, since the normals over the ball can be estimated easily for such a standard and simple shape. The process completely relies on the input being a Ball.

2017

Jump to 2017 and the team realised that while the previous version required a 3D model to apply the shading ‘logic’ or style to it, there is one special class of objects that are universally similar in shape and thus did not need an explicit 3D model…human faces. All faces can be assumed for this work to have two eyes, a nose, a mouth, etc. While the orientation and specific details are always different, the basic shape of most faces is the same. Once the team could interpret the specifics of the particular inputs, the same approach could be made. But it only works in this case if the input painterly reference is of a face and the target video is also of a face.

It is fundamentally important that both the source and target are faces. Only then can the computer know the nature of the input and map that to the output. This is very different to the 2016 paper, as here the guiding channels of how to transfer the ‘look’ are from something that is readily available in the source, compared to a special case ‘sphere’ example.

In a very crude sense, the computer lifts how foreheads, for example, are drawn, given they are fairly flat and vertical, and it then applies that to the forehead of the target face. But really the program does nothing this crude or heavy handed. It actually uses two pieces of technology users already access daily in Photoshop – the Clone repair filter and the content aware fill function.

There are several guiding rules for moving textures from one face to the other. The faces are segmented based on obvious features such as eyes and mouths etc. So, the regions near the top of frame transfer to other regions that are roughly in the top of frame. Shading and ‘appearance’ means that darker things texture other darker things, as you can see in the image below there is also a warp that approximately aligns one face’s regions to the other. But this all just sets up the right direction for the synthesis. The impressive painterly effect comes from the core Photoshop technologies.

Where the texture is sourced from comes from the same technology as the repair clone tool in Photoshop, which looks for similar tonal sources when touching up a picture. Instead of looking around a person’s cheek for some matching texture in the same picture to hide a pimple – the technology matches a similar appropriate patch from the other source reference image. Once a region is matched or guided to be an area for a matching texture, the actual texture is applied using the content aware fill technology. This avoids having to bend patches of texture and thus softening the final outcome. “The Synthesised image is made up solely of pieces of the original source image, cut up and rearranged and then overlaid, so it always preserves the strokes of the original as it is only made up of strokes from the original.”

As a result the 2017 paper produces much better and more faithful patches than nearly all other synthesised approaches.

To get the feeling that the face is painted and not painted on, one must move beyond an optical flow approach of bending the source to match the new face’s movements.

Temporal jitter, or noise control

While the segmentation, position and appearance all relate to the guided transfer function, the last stage of temporal control is very different. The input is still being mapped to multiple frames of the moving target. Normally researchers are worried about this boiling or popping but in this research the opposite was true. “The temporal cohesion is different, normally when we do algorithms in video converted from stills, one is really worried about making it temporally coherent,” explains Simons, so the new clip is smooth and flows naturally. But in this painterly world, that is not what is wanted. “For this paper it is the opposite, if we make it too temporally coherent it looks wrong, it looks someone has painted the texture on the person’s facial skin,” he adds. “She is a regular person with paint on – and it looks creepy and not what we want.”

Adobe’s approach introduces temporal incoherence, it can be dialed in up to a point that it becomes distracting. “It is a subtle difference but this provides one of the first times that you add incoherence,” he comments, going on to say that, “Colour me Noisy was one of the first papers that address some of the same issues,” referring to a 2014 Eurographics paper: Color Me Noisy: Example-based Rendering of Hand-colored Animations with Temporal Noise Control by many of the same team members as this 2017 research paper. This approach started the approach of using the ‘PatchMatch‘ algorithm which is based on the content aware fill approach.

PatchMatch was developed as an interactive image editing tool and uses a randomized algorithm for quickly finding approximate nearest neighbor matches between image patches. Previous research in graphics and vision have leveraged such nearest-neighbor searches to provide a variety of high-level digital image editing tools. However, the cost of computing a field of such matches for an entire image has been vastly too expensive. Adobe’s PatchMatch algorithm provided substantial performance improvements and enables it’s use in Photoshop’s editing tools. The key trick to their approach was that some good patch matches can be found via random sampling, and that natural coherence in the imagery allows the code to propagate such matches quickly to surrounding areas. This one simple algorithm formed the basis for a variety of tools – image retargeting, completion and reshuffling. PatchMatch was then generalized and used for a variety of other computer vision applications like image denoising, object detection, label transfer and more. But PatchMatch is most known for it’s part in the breakout success of the Content Aware Fill feature in Photoshop CS5. In this 2017 paper the PatchMatch is done through the guiding channels, linking the source with the target. “It is saying in this research not just I want it to look like this other part of the image, in the image domain, but it is also being guided by say gathering patches at the nose, and make the new nose – with nose-like pieces from the source image, due to the guiding channels,” explains Simons. “This works in part because an actual artist will use different brush strokes for the eyes compared to the strokes they would use for some other part of the face.. so this ends up with an interpretation that is much truer.”

Additional control.

For this paper, all the controls were kept with a similar weighting, but each of the stages can be adjusted and weighted differently. This provides room for additional artist control which will produce more or less fine detail, a more temporally consistent or inconsistent look and even a more painted on rather than 2D painting look.

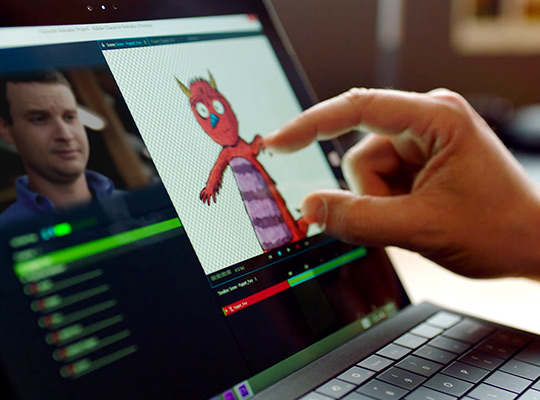

One more thing…Adobe’s Character Animator

While technically this may seem like a logical progression from previous work, in reality the path to this paper was not so obvious. This specific line of research came from the Adobe Character Animator project that allows people to real time puppeteer 2D cartoons. The team wanted to see how they could puppet stylised character animation rigs. “As part of that we tried some approaches on Video and it worked surprisingly well, except for the temporal cohesion aspect, and that was when we added that in. The initial target was that this work might go into Character Animator but now it is much more likely to be something like After Effects or Premiere, and that was not predicted,” explains Simons. The company does not pre-announce any major product enhancements so at SIGGRAPH this was just a technology demonstration, but Adobe must be motivated to get it into the products after such a brilliant reception at SIGGRAPH in LA.

While technically this may seem like a logical progression from previous work, in reality the path to this paper was not so obvious. This specific line of research came from the Adobe Character Animator project that allows people to real time puppeteer 2D cartoons. The team wanted to see how they could puppet stylised character animation rigs. “As part of that we tried some approaches on Video and it worked surprisingly well, except for the temporal cohesion aspect, and that was when we added that in. The initial target was that this work might go into Character Animator but now it is much more likely to be something like After Effects or Premiere, and that was not predicted,” explains Simons. The company does not pre-announce any major product enhancements so at SIGGRAPH this was just a technology demonstration, but Adobe must be motivated to get it into the products after such a brilliant reception at SIGGRAPH in LA.

While the technology can not be benchmarked in such a non-product environment, the current SIGGRAPH paper is running at a few seconds a frame on video clips. One of the main computational tasks is the guiding optical flow step to produce the guiding channel, so the process is fairly compute intensive. The test images for the paper on the CPU were implemented using C++ and CUDA. On a 3 GHz quad-core CPU it took around 30 seconds to compute just all necessary guiding channels for a one-megapixel frame. At SIGGRAPH there was a GPU solution which took a one-megapixel frame from 3 minutes on the CPU to just 5 seconds on the GPU (running on a GeForce GTX 970).

The good news is that now Dr Jakub Fišer started work at Adobe the week before SIGGRAPH full time.

FootNote :

Dave Simons, is the Senior Principal Scientist at Adobe Research, but he was originally one of the co-founders of Company of Science and Art (CoSA), which created After Effects.

AE was originally created by CoSA in Providence, Rhode Island, and first released in January 1993. CoSA along with After Effects was then acquired by Aldus corporation in July 1993, which in turn was acquired by Adobe.

It would be nice to see this newest research end up back in AE, perhaps next year when AE turns 25 !

fantastic article. The last part about Cosa (egg ) was a real Gem.

25 years next year !!! My god.

Cheers,

b