Destruction pipelines are now a key part of any major visual effects pipeline. Many current pipelines are based on Rigid Body Simulation (RBS), also referred to as Rigid Body Dynamics (RBD), but a new solution – Finite Element Analysis (FEA) – is beginning to emerge. In this ‘Art Of’ article, we talk to some of the major visual effects studios – ILM, Imageworks, MPC, Double Negative and Framestore – about how they approach their destruction toolsets.

In VFX and CGI, RBS is most often relevant to the subdivision of objects due to collision or destruction. Unlike particles, which move only in 3D space and can be defined by a vector, rigid bodies occupy space and have geometrical properties such as a center of mass and moments of inertia. Most importantly, they have six degrees of freedom (translation along all three axes plus rotation about all three axes). Rigid bodies are also, importantly, defined as being non-deformable, as opposed to soft bodies, flesh sims or deformable bodies. The one major exception to RBS is Finite Element Analysis (FEA). This is used by at least one major effects house – MPC – but until recently it has been prohibitively expensive for all but real world engineering simulations in industries such as power plants and pipeline testing.

The ‘explosion’ in destruction tools

While the film 2012 was not an exceptional critical success, there is no doubt that the massive work done for it was a turning point for the industry. Until that time nothing had been seen on that scale. Many companies such as Double Negative and Uncharted Territory worked on that film, but Digital Domain’s work received a huge amount of attention. Not only were the scale and extent of the building destruction sequences impressive on screen, but they were achieved in an incredible timeframe for what was required. The Digital Domain work relied on Bullet and it is safe to say that the film really accelerated the importance and industry acceptance of Bullet as a primary physics engine. 2012 is a watershed in large scale visual effects destruction sequences, and the importance of Bullet and of the work by effects companies like Digital Domain perhaps eclipses that of the film itself, although the film was very commercially successful for director Roland Emmerich. The sequence is still often referenced. For example, the LA destruction sequence from the movie (the first half was created by Uncharted Territory, the second half by Digital Domain) was named as #6 in 3D World Magazine’s top 25 greatest CG VFX sequences of all time.

Read our coverage of Digital Domain’s visual effects for 2012 here.

For the sequence, Digital Domain created crumbling buildings, highways and ravines and, ultimately, huge land masses disappearing into the ocean. The general chaos and art directed destruction brought upon LA necessitated Digital Domain expanding their already impressive RBS pipeline. “We knew from the start,” recalled Mohen Leo, then the Digital Domain visual effects supervisor, “that the biggest challenge would be the effects simulations and that there would be enormous rigid body simulations to do. We looked at the current state of off-the-shelf solutions for that, but none of them were really up to it. Internally, some people were interested in basing a new system on an open source project called Bullet. Bullet is very simple, but it’s fast, stable and, because it’s open source, you can build on top of it.”

And since 2012, released in 2009, visual effects studios, academic institutions and open source projects have continued to contribute to the wide-ranging field of destruction tools – the results of which have appeared in films like Inception, Prince of Persia, X-Men: First Class, Deathly Hallows Parts 1 and 2 and many others.

Basic approach to blowing crap up

This is how, at a basic level, destruction tools might fit into a typical visual effects pipeline:

1. Mesh preparation, pre-shattering or fracturing and rigid body creation – this is preparing the geometry to break and shatter.

2. Constraints and choreography – this stage allows for various ‘glues’ and constraints that will influence the simulation. For example, painting a map that will make one wall stronger than another.

3. Simulation ending in baking in the geo and integrating this into a render pipeline – running of a simulation and then baking that into something with real geometry that can be handled by a renderer. As part of the simulation the system needs to solve intersection of various bits of ‘crap’ – this is called collision detection.

Types of destruction pipelines

At the high end, destruction pipelines themselves fall into three main categories. In this article we will examine each in turn below:

1. RBS approaches – Sometimes custom built around existing, widely available physics libraries such as Bullet, PhysX or ODE. Of these, Bullet is now perhaps the most popular. Companies such as SPI, Framestore, Weta and Dneg successfully use advanced RBS approaches. This category also extends to Houdini and other commercial software using physics engines. For example, Houdini v12 has the Bullet solver tightly integrated into Houdini’s dynamics along with Houdini’s own native RBD engine.

2. RBS using specialist physics libraries such as PhysBAM from Stanford University – ie. PIXAR and Disney, but most significantly by Industrial Light & Magic who have done massively complex work with PhysBAM.

3. Destruction using Finite Element Analysis (FEA) – a traditional massive real world engineering approach now being used extremely effectively by MPC at feature film level, but brought to the games and VFX industries by Pixelux.

There is also a range of standalone commercial solutions and plugins for destruction work. If someone is planning to use an off the shelf solution today then it is likely to be Houdini, by far the preferred choice for most companies, even those developing in-house code frameworks. Houdini’s procedural power is key to its success. It should be noted that other packages and options also exist for Autodesk products – in particular there are some exciting things such as the DMM engine from Pixelux for Maya/3ds Max, or Exocortex Momentum for XSI – as well as the Caronte Body Dynamics Solver from RealFlow, which is a rigid and soft body solver.

Below we have tried to explain the general pipeline approach that is most common, that of object pre-fracturing, combined with constraints run through a physics engine, solving the key problem of collision detection and then converted to renderable geometry. Normally rendering per se is not a factor in destruction pipelines as the simulation is done, solved and then passed to a renderer such as RenderMan, V-Ray or Arnold for final rendering, but all the RBS ‘smarts’ are upstream of the render implementation.

Moving beyond RBS/FEA we also discuss destruction sims and their relationship to secondary fluid sims and particle approaches and areas of expansion in the ever-evolving area of VFX and CGI.

Note: we are not aiming to cover real time solutions for games, but this is a vastly expanding area receiving a lot of research attention and commercial success. For example, the popular game Angry Birds is using the open source Box2D rigid body engine to simulate the destruction of the structures.

1. The ‘Standard’ RBS approach

Several of the top visual effects studios and game developers are now adopting a workflow built around the Bullet open source physics engine at its core for collision detection and rigid body dynamics work. fxguide spoke to Bullet’s main author Erwin Coumans about the current implementation of the physics engine earlier this year (Coumans has been Principal Physics Engineer at AMD since November 2010).

Bullet has certainly been used in some recent high profile films by major visual effects studios, who, says Coumans, often customize the code with their own in-house work and combine it with pre-fracturing tools. The physics engine was adapted by Digital Domain for the studio’s Los Angeles destruction effects in 2012, and by Framestore in its in-house fBounce tool for Sherlock Holmes. Weta Digital also integrated Bullet into its proprietary wmRigid software for rigid body simulation on The A-Team. Software companies, too, have now integrated Bullet into their applications. Cinema 4D uses it in MoDynamics, and Houdini, 3ds Max, Carrara, Blender and Maya also make use of the engine. Bullet 2.79 is the current release.

Below is a shot from this year’s SIGGRAPH 2011 by Michael Baker using Dynamica, which was developed by Nicola Candussi for Disney Animation studios, used in films like Bolt and donated to the Bullet Physics project by Disney.

This video was specially edited for fxguide and was originally rendered for Siggraph 2011: Dynamics and Destruction for Film and Game Production: Dynamica. Dynamica was originally developed as a Maya-only plugin. Disney has since switched over to Houdini for their FX pipelines. The original Dynamica toolset was also built specifically for simulating packing peanuts in the animated feature Bolt, so Disney donated Dynamica to the Bullet community. Each block above was voronoi pre-shattered, the explosions were controlled with intersecting passives. Particles were added after the simulation and the fractured shapes were re-proxied for fluid collisions. Michael Baker is a lecturer at The Art Institute of Las Vegas and developer with the Bullet Physics project.

Prior to Bullet, the open source ODE (Open Dynamics Engine) was popular, but the major facilities have moved on from ODE to Bullet and, even more recently, to Bullet accessed from inside Houdini.

A case in point is Double Negative in London: Dneg started with their Dynamite tool, built around ODE and Maya, and are now just rolling out Bang which is Bullet inside Houdini. We talked about Dneg’s tools with senior software developer Peter Kyme and Nicola Hoyle, a senior destruction pipeline artist. “For Harry Potter and the Order of the Phoenix, Dynamite, Dneg’s original RBS tool, was used for the sims of racks smashing in the Hall of Prophecies,” says Hoyle. “Obviously getting it to sim all those glass balls falling down and smashing really tested what Dynamite could do. Peter Kyme and the software team got the software running very well simulating 20,000 falling glass ball pieces in 5 minutes.” Hoyle adds that the team also used call-backs that allowed the software to switch – on collision with the ground – the falling spheres to an already run simulation of a smashing sphere, thus saving large amounts of time.

Dynamite was also used on The Dark Knight for the helicopter crash. Dynamite is a very fast Maya ODE solver. But by Inception things were starting to change. The team had an in house solver written during Prince of Persia, based on Bullet. This was used for the “buildings collapsing into the sea” scenes in Inception and even though the traditional Dneg solver was still also in use, the pipeline had already started migrating to Houdini. For example, Houdini was used for the Paris cafe sequence. According to Hoyle, Dneg’s new Bang tool promises to get all the power and speed of the Dynamite tools but in a new Houdini setup.

Peter Kyme believes the primary things in destruction tools that are required by artists, in order, are:

1. Stability

2. Speed

3. Flexibility

Nicola Hoyle agrees that stability is key: “Then we know we can always run our sims efficiently, if they fall over or have memory issues then we are never going to get any results,” she explains. “Speed comes next because what we are producing we want to happen quickly, and then flexibility so we can get whatever it is that our client wants, and have the flexibility to drive the simulation in whatever direction we need to go in. Pete Kyme is very good at writing in anything we need into the code to get the RBS to do what we need.”

Peter Kyme adds, “Assuming you have a stable RBS, then the most important thing is performance, particularly today with these insane levels of destruction where you have potentially hundreds of thousands of rigid bodies in a sim, and sims that take 10 hours to solve. Then the iteration time becomes the limiting factor on how quickly you can dial in to the effect you are looking for and that the director is looking for.”

Some readers may note that realism is not in that list, a point we put to Hoyle who responded by saying, “The code starts with physics, but there is so much chaos in nature that we’ll never get out of a computer simulation, without spending a lot more time writing code. And a lot of the time we have to tweak it to adjust weights, or other aspects and a lot of that is artist-driven.” So Hoyle and her team do not expect everything out of the code, but they do expect to be able to reliably control and adjust a stable simulation to get what the director wants.

Before working at Dneg, Nicola Hoyle was doing her PhD in computational engineering and got into VFX from writing code (Python and C++) for sequences in Harry Potter. Now she walks a fine line between writing code and doing shots, including on set supervision. But her artistic work does not mean that she has moved away from needing to code. “Each film has a different challenge and we need to manipulate our solvers, whether it is a stylized look we want or to add in more realism to our simulations. So we find we write a fair few tools in the main build stage of a show and then we run those through to our shot pipeline where they can be used on the next film too.”

The 3 stages of RBS

Stage 1. Breaking up RBS objects, normally in one of 4 ways

Stage 2. Setting the constraints and designing the choreography

Stage 3. Running the sim and solving collision detection

Stage 1: Breaking up RBS objects

The first stage of RBS is preparing the geometry, in other words, working out how to break the geometry into ‘bits’. Erwin Coumans outlined four methods for breaking up a model at SIGGRAPH in Vancouver, Canada this year:

A. Voronoi diagrams

B. CSG: cutting geometry using Boolean operations

C. Convex decomposition, this can be performed by artists by hand, or using automatic tools

D. Tetrahedralization, which can also be done by converting the 3D model into tetras.

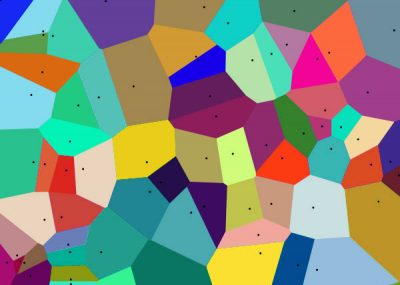

A. Voronoi Diagrams (mainly used in RBS)

A voronoi diagram is a mathematical concept used widely to produce natural looking fractured shapes. The principle is fairly simple and the results look highly natural. Between each pair of neighboring dots, a line is drawn that is equidistant from both and at right angles to the line joining them; together these lines divide space into cells, one per dot. The initial dots (or particles) can be generated in many ways, including randomly.

One is effectively simplifying an object to a point cloud and then producing a shattered set of polygonal sub geometry. This concept is at the core of much RBS / rigid body dynamic procedural shattering.
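As a rough illustration of the nearest-seed idea, the sketch below builds a discrete voronoi diagram on a 2D grid rather than on a real mesh. The function name `voronoi_labels`, the grid resolution and the seed count are all assumptions made for the example, not part of any studio tool.

```python
import random

def voronoi_labels(seeds, resolution):
    """Label each cell of a resolution x resolution grid over the unit square
    with the index of its nearest seed point (a discrete voronoi diagram)."""
    labels = []
    for j in range(resolution):
        row = []
        for i in range(resolution):
            # Sample at the centre of the grid cell.
            x = (i + 0.5) / resolution
            y = (j + 0.5) / resolution
            nearest = min(
                range(len(seeds)),
                key=lambda k: (seeds[k][0] - x) ** 2 + (seeds[k][1] - y) ** 2,
            )
            row.append(nearest)
        labels.append(row)
    return labels

rng = random.Random(7)  # fixed seed so the fracture pattern is repeatable
seeds = [(rng.random(), rng.random()) for _ in range(5)]
labels = voronoi_labels(seeds, 32)

# Group grid cells by nearest seed: each group stands in for one fragment.
fragments = {}
for j, row in enumerate(labels):
    for i, k in enumerate(row):
        fragments.setdefault(k, []).append((i, j))
```

Changing how the seeds are scattered (denser near an impact point, for instance) changes the size and distribution of the fragments without touching the labelling code.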

B. CSG

This is a technique that has been around for many years. It uses the simple adding, subtracting and XORing of very simple shapes. These maths operations are called Boolean operations, and the whole approach is known as constructive solid geometry or CSG. It can perform volumetric operations between 3D models, building several simple geometric shapes or objects up into much more complex and natural looking subdivided shapes. You can add two volumes together, compute the difference between two objects, or take the intersection of them. These operations allow you to break original 3D models into smaller pieces, similar to a cookie cutter.
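The Boolean operations can be tried out on a toy representation. The sketch below assumes solids are stored as sets of integer voxel coordinates, which is a simplification chosen for brevity; production CSG tools operate on surface meshes. The helper `box` and the wall and cutter dimensions are hypothetical.

```python
def box(x0, y0, z0, x1, y1, z1):
    """Solid axis-aligned box as a set of integer voxel coordinates."""
    return {
        (x, y, z)
        for x in range(x0, x1)
        for y in range(y0, y1)
        for z in range(z0, z1)
    }

wall = box(0, 0, 0, 8, 8, 2)      # the object to be broken
cutter = box(3, 3, 0, 6, 6, 2)    # the "cookie cutter" volume

union = wall | cutter             # add two volumes together
chunk = wall & cutter             # intersection: the piece punched out
remainder = wall - cutter         # difference: the wall with a hole in it
either = wall ^ cutter            # XOR: voxels in exactly one of the two
```

Here `chunk` and `remainder` together make up the original wall, which is the property a pre-fracturing tool relies on when cutting a model into pieces.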

C. Convex Decomposition (mainly used for collision detection)

One can also break a concave triangle mesh into convex pieces using convex decomposition. The word convex means curving out or bulging outward, as opposed to concave, which bends in (think cave shape). An artist can create a convex decomposition manually, using simple convex primitives such as boxes, spheres and capsules. It is also possible to create a convex decomposition automatically.

D. Tetrahedralization (used for FEA below)

A mesh can also be decomposed into tetrahedral elements using Delaunay triangulation. There are some open source implementations available, including Netgen and Tetgen.

The Maya 2012 DMM Digital Molecular Matter plugin by Pixelux uses Netgen internally to perform a tetrahedralization.

Stage 2: Setting the constraints and designing the choreography

After the geometry is prepared and fractured into pre-broken pieces, the pieces need to be constrained together. An RBS requires connections between the various pieces or they would immediately move apart in any simulation just based on gravity. There are several ways to do this. One way is to define connections between every piece and every other piece. This gives the most control, but the performance can be slow due to the many connections.

For example, if a building is being modeled for destruction at Dneg, Dn-shatter could be used to break up the geometry. This is a voronoi-based shatter tool, and one of the things one naturally gets out of such a process is adjacency of the sub-geometry, so it is easy to have some procedural constraints on the pre-fractured building.
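To get a feel for why all-pairs connections become slow, the sketch below counts constraints for a small wall under both schemes. It assumes the pieces sit on a regular grid and treats face-sharing neighbours as a stand-in for the adjacency a voronoi shatter would provide; the function names are illustrative only.

```python
from itertools import combinations

def all_pairs(pieces):
    """Connect every piece to every other piece: n * (n - 1) / 2 constraints."""
    return list(combinations(pieces, 2))

def adjacent_pairs(pieces):
    """Connect only pieces that share a face on the grid."""
    present = set(pieces)
    pairs = []
    for (x, y, z) in pieces:
        # Look only in the positive directions so each pair is listed once.
        for dx, dy, dz in ((1, 0, 0), (0, 1, 0), (0, 0, 1)):
            neighbour = (x + dx, y + dy, z + dz)
            if neighbour in present:
                pairs.append(((x, y, z), neighbour))
    return pairs

# A wall of 10 x 10 x 2 pre-fractured pieces.
pieces = [(x, y, z) for x in range(10) for y in range(10) for z in range(2)]
dense = all_pairs(pieces)        # 19900 constraints
sparse = adjacent_pairs(pieces)  # 460 constraints
```

The all-pairs count grows with the square of the number of pieces, while the adjacency-based count grows roughly linearly, which is why adjacency information from the shatter step is so useful.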

Now if the artist wants to control which side of the building gives way, they can ‘paint’ on constraints. This is “part of the RBS set up process,” says Dneg’s Peter Kyme. “It is directing that simulation basically and there are various tools to do that, today much is being done in Houdini, because of its procedural tools, as it is very easy to, say some metaball in this area which we can manually paint, we want to weaken all the constraints on that side of this object, at this frame, and that is part of the RBS.”

Another way is to compute connections automatically based on collision detection: compute the contact points between touching pieces, and only create connections where there is a contact point. Then you can create a breaking threshold for those connections. Once we have glued the pieces into a single rigid body, we can perform the run-time fracture. If there is a collision impact, we compute the impulse. If this impulse is larger than a chosen threshold, we propagate this impulse through the connections. Those connections can be weakened or broken.
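The thresholding step, together with the regrouping of pieces that has to follow it, can be sketched as below. This is a simplified illustration: the per-connection impulses are simply given as input rather than propagated through the structure, and the names `break_connections` and `islands` are hypothetical.

```python
def break_connections(connections, impulses, threshold):
    """Keep only connections whose transmitted impulse stays at or below the
    threshold. `connections` is a list of (piece_a, piece_b) pairs and
    `impulses` maps each pair to the impulse it carried during this step."""
    return [pair for pair in connections if impulses.get(pair, 0.0) <= threshold]

def islands(pieces, connections):
    """Group pieces into sets that are still glued together (connected components)."""
    neighbours = {p: set() for p in pieces}
    for a, b in connections:
        neighbours[a].add(b)
        neighbours[b].add(a)
    seen, groups = set(), []
    for start in pieces:
        if start in seen:
            continue
        group, stack = set(), [start]
        while stack:
            p = stack.pop()
            if p in seen:
                continue
            seen.add(p)
            group.add(p)
            stack.extend(neighbours[p] - seen)
        groups.append(group)
    return groups

pieces = ["A", "B", "C", "D"]
glue = [("A", "B"), ("B", "C"), ("C", "D")]
impulses = {("A", "B"): 2.0, ("B", "C"): 9.0, ("C", "D"): 1.0}
surviving = break_connections(glue, impulses, threshold=5.0)
bodies = islands(pieces, surviving)  # each group becomes its own rigid body
```

In this example only the B to C connection exceeds the threshold, so the single glued body splits into two new rigid bodies, one holding A and B and the other C and D.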

After this, one needs to determine the disconnected parts, and then create new rigid bodies for each separate part. There are some special and very relevant cases in destruction pipelines. A good example is radial fractures. Certain types of glass when cracked have a radial pattern not immediately provided by a voronoi diagram. This effect can be provided by mattes or break maps. Radial cracks are one of the more classic special cases to be solved. They have both cracks radiating from the impact point and circular cracks around the impact point. Dneg uses Dn-crack which is a procedural surface shatterer. It does not do a solid volumetric shatter like a voronoi fracturer, it does a poly-surface fracture.

It works by the user providing a set of rules, written in a scripting language, that describe how a crack might appear on the surface, how it might propagate to child cracks and how each of those would build. The underlying code then builds these cracks over the surface based on these rules, intersecting them and, importantly, terminating them at points where the cracks meet. The final step is that it divides all the geometry up into the resulting fractured bits. In fact, this was the first major piece of code that Peter Kyme wrote when he joined Dneg and one of the example pieces of scripting he demo’ed it with was radial fracturing. The TD can set this up and then the artist can be presented with a series of controllers or sliders to then control and refine the effect.

Stage 3: Running the sim and collision detection

The simulation relies on the physics engine and a key component of that is detecting objects bounding into, or smashing through, each other and the effects this has on the objects involved. The simplest form of collision detection is a bounding box. If all objects are contained inside simple boxes then it is relatively simple to check whether boxes overlap and thus detect a possible collision. The problem is that while this is very fast, a simple box is often much larger than the shape it contains, too large to produce a quality simulation. Objects clearly not that close to one’s eye would still seem to bounce off each other, as the boxes that fully contain them extend out in unnecessary ways.

Imagine the size of a box to contain a lamp post – it would be vastly wasteful and inaccurate if it was used to work out when a car might collide with the roadside lamp. By contrast, working out the exact collision between a car and a lamp post without any speed improvements would be excessively complex and time prohibitive, especially if the car was say a convertible and thus had concave geometry. Concave intersections are particularly hard.
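The lamp post case can be reproduced with a few lines of axis-aligned bounding box arithmetic. The dimensions below are made up for illustration, and the helper names `aabb`, `overlaps` and `volume` are not taken from any particular engine.

```python
def aabb(points):
    """Axis-aligned bounding box of a point set: (min corner, max corner)."""
    xs, ys, zs = zip(*points)
    return (min(xs), min(ys), min(zs)), (max(xs), max(ys), max(zs))

def overlaps(a, b):
    """Two boxes overlap only if their extents overlap on every axis."""
    (amin, amax), (bmin, bmax) = a, b
    return all(amin[i] <= bmax[i] and bmin[i] <= amax[i] for i in range(3))

def volume(box):
    lo, hi = box
    return (hi[0] - lo[0]) * (hi[1] - lo[1]) * (hi[2] - lo[2])

# A lamp post: a thin 5 m pole with a 2 m arm reaching over the road (metres).
pole = [(0.0, 0.0, 0.0), (0.2, 5.0, 0.2)]
arm = [(0.0, 4.8, 0.0), (2.0, 5.0, 0.2)]
lamp_box = aabb(pole + arm)

# A car sitting under the arm, well clear of the pole itself.
car_box = aabb([(1.0, 0.0, -1.0), (1.9, 1.5, 3.0)])

false_hit = overlaps(lamp_box, car_box)  # the single big box reports contact
true_hit = overlaps(aabb(pole), car_box) or overlaps(aabb(arm), car_box)
```

The single box around the whole lamp post reports a hit even though neither the pole nor the arm touches the car, because most of that box is empty air. Splitting the lamp post into two tighter boxes removes the false contact.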

One of the most popular solutions is to subdivide the master object into a set of smaller objects, but all individually convex. This is referred to as convex hull collision detection. “Bullet has robust convex hull collision detection, unlike the ODE,” says Erwin Coumans. “Convex hull collision detection is important when simulating fracturing objects.”

Coumans adds: “The two main stages in most collision detection pipelines are the broadphase and the narrow phase. The broadphase stage reduces the total number of potential interacting objects, based on bounding volume overlap. In Bullet there are various broadphase implementations for different purposes. The most general purpose broadphase implementation is based on dynamic bounding volume hierarchies: axis aligned bounding box trees that are incrementally updated when moving, adding or removing objects. On the other hand there is a GPU accelerated broadphase through OpenCL, but this has certain restrictions on the object sizes.”
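A broadphase can be illustrated with a much simpler scheme than the dynamic bounding volume hierarchy Coumans describes. The sketch below uses a sort-and-sweep along the x axis as a stand-in; it is not Bullet's implementation, and `broadphase_pairs` is a hypothetical name.

```python
def broadphase_pairs(boxes):
    """Return index pairs whose axis-aligned boxes overlap.
    `boxes` is a list of ((minx, miny, minz), (maxx, maxy, maxz))."""
    order = sorted(range(len(boxes)), key=lambda i: boxes[i][0][0])
    active, pairs = [], []
    for i in order:
        lo_i, hi_i = boxes[i]
        # Drop boxes that end before this one starts on the x axis.
        active = [j for j in active if boxes[j][1][0] >= lo_i[0]]
        for j in active:
            lo_j, hi_j = boxes[j]
            # x overlap is guaranteed here; check the remaining two axes.
            if all(lo_i[a] <= hi_j[a] and lo_j[a] <= hi_i[a] for a in (1, 2)):
                pairs.append((min(i, j), max(i, j)))
        active.append(i)
    return sorted(pairs)

boxes = [
    ((0.0, 0.0, 0.0), (1.0, 1.0, 1.0)),
    ((0.5, 0.5, 0.5), (1.5, 1.5, 1.5)),  # overlaps box 0
    ((5.0, 0.0, 0.0), (6.0, 1.0, 1.0)),  # far away on x
    ((0.8, 3.0, 0.0), (1.2, 4.0, 1.0)),  # overlaps on x only
]
pairs = broadphase_pairs(boxes)
```

Only the pairs returned here would be handed on to the more expensive narrowphase, which is the whole point of the broadphase stage.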

The narrowphase stage deals with precise contact point generation between pairs of colliding objects. Bullet uses a few generic algorithms, such as GJK (Gilbert–Johnson–Keerthi) for fast distance computation between two convex shapes, and deals with a wide range of collision shape types. There is support for continuous collision detection that computes the time of impact between moving and rotating objects, but by default Bullet computes the closest distance and penetration depth at a discrete point in time, to generate contact point information or solve collision detection.
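The difference between discrete and continuous detection shows up with fast, small fragments. The sketch below assumes two spheres moving in straight lines with no rotation, which is far simpler than the general convex case Bullet handles; the function names and the numbers are illustrative.

```python
import math

def discrete_hit(p_a, p_b, radius_sum):
    """Discrete test: are two spheres overlapping at this instant?"""
    return math.dist(p_a, p_b) <= radius_sum

def time_of_impact(p_a, v_a, p_b, v_b, radius_sum, dt):
    """Continuous test: earliest t in [0, dt] at which two moving spheres touch,
    or None. Solves |(p_b - p_a) + (v_b - v_a) t| = radius_sum for t."""
    rel_p = [pb - pa for pa, pb in zip(p_a, p_b)]
    rel_v = [vb - va for va, vb in zip(v_a, v_b)]
    a = sum(v * v for v in rel_v)
    b = 2.0 * sum(p * v for p, v in zip(rel_p, rel_v))
    c = sum(p * p for p in rel_p) - radius_sum ** 2
    if c <= 0.0:
        return 0.0   # already touching at the start of the step
    if a == 0.0:
        return None  # no relative motion
    disc = b * b - 4.0 * a * c
    if disc < 0.0:
        return None  # paths never come close enough
    t = (-b - math.sqrt(disc)) / (2.0 * a)
    return t if 0.0 <= t <= dt else None

dt = 1.0 / 24.0                        # one film frame
start, velocity = (0.0, 0.0, 0.0), (240.0, 0.0, 0.0)
target = (5.0, 0.0, 0.0)               # a static sphere in the fragment's path
end = tuple(p + v * dt for p, v in zip(start, velocity))

hit_at_start = discrete_hit(start, target, 0.1)
hit_at_end = discrete_hit(end, target, 0.1)
toi = time_of_impact(start, velocity, target, (0.0, 0.0, 0.0), 0.1, dt)
```

Sampling only at the start and end of the frame misses the contact entirely, because the fragment has passed straight through the target in between. The time-of-impact query reports the contact roughly halfway through the step.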

Dneg’s Dynamite, for example, is a hybrid. The underlying technology is ODE. “We evaluated many engines and we looked at ODE and thought we could make a really good tool out of this,” says Kyme, “and over time I folded in stuff from Bullet such as convex hull collision detection which ODE doesn’t have. For example, Bullet has a very good convex hull collision detection engine for doing narrow phase collision detection with convex hulls which is obviously a big deal, or otherwise you are just stuck with boxes and spheres and capsules, which is good but can only get you so far. But having a tri-mesh based collision detection is a big win.”

Houdini, by way of contrast or amplification, uses a number of different collision detection techniques depending on the type of geometry (volumes or thin plates) and the conditions encountered in the physical system. “Our primary collision detection solution makes use of Signed Distance Fields (SDF), commonly used in Level Set Methods (LSM) to represent volumetric data,” notes Cristin Barghiel, Director of Product Development at Side Effects. “For dynamic fracturing, Houdini uses a unique take on voronoi partitioning that allows for fast iterations, concavities, and unlimited art direction. Our voronoi fracturing works transparently – and equally well – with our native RBD and Houdini 12’s newly integrated Bullet engine.”

So good is Houdini’s Bullet integration that Dneg’s Peter Kyme is moving to a new solution for Dneg called Bang which uses Houdini and Bullet as its basis, thus completing the transition from ODE and bounding box only solutions. “In terms of collision detection I always encourage artists to optimize their sims,” says Kyme. “A lot of times an artist will take the most complex, narrow phase collision detection collider available, which could be the actual tri-mesh of the thing they are simulating and I always explain that that is much less stable, ten times slower, and very, very unlikely that you will need that level of detail.”

The next step back from an actual tri-mesh is the convex hull, which is effective and much faster than an actual tri-mesh solution. “A convex hull is basically a mesh that surrounds an object whereby all the concave bits are removed,” says Kyme. “So it is a convex shape, because with collision colliding with a concave object is an infinitely harder problem than colliding with a convex object, so you create this convex hull around the object and it is actually very efficient and very stable – so 99% of the time that’s what you are going to want.”
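What “removing the concave bits” means can be shown in two dimensions. The sketch below uses Andrew's monotone chain algorithm on a 2D outline for brevity, whereas production tools build hulls around 3D meshes; the L-shaped test outline is made up.

```python
def cross(o, a, b):
    """z component of (a - o) x (b - o); positive for a counter-clockwise turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

def convex_hull(points):
    """Andrew's monotone chain: hull vertices in counter-clockwise order."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts
    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    # The last point of each chain is the first point of the other.
    return lower[:-1] + upper[:-1]

# An L-shaped (concave) outline; the inner corner at (1, 1) is the concave bit.
l_shape = [(0, 0), (2, 0), (2, 1), (1, 1), (1, 2), (0, 2)]
hull = convex_hull(l_shape)
```

The hull keeps the five outer corners and drops the inner corner at (1, 1), so the collider is slightly larger than the true shape but is convex and therefore much cheaper to test.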

“If an artist wants to do a hero object that is very concave,” continues Kyme, “the approach is to divide the shape up into multiple shapes and then glue them together into one combined rigid body and we have tools that do that process which is called convex decompositing. So you might take a lamp and decomposition it to say three objects, and that will be enough, as you can hide a lot with motion blur and other objects, particles etc.”

Finally, the simplest solution is back to a bounding box, something that can still be useful. “If you are running a 100,000 collisions, every bit counts, so then you could do just a primitive bounding box shape,” notes Kyme. “In the simplest form a sphere is the fastest, then a box then a capsule.” As mentioned above, Houdini also contains level set collisions but Kyme feels that they are not normally as fast as a convex hull collider. “Level sets are nice for working out if you are inside an object or not, but from raw performance it is not normally as fast, which is what you have to shoot for, actually it is not even just about time it is also about scale – if you can have 10 times faster you can have 10 times more objects, which means 10 times exciting sims!”

Framestore uses a range of solutions for collision detection, from very accurate near full geo for things very close to camera to most commonly convex hull, but doesn’t use level sets methods. Interestingly, however, Framestore does use level sets elsewhere. “It tends to get used in our hair simulator but not a lot in our rigid bodies – one sort of assumes you have greater scope and more room for inaccuracies in rigid bodies – you end up covering everything in smoke anyway,” jokes Framestore’s head of research Martin Preston.

Interestingly, RBD is normally non-deformable, hence the name Rigid Body Simulation. While this is generally true, Houdini’s native RBD system is capable of handling deforming rigid bodies. If the user turns on ‘deforming geometry’, Houdini can recompute the signed distance field (SDF) for each frame and make sure that collisions and impact forces are computed correctly for all deforming shapes. In addition, Houdini’s cloth comes with extensive plastic, elastic and tearing properties which can be used for some simulation of deformable hard bodies.

One of the major visual effects companies with a pipeline built on Bullet is Sony Pictures Imageworks. We recently visited SPI and sat down with CTO Rob Bredow to discuss SPI’s general approach to destruction pipelines.

Other key RBS implementations

Softimage and Exocortex

Exocortex released Momentum 2.0, a high speed multiphysics simulator, for Autodesk Softimage 2010, 2011 and 2012. The Exocortex Momentum 2.0 relies on the Bullet physics framework, and provides robust support for rigid bodies, soft bodies, cloth, ropes, constraints, interactions and attachments.

Nvidia and PhysX

A very strong player in real time solutions in particular is Nvidia and their PhysX Engine. There are several very successful plugin software tools written and built on the PhysX engine. See this article at fxguide on Alex Roman’s work in the ‘Above Everything Else’ spot for more. The very successful and photoreal falling and smashing elements in the spot were created using NVIDIA’s PhysX and using RayFire and PFlow software.

2. RBS using specialist physics libraries (PhysBAM and ILM)

One of the strongest and most powerful physics engines is PhysBAM, from Stanford University, and the work of Ron Fedkiw and his team who have been focused on the design of new computational algorithms for a variety of applications including computational fluid dynamics and solid mechanics, computer graphics, computer vision and computational biomechanics. Fedkiw himself has published over 80 research papers in computational physics, as well as a book on level set methods. For the past ten years, he has been a consultant with ILM. He received screen credits for his work on Terminator 3: Rise of the Machines, Star Wars: Episode III – Revenge of the Sith, Poseidon and Evan Almighty.

One of the most impressive recent films ILM has done for destruction pipelines was Transformers: Dark of the Moon. It provided some of the most detailed and complex sequences ever seen, again raising the bar for destruction. We spoke to Brice Criswell, senior software engineer at Industrial Light & Magic, about ILM’s PhysBAM engine library developed with Stanford University. The now defunct ImageMovers also used PhysBAM, and Intel uses it for benchmarking. Disney and Pixar are also users, but it is really ILM that until now has been the primary heavyweight user of PhysBAM. But as of SIGGRAPH this year the “full Geometry library was released,” commented Jonathan Su, one of Fedkiw’s current PhD students who helps co-ordinate the PhysBAM libraries, “and the OpenGL/Tools/Ray Tracing were all released September 2010.”

At ILM, the PhysBAM approach is similar to the ‘standard RBS’ above but with some differences and of course a huge range of ILM custom tools and very advanced techniques, all of which have been extremely relevant to ILM’s roster of recent films, including the huge destruction sequences of the third Transformers film, which has some of the most advanced work to date in the industry. One important tool that ILM uses is level set methods, applied in a way that differs from, or extends, how level sets are used in, say, Houdini. According to Wikipedia, “a level set data structure is designed to represent discretely sampled dynamic level sets functions. A common use of this form of data structure is in efficient image rendering. The underlying method constructs a signed distance field that extends from the boundary, and can be used to solve the motion of the boundary in this field.”

“The term ‘LSV’ [level set value] – and even I get confused on this,” notes Criswell, “as we use it constantly internally – but perhaps different from the public use of the term and different from what people know about – it is really an implicit surface that is used for the intention of colliding objects inside the simulation. PhysBAM uses a level set data structure to perform its narrow phase collision resolution pass. The level set has a nice property of providing fast lookups for when querying the distance of a particle from a geometric surface. The level sets data structure used by PhysBAM will divide up the spatial domain of the geometry into box cells, and each cell stores its distance to the surface (phi). From the collection of nearby cells we can calculate a gradient field that produces the normal vector pointing towards the surface of the geometry. From the normal and phi functions on the level set, we can calculate the exact distance to the surface of the geometry.”
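The phi-and-gradient lookup Criswell describes can be mimicked on a tiny grid. The sketch below assumes the surface is an analytic sphere so that phi can be filled in directly; it does not reflect PhysBAM's actual data structures, and all function names are hypothetical.

```python
import math

def build_level_set(radius, n, half_width):
    """Sample the signed distance (phi) to a sphere at the nodes of an
    n x n x n grid spanning [-half_width, half_width] on each axis."""
    h = 2.0 * half_width / (n - 1)
    coords = [-half_width + i * h for i in range(n)]
    phi = [[[math.sqrt(x * x + y * y + z * z) - radius
             for z in coords] for y in coords] for x in coords]
    return phi, h

def gradient(phi, h, i, j, k):
    """Central-difference gradient of phi at interior node (i, j, k)."""
    return (
        (phi[i + 1][j][k] - phi[i - 1][j][k]) / (2.0 * h),
        (phi[i][j + 1][k] - phi[i][j - 1][k]) / (2.0 * h),
        (phi[i][j][k + 1] - phi[i][j][k - 1]) / (2.0 * h),
    )

def closest_surface_point(phi, h, half_width, i, j, k):
    """Step from node (i, j, k) along the normal by -phi to land on the surface.
    Not valid at the sphere centre, where the gradient vanishes."""
    g = gradient(phi, h, i, j, k)
    length = math.sqrt(sum(c * c for c in g))
    normal = tuple(c / length for c in g)
    p = (-half_width + i * h, -half_width + j * h, -half_width + k * h)
    d = phi[i][j][k]
    return tuple(pc - d * nc for pc, nc in zip(p, normal))

phi, h = build_level_set(radius=1.0, n=21, half_width=2.0)
outside = closest_surface_point(phi, h, 2.0, 18, 10, 10)  # node at x = 1.6
inside = closest_surface_point(phi, h, 2.0, 12, 10, 10)   # node at x = 0.4
```

The sign of phi says whether a node is inside (negative) or outside (positive) the object, and one step of length phi along the normal reaches the surface from either side. Both sample nodes land at x = 1 on the unit sphere.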

The same system works if you start on the outside of the object, although it is less reliable due to bounding boxes. Looking at these vectors and normals one can tell if you are inside or outside an object for collision detection. ILM then takes this further and uses level sets for fracturing. “One of the things you can do easily with level sets is walk the surface of an object,” says Criswell. “What I mean by that is – I have broken up all of space into a grid and at any point I can show ‘how close am I to the surface?’ and if I don’t know, then I can use the grid to get me directly to the surface.”

ILM has ‘convenience tools’ that allow the code to walk from grid point to grid point. In the case of a single level set, when say a particle enters the collision detection bounding box of a single modeled spaceship panel, it is easy to tell whether it is inside or outside the panel and push it away. The particle then bounces accurately off the panel itself and not off the much looser, bigger bounding box of that panel. “But in the case of fracture we are not using level sets the same way,” explains Criswell. “We are not representing a single object, we are representing a bunch or group of what we call the zero iso contours, which is the points where on the level set you are actually on the surface.”

The zero iso contours are very relevant to fracturing tools such as voronoi fracturing tools. Voronoi points are set up to fracture objects, but the voronoi seed point cloud normally knows nothing about the surface: it simply fractures space into a bunch of cookie cutters. ILM takes the voronoi points and then finds the points that actually lie on the surface of the model. The level sets take that fracturing to the next level by allowing the code to walk the exterior of, in this case, the spaceship while fracturing it. The key to ILM’s system is that it does not have to create geometry based on the level set; rather, it uses the level set directly to cut the high res geometry.
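To make the ‘cookie cutter’ idea concrete, here is a minimal sketch of a voronoi partition: every sample point is assigned to its nearest seed. The function names and the sample data are hypothetical, and this is plain nearest-seed bucketing, not ILM’s level set based cutting.

```python
def nearest_seed(point, seeds):
    """Index of the seed closest to `point` (squared Euclidean distance)."""
    return min(range(len(seeds)),
               key=lambda i: sum((p - s) ** 2 for p, s in zip(point, seeds[i])))


def voronoi_partition(points, seeds):
    """Group points into one bucket per seed: the 'cookie cutter' cells."""
    cells = {i: [] for i in range(len(seeds))}
    for pt in points:
        cells[nearest_seed(pt, seeds)].append(pt)
    return cells


# Hypothetical seeds and surface samples, for illustration only.
seeds = [(0.0, 0.0, 0.0), (4.0, 0.0, 0.0)]
surface_points = [(1.0, 0.0, 0.0), (3.0, 0.5, 0.0), (0.5, 1.0, 0.0), (3.5, -1.0, 0.0)]
cells = voronoi_partition(surface_points, seeds)
for seed_index, members in cells.items():
    print(seed_index, members)
```

Note that the seeds carve up space without any knowledge of the model; restricting the samples to points that lie on the surface is what ties the cells back to the object.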

Collision detection in Stanford’s PhysBAM simulation software is all based on level set approaches, “based on Eran Guendelman’s Nonconvex Rigid Bodies with Stacking paper from SIGGRAPH 2003,” explains Criswell. “It is a principle of how their system works, that their RBS requires that you have a volume in order for objects to collide properly, so they use the level set method to generate this volumetric data structure in the beginning of the sim, and during the sim they will use the particles off the surface of the rigid bodies to test them for depth off the level sets of the objects that they might be interacting with. It is as fast of an analytic look up as you could imagine, the level set has a really nice property that at any point in space I can tell you exactly how far away from the surface you are and so it makes a good collision look up model. But the downside is that it takes a long time to generate and the memory space of a level set increases cubically!”

http://player.vimeo.com/video/33569547

A video from Ron Fedkiw’s PhysBAM research: 20 walls are fractured by one sphere.

Luckily, the nature of level sets is that the resolution can be changed, so ILM can easily control the level of accuracy. “As you want something to become more complex you have to cubically increase the memory space of the level set,” says Criswell. So, like other methods, ILM’s artists and TDs need to make trade-offs between time and accuracy very carefully.
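The cubic growth is easy to see with a little arithmetic. The sketch below assumes a dense, uniform grid storing one 4-byte float per cell; real level set implementations may use sparse or adaptive storage, so treat the figures as an upper-bound illustration only.

```python
def level_set_bytes(cells_per_axis, bytes_per_cell=4):
    """Memory for a dense cubic grid: one stored distance value per cell."""
    return bytes_per_cell * cells_per_axis ** 3


for n in (128, 256, 512):
    print(n, "cells per axis:", level_set_bytes(n) / 2 ** 20, "MiB")
```

Each doubling of the resolution along an axis multiplies the memory by eight, which is why the resolution of a collision level set has to be chosen with care.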

ILM also has many other very refined tools, such as its soft body Fleshmesh tool, and PhysBAM has very impressive fluid simulations. The limits of the machines will always produce barriers, and Criswell says that one consequence is a layered simulation approach: if you want to simulate a million bodies, you will need to do it in multiple passes. An artist needs to decide what is most important, and which objects are key to interact with which others. This applies not only within one simulation but also across the pipeline, where it is important to work out which simulation to run first and which will affect the others. Will soft bodies drive RBS, which will drive fluid sims, or is there some more important order? And do any of these simulations need to loop back for secondary simulations?

3. Finite Element Analysis (FEA)

FEA is a more physically accurate approach to destruction, which uses the widely used finite element method to solve the partial differential equations that govern the dynamics of an elastic material. It is a more physically correct way of simulating deformation and fracture, and is based on continuum mechanics. A 3D object is approximated by a mesh made from a collection of elements, usually tetrahedra. The strains, stresses and stiffness matrix are used to compute the effect of forces and deformations.
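To give a feel for what ‘strain’ and ‘stress’ mean for a single tetrahedral element, here is a minimal sketch that compares a tet’s rest shape with its deformed shape. It uses the Green strain and a simple linear (St. Venant-Kirchhoff) material law as an illustrative choice; the Lamé parameters `lam` and `mu` and all function names are assumptions for this example, and it is not a description of any studio’s or vendor’s solver.

```python
def columns(c0, c1, c2):
    """3x3 matrix whose columns are the given vectors."""
    return [[c0[r], c1[r], c2[r]] for r in range(3)]


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)] for i in range(3)]


def transpose(a):
    return [[a[j][i] for j in range(3)] for i in range(3)]


def inverse(a):
    """Inverse of a 3x3 matrix via cofactors."""
    cof = [[a[(i + 1) % 3][(j + 1) % 3] * a[(i + 2) % 3][(j + 2) % 3]
            - a[(i + 1) % 3][(j + 2) % 3] * a[(i + 2) % 3][(j + 1) % 3]
            for j in range(3)] for i in range(3)]
    det = sum(a[0][j] * cof[0][j] for j in range(3))
    return [[cof[j][i] / det for j in range(3)] for i in range(3)]


def edge_matrix(tet):
    """Edges from vertex 0 to the other three vertices, stored as columns."""
    v0 = tet[0]
    edges = [[v[i] - v0[i] for i in range(3)] for v in tet[1:]]
    return columns(*edges)


def green_strain(rest, deformed):
    """E = 0.5 * (F^T F - I), where F maps rest edges to deformed edges."""
    f = matmul(edge_matrix(deformed), inverse(edge_matrix(rest)))
    ftf = matmul(transpose(f), f)
    return [[0.5 * (ftf[i][j] - (1.0 if i == j else 0.0)) for j in range(3)]
            for i in range(3)]


def stress(strain, lam, mu):
    """Linear material law: S = lam * trace(E) * I + 2 * mu * E."""
    trace = strain[0][0] + strain[1][1] + strain[2][2]
    return [[lam * trace * (1.0 if i == j else 0.0) + 2.0 * mu * strain[i][j]
             for j in range(3)] for i in range(3)]


rest = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
stretched = [(1.1 * x, y, z) for x, y, z in rest]  # 10% stretch along x
E = green_strain(rest, stretched)
S = stress(E, lam=1.0, mu=1.0)
print(E[0][0], S[0][0])
```

A rigid translation of the tet produces zero strain and therefore zero stress, while the stretch above produces stress along x. A fracture rule could then compare such stresses against a material threshold.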

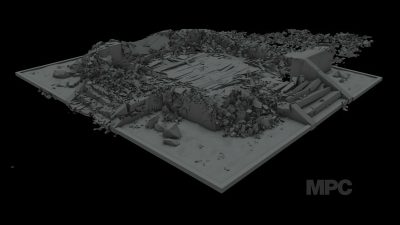

FEA came out in the 1940s and 1950s and was used in manufacturing in the 1970s and 1980s, but has only recently found its way to visual effects. MPC is one of the leading users of FEA for visual effects work. MPC Vancouver software lead Ben Cole thinks the time taken for it to be adopted might just be down to trends and fashion. “It is possible that the technology is only now fast enough to be workable, and don’t forget it took a fair while for RBS to be adopted in VFX.”

FEA was first developed in 1943 by R. Courant. Outside our industry, FEA consists of a computer model of a material or design that is stressed and analyzed for specific results, such as possible faults in a nuclear power plant. It is also used in new product design, where a company may want to verify that a proposed design will perform to the client’s specifications prior to manufacturing or construction. FEA uses a complex system of points called nodes which make a 3D grid called a mesh. This mesh is programmed to contain the material and structural properties which define how the structure will react to certain loading conditions. Nodes are assigned at a certain density throughout the material depending on the anticipated stress levels of a particular area. Regions which will receive large amounts of stress usually have a higher node density than those which experience little or no stress. The mesh acts like a network in that from each node there extends a mesh element to each of the adjacent nodes. This web of vectors is what carries the material properties to the object, creating many elements.

The problem is that FEA was once expensive to compute, very expensive. Enter: Pixelux. Pixelux is the developer of the Digital Molecular Matter (DMM) system, which uses a finite element based approach for soft bodies, using a tetrahedral mesh and converting the stress tensor directly into node forces. And through some very clever assumptions and tricks, DMM is fast, very fast, realtime fast.

DMM was designed for video games over a six and a half year period starting in 2004. From 2005 through 2008, Pixelux DMM technology was exclusive to LucasArts Entertainment as a part of the Star Wars: The Force Unleashed project. The FEM system in DMM utilized an algorithm for fracture and deformation developed by University of California, Berkeley professor, James F. O’Brien, as part of his PhD thesis. The O’Brien algorithm was refined, optimized and implemented into the DMM middleware by a team led by Eric Parker, the CTO of Pixelux, and the DMM tools pipeline was designed and implemented by a team led by Mitchell Bunnell, the CEO of Pixelux.

Unlike traditional realtime simulation engines, which tend to be based on rigid body kinematics, FEA allows DMM to simulate a large set of physical properties, and to do so very quickly. Developers can assign physical properties to a given object which allow the object to behave as it would in the real world. In addition, the properties of objects can be changed at runtime, allowing for additional interesting effects (see ‘chopping’ below). DMM can be authored or used in Maya or 3ds Max to create simulation-based visual effects. Since integrating DMM technology into their custom ‘Kali’ destruction engine, MPC has produced shots for the movies Sucker Punch and X-Men: First Class, amongst others.

– Above: watch a breakdown of MPC’s visual effects for X-Men: First Class.

FEA is universally agreed to be a valid approach and one that is without a doubt backed up by years and years of serious research, but it was just considered impractical for VFX, until now.

MPC approached FEA due to a very specific problem in the film Sucker Punch and its giant samurai battle scene. “We just didn’t think RBS would do it for us,” says Ben Cole, “so we had to think outside the box and we’d seen this paper published about doing faster stuff using tetrahedral FEA in SIGGRAPH for real time applications and we thought well if you can do it for real time applications – to some degree – then we can probably hit it much harder for VFX and make it work.” MPC worked directly with Pixelux for their implementation at MPC, actually using Pixelux’s solver inside their own code.

Collision detection normally relies on bounding boxes or convex hulls (and level set volumes), but FEA envelops the object completely in a mesh and then does ‘tet-collision detection’. The original object is fully enveloped in a tetrahedral mesh and, interestingly, one of fixed size or resolution: a non-adaptive mesh, chosen for speed and efficiency reasons. For collision detection, outlines Cole, “the tets are thrown into some broad phase collision detection and compared to each other, but the collision detection between one tet and another is much simpler than an arbitrary convex to convex, I mean tets are already much simpler than convex hulls and you don’t need to test every tet to every other tet if they are connected together as you are using volume preserving.”

The tet-mesh is not identical to the object geo, but it is close. But as the number of tets is fixed, one has to put the detail where you think you might need it. Ben Cole explains that MPC “went back and forward on this issue at the beginning, but I quite like what we are doing at the moment and using a fixed number of tets. One can do an adaptive solution, you can make solvers that will switch and add more detail as things crack, but actually it is quite difficult to compare frames for example. But if you know something is fixed, if you know the number of tets is fixed you can use that for comparison. And we actually use that trick for our render time chopping.” ‘Render time chopping’ refers to the ability to swap the geometry for completely different geometry at rendertime with this fixed approach.

Even though the tets are fixed in number they can be stretched and shaped in ways that provide more realistic wood splintering, for example. Pixelux use a concept separate to this called splintering but MPC did not adopt this one part of their solution. “We found we could not get it to scale to the magnitude we needed,” admits Cole. But visual wooden splintering (as opposed to the Pixelux concept) works extremely well in the MPC implementation. Says Cole: “The FEA solver works extremely well if you just stretch the tets until they are long and thin. Of course, if you are being mathematically pure you would not do this, as it produces unbalanced tets”. The underlying theory of FEA suggests that balanced tet-meshes are important but, as Cole says, “for visual effects where you are not that worried about real world physical accuracy,” it works really well. And this is one of the keys of the new-found application of FEA: visual effects, it seems, just does not need things to be that accurate – just reliable and fast. This is the secret Pixelux unlocked – by making some serious assumptions they can still produce stunning results and yet be computationally fast enough to work in a production environment, and in their case in realtime for games.

On the columns in the giant samurai battle in Sucker Punch, MPC had tet-meshes “with a million tets”, so the artists did not need to be concerned with exact placement of individual points or tets; their level of control is much higher. The material is literally a uniform material finite element solve, so you make the columns and they shatter the way they would as if they were wood, inside the accuracy of the engine. There is no pre-breaking, there is no voronoi or cookie cutting with FEA; it is, in a sense, a much more pure solution. “The tets are connected together into a large group where there is a nodal point in common,” says Cole. “That point is defined to have a certain strength, structural robustness and if a threshold is exceeded it breaks that connection. The algorithm that is used to fill a volume with tets is sort of random enough to get what you want. You control where the density is but not where the exact vertex points (nodal points) are inside the material.”

To control the way it breaks means controlling the ‘toughness’ of these nodal points or vertexes. You can apply a 3D map that will create, say, weaker regions, or the object can simply be modeled as more separate pieces that will break more easily. You literally build the model to be less structurally sound in places, so the FEA naturally destroys those sections more. But the actual fracturing itself is physically based, so the size and shape of the pieces you get is accurate to the material. “It is going to be based on how you hit the thing or how you drop it,” explains Cole. “It is based on material properties, when you do a voronoi based rigid body approach, you have effectively pre-broken the thing, but the artist has designed the shattering. You don’t need to do that with FEA, as you have well defined internal forces and so the pieces break apart the way you want, the way they should break apart.”

And there are controls. For example, MPC has a size constraint that governs how small pieces will become, to avoid things becoming pulverized if the director does not want that. The TDs at MPC have quite a lot of controls to make the effects they want and to rig the scenes – even making density maps based on voronoi maps – but again this is just a density cloud affecting the nodal points and not a voronoi break structure; the system still breaks the object based on the actual materials. A TD could still fully pre-break something if there was some reason to, but of course this would be the exception rather than the primary focus.

One other great aspect of FEA is plastic deformation “for free”. Structure creep, plastic or elastic deformations can just be done as part of the standard system, without any cheats. At MPC an artist can literally bend a steel girder, by applying forces to a ‘steel’ object with plastic deformations. It just works, there is no need to turn on or off any special code.

Finally, a key aspect of the MPC solution that is very important is one mentioned briefly above, and that is rendertime chopping. Effectively the MPC system is simpler, bending does not require a soft body solution that feeds a RBS, that has special cases for plastic deformations, each with special rigs, or Geo or tools to prebreak. Instead the MPC approach is to think of the object as existing inside a cage – a tet-mesh cage. And you do all the simulation on the ‘cage’ but it is easy to swap the geo inside the cage at anytime – since the sim is on the cage and not on some special version of the original object. This level of abstraction is very powerful.

The concept is similar – to use an analogy – to a traditional Free Form Deformation lattice or FFD. An FFD bends what is inside it – of course an FFD in no way handles destruction or fracturing, but the FFD cage can deform any geo inside it, so it is easy to imagine bending a cage and then swapping the geo that is inside – maybe from a low poly count to a high poly count. In the same way a FEA lattice handles fracturing and you can easily swap what is inside it, right at render time, only instead of bending an object, the object is being ripped apart. And while chopping up high resolution geometry into bits against a tet-mesh is quite expensive, as the tet-mesh never changes – it is non-adaptive – as it is fixed, one only needs to do that once for the last frame and then it can be applied back up the animation to every frame, across the entire simulation.
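The cage analogy can be sketched with barycentric coordinates: a point of the render geometry is bound to a rest-pose tet once, and the same weights are then reused against the simulated tet on any frame. This is only an illustration of the FFD-like binding; the function names are hypothetical, and it does not attempt the actual cutting of geometry against the tet-mesh that render time chopping performs.

```python
def sub(p, q):
    return tuple(pi - qi for pi, qi in zip(p, q))


def det3(a, b, c):
    """Determinant of the 3x3 matrix with columns a, b, c."""
    return (a[0] * (b[1] * c[2] - b[2] * c[1])
            - b[0] * (a[1] * c[2] - a[2] * c[1])
            + c[0] * (a[1] * b[2] - a[2] * b[1]))


def barycentric(point, tet):
    """Weights of `point` with respect to the four tet vertices (Cramer's rule)."""
    v0, v1, v2, v3 = tet
    e1, e2, e3, r = sub(v1, v0), sub(v2, v0), sub(v3, v0), sub(point, v0)
    d = det3(e1, e2, e3)
    b1 = det3(r, e2, e3) / d
    b2 = det3(e1, r, e3) / d
    b3 = det3(e1, e2, r) / d
    return (1.0 - b1 - b2 - b3, b1, b2, b3)


def apply_cage(weights, tet):
    """Rebuild the point from stored weights and the tet's current vertices."""
    return tuple(sum(w * v[i] for w, v in zip(weights, tet)) for i in range(3))


rest_tet = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
weights = barycentric((0.25, 0.25, 0.25), rest_tet)   # bind once

# Any geometry bound this way follows the simulated cage on every frame.
simulated_tet = [(x + 1.0, y, z) for x, y, z in rest_tet]
print(apply_cage(weights, simulated_tet))
```

Because the weights depend only on the rest pose, the geometry inside the cage can be swapped, for example from a low to a high poly count, without touching the simulation.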

To put it another way, in RBS you need to actually break up your object before you start, so it breaks correctly. With FEA you see how it breaks up at the end and that feeds back up the entire sim, telling you how to break the object effectively and efficiently. Before you render something, if you have changed the simulation, one can just run the chopping again. It will determine how this object should be chopped up and you do not need to re-visit modeling and pre-breaking. One eliminates the need to revisit the breaking stage for each new simulation. This speeds up the entire workflow of a destruction pipeline. It is more accurate, less dependent on tricks, and eliminates a loop back in the production pipeline between modeling and simulating.

One aspect of procedurally breaking up an object, say a painted concrete wall, is the texturing of the interior generated parts. For a start, the tetrahedral breaking up of an object “does tend to chop up into tetrahedra style shapes – and not everyone likes that,” jokes Cole. Also, the new objects have no UVs for texturing. One can still project a texture over them, as you don’t need UVs for projection, or one can look to convert the newly created shapes into something else that does have UVs, although that problem is not entirely trivial.

Cole also points out that at MPC, prior to FEA, they were not on the whole happy with convex hull collision detection, so the collision detection that comes naturally with FEA is preferred. So not only did the shattering and simplified workflow mean that FEA is now standard on every show at MPC, but the TDs like it too – people are happier with the collision detection accuracy that comes with the process. It has been standard at MPC since the great X-Men: First Class boat destruction sequence. During Sucker Punch, MPC was running to stay ahead of the production, so after that film the techniques were refined and packaged into their Kali destruction tool.

Kali is the goddess of destruction and rebirth in Hindu mythology. “So it makes some sense, as a name,” says Cole, enthusiastically. “What we came up with is a modular architecture, so every time we do a show we add to it. X-Men’s boat, for example, had a lot of different materials that had to interact with each other, compared to the FEA used in Sucker Punch. We also had to deal with highly reflective material on the boat that we did not have to deal with on Sucker Punch. That required a lot of thinking about how we were allowing things to bend and flex and not being too linear about that, and there was also ripping and tearing.”

MPC is building on Kali, and it already delivers very good information for, say, particle emitters. “It is just like you plug in a particle emitter and you almost immediately get great stuff to work with,” says Cole. “For example, you are tracking crack propagation and so you can easily emit along the crack as it goes. Much of this comes from the fact that the FEA Kali solution is just a lot less of a series of hacks or special cases and as such it provides really valuable information for secondary effects and sims.”

So why aren’t more companies using FEA?

There are perhaps a few answers to this. First, credit to MPC, which is trail blazing this area of visual effects and really taking a leadership role in the industry in FEA destruction pipelines. MPC is large enough, now with five global locations, to devote serious effort to the problem. Ben Cole is, for example, based in Vancouver, having moved out there three and a half years ago, and the majority of Kali’s development team is based in Canada.

Then, the idea of replacing an existing huge destruction workflow pipeline is not trivial. Staff and entire software systems need to be retrained and rebuilt. “I think there is a way to go on the FEA before we’d ever consider making the move across,” says Dneg’s Peter Kyme. “But it is definitely worth keeping an eye on. So far we have not found the need to move over. As with most things in visual effects you can push for things that are more correct that are trying to handle everything and to a certain extent that is what methods based on FEA are doing which some facilities have started adopting, via third party middleware. Here you are effectively tetrahedralizing the entire structure, which allows things like plastic deformations, which does not constrain you to just RBS, but allows you to deform things, but there is still a performance hit for taking that direction.”

The downside of not doing FEA, and staying with a traditional RBS, says Kyme, “is that there is more setup, but the performance win is such a lot that, for us at the moment, that (performance) is still the sweet spot. But it is certainly something we are continuing to look at, and normally eventually things do go towards a more physically correct way of doing things.”

Brice Criswell points out that PhysBAM does actually contain some FEA, “but they have not really invested research to make it very efficient, and instead focused a lot of their research on their RBS and their Cloth which is a spring-mass solver.” As ILM is wed to PhysBAM, and very successfully so, the studio also does not use FEA, but Criswell says, “I think it could be argued at some point ‘well why don’t we use that?’ and the answer comes back that the tools we have given the artists and the speed that we have provided the artists in our system are completely sufficient to solve the kinds of problems they were trying to solve. Now, with that said, there has been a lot of interest in destruction simulation and Pixelux uses FEA with a lot of hardware acceleration which provides a nice lower resolution solution, and only in recent years have we seen this scale up well enough for production resolution.”

In a world split between custom or Houdini / Bullet solutions or ILM’s PhysBAM or MPC’s FEA, Brice Criswell makes a very important point: “I don’t have an opinion either way that we should only be doing one or the other. I think all the approaches are all valid, and they are nothing more than different development paths or directions that people have taken. I think they are all valid directions and at some point I think that they are going to converge back to solving the same problem which is just trying to model material values on objects at very small scales.”

Commercial software

Houdini

Houdini, by Side Effects Software, is currently the ‘go to’ software for RBS. Its growth in this market segment is widespread and well earned. The software provides control, flexibility, and speed and as such is now being adopted as part of the workflows of top companies from procedural trash in Pixar’s Toy Story 3 to Dneg’s new Bang tool. In fact, Dneg now uses Houdini half the time for their effects pipeline. “We use 50:50 between Maya and Houdini for effects work,” says Nicola Hoyle. “We run Dynamite in Maya and our cloth sims. We tend to run any heavy rigid body stuff through Houdini, all the fluids, dust and fire are also all done in Maya as well.”

We spoke to Side Effects about the huge growth in Houdini, but first here is a simple and effective example of a destruction workflow by Martin Matzeder, done in Houdini.

1. A control object is animated to ‘paint’ the path of destruction.

2. A sopSolver records the animation and stores it per frame onto a grid or “control geometry” and deforms it to create a bulge in front of the smashing effect.

3. The grid gets thickness, and is voronoi fractured with the information collected in step 2, so we have pre-fractured geometry.

4. The fractures are ‘parented’ with a SOP to the deforming grid created in step 2. They also get an attribute that stores when to release each piece into the rigid body sim (RBS).

5. Every piece that has the flag set to get into dynamics, gets fractured again, the resulting pieces are collected into a group.

6. The RBS itself is quite simple: it just has some ground and gravity, and it copies in the geometry created in step 5 (as a group).

Houdini, especially after the release of version 11, took a strong position in the market for RBS. And now with the addition of Bullet and serious speed improvements in v12, this seems destined to grow even further. “Houdini’s system is very open and our community will extend it as they see fit,” notes Side Effects’ Cristin Barghiel. “Both the voronoi fracturing we introduced in Houdini 11 and Bullet integration coming in Houdini 12 were put into production by customers long before we added them as features. Their customizations often inspire us to add these capabilities, and in the case of the voronoi fracturing, we hired the TD who first developed this technique to build us an out-of-box solution. Following the extensive work on performance enhancements shown in the Beta of Houdini 12, especially with respect to geometry architecture, caching and manipulation, rigid body dynamics in Houdini is now capable of managing at least one order of magnitude greater data sets than in previous versions of the software. Thanks to the tight integration of Bullet into the dynamics (DOP) environment internally, the savings in memory apply equally well to Bullet and the native RBD.”

Both Bullet and the native original RBD support dynamic fracturing in Houdini 12, as well as pre-fractured geometry out of the box. One of the strengths of Houdini’s dynamic environment was that it was designed from the start to handle multiple solvers interacting with each other in a natural and intuitive fashion. As a result, RBD objects affect fluid flow and, mutually, fluids impart forces onto the rigid bodies where that behaviour is warranted.

– Above: a video showing the capabilities of Houdini v12.

Both Bullet and the native RBS have advantages that artists can benefit from. A notable advantage of Houdini’s native RBD is its ability to handle difficult shapes, ill-formed geometry, bad intersections and complex initial conditions. “Bullet shines in terms of performance with regular geometry, and offers unique advantages with its flexible handling of glue/constraint networks,” says Barghiel. “All of Bullet’s qualities play well with Houdini’s ability to handle complexity and with the intensely procedural nature of our package.”

“We will continue to evolve the solutions we have and look into new technologies such as finite elements to strive for greater realism in the destruction sequences,” comments Barghiel. “Performance will continue to be an important goal, as will the ability to handle increasingly large data sets. One area of interest to VFX artists and to us implicitly is volumetric sculpting and rendering. Houdini’s volume tools and mantra’s volumetric renderer have a long tradition, and we aim to develop comprehensive solutions for this important area of the VFX pipeline.”

Other terms, approaches and considerations in destruction pipelines

Glue

Both Dynamite and Bang support ‘Glue’ for rigging objects with constraints. Rather than simming a pre-fractured object as, say, 500 separate pieces with some 10,000 constraints, what you want to do is sim the whole object as one body until something happens. Effectively just the one rigid body is simulated until some threshold is violated – some stress is exceeded, which the artist can specify – and then it converts to multiple separate rigid bodies. This works very well for brittle objects like, say, china, glass or terracotta, which are much faster to simulate as one object until they break into 500 bits, especially if you multiply this by many such objects in a scene.
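The bookkeeping behind glue can be sketched in a few lines. The class below is a hypothetical illustration, not the Dynamite or Bang API: it hands the solver a single body until an artist-specified stress threshold is exceeded, and only then exposes the individual pieces.

```python
class GluedObject:
    """A pre-fractured object simulated as one rigid body until the glue fails."""

    def __init__(self, name, piece_names, break_threshold):
        self.name = name
        self.piece_names = list(piece_names)
        self.break_threshold = break_threshold  # artist-specified stress limit
        self.broken = False

    def apply_impact(self, stress):
        """Release the pieces once any impact exceeds the threshold."""
        if not self.broken and stress > self.break_threshold:
            self.broken = True

    def bodies(self):
        """Bodies the solver has to simulate on this frame."""
        return list(self.piece_names) if self.broken else [self.name]


vase = GluedObject("vase", [f"shard_{i}" for i in range(500)], break_threshold=10.0)
print(len(vase.bodies()))   # one body while intact
vase.apply_impact(3.0)      # a light knock: still one body
vase.apply_impact(25.0)     # a hard hit: the glue fails
print(len(vase.bodies()))   # now every shard is its own body
```

The saving comes from the frames before the break, when the solver handles one body and no constraints instead of hundreds of pieces.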

Call backs

An important technique is call backs. The standard approach for multiple sims is to simulate the RBS first, as they are more likely to influence fluids than the other way around. From the results of that, and the various call backs in the code, it will then create particles or other secondary elements. There are various reasons, for example, why Dneg felt it needed to do more than just rely on Houdini and write their own code on top. Up to recently, Dneg was using the Houdini solver directly, but it was found to not scale as much as was wanted, so the team started to rely on Houdini’s particles and secondary instancing on particles for both efficiency and stability. But now with their own Bang software on top they can go much further with the base RBS before needing to use techniques such as instancing particles (although there is still always normally a need for secondary sims for dust and other things even with Bang).

Fluid sims

Secondary simulations are one of the most challenging and cutting edge areas of destruction pipelines. For example, a whale crashing through the water surface will displace the volume of water accordingly, and a nearby boat will be disturbed or destroyed by the turbulence and possibly tip over. “Interaction between different simulators is very tricky,” says Dneg’s Nicola Hoyle. “It is something that we have a basic pipeline for, but to get that running really smoothly is something that we have not fully developed, mainly as we have not had the need to, we have always been able to split the elements apart and run with a separate simulator for each part and produce a realistic sim by combining elements together. It is tricky, especially with fluids. Also, with Houdini we have the option of outputting flow vectors for interaction between the different solvers but having one thing that runs through everything – RBS, fluid, cloth, et cetera – would be a good challenge! With the tools in our pipeline nothing is impossible, but feeding everything together and having it run interactive would be difficult – not impossible but difficult.”

While it is often required that a RBS interact with a fluid sim, this is normally done with the fluid sim existing around the RBS but not actually affecting it. The RBS is simulated first and the fluid sim second; the process is not iterative. As fluid sims can extend to pyroclastic clouds, this approach is now quite common, since this form of massive ash cloud is often required on huge VFX explosions. A pyroclastic flow is a fast moving current of ash or smoke that seems to flow – controlled by gravity – as a rolling wall of gas while exhibiting very fluid-like properties. Many people believe that the fall of the Twin Towers on 9/11 produced a destruction cloud similar to a volcanic pyroclastic flow. As such, the imagery is very much now a part of the zeitgeist. It should be noted that the collapse of the towers and the pulverization of their concrete generated a flow similar to a volcano’s dust cloud, though without the high temperatures of a technical “pyroclastic flow”, which is normally over 1000 degrees centigrade.

A good example of this is the pyroclastic cloud in Immortals, with the climactic collapse of the mountain and the resulting flowing dust/smoke cloud. This was a sequence completely handled by Scanline (Hereafter, 2012 etc), a company well known for their outstanding fluid sims. The company has of course also been responsible for a full range of ‘normal’ visual effects, including the complex RBS train crash at the start of Super 8, completed in partnership with ILM.

Some of the most advanced RBS are those which aim to model both RBS and its actual interaction with fluid sims. In Houdini, for example, everything is connected in an effort to address secondary and related sims. This allows artists to query every component in the system. “Whether you need white water generated from a FLIP sim or dust emitted from a dynamic fracturing sim,” says Cristin Barghiel, “Houdini makes it easy to grab forces and turn them into particle simulations or manipulate them using procedural modeling techniques. Artists can therefore rework sims knowing that the dust and debris being emitted will update procedurally in response to the changes. This allows for more iterations and ultimately a better result for the artist.”

Particles

Sometimes it is enough to reduce the sim to a particle simplification. If you have either very small particles or particles of uniform shape, perhaps small in frame, then the lack of object orientation is not an issue, and particles, or particles instancing otherwise fixed geometry, can serve just as well as actual RBS geo. Other trade-offs come with this approach, such as the lack of realistic collision detection, but motion blur and the vast number of particles, combined with much faster render times, make this a viable and often used option.

Still other approaches combine particles and referenced geometry. “Our Houdini set-up uses particles,” says Dneg’s Nicola Hoyle. “We shatter it first and then each chunk is represented by a point at its centre of mass, and it uses a level set of its collisions and then we at render time instance that piece of geometry which is defined in its own separate RIB file onto the point which has all its transformations sorted on it. It is very, very fast to do and you can handle tens of thousands or even millions of particles in a simulation. I think that is the way forward, honestly. You get a lot more control with rules that you can write into it, especially in Houdini like the connectivity powers and the glue constraints or any other geometric constraints. It’s all much more malleable in Houdini and I think that is the way to go – to control with rigging particles rather than dealing with geometry.”
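The point-plus-instance idea can be sketched in a few lines. This is not Dneg’s set-up, only a minimal Python illustration: `ChunkPoint`, `rotation_z` and `instance_chunk` are hypothetical names, and the chunk geometry is assumed to be stored relative to its own centre of mass.

```python
import math
from dataclasses import dataclass

@dataclass
class ChunkPoint:
    """One simulated point standing in for a shattered chunk."""
    position: tuple   # world-space centre of mass (x, y, z)
    rotation: tuple   # 3x3 rotation matrix as three row tuples

def rotation_z(angle_rad):
    """Rotation matrix for a turn of angle_rad about the Z axis."""
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return ((c, -s, 0.0), (s, c, 0.0), (0.0, 0.0, 1.0))

def instance_chunk(point, local_vertices):
    """Place chunk geometry onto a simulated point: rotate, then translate."""
    world = []
    for v in local_vertices:
        rotated = tuple(sum(row[i] * v[i] for i in range(3)) for row in point.rotation)
        world.append(tuple(rotated[i] + point.position[i] for i in range(3)))
    return world
```

The simulation only ever moves the lightweight `ChunkPoint` records; the heavy geometry is touched once per chunk at render time, which is why the approach scales to very large piece counts.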

Deformation

RBS are rigid and do not deform, but for some pipelines there are complex and effective combinations and variations possible. One example is the interaction with soft body dynamics such as cloth, which can work on a system such as mass and springs. A cloth body can be modeled as a set or network of ‘mass’ nodes, all connected by elastic springs which then obey some set of rules, most of which are based on Hooke’s law (cloth flexibility is approximated by the principle that the extension of a spring is in direct proportion to the load applied to it). The nodes may be either 2D or 3D. A metal car crashing at high speed looks remarkably like a cloth simulation as it crumples and buckles. This was used by ILM for the pod racer crashes in The Phantom Menace, for example.
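A minimal mass and spring step, assuming equal node masses and a simple semi-implicit Euler integrator, might look like the sketch below. The function names and the integrator choice are illustrative assumptions, not a description of any studio’s cloth solver.

```python
import math

def spring_force(pos_a, pos_b, rest_length, stiffness):
    """Force on node A from a spring joining A and B (Hooke's law)."""
    delta = [b - a for a, b in zip(pos_a, pos_b)]
    length = math.sqrt(sum(d * d for d in delta))
    if length == 0.0:
        return [0.0] * len(delta)  # direction undefined, apply no force
    extension = length - rest_length
    # A stretched spring pulls A towards B; a compressed one pushes it away.
    return [stiffness * extension * d / length for d in delta]

def step(positions, velocities, springs, mass, stiffness, dt):
    """Advance every node by dt; springs is a list of (i, j, rest_length)."""
    forces = [[0.0, 0.0, 0.0] for _ in positions]
    for i, j, rest in springs:
        f = spring_force(positions[i], positions[j], rest, stiffness)
        for k in range(3):
            forces[i][k] += f[k]
            forces[j][k] -= f[k]  # equal and opposite force on node B
    for n in range(len(positions)):
        for k in range(3):
            velocities[n][k] += forces[n][k] / mass * dt
            positions[n][k] += velocities[n][k] * dt
```

A cloth sheet is then just a grid of nodes with springs along its edges and diagonals, stepped repeatedly with a small `dt`.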

At ILM there is a deformation approach used on objects such as cars and crashing ‘metal like’ ships, helicopters etc. which is not based on just treating, say, the metal car as cloth. ILM’s Deformable Rigid Body System allows for plastic deformations. The term ‘plastic deformation’ may sound like an odd one, but it is a term in physics that denotes an irreversible deformation. A solid door panel or the hood of a car being bent or pounded into a new shape displays plasticity, as permanent changes occur. In contrast, a tire bouncing and bending shows elastic behavior.
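The difference between elastic and plastic behavior can be shown with a single spring whose rest length is allowed to change. This is a generic textbook-style sketch, not ILM’s system; `update_rest_length` and the `yield_strain` parameter are assumed names.

```python
def update_rest_length(current_length, rest_length, yield_strain):
    """Return the spring's rest length after it has been stretched or squashed.

    Within the yield limit the spring is elastic and the rest length is
    unchanged. Beyond it, the excess strain is absorbed into the rest
    length, which is a permanent (plastic) change.
    """
    strain = (current_length - rest_length) / rest_length
    if abs(strain) <= yield_strain:
        return rest_length  # elastic: springs back to the original shape
    sign = 1.0 if strain > 0 else -1.0
    # Plastic: choose the rest length that leaves exactly yield_strain of strain.
    return current_length / (1.0 + sign * yield_strain)
```

With a rest length of 1.0 and a yield strain of 0.1, stretching to 1.05 leaves the spring unchanged (the tire), while stretching to 1.5 moves the rest length to about 1.36, so the spring never returns to its original shape (the dented hood).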

If a car is being smashed up it may deform over a very small number of frames. Before the crash, and after it has been crumpled, the door or hood of the car is inactive; it only animates and transforms during the crash itself. The ILM team can therefore turn on the Deformable Rigid Body System, benefit from the free form deformation, and then turn it off again, incurring only a small number of very expensive frames. This form of deformation is computationally expensive, and there is no point having it running if the object is not changing.

Brice Criswell points out that prior to the Deformable Rigid Body System, the artists might have needed to run a flesh sim, and that would have had to run for the entire sequence. The new system is much more efficient and adjustable by the artist, even though the few frames in which the Deformable Rigid Body System is active are very computationally expensive.

Bending and denting of rigid materials has become an increasingly important aspect for the realistic destruction of objects. For the movie Avatar, ILM first developed their system of seamless transitions between rigid and deformable simulations.

The solution uses a low resolution spring-mass simulation as a pre-processing step to the RBS. As Brice Criswell, Michael Lentine and Steve Sauers published at SIGGRAPH: “At the start of the simulation, a tetrahedral and thin shell representation of the rigid bodies was initialized as a spring cage to model the surface deformations during the rigid simulation. At the beginning of each relevant frame, the cages were advanced using a spring-mass simulator and their resulting deformations were transferred to the surface of the rigid bodies. After all the objects are processed, the rigid bodies were advanced using a rigid body simulator.”
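The per-frame ordering in that description, combined with switching deformation on only while an object is actually changing, can be sketched as a simple loop. The callback names below are hypothetical placeholders for whatever solvers a pipeline provides; none of this is ILM code.

```python
def run_frames(frame_range, bodies, is_deforming,
               advance_cage, transfer_to_surface, advance_rigid):
    """Run the sim and return how many frames paid for deformation.

    is_deforming(body, frame) decides whether a body's spring cage is live.
    """
    expensive_frames = 0
    for frame in frame_range:
        active = [b for b in bodies if is_deforming(b, frame)]
        for body in active:
            advance_cage(body, frame)         # spring-mass pass on the cage
            transfer_to_surface(body, frame)  # copy cage deformation to the surface
        if active:
            expensive_frames += 1
        # Rigid bodies advance only after every cage has been processed.
        advance_rigid(bodies, frame)
    return expensive_frames
```

For a 24 frame shot in which a car deforms only on frames 10 to 12, the cage solver runs on three frames and the rigid solver on all 24.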

Interestingly, as an aside, Criswell explained that a similar trick was used on Transformers 3 when the buildings crashed down. The team did a low resolution flesh sim of the building crashing into the adjacent building, and that was then fed back into the RBS. The building therefore had an overall bend and wobble at a gross scale, while having incredibly detailed RBS for all the wall, glass, furniture and concrete simulations that provided such realism to the shots.

Custom code in production

There are often small opportunities for production to get R&D to actually write code for specific shots. Larger projects would rarely allow the time during production for full scale coding, but many productions get small problems solved during post by the R&D team. A good example was on the last Harry Potter film, where Dneg needed to destroy a bridge and wanted more art direction control. The explosion in question was being done in Dynamite, but the team wanted the initially blasted items to not fly away as far as an initial explosion would correctly cause. So the R&D team provided some dampener controls: the initial explosion is very violent but the material has velocity dampening. Unfortunately this tends to make the materials look lightweight and float around like paper (which would exhibit this property), so the team gave the artists a special adjustable gravity that allowed items past a threshold to have reduced gravity. This makes the falling parts of the exploding bridge look both bigger and more majestic, rather than just flying off the screen.

For every company there is a key film that expands their pipeline and puts their destruction work on the map. For many – Digital Domain, Dneg et cetera – that film was 2012, but for Framestore it was Prince of Persia (although their amazing work on the first Sherlock Holmes film also really impressed the audience). Martin Preston, head of research at Framestore, outlined the primary tools that were developed from their work on Prince of Persia. “All our tools have been developed from this concept that we want to fine grain control things,” he says. To this end they have a range of tools, from volumetric to more direct breaking tools. They can pre-break things or procedurally break things; their primary tools are F-Shatter and F-Cutter.
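The dampener and adjustable gravity controls described above for the Dneg bridge shot can be sketched roughly as follows. This is not Dynamite code: the function, its parameters and their default values are invented for illustration, and the threshold is assumed here to be time since the blast, which the article does not specify.

```python
def step_debris(velocity, dt, age, damping=2.0, gravity=-9.81,
                gravity_scale=0.4, threshold_age=0.5):
    """Advance one debris piece's velocity (x, y, z) by dt, with y as up.

    damping       -- assumed velocity dampening rate per second
    gravity_scale -- assumed artist control: fraction of gravity applied
                     once the piece is older than threshold_age seconds
    """
    # Velocity dampening: bleed off a fraction of the speed each step.
    factor = max(0.0, 1.0 - damping * dt)
    vx, vy, vz = (component * factor for component in velocity)
    # Reduced gravity past the threshold makes pieces fall more slowly,
    # which reads on screen as larger, heavier debris.
    g = gravity * gravity_scale if age >= threshold_age else gravity
    return (vx, vy + g * dt, vz)
```

Neither control is physically correct, which is the point: both are art direction dials layered on top of the simulation.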

“We work on the basis that everything will be pre-broken but that things can also be procedurally initiated if something hits something mid way for example,” explains Preston. “The F-Shatter tool is a volumetric tool so you start out with geometry that is closed and defined neatly, and any shattering that takes place, well you have an interior to work with, you know what that interior geometry is going to look like. The more lightweight one is F-Cutter which is a surface based approach where you basically cut based on a surface projection, there is no assumption that there is an interior.”

These tools are two ends of the spectrum. If an object is being shattered in the back of shot and perhaps partially hidden by dust or particles, then F-Cutter is ideal, but if the object is closer to camera and it is important that the interior can be shaded properly, then Framestore uses its volumetric F-Shatter tool.

This approach of highly effective in-house tools is repeated across the industry. Framestore does very fine and distinctive work, and yet its algorithmic approaches are not that different from some others, even if their implementation is. Framestore now has the ability and serious production pipeline to tackle massive scenes in a way that only a few major companies can. Massive destruction sims are still very much in the domain of work that is built on some off the shelf tools or physics engines, coupled with a first rate R&D department with people like Martin Preston and his opposite numbers at the big top ten effects houses. Almost all Framestore’s tools are inside Maya. Framestore does have Houdini but it is used for things other than RBS. “All of our technology effort has gone onto Maya, and rendering then in PRman or Arnold,” says Preston. “But having said that, it is really just bundled up inside Maya, we are not really using or relying on Maya.”

Martin Preston remarks that they are currently working on a huge rigid body simulation film, and while they can’t discuss that film yet, Preston outlined how the artist teams are supported by the in-house development team. “The way we do it is to have R&D people devoted to the effects guys. They work really closely to the effects TD. They will be the guys who see first hand the problems happening in production and then offer to go off and write some custom code to perhaps solve a problem unique to this production or this one shot. The reason we can do this is that we have built our simulator in such a way that you can script events, so if you want some particular deformation, then I will write a script and when something happens in the simulation that triggers this, then this will happen, like a plastic deformation. You don’t end up with a giant black box that does all these things, you end up with lots of little things that you can use.”
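The scripted-event idea Preston describes can be illustrated with a toy event registry. This is not Framestore’s simulator or its API; the `Simulator` class, the `"collision"` event name and the impulse threshold are all assumptions for the sake of the sketch.

```python
from collections import defaultdict

class Simulator:
    """Toy stand-in for a simulator that lets scripts react to events."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def on(self, event_name, handler):
        """Register a small script to run whenever event_name fires."""
        self._handlers[event_name].append(handler)

    def emit(self, event_name, **info):
        """Fire an event and return the result of each registered handler."""
        return [handler(**info) for handler in self._handlers[event_name]]

def dent_on_hard_impact(body, impulse, threshold=50.0):
    """Shot-specific script: flag a body for plastic deformation on a hard hit."""
    if impulse > threshold:
        body["dented"] = True
    return body["dented"]
```

A shot-specific behavior is then one small function registered with `sim.on("collision", dent_on_hard_impact)`, rather than a change to the solver itself, which matches the “lots of little things” design Preston describes.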

In conclusion

As noted, it was the film 2012 that seems to have been a turning point for much of the industry. This film’s enormous requirements really brought destruction pipelines into wide acceptance and raised the bar for many in-house pipelines. The film caused many companies to develop much bigger and more robust pipelines. Even as computers get more powerful, simulations on the scale of 2012 are staggering.

“Computational power is the key to all of this,” notes Dneg’s Nicola Hoyle. “The destruction we came across in 2012 really pushed our pipeline forward as well. I think it did across the board. By the time I went to SIGGRAPH I heard all the stories that really pushed the pipeline for how many objects you can sim all at one time and how you rig them.”

While no two pipelines are identical, certain patterns have emerged. It remains to be seen whether, just as this workflow stabilizes, wide scale adoption of FEA will once again completely change the nature of most destruction approaches. Certainly, over time, computationally expensive but more direct or pure solutions do tend to rise, but for now it is the artistry and skill of the effects TDs and animators that allows for such high levels of production value and realism. Experienced effects TDs and developers bring a tremendous amount to any production they work on and are highly sought after around the world, and rightly so.

Very special thanks

Peter Kyme, Nicola Hoyle, Aisling O’Brien, Rachel Devenport: Double Negative

Martin Preston, Stephanie Bruning, Gemma Samuell: Framestore

Ben Cole, Jonny Vale: MPC

Rob Bredow, Don Levy: Sony Pictures Imageworks

Brice Criswell, Greg Grusby: Industrial Light & Magic

Ryo Sakaguchi, Mårten Larsson, Tim Enstice: Digital Domain

Cristin Barghiel, Bill Self: Side Effects Software

Erwin Coumans: AMD

Michael Baker, the Art Institute of Las Vegas and developer with the Bullet Physics project

Martin Matzeder, destruction artist

Jonathan Su, PhD student at Stanford University

Had a very quick glance and this article (like the others) looks outstanding, the videos really help to hammer home the ideas and examples.

Can’t wait to read it properly!

Thanks again for this article and the other ‘Art of’ series, they are fantastic.

KaBOOM! Great article, thanks FXGuide.

Absolutely fantastic article!! Great videos too! Thank you FXGuide.

Hey thanks guys, just a shout out to Ian in our office who helped a lot in pulling the article together.

Glad it is getting such a good reaction.

Any suggestions for other ‘Art of ‘ stories you’d like to see? Someone suggested an updated Art of HDR, any others?

Mike

Maybe like an “Art of the Perfect Composite”? I know that’s awfully broad, but perhaps it could be an ‘Art of’ that ties a lot of the other ‘Art Of’ concepts together.

Great Article … and Great Videos … tnx alot

Yeap Mike , we want Art of HDR … 🙂

Awesome article, and I agree, Art of HDR would be great Mike.

I’d like to see a History of Vfx Software article similar to part one of the Art of Tracking article but possibly going further back than 1990 and including 3d software. It’s good to know where everything evolved from. Maybe the time is also ripe for an Art of Texturing article covering UV’s vs Ptex vs procedural approaches and software like Mari.

Great article, extensive and accurate, just I miss you mentioning Pulldownit plugin for destruction, it is used currently by some major VFX Houses like Cinesite, just to let you know.

Houdini foreva