It is hard to believe that Paul Debevec published his PhD just ten years ago. His work in defining high dynamic range images (HDRi) was almost immediately adopted by the major effects companies and expanded into one of the most significant visual effects areas of the last ten years. Debevec’s latest paper, which builds on HDRi and his now famous light stage, has just been presented at Siggraph this year. This is a stage rig that can reproduce the exact lig

Background

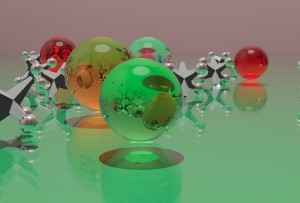

Most HDR images are made by photographing multiple exposures of mirror balls or large chrome ball bearings mounted on a tripod. Once the separate exposures are combined into one HDR file, the result is an image with a very high dynamic range. This process, often referred to as capturing a light probe, effectively records the light hitting that point from almost every angle, which makes it very useful in CG. HDR images are also made by stitching together multiple images into a panorama (see the Batman Begins story). But there is no reason why HDR images cannot simply be multiple combined exposures of a normal scene or shot.
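The idea of combining bracketed exposures can be sketched for a single pixel. This is only an illustration under stated assumptions: pixel values are taken to be linear and normalized to 0..1 (a real camera needs its response curve recovered first), and the names `hat_weight` and `merge_exposures` are hypothetical, not taken from any of the tools discussed here.

```python
def hat_weight(value):
    """Trust mid-range pixels most; clipped or near-black pixels least."""
    return 1.0 - abs(2.0 * value - 1.0)


def merge_exposures(pixel_values, exposure_times):
    """Estimate relative scene radiance for one pixel from several exposures.

    pixel_values: linear pixel values in [0, 1], one per exposure (assumed linear).
    exposure_times: shutter times in seconds, in the same order.
    """
    weighted_sum = 0.0
    weight_total = 0.0
    for value, seconds in zip(pixel_values, exposure_times):
        weight = hat_weight(value)
        # Dividing by the exposure time puts every sample on the same scale.
        weighted_sum += weight * (value / seconds)
        weight_total += weight
    if weight_total == 0.0:
        # Every sample was fully clipped or fully black: use the shortest exposure.
        shortest = min(range(len(exposure_times)), key=lambda i: exposure_times[i])
        return pixel_values[shortest] / exposure_times[shortest]
    return weighted_sum / weight_total


# A bright highlight: clipped at 1/4 s and 1/30 s, readable only at 1/250 s.
radiance = merge_exposures([1.0, 1.0, 0.5], [1 / 4, 1 / 30, 1 / 250])
```

The clipped samples get zero weight, so the estimate comes entirely from the one exposure that actually recorded the highlight.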

1994 and before

In 1994, work that Debevec had done years earlier as a high school student, experimenting with his own photography in darkrooms, played a significant role in something he wanted to do for a friend. As a teenager, he had set off firecrackers and photographed them with very long exposures: “what looked to me like dots of light – would be on the film as great streaks of light,” says Debevec. Years later, as he got into CGI, he realized that such effects were not seen in CG images, or if they were, they were rather “heavy handedly added after the fact.”

A friend of Debevec’s, Brian Mirtich, had a chance to have an image from his research on dynamic simulation featured on the front cover of Berkeley Engineering Magazine. The problem for Debevec was that “still photographs of these falling bowling pins and spinning coins did not convey the motion inherent in the research”, so he started thinking about the problem of adding motion blur and simulating long exposures. “I wanted somehow to get that magic effect of the streaking of those bright regions,” says Debevec, and this was the moment that he realized that as you average together 20 or 30 conventional images to simulate motion blur you lose optical streaks. The specular highlights are clipped at a 100% level and because they are averaged down, the highlight becomes washed out or averaged out. But this doesn’t happen in the real world since there is no clipping.

Debevec decided to ask Brian to render two passes of his animation, one that was just the diffuse pass of the bowling pins or coins that were flipping round, and another that was just the specular pass. In those days the render was merely a Phong shader, so “the specular was never that strong in a high dynamic range sense,” says Debevec. “But what I did was convert these specular highlights to floating point images, making the specular much higher, and then added it all back together only converting it to 8 bit for the final output.” Converting the images to floating point allowed the specular highlight to be above 100%. As the images are averaged, the specular highlights do not grey out; instead they remain strong and produce vivid streaks, simulating much more exactly the firecrackers of Debevec’s youthful home photography. And HDR was born.
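The difference between averaging clipped and unclipped highlights is easy to see with one pixel followed over 20 frames. The sketch below is illustrative only: the intensity values are made up, and `to_display_range` stands in for whatever an 8 bit pipeline does when it clamps at 100%.

```python
def average(values):
    """Average a pixel over all frames, as a simulated long exposure would."""
    return sum(values) / len(values)


def to_display_range(value):
    """Clamp to 0..1, which is what storing a value in 8 bits effectively does."""
    return min(max(value, 0.0), 1.0)


# One pixel over 20 frames: a highlight sweeps across it in exactly one frame.
diffuse = 0.2
true_specular = 40.0  # hypothetical highlight, 40 times brighter than display white
frames_float = [diffuse] * 19 + [diffuse + true_specular]

# Conventional pipeline: every frame is clipped before the frames are averaged.
frames_clipped = [to_display_range(v) for v in frames_float]
blurred_clipped = to_display_range(average(frames_clipped))

# Floating point pipeline: average first, clip only for final output.
blurred_float = to_display_range(average(frames_float))
```

With these numbers the clipped pipeline leaves the streak at roughly 0.24, barely above the diffuse level, while the floating point pipeline keeps it at full white.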

Working near Debevec was Greg Ward. In 1985, Ward began development of the Radiance physically-based rendering system at the Lawrence Berkeley National Laboratory. Because the system was designed to compute photometric quantities, it seemed unacceptable to Ward to throw away this information when writing out an image. He settled on a 4-byte representation in which three 8-bit mantissas share a common 8-bit exponent. “When I first started developing Radiance in 1985-86, I had in mind separating the pixel sampling from the filtering steps in the calculation, which required passing floating-point data to do it right,” explains Ward. “Since none of the image formats or toolkits at the time seemed to handle floating-point very efficiently, I decided to roll my own.”
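A shared-exponent pixel of this kind can be sketched in a few lines. This follows the commonly described RGBE scheme (exponent byte stored with an offset of 128, an exponent byte of zero meaning black); the function names are hypothetical, and details such as rounding differ between real implementations, so treat it as an illustration rather than the Radiance source.

```python
import math


def float_to_rgbe(red, green, blue):
    """Pack three non-negative floats into four bytes sharing one exponent."""
    brightest = max(red, green, blue)
    if brightest < 1e-32:
        return (0, 0, 0, 0)  # too dark to represent: stored as black
    # frexp gives brightest == mantissa * 2**exponent with 0.5 <= mantissa < 1.
    mantissa, exponent = math.frexp(brightest)
    scale = mantissa * 256.0 / brightest
    # Very large inputs would overflow the exponent byte; not handled in this sketch.
    return (int(red * scale), int(green * scale), int(blue * scale), exponent + 128)


def rgbe_to_float(red, green, blue, exponent):
    """Unpack four bytes back into three floats."""
    if exponent == 0:
        return (0.0, 0.0, 0.0)
    factor = math.ldexp(1.0, exponent - (128 + 8))
    return (red * factor, green * factor, blue * factor)


packed = float_to_rgbe(1.0, 0.5, 0.25)
unpacked = rgbe_to_float(*packed)

# A very bright red next to a dim green: the dim channel loses its precision
# because all three mantissas must use the exponent of the brightest channel.
bright_and_dim = float_to_rgbe(1000.0, 3.0, 0.0)
```

The second example shows the trade-off of sharing one exponent: values far above 1.0 survive, but a channel much dimmer than its neighbours is quantized coarsely.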

“Had we spent a little more time examining the Utah Raster Toolkit, we might have noticed therein Rod Bogart had written an experimental addition that followed precisely the same logic to arrive at an almost identical representation as ours,” says Ward. He explains further, “the Utah Raster Toolkit, which I had only looked at briefly back then, had a poorly documented feature where the alpha channel acted as an exponent, just as I ended up doing in the Radiance RGBE format.” Bogart later went on to develop the EXR format at Industrial Light and Magic. Another motivation for Ward was that he hoped to use his calculations to come up with lighting values. “Throwing away the absolute values I had gone to so much trouble to compute seemed wasteful,” says Ward. “In the long run, using floating-point (HDR) images had many benefits in the post-processing phase, including tone-mapping possibilities that I began to explore in the late ’90s after seeing some of the exciting work done by Jack Tumblin and Jim Ferwerda.”

Wikipedia names Ward as the “founder of the discipline of high dynamic range imaging.” But in Ward’s own words, “I never thought I founded anything….I think credit should really go to Paul Debevec for HDRI, as my part in it was mostly limited to providing some useful tools so he could implement his ideas without too much programming.” Greg Ward, for all his modesty, produced the breakthrough open source Radiance software package and defined the Radiance image file format, which was initially the format of choice for everyone working in the field of HDR. While the file format stores floating point images in much less space, there were some things that could be improved. “Although RGBE is a big improvement over the standard RGB encoding, both in terms of precision and in terms of dynamic range, it has some important shortcomings,” says Ward. “Firstly, the dynamic range is much more than anyone else could ever utilize as a color representation. It would have been much better if the format had less range but better precision in the same number of bits.”

HDR was immediately of interest to film makers because it can record, or sample, lighting conditions extremely accurately and so allows previously unheard-of levels of realism. It fit perfectly with new models of global illumination and attempts to model radiosity. For the first time it was possible to shoot a set of exposures and combine them into one file, which could then be used to produce a set of 3D lights (normally 20 to 30) that would very accurately reproduce the lighting that was on set.

1996/1997

Debevec actually worked on two pieces of research that for a while were considered by most people to be the same: Photogrammetry, the ability to reconstruct models from still images, and HDRi. Debevec’s first film, The Campanile Movie made in the spring of 1997, was actually photographed in a manner to completely avoid HDR issues. “We choose a cloudy day to avoid needing multiple different exposures,” he says.

The Campanile Movie used image-based modeling and rendering techniques from his 1996 Ph.D. thesis “Modeling and Rendering Architecture from Photographs” to create photorealistic aerial cinematography of the UC Berkeley campus. To get the aerial shots around the top of the high Berkeley tower, Debevec and UC Professor Charles Benton actually flew a kite and took pictures from it for the photogrammetry and texture maps. In the end, the 150 second film was created with just 20 images from the eight rolls they shot. Few people know that the original storyboards had the Campanile falling over and crushing the hero, but that never made it into the final film.

1999

It took less than 2 years for the work in The Campanile Movie to make its way into mainstream feature films. Masters student George Borshukov, who worked on the project, went on to work at Manex Entertainment where he and his colleagues applied The Campanile Movie’s virtual cinematography techniques to create some of the most memorable shots in the 1999 film The Matrix. The backgrounds in the famous rooftop “bullet time” shots in the film used photogrammetric modeling and projective texture-mapping. Because the bullet time cameras all face each other, a virtual version of the rooftop was required. VFX supervisor John Gaeta had seen The Campanile Movie and immediately saw its potential.

The technology was also used to produce the landmark short film Fiat Lux, shown at Siggraph 99. The New York Times described the film as “An orgy of technical expertise… a thousand Ph.D.’s software engineers and animators burst into applause.” The short, set in an image-based model of St. Peter’s Basilica, featured hundreds of steel spheres and falling monoliths and was described by the film makers as “an abstract interpretation of the conflict between Galileo and the church, drawing upon both science and religion for inspiration and imagery.”

The title Fiat Lux not only expressed the film’s goal; it is also the motto of the University of California. The ancient church, built in AD 326 and rebuilt by Renaissance architects in the 16th century, was digitally rebuilt from just 10 photographs. To the church were added slow motion mirrored spheres and solemn black dominoes, all bathed in soft lighting. The 4500 frame animation took a hundred 167MHz computers and 20 PIIs working full time for three days to render.

2000-2001

Films such as Mission Impossible II and many others started using HDR imagery, and around 2001 Debevec’s HDR Shop program became available, allowing anyone to make HDR images and use them in production.

As the techniques became understood, the research work split into several related and yet different paths. Companies such as the Pixel Liberation Front (PLF) used the photogrammetry side of the initial research very effectively in films such as Fight Club. In Godzilla the animators at Centropolis needed a good low-res matchmove set for the big lizard to run down. A clever reverse solution was provided by PLF to build and match each building in order to place it by hand into the match moved live action footage, allowing for interactive effects to be added.

Inspired by Debevec’s work, several companies developed photogrammetry products. Canoma, originally written by Robert Seidl and Tilman Reinhardt, was sold to Metacreations and then Adobe. One product which fared much better was RealViz Imagemodeler, which was recently updated to version 4. RealViz also released Stitcher for building panoramas. Another successful program is PhotoModeler Pro, a Windows-based software program from Eos Systems, which allows the creation of accurate, high quality 3D models and measurements from photographs. PhotoModeler Pro 5 is widely used in the fields of accident reconstruction, architecture, archaeology, engineering and forensics, as well as 3D graphics. The latest PhotoModeler Pro 5 offers fully automated camera calibration, NURBS curve and NURBS surface modeling, a variety of file export formats and enhanced photo texturing.

While these companies advanced digital photogrammetry, it is worth noting that it was not long after George Eastman helped invent photography as we know it that the first use of the term photogrammetry was made. The term was first used in 1893 by Dr. Albrecht Meydenbauer, who went on to found the Royal Prussian Photogrammetry Institute. But it is with the advent of digital cameras that the techniques have found widespread application.

In 2000, ILM started working on a format for HDRi data. A few HDR formats existed but some were extremely large in size and none really stood out as a default industry standard. ILM had rejected 8 and 10 bit formats because they lack the dynamic range necessary to store high contrast images. 16 bit formats tended not to account for ‘over range’ elements, i.e. specular highlights, and tended to clamp the highlights. 32 bit float TIFF files were enormous in terms of storage.

File formats are simply “containers”. The full TIFF format was built on the original PIXAR Log Encoding TIFF, a log format with 11 bits per channel, developed in house at Pixar by Loren Carpenter to maximize the use of their film recorder. 96 bit TIFF is a ‘dumb’ format that stores a 32 bit float for each of three channels and compresses poorly with a fairly ineffective compression such as gzip. The Radiance file format came from research started in 1985 that was freely distributed beginning in about 1994. The original PIXAR TIFF had 3.6 orders of magnitude (3600:1) in 0.4% steps (1% in luminance is the edge of human perception). As noted earlier, the Radiance format has 76 orders of magnitude, which is, say, 62 more than you need for any level of light. This handles imagery from the shadow under a rock on a moonless night all the way up to the Sun itself. It is therefore vastly over specified for HDRi work.
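The “orders of magnitude” figures are just base-10 logarithms of the ratio between the brightest and darkest representable values. The sketch below reproduces the two numbers quoted above; the exponent limits used for RGBE are an assumption (an 8 bit exponent with an offset of 128 and the zero byte reserved for black), and `orders_of_magnitude` is a hypothetical helper name.

```python
import math


def orders_of_magnitude(brightest, darkest):
    """Dynamic range expressed as a power of ten."""
    return math.log10(brightest / darkest)


# PIXAR log TIFF: a 3600:1 contrast ratio, as quoted in the text.
pixar_orders = orders_of_magnitude(3600.0, 1.0)

# RGBE, assuming exponents from -127 to 127 and a normalized mantissa
# between 0.5 and 255/256 (these limits are an assumption of this sketch).
rgbe_brightest = math.ldexp(255.0 / 256.0, 127)
rgbe_darkest = math.ldexp(0.5, -127)
rgbe_orders = orders_of_magnitude(rgbe_brightest, rgbe_darkest)
```

Under these assumptions `pixar_orders` rounds to 3.6 and `rgbe_orders` comes out a little under 77, in line with the 76 orders quoted for the Radiance format.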

2002

In 2002, Industrial Light and Magic published C++ source code for reading and writing their OpenEXR image format, which had been used internally by the company for special effects rendering and compositing for a number of years. This format is a general-purpose wrapper for the 16-bit half data type, which has also been adopted by both NVidia and ATI in their floating-point frame buffers. Authors of the OpenEXR library include Florian Kainz, Rod Bogart (of Utah RLE fame), Drew Hess, Josh Pines, and Christian Rouet. OpenEXR has since grown to be the dominant format for HDRi files. The format can store negative and positive values at about 10.7 orders of magnitude (the human eye can see no more than 4). The OpenEXR specification offers extra channels for alpha, depth, and others, which makes it invaluable for high end effects work. As it is simply a ‘container’, the float format can be used for other things, such as optical flow stored as XY floating point values with a confidence level. Debevec thought that ILM’s release of the OpenEXR format was “really a great thing…they were putting the seal of approval of one of the best visual effects houses in the entire world on thinking about things this way and doing things in this sort of way”.
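What a 16-bit half value can and cannot hold is easy to check with Python’s `struct` module, whose `"e"` format code packs IEEE 754 half-precision floats. The sketch assumes that this layout (1 sign bit, 5 exponent bits, 10 mantissa bits) matches the half type used by OpenEXR; `to_half_and_back` is a hypothetical helper, not part of any OpenEXR API.

```python
import struct


def to_half_and_back(value):
    """Round-trip a Python float through a 16-bit half-precision float."""
    return struct.unpack("<e", struct.pack("<e", value))[0]


# Values above 1.0 ("over range" highlights) and negative values both survive.
highlight = to_half_and_back(37.5)
negative = to_half_and_back(-0.25)

# Near 1.0, neighbouring half values are 2**-10 apart (10 mantissa bits).
step_near_one = to_half_and_back(1.0 + 2 ** -10) - 1.0

# The largest finite half value; anything much bigger cannot be packed.
largest = to_half_and_back(65504.0)
try:
    struct.pack("<e", 70000.0)
    overflowed = False
except OverflowError:
    overflowed = True
```

This is the “run out of dynamic range” case: a half tops out at 65504, whereas RGBE trades that headroom the other way, with far more range and less precision.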

One of the first films ILM used their new EXR format on was the 2002 Time Machine. “The new floating point allows us capture a larger dynamic range,” said ILM VFX supervisor Scott Squires in a CGW article, “because it’s floating point we can get more than 16 bits of colour per channel”. At the same time, on the same film in fact, Digital Domain was working on their own floating point pipeline, but based on the RGBE file format. This led to Digital Domain first adding f-stop tools to Nuke. At this stage Nuke was still exclusively an in-house Digital Domain tool.

There is also a Microsoft format, scRGB, but it is not widely accepted because the number of bits it uses provides poor accuracy. Prior to OpenEXR, one of the best float formats was the SGI LogLuv format, developed by Greg Ward while he was at SGI and released in 1997. Ward set about correcting the mistakes he felt he had made with RGBE (the Radiance file format) in hopes of providing an industry standard for HDR image encoding. This new encoding was based on visual perception and designed so that the quantization steps matched human contrast and color detection thresholds much more closely. It is a good format and was used in many 3D apps prior to OpenEXR.

Ward himself still uses both the RGBE and SGI LogLUV file formats. “For HDR photos, I prefer LogLuv TIFF because it’s fast, accurate, and reasonably compact,” says Ward. “I still use Radiance’s RGBE format with Radiance, as retooling the whole package around LogLuv would be a ton of work for very little benefit. Either format provides sufficient accuracy for lighting design and human perception. OpenEXR is an excellent format for visual effects, as it has significantly higher accuracy equivalent to an extra decimal digit of precision over RGBE and LogLuv. This isn’t strictly necessary for perception, but provides a guard digit for extensive compositing and blending operations as required in your industry. The only drawback of OpenEXR is that you can sometimes run out of dynamic range, but I believe the circumstances where this is a problem are rare. OpenEXR is also quite complicated compared to Radiance’s format, and comparable to TIFF.”

While others explored photogrammetry or brilliantly extended the HDR formats, Debevec moved on to do his Light Stage research. The Light Stage has evolved from its first appearance at Siggraph almost 6 years ago. At Siggraph 2000 Debevec presented Light Stage 1.0 as a way of capturing the appearance of a person’s face under all possible directions of illumination. The original version allowed a single light to be spun around on a spherical path so that a subject could be illuminated from all directions while regular video cameras recorded the actor’s appearance. It literally worked by someone pulling ropes. It took a minute to capture one image of a face and the subject could not move, so often multiple attempts were made to get a good result.

Debevec and his team soon moved to LightStage 2.0 and the technology became more useable. The first major production to use the Light stage for a feature film was Spider-Man 2. In the first Spider-Man film, the villain wore a mask and rode a hover-board, making technical challenges much less daunting. In Spider-Man 2, the villain Dr. Otto Octavius (Alfred Molina), more commonly known as Doc Ock, has four octopus-like tentacles and doesn’t wear a mask. Thus, the digital effects team at Sony Pictures Imageworks (SPI) had to add CG tentacles to the actor in 178 shots and create photorealistic Doc Ock digital doubles for 38 shots.

At this point in Light Stage’s development, the reflectance field of a human face could be reliably captured photographically. The resulting images can be used to create an exact replica of a subject’s face from various viewpoints and in arbitrary, changing lighting conditions. To create Doc Ock’s face, Molina was seated in a chair and surrounded with four film cameras running at 60 frames per second. Above his head was an armature that rotated around the chair. On the armature, strobe lights fired at 60 frames per second. At the end of eight seconds, they had 480 images from each camera of his head in one position, but with different lighting conditions in each.

To create photorealistic movement for the actors’ faces, SPI developed a system based on motion capture. However, it was not a motion-captured performance. The actors were recorded using Vicon motion capture cameras. Each actor wore 150 tiny markers on his face, each marker a 1.5 diameter, retro-reflective sphere flattened on one side. From this a library of faces and expressions was built up.

To match the lighting on the set, the crew took high dynamic range images (HDRI) using fish-eye lenses to create 360-degree environments. Once a CG character’s head was surrounded with those global environments, a software algorithm could calculate which of the 480 images from each camera to blend together to create the character’s face. If the set was dark on one side, for example, the algorithm wouldn’t access images taken on that side of the actor’s face.
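The blending step can be pictured as a weighted sum: each photograph shows the face lit from one direction, and the environment says how much light arrives from that direction. The sketch below shows this for one pixel and three light directions. It is a generic illustration of image-based relighting, not SPI’s algorithm; the `relight` function and all the numbers are made up.

```python
def relight(basis_pixels, light_weights):
    """Blend one pixel from several single-light photographs.

    basis_pixels: (r, g, b) of the pixel as photographed under each light direction.
    light_weights: (r, g, b) intensity the environment supplies from that direction.
    """
    if len(basis_pixels) != len(light_weights):
        raise ValueError("need exactly one weight per basis image")
    result = [0.0, 0.0, 0.0]
    for pixel, weight in zip(basis_pixels, light_weights):
        for channel in range(3):
            result[channel] += pixel[channel] * weight[channel]
    return tuple(result)


# The same pixel lit from the left, from above, and from the right.
basis = [(0.5, 0.25, 0.25), (0.25, 0.25, 0.25), (0.5, 0.5, 0.25)]

# Hypothetical set lighting: white light from the left, nothing from above,
# strong blue light from the right.
environment = [(2.0, 2.0, 2.0), (0.0, 0.0, 0.0), (0.0, 0.0, 4.0)]

face_pixel = relight(basis, environment)
```

A direction with zero weight contributes nothing, which is the situation described above where images from the dark side of the set are simply not used.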

In the end, the HDR capture gave the lighting and the Light Stage provided the actor’s face, which matched the lighting conditions. This was mapped on to a CG face with the appropriate expression according to the script. The Light Stage was still only grabbing one very complex shot of the head, and not the actor actually acting. The work was a breakthrough, but still required someone to animate the action.

Several other important events happened around this time, which was a truly expansive period for HDRi. Light Stage 3 was shown for the first time at Siggraph 2002, with the now-familiar 156 LED dome. This recorded the performance in both visible and infrared light to produce an infrared-based alpha or matte channel. Gone was the swinging rib of Light Stage 2 and in its place was a fixed dome.

Also around this time, Ward – who had briefly worked at Shutterfly – took 3 months off between positions and built a robust cataloging tool which includes an HDR generator called Photosphere. Photosphere is free like HDRshop, but unlike HDRShop Ward developed the program for the Mac OSX platform. While the program does many things, it introduced a series of advances for HDR. First, it reads the exposure information from the file header and thus does a dramatically faster job of calibrating the camera and producing the necessary camera response curve calibration. This was always a very slow and time consuming process on HDRshop. The technique was also adopted by Adobe when it introduced HDR creation to Photoshop CS2. “I read the exposure from the Exif header, which CS2 does also,” says Ward, “but then I apply Mitsunaga and Nayar’s polynomial solution rather than the SVD method of Debevec and Malik. I’m not sure which is more accurate, but for sure the poly solution is faster”.

The other innovation of Photosphere was the automatic alignment and stabilizing of HDR images one to another. This feature was also incorporated in CS years later, but Photosphere is about 6 times faster. “Ironically, I offered to license my facilities to Adobe, but they hate not owning things outright,” explains Ward. “They ended up spending nearly two years developing their own methods, which don’t perform quite as well. The alignment algorithm is slow but passable, and their camera response recovery sometimes does strange things.”

Greg Downing, having worked at REALVIZ in France on their Stitcher and Image Modeler teams, developed a method for HDR Stitching which was featured in Debevec’s 2002 Siggraph image based lighting course. Downing would later move to Rhythm & Hues to help develop their second generation HDR pipeline and work as a lighting TD, including main character lighting, on Garfield the Movie.

2003

HDRi was used effectively for the Mystique character in X-Men 2 using a pipeline of image captures with a Sigma fisheye lens, Photoshop, HDRShop, and image-based lighting. HDRi had also been used briefly in the first X-Men movie, where Senator Kelly dies and becomes a pool of water.

2004

At last year’s Siggraph, version 2 of HDR Shop was released. It includes support for OpenEXR and can use camera RAW information rather than just JPEG images to make extremely high quality HDRs. HDR Shop 2.0 also implements multi-threaded operations, which speed up processing.

2005

Debevec’s team has broken through the critical limitation that stopped the actor from being able to act, presenting a paper entitled “Performance Relighting and Reflectance Transformation with Time-Multiplexed Illumination” at Siggraph 2005. The latest version, Light Stage 5 (no Light Stage 4 was ever shown), has turned into a giant bubble with masses of LEDs around the outside, all pointing in to the centre from every direction. With a high speed camera shooting an incredible 4800 times a second, the actor is filmed delivering a line of dialogue in real time with every possible lighting environment.

Since film runs at 24 frames per second, Debevec explains, “there is actually spare time in there to do other things.” The actor acts and the lights flash incredibly quickly as the camera captures it all at over 2000 times a second. When done, pick any HDR lighting reference plate – the desert, the ocean, the original St. Peter’s Basilica, whatever – and the computer can play the actor’s actual performance with the correct lighting. It is not doing image processing or colour correcting but instead discards all the lights that wouldn’t have been in the reference and plays the performance showing only the correct – exact – lighting to match. It even creates a black and white matte by running a few passes of the over 2000 captures that had the actor in perfect silhouette.

As you can imagine, there are a host of limitations. The camera technology only allows for the actor to be captured at 800 x 600 resolution and only for 4.3 seconds before the RAM of the high speed camera fills up and you have to break and download the data to a hard drive. But various optical flow techniques are built into the process to allow motion blur to be re-added to the image and to compensate for the otherwise extremely short exposure time of each “frame”. There are also some issues with noise in the shadow areas, and issues when the actor casts self-shadows, such as with a hand moving across their own face. These can produce a sort of slight ringing shadow.

In the closing paragraphs of this year’s Siggraph paper, the authors hint at a hybrid system applied to “traditional studio lighting arrangements.” Such a system would for the first time allow for NO lighting decisions to be made on-set during effects work and *any* lighting to be perfectly dialed in during post-production — matching the lighting of the environment the actor is composited into. In Light Stage 1 the team acquired 1800 light directions in about 1 minute but now the team can capture the performance with any lighting in real time.

The real world on-set version could perhaps be a system that would record fill, key light and rim light separately on set. This would allow a compositor to re-light a live action performance in exactly the same way a 3D artist does. According to Debevec, “it is analogous to an audio recording which records the various instruments of a band separately and then they are mixed together in audio post.” The system would not simulate the lighting, or colour correct the image in a DI sense; it would literally show you the same performance with any combination of lights on or off. The result would not only look real, it would be *the* real lighting for that new composite.

At Siggraph 2005 the same team is planning on showing initial tests of recording not only the lighting of the performance and all lighting info in one pass, but also recording a combination of multiplexed light sources and a geometry capture. The actor is recorded with all the lighting and all the geometry of the performance, allowing the team to derive light effects like venetian blind effects across the face or derive ambient occlusion passes and specular passes. Channels can be produced that allow the live performance to be adjusted like 3D, such as making the skin look wet or more diffuse or colored, allowing for what Debevec calls “virtual make up” design for the first time ever.

As for the current time limit on each take, Debevec notes that “there is now a 24GB option for the camera which would allow for 25 seconds of capture at 800×600”, so as technology advances, so too will the process become ever more viable.

In the years ahead this work will undoubtedly spread from the lab into mainstream film making. Given the speed at which past innovations have been adopted for practical situations, it most likely won’t be long.

Applications using HDR

In 2005 Autodesk released Toxik. Unlike its older brother Flame, Toxik was designed from the ground up to support an HDR OpenEXR pipeline. This includes full OpenEXR colour grading and compositing.

By far the most common compositor for OpenEXR film projects is Shake, whose floating point file option has allowed for its integration into most commercial feature film pipelines all around the world.

As mentioned above HDR tools were first added to NUKE in 2002 and these have been widely available for several years now.

Most 3D packages support the importing of light probes, and most renderers now also output OpenEXR floating point files, most notably Renderman, which is the most widely used render engine for floating point feature pipelines.

HDRi APPLICATION OVERVIEW

HDR Shop v2

There are two versions of HDR Shop: the free version 1 and an updated commercial version that includes OpenEXR support, the ability to read RAW files directly, scripting and multi-threaded operations. HDR Shop, unlike many other programs, allows for complex operations once the HDR is made. These include tools to help combine two captures in order to remove the photographer from the reflection.

HDR Shop 2.0 is available under a commercial license or an academic license. HDR Shop 2.0 is not available for free download for non-commercial/personal use. The price for a commercial license for the executable program for a two-year term is $600 for a single-user.

RADIANCE

Radiance is still the only rendering system that seriously targets lighting and daylighting design applications. For a while, LightScape (which became 3D Studio Viz) was a useful tool, but they’ve since abandoned lighting for more commercial markets. There’s just not as much money in building design as in visual effects. The program’s author Greg Ward jokes that “they spend billions of dollars constructing these things (buildings), but the design part is given short shrift every time”.

Radiance has been used at various stages on quite a few of the designs done by Arup in London. You can also check out the work done by Visarc on their website, as they use Radiance almost exclusively. Recently, Ward himself has been working to help with the design of the new New York Times building designed by Renzo Piano Architects.

As far as Ward knows, Radiance has not been used on any big budget special effects films, as it emphasizes accuracy over beauty and makes the wrong compromises for film. “Numerous times people have asked me to tweak this or that to make the output more beautiful at the expense of physical accuracy,” says Ward, “but that would make it just another rendering tool rather than what it is: a unique, validated lighting simulation tool.”

http://radsite.lbl.gov/radiance/

PHOTOSPHERE

A brilliant image catalogue program, written by Greg Ward, for the Mac OSX that includes very fast and very accurate HDR tools, such as automatic HDR stabilization, image/camera calibration and multiple file export options. It is a technically accurate and much more powerful version of Photoshop album or iPhoto – but without some of the GUI sparkle.

http://www.anyhere.com

(not www.photosphere.com – which is an unrelated image library company)

PHOTOSHOP CS2

Photoshop now includes HDR creation tools, but they are best accessed via Photoshop itself and not the companion Bridge program. See the fxguide Photoshop CS2 HDR article.

http://www.adobe.com/products/photoshop/main.html

EASYPANO PANOWEAVER

A cost effective PC stitching program that allows virtual tours via Tourweaver. Panoweaver is a professional tool that can stitch digital images to create 360-by-360 panoramas, and then produce virtual tours or output panoramas of up to 10000*5000 pixels.

http://www.easypano.com/index.html

RESEARCH PAPERS

Light Stage 5

http://projects.ict.usc.edu/graphics/research/LS5/index-s2005.html

Performance Geometry Capture

http://projects.ict.usc.edu/graphics/research/SpatialRelighting/

Light Stage 3

http://www.debevec.org/Research/LS3/

Light Stage 2

http://projects.ict.usc.edu/graphics/research/afrf/

Light Stage 1

http://www.debevec.org/Research/LS/

REFERENCES

- Reinhard, Erik, G. Ward, S. Pattanaik, P. Debevec, High Dynamic Range Imaging: Acquisition, Display, and Image-Based Lighting, Morgan Kaufmann Publishers, San Francisco, 2005.
- Seetzen, Helge, W. Heidrich, W. Stuezlinger, G. Ward, L. Whitehead, M. Trentacoste, A. Ghosh, A. Vorozcovs, “High Dynamic Range Display Systems,” ACM Trans. Graph. (special issue SIGGRAPH 2004), August 2004.
- Ward, Greg, Maryann Simmons, “Subband Encoding of High Dynamic Range Imagery,” First Symposium on Applied Perception in Graphics and Visualization (APGV), August 2004.
- Ward, Greg, “Fast, robust image registration for compositing high dynamic range photographs from hand-held exposures,” Journal of Graphics Tools, 8(2):17-30, 2003.
- Larson, G.W., “LogLuv encoding for full-gamut, high-dynamic range images,” Journal of Graphics Tools, 3(1):15-31, 1998.
- Larson, G.W., “Overcoming Gamut and Dynamic Range Limitations in Digital Images,” Proceedings of the Sixth Color Imaging Conference, November 1998.
- Larson, G.W. and R.A. Shakespeare, Rendering with Radiance: the Art and Science of Lighting Visualization, Morgan Kaufmann Publishers, San Francisco, 1998.
- Ward, G., “The RADIANCE Lighting Simulation and Rendering System,” Computer Graphics, July 1994.
