Here at fxguide we often cover work by VFX studios on large-scale – and big-budget – Hollywood releases. But what if you’re looking to make a large-scale film on a smaller budget? And what if that film involves giant metal robots? That was the challenge faced by Nvizible and visual effects supervisor Paddy Eason on director Jon Wright’s Robot Overlords, starring Ben Kingsley, Gillian Anderson and Callan McAuliffe. We find out about some of Eason’s innovative methods for completing 260 complex shots – from getting involved early and using on-set camera tracking to using a multi-cam array and stills to generate backgrounds for the film’s signature flying sequence.

fxg: Hi Paddy, how did you and Nvizible come to be involved in the show?

Eason: We’ve worked with the director Jon Wright on his previous two films, including Grabbers. So we were involved on Overlords very early, even before there was a script – the first I heard of it was an email from Jon where he’d come up with a two-page treatment with a basic idea of the world of the story and the basic setup, and he was just inquiring whether I thought it was a fun idea, and visual effects-wise whether we thought it was feasible. Of course, we said yes! And that then became a writing project for Jon – he co-wrote the film with Mark Stay.

I remained involved through the writing process in the sense that there’s a little writing group Jon set up, which he calls the Tiny Think Tank – that’s a loose collection of friendly writers, directors, producers, a visual effects person – all people who’ve maybe done one or two features, who read each other’s scripts at an early stage and give feedback. It’s a good way to test story but without going to producers and getting beaten up at that stage!

fxg: At that stage are you mainly giving visual effects feedback or also story and other creative input?

Eason: Any creative feedback. Obviously I would have a visual slant on things. It’s not really related to feasibility of visual effects. I write myself a little bit, so I had some story input. And on a couple of films some of my ideas have ended up in the film which is great. On Overlords it’s a little more formal and I have a story consultant credit, as well as visual effects. And that’s because of these meetings we had.

fxg: How did your involvement continue as writing continued?

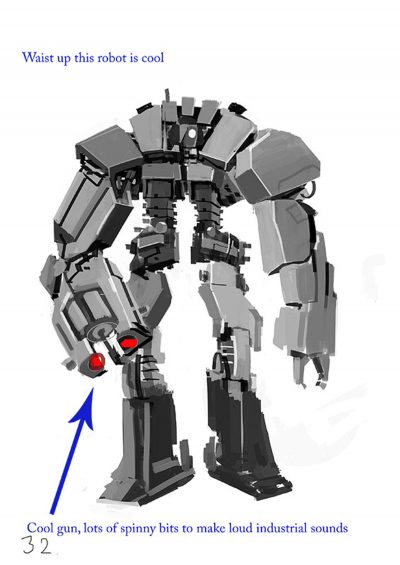

Eason: Alongside the writing of the script, Jon was assembling visual material – he produced a booklet of loose concept illustrations of moments from the film, which he did with a couple of concept artists, which he called the Robot Compendium. It was focused on the robots specifically, with a little block of text explaining what the sentry is, what the drone and cube are. That was something to help his own visual development. It also became something you could show to potential financiers to visually explain the film. ‘Oh, I get it, it’s a kid’s scifi movie with giant robots – I get it.’

We also put together a little short, a teaser, quite early on. That was just half a day of filming. We borrowed a RED camera, we had a Steadicam op. We went out to a suburban street in London, had a couple of extras. And we just filmed some empty streets as if after the robot occupation had occurred. The shot was a person’s POV walking down the street and there are some people leaning out the window saying, ‘Get indoors!’. The final shot of the teaser is a giant robot stomping around the corner walking up to camera and aiming its gun down the lens saying, ‘You’ve got 10 seconds to comply.’

That was funded by the BFI, and that became another tool to help sell the film. That was a period of about a year where we were aware of the script, the drawings, we worked on that teaser, and we did another teaser. It was that stage where you’re moving towards closing the finance and getting it greenlit. And normally for VFX people we’re really only involved after that stage – after a film is going into prep and greenlit.

fxg: When the film was greenlit, what did your role then become?

Eason: There was a definite change when the finance was in place and it became a real project. At that stage we moved onto the final robot designs. Jon brought on board somebody we introduced him to on Grabbers – he’s a creature designer/concept illustrator called Paul Catling and Paul is a world class artist. He designed the robots and we met every couple of weeks. Paul would very quickly rough out a whole load of options, Jon would pick his favorites and refine them. Paul also can move from a loose illustrative style into 3D building fairly soon, which is great for us because we know that Paul is going to be able to give us something that looks good from every angle and is an actual 3D shape rather than an impossible drawing.

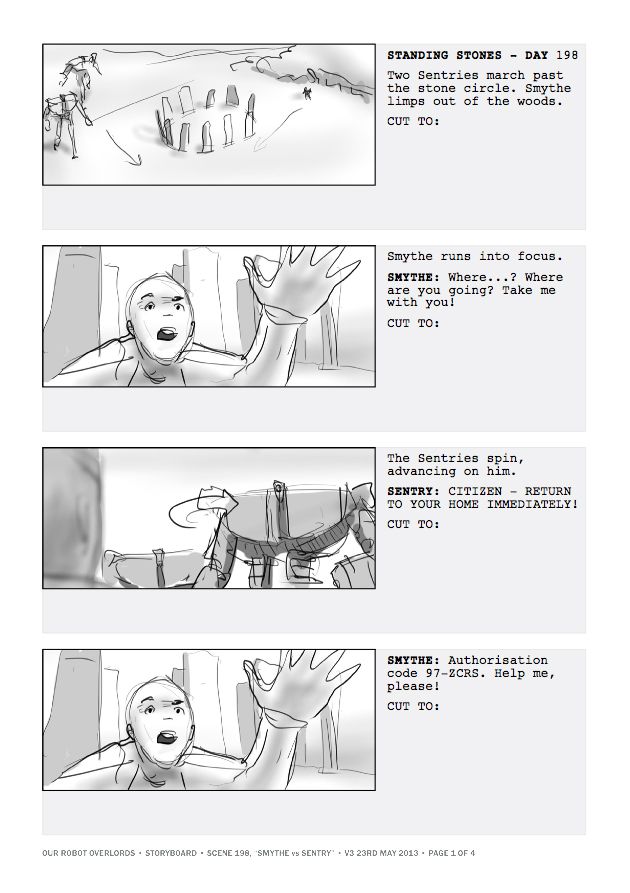

fxg: Were there storyboards and previs animatics done too?

Eason: Yes, all these things tended to overlap. Jon would storyboard scenes that needed it. We also moved into previs during pre-production, and carried on doing previs with the team in London even during the shoot. We would be a few days ahead of the shoot just getting previs ready for certain scenes. Even when we were shooting in Northern Ireland, every evening in my hotel I would download the latest previs from the London office, get it onto my iPad and grab Jon the next morning and show it to him and get his feedback.

fxg: Because this isn’t a huge budget film, were there things at that point you were doing or advising Jon to do that might be different on a much larger film?

Eason: Well, Jon is very pragmatic and does understand visual effects. So even in the writing of the script he would have half an eye on which things are practical and which aren’t. In the final stages of writing the script, I had started doing my VFX breakdown and budget for the film. Jon would see my breakdown as a work in progress and he would be able to look at the cost of a certain scene and go, ‘Whoa, that’s coming out quite expensive. I seem to be blowing a lot of VFX budget on that one scene that’s not that important to the story.’ So he and Mark would go and re-write that one scene a bit, say. The VFX budget did have an influence on the script.

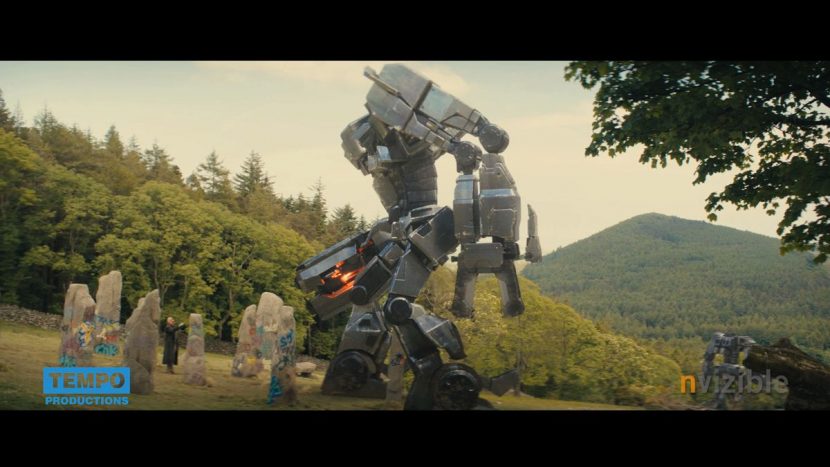

We came to a realization that big epic scenes of giant robot spaceships in the sky actually aren’t that difficult or expensive to shoot. You just need to shoot a landscape. Because you’re doing the big robot in the sky, it’s using assets you’ve got already. But what is difficult and expensive is direct interaction between the robots and people or sets or location. I think there may have been some scenes early on in the script of the kids riding on the robots – the sentry would have its hand out and the kid would be in the hand walking down the street. It would have been possible, but expensive. You’d either have to have a CG kid or some kind of gimbal rig for them to sit in. So we decided to limit the direct interaction to brief moments.

Live previs thanks to Ncam.

A couple of the kids get grabbed by the robots and yanked off their feet – there’s a lot of stunt work there but it’s brief and with lots of light shining at the lens, which actually made it more scary. There’s another brief moment when the robots are chasing the children and one of them kicks a car. We would have done more of that if we could but there’s a balance in terms of time and budget.

fxg: Did the budget impact anything you could do on set surveying?

Eason: We were relatively well prepared with storyboards and previs, and Jon is quite good at not changing what he wants. That kind of pre-planning does also free you up to be more spontaneous, too – most of the shots have a hand-held feel. On set we didn’t have a huge VFX presence. Most of the time it was me and a data wrangler or a VFX assistant and sometimes I would have one of my lead CG guys.

We LIDAR’d one location, a castle where a gun fight takes place between two different kinds of robots. We knew because it was a night scene we’d have to be casting light onto the castle walls, and we might have robots running up and down the walls. The footfalls were important too. So we invested in a day of LIDAR with 4DMax. That was also a useful day to have those guys with us because they were also able to scan the cast – we grabbed a cyberscan of Gillian Anderson and Ben Kingsley and so on, even though we probably didn’t need them – but it was useful to have them in the bank. Still, we could scan the other actors who were digi-doubles in a flying sequence. Most of the time on set we were taking measurements to help in tracking and matchmoving, and we also took lots of reference photos.

fxg: What challenges did the robots throw up – in terms of animation but also their metallic texture?

Eason: Jon wanted the robots to have an industrial crudity to them. He didn’t want a lot of fine detail – they had to be clunky and industrial. Nothing is painted, nothing is aesthetically finished. The idea is that the machine civilization of the robots only builds as much as it needs to subjugate a planet. They’ve studied the Earth, they know about human armaments and gravity, and they’ve just built robots that are good enough to do what they need to do. They’re all covered in dents and scratches.

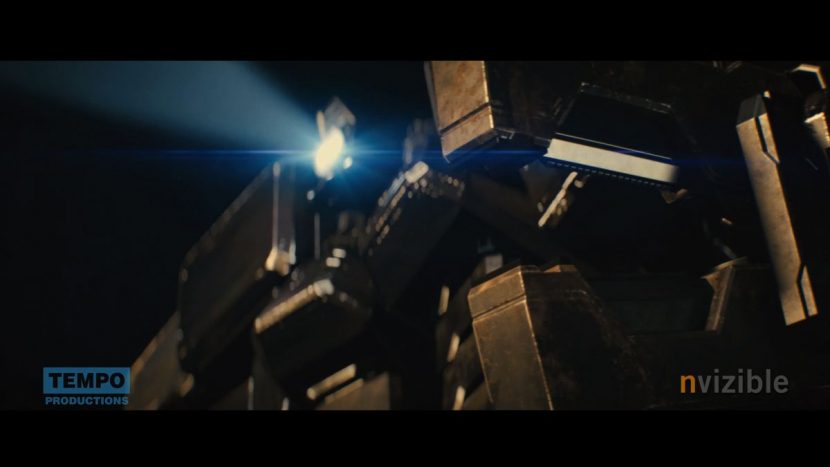

They had to be seen in sunlight and at night. We didn’t want to get into a whole lot of creative lighting. We used V-Ray’s physically based renderer. We shot a lot of HDRs as lighting reference so that the robots could be illuminated by whatever was there on location.

One of the main challenges was getting a big shiny metal thing to look heavy and big and real. The trouble with metallic objects is, because most of what you see is from a reflection, you don’t really get a dark shadowed side, a dark underside or bright top side. You don’t get the modeling that you would normally get from the direction of the light – the diffuse component is quite minimal on a metal object, it’s mostly reflections. If you’re not careful you end up with something that looks quite airy and light and doesn’t look heavy and big. So we had to mess about a bit to make sure we got something big and dirty and metallic, but also heavy and with a dark underside.

fxg: What were some of the compositing challenges in the film?

Eason: Well, Jon loves his lens flares and using anamorphic lenses and we had to match non-VFX shots very closely. The assistant editor assembled a reference reel for us of all of the other lens flares in the film – all the lens effects from the non-VFX shots. We just tried to use that beautiful warm blooming in our shots. We also had a great collaboration with a specialist company who we outsourced tracking to – Peanut FX in London. They are just brilliant at this stuff.

fxg: How did you accomplish the flying sequence towards the end of the film?

Eason: We broke down the boards, previs’d it and refined the designs of the Sky Ship, the drones, the sentries, the cube. It’s a flying sequence. You’re talking about shooting just your lead actor in a bluescreen studio and that’s it, and everything else has to be CG or VFX. That’s a scary prospect because you want this scene to tie in to the rest of the film and not feel different or fake.

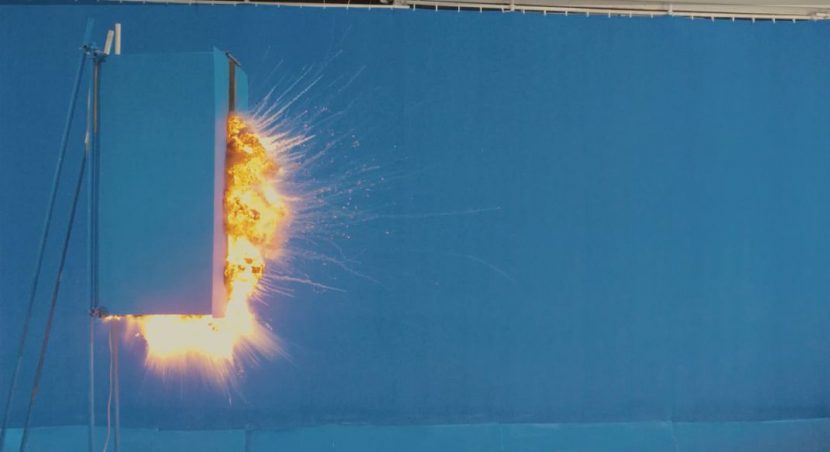

The only thing we built for the character Sean riding the Sky Ship was the tip of this thing we called the ‘pinnacle’ – the pointy bit on the front, just for him to lie on and have something to interact with, and for us to have something to frame up on. Then, a tool we brought to the production that really came into its own was Ncam, which is our in-house tool for real-time camera tracking. It feeds you a previs version of your CG assets, live comps them with the feed from the camera and shows the result to the camera operator or video village. It lets you see your CG in real time. In this case, what it gave us was the rest of the Sky Ship attached to our small set piece. It let the operator compose the shots much more effectively than if we were composing against a giant bluescreen.

We previs’d the whole scene, but we had lots of setups to get to so we had printouts of every shot on the wall – a big board on the side of the studio, and we would just go through them one by one. We had 30 or 40 setups to do in two days. We had the pinnacle on a big crane, a big farmer’s crane, and we could manipulate and move that. We had the camera on a crane so we could swing that around for aerial type camera moves, and a wind machine to blow his hair. It’s a big exterior where we had to ultimately comp Sean into a daylight scene.

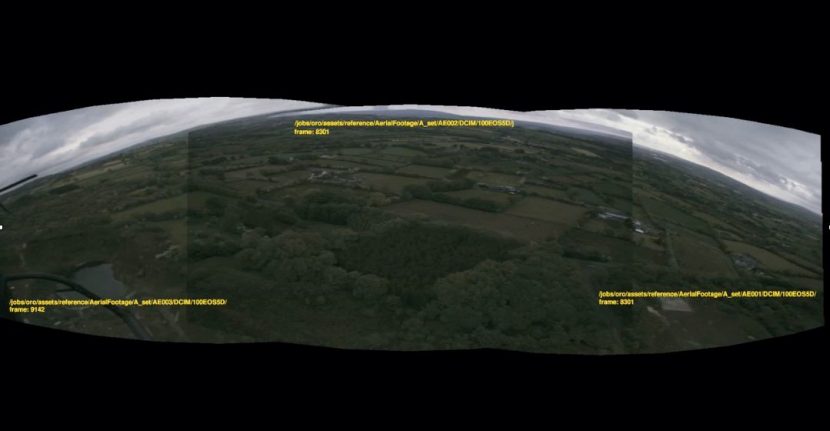

As well as the challenge of the CG spaceship and other CG elements, the remaining big challenge was the landscape below him and the sky above him. For that, we went out in a helicopter and had a multi-camera array to shoot the backgrounds. We could shoot a 160 degree field of view, out of which we could crop whatever angle we wanted shot by shot. That’s a fairly standard method of doing things, but we couldn’t afford on our budget to have three movie cameras – we couldn’t have 3 or 5 EPICs or Alexas.

But I worked out that if we had three Canon 5Ds shooting stills, they are really hi-res, so we’re covering our 160 degree field of view with the stills. We flew the path of the Sky Ship – basically a straight line – starting at about 1000 feet up and descending to maybe 100 feet. We flew that straight line several times with the helicopter orientated so I could shoot looking forwards, looking to the left, looking right, behind us, down, up – and shooting three frames per second. So you can imagine a helicopter flying along as slowly as he could while keeping it smooth.
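As a rough illustration of cropping a shot's view out of a 160 degree panorama, here is a minimal Python sketch. It is not Nvizible's pipeline: the function name `crop_columns`, the 16,000 pixel panorama width in the usage note and the assumption that angle maps linearly to pixel columns (a cylindrical-style panorama) are all hypothetical choices made for the example.

```python
def crop_columns(pano_width_px, pan_deg, shot_fov_deg, pano_fov_deg=160.0):
    """Return (left, right) pixel columns of a shot's view inside a panorama.

    Assumes (hypothetically) that horizontal angle maps linearly to pixel
    columns, with pan_deg = 0 at the centre of the panorama.
    """
    half_shot = shot_fov_deg / 2.0
    half_pano = pano_fov_deg / 2.0
    left_deg = pan_deg - half_shot
    right_deg = pan_deg + half_shot

    # The requested view must sit entirely inside the photographed coverage.
    if left_deg < -half_pano or right_deg > half_pano:
        raise ValueError("requested view falls outside the panorama coverage")

    px_per_deg = pano_width_px / pano_fov_deg
    left = round((left_deg + half_pano) * px_per_deg)
    right = round((right_deg + half_pano) * px_per_deg)
    return left, right
```

With a hypothetical 16,000 pixel wide panorama, `crop_columns(16000, 0, 40)` selects the central 40 degrees, and panning the same view 60 degrees to the right pushes it against the edge of the coverage. Anything wider than that raises an error, which is the practical limit of shooting a fixed field of view.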

We’d take the resulting thousands and thousands of stills back to London, take the lens distortion out, stitch them together into panoramic frames in DXO Optics Pro and then run them through optical flow algorithms in NUKE to turn a series of stills into what looks like flowing live action motion. We could adjust the speed at that stage to make it either flow slower or faster. We had enough stills shot fast enough that the motion analysis could render us new in-between frames that we were missing from not shooting on movie cameras.

That gave us the stabilized footage that we could render out as backgrounds for any given shot. It was one of those processes where there was a little bit of finger crossing – is this going to work? All my VFX instincts told me it should, but until we saw the first rendered backgrounds it was a slight concern. The nightmare scenario was that it just didn’t work for whatever reason, and then we would just have to generate CG landscapes – which would have been a major challenge.
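For readers curious about the retiming arithmetic behind the optical flow step described above, here is a minimal Python sketch. It only works out which pair of stills each output frame falls between and how far along it sits. The actual motion analysis and warping happened in NUKE and is not reproduced here. The 24 fps output rate is our assumption (the interview does not state it), and the function names and the `speed` parameter are hypothetical.

```python
import math
from fractions import Fraction


def inbetweens_per_pair(out_fps=24, stills_fps=3):
    """Number of new frames needed between two consecutive stills."""
    return out_fps // stills_fps - 1


def retime_sample(out_frame, out_fps=24, stills_fps=3, speed=1):
    """Map an output frame to (earlier still, later still, position between them).

    The position is 0 at the earlier still and approaches 1 at the later one.
    speed > 1 makes the background flow faster, speed < 1 slower.
    """
    # Where this output frame lands in the stills sequence, measured in stills.
    pos = Fraction(out_frame, out_fps) * stills_fps * Fraction(speed)
    earlier = math.floor(pos)
    return earlier, earlier + 1, pos - earlier
```

Under those assumptions, stills shot at three frames per second need seven synthesized frames between each pair to play back at 24 fps, and output frame 4 sits exactly halfway between the first two stills. Doubling `speed` halves the number of in-betweens the interpolation has to invent.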