Joss Whedon’s Avengers: Age of Ultron not only reunites and introduces a formidable and popular cast of Marvel characters – it also sees the re-assembling of several major visual effects studios to create characters, environments and numerous effects for the film.

Avengers re-assemble

Advancing Hulk

Holo-J.A.R.V.I.S., Holo-Ultron

Ultron is born

Ultron in his Prime

Quick Quicksilver

The magic of Scarlet Witch

Seoul searching

Envisioning Vision

Battle for Sokovia

Tribute to the heroes

Putting the UI in UI

Powerful Previs

BONUS: Stereo conversion

Guiding the Age of Ultron effects effort was VFX supervisor Chris Townsend and VFX producer Ron Ames, who both oversaw a huge slate of digital work by a vast array of vendors. “It is incredibly important to get the casting of your VFX vendors right,” suggests Townsend. “Just like you would cast your actors, you cast your visual effects team too. We’ve built up relationships with these companies and we know their strengths and where their creativity lies and what their skill set is, so we’ve been very fortunate that we can build on that.”

In this article, fxguide finds out from Townsend and many of the VFX vendors on the show – including ILM, Trixter, Double Negative, Animal Logic, Framestore, Lola VFX, Territory, Perception, Method Studios, Luma Pictures and The Third Floor – how the studios were cast and how the biggest characters and biggest scenes were carried out.

Above: watch our breakdown of ILM’s incredible VFX for Hulk, in this video made in conjunction with our media partners at WIRED.

Avengers re-assemble

The action: The film opens with a one-shot tie-in sequence of the Avengers – Hulk, Iron Man, Captain America, Thor, Hawkeye and Black Widow – as they raid a Hydra outpost in the fictitious Eastern European country of Sokovia in search of Loki’s stolen scepter. ILM combined numerous plates and CG elements to finalize the shot.

Casting ILM: “It was one of three mega shots in the film – it’s over a minute long,” notes Townsend. “It was shot over a period of about 7-10 days – a massive undertaking. We spent a month previs’ing the sequence with The Third Floor (see Powerful Previs, below) and it really was in Joss’ mind the idea of showing that these individual characters are all fighting but they’re all acting as a team and helping each other. ILM spent months on it – and we could have spent many many more months on it – it was the last shot we finaled.”

The shot features several hand-offs from one character to another. “And then,” says Townsend, “in a single shot we wanted to bring them all together in a single splash page – it was very carefully art directed by Joss exactly how he wanted the characters to be and in which order and where they should be placed in the frame. It’s very much – and here we are, we’re back in the Avengers – it’s on! The idea is we’re straight into it, it’s an Avengers movie, we’re re-introducing all the characters as individuals and then showing them as a team, which is what they are.”

The characters are battling Hydra combatants in a snow-covered forest during the sequence. To get fast-moving plates, production utilized a Spider-cam rig set up on location (at Bourne Wood in the UK). “Then we had full environments as cycs that ILM re-created digitally,” says Townsend, “projecting those as virtual worlds when we were doing camera moves that you couldn’t do in the real world. Then they stitched it together – so there’s 8 or 9 different major stitches in the sequence, and added in various CG trees and snow.”

“Also, some elements were shot separately, including Scarlett Johansson as Black Widow because she was pregnant at the time. We shot elements of Chris Hemsworth as Thor in London on a greenscreen set. We shot greenscreens of Chris Evans as Captain America riding a motorbike – then it was combined with photography from Italy near the castle. It’s a massive massive shot we stitched together.”

Advancing Hulk

The action: Perhaps one of the most challenging effects creations in Age of Ultron was Hulk, Bruce Banner’s ‘Other Guy’ played by Mark Ruffalo. Already seen in previous Marvel films and finely realized in the first Avengers, the character was once again revamped for scenes of both widespread destruction but also quieter and more emotional moments. ILM drew on its own previous Hulk discoveries to deliver the creature.

Casting ILM: “We needed a strong player who had a core strength and could take on the strong character animation work,” says Townsend. “There was lots of destruction and big action pieces – there’s only a couple of companies who can handle that and ILM was one of the obvious partners. The first Avengers Hulk turned out so good and in my mind it was the best that had been created, but I felt in my mind that there was still a lot of places we could explore, in terms of trying to create a more realistic character, something that the audience would believe rather than just thinking it was a really good CG green character.”

Above: Black Widow calms Hulk down.

“We talked about pulling the model apart and almost going back to ground zero and what worked and what didn’t,” adds Townsend. “Our challenge was being able to create the comic book action figure and that splash page look, but then creating something as a character who is an ensemble cast member who you could believe was as there as Hawkeye (Jeremy Renner) or Tony Stark (Robert Downey Jr.). You should be able to believe them in exactly the same way and you shouldn’t pay any more attention to this massive green guy as you would to the other characters. Ben Snow, ILM’s visual effects supervisor, was very enthusiastic about wanting to build a better Hulk and building a better performance. I think there are lots of shots looking into Hulk’s eyes and you really see into his soul, and I think that’s an incredible challenge making a massive green comic book guy come to life.”

For ILM, that opportunity to build on what had already been accomplished with Hulk led to further development of the character’s facial animation system. “One of the things that had happened on the first film,” relates Snow, “was that the facial library on Hulk had been built in tandem with the facial library that had been built on Banner, which is Ruffalo – the Ruffalo version of Hulk. So one of the first things we wanted to do was make sure Hulk’s facial library matched. The first thing we did was go back, look at our Mark Ruffalo material and some new material we captured of him, and say, ‘Let’s get Hulk’s shape library much closer to Mark’s, and much more of a correlation to Mark Ruffalo.’”

“We revamped the entire model,” adds ILM animation supervisor Marc Chu, “including the facial shapes, and really tried to work on matching the intent we saw from Mark Ruffalo when he was on set, or pushing it to where Joss needed to for the comic action beats. We explored making it more in that comic realm. We pushed how far he could open his jaw – it is dislocated in some cases!”

Indeed, ILM still had to enable extreme facial movements, but their new base was an underlying – and re-built – muscle system for both the face and body. This included, explains Snow, “an underlying sub-mesh which was like a tet-mesh and on top of the muscle shapes, to make the muscle shapes more sophisticated and then we’d ride everything on a full blown fascia simulation. So we got better skin sliding which I felt was one thing that could be improved.” The new muscle system was pitched by Sean Comer and Ebrahim Jahromi.

The process began with model supervisor Lana Lan and creature supervisor Eric Wong who took the sculpt of Hulk through a ‘calisthenics’ session that matched one by on-set Hulk reference performer and body builder Rob de Groot. “We filmed it with three different cameras,” says Snow. “He was doing all the sorts of things you might do in a range of motion for testing a rig. And you could really see the secondary movement of the muscles, how the muscles tensed. So we sat down and looked at all of that stuff and said look, really we need this to be full outside-in situation. ILM’s muscle system in the past has used extensive underlying muscle rigs, but we’ve always started on the outside – we’ve sculpted the outer-body and then we’ve made these underlying systems really to help with the physics of it.”

“What we did was talk to some doctors, professors of medicine, locally,” continues Snow. “There’s been quite a bit of research of when you flex a muscle, the way it retains volume – it can get firmer, its shape will change, all of that stuff. We were able to leverage the research so that in an action like an arm bend, then the muscle doesn’t just squash, it actually changes shape, it becomes different in some way, and that further gets communicated back to the surface.”
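The volume-retention idea Snow describes can be illustrated with a deliberately simple model. The sketch below is not ILM’s system; it assumes an idealized cylindrical muscle (the function name `flexed_radius` and all numbers are hypothetical) and shows how holding volume constant forces a muscle to bulge as it shortens.

```python
import math


def flexed_radius(rest_length: float, rest_radius: float, flexed_length: float) -> float:
    """Radius of an idealized cylindrical muscle after it changes length.

    Assumption for illustration only: the muscle is a cylinder whose
    volume (pi * r^2 * L) stays constant when it contracts.
    """
    if rest_length <= 0 or rest_radius <= 0 or flexed_length <= 0:
        raise ValueError("lengths and radius must be positive")
    rest_volume = math.pi * rest_radius ** 2 * rest_length
    # Solve pi * r^2 * flexed_length = rest_volume for r.
    return math.sqrt(rest_volume / (math.pi * flexed_length))


# A muscle that shortens from 10 units to 8 units gets thicker.
bulged = flexed_radius(rest_length=10.0, rest_radius=2.0, flexed_length=8.0)
print(f"rest radius 2.0 -> flexed radius {bulged:.3f}")
```

A production muscle rig changes shape in far richer ways than a cylinder can, but the same constraint (shorter means thicker) is what gets communicated back to the skin surface.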

Above: watch a clip from the Hulkbuster sequence.

The result was that ILM could run an auto-simulation on Hulk’s skin. “The animator would do their animations,” explains Snow, “and we’d run an auto sim that would take a few hours overnight. My argument was I don’t want to come in and see a render of Hulk without muscles properly deforming. So it gave us in a way the best of both worlds – the auto sim gave us correct believable physical looking muscle sims and we got subtleties and nuance that really we’d have to consciously animate in there.”

ILM artists could still make alterations to Hulk’s shapes where necessary. “We still gave animators the ability to dial shapes to add emphasis or intent,” notes Snow, “but we ended up moving up towards a lot more physical and correct thing where you had an underlying three-dimensional mesh driving a skin mesh that would slide in two dimensions and then there was a soft spring mechanism attaching those. I feel like his muscles in that area alone have really got a lot better.”
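As a rough illustration of the “soft spring mechanism” Snow mentions, the sketch below attaches a skin point that slides in a surface’s two-dimensional parameter space to an anchor supplied by the underlying mesh. Everything here is an assumption made for the example: the `SkinPoint` class, the `step` function, the stiffness and damping values and the 24 fps time step are hypothetical and are not taken from ILM’s tools.

```python
from dataclasses import dataclass


@dataclass
class SkinPoint:
    """A skin sample that slides in the surface's 2D (u, v) space."""
    u: float
    v: float
    du: float = 0.0  # velocity along u
    dv: float = 0.0  # velocity along v


def step(point: SkinPoint, anchor_u: float, anchor_v: float,
         stiffness: float = 40.0, damping: float = 8.0,
         dt: float = 1.0 / 24.0) -> None:
    """Advance the point one frame with a damped spring toward the anchor.

    Semi-implicit Euler: velocity is updated first, then position uses
    the new velocity, which keeps this small system stable at film frame rates.
    """
    accel_u = stiffness * (anchor_u - point.u) - damping * point.du
    accel_v = stiffness * (anchor_v - point.v) - damping * point.dv
    point.du += accel_u * dt
    point.dv += accel_v * dt
    point.u += point.du * dt
    point.v += point.dv * dt


# The muscle underneath moves, so the anchor jumps; the skin follows softly.
skin = SkinPoint(u=0.0, v=0.0)
for frame in range(12):
    step(skin, anchor_u=1.0, anchor_v=-0.5)
print(f"after 12 frames: u={skin.u:.3f}, v={skin.v:.3f}")
```

Because the spring is soft, the skin lags and overshoots slightly before settling, which is the kind of secondary motion that reads as skin sliding over muscle.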

To help Ruffalo get into character this time around, ILM devised a unique ‘monster mirror’ live capture toolset that would drive a Hulk rig that the actor could view in real-time. “ILM’s shape library for facial animation is something we call FES,” says Snow, “and it’s based on facial action units. We worked out a slightly reduced set that were derived from actual final Hulk shapes that could be driven real-time. Mark would sit down and we had a laptop set up and initially two laptops slaved to each other. He would be able to pull shapes in this system and then you could see what Hulk would do.”

“Early on during pre-production while we were building assets,” adds Chu, “we built a rough version of Ultron and Hulk and created this monster mirror setup that we could bring to set or the studio – it’s very portable. That allowed the actor to quickly sit down, see their avatar selves as Hulk or Ultron – to get into the mood. It’s really like digital makeup with real-time feedback. The great thing I like about it, is that it’s extremely portable, they could have it in their trailer if they wanted to and do it privately before they go out on set. It was fun to see people try it out – anybody could sit in front of it and it would basically figure out their facial shape and re-target that onto whatever creature it was.”

The system was markerless and able to derive Ruffalo’s performance without much latency. “It was a pretty impressive project and it’s something we felt would really help,” says Snow. “The main thing, though, was to give Mark the tools to get into character. He felt this would be a useful tool for him. Because it was using a facial library derived from Hulk, it gave a pretty good representation. One of the problems going to full facial motion capture, it’s not necessarily going to be the same curves as a real time system’s going to give you. There’s a certain extrapolation where we weren’t saying it will be exactly the same. For that reason, we talked to Joss about this, we said do we want to use this on-set? Joss felt I really want to be looking at the acting – not this facsimile of the acting and obsessing over that.”

In that regard, too, ILM adopted more of a ‘Davy Jones’ approach to acquiring Ruffalo’s on-set performance. That meant that, although a helmet-cam system was used in places, animators mostly referenced Ruffalo’s raw acting. “Mark Ruffalo had performed Hulk on the previous film and he provided our animators with a good reference,” states Snow. “Going into this film, one of the things Chris Townsend had asked us to do was, Can we tie everything into the actors, can we set it up for mocap? We did a lot of preparation early on for motion capture. And we recorded a bunch of mocap with Mark for Hulk. And on set for scenes with Black Widow we had him with a helmet cam and full motion capture suit actually acting it against her. But it was also just taking his raw performance, too.”

The ‘lullaby’ scene with Black Widow, in particular, was the first time the audience got significant close-up time with Hulk. “We put in a lot of work for the hands in terms of layering, certainly using scans and detailed studies of the hands,” says Snow. “We shot some specific stuff just to study that more. It was a lot of detailed paint work to get the nuances. Oddly enough the biggest problem was that we had had Mark in those shots, so he was wearing cricket gloves to try and give them a bigger volume. We talked about having them green – they weren’t able to get them green for us in time for the shoot. We have her stroking his arm and she’s stroking it up and down the cricket glove and we had to break Scarlett’s performance and re-match it. Just getting the two to blend together was hard enough. We looked at the nails and the way you have little ridges in the nails, used dirt to our advantage. There was a lot of detailed hand-work to get it up to speed.”

Hulk was rendered in RenderMan, with ILM paying close attention to his sub-surface scattering, especially in terms of how deep the light penetrates on cheekbones versus say a nose. “It’s fairly labor intensive,” offers Snow, “because these objects aren’t built out of flesh and bone. So we built a skull inside there and we did stuff so that we could then calculate, ‘OK, what would Hulk’s skull look like? How far would a ray penetrate to the bone?’”
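Snow’s question about how far a ray penetrates to the bone is, at its simplest, a ray intersection query. The sketch below is only an illustration under a strong assumption: the skull is stood in for by a sphere, and the function name `depth_to_bone` and all coordinates are hypothetical. A real setup would intersect rays with the modeled skull geometry inside the renderer.

```python
import math
from typing import Optional, Sequence


def depth_to_bone(origin: Sequence[float], direction: Sequence[float],
                  skull_center: Sequence[float], skull_radius: float) -> Optional[float]:
    """Distance from a skin point to a spherical stand-in skull along a ray.

    Returns None when the ray misses the sphere or the sphere lies behind
    the ray origin. The origin is assumed to sit outside the sphere.
    """
    length = math.sqrt(sum(d * d for d in direction))
    if length == 0.0:
        raise ValueError("direction must be non-zero")
    unit = [d / length for d in direction]
    to_origin = [o - c for o, c in zip(origin, skull_center)]
    # Solve |to_origin + t * unit|^2 = radius^2 for the nearest t.
    b = sum(u * o for u, o in zip(unit, to_origin))
    c = sum(o * o for o in to_origin) - skull_radius ** 2
    discriminant = b * b - c
    if discriminant < 0.0:
        return None
    t = -b - math.sqrt(discriminant)
    return t if t >= 0.0 else None


# A skin point 3 units above the skull center, looking straight inward.
print(depth_to_bone((0.0, 0.0, 3.0), (0.0, 0.0, -1.0), (0.0, 0.0, 0.0), 1.0))
```

A shallow result (bone close to the skin, as on a cheekbone) would suggest less scattering depth than a deep one (as on the fleshy part of a nose).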

Not only was ILM’s Hulk called on for hero fighting sequences and the more nuanced interactions, but the character is also realized in ‘Angry Hulk’ form when Scarlet Witch takes control of his mind. Banner and Stark, foreseeing the possibility of Hulk raging out of control, had developed ‘Veronica’, a Hulk-busting unit that Stark as Iron Man wears to tackle the character’s angry form as the two wrestle. Shots of Veronica being launched from an orbiting satellite were handled by Luma Pictures, with ILM capitalizing on significant experience in rendering Iron Man to bring the Hulkbuster suit to life.

For Angry Hulk, ILM’s initial brief was to make the character less green and more gray – a design element that was ultimately brought back more into the realm of the original Hulk. “We did do things like add more veins to him, rosacea, and a lot of different layers of detail – the way Joss described it was as if he was a little bit strung out, like a heroin addict. That’s where the ideas of the sunken eyes and rosacea came from.”

Originally the frenetic sequence between the Hulkbuster and Angry Hulk was envisaged as being ‘tobacco filter yellow’ in its color palette. “So we started doing Hulk development and showing Hulk tests and then put the lookup in,” says Snow. “With Hulk we found with our initial round that, ‘Wow it works great,’ then we turn on the tobacco filter and, ‘Whoa it looks…Oh.’ One of the things they wanted to explore was making him almost totally gray, not totally gray but almost no green. So we were reining back and back and then it became fighting the filter a little bit. I have to say that gray is an awful color to be doing any creature in, because it’s just going to go where it goes. And Hulk is green, so luckily Marvel said we want to rein back a little bit. And there’s less tobacco in the end.”

Ship breaking: The Avengers battle Ultron at a Johannesburg shipbreaking location, a sequence that ultimately leads to the Hulkbuster versus Hulk scene. As Iron Man and Ultron make aerial manoeuvres, they weave past the corpses of old ships – a completely real location, as Townsend explains. “A small team was sent to Bangladesh to shoot both aerials and ground based shots of the beached ships. What you see there is real; we changed a ship’s name, painted out a few people, but that bizarre exterior location is real, and a ship breaking yard on the Bangladeshi coast.”

Holo-J.A.R.V.I.S., Holo-Ultron

The action: Looking to convince Banner of the viability of his as yet unrealized Ultron global defense program, Tony Stark shows Bruce Banner holographic representations of J.A.R.V.I.S. (Paul Bettany) and the A.I.-like control structure of Loki’s scepter. He sets J.A.R.V.I.S. to work on combining the operating system code structures and ultimately Ultron comes to life – still just as code at this point. Ultron soon seeks out more information about his prime directive – peace on Earth – via the internet, shown also as a 3D graphical representation of cyberspace. This work was completed by Animal Logic.

Casting Animal Logic: “Animal Logic is incredibly skilled at creating things you’ve never seen before,” describes Townsend, “and they’re very aware of storytelling and the fluidity of the cut and the way things change constantly in Marvel’s world. Marvel relies a lot on the graphics and the storytelling ability of the visuals. And Animal Logic have a great sensibility with that stuff. I love their attitude.”

Artists at Animal Logic explored initial concepts with Townsend for how an operating system for both J.A.R.V.I.S. and ultimately Ultron (James Spader) would look. “Chris had some great ideas about cause and effect type stuff,” states Animal Logic visual effects supervisor Paul Butterworth. “There was a central processing unit sending out information, it would fill up buckets of areas and they would open up. It’s all active, all moving, all twisting.”

J.A.R.V.I.S.’ holographic representation was conceived as an orange series of arcs. “It looks almost like a traditional orrery,” says Butterworth, “with planetary rings that move around and rings made out of blocks of data. These were moved to be manipulated by audio. When you see J.A.R.V.I.S. or hear him talking, you see all this stuff vibrate almost like a volumetric equalizer around on his arcs. He can get agitated as he spins around, move his rings around in weird ways.”

On the other hand, Ultron’s hologram was intended to be even more sophisticated than Tony Stark’s already high-tech J.A.R.V.I.S. “He has multiple blue shells of bio artificial technology and weird faceted spheres that crease and move around and fold,” describes Butterworth. “Sometimes it can be like a rhomboid, sometimes a sphere. Between each of those layers are these fibrous connections that have energy light, almost like plasma light. That data then grows a new layer or shell on top of it. There’s almost this kind of panels of data almost like circuitry. Data will move to another layer.”

Before shooting, some rough style frames had been designed so that Downey Jr. and Ruffalo could get a sense of what they would be looking at. “Robert in particular is fantastic at focusing at a spot in mid-air and knowing what he’s controlling and he’ll grab something off the table and switch some switches,” says Butterworth. “Our challenge is then to place things in the eyeline that animate and make sense of his performance. It’s about reverse engineering in a way.”

The final holographic looks were achieved mostly in Maya and Houdini, the latter importantly allowing for procedural effects. Using deep compositing in NUKE, which helped in adding in the actors amongst the holograms, artists then worked to add bokeh effects and maintain a shallow depth of field in many of the shots. “From previous holographic work,” notes Butterworth, “we’ve learnt how to manipulate the plate to take the exposures down and put the hologram on top so it feels like you’ve photographed it in that environment. Then there’s subtle harmonics of flicker and light and aberrations to give the viewer that sense that this is a projection system rather than just an object.”

The holograms remained complex creations. “They really actually can be nasty things to make,” admits Butterworth. “If you did have an array of points of light in reality, there’s nothing from the front points culling the back points – it would just become this white or colored cloud. So we take that movie leap of faith where from whatever angle you’re looking at it, we’re culling out the back information – you’re seeing more into the front face and into the volume to give it a volume as such. But there’s a lot of clever cheating to give them shape and form.”
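The culling Butterworth describes can be sketched in a few lines. This is not Animal Logic’s implementation; it assumes the hologram is a shell of points around a known center and uses a hypothetical function, `cull_back_points`, that keeps only the points whose outward direction faces the camera.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def cull_back_points(points: Sequence[Point], center: Point, camera: Point) -> List[Point]:
    """Keep points on the camera-facing side of a roughly convex point shell.

    Assumption for illustration: each point's outward normal is simply the
    direction from the shell's center to that point.
    """
    kept = []
    for p in points:
        normal = tuple(pi - ci for pi, ci in zip(p, center))
        to_camera = tuple(ci - pi for ci, pi in zip(camera, p))
        # A positive dot product means the point faces the viewer.
        if sum(n * t for n, t in zip(normal, to_camera)) > 0.0:
            kept.append(p)
    return kept


shell = [(0.0, 0.0, 1.0), (0.0, 0.0, -1.0), (1.0, 0.0, 0.0), (0.0, 0.6, 0.8)]
visible = cull_back_points(shell, center=(0.0, 0.0, 0.0), camera=(0.0, 0.0, 5.0))
print(visible)
```

Re-running the test for every camera position is what makes the cheat hold up “from whatever angle you’re looking at it”: the glowing points on the far side never stack up into a featureless cloud.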

As Ultron in code form begins to learn at an astronomic rate, he races from just a dark void to a complex web of shapes – cyberspace. “Here,” details Butterworth, “you’re getting all this other kind of data and other constructs. You see J.A.R.V.I.S. as a piece of software within this environment connecting and calling out the data – it’s a weird esoteric world and you don’t know what’s up or down. How do we represent this space? What does data look like? Is it pictures, audio, sound?”

“We tried to work out how to represent that gargantuan amount of data and quite quickly settled on fractals and bulbs,” adds Butterworth. “They had a sense of order about them but also chaos. It didn’t matter how close you got to it there was an ever increasing layer of detail. Things could form and then break away and form another structure. Then we tried to make controls out of the fractals – they have a mathematical structure but if we have to art direct it, it was a nightmare!”
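To give a feel for why such forms show detail at every scale, here is a minimal escape-time sketch. It assumes that “bulbs” refers to Mandelbulb-style fractals, which the interview does not confirm, and it uses the commonly published power-8 formula rather than anything specific to the production. The function name `mandelbulb_escape` and its parameters are hypothetical.

```python
import math


def mandelbulb_escape(cx: float, cy: float, cz: float,
                      power: int = 8, max_iter: int = 20,
                      bailout: float = 2.0) -> int:
    """Iterations before the point (cx, cy, cz) escapes, capped at max_iter.

    Points that never escape are treated as inside the fractal.
    """
    x = y = z = 0.0
    for i in range(max_iter):
        r = math.sqrt(x * x + y * y + z * z)
        if r > bailout:
            return i
        # Convert to spherical coordinates, guarding against r == 0
        # and tiny floating-point overshoots in the acos argument.
        theta = math.acos(max(-1.0, min(1.0, z / r))) if r > 0.0 else 0.0
        phi = math.atan2(y, x)
        rn = r ** power
        # Raise to the given power in spherical form, then add the sample point.
        x = rn * math.sin(power * theta) * math.cos(power * phi) + cx
        y = rn * math.sin(power * theta) * math.sin(power * phi) + cy
        z = rn * math.cos(power * theta) + cz
    return max_iter


# Sample a short line of points from the center outward.
for step_index in range(5):
    sample = step_index * 0.5
    print(sample, mandelbulb_escape(sample, 0.0, 0.0))
```

The formula has only a handful of knobs (power, iteration count, bailout), none of which map neatly to “move that bump to the left”, which hints at why art directing such a structure is hard.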

Animal Logic chose to show the many millions of pieces of data Ultron searches through as if they were stars at a planetarium. “You would see the internet growing from nothing to these forms that are massive amounts of glass cubes with colored data in them,” says Butterworth. “You could take a glass cube and bring it up close and it would be a file that’s got Captain America in it. We used our knowledge of fractal forms to make complicated moving point clouds and then instanced the hell out of them to make data files that moved around with little bits of colored files in there.”

Coming back into the real world, Ultron has learnt – perhaps somewhat incorrectly – that Stark is a war-monger and that peace on Earth may not be possible. He essentially murders J.A.R.V.I.S. – shown as a breaking up of the hologram – and begins building himself in machine form. For Animal Logic, the holographic journey spanned much of the film’s production, but it was a rewarding one. “They’re like moving sculptures,” says Butterworth. “It’s one of those areas, too, where we are doing so much design work and traditional visual effects which not all facilities get to do.”

Ultron is born

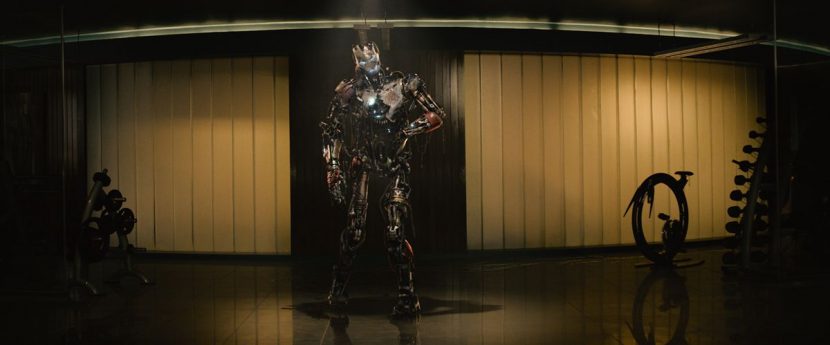

The action: After his digital awakening, Ultron surprises the Avengers, who are relaxing at a party at their tower, by appearing to them as a kluged-together robot formed from pieces of Stark’s robotic iron legionnaires. Trixter made this Ultron Mark I suit, and also the legionnaires who are used as both army and clean-up reinforcements by Stark, and then maliciously enlisted by Ultron to fight the Avengers at the party.

Casting Trixter: “Trixter are very good at taking original concept art and taking that all the way through to precise modeling into beautiful animation,” states Townsend. “They created Ultron Mark I which is a bastardized version of him, made from various bits of Iron Man and Iron Man suits to create this almost monster. They worked very closely with James Spader’s mocap performance too.”

Spader saw some early tests done by Trixter on the Ultron Mark I and suggested an approach as to how its broken down body could be achieved in animation. “He said he’d like to do something with his body like have his arm in a sling or weights around his ankles,” relates Townsend. “We worked very closely with mocap provider Imaginarium in London and we developed ways to make Ultron move. Spader then gave us a fantastic master class in acting – one particular day he stood there in his mocap outfit in London and he talked at length at how Ultron Prime would move relative to Ultron Mark I, relative to the Sub-Ultrons, which are the army of Ultrons he creates. A Sub-Ultron would walk in a much more militaristic way, with hips forward and legs apart. Ultron Prime is a bit more elegant and you walk in a slightly different way and your left shoulder is always leaning forward, and slightly pigeon toed because you’re an athlete.”

“The intention was to let the James Spader performance come straight onto the audience but there were cases where we were requested to re-build shots where no mocap data was available, too,” explains Trixter visual effects supervisor Alessandro Cioffi.

Trixter blocked out geometry for Ultron Mark I in ZBrush and then continued modeling and animating in Maya (the animation was supervised by Simone Kraus), with lighting and rendering done through KATANA with RenderMan. “The complexity of Ultron Mark I was not only the complex geometry,” says Trixter CG supervisor Adrian Corsei, “but also that he required a layer of simulation on top with cloth and fluid sims. When he was moving the oil was flowing on the ground and surface. It was like Iron Man meets The Walking Dead!”

An interesting challenge in completing the scenes lay in how Ultron Mark I addresses the Avengers – somewhat theatrically. “He almost has his own stage,” notes Trixter compositing supervisor Dominik Zimmerle. “It really gave us the opportunity to work on the whole picture from start to finish. The idea was that he was entering in the pool of light – we tried to synchronize the entrance to make it as dramatic as we could.”

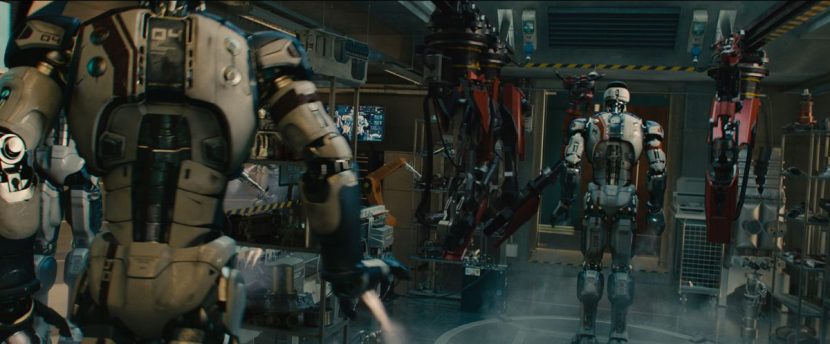

Ultron Mark I has assembled himself from Stark’s iron legionnaires and engaged them to fight the Avengers. The legionnaires were also Trixter creations that made use of many levels of damage textures, with their on-set performances carried out by actors in gray suits. “Joss wanted to give an obviously robotic type of motion and behavior to leave no doubt there was no human inside them,” says Cioffi, “so the CG character would not match the stuntman in the plate – that was a real compositing challenge to re-create parts of the plates.”

Trixter also had to deal with the background New York scenery. “For the NYC backgrounds during the fight,” says Zimmerle, “we worked mainly with a spherical lat/long image of New York City with high dynamic range values. We added a lot of atmospherics like twinkling lights, grading it a little bit to integrate it. Then it helped that our matchmoving department provided us cameras for each shot aligned to LIDAR scans so we could always rely on positions.”

Trixter completed an almost minute-long shot of the legionnaires returning earlier to the tower after a mission. “This starts as a completely full CG environment,” explains Cioffi, “as they fly in past the foreground then approach the tower, go through a scanning and sterilizing chamber which is also CG, and then it blends to live action seamlessly. The camera pushes up to the lab where they are stored and damaged ones are assembled and the camera keeps craning up to the upper level where Tony is working.” That shot also provides a glimpse at one of the legionnaires that has a damaged face – the result of an earlier acid attack that corrodes its metallic mask (an effect done in Houdini). The corroded and damaged mask later becomes the face of Ultron Mark I.

Ultron in his Prime

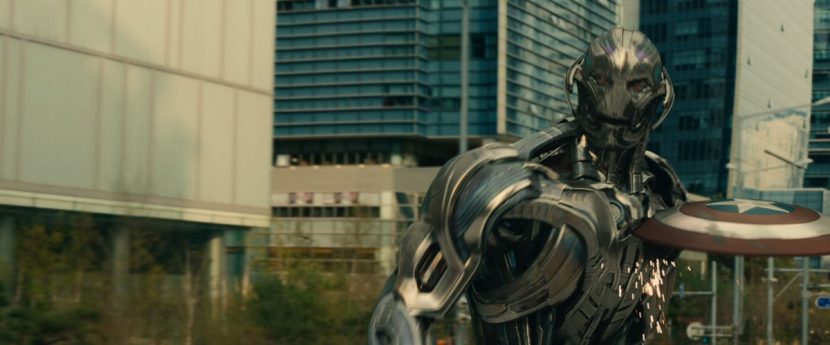

The action: In his final incarnation, Ultron Prime is a complex metallic robot capable of spectacular athleticism, flight and fighting ability, but with considerable elegance – a characteristic of Spader’s performance, too. ILM built the asset and carried out the lion’s share of Ultron Prime shots, with others shared among the vendors, including Double Negative for the Seoul chase sequence.

Casting ILM: “The amount of rigging required to create Ultron Prime is immense,” says Townsend, “and you need a company who has not only the technical know-how but also the scale of their operation to be able to handle that kind of work. Again, ILM was an obvious solution.”

“One of the things Joss and I talked about early on was that he had to be the ultimate robot – he is the perfect robot,” adds Townsend. “He’s elegant, beautiful and sophisticated. By casting James Spader as the performer and actor, they brought a certain quirkiness to him. Joss wanted there to be an almost human like quality to him as a robot, but in the same way we talked about with Iron Man, always with a mechanical integrity. Ultron Prime couldn’t have malleable metal panels – he had to have rigid metal panels that would slide against each other, which meant that ILM had to create this incredibly complex rig in order to animate him and have these individual panels around the face and mouth and cheekbones, allowing him to emote in the same way that Spader emoted.”

Original designs by Marvel were shared with ILM, with Ben Snow noting that their reference points for Ultron Prime were a Swiss watch or something incredibly intricate. “Ultron has to be one of our most elaborate lead character rigs,” says Snow. “It’s about ten times as complex as something like Optimus Prime. We use a proprietary system called BlockParty and he has about ten times as many nodes [from what we’ve done previously]. He’s got about 600 nodes just for his face.”

Spader’s on-set, mocap and voice recordings helped define that detail required for the face, of course. “We had James on set for a lot of the interaction with the other characters in the movie,” says Marc Chu. “That was really great reference for getting his delivery, his take, the lip sync. And then little idiosyncrasies he throws in and tics which makes him more believable. Then, on the other side, as sometimes the movie develops beyond what was originally shot, we would get direction from Joss about what moments he wanted, and we would animate traditionally.”

“We photographed James Spader with a dual camera setup which Glenn Derry had devised and used for various films like Avatar and Real Steel,” outlines Townsend. “He was also captured with a dual-mounted head rig at times. We were shooting it as mocap or as image based capture with markers and witness cameras. Every single time he was out there he was that character, even when he was just off screen doing the voice for other actors. He would get into the physical pose of the character he was playing. He absolutely lived it and it was brilliant because what we got out of it was a performance that we matched directly as a mocap performance or that we were inspired by.”

The actor also had the benefit of the ‘monster mirror’ configuration as had been used by Ruffalo for Hulk, but the translation of his performance required a different approach due to his metallic nature. Says Snow: “It’s a robot and you don’t want it to be deforming like human skin – you don’t want squash and stretch. So in the rig we had to write a bunch of new tools so that we could actually have plates – so when he opens his mouth, the plates that open his cheek would slide one under the other.”

“We ended up with an extremely elaborate model – but the way the animators could drive it was still more like a FES type model. He was fully mocap capable as well. You could still use the controllers you were used to, but then it would be like a Transformers character where you’d be manipulating shapes to get the performance. We were then able to take that tech written for the face and roll that into his body, so he had a lot of intricate motion, so as he flexes his pecs you see the plates sliding on his chest. It gave us the chance to make it more intricate.”

Above: the team face Ultron at Klaue’s base.

“There are some moments in the film that I think are remarkable where you can see that Ultron is listening,” comments Townsend. “You watch the performance and subtleties – his eyes just get narrower a little bit, say, and he’s not saying anything, he’s just listening. It’s a very subtle piece of performance which was incredibly important. We needed to get that level of sophistication and in order to do that we needed to create this incredibly complex robot. That fit in very well with him needing to be the ultimate robot. It was very much based on Spader. At the beginning I didn’t think we were going to get much more other than the voice, but James had said originally to Joss, look, if I’m going to do this I don’t want to just be the voice, I want to be the character.”

Quick Quicksilver

The action: Pietro Maximoff/Quicksilver (Aaron Taylor-Johnson) and his twin Wanda are the results of experiments by Hydra’s Baron Wolfgang von Strucker (Thomas Kretschmann). In the case of Quicksilver, he exhibits superhuman speed. Trixter, which had done early work on the look of this effect for a tag sequence in Captain America: The Winter Soldier, devised the look for Quicksilver’s streaky appearances and shared that with other vendors.

Casting Trixter: “I wanted to go to a company that could bring some sophistication and elegance and artistry to creating that,” says Townsend. “I’ve worked a lot with Trixter before and I tasked them with bringing that artistic impression to those characters. They jumped at the chance and did a great job creating the characters, and spreading that out to other folks who took that on in other shots of the movie like ILM, DNeg and Method.”

Quicksilver’s shots were characterized by the absence of any single ‘look’ – the audience may see his super-fast streaks, or they may see time slowed down and be ‘with’ Quicksilver. In addition, the character would typically affect the environment he was in. To achieve this, Trixter broke down the various methods that could be used to produce the Quicksilver imagery, including locked off cameras, high speed photography, clean plates, nodal pans and a digital double plus blends to live action.

One of the essential components of the look of Quicksilver is what was called the ‘photographic trail.’ “Chris Townsend did a lot of test shooting before production,” explains Trixter’s Alessandro Cioffi. “He found that shooting at 6 fps with a very open shutter and some fast movement, he could get this very long and smooth motion blur trail of objects moving in camera. This was re-created in compositing. With high speed photography, having a lot of frames at our disposal, we could re-create the same effect so it was very fluid and long graphic trails – in combination with the trails done in Houdini and combined with comp tricks.”
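As a rough illustration of the arithmetic behind the ‘photographic trail’, the sketch below works out how many high speed frames span one simulated 6 fps exposure and averages them into a single smeared frame. It is a minimal Python sketch, not Trixter’s actual comp setup: the function names, the fully open shutter and the one-dimensional ‘image’ are assumptions made purely for illustration.

```python
def frames_per_exposure(capture_fps, target_fps, shutter_fraction=1.0):
    """How many captured frames fall inside one simulated long exposure.

    shutter_fraction=1.0 assumes a fully open shutter (an illustrative choice).
    """
    return max(1, round(capture_fps / target_fps * shutter_fraction))


def accumulate_exposure(frames, start, count):
    """Average `count` consecutive frames, beginning at index `start`.

    Each frame is a flat list of pixel values; the result is one frame in
    which a moving object is smeared along its path, like a long exposure.
    """
    window = frames[start:start + count]
    n = len(window)
    return [sum(pixel_over_time) / n for pixel_over_time in zip(*window)]


# A 120 fps plate made to look like 6 fps needs 20 frames per exposure.
count = frames_per_exposure(120, 6)

# Toy plate: one bright pixel moving one pixel per frame across 5 pixels.
plate = [[1.0 if x == t else 0.0 for x in range(5)] for t in range(5)]
trail = accumulate_exposure(plate, 0, 5)  # the bright pixel becomes an even streak
```

The more captured frames available per simulated exposure, the smoother the resulting streak, which is in keeping with Cioffi’s point about having a lot of frames at their disposal.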

“What we had was various frames per second footage, for example 120 fps or 72 fps depending on the needs of the shot,” adds Trixter’s Dominik Zimmerle. “To create these photographic trails, sometimes when he was slow in the frame or when we had a higher frame range, we could use just the plate marked ‘Pietro OUT’ and create an optical trace with NUKE tools like time echo and frame interpolation. Then we needed to blend these motion trails into the key layers, bringing in Pietro’s natural colors into the trail and vice versa so that it gave a natural trail to the whole thing.”
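The general idea of a time echo can be sketched as a weighted blend of the current frame with a handful of earlier ones, so that older positions of a moving subject fade out behind it. The Python below is only an illustration of that idea, not NUKE’s implementation or Trixter’s setup; the `time_echo` function, its `falloff` parameter and the toy one-dimensional frames are all hypothetical.

```python
def time_echo(frames, index, echoes, falloff=0.5):
    """Blend frame `index` with up to `echoes` earlier frames.

    The newest frame has weight 1 and each step back in time is multiplied
    by `falloff`, so the trail fades behind the subject. Weights are
    normalized so overall brightness is preserved.
    """
    first = max(0, index - echoes)
    window = frames[first:index + 1]
    last = len(window) - 1
    weights = [falloff ** (last - i) for i in range(len(window))]
    total = sum(weights)
    return [
        sum(w * value for w, value in zip(weights, pixel_over_time)) / total
        for pixel_over_time in zip(*window)
    ]


# Toy plate: one bright pixel moving one pixel per frame across 5 pixels.
plate = [[1.0 if x == t else 0.0 for x in range(5)] for t in range(5)]

# At frame 2, echo the two previous frames: brightest at the current
# position, fading toward where the pixel used to be.
echoed = time_echo(plate, index=2, echoes=2)
```

A trail like this still has to be blended back over the keyed subject, which is the step Zimmerle describes as bringing Pietro’s natural colors into the trail and vice versa.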

Sometimes the high frame rate footage did not cover the whole interpolation, as Zimmerle explains: “In this case we needed to further interpolate the original footage and create the optical trails out of the even slower footage we re-created out of the original ones. An additional problem was when we had tracking cameras – if we interpolated frames with each other, this meant we also interpolated the camera movement with each other. This meant we needed techniques to stabilize Pietro’s trails and re-track them into the camera so that they still felt natural in the scene. This involved a lot of masking, rotoscoping, keying – also using the roto-mated geometry to extract pieces of Pietro and bring him back into the plate. We might also put his trail on cards and track it in again into the shot. Each shot was different and each had its own demands.”

The magic of Scarlet Witch

The action: Pietro’s twin Wanda Maximoff/Scarlet Witch (Elizabeth Olsen) causes havoc amongst the Avengers and, later, Ultron’s army, using her telekinetic powers and explosive magic. Again, Trixter devised a look for Maximoff’s red energy and then other facilities, including ILM and Method Studios, expanded on the work.

Casting Trixter: “We had some very artistic requirements for two of our key characters that are new to the film – Quicksilver and the Scarlet Witch,” says Townsend. “Wanda’s effects were very tricky, excuse the pun. Of all the characters in the team, hers were the most magical, but we still wanted to give a grounded reality to them where possible. The very first time we see them, zapping Cap down the stairs in the opening scene, we wanted them to be very subtle. The first time we make a real statement with them is when she infects Tony Stark, making him go into his nightmare. Joss wanted to echo the HexSpheres that are often drawn in the comic books, by the likes of Steve Ditko, with their geometric shapes.”

Townsend notes, however, that adhering too closely to the still imagery of the comic books would look too ‘fantasy’. “So we backed off a bit,” he says, “and brought more organic shapes in, and a softer more ethereal feel to movement. As her powers increased and intensified throughout the film, we ramped things up a bit, but Joss really liked the star blast shape so in the majority of instances when she uses her powers, there’s a hint of the star in the initial bursts.”

Above: meet Quicksilver and Scarlet Witch.

“We never wanted the red effects to look too smokey,” adds Townsend, “too much like red lightning or electricity, have too much of a linear laser beam feel, or too much like a blaster beam; we had to tread a fine line between always keeping it a little bit magical, but making sure it still fit within the constraints of our grounded visuals.”

Trixter took these ideas – that Wanda’s magic be more subtle and not too graphic – and conceived an energy force that flows up her wrist and to her fingers in a smooth motion, then almost gently enters another character’s brain. Her more forceful powers were of course much more violent. “In either case,” explains Trixter’s Cioffi, “we had to roto-mate the actress’ hands in pretty much every shot. Based on the geo tracked on her hands and fingers, we could simulate in Houdini some initial energy that would rapidly or less rapidly grow from wrist to fingers and move to targets. It was all about subtlety and elegance. It couldn’t be like fire or smoke – it had to be a supernatural power.”

As noted, other vendors also worked on Wanda’s powers. Method Studios, for instance, used both fluid simulations and particle systems to create the elements. “These would be rendered with an additive shader which allowed us to accentuate the tendril detail of the simulations and create intensity in areas of higher particle density,” says Method Studios visual effects supervisor Chad Wiebe. “One thing that Chris Townsend was very specific about from the outset was that the effect should not look ‘smokey’, so the key for us to achieve that was pulling some of the highlights and edge details from the sims, and comping out much of the mids so that we were left with thin tendrils of particles which created the look. From there we would also add distortion to the background behind the effect to give it a slight feeling of invisible energy traveling with the tendrils of particles.”
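To make the additive idea concrete, here is a small Python sketch in which every particle adds a fixed amount of light to the pixel it lands on, so dense clusters read brighter, followed by a crude step that discards the mid values and keeps only the hot cores. This is a toy model under stated assumptions, not Method Studios’ shader or comp: `additive_splat`, `keep_highlights`, the per-particle intensity and the threshold are all hypothetical.

```python
def additive_splat(width, positions, intensity=0.25):
    """Accumulate particles additively into a 1D row of pixels.

    Every particle adds `intensity` to its pixel, so brightness grows with
    particle density instead of being occluded as it would be with 'over'.
    """
    row = [0.0] * width
    for x in positions:
        if 0 <= x < width:
            row[x] += intensity
    return row


def keep_highlights(row, threshold):
    """Drop everything at or below `threshold`, keeping only the hot cores."""
    return [max(0.0, value - threshold) for value in row]


# Three particles pile up on pixel 2, with single stragglers either side.
row = additive_splat(5, [1, 2, 2, 2, 3])

# Removing the mids leaves only the dense core as a thin tendril.
tendril = keep_highlights(row, 0.5)
```

With these inputs the stragglers on pixels 1 and 3 vanish and only the dense core on pixel 2 survives, a simplified stand-in for pulling the highlights and comping out the mids.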

Seoul searching

The action: The Avengers realize that Ultron is using Dr Helen Cho’s (Claudia Kim) facilities in Seoul, South Korea to create what will become Vision. Captain America, Hawkeye and Black Widow are despatched to retrieve the being inside a cradle. A chase ensues through the streets of Seoul featuring a truck, the Quinjet, Black Widow’s motorbike, several cars and a train – which also sees the intervention of Quicksilver and Scarlet Witch. Geoff Baumann, additional VFX supervisor, oversaw the location work in Korea, and supervised Double Negative in post.

Casting Double Negative: “We looked at some of the Fast & Furious stuff that DNeg had done,” notes Townsend. “We love what they’d done for their environment builds and they’re incredibly good at cars, and set work. So DNeg was an obvious go-to as partners to do Seoul.”

Based on extensive previs by The Third Floor, production scouted and filmed in several locations in South Korea. Knowing that the locations would be mixed and matched in post, DNeg played a large part in acquiring environment reference and surveying the locations. “We needed to cover all the locations with LIDAR and texture photography,” notes DNeg visual effects supervisor Ken McGaugh, who worked with visual effects producer Andy Taylor on the entire sequence. “Our environments supervisor Stephen Ellis went out to Korea with me. It was our policy on the show to build textured, lightable proxy environments of every location. There are frequently shots that get created last minute and you might not have a plate for it, so we were able to use our proxy environments as a foundation for building our CG plates.”

During the six week shoot in Korea, the first two to three weeks were spent in prep and texture photography. “Part of the chase, for example,” says McGaugh, “goes down Gangnam High Street and it’s like Oxford Street in London with lots of tourists and shoppers. We didn’t actually have enough time to get everything they needed plate-wise. So the thought was they might have to do a digital version of Gangnam. We got some traffic and crowd control people and we’d go store front to store front and block off all the pedestrians and just do texture acquisition of that shopfront. And we did that the whole block down. So that was quite tedious, but that data was invaluable. When you need it, you need it. Maybe 90 per cent of the data we didn’t use, but the 10 per cent we did use, if we didn’t have it we would have been screwed.”

Other plate acquisition came in the form of aerial photography and camera arrays used for the truck chase and train scenes. The team utilized the Pictorvision rig built originally for Jupiter Ascending that uses six cameras and covers a 140 degree view. A home-built eight camera array with a 360 degree strip of cameras was also used and mounted atop a truck. “That was very valuable for the chase,” comments McGaugh, “since a lot of the action takes place on top of the truck as it’s driving down the streets in Seoul. It gave us the freedom to drive the camera moves based off the animation we were doing of Captain America and Ultron fighting. There’s always times when you need to go off the camera array and that’s where our proxy environment came in handy. We were able to top up and fill the gaps of the environment and do some shot specific clean-up work there.”

DNeg used its custom tools for stitching and managing the thousands of stills that were generated. “We have a tool called Jigsaw which is how we ingest all of our still photography,” says McGaugh. “It allows you to organize and group things based on function or task, and then you kick off stitches and it automates not only stitching and registering the brackets – it’s also how we do a lot of our photogrammetry as well. We have a photobooth set up where we can do digi-double capture, either full body or face.”

McGaugh saw great importance in building proxy environments for every location, aided by LIDAR scans carried out by Motion Associates Ltd. under LIDAR supervisor Craig Crane, who also supervised scans for the entire show. “For example,” says McGaugh, “we had a parking lot environment underneath a raised freeway, and it was over a kilometer long. We took dozens and dozens of 360 degree spherical bubbles. And then in Jigsaw we had a toolset that would facilitate registering those bubbles to the LIDAR. That involved using Photofit which is software from our Batman Begins kit days – our main photogrammetry toolset – and then we’d automatically bake out texture maps. So you’d get this crudely textured environment on your LIDAR, but then you’d also get a NUKE setup that you could go in and up-res certain sections if you wanted to do, say, plate reconstruction.”

“For doing reflections,” adds McGaugh, “we’d have this baked down textured environment and if a reflection or reconstructed plate wasn’t detailed enough you could take the NUKE export of that and it would have all your bubble projections onto your geometry live, so you could go in there and clean things up and patch seams and upres where it needed to be upres’d.”

Above: watch b-roll from the Korea shoot.

CG asset-wise, DNeg incorporated ILM’s Ultron Prime, Captain America and Quinjet CG models into its pipe, while also building the Sub-Ultrons and cradle, the truck and train and many CG cars. That work was overseen by 3D supervisor Malcolm Humphreys and build supervisor and 3D sequence supervisor Steven Tong. “The Sub-Ultron asset was designed in conjunction with the Marvel vizdev department,” explains McGaugh. “They primarily designed the head and we designed the body. We knew other vendors would be using it not only as a body but also chopping it up and changing it, having them come apart in different ways. We had to build it with lots of detail beyond our own needs. You peel the outer layer off the Sub-Ultron and there’s just more and more detail every layer down.”

“They’re meant to be incredibly utilitarian,” adds McGaugh, “but we had to walk that line between looking cool and looking functional. And the main way to do that is to have lots of hoses and pipes. They had to look interesting but also had to look like weak points, like someone with some scissors could run by a Sub-Ultron and cut a cord and disable it. So we still had to make it look armored in some way, hardened and tough.”

Modeler Rhys Salcombe led the build for the Sub-Ultrons. “They are so complex with so many pieces, being rigid pieces, we had to make sure things moved correctly,” notes McGaugh. “The typical build process is that you take concept art and you realize that in geometry and you lookdev and texture it and get buy-off on turntables. And then you go to make it move and realize everything is intersecting! So we took a different approach on this show where, from the very beginning, we designed it with motion in mind.”

DNeg animators employed the studio’s Xsens MVN suit to mocap certain actions, then integrated this into their own work. “The brief from the client was that the Sub-Ultrons were meant to be slightly insect or non-human in the way they move,” says McGaugh. “Slightly jerky, quirky actions. And so we tried to filter that on top of the animation we had. If they were walking or repositioning themselves while standing, that’s very human so we would motion capture that – then to make it less human we would off-set the hips from the torso. It was still human based motion but different parts move out of sync with each other to make it look awkward.”
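One way to read “different parts move out of sync” is as a small time offset applied to selected channels of the captured motion. The Python sketch below delays one per-frame channel relative to another; it is an interpretation for illustration only, not DNeg’s rig or tooling, and the `offset_channel` helper, the channel values and the two-frame delay are all hypothetical.

```python
def offset_channel(samples, delay):
    """Shift a per-frame animation channel later by `delay` frames.

    The first or last value is held where the shifted curve runs off the
    end, so the channel keeps its original length.
    """
    last = len(samples) - 1
    return [samples[min(last, max(0, i - delay))] for i in range(len(samples))]


# Toy mocap: torso and hips captured rotating together, one value per frame.
torso_rotation = [0, 1, 2, 3, 4]
hips_rotation = [0, 1, 2, 3, 4]

# Lag the hips two frames behind the torso so they no longer move in sync.
hips_offset = offset_channel(hips_rotation, 2)
```

The motion stays human in origin, but the hips now trail the torso by two frames, which is the kind of slight awkwardness McGaugh describes.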

Since the Sub-Ultrons had a gunmetal look, their primary lighting contribution came from reflections. “We found in a controlled turntable environment you can get them looking very good,” says McGaugh, “but then when you start putting them in real or shot environments it needed work. Korea was very smoggy, with flat lighting in general, so we would play up their diffuse aspect more than their specular aspect.”

Ultron Prime takes on Captain America on top of the truck, inside it and on a train – with DNeg taking original plates of stand-in actors in gray suits, stunt doubles and the hero actor to complete the shots. “Almost every shot with Ultron there was a stand-in that we would paint out,” notes McGaugh. “Oftentimes the actions of the stand in weren’t the final actions – they were more for camera reference or for actor reference. We still opted for those plates – they were much better because the cameraman knows what to look at.”

Rendering Ultron and the Sub-Ultrons – and the other CG assets – utilized DNeg’s physically based setup which was done in a mix of RenderMan, Isotropix Clarisse and Mantra. “Inside the truck, for instance,” says McGaugh, “we were mostly replacing the plates with our rendered CG truck interior. That was because the truck interior itself is glossy and we had these robots moving around – there’s these blue lights under the cradle illuminating everything. Our general rule of thumb was we would – for train and truck – whenever he was in a shot, we needed to have a body track of him, and we’d render his digi-double, we’d render the truck – CG versions of everything which is real in the plate – and then compositing could actually decide which to use, whichever is most beneficial. We have some shots where Cap is tackling Ultron and it was a real stunt double tackling a stand-in, where everything except for his back is CG, because his costume had really nice wrinkles in the back.”

“The train interior we tended not to render CG because it’s quite complex,” continues McGaugh. “We always rendered gray shaded and reflection lighting passes of the train interior that we used in comp to add interactive movement to. In particular there’s a section of the train fight as they’re going through this tunnel – it has these large archways of sunlight outside, so we needed an arch lighting effect running through the train – the train set piece itself was shot with no interactive or moving light at all. It was all compositing to make it look like it was moving through there.”

The sequence features Black Widow chasing the truck on a motorbike after being dropped from the Quinjet – a shot first shown at Comic-Con in 2014, and produced via a complex combination of CG work and compositing (DNeg’s 2D supervisor was Ian Simpson). “We’ve gone through many many versions of that shot,” recalls McGaugh. “That was actually a stunt double on a motorcycle launching off of a ramp in the middle of a road in Korea. We had to paint out the stunt rider and the motorcycle and the ramp, but we re-projected them onto an animated version of the motorbike dropping out of the Quinjet. It was a real Frankenstein shot, especially in the early days because we didn’t have a Black Widow asset for first incarnations of the shot – for trailers.”

Above: catch a glimpse of the motorbike shot in this featurette.

“So,” notes McGaugh, “it was a fully CG head with CG hair on the stunt rider’s body. Parts of the motorbike were CG and parts were real. When it’s dropping from the Quinjet it’s a CG motorbike, but real body and CG head – a sandwich! When it hits the ground it becomes real motorbike, real body, CG head. All the integration work was really tricky. Then for the final, we had our proper Black Widow digi-double and it became the full CG motorbike and digi-double on the drop, and when it hits the ground it becomes the real bike, real body, but still a CG head. In that case it was actually a petrol version of the bike which was slightly different from the electric Harley. In some cases we had to do some slight modifications to the bike, but most modifications we had to do to the body shape of the stunt riders – neither had body shapes that were very Black Widow-like.”

At one point Captain America loses his shield – often a DNeg CG creation – and Black Widow picks it up mid-ride on her motorbike. “I love that shot,” exclaims McGaugh. “On the surface it looks very simple, but creatively it was very difficult to nail – it’s a key moment. That was a female stunt rider and she’s reaching down grabbing air, but her hand was nowhere near close enough down to the ground. So there are lots of cheats on the shield position and its size. For some reason as photographed her arm looked way too long, so we had to actually shorten her arm to look more natural even though it was real. There was a full head replacement and we had to add CG cars that swerve around her and in the background. Those cars really add a sense of danger and excitement. It actually took me by surprise how much value they added, because there were quite a few real cars in the shot and it already felt quite exciting. Making it so she barely misses or threads a gap between two cars really helped the shots.”

In fact, DNeg became well-versed in delivering CG cars for the sequence. “Easily half the cars on the road are CG,” states McGaugh. “And there’s one car in particular, an old beaten up Korean Chairman. It’s a car that got used over and over and over again. It became a joke that if something is lacking in the shot we just throw a Chairman in it. We had a small team in Korea – there were lots of stunt cars – and every picture car was LIDAR’d and texture referenced. We built a sub-set of them to a higher level than others. We captured much more data than we were allowing for building. Some of the cars got much closer to camera than we ever intended to get.”

In one particular shot, the practical photography called on some more fairly nuanced work from DNeg. “They called it the ballet shot when all these cars with pistons flip that they filmed with a Canon C500 mounted to an RC car,” recounts McGaugh. “One of the pistons hitting a car shot out and hit the RC car. It breaks the filter on the front of the lens and the car tumbles over – it settles the right side up looking exactly the direction the cars are sliding and we’re putting Cap there. You can see the broken glass from the filter in-camera and the tumble of it. It’s a really beautiful shot but it’s a complete accident. Through that broken glass we had to add digi-Cap on an upside down car and CG truck and a CG Ultron Prime. And we had to cover up all the stunt people on the side of the road.”

Ultron Prime almost escapes with the casket by commanding his Sub-Ultrons to fly down and latch themselves onto the truck. “An animator worked on that for almost a year,” recalls McGaugh. “That shot was originally conceptualized differently. We had a little ceremony for the animator to give them a bottle of champagne – it was only 5 or 6 days short of a year on the shot.”

Envisioning Vision

The action: Ultron Prime seeks to construct a perfect humanoid body for himself out of synthetic tissue and the gem from Loki’s scepter. The resulting Vision (Paul Bettany) is ultimately brought to life, however, by Thor’s lightning melding with J.A.R.V.I.S.’ code, which has been uploaded by Stark. Bettany played the character on set, with Lola VFX making android-like alterations to his distinctive cranberry/purple-colored facial features, and Framestore and ILM delivering CG body and cape elements.

Casting Lola VFX: “We went to Lola who I’ve worked with before and who did the amazing Skinny Steve,” states Townsend. “Their approach I really like – they didn’t go with let’s just go all-CG, they really thought, let’s use the photographic base we’ve got and build on that rather than throwing it all away. And then Lola worked closely with Framestore on the birth of Vision sequence, and with ILM for the final battle – whenever you see a close-up of the face, it’s Lola’s face on a CG body or digitally altered body made by Framestore or ILM.”

In Vision, Townsend sought to create a character that was ‘very ambiguous visually.’ “He needed to be a character that as an audience we would look at and we wouldn’t quite understand what he was and how he was created. In our world of visual effects we often talk about the Uncanny Valley when it comes to doing digital humans – the closer we get to a human the harder it becomes and the more off-putting it becomes and the more dead the character becomes until you rise up the other side of the valley and you get a perfect CG human. I wanted to approach the Uncanny Valley from the other side. We made Paul Bettany up and put dots on his face and I wanted to push him so he dips down into the Uncanny Valley from the other side – starting at human and going a little bit synthetic, so that he’s a little bit off-putting when they’re looking at him as an audience. So you’re not quite sure how he’s created, but at the same time you believe he’s there.”

“I wanted to use these very fine CG cut lines,” continues Townsend, “and take away his pores, and smooth out his skin and make him a little bit more synthetic, and add a little bit of sub-surface glow to him, so that as a character you get everything from the performance Paul Bettany gave on set which was phenomenal but you also have something that is a slightly more synthetic character.”

On set, Bettany wore a scalp and head prosthetic that was attached from his forehead around the ears, down to his neck and shoulders, along with a small chin piece. His face and neck were then airbrushed in the cranberry/purple color. Lola’s brief was to take the prosthetic and make-up effects and produce “an indefinable, otherworldly being that wasn’t human, wasn’t a robot and likewise without the audience being able to guess how it was done,” outlines Lola visual effects supervisor Trent Claus. “We wanted to find a look that didn’t look like it was done with makeup but also not done with all CG. Somewhere in that middle ground – a very difficult place to take a live action character.”

As with many of their previous digital make-up effects, Lola principally relied on 2D compositing solutions for Vision, mostly in Flame. However, the back and top of Vision’s head was 3D, and a 3D approach was also crucial in realizing the final face shots. “We created a model of the final design of Vision which we tracked and roto-mated to the shots,” explains Claus. “That would provide a guide for all our comp’ers for where the design lands and also lighting cues and that sort of thing, like the edges of the plates on his face, to know where the highlights should be hottest and the shadows should hit to create that 3D illusion. It was used as the starting point, and then all of those surface treatments in 2D compositing were done using that as a guide.”

The 2D face work began with referencing the lighting and texture cues from the prosthetics. “We did a whole round of skin treatments on the footage before we began applying the design elements,” says Claus. “We worked at removing pore texture and imperfections and some wrinkles, but you want to maintain all of the structure. You can’t simply just blur grain everything – you can’t obliterate it all – you still want it to look like Paul Bettany.”

Some of this clean-up included work on the prosthetics themselves. “In many cases it would intrude into the face where it shouldn’t have been a prosthetic,” notes Claus. “So we’d have to clean plate around his head – the background – but also clean facing that! We’d be creating more skin where it hadn’t been formerly obscured.”

Unlike prosthetics, Lola would actually be transforming the skin itself rather than adding on top. “We transformed different parts of his skin to match different qualities of the skin,” states Claus. “We made some areas more reflective, some areas less reflective, and then created the machined edges through light and shadow, and patterns through varying the shade of the skin. There’s a corrugated or horizontal pattern that’s applied to his skin. It’s an intricate system of plates and layers and valleys and machined edges that were actually all created for the most part by affecting the actor’s skin itself.”

Vision’s mechanical-like eyes were approached slightly differently, but still with predominantly a 2D solution in Flame. “They were mostly done as matte paintings,” says Claus. “It was designed as a series of concentric circles emanating out from the pupil and it looked to me like they were cut apart in compositing and made so they could expand and contract like a camera diaphragm.”

“This was done with a CG sphere that we would do as an eye replacement,” adds Claus. “The compositors would then hand animate not only where he’s looking and focussing, but also reacting to lighting changes. They would be hand-animating the revolutions and contractions. Then over the top of that you’d add the more human elements – reflections and the wetness and little bit of tissue around the edges you’d expect to see in a human.”

While Lola handled Vision’s facial features, his body and cape were completed by Framestore and ILM. The ‘birth of Vision’ sequence, for instance, features a fully CG Vision bursting from his cradle after being blasted by Thor’s lightning. Framestore worked on the body of Vision for these shots, starting with his ‘light cage’ that was like a 3D blueprint, through to a digital double for Bettany. “We started with scans of Paul Bettany as just Paul and then also in an undersuit that he was wearing underneath his costume,” explains Framestore visual effects supervisor Nigel Denton-Howes, who shared duties with Rob Duncan. “We had another scan of Paul in full costume as well.”

Framestore’s initial body design effort centred on Vision’s complex array of plates on his skin surface, a feature that was ultimately pared back in the final look. “The rigging was extremely complicated with the plates on the surface,” says Denton-Howes. “They were originally supposed to move around a little bit. In the final film they are more static.”

The studio’s proprietary muscle sim and skin slide solver was used for the plates lookdev. “We have a system which attaches a skin layer onto a surface – we used the same solution to put the plates on top of the skin,” describes Denton-Howes. “It’s really powerful in that we can be moving multiple layers of skin and they all work together moving up and down and the anatomy underneath the surface. It really allowed us to get some anatomy underneath the surface that you could really believe in as he was moving around.”

Battle for Sokovia

The action: Ultron uses vibranium he has stolen to construct a machine that will lift part of Sokovia into the air with the aim of dropping it again like a meteor – and causing the extinction of mankind. With Vision, Quicksilver and Scarlet Witch now at their side, the Avengers set about evacuating the area, while battling Ultron and his Sub-Ultrons amidst the earthquake-like chaos. ILM took the lead on major simulations and effects for the shots, with other vendors also contributing.

Casting ILM: “We studied a lot of footage of earthquakes, mudslides, ice shelves breaking off, collapsing buildings and buildings being demolished,” outlines Townsend. “We wanted to make it feel as real and visceral as possible; it had to be grand, scary and cinematic, but not too Hollywood cool; we wanted a messiness to it, and a level of detail so that on multiple viewings you’d see more and more. ILM did an incredible job, creating these amazing sims and artfully rendering them and compositing so that they told the story while being awe inspiring.”

Production filmed in several locations for the sequence. “Joss’ brief was to create the feel of a mix of almost medieval Eastern European buildings with Soviet Bloc style 60’s architecture,” outlines ILM’s Ben Snow. “The street level stuff was shot in several small towns in the Aosta Valley in Northern Italy and at a decommissioned Police Training facility in Hendon, London. The art department had done some street level concepts based on these locations. Our VFX team went into Aosta a week ahead of the first unit and shot a bunch of stills – panoramas, tile-sets, building textures. Chris Townsend and I selected a bunch of streets and buildings we got LIDAR scanned and photographed – as inspiration for our generalist asset creation of the city. I was directing the aerial unit and we got a lot of footage both as reference for all of our wide shots and to be used as shot. We did the same set of photos, scans and aerials at Hendon and created CG models of the whole set there which was dressed both for pre and post lift off.”

The rising up of the land causes buildings to crumble and the earth to crack open. ILM took a different approach to simulating the destruction than it had on previous projects. “We’ve mostly been doing destruction at the building or maybe city block level,” says Snow. “Our pipeline has been to make a traditional CG model, break it apart using hand modelling and fracture software, and use a library of parts to populate the interior. If the original building had been a generalist asset created in 3ds Max for a large digital matte painting environment we’d convert it to our traditional pipeline for the destruction. But for Age of Ultron we had the unique visual of a whole city ripping out of the ground.”

“Our starting point,” continues Snow, “was a much more complex asset with many buildings which we wanted to see break apart with the same detail as in our prior stand-alone building destruction shots. So Ian Frost did a great job with the rigid sim team and generalists in creating a new workflow where the generalist model of the city and ground it’s sitting on could be taken into Houdini and destroyed, plus then through our proprietary Zeno Plume tool for dust simulation, then back into Max for rendering the buildings with V-Ray. It was a difficult task and we were sweating while it was being developed, but it made things much more flexible in the end and allowed the wider shots.”

The final ‘look’ for the shots came from aerial photography reference plates aimed at guiding the lighting. “Much of the wider environments were generated by Johan Thorngren’s generalist team as digital matte painting type work using the material shot as look and lighting reference – and the final versions rendered by them using 3ds Max and V-Ray,” says Snow. “For the closer shots we shot material on-set, including some drone footage and tried to use as much of the real plate as possible, shooting off some practical dust and debris on set and then adding to it in CG. The closer shots were simulated with our Zeno rigid simulation tool and rendered in RenderMan via our traditional pipeline. We recreated the set pieces as CG to help blend and further damage them, and did some cosmetic damage like cracks as generalist work.”

“We’ve found that an essential workflow is to ensure that rigid destruction, the dust and debris FX TDs and the compositors work as closely as possible,” adds Snow. “Anyone who’s done this sort of work has probably had the experience of tweaking a rigid simulation to the nth degree then seeing it covered up by CG dust and practical elements in the final version. Florent sat down with Mike Balog, our creature/rigid destruction supervisor, and Jon Alexander, our composite supervisor, and worked out ways we could get the simulations into composites as quickly as possible. We encouraged the compositors to lay in whatever 2D elements they could – so we could get to the ‘look’ of a shot as quickly as possible and work out where to put our resources. It was still a slow process but the teams did a great job and it certainly saved us time.”

One particular challenge of the sequence was that it was told from ground level, in and around the machine that lifts up the land, and atop the meteor-sized chunk. ILM had to pay close attention to issues of scale and spatial setting. “It was a difficult problem,” acknowledges Snow, “and from a story standpoint Joss and editorial were working during post to make it as clear as possible. The Aosta Valley where we shot much of our ground level Sokovia footage is ringed by the Italian Alps and also gets quite hazy, which was a pain for some of our aerial days, but we got some great reference photos of mountains through the haze. We used that as inspiration for the shots of the city lifting up, adding haze to help sell the scale. Communicating that the city was flying was something we put a lot of thought into – it’s a hard sell when you’re shooting on solid ground. We did a series of tests – moving the background at different speeds, adding camera float, adding blowing dust and paper etc. We arrived at a balance where we always added a slight float to the shot, some dust and paper and a slight movement to any horizon or clouds you see.”

Above: watch b-roll from the Sokovia shoot.

ILM had also plotted the height of the city over the course of the end sequence before shooting and had acquired footage and still panoramas from the helicopter to cover earlier views. “Then,” says Snow, “we dug out reference and reviewed options with the film-makers for the higher altitude and used that to inspire generalist matte paintings. So we had a clear playbook that said ‘at this time we’re at this height and it should look like this’ which was used not just by our artists at ILM but shared with the other vendors to keep us all consistent.”

Along with wide shots, ILM worked on simulations and effects for closer-in views, such as the bridge that is torn in two as the lift-off begins (Special effects supervisor Paul Corbould was responsible for the live action effects). “We created CG extensions to a partial set built at Hendon which actually jutted over a soccer field,” notes Snow. “We based the walls of the river on our scans and stills of rivers in Aosta and extended the bridge in CG with a break-apart model. We did rigid sims in our proprietary Zeno rigid simulation tools and used our Plume particle simulation software for the dust, plus some nifty compositing as is essential with all these sorts of shots. Florent Andorra and his FX TD team set up a bunch of shelf plug-ins for our Zeno software and Houdini to make it faster for artists to get a first-pass simulation on the dust and smaller debris which was then tweaked shot by shot.”

That bridge destruction, says Snow, was one of the more difficult sequences to realize. “As the lift-off starts we see Captain America running up the bridge to check there aren’t stragglers from the evacuation still on there. The bridge collapses as the city rips itself out of the ground in a series of closer shots and we cut wide to a shot with two building towers on the right and the bridge just beyond them as the city clearly lifts off, breaking along the river-bed. It was one of those shots where you see some very pale faces when you brief the artist team on what you’re hoping to see. The first challenge was getting the city looking real. As mentioned, we started with aerial photography of one of the Aosta towns and a similar plate of the two tower building from our Hendon location. This gave Daniel Trbovic, our generalist in charge of the Sokovia flying city asset, a great reference for look and lighting, and Alex Tropiec, the compositor, a good guide to the haze and atmospherics. Just getting the non-floating city to look as real as we could was the first challenge – meanwhile the rigid team was getting the workflow of all that geometry through to fracture, rigid and particle simulations sorted out.”

“We finally saw some hardware renders of the breaking ground,” adds Snow, “then the buildings riding that ground and it was a relief, but that was when we got the FX TDs and the compositor laying in dust so we could focus on adding interior detail and more model-work on the buildings that needed it most. It took many months and I’m glad to say that while the shot wasn’t the first destruction shot we finaled, it was one of the first of the city lift off shots – sometimes shots like that can be the first to start and last to final!”

Putting the UI in UI

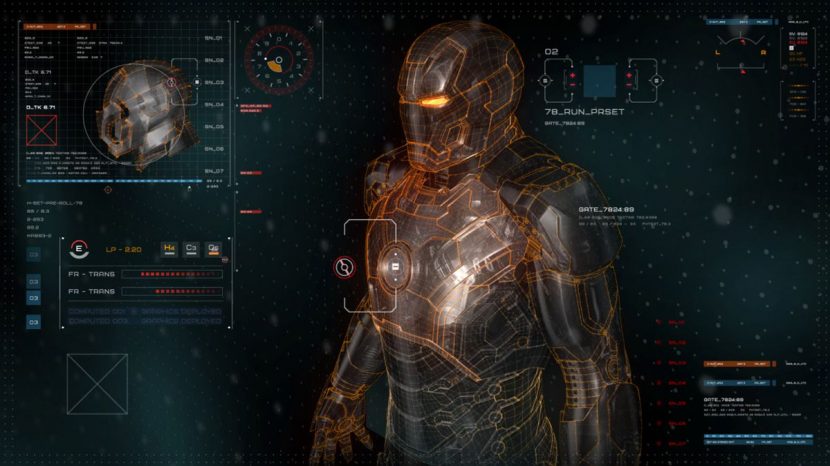

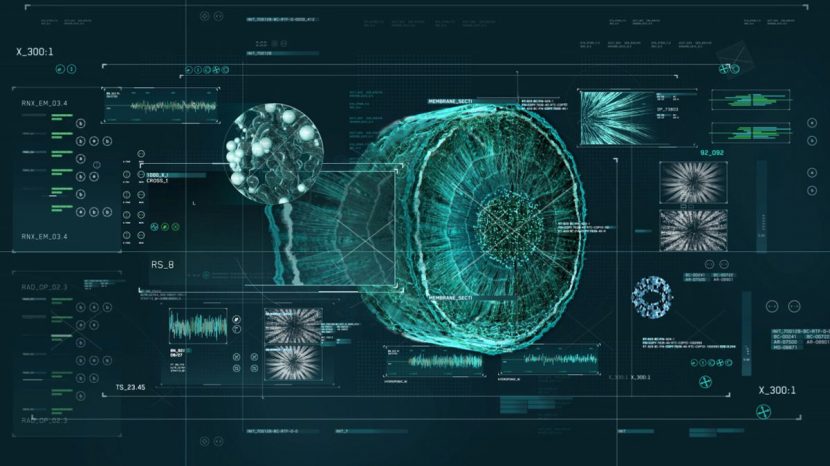

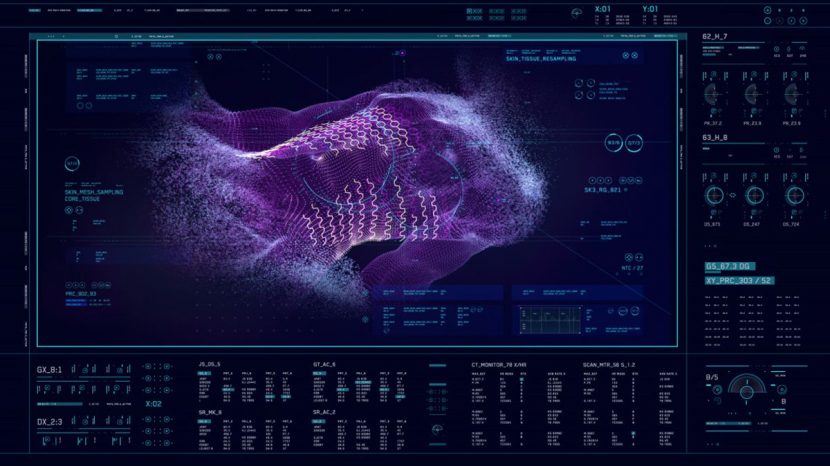

The action: On-set screens in Stark’s lab, Dr Cho’s laboratory, the Quinjet and other locations in the film were post-added and filled with unique imagery and animations that matched the character using them. Territory delivered the screen visuals.

Casting Territory: Having delved into screens and UIs on Guardians of the Galaxy, Territory was well placed to bring the UI in Age of Ultron to life. The studio was brought on early by Townsend and production designer Charles Wood to guide the graphics from pre-production and production through to VFX delivery. “It will always be more developed and consistent if we’re talking to the art department and the director in pre-production,” says Territory creative director David Sheldon-Hicks, “then dealing with actors and the director in production and then seeing that through in VFX.”

Above: watch Territory’s online reel for Age of Ultron.

An early design initiative was to come up with concepts that matched different characters in the film. “There was a certain bar – with Iron Man imagery in Stark’s lab especially – in terms of technical advancements that we had to show,” notes Sheldon-Hicks, “but with new characters in the mix we could also offer up new technology perspectives. We had to think about color palettes per character. The easy one was Banner – you just go for bright green with him. You’ve got Stark who historically was more in the oranges and golds mixed in with a bit of silver. Then we have Cho who was more surgeon/doctor and very advanced medical tech. The Fortress was more rough around the edges and more utilitarian.”

“Joss also wanted more realism and grit to the UIs,” adds Sheldon-Hicks. “This involved using more real-world content such as MRI scans or engineering drawings and blueprints and we let that collide with the more fantastic Marvel universe. When you look at data in its raw form it does become organic and becomes quite beautiful. So it was great to use more biological 3D scans. We also looked at 3D printing, the way things are 3D printed up, and applied those ideas to the way we animated the screens.”

Territory also took art department concepts – such as those for Loki’s staff – and digital models from the VFX vendors, and then began applying the studio’s own graphic styling to fit the UI environments. “It was all about building screens to tell the story and helping people locate what they need to see,” says Sheldon-Hicks. “So if you’re in Stark’s lab ideally what you want to see is an Iron Man suit.”

The next step was how the imagery and animation played out. Territory looked to make the UI part of a larger construct, meaning that imagery could be zoomed in on and shared across screens. “Usually screens are from one workstation and area,” notes Sheldon-Hicks, “but we were very aware that people would be sharing ideas and concepts and looking at different parts of a project and looking at parts zoomed out. The analogy was the Google Maps of UI – one person could be looking at the effect of plant cellular structures and be going in very close, whereas Stark might be coming out of that, say.”

Territory’s toolset revolved mostly around Illustrator and Photoshop for laying out styling designs. Wireframe work used Maya and would often involve ensuring the CG models did not look like a ‘dense mush’. “Then,” explains Sheldon-Hicks, “we bring it all into Cinema 4D which is a good workflow between AE and Cinema 4D – 2D animation in AE and 3D in Cinema 4D. Then if we do need to comp shots, we use NUKE to mock it up or do final delivery, but here we provided plates to another vendor to comp in. The great thing about Marvel is they’re striving for believability in a world of fantasy – and we can fit our work right into that.”

Tribute to the heroes

The action: Age of Ultron’s main-on-end titles feature sweeping camera moves around a marble-like monument of the Avenger heroes depicted in battle against Ultron and the Sub-Ultrons.

Effects casting: Perception handled the work after pitching several concepts to Marvel. “We presented all our ideas and the one they picked was what we titled ‘Earth’s mightiest heroes’, which was a monument,” explains Perception co-founder Jeremy Lasky. “It was something larger than life that you might see in a European plaza – something big and grand and heroic. It all looks like it’s been carved from one piece of rock.”

Perception’s first step was to acquire the relevant character models. “We were fortunate that they had scans and CG doubles of almost all of the actors in costume,” says lead artist Doug Appleton. “We were provided those from ILM and the other vendors.”

For the quality of the structure itself, the team looked to real monuments. “We have the MET right down the street from us,” notes Appleton, “so we went down there to check it out and one thing we learned was when you think of marble you think of veiny marble with cracks. We go to the MET and all of these beautiful sculptures are cream pristine polished marble. So we had to re-think our approach. We also noticed how much light travels through marble – it was surprising. So we tried to do a blend of what would someone really sculpt this out of but also what do people think of when they think of marble. Also, we had the Sub-Ultrons slightly dirtier to help distinguish between them and the heroes.”

To get buy-off from Marvel on the camera moves, Perception built the structure first and then developed different views. “We ended up with probably 100 different camera moves just in playblast form,” explains Appleton. “We whittled that down to 27 select shots and then made the edit from that. Then we would go in there and start doing final shots.”

Perception artists worked in ZBrush to sculpt the characters, then applied basic rigs in Maya for poses. “We’d then hand that off to the ZBrush guys and they would go in and do stuff like fixing things,” says Appleton. “For example, we made sure Iron Man’s panels weren’t going to bend when he stretches his arm out. The artists would straighten it out and make it look like a solid piece of metal that wouldn’t be deforming in any weird way.”

The final lighting and rendering was handled in Mental Ray through Maya. “We worked on the marble textures and shaders at the same time as lighting,” describes Appleton. “We wanted to experiment with different times of day. The initial look was magic hour but we considered what it had to look like at noon or overcast.”

Finding the right balance between marble quality and character detail proved challenging. “The models we received were hundreds of different panels and pieces,” says Appleton. “For us that wasn’t going to work with the sub-surface because we would start seeing how panels were intersecting. So we had to work out how do we combine all of those panels while still keeping that sharp detail but making it one solid thing.”

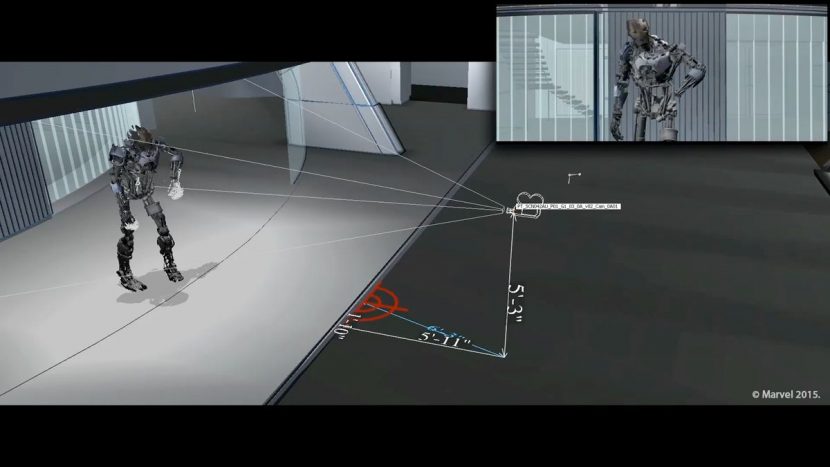

Powerful Previs