Earlier this year, Budapest studio Digic Pictures produced a stunning three-minute trailer for the BioWare game Mass Effect 3. The trailer, dubbed ‘Take Earth Back’, tells the story of an alien invasion as Earth is attacked by the game’s Reapers. We go in-depth with Digic to show how the cinematic was made – in stereo – featuring behind the scenes video breakdowns, images and commentary from several of the artists involved.

Above: watch ‘Take Earth Back’

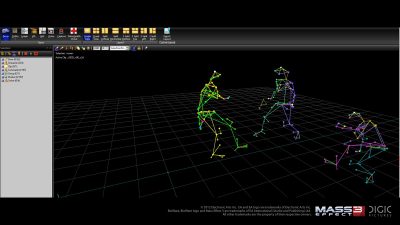

Motion capture

Artists: Csaba Kovari (Mocap TD), Istvan Gindele (Mocap TD), Gyorgy Toth (animator)

We used Vicon’s T160 camera system to record all motion for this piece. Currently, this is the most advanced optical motion capture system available, recording 16 megapixel grayscale images of 10 bit depth, at 120 frames per second. Our studio is equipped with 16 such cameras. What this means is that during the course of a shoot, when we record the paths of about 220 special markers, an enormous amount of data is generated: in the case of the Mass Effect piece the raw motion data was in the range of 100 gigabytes. We reconstruct the paths of these markers with Vicon’s Blade software, then re-target the data using Motion Builder onto the skeletons we work with in Maya.
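To get a feel for why the numbers grow so quickly, the raw sensor throughput implied by the camera figures above can be worked out as a back-of-the-envelope sketch. It assumes every camera delivers full uncompressed frames, which is only an assumption for illustration (the 100 gigabyte figure quoted above refers to the recorded motion data, not to raw video), and the function name is ours.

```python
def raw_sensor_rate_gb_per_s(megapixels, bit_depth, fps, cameras):
    """Uncompressed sensor throughput in gigabytes per second (1 GB = 1e9 bytes)."""
    bits_per_second = megapixels * 1e6 * bit_depth * fps * cameras
    return bits_per_second / 8 / 1e9


# Figures from the text: 16 megapixels, 10 bit, 120 fps, 16 cameras.
per_camera = raw_sensor_rate_gb_per_s(16, 10, 120, cameras=1)
whole_stage = raw_sensor_rate_gb_per_s(16, 10, 120, cameras=16)
print(f"{per_camera:.1f} GB/s per camera, {whole_stage:.1f} GB/s for 16 cameras")
```

Under these assumptions a single camera already produces a couple of gigabytes of pixel data per second, which is why only the reconstructed marker data is practical to keep.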

In general we capture 2-3 or sometimes even 4 actors’ movements at once together with their props (swords, shields etc). We try to build all props accurately to scale, as much as possible, so at the end of the day we receive quite accurate motions after data cleaning and re-targeting.

The mocap actors’ experience in choreographing fight scenes (Assassin’s Creed 2, Assassin’s Creed Brotherhood, Dragon Age 2, Assassin’s Creed Revelations, Mass Effect 3 teaser and trailer) is a great help to the director, and we have worked with them on numerous projects since Digic Motion was set up in 2009. For a bit of trivia, the little girl’s mocap was recorded with our colleague’s daughter.

Usually the mocap shooting days are preceded by rehearsal days: for example, if we have a two-day mocap shooting session, the actors need at least two to three days of rehearsal with the director. For the Mass Effect 3 – Take Earth Back trailer we had a twelve-day mocap session and recorded approximately seven hundred takes. Sometimes we record up to thirty versions of certain important scenes and the director picks those that will be used for the movie. One scene might be made up of several different versions, depending on which looks better from different camera angles.

Usually we spend long hours collecting references for designing the movements of the characters, whether they are humanoid or beast-like creatures. Luckily, many of our colleagues are fans of the Mass Effect games, so they were acquainted with its characters, props and unique universe well before we got into this project. Despite the precise, accurate mocap recordings, a lot of keyframe animation is still required for the characters. Often it is the difference in size and proportions between the mocap actor and his digital character that necessitates manual retouching by the animators.

Character animation

Artists: Istvan Zorkoczy (lead animator), Robert Babenko (animation supervisor), Adam Juhasz (animator), Gabor Lendvai (animator)

In most cases the basis for the character animation is provided by mocap recordings; during the actual animation phase we retouch and refine movements such as the interactions between characters and props, the posture of the hands and the facial expressions. Vehicles and other non-living objects have to be entirely animated in Maya using many scripts. The preparation of the recorded data is done in Motion Builder, where physical simulations “substitute” hazardous physical forces and dangerous movements that cannot be performed by the mocap actors. The route of each spaceship is determined by its own unique animation path, even where they are flying in formation, except for the fleet at the end of the trailer.

After the seven-year-old girl’s mocap recording was assembled into the shot we felt that it did not work well in the composition we had initially designed, and we decided to adjust it by hand instead of arranging a new mocap session. We needed to straighten her posture, but at the same time avoid the full extension (popping) of the limbs. We also had to entirely remove some gestures that affected the whole body’s movement, while making sure that we kept the little girl’s spontaneous, girly movements, and natural clumsiness. Modification of extreme poses near the anatomical limits may have wide-ranging effects on all body parts, especially when each limb makes contact with at least one object, or gets constrained to one (e.g. footsteps, manipulating the sunflowers, etc). Thus the control hierarchy that defined whether the hands follow the sunflowers or the other way around was often changed on the fly.

Watch the final animation of the girl.

Adjusting the girl’s animation onto the ground object was also not trivial, as the mocap was recorded on the flat studio floor, while the ground object in the scene had a lot of irregular topological features, which in fact were changed several times during production. We used 4-5 animation layers in Maya for fine tuning the above, which were merged into the base layer at some point, to keep it clean and manageable. We ended up straying so far from the original data that we applied traditional animation principles more so than we normally would, considering camera-space and keeping track of motion arcs on screen etc, bearing in mind that the silhouette will still change with the muscle and cloth simulations.

The dynamics of the blinks were the most time-consuming part of the facial animation. We paid special attention to continuity with the following super-closeup shot: the blink’s speed and timing, the posture of her head, and the direction in which she is looking. Following initial renders and simulation, there were several rounds of final touches on her face, as changes in these always seemed to affect her perceived facial expression.

Earth shot

Artist: Peter Hostyanszki (compositor)

That shot with the planet can be considered unique in the movie as it was entirely made in Nuke as a composite. During the concept phase we drew up not only the artistic guidelines for the final look of the shot, but also worked out the technical workflow to be able to realize it.

From the first animatic we could clearly foresee that a single projection could not work for the scene, as the camera pans across a large part of the planet, so we knew we’d need more camera projections. First we created these projections using several cameras, then the Earth’s basic texturing was done. We repositioned some continents for the sake of nicer shot composition, then the direction of the lights was finalized, with emphasis on the position of the borderline of light and shadow, the so-called terminator.

The matte paint artist was then given basic renders from this setup. The matte painting had to be painted in two separate parts, first the light side then the dark side. To cater for compositing, he needed to provide separate layers for the surface of the Earth, the city lights, the fires, smoke elements, explosions, two layers of clouds, and the lights of the explosions you can see between the clouds. To perfectly fit the two sides (the dark and the light side) the finished bright-side painting was projected to its place, then it was used as the base matte of the dark side.

Watch a progression showing how the Earth shot was created.

In the meantime, the basic stereo parameters were set for the scene using our in-house stereo camera rig, enabling us to see how the composite solutions underway would work in 3D. In the following step, the planet’s atmosphere was re-created in the composite based on the initial concept-painting, as the multi-camera projection setup meant this couldn’t be painted in 2D.

As the final matte paint was ready we displaced the surface of the Earth so that the matte paint would be projected on a more realistic geometry. After this came the animation and compositing of explosions and flashes. The flashes were painted on multiple layers, from the Earth’s surface upwards to the upper cloud layers, and several versions were tested before the animation was finalized.

One of the final steps was the addition of the Reapers to the shot. This changed a lot compared to the first concepts because we found that their sizes in proportion to the planet had a great influence on the perception of the planet’s size.

What made this scene even more exciting for us was that for the very first time we used our own live-action lens flares that we recorded ourselves. After extensive testing, we recorded the beautiful glosses produced by an anamorphic lens, with specific takes for each shot. These were then slightly retouched and amended for the final visuals, with procedural lens effects we had previously prepared in Nuke.

Matte painting – North American city

Artist: Peter Bujdoso (matte painter)

The first step for making a background image (matte painting) is the creation of a concept image based upon the director’s guidelines for his vision. The composition, atmosphere, the colors, the shades and shapes, the direction of the lights and the balance of the 2D and 3D elements are all defined at this point. Meanwhile we start collecting as many reference images as we can, generally photos, some of which might have certain details that could be useful as elements of the matte painting.

Once the concepts are approved by the client we start working on the matte paintings. We work almost entirely in Photoshop, creating psd files with several layers. The camera movements have great bearing on the layers’ complexity.

Objects that are level with the ground surface, such as the ruins, the debris and the tall buildings, are painted on a separate layer. Elements made of different materials that might need to be amended in compositing (animated glow, wavy water) go on other layers.

We follow the basic principles of traditional painting techniques, making use of the technical possibilities provided by the software’s tools. First we draw up the main volumes and shapes to create a composition, then work towards the finer details using small but important parts of the reference pictures to make the matte painting more realistic. Parts that could fit well are taken from the references and are integrated into the matte painting using Photoshop tools, adjusting colors and shapes as necessary. The proportion of “freehand painting” versus montage work depends on the actual project’s needs, whether the background needs to be more realistic or more painterly, and also on what we see and from what angle: if we can’t find a reference matching a certain shot’s angle, then we have to realistically paint it, and it is the same if the quality of the references (resolution) does not meet our technical needs. Last but not least, the matte paint artist’s character, and his or her personal preferences towards certain methods and techniques, also makes a huge difference.

It is also important to consider the painting’s “afterlife”, that is, whatever further adjustments or amendments might be needed during compositing. In the case of this shot we had to paint the smoke elements on a different layer each: the closer the smoke is the more distracting its stillness is, but this way we could apply some animated effects to them in post to add some realism. (The smoke column in the foreground had to be done with 3D smoke simulation separately from the matte painting. The steady background wisps near the horizon could stay motionless as you can’t really feel they are not moving.) The flames’ and the debris’ incandescence had to be painted on different layers because we could make them more realistic by adding slight vibrations to achieve heat haze effects. The matte paint is judged to be ready after the addition of all important 3D elements such as the Reaper ships.

Compositing – North American city

Artist: Ria Tamok (compositor)

The North American city shot is based on a digital painting. After the pre-production phase everything in the shot except the big smoke column and the alien spaceships (the Reapers) was done in Photoshop and Nuke.

It was crucial to consider the technical limitations of stereo as early on as the pre-production stage. The same applies for planning the matte paints. Even though in this shot the pre-production steps were quite similar to a standard single camera production, doing the image projections could only be done in the 3d space of Nuke, because we see different sides of the buildings in the beginning and the end of the camera move.

Using two cameras, we had to watch out for the framing, so we added some extra margin (+10%) on both sides of the image, to make sure that the painting covers the image for both eyes. The topography of the city was based on a concept design paint and a mood board done by the matte painter. Planning the shot, placing simple 3d geometry, setting the key light direction and the camera movement were all done in Maya. Defining the stereo screen plane also happened at this stage. Based on all this information we split the islands and buildings into different distance sections. After doing the preview renders we assembled the layers in Nuke.
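The extra 10% on both sides can be turned into pixel sizes with a small calculation. This is only a sketch: the 1920 pixel frame width is a hypothetical value, and we assume the 10% is measured against the frame width and added on each side.

```python
def padded_width(width_px, margin_fraction=0.10):
    """Return (total width, per-side margin) when a margin is added on each side."""
    margin = round(width_px * margin_fraction)
    return width_px + 2 * margin, margin


total, margin = padded_width(1920)  # hypothetical frame width
print(f"paint {total} px wide, with {margin} px of spare image on each side")
```

The spare image on either side is what keeps the painting in frame for both eyes, since the left and right cameras see slightly shifted views.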

By default we’d use the camera set by the director, but in this case we had two cameras (both slightly offset from the original shot camera) and we wanted to avoid rendering an extra image. So we chose the left eye as our main camera, and used that for compositing, and also used it for projecting the matte paints onto 3d objects.

The actual pre-production of the matte paint is done at this stage. Based on the camera move we choose the frame where we project the image back to the 3d geometry, and set the position and focal length of the projector camera. That is when the previously rendered 3d sequence gets replaced by the Nuke projection. Because of the stereo effect the shot wouldn’t work with a simple painted background; we needed the actual 3d buildings too. So we exported simple 3d geometries from Maya, loaded the obj files into Nuke and projected the matte paint on the actual objects.

We assembled the psd file for the matte painter from the basic 3d renders. As a perspective cue we also render an image with a checkerboard texture added to the objects. Because the projection happens onto actual 3d geometry the painter had to paint the buildings respecting the contours of the buildings from the reference image. But if a painted building really required a deviation from this simple geometry we modified the 3d object to match the painted form. Occasionally we projected the back and front side of a demolished building separately. The motion blur coming from the camera movement was calculated by Nuke when doing the 2d rendering of the 3d space.

Usually our matte paints are done without defocus and atmospheric effects: adding these effects at the compositing stage allows us to match them more easily to the other shots of the film. We regularly use painted smoke, clouds and fire elements, just as in this shot. Because of the stereo images though, we had to take special care that the painted smoke layers were all properly placed in Nuke’s 3d space. We matched Nuke cards to the exported 3d objects and the smoke was rendered on them.

The 3d geometries were used in Nuke to make the buildings occlude the Reapers and to integrate them with the smoke at ground level. The matte painters also created masks that we projected into the 3d space the same way as the matte paint image, and used those masks in the compositing process.

In the meantime, the render of the animated Reapers was completed. To add their moving shadows to the painted background we rendered separate shadow and occlusion passes, and used them as masks for color correcting the background. Since the basis of the shot is a digital painting we had to heavily color correct the Reapers and the foreground FumeFX smoke to match the painted background. Atmosphere and defocus were added in post, and the tone mapping and grading step made the shot match the look of the rest of the film.

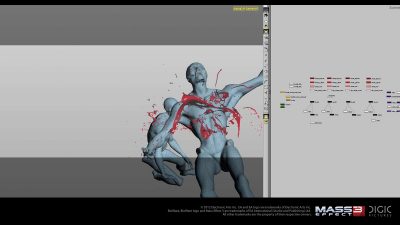

Blood effects

Artist: Daniel Bukovec (Houdini TD)

For blood effects we utilized Houdini. It was vital for us to have artistic control. There were two types of blood splashes: blood from gun shots and blood from blade cuts. Their speed and look differs greatly, so it was crucial to have maximum control over them.

For gun shots we sculpted a velocity field as the starting point for the FLIP fluid simulation, and the FLIP solver took over from there. The starting shape could be drawn by hand as curves or as sculpted polygon geometry, then point velocities were applied with various criteria like main direction, turbulence, falloffs etc. Fast moving characters in particular posed a challenge for simulating fast gun shots: they had to be slowed down to have enough information on frames, then brought back to actual speed.
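The idea of seeding a simulation with hand-shaped point velocities can be sketched in plain Python. This is not Houdini code and not the setup used on the trailer; the function, its parameters and the sample curve are all hypothetical, and the linear falloff and random jitter simply stand in for the "main direction, turbulence, falloffs" criteria mentioned above.

```python
import math
import random


def splash_velocities(points, origin, direction, speed=8.0,
                      radius=0.5, turbulence=0.3, seed=1):
    """Return one initial velocity per point.

    Each velocity is the normalized main direction plus a random per-axis
    jitter of up to `turbulence`, scaled by `speed` and by a linear falloff
    that reaches zero at `radius` from `origin`.
    """
    rng = random.Random(seed)
    length = math.sqrt(sum(c * c for c in direction))
    unit = [c / length for c in direction]
    velocities = []
    for p in points:
        falloff = max(0.0, 1.0 - math.dist(p, origin) / radius)
        jitter = [rng.uniform(-1.0, 1.0) * turbulence for _ in range(3)]
        velocities.append(
            tuple(speed * falloff * (u + j) for u, j in zip(unit, jitter))
        )
    return velocities


# Points sampled along a hand-drawn curve (hypothetical values).
curve = [(0.0, 0.0, 0.0), (0.1, 0.05, 0.0), (0.3, 0.1, 0.0), (0.6, 0.1, 0.0)]
for v in splash_velocities(curve, origin=(0.0, 0.0, 0.0), direction=(1.0, 0.5, 0.0)):
    print(tuple(round(c, 2) for c in v))
```

Points beyond the falloff radius start at rest, so the artist controls both the shape of the burst and how far it reaches before the solver takes over.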

Since the cinematic was made in stereo most of the effects were rendered in 3D, but we had to use a few real elements, like blood spray, to sell a gun shot. Blade cuts were a bit more towards pure fluid simulation, as these effects are much slower than a gun shot, so it is easier to control them. An additional effect was the “flying flesh”, which was made by defining a wound form, usually a squashed/deformed cylinder, which was cut up into little pieces with a Voronoi fracture tool and sent to cloth simulation, so the pieces could bend a bit. We wrote an Alembic translator for Houdini, to export the resulting geometry to Maya, to be rendered with Arnold.

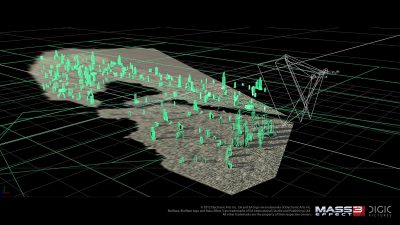

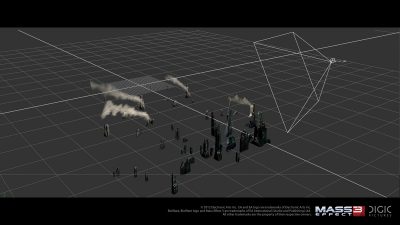

Destruction effects – Islamic city

Artists: Ferenc Ugrai (Houdini TD) and Viktor Nemeth (Lead Effects TD)

What the director wanted to see through the camera was already relatively well worked out during the animatic phase. However, the initially strictly artistic considerations made the execution of dynamic simulations difficult, as these usually need to be based on the laws of physics. Sometimes we have to digress from reality in terms of physical actions to achieve those visual results which we want to see, yet in the end these simulations have to look realistic and believable.

When dealing with dynamic settings, we had to bear in mind from the beginning that we needed to have maximum control over the “accidental.” In this case, we had to pre-define what events will be affecting each element and when exactly – then the execution was left up to the physics, allowing us to create controlled chaos.

Since physical simulations tend to require lengthy calculations, it sometimes happens that we don’t have enough time to try out different variations to work towards the desired results. In this case, and especially if the given effect is only of secondary importance, we take full control over the animation and the role of simulation is diminished. Example: in the moment when the top of the skyscraper falls into the camera some viewers might not realize at first that at the bottom of the picture a laser ray cuts another building in two. First the windows break then the supporting pillars start deforming and at the end the walls and slabs are swept away by the laser.

The building was modeled in high resolution (millions of polygons), so rigid-body dynamics was not even an option. We had to build a system in which each and every constituent part was substituted with a point. This made it possible for us to set up the destruction by the laser in real time. Houdini’s procedural system was a great help in achieving this. At the SOP level we connected custom deformers that reacted to the laser’s diverse characteristics. At the end the amended point cloud was used to deform the high-res geometry almost in real time.
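The proxy approach, where cheap stand-ins are deformed and the heavy geometry follows, can be illustrated with a much simplified sketch. Everything here is an assumption made for illustration: each piece is reduced to its centroid, the "laser deformer" is a plain distance-based push, and pieces are only translated, whereas the production setup used custom Houdini deformers.

```python
import math


def centroid(verts):
    """Average position of a piece's vertices, used as its proxy point."""
    n = len(verts)
    return tuple(sum(v[i] for v in verts) / n for i in range(3))


def laser_push(points, hit, push_dir, radius, strength):
    """Displace proxy points near `hit` along `push_dir`, fading with distance."""
    moved = []
    for p in points:
        weight = max(0.0, 1.0 - math.dist(p, hit) / radius)
        moved.append(tuple(c + strength * weight * d for c, d in zip(p, push_dir)))
    return moved


def apply_to_pieces(pieces, old_points, new_points):
    """Translate every vertex of a piece by the offset of its proxy point."""
    result = []
    for verts, old, new in zip(pieces, old_points, new_points):
        offset = tuple(n - o for n, o in zip(new, old))
        result.append([tuple(c + d for c, d in zip(v, offset)) for v in verts])
    return result


# Two hypothetical wall pieces, each a short list of vertices.
pieces = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (1.0, 1.0, 0.0), (0.0, 1.0, 0.0)],
    [(10.0, 0.0, 0.0), (11.0, 0.0, 0.0), (11.0, 1.0, 0.0), (10.0, 1.0, 0.0)],
]
proxies = [centroid(p) for p in pieces]
pushed = laser_push(proxies, hit=(0.5, 0.5, 0.0), push_dir=(0.0, 0.0, 1.0),
                    radius=2.0, strength=3.0)
deformed = apply_to_pieces(pieces, proxies, pushed)
print(deformed[0][0], deformed[1][0])
```

Because the deformer only ever touches one point per piece, its cost depends on the number of pieces rather than on the polygon count of the building.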

The explosions, flame and smoke effects were done in FumeFX. Thanks to our in-house tools we made an asset out of each and every FumeFX grid, then we rendered these in Arnold with the help of our integrated project management software. For the Reaper laser beam we created three FumeFX simulations: one for the moving white core, one for the red vortex laser around it, and one more for the heat-haze effects. The three flashes around it were object based, so altogether we worked on six layers.

The buildings were broken up and dynamically simulated in Houdini, and the result was imported into 3ds Max as a vertex cache. First we created a rough animatic or previz for the layout and animation of the explosions using simple geometry (animated white balls). When the director and the art director approved it we made a fifty-frame simulation that we substituted in for these animatic explosions. Ultimately, we rendered each and every explosion separately, along with their lights and reflections – this way we could tweak the timing of the explosions in Nuke. For the part of the building that falls into the camera, all the explosions and flames were simulated on the spot. Where the laser cut the building in two, we rendered a separate mask, so the building below the flaming part was burnt out later.

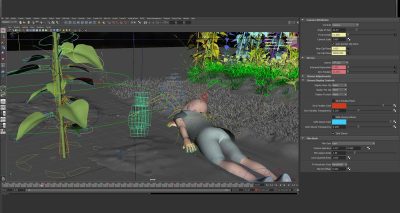

Asian industrial city shot

Artist: Viktor Nemeth (Lead Effects TD)

We started working according to the moodboard, so altogether we had five massive explosions, each with their own flame and smoke effects, and debris. Several additional dust and smoke layers were placed into the scene right where the laser ray hits the ground.

As we progressed with the scene more and more things had to be changed; even the camera setup was changed midway through the project. The FumeFX grids had to be enlarged for the new camera moves and the missing parts had to be simulated.

The simulation of such an explosion is made up of three phases. First we run a low-res simulation (approx. 150x200x300 voxels). Once it is approved by the supervisors we calculate the wavelet pass (at this point approx. 1.2-1.6 GB/frame). Finally we minimize the grid and take the temperature and speed channels out, so that only an 80-160 MB/frame cache gets to the server.

In this scene we also used indirect lights bounced from the FumeFX grid – rendered in a separate pass, of course. This scene ended up with nearly thirty effect layers, plus the light passes generated from the effects. The debris and sparks were made in 3ds Max, driven by the low-res FumeFX simulation’s speed, helping us to achieve the appropriate turbulent movements. In total, all the simulation files for the Mass Effect TEB trailer (including explosions, smoke columns, dust, debris, haze etc) took up approximately 2.7 TB of space on the server.

Lighting – ‘sunny to shaded girl’ shot

Artist: Balazs Horvath (Lead Compositor)

For the lighting of the sunflower field scenes, we tried to emulate a physically accurate sunny environment, for which creating our own HDR environment image seemed to be the best solution. We used a sun spotlight together with its environment skydome that had no sunlight on it – this is what we made the HDR image for. We tried to get the colour and intensity of the sunlight from the HDR image but in order to do that, we had to take a photo of the direct sunlight in a way that it would not exceed the CCD’s sensitivity. During the shoot of the chrome ball, we also took head-reference pictures so that we could compare these with our 3d faces that were rendered with our skydome+spotlight setup. These played an important role in helping us to refine the face shader. We repeated this comparison with a cloudy sky as well, even though ultimately we used only the sunny HDR in the movie.

Watch a breakdown of the HDR lighting setup.

Our aim in compositing is to achieve a photorealistic impression. Wherever possible, we try to achieve this by using physically accurate models. The workflow was set up by modeling real-world processes. Optical effects are added on raw renders after emulating environmental effects. These are followed by color corrections that aim to reproduce the physical characteristics of film stock, then comes the final grade.

To help speed up the compositing process, we use a lot of automated tools that are applied in a standard order. The first step is the adjustment of render layers with their respective passes. These “pass-adjusted” renders then receive further adjustments down the line, such as color corrections, atmospheric effects (air perspective, depth based color correction), defocus, and lightwrap. After all this come the global effects, like white balance, and lens effects: vignette, lens distortion, lensbloom, glow, lensflare and at the end of it all comes the tonemap and grading for the given sequence.
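The fixed order of operations described above can be written down as a simple plan builder. This is only an illustrative sketch: the step names are our shorthand for the stages listed in the text, and the explicit `merge_layers` step between the per-layer and global stages is an assumption.

```python
# Per-layer steps, in the order given in the text.
PER_LAYER_STEPS = ["pass_adjust", "color_correct", "atmosphere", "defocus", "lightwrap"]

# Global steps applied once to the combined image.
GLOBAL_STEPS = ["white_balance", "vignette", "lens_distortion", "lens_bloom",
                "glow", "lens_flare", "tonemap", "grade"]


def build_comp_plan(layers):
    """Return the ordered list of (target, step) operations for a shot."""
    plan = [(layer, step) for layer in layers for step in PER_LAYER_STEPS]
    plan.append(("all", "merge_layers"))  # assumed step, not named in the text
    plan.extend(("all", step) for step in GLOBAL_STEPS)
    return plan


plan = build_comp_plan(["ground", "sunflowers", "girl"])  # hypothetical layer names
for target, step in plan:
    print(f"{target:>10}: {step}")
```

Keeping the order in one place is what makes it possible to automate: every shot runs the same sequence, and only the parameters differ.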

Out of the above, the values for the atmospheric effect and the defocus can be adjusted all together in one place, even though these are calculated separately for each layer. The defocus uses a single lensed physical model together with a Frischluft plugin. Camera information is gained from the imported camera’s meta data. The point of focus can be animated in compositing. In addition to the above, we have several in-house gizmos that aid us in making the compositing process more flexible. In these examples the main steps that are generally in the compositing workflow can be followed, starting from raw render layers all the way through to final grading.
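A single-lens defocus model is usually built on the textbook thin-lens circle of confusion, which can be sketched as follows. We do not know how the plugin computes its blur internally, so this is only the standard formula; the function name, parameters and sample values are ours.

```python
def circle_of_confusion_mm(focal_mm, f_stop, focus_dist_mm, subject_dist_mm):
    """Thin-lens blur circle diameter on the sensor, in millimetres.

    focal_mm        focal length of the lens
    f_stop          aperture number (focal length / aperture diameter)
    focus_dist_mm   distance to the plane that is in focus
    subject_dist_mm distance to the object being blurred
    """
    aperture = focal_mm / f_stop
    magnification = focal_mm / (focus_dist_mm - focal_mm)
    defocus = abs(subject_dist_mm - focus_dist_mm) / subject_dist_mm
    return aperture * magnification * defocus


# Hypothetical values: 50 mm lens at f/2, focused at 2 m, object at 4 m.
blur = circle_of_confusion_mm(50.0, 2.0, 2000.0, 4000.0)
print(f"blur circle: {blur:.3f} mm on the sensor")
```

Because the blur depends only on camera data and depth, animating the point of focus in compositing amounts to changing one input and re-evaluating every layer.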

Watch a breakdown of the comp layers for the sunflowers field scene.

In this example above you can see the compositing steps for the “half sunny” phase, with three shot-specific solutions:

1. The compositing solution for the Reaper’s shadow animation that played an important role in the scene’s lighting

2. Adding details to the ground textures in post, by utilizing the UV projection pass

3. The sunflower layer’s specular modification

In the above example – the sunflower scene’s “shadow phase” compositing steps – there was emphasis on adjusting the girl’s layer via its passes. We replaced the reflection in her eyes and tweaked the face and eye shaders based on the reference photo.

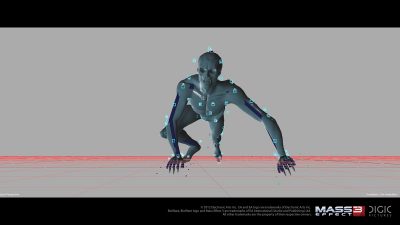

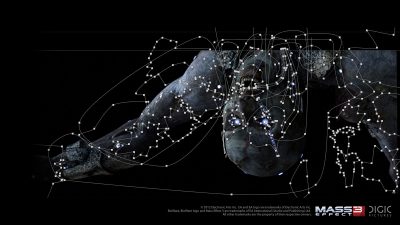

Husk transformation scene

Artist: Peter Hostyanszki (compositor)

The “transformation” scene had been much anticipated by fans: how exactly does a human being turn into a Husk? According to the given concept the human body transforms within a couple of days. Due to the brevity of the scene, the best solution for showing the passing of time was to employ a “time lapse” technique.

First we had to work out the lighting in Maya. The various periods of the day had to be lit and animated frame by frame, to show the way the weather changes from sunny, clear blue skies into cloudy, overcast weather that better emotionally matches the darker, moodier scenes to come.

The second half of the scene was done first in compositing, then came the time lapse animation working backwards from the end of the transformation. The sky had to be rotated frame by frame, then graded to the actual lighting. The animated atmospherics played an important role in helping to get across the idea of time passing, and further helped to enhance the vigorous time lapse effect.

Once the scene itself was assembled, the transformation of the character was to follow. Both characters (human and Husk) were rendered separately through the entire range of the shot with the timelapse lighting, then we could proceed with the work in compositing. It was important to stabilize the movements of the camera shakes on the rendered character layers so that the masks would remain at their respective places. This process turned out to be quite easy as we used the data from the render’s position pass in the tracker, and so we could easily reapply the camera movements onto the final layers.

The next step was to fit the two bodies onto each other, as they had rather differing shapes and volumes. We used warps to fit each character’s body parts to that of the other character – then we animated them in opposite directions: at the beginning the Husk was totally deformed so that it would have the features of a human being, then by the end of the transformation it was back to its original shape. With the human being it was the opposite. Once the two bodies perfectly covered each other, we could proceed with the animated masks and paints that helped create the illusion of transformation.
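The two opposing warps can be thought of as one blend parameter driven in opposite directions on the two layers. The sketch below uses a plain linear blend between matching 2D warp points, which is an assumption made for illustration; the point coordinates are hypothetical.

```python
def lerp(a, b, t):
    """Linear blend from point a (t = 0) to point b (t = 1)."""
    return tuple(x + (y - x) * t for x, y in zip(a, b))


def warp_pair(human_pt, husk_pt, t):
    """Positions of a matching warp point on both layers at progress t in [0, 1].

    The human layer is warped by t towards the Husk shape, while the Husk
    layer is warped by (1 - t) towards the human shape, so the two layers
    always line up.
    """
    human_layer = lerp(human_pt, husk_pt, t)
    husk_layer = lerp(husk_pt, human_pt, 1.0 - t)
    return human_layer, husk_layer


# Hypothetical matching shoulder points, in pixels.
for t in (0.0, 0.25, 1.0):
    print(t, warp_pair((100.0, 200.0), (120.0, 180.0), t))
```

At the start both layers sit on the human shape and at the end both sit on the Husk shape, which is what allows masks painted on one layer to reveal the other without any sliding.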

Once the initial mask animation was ready on the most important parts such as the eyes, the mouth and the neck, we proceeded with the cracking and decay of the skin, then the vaporization of the metal parts. As we were making the new masks it was important that the effect was driven by them directly, so that we could immediately see the results as we were drawing. We used the UV pass to map a number of dirt and ground textures onto the character, to serve as a basis for the cracking of the skin and the vaporization of the metal parts. The characters underwent a number of animated color corrections using these masks, in opposing directions, so while our man started to look sicker and sicker, the Husk got prettier and prettier. These color corrections were added at several stages of the pass-assembly part of the composite tree, for example as separate nodes on the color, SSS (sub-surface scattering), reflection, and specular passes, but also further down the tree, on the assembled branch.

The animation of the transmutation was next. The most important parts, such as the eyes, the mouth, the neck, the ears, then the clothes and metal parts, were treated separately one by one. We produced several animation tests for the pipes which give the Husk much of its character, to have them appear as dynamically and dramatically as possible. In the final step, we worked out the disappearing of the hair and the metal parts in detail, frame by frame, making sure they disappear gradually, to give a sense of a beautiful, slow process of decay. As a final touch, we created some shadows on those parts of the Husk where the human character was occluding lit parts of the Husk’s body.

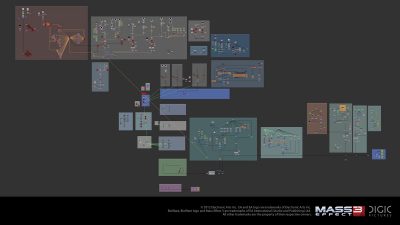

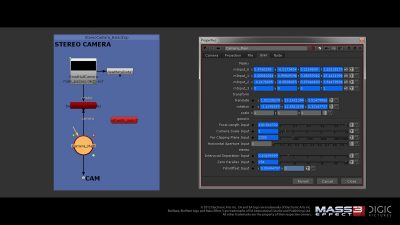

Stereo pipeline

Digic’s stereo 3D pipeline has been engineered according to usual standards. The 3D phase is built upon Maya’s two camera stereo rig (we’ve taken out the middle camera completely, to spare our rendering resources). Depending on the demands set by the spatial structure of a given shot, we sometimes used more than one stereo rig, with highly varied parameters. We had to doubly render (left and right camera) and store each layer and their various component channels such as normal, position, fresnel, incidence, reflection, refraction, specular, translucency, txcoord, zdepth, light passes, and so forth.

A script helped to make available to our Nuke stereo cameras all relevant meta-data written into our 3d renders, thus we could change their parameters during compositing. This proved sufficient for matte paintings projected onto exact 3d geometry – however for projections onto simple planes, we had to create 2D masks or fake a z-like channel in Photoshop to help place the matte painting in stereo space.

The first shot in the film especially posed several new challenges for us to solve. This shot encompasses enormous space: the clouds seen at the beginning are at a distance of several kilometers, while in the foreground we have a ladybird marching on a blade of grass at a distance of about 40 centimeters. All the while, the stereo camera keeps changing its focal length between roughly 60 and 160 mm.

The scene’s perceived space is constantly changing due to the camera’s movement, while the unfolding events keep changing the weighting on the various components of this space – where exactly are we looking, and when? The stereo3D parameters (interaxial separation, screenplane’s position) must also change over time accordingly. This was one of a number of shots where we performed additional depth-grading and parallax refinements on each layer in Nuke, primarily to reduce the differences between planes at various depths in such a way that the plasticity of the little girl and her environment – indeed their perceived depth in space – could be maintained.
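Why the stereo parameters cannot stay fixed during a zoom can be seen from the standard parallax relation for a parallel rig converged by shifting the images. This is a generic sketch, not Digic’s rig: the 36 mm film back, the 5 mm interaxial and the 1 m screen plane are hypothetical values, while the 40 cm foreground distance and the 60 to 160 mm focal range come from the shot described above.

```python
def parallax_fraction(focal_mm, interaxial_mm, convergence_mm, depth_mm,
                      sensor_width_mm=36.0):
    """Screen parallax as a fraction of image width.

    Positive values place a point behind the screen plane, negative values
    in front of it; a point at the convergence distance has zero parallax.
    """
    disparity_mm = focal_mm * interaxial_mm * (1.0 / convergence_mm - 1.0 / depth_mm)
    return disparity_mm / sensor_width_mm


NEAR_MM = 400.0        # ladybird at about 40 centimeters
FAR_MM = 3_000_000.0   # clouds, assumed here to be 3 km away

for focal in (60.0, 160.0):
    near = parallax_fraction(focal, 5.0, 1000.0, NEAR_MM)
    far = parallax_fraction(focal, 5.0, 1000.0, FAR_MM)
    print(f"{focal:.0f} mm: near {near:+.2%}, far {far:+.2%} of image width")
```

With everything else held constant the parallax scales linearly with focal length, so zooming in stretches the depth budget unless the interaxial separation or the screen plane is animated to compensate.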

In general, the lessons we learned about stereo3D during this project fit well with our earlier experiences with virtual technologies: in order to successfully solve the task at hand, first we must develop a thorough understanding of the underlying physics, human physiology, and perhaps most of all, the workings of the human psyche.

All images and clips copyright EA International (Studio and Publishing) Ltd. Courtesy of Digic Pictures.
