Rarely do companies seriously attempt to change the way we traditionally work in visual effects, but French start-up Isotropix, with its product Clarisse iFX, is attempting just that. Clarisse iFX aims to change the way CGI pipelines work, via one central mantra: reduce the amount of time from any interaction to the machine starting to render final images. It wants minimum time to first pixel output in any situation.

Clarisse iFX is a new style of high-end 2D/3D animation software. Isotropix is a privately owned French company that has been working on Clarisse iFX for several years. The software has been designed to simplify the workflow of professional CG artists, letting them work directly on final images while alleviating the complexity of 3D creation and of rendering out many separate layers and passes. Clarisse iFX is a fusion of compositing software, a 3D rendering engine and an animation package. Its workflow has been designed from scratch to be ‘image-centric’ so that artists can work while constantly visualizing their final image with full effects on. It wants artists to see the final image as much and as constantly as possible.

At its core, Clarisse iFX has a renderer that is primed and ready to start final renderings within milliseconds of your finger touching something requiring a re-render. It provides a lot more, but this central mantra means that the program feels remarkably fast, far faster than it should given that the program is rendering on CPUs and not GPUs.

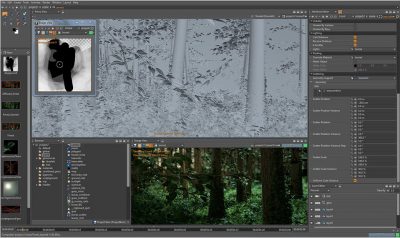

This image shows a 30 billion polygon procedural forest, where the procedural rules are similar to typical particle animation logic. The trees here use a modified anisotropic solution not unlike that used for, say, fur. The forest uses Clarisse’s combiners, a concept similar to groups crossed with instancing, which allow for density-based point cloud generation on objects. The artist sampled the underlying trunk geometry and generated points that were then used to procedurally scatter leaves and branches.
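To make the idea of density-based point generation concrete, here is a minimal Python sketch. It is not Clarisse's implementation or API; the function names, the `density` parameter (points per unit of surface area) and the triangle-list input are all assumptions made for illustration. It samples each triangle in proportion to its area, which is one common way to produce points on which leaves or branches could then be instanced.

```python
import math
import random


def triangle_area(a, b, c):
    """Area of a 3D triangle from the cross product of two edges."""
    ab = [b[i] - a[i] for i in range(3)]
    ac = [c[i] - a[i] for i in range(3)]
    cross = (
        ab[1] * ac[2] - ab[2] * ac[1],
        ab[2] * ac[0] - ab[0] * ac[2],
        ab[0] * ac[1] - ab[1] * ac[0],
    )
    return 0.5 * math.sqrt(sum(x * x for x in cross))


def scatter_points(triangles, density, rng):
    """Scatter roughly `density` points per unit area over the triangles.

    `triangles` is a list of (a, b, c) vertex triples (hypothetical input format).
    """
    points = []
    for a, b, c in triangles:
        expected = triangle_area(a, b, c) * density
        count = int(expected)
        # Round the fractional remainder stochastically so small faces still get points.
        if rng.random() < expected - count:
            count += 1
        for _ in range(count):
            # Uniform barycentric sample: fold the unit square onto the triangle.
            u, v = rng.random(), rng.random()
            if u + v > 1.0:
                u, v = 1.0 - u, 1.0 - v
            w = 1.0 - u - v
            points.append(tuple(w * a[i] + u * b[i] + v * c[i] for i in range(3)))
    return points


# A unit square in the z = 0 plane, split into two triangles.
square = [
    ((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (1.0, 1.0, 0.0)),
    ((0.0, 0.0, 0.0), (1.0, 1.0, 0.0), (0.0, 1.0, 0.0)),
]
scattered = scatter_points(square, density=100, rng=random.Random(42))
```

Each scattered point would then serve as the origin for an instanced leaf or branch, so raising or lowering `density` thins or thickens the foliage without touching the source models.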

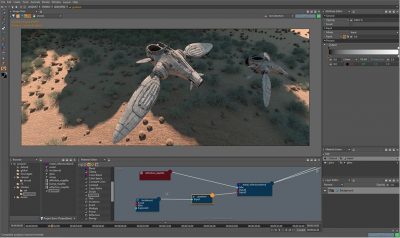

Extending out from this core are some other remarkable innovations. Most interesting is the layers approach to 3D. Everything in Clarisse is abstracted to a render element – even the UI. It takes the object-based workflow approach even further than, say, Nuke. In practical terms, this means a blurring of Photoshop layers with common 3D approaches. For example, you could have a complex 3D forest scene that is then textured onto a toy 3D ball, which is then placed in a 3D room scene, which in turn appears on a flat postcard. As with Photoshop layers, you could apply any 2D color correction or image processing filter to any of these stages, such as linear dodge, hue/sat (color correction), 2D defocus and Gaussian blur (with full 3D motion blur also possible and depth of field coming), but unlike Photoshop, nothing is explicitly rendered out. They all ‘sort of’ live inside the one primary file. There is no need for a user to really concentrate on whether the image layer is a 2D image or 3D image. At any time you can go back to the tree, the toy ball or the room geo.

This makes the product a natural fit for matte painting and digital environments. Artists can project textures that are actually 3D over complex and multiply-instanced geometry, all rendered in beautiful detail. The program is very flexible. A digi-environment artist can build, model and tweak environments of incredible complexity in remarkably short times. In fact, one would expect digi-environment artists to be the first to adopt the product when it rolls out later in the year.

The new workflow is to import models from any software package, such as Maya, after which they can be instanced (with the help of volumetric randomizing placement tools), lit and rendered extremely quickly. In our testing, within what seemed like seconds we had built a 2.7 billion polygon scene out of just a few models, producing great scene complexity with very little effort. The program supports the import of complex models, including vector-displaced, vector-normal Mudbox files.
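The arithmetic behind this kind of scene growth can be sketched in a few lines of Python. Everything here is hypothetical: the mesh names, the per-model polygon counts, the number of copies and the placement volume were chosen only so that the total matches the 2.7 billion figure above, and the classes are not part of any Clarisse API. The point is that each instance stores a reference to a shared mesh plus a transform, so the polygons held in memory stay small while the polygons the renderer sees multiply.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Mesh:
    name: str
    polygon_count: int


@dataclass(frozen=True)
class Instance:
    mesh: Mesh            # shared reference, not a copy of the geometry
    position: tuple
    rotation_deg: float
    scale: float


def scatter_instances(meshes, copies_per_mesh, bounds, rng):
    """Place `copies_per_mesh` randomized instances of each mesh inside a box."""
    (x0, y0, z0), (x1, y1, z1) = bounds
    instances = []
    for mesh in meshes:
        for _ in range(copies_per_mesh):
            position = (rng.uniform(x0, x1), rng.uniform(y0, y1), rng.uniform(z0, z1))
            instances.append(
                Instance(mesh, position, rng.uniform(0.0, 360.0), rng.uniform(0.8, 1.2))
            )
    return instances


# Hypothetical numbers: three source models of 900,000 polygons each.
meshes = [Mesh("tree_a", 900_000), Mesh("tree_b", 900_000), Mesh("rock", 900_000)]
instances = scatter_instances(meshes, 1000, ((0, 0, 0), (500, 50, 500)), random.Random(7))

stored_polygons = sum(m.polygon_count for m in meshes)
rendered_polygons = sum(i.mesh.polygon_count for i in instances)
print(f"stored: {stored_polygons:,}  rendered: {rendered_polygons:,}")
```

With these assumed numbers, 2.7 million stored polygons become 2.7 billion rendered ones, a thousandfold increase driven entirely by lightweight transform records.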

The renderer is Isotropix’s own global illumination Monte Carlo renderer – soon to be working with an irradiance cache (the program is still in private beta). It is not a physically-based shader system, and it does not approach rendering from, say, the complete realism simulation of a Maxwell renderer, but it works hard to produce photorealism and, most importantly, it boasts speed via layer and image caches. If it does not need to fully re-render something, it doesn’t, and the end target quality is an easily accessible variable.
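How Clarisse implements its layer and image caches is not described, but the general principle of "don't re-render what hasn't changed" can be illustrated with a small Python sketch. The `Layer` class, its parameters and the hashing scheme below are assumptions made for this example only. Each layer derives a cache key from its own parameters plus the key of the layer beneath it, and re-renders only when that key changes.

```python
import hashlib
import json


class Layer:
    """A hypothetical render layer that caches its result by input fingerprint."""

    def __init__(self, name, params, upstream=None):
        self.name = name
        self.params = dict(params)
        self.upstream = upstream
        self.render_count = 0
        self._cache_key = None
        self._cache_value = None

    def key(self):
        # Fingerprint = own name + own parameters + fingerprint of the upstream layer.
        payload = json.dumps(self.params, sort_keys=True)
        parent = self.upstream.key() if self.upstream else ""
        return hashlib.sha256((self.name + payload + parent).encode()).hexdigest()

    def evaluate(self):
        key = self.key()
        if key != self._cache_key:
            source = self.upstream.evaluate() if self.upstream else None
            self._cache_value = self._render(source)
            self._cache_key = key
        return self._cache_value

    def _render(self, source):
        # Stand-in for an expensive render; it only records that work happened.
        self.render_count += 1
        return (self.name, tuple(sorted(self.params.items())), source)


forest = Layer("forest", {"trees": 1000})
ball = Layer("ball", {"radius": 1.0}, upstream=forest)
grade = Layer("grade", {"hue_shift": 0.1}, upstream=ball)

grade.evaluate()                   # first pass renders all three layers
grade.params["hue_shift"] = 0.3    # tweak only the 2D color correction
grade.evaluate()                   # forest and ball come straight from cache
counts_after_grade_tweak = (forest.render_count, ball.render_count, grade.render_count)

forest.params["trees"] = 2000      # change the bottom of the stack
grade.evaluate()                   # everything downstream must re-render
counts_after_forest_change = (forest.render_count, ball.render_count, grade.render_count)
```

In this toy model a color tweak at the top of the stack costs one cheap re-evaluation, while a change to the forest invalidates everything built on it, which is the behavior one would expect from any dependency-aware cache.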

The build we looked at was running in 64-bit on Linux. The program runs on Intel or AMD x86-64 CPUs with a minimum of 2 GB of RAM, though in practice this should normally be much higher. At release it will run on Windows XP Professional SP3, Mac OS X 10.6 (later in the year) or Linux Red Hat/CentOS 6, and it does need an OpenGL 2.0 compliant graphics card. While the program is not GPU-based, “we never closed any doors to GPU in the future,” explains Sam Assadian, Isotropix CEO and Founder, who spent several hours with fxguide going over the system.

So popular was the first technology showing that the company has revised its plans for a public beta and may instead go directly to a beta with a group of mid-to-large companies. The product was intended to be released as a public beta in mid-July, or certainly before SIGGRAPH, but fxguide believes that is no longer the case.

“Clarisse’s workflow is both very intuitive and powerful. Image quality is just greatly improved and in actual cases, CG artists’ productivity is heavily boosted up to 10 times!,” says Yann Couderc (Upside Down, Immortals, The Nest) a former VFX supervisor/lighting artist, now working for Isotropix.

Clarisse iFX is powered by a modern and robust fully multi-threaded evaluation engine, smart enough to avoid unnecessary re-computations when scene parameters are changed, and so capable that it automatically detects and eliminates redundant data to save memory. “As a former render TD, I easily recall the number of hours I’ve spent optimizing manually 3D scenes that used too much memory to be rendered. It’s amazing how many painful hours I could have saved if only we were working with Clarisse at the time,” adds Couderc.
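The article does not say how the engine detects redundant data. One plausible approach, shown here purely as an assumption, is content hashing: identical geometry buffers hash to the same digest and are stored once. The `GeometryPool` class below is hypothetical and is not part of the Clarisse SDK.

```python
import hashlib
import struct


class GeometryPool:
    """Stores each distinct vertex buffer once, keyed by a hash of its contents."""

    def __init__(self):
        self._by_digest = {}

    def intern(self, vertices):
        """Return the shared copy of `vertices`, adding it to the pool if new."""
        flat = [coord for vertex in vertices for coord in vertex]
        packed = struct.pack(f"{len(flat)}d", *flat)
        digest = hashlib.sha256(packed).hexdigest()
        if digest not in self._by_digest:
            self._by_digest[digest] = tuple(tuple(v) for v in vertices)
        return self._by_digest[digest]

    def unique_count(self):
        return len(self._by_digest)


pool = GeometryPool()

# Two assets that happen to contain the same triangle, loaded separately.
leaf_a = pool.intern([(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)])
leaf_b = pool.intern([(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)])
# A genuinely different piece of geometry.
petal = pool.intern([(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (0.0, 2.0, 0.0)])
```

After these calls `leaf_a` and `leaf_b` refer to the same stored buffer, which is the kind of saving a render TD would otherwise have to find by hand.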

Future

There are a few additional things to note: the color space models are limited to Linear or sRGB, and, while not working yet, the final shipping release will have point-based and ray-traced subsurface scattering (ray-traced will be released first, with point-based to follow). In terms of file interchange, Alembic and GoZ (ZBrush’s format) are not yet working, although they are also planned for release 1.0.

Of course, at some point any renderer will fail to be responsive. To help here, there is per-shading-group visibility, a variable noise rendering setting, and a decoupling in the renderer of geometry anti-aliasing from shading anti-aliasing. Only when the product is released or in public beta can one truly assess the interactivity in a serious production environment.

While the program we saw worked as one integrated unit, there is an SDK and it is worth wondering if down the track a version of this program – the non-UI backend (cnode or Clarisse node) – could not be made to work with a product like Katana. Rather than being in opposition to each other as some third party commentators and bloggers have suggested, it seems like the backend of Clarisse would make for a perfect Katana SDK plugin renderer. Katana ships with no shaders and any renderer can gain full SDK access to Katana, making the marriage of the two a tempting development to contemplate, but not one that either The Foundry or Isotropix have even hinted at.

SIGGRAPH 2012

You can see Clarisse iFX for yourself, as there will be a Tech Talk at SIGGRAPH in LA:

SIGGRAPH 2012 Tech Talk: Wednesday August 8th @ 3:45 pm

If you want a private demo, you will need to be lucky. Even before fxguide spoke to Assadian last week, he noted, “every slot was booked out there has been so much interest. Artists are telling us it is like looking 10 years into the future – they are so happy.” As a result, the company is trying to arrange additional demo slots.
