SIGGRAPH got fully underway today with a range of talks, technical papers, panels and seminars. Although the trade show only opens tomorrow, SIGGRAPH 2011 in Vancouver is off to a great start.

Pixar’s shading techniques for Cars 2

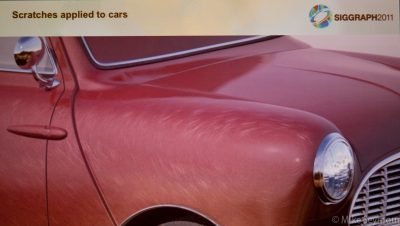

In one of a number of Pixar talks on Cars 2, artists Philip Child, Junyi Ling and Alex Seiden gave an insightful presentation on three different shading techniques used for the film’s vehicles. The first was a method that dealt efficiently with the multiple layers – paint, rust, dust and others – while also drawing on the assets built for the first film. In the end, Pixar saved 17% in time and a small amount of memory, with no change to the artist workflow. The second technique was a procedural metal-flake illumination model that approximates the complex properties of modern car paint, including different looks for paint up close and from further away. The third was a new method for rendering the circular scratches that seem to appear on car finishes, especially around specular highlights. Interestingly, Pixar had already attempted to replicate these kinds of scratches in the first Cars, and developed the approach further for the sequel. Ultimately, Pixar defined what they called the ‘specular gradient vector’ to make the scratches work.

Computer Animation Festival – Live action VFX

A fun part of SIGGRAPH is always the Computer Animation Festival, which now includes the Electronic Theater, various Festival Screenings, production sessions and of course the Festival Awards. In future fxguide articles we’ll be covering Moonbot Studios’ work for The Fantastic Flying Books of Mr. Morris Lessmore, which won the Best in Show Award, and Platige’s Paths of Hate, which won the Jury Award.

Today we got to see the Visual Effects for Live Action screenings, which were mostly making-of reels from some of the top houses. Stand-outs included reels for Transformers: Dark of the Moon and On Stranger Tides (ILM), Battle: Los Angeles (Cinesite), Green Lantern (Imageworks) and Framestore’s breakdown for their Tale of The Brothers animation in Harry Potter and the Deathly Hallows: Part 1. The Electronic Theater has a screening on each day of the conference – definitely recommended.

Nvidia Panel

Fxguide’s own Mike Seymour moderated a panel for Nvidia on the use of GPUs in VFX production. The panel explored the ways in which companies are using GPUs both for artist interaction and for large-scale render speed improvements, as well as issues such as maintaining two code bases. The senior CTOs and production professionals on the panel pulled no punches in discussing when GPUs worked, and the issues that arise at the absolute cutting edge of vast GPU farms and massive simulation parallelization algorithms.

On the panel were:

– Dan Bailey, DNeg

– Hugo Ayala, Blue Sky

– Magnus Wrenninge, SPI

– Olivier Maury, ILM

– Sebastian Sylwan, WETA Digital

This panel was recorded and can be found in the following story: /quicktakes/nvidia-gpu-panel-special-bonus-audio-recording/

Also open was the Studio with some great interactive booths.

The visual effects of Thor and Captain America

Marvel presented a great panel talk with no fewer than 10 speakers representing the visual effects artists behind Thor and Captain America. These included the films’ co-producer Victoria Alonso, vfx supes Wesley Sewell and Christopher Townsend, and reps from Digital Domain, Luma Pictures, Double Negative, Whiskytree and Lola VFX. Like many of SIGGRAPH’s film VFX presentations, the panel featured a number of before-and-after reels of the work from both films, things you probably won’t see anywhere else, not even on the DVD.

For more information about Captain America, check out our in-depth articles on Lola’s ‘Skinny Steve’ VFX and our new piece covering the many other shops that contributed to the film.

Open standards and the need for standardization

This afternoon also saw a key panel on industry open standards and the need for standardization, moderated by Sam Richards of Sony Pictures Imageworks (SPI) and featuring Hannes Ricklefs (The Moving Picture Company), Ray Feeney (RFX), Rob Bredow (SPI), Steve Cronan (5th Kind), Ryan Mayeda (DD) and Tommy Burnette (Lucasfilm Singapore).

This panel highlighted some of the open-source projects that are helping effects companies share data, and explored areas for future collaboration both on set and in post production. For example, the panel pointed out how completely inadequate camera sheets can often be, sometimes even arriving as handwritten faxes. In most cases, production companies need to set up a hub to ingest data from sets. Companies such as 5th Kind are working actively to promote database and even tablet-based approaches, but on most productions there is virtually no standardization or even agreement on naming conventions. A standard framework for information exchange could therefore provide huge efficiencies for both production companies and vendors.

The panel explored options for sharing assets such as plates, models, and textures. Most films today involve multiple vendors. As Rob Bredow, chief technology officer at Sony Pictures Imageworks (SPI), explained, “we (SPI) touched about 12 films in the last year, and about 8 of those we were the lead facility, and I can’t think of one of those films that did not involve collaborating with other facilities.”

Ray Feeney, co-chair of AMPAS’ Science and Technology Council and one of the SciTech industry leaders (winner of the Academy’s Gordon E. Sawyer Award), also discussed ACES/IIF, the color space and color management program that the Academy has been working on for several years.

Also discussed during the panel was Alembic, an open-source initiative for exchanging baked geometry that SPI and ILM co-developed and that we covered at SIGGRAPH last year. Alembic is very close to its version 1.0 release and has already been used in production by ILM and SPI.

More on this tomorrow following the formal Tuesday press event.

Disney’s stereoscopic 3D conversions for The Lion King

Today’s Changing Dimension talk included a discussion from Walt Disney Animation Studios on the stereo conversion of The Lion King and Beauty and the Beast. The tools Disney used had to create new depth in the traditionally animated 2D films without the use of geometric models. The process included significant roto on the scenes and characters, with a number of individual techniques to provide the depth. One was ‘character inflation’, which gave a base roundness; gradient primitives were then used to augment and sculpt depth within the images. Depth also needed to be added to ‘effects’ such as dust, and here the textural detail came in useful to give the final stereo look. Disney also developed tools to validate the dimensionalization results.

Foundry User Group: GeekFest

We finished off the day at The Foundry’s annual GeekFest, this year held at District 319 in Vancouver. First off the rank was Brandon Fayette, CG Supervisor and Production Lead at Bad Robot Productions, who demonstrated how he used the Denoise tool in NUKE 6.3 on noisy plates for Super 8. He also discussed using MARI to quickly create some terrain to make one of the shots work.

New versions of NUKE and MARI are in the works at The Foundry and they gave some insight into what they are planning for future releases. Jack Greasley, product manager for MARI, gave an update on features coming up in version 1.4 which is due before the end of the year.

– PSD support: MARI 1.4 will allow you to load layered PSD documents, make modifications, and save back out

– A C API to allow third-party coding for image formats, model formats and more

– OpenColorIO support

– Planned ATI support for Radeon and FireGL cards (subject to change)

– Triplanar projection support in real time on the GPU

– Refined and improved camera controls

Greasley also gave some thoughts as to where Mari might possibly go moving forward. They are definitely exploring the idea of turning Mari into a more general high end, layered, image editing and painting program that is targeted towards the vfx and cgi pipeline. He specifically talked about Mari being a program that could paint not just on 3D models, but also on a 2D canvas in HDR. Could be interesting…

NUKE Product Manager Jon Wadelton gave a preview of new features expected in version 6.5, the next major release from The Foundry.

– Roto and Paint core update: some of the core code is being rewritten to make it faster in situations with many keyframes and on larger screens. Stereoscopic roto and paint are also being improved, with easier ways to offset roto between eyes.

– Upgrades to Primatte version 5.0 and Image Modeller 2.0

– Improvements to copying and pasting keyframes from one time to another in the dope sheet and curve editor

– Alembic and OpenColorIO support

– RAM playback

– Most excitingly, the first ‘blink’ (GPU) nodes for NUKE: Denoise, Kronos and Motion Blur

Jeremy Selan from SPI and The Foundry’s Andy Lomas discussed Katana, which is still in alpha but will soon go to beta and will likely be fully released this year. The pair took us through the films that have used the look dev and lighting tool, from some of the earliest at Imageworks (where Katana was originally developed) through to The Smurfs.

Weta Digital’s Peter Hillman and NUKE ‘legend’ Frank Rueter presented on Deep Image Compositing for Rise of the Planet of the Apes. This was a fascinating talk on the history of deep compositing at Weta, starting with the swarm attack on a truck in The Day The Earth Stood Still, then on Avatar and the King Kong 360 ride film. Weta’s deep compositing tech is being incorporated into the OpenEXR 2.0 release.

For Apes, a live action film, Weta originally thought deep compositing would not be as useful as on, say, Avatar, where complicated jungles and creatures interacted. But it turned out that for shots of the digital apes fighting humans (which involved legs crossing), and in other complicated shots like the bridge attack and helicopter crash, deep compositing was still the best option.
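
To make the crossing-legs case concrete, here is a minimal sketch of the general idea behind deep compositing. It is not Weta’s implementation or the OpenEXR 2.0 API: the `DeepSample` type and the `merge_deep` and `flatten` functions are hypothetical names, and the sketch assumes premultiplied colour and point samples with a single depth each (no volumetric samples). Because every sample carries its own depth, two separately rendered elements can be interleaved per pixel without holdout mattes.

```python
from dataclasses import dataclass
from typing import List, Tuple

Color = Tuple[float, float, float]


@dataclass(frozen=True)
class DeepSample:
    """One sample in a deep pixel (hypothetical type for illustration)."""
    depth: float   # distance from camera
    rgb: Color     # premultiplied colour (assumption)
    alpha: float   # coverage/opacity in [0, 1]


def merge_deep(a: List[DeepSample], b: List[DeepSample]) -> List[DeepSample]:
    """Combine two deep pixels by interleaving their samples in depth order."""
    return sorted(a + b, key=lambda s: s.depth)


def flatten(samples: List[DeepSample]) -> Tuple[Color, float]:
    """Collapse a deep pixel to flat RGBA with a front-to-back 'over'."""
    r = g = b = alpha = 0.0
    for s in sorted(samples, key=lambda s: s.depth):
        remaining = 1.0 - alpha          # how much of the pixel is still visible
        r += s.rgb[0] * remaining
        g += s.rgb[1] * remaining
        b += s.rgb[2] * remaining
        alpha += s.alpha * remaining
    return (r, g, b), alpha


# An opaque red 'ape' sample sits between two 'human' samples in depth,
# which a single flat A-over-B composite could not represent.
ape = [DeepSample(2.0, (1.0, 0.0, 0.0), 1.0)]
human = [
    DeepSample(1.0, (0.0, 0.5, 0.0), 0.5),   # semi-transparent, in front
    DeepSample(3.0, (0.0, 0.0, 1.0), 1.0),   # opaque, behind the ape
]
print(flatten(merge_deep(ape, human)))  # ((0.5, 0.5, 0.0), 1.0)
```

The front human sample tints the ape, and the rear human sample is hidden behind it, with the ordering resolved per sample rather than per layer.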

Keep a look out on fxguidetv for an interview with Weta’s Peter Hillman on the deep compositing work in Apes.
