The long-awaited reveal of the Magic Leap One: Creator Edition capped off a milestone year for Augmented Reality and Mixed Reality. fxguide takes a snapshot of the AR & MR landscape and the implications for experience design.

Broad Visions

In 2017, Google’s ARCore and Apple’s ARKit put markerless tracking into handheld mobile devices. Microsoft’s Mixed Reality platform (formerly Windows Holographic) was released as a standard component of Windows 10. The vision of seamless AR across mainstream mobile devices is getting closer, but we are not quite there yet. While AR/MR vendors continue to think big, the terminology remains confusing, with broad and sometimes overlapping definitions ready to trap the uninitiated.

Representing the latest generation of mobile AR, both Google’s ARCore and Apple’s ARKit build a dynamic model of the physical world around the device by combining visual (camera) and inertial (motion sensor) data, though they use different algorithms to do so. ARKit’s technique, Visual Inertial Odometry (VIO), relies on low-level integration between camera data and Core Motion data from the onboard sensors (accelerometer and gyroscope). ARCore builds on Google’s first mobile AR effort, Project Tango. It uses Concurrent Odometry and Mapping (COM) to track a wider variety of planes (e.g. ramps, vertical walls) than ARKit, which only tracks horizontal planes.
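VIO and COM are far more involved than anything that fits here (feature tracking, mapping, and tightly coupled state estimation), but the basic intuition behind visual-inertial fusion can be illustrated with a deliberately simplified stand-in: a one-dimensional complementary filter. Integrated gyroscope readings give fast, smooth updates that drift as sensor bias accumulates, while a slower camera-derived estimate does not drift and pulls the result back. The function name, the blend factor `alpha`, and the sample data are illustrative assumptions; this is not the algorithm used by either ARKit or ARCore.

```python
from typing import List, Optional, Sequence


def fuse_yaw(
    gyro_rates: Sequence[float],
    visual_yaws: Sequence[Optional[float]],
    dt: float,
    alpha: float = 0.98,
    initial_yaw: float = 0.0,
) -> List[float]:
    """Toy 1D complementary filter: an illustration of fusion, not VIO.

    gyro_rates  -- angular velocity samples in degrees per second
    visual_yaws -- camera-derived yaw in degrees, or None when there is no visual fix
    dt          -- time between samples in seconds
    alpha       -- weight given to the integrated inertial prediction
    """
    yaw = initial_yaw
    history: List[float] = []
    for rate, visual in zip(gyro_rates, visual_yaws):
        # Inertial prediction: fast and smooth, but any gyro bias accumulates as drift.
        predicted = yaw + rate * dt
        if visual is None:
            yaw = predicted
        else:
            # Visual correction: nudge toward the drift-free camera estimate.
            yaw = alpha * predicted + (1.0 - alpha) * visual
        history.append(yaw)
    return history


if __name__ == "__main__":
    # Simulated stationary device: true yaw is 0, but the gyro has a 0.5 deg/s bias.
    steps, dt = 1000, 0.01
    biased_gyro = [0.5] * steps
    gyro_only = fuse_yaw(biased_gyro, [None] * steps, dt)
    fused = fuse_yaw(biased_gyro, [0.0] * steps, dt)
    print(f"gyro only: {gyro_only[-1]:.2f} deg, fused: {fused[-1]:.2f} deg")
```

With the simulated bias, the gyro-only estimate ends up about 5° off after ten seconds, while the fused estimate stays within a fraction of a degree of the true value. Real VIO estimates a full six-degrees-of-freedom pose and models sensor noise explicitly rather than relying on a fixed blend.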

Prior to release, much of the speculation about iOS 11 and ARKit suggested the inclusion of a tracking technology known as Simultaneous Localization and Mapping (SLAM), a broad set of problems and algorithms defined by the robotics community, some of which leverage 3D point cloud data provided by depth cameras. However, the first release of ARKit (followed swiftly by the release of ARCore) provided a more lightweight platform that does not require depth sensors. Simpler tracking without SLAM opened up ARKit compatibility to older devices such as the iPhone 6S from 2015 (but, unfortunately, not to the iPad Mini 4 from the same year). ARCore compatibility is currently limited to Google Pixel, Pixel XL, Pixel 2, and Samsung Galaxy S8 devices.

Apple’s iPhone X introduced a depth camera (TrueDepth), but one designed for facial AR tracking rather than general-purpose location tracking. The TrueDepth system projects 30,000 infrared dots onto the user’s face to track facial poses and expressions. Importantly, the TrueDepth technology in the iPhone X is built only into the front-facing camera, not the rear one.

Without much fanfare, older AR tracking technologies such as geo-location and image recognition (i.e. recognition of tracking markers) continue to be useful, even if Apple and Google’s rhetoric suggests that they have been superseded by ARKit and ARCore. From a designer’s perspective, vendor lock-in remains an issue for any non-trivial use case that spans both image recognition and markerless tracking.

Imagine a scenario where you point your mobile device at a specific real-world object (say, a movie poster or a toy) so that it is recognized as a marker, triggering a location-specific experience that continues to be tracked seamlessly as you walk away from the marker. Neither ARKit nor ARCore is sufficient to make this work without additional components, focused as they are on markerless tracking.
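Conceptually, the glue in that scenario is a change of coordinate frames: once the marker is recognized, its pose in the tracking session’s world frame is known, and content can be placed at a fixed offset from it; markerless tracking then keeps that world frame stable as the user walks away. The sketch below shows only that pose arithmetic, using 4×4 homogeneous transforms in plain Python. The function names and example poses are hypothetical, and no ARKit, ARCore, or Vuforia API is implied.

```python
import math
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Multiply two 4x4 matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)] for i in range(4)]


def pose(yaw_deg: float, x: float, y: float, z: float) -> Matrix:
    """Rigid transform: rotation about the vertical (y) axis plus a translation."""
    c = math.cos(math.radians(yaw_deg))
    s = math.sin(math.radians(yaw_deg))
    return [
        [c, 0.0, s, x],
        [0.0, 1.0, 0.0, y],
        [-s, 0.0, c, z],
        [0.0, 0.0, 0.0, 1.0],
    ]


def place_content(world_from_marker: Matrix, marker_from_content: Matrix) -> Matrix:
    """World pose of content authored relative to a recognized marker.

    world_from_marker   -- marker pose reported once by image recognition (hypothetical input)
    marker_from_content -- designer-chosen offset, e.g. 1 m in front of the poster
    The result is expressed in world coordinates, so markerless tracking can keep
    the content registered after the marker leaves the camera view.
    """
    return matmul(world_from_marker, marker_from_content)


if __name__ == "__main__":
    # Hypothetical: poster recognized at (2, 1.5, -3), rotated 90 degrees; content 1 m off its face.
    marker = pose(90.0, 2.0, 1.5, -3.0)
    offset = pose(0.0, 0.0, 0.0, 1.0)
    content = place_content(marker, offset)
    print([round(row[3], 3) for row in content[:3]])
```

In a real app, the computed pose would typically be handed to the markerless tracker as a world anchor, which is exactly the hand-off between image recognition and markerless tracking that the platforms do not yet provide out of the box.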

The recent Vuforia 7 release aims to help developers deal with this AR fragmentation by providing AR cloud services and a single API (Vuforia Fusion) that spans more than 100 Android and iOS device models. The Vuforia Ground Plane feature provides limited horizontal plane tracking on older devices that are not ARKit or ARCore compatible. An exclusive partnership with Unity means that Unreal developers are not supported.

The picture is no clearer with the term “Mixed Reality”, as different vendors compete to define the concept in terms of the evolving capabilities of their platforms. Microsoft’s launch of HoloLens in 2016 set the benchmark for MR, albeit at a price point suitable only for enterprise use (>US$3,000). At the time, you could describe Microsoft’s MR approach as a sort of “AR 2.0” that leveraged both camera and inertial motion data. That line was blurred by the 2017 release of a range of “Windows Mixed Reality Headsets” that offered no AR functionality at all. “Mixed reality filming” muddies the term further: compositing techniques first developed to produce third-person videos of VR experiences have since advanced in film/TV production without the need for depth sensing or odometry.

Design Guidelines

Looking through the latest guidelines provided by vendors, you can glean some additional clues about the current state of AR/MR.

From the Apple ARKit guidelines site:

- Use the entire display

- Be mindful of user comfort and safety

- Introduce motion gradually

- Consider whether user-initiated object scaling is necessary

- Avoid trying to precisely align objects with the edges of detected surfaces

- In general, keep interactions simple

Apple is arguably leading the way towards mainstream AR adoption. A focus on solid horizontal plane tracking (e.g. ground planes) appears designed to ensure your first AR experience works. Apple acquired Metaio, Vuforia’s main competitor in the AR space, in 2015, and ARKit is now one of the most accessible markerless tracking technologies.

The Google Mobile AR best practices blog provides useful tips on designing for one-handed use (the other hand holds the device) and on the environmental considerations of the “experience space”. ARCore’s support for detecting multiple planes (not just a horizontal ground plane) lies behind its recommendation to create “visual affordances” and cues that manage users’ expectations about where digital objects can be placed.
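One way an app might decide which placement affordance to show is to classify each detected plane by the angle between its normal and the world’s up direction. The sketch assumes a y-up coordinate system and an illustrative 10° tolerance; the category labels and thresholds are hypothetical and do not come from ARCore, which reports its own plane types.

```python
import math
from typing import Tuple

Vector = Tuple[float, float, float]
UP: Vector = (0.0, 1.0, 0.0)  # assumption: y-up world coordinates


def tilt_degrees(normal: Vector) -> float:
    """Angle between a plane's normal and the world up vector, in degrees."""
    length = math.sqrt(sum(c * c for c in normal))
    if length == 0.0:
        raise ValueError("plane normal must be non-zero")
    cos_angle = sum(n * u for n, u in zip(normal, UP)) / length
    # Clamp to guard against rounding pushing the value just outside [-1, 1].
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_angle))))


def classify_plane(normal: Vector, tolerance_deg: float = 10.0) -> str:
    """Map a plane normal to a coarse placement category (labels are hypothetical)."""
    tilt = tilt_degrees(normal)
    if tilt <= tolerance_deg:
        return "floor_or_table"  # upward-facing: objects can rest on it
    if tilt >= 180.0 - tolerance_deg:
        return "ceiling"  # downward-facing
    if abs(tilt - 90.0) <= tolerance_deg:
        return "wall"  # vertical: posters, screens, doors
    return "ramp"  # sloped surface: placement may need special handling
```

An app could then show, for example, a “drop here” cue only on `floor_or_table` planes and a “hang here” cue on `wall` planes, so users are not invited to place objects where they cannot sensibly go.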

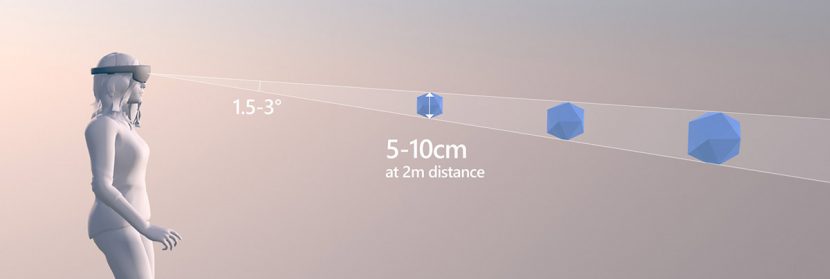

The Microsoft Mixed Reality design guidelines provide useful advice on sizing and placing targets on devices with a limited field of view, such as HoloLens. According to Microsoft, users often fail to find UI elements positioned very high or very low in their field of view.
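Head-mounted display guidelines commonly express target size as a visual angle rather than in pixels, because the same hologram looks smaller the farther away it is placed. The physical size s that subtends an angle θ at distance d is s = 2d·tan(θ/2). The helpers below convert in both directions; the 2° target and the distances are illustrative values only, not figures taken from Microsoft’s guidelines.

```python
import math


def size_for_angle(angle_deg: float, distance_m: float) -> float:
    """Physical size (metres) that subtends angle_deg at distance_m."""
    return 2.0 * distance_m * math.tan(math.radians(angle_deg) / 2.0)


def angle_for_size(size_m: float, distance_m: float) -> float:
    """Visual angle (degrees) subtended by an object of size_m at distance_m."""
    return math.degrees(2.0 * math.atan(size_m / (2.0 * distance_m)))


if __name__ == "__main__":
    # A button meant to subtend 2 degrees has to grow as it is placed farther away.
    for d in (1.0, 2.0, 4.0):
        print(f"{d} m -> {size_for_angle(2.0, d) * 100:.1f} cm")
```

At 1 m, a 2° target is about 3.5 cm across, and its required size doubles with each doubling of distance, which is why a fixed-pixel mindset carried over from flat screens does not transfer well to head-mounted displays.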

Microsoft also provides guidelines for gaze tracking, which is most commonly implemented as head tracking (i.e. the eyes themselves are not tracked, only the headset orientation), although true eye tracking is increasingly available. Pupil Labs sells clip-on eye trackers for VR and MR devices, while Magic Leap’s system includes eye tracking.

“The gaze vector has been shown repeatedly to be usable for fine targeting, but often works best for gross targeting (acquiring somewhat larger targets).”
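The trade-off in that quote shows up in a common way of implementing gaze selection: cast a ray from the head (or eye) pose and pick the target whose centre lies angularly closest to it, within a small cone. A generous cone forgives small wobbles of the head, which suits larger, well-separated targets; a tight cone allows finer targeting but demands steadier aim. The function names and the 3° default below are hypothetical illustration, not Microsoft’s implementation.

```python
import math
from typing import Dict, Optional, Tuple

Vector = Tuple[float, float, float]


def _angle_deg(u: Vector, v: Vector) -> float:
    """Angle between two non-zero vectors, in degrees."""
    dot = sum(a * b for a, b in zip(u, v))
    norms = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return math.degrees(math.acos(max(-1.0, min(1.0, dot / norms))))


def gaze_target(
    origin: Vector,
    direction: Vector,
    targets: Dict[str, Vector],
    cone_deg: float = 3.0,
) -> Optional[str]:
    """Return the target whose centre is angularly closest to the gaze ray.

    Only targets inside a cone of half-angle cone_deg around the ray qualify.
    origin and direction would come from head pose (or eye tracking, if available).
    """
    if not any(direction):
        raise ValueError("gaze direction must be non-zero")
    best_name: Optional[str] = None
    best_angle = cone_deg
    for name, centre in targets.items():
        to_target = tuple(c - o for c, o in zip(centre, origin))
        if not any(to_target):
            continue  # target sits exactly at the eye: no meaningful direction
        angle = _angle_deg(direction, to_target)
        if angle <= best_angle:
            best_name, best_angle = name, angle
    return best_name


if __name__ == "__main__":
    buttons = {"small_near_centre": (0.05, 0.0, 2.0), "off_to_side": (0.5, 0.0, 2.0)}
    head, forward = (0.0, 0.0, 0.0), (0.0, 0.0, 1.0)
    print(gaze_target(head, forward, buttons))                # generous cone
    print(gaze_target(head, forward, buttons, cone_deg=1.0))  # tight cone
```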

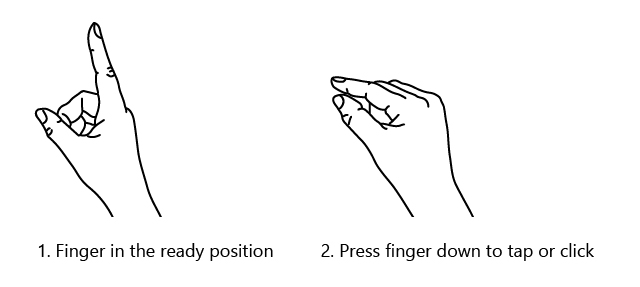

HoloLens recognizes a number of core gestures at the system level, and there are published techniques for combining individual taps into composite gestures.
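A simple example of composing taps is recognizing a double tap: two taps count as one composite gesture when the second arrives within a short window after the first. The 0.3 s window and the function below are hypothetical choices for illustration; on HoloLens, tap events themselves arrive through the platform’s own gesture-recognition APIs.

```python
from typing import List, Sequence


def group_taps(timestamps: Sequence[float], window_s: float = 0.3) -> List[str]:
    """Turn sorted tap times (in seconds) into 'tap' and 'double_tap' events."""
    events: List[str] = []
    i = 0
    while i < len(timestamps):
        nxt = i + 1
        if nxt < len(timestamps) and timestamps[nxt] - timestamps[i] <= window_s:
            events.append("double_tap")
            i += 2  # both taps are consumed by the composite gesture
        else:
            events.append("tap")
            i += 1
    return events


if __name__ == "__main__":
    print(group_taps([0.0, 0.2, 1.0, 2.0, 2.25]))
```

Working on a recorded list keeps the sketch simple; a live recognizer would also have to wait for the window to expire before reporting a single tap (or report it provisionally), which is the latency cost of supporting composite gestures.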

Apps

On any given day, it is estimated that 60 million emojis are used on Facebook and another 5 billion on Messenger. This reflects a desire to add emotional context to our communications. AR combined with facial animation derived directly from users’ own facial expressions offers an exciting path forward for app developers. Several apps are currently under development, such as AR Avatar Director from Embody Digital. This soon-to-be-released app allows an iPhone X user to input not only their voice and tracked facial expressions but also to edit their own dialogue, which is automatically converted to text. The app then provides a cartoon AR avatar that can be both repositioned in the room and customized. Beyond facial animation, AR Avatar Director uses voice stress and text analysis, together with the user’s edits to that text/script, to generate plausible body motion, gestures, and movement.

This app and several others will be explored in our follow-up look at AR applications here at fxguide in January.

Next Steps

2018 looks set to be a year of AR innovation in small, incremental steps, along with some potentially huge leaps.

As many more people come into contact with AR experiences through ARCore- and ARKit-supported devices, there will be ample opportunity to test new AR functionality that is only possible on newer devices and to combine it with tried-and-tested older forms (geo-location, image registration). With support from third-party real-time engines such as Unity and Unreal, AR features can be rolled out in the context of larger builds. Artillry.co estimates that ARCore will reach 3.6 billion phones by 2020.

Magic Leap’s recent announcement, together with an exclusive interview with Rolling Stone magazine, puts the Magic Leap One: Creator Edition on many wishlists after years of speculation. With only sparse details at hand, the company’s “digital light field” technology could be a game-changer on several fronts. The company claims to have developed a process through which, using so-called “photonic wafers”, the brain accepts digital light field information alongside the analog light field (the intensity of light in a scene, and also the direction that the light rays are traveling in space). It has been suggested that, if this approach proves successful, it will do away with traditional stereoscopy, the mainstay of all current HMDs that provide a 3D experience.
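For reference, the “analog light field” has a standard definition in computer graphics: the plenoptic function gives the radiance L arriving at every point in space from every direction (ignoring wavelength and time). Because radiance is constant along a ray in empty space, one of those five dimensions is redundant, and the light field is commonly written in four dimensions, for example with a two-plane parameterization. This is textbook background only; how Magic Leap’s “digital light field” encodes or reproduces it has not been disclosed.

```latex
\begin{aligned}
&L(x, y, z, \theta, \phi) && \text{5D plenoptic function: radiance at point } (x, y, z) \text{ in direction } (\theta, \phi)\\
&L(u, v, s, t) && \text{4D light field: the ray crossing } (u, v) \text{ on one plane and } (s, t) \text{ on a parallel plane}
\end{aligned}
```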

From the Rolling Stone story:

The Magic Leap One: Creator Edition system comprises Lightwear (the goggles), Lightpack (a pocket-sized computer with specs comparable to a MacBook Pro), and Controller (the handheld remote). The goggles will come in two sizes, with a forehead pad, nose pieces, and temple pads that can all be customized to tweak the comfort and fit.

Cost-wise, it will be priced similarly to a high-end laptop.

“By the time they launch, the company will also take prescription details to build into the lenses for those who typically wear glasses.”

The Magic Leap One (MLO) is described both as a spatial computer and as a wearable computer. The Lightwear goggles have an impressive array of hardware to tap into: at least six built-in cameras, four microphones, and speakers.

“The demonstrations I went through didn’t really present an opportunity to see if the goggles could do that [support multiple focal planes] effectively.

“The exact capabilities of the Magic Leap light field system remain a closely guarded secret but it seems unlikely that the capability to shift ocular focus within an experience would have been omitted if available.

“The viewing space is about the size of a VHS tape held in front of you with your arms half extended. It’s much larger than the HoloLens, but it’s still there.”
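The VHS-tape comparison can be turned into a rough angular field of view with θ = 2·arctan(w / 2d). The cassette dimensions (about 187 × 103 mm) and an eye-to-tape distance of roughly 0.35 m for “arms half extended” are assumptions, so the result is a ballpark estimate rather than a specification:

```latex
\theta_h = 2\arctan\!\left(\frac{0.187\,\text{m}}{2 \times 0.35\,\text{m}}\right) \approx 2 \times 15.0^\circ \approx 30^\circ,
\qquad
\theta_v = 2\arctan\!\left(\frac{0.103\,\text{m}}{2 \times 0.35\,\text{m}}\right) \approx 2 \times 8.4^\circ \approx 17^\circ
```

Moving the imagined tape closer or farther shifts these figures noticeably, so the comparison is best read as “a window of a few tens of degrees” rather than as a precise number.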

Designers have been given notice that they will need to continue working with limited real estate to present characters, props, and data. As with HoloLens, the focus will be on hero elements positioned directly in front of the user (in the viewing space) rather than on immersive environments. When HoloLens was first released, its field of view was flagged as a significant issue, but the product has since found its place in enterprise applications regardless.

“We’re using the meshing of the room, we’re using eye tracking, and you’re going to use gesture, our input system for most of the experience.”

While we wait for AR/MR headsets with a broader field of view, HoloLens continues to lead the field in hands-free interaction and gesture recognition for MR.

For budget-conscious designers, one alternative to owning a HoloLens or Magic Leap One (or the similarly priced high-end headsets still to come) is to simulate these devices with a pass-through AR experience.

This is the premise of the upcoming ZED Mini from StereoLabs, a stereo camera with a 110° field of view that will ship with a mount for the Vive or Rift. The ZED Mini’s functionality is already available in the larger ZED, a bolt-on depth camera for spatial computing applications. By using a “fake” real-world view inside VR (provided by a video feed), designers can use pass-through AR to test the different fields of view offered by different devices.
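Emulating a narrower headset inside a wider pass-through view amounts to masking or cropping the video feed. For an ideal, rectified (undistorted) pinhole image, the visible width scales with tan(FOV/2), so the fraction of the image to keep is tan(target/2) / tan(source/2). The sketch treats the quoted 110° as a horizontal figure and ignores lens distortion, both simplifying assumptions; the 30° target and 1280-pixel width are arbitrary examples, not published specs for any device.

```python
import math
from typing import Tuple


def crop_fraction(source_fov_deg: float, target_fov_deg: float) -> float:
    """Fraction of a rectified pinhole image's width that spans target_fov_deg."""
    if not 0.0 < target_fov_deg <= source_fov_deg < 180.0:
        raise ValueError("expected 0 < target <= source < 180 degrees")
    half_target = math.radians(target_fov_deg) / 2.0
    half_source = math.radians(source_fov_deg) / 2.0
    # Image-plane width grows with tan(half-angle), not linearly with the angle.
    return math.tan(half_target) / math.tan(half_source)


def crop_window(image_width_px: int, source_fov_deg: float, target_fov_deg: float) -> Tuple[int, int]:
    """Centred [left, right) pixel columns that emulate the narrower view."""
    keep = round(image_width_px * crop_fraction(source_fov_deg, target_fov_deg))
    left = (image_width_px - keep) // 2
    return left, left + keep


if __name__ == "__main__":
    # Example only: emulate a hypothetical 30-degree-wide display in a 1280-px, 110-degree feed.
    print(crop_window(1280, 110.0, 30.0))
```

The tangent ratio (about 19% of the width here) is noticeably smaller than the naive linear ratio 30/110 (about 27%), because in a rectilinear image the outer degrees of a wide field of view occupy disproportionately more pixels than the central ones.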

Last but not least, open web standards for AR/MR continue to mature around an open-source JavaScript API. Recently rebadged as WebXR with the release of an iOS app, the Mozilla-led effort to bring mixed reality to the web means that in 2018 we can expect to see growing support for AR web experience creators.