In 2005 a small group of engineers, via a cold call and a chance encounter at Burning Man, landed at LucasArts building finite element analysis for a Star Wars franchise game, with two years' work to do in six months. Today their software is used in everything from iPads to major feature films.

Finite Element Analysis (FEA) is not new. In fact, it is a mainstay of serious engineering, but until Pixelux the idea of running it at anything like the speeds required for gaming was unheard of. The technique is literally used to model the stresses on nuclear power plants, and these guys wanted to use it to bounce Imperial Stormtroopers off the digital walls of the LucasArts cafeteria. They succeeded: their Digital Molecular Matter, or DMM, engine is now the leading FEA physics engine in the entertainment industry and sits at the heart of MPC’s Kali destruction pipeline, most recently used for the ship crash shots in Prometheus.

And while DMM software now spans iPads to major feature films, few people know the story behind this rising star of simulation software.

DMM is a physical simulation system that models the material properties of objects, allowing them to break and bend in accordance with the stresses placed on them. Using DMM’s ‘tet’-based (tetrahedral) system, objects made of glass, steel, stone and other real materials can be created and simulated in real time. The system accomplishes this by running a finite element simulation that computes how those materials would actually behave.
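To make the ‘tet’ idea concrete, here is a minimal sketch of the textbook math a linear (constant-strain) tetrahedral element evaluates: the deformation gradient F = Ds·Dm⁻¹, which maps a tet’s rest-shape edge vectors onto its current edge vectors, and the Green strain E = ½(FᵀF − I) that a material model would turn into stress. This is a generic formulation assumed for illustration, not Pixelux’s code; the helper names are hypothetical.

```python
# Sketch: strain in one linear (constant-strain) tetrahedral element.
# Assumption: textbook linear-tet FEM math, not Pixelux's actual DMM code.

def edge_matrix(p0, p1, p2, p3):
    """3x3 matrix whose columns are the edge vectors p1-p0, p2-p0, p3-p0."""
    cols = [[p[i] - p0[i] for i in range(3)] for p in (p1, p2, p3)]
    return [[cols[c][r] for c in range(3)] for r in range(3)]

def det3(m):
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))

def inv3(m):
    """Inverse via the adjugate divided by the determinant."""
    d = det3(m)
    if abs(d) < 1e-12:
        raise ValueError("degenerate (zero-volume) tetrahedron")
    return [
        [(m[1][1] * m[2][2] - m[1][2] * m[2][1]) / d,
         (m[0][2] * m[2][1] - m[0][1] * m[2][2]) / d,
         (m[0][1] * m[1][2] - m[0][2] * m[1][1]) / d],
        [(m[1][2] * m[2][0] - m[1][0] * m[2][2]) / d,
         (m[0][0] * m[2][2] - m[0][2] * m[2][0]) / d,
         (m[0][2] * m[1][0] - m[0][0] * m[1][2]) / d],
        [(m[1][0] * m[2][1] - m[1][1] * m[2][0]) / d,
         (m[0][1] * m[2][0] - m[0][0] * m[2][1]) / d,
         (m[0][0] * m[1][1] - m[0][1] * m[1][0]) / d],
    ]

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)] for i in range(3)]

def transpose(m):
    return [[m[j][i] for j in range(3)] for i in range(3)]

def rest_volume(rest):
    """Volume of the undeformed tet: |det(Dm)| / 6."""
    return abs(det3(edge_matrix(*rest))) / 6.0

def deformation_gradient(rest, deformed):
    """F = Ds * Dm^-1 maps rest-shape edges onto deformed edges."""
    return matmul(edge_matrix(*deformed), inv3(edge_matrix(*rest)))

def green_strain(F):
    """E = 1/2 (F^T F - I); zero for any rigid translation or rotation."""
    C = matmul(transpose(F), F)
    return [[0.5 * (C[i][j] - (1.0 if i == j else 0.0)) for j in range(3)] for i in range(3)]
```

Stretching the unit tet by 10% along x gives a strain component E_xx of about 0.105, while a pure translation or rotation gives zero strain. That is why a strain-based material model reacts only to real stretching, squashing or shearing.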

So what does DMM do? It allows for very realistic and often very fast simulation, specifically destruction simulation. For example, the video below shows a wine bottle breaking using the DMM plugin for Maya combined with a fluid sim from Naiad and rendered in V-Ray, by Paolo Copponi, a freelance LA artist.

The video shows a one-way coupled Naiad simulation: the DMM sim was done first and the Naiad water added afterwards, all rendered in V-Ray. Copponi spent approximately seven hours on the particle simulation and eight hours on the mesh simulation on his PC. The video shows roughly two million particles at sim level before polygonalization.

DMM software has been used in many feature films already, including:

Avatar (2009) – Trees breaking, helicopters smashing: Weta Digital

Sucker Punch (2011) – Stone floor and statues, wooden pillars, building exterior in the Temple Scene: MPC

Source Code (2011) – Train hitting the brick wall: MPC

X-Men: First Class (2011) – Chain destruction of luxury yacht: MPC

Sherlock Holmes: A Game of Shadows (2011) – Tower collapsing, tree shatter, wall getting smashed: MPC

Harry Potter and the Deathly Hallows – Pt 2 (2011) – Stone knights: MPC

Mission: Impossible – Ghost Protocol (2011) – Shattering glass in server room: Fuel VFX

Wrath of the Titans (2012) – Kronos effects: MPC

Mirror Mirror (2012) – The Queen’s Cottage destruction segment: Prime Focus

Prometheus (2012) – The ships crash: MPC

…and more, plus now commercials, a host of games and even iPad applications.

We interviewed Vik Sohal, COO at Pixelux Entertainment

fxguide first came across the work of Pixelux’s Vik Sohal and the great team behind DMM through the work of MPC, but for many the first encounter was Pixelux’s breakthrough SIGGRAPH paper claiming FEA at game speeds. At the time of that paper we certainly discounted the software, as FEA was not close to being used in non-realtime rendering, let alone games. FEA in a game engine seemed, well, ‘far fetched’. We were wrong, and we have enjoyed working with DMM for over six months now as the company becomes more widely known.

DMM: a background

Pixelux’s DMM really started in 2003. “We originally started out wanting to make a game involving a giant monster and a city,” Sohal told fxguide. “The monster was going to destroy the city – of course that’s what monsters do. Unfortunately the technology to destroy cities didn’t exist, so we started investigating how to do it. We tried a whole bunch of different approaches. The first thing we tried was a volumetric approach, just using octrees and representing everything volumetrically. We started that way but at some point we thought we’d need to start something with finite elements.” The company was originally incorporated in February 2004, in Geneva, Switzerland, with the intent of developing technology that would automate art asset generation for video games through advanced simulation.

Pixelux’s research went well, and at the same time they began investigating what other research was being done with finite elements and found some work done by Dr James O’Brien. “He was a professor at Berkeley,” recalls Sohal. “In ’99 or so he’d written a paper about deformable fracture. It was a great paper – the problem was that the simulations that he did in it looked fantastic but they would take days to run. The approach was not viable for film or gaming schedules – to break, say, an ashtray model would take a day or so to compute. It was not practical to use in production.”

Watch some early examples of sims completed with DMM.

Even with that limitation, Sohal called O’Brien. “I gave him a call, and he responded and we struck up a great relationship. Even today he’s one of our advisors. We basically implemented his technique.” Initially the team implemented something called ‘tet-splitting’, which is more realistic in terms of simulation but harder to do in real time. The DMM solution is based on tets, or tetrahedra, and this basic concept is central to all the code and implementations. (For more on the maths and algorithms of DMM in production, refer to fxguide’s Art of Destruction December 2011 story, which has an extensive FEA section.)

“We just kept working on this stuff and we had some nice videos we showed around, and we went out looking for money,” continues Sohal. “We talked to a lot of venture capitalists. I did a thing a lot of people tell you never to do – I just cold-called a bunch of venture capitalists. And one of them went to Burning Man – he ran into the guy who was the Director of Technology at LucasArts at the time. This guy just said what do you think of this technology, and he said, we’re looking for something like that…”

LucasArts and Star Wars: The Force Unleashed

Most startups have to launch, raise finance and build marketing and sales, but also just build a company: set up infrastructure, hire a receptionist, find stationery suppliers, all the mundane things you need to run a company on top of doing actual research and development. Pixelux skipped that entire problem. They almost immediately signed with LucasArts and could focus all their energy on making their technology work, albeit with the added pressure of a killer LucasArts deadline. Pixelux wanted two years; they got six months.

“We did this one animation of a castle with rocks hitting it, and somebody showed that to George Lucas, and he really liked it. More specifically their whole philosophy for games at that point was to create simulation-driven gameplay. They saw our technology as a possible thing to do that,” explains Sohal. “We were looking at about two years to develop the technology. They said we need this in about six months. We said OK. There were six of us then.”

Further DMM examples.

The initial demo the team made for LucasArts was a test, a playable game ‘level’ that showed the FEA – DMM running. “The first thing we showed them was just a tower that you would drop things onto and it would break. It was very, very simple, probably about 300-400 tets. That opened the possibilities – they saw the potential – but this stuff was totally not optimized yet.”

The team developed the technology of a fast FEA with some rudimentary tools to allow artists to ‘author’ the objects. “You have to take an artist mesh and chop that up (‘chopping’) – tessellate it – slice it up into a bunch of tetrahedra,” says Sohal. “The way you slice this up is important, because it represents the number of degrees of freedom the object has – where it can bend.”
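As a rough illustration of ‘chopping’ and degrees of freedom, the sketch below tessellates a simple box by splitting each grid cell into six tetrahedra that share the cell’s main diagonal (the standard Kuhn/Freudenthal subdivision, assumed here for simplicity; the article does not describe how DMM chops arbitrary artist meshes). Each node can move in x, y and z, so it contributes three degrees of freedom, and finer chopping gives the object more places to bend. `chop_box` and its spacing parameters are hypothetical.

```python
from itertools import permutations

def tet_volume(a, b, c, d):
    """Unsigned tet volume via the scalar triple product."""
    u = [b[i] - a[i] for i in range(3)]
    v = [c[i] - a[i] for i in range(3)]
    w = [d[i] - a[i] for i in range(3)]
    triple = (u[0] * (v[1] * w[2] - v[2] * w[1])
              - u[1] * (v[0] * w[2] - v[2] * w[0])
              + u[2] * (v[0] * w[1] - v[1] * w[0]))
    return abs(triple) / 6.0

def chop_box(nx, ny, nz, hx=1.0, hy=1.0, hz=1.0):
    """Hypothetical helper: tessellate an nx*ny*nz grid of cells into tets.

    hx, hy, hz are the cell sizes along each axis. Returns (nodes, tets),
    where each tet is a tuple of four indices into nodes.
    """
    def node_id(i, j, k):
        return (i * (ny + 1) + j) * (nz + 1) + k

    nodes = [(i * hx, j * hy, k * hz)
             for i in range(nx + 1) for j in range(ny + 1) for k in range(nz + 1)]
    tets = []
    for i in range(nx):
        for j in range(ny):
            for k in range(nz):
                # Each ordering of the three axes is a path from the cell's
                # (0,0,0) corner to its (1,1,1) corner; the four corners visited
                # form one tet. The six orderings tile the cell exactly, and
                # neighboring cells produce matching faces.
                for order in permutations(range(3)):
                    corner = [i, j, k]
                    tet = [node_id(*corner)]
                    for axis in order:
                        corner[axis] += 1
                        tet.append(node_id(*corner))
                    tets.append(tuple(tet))
    return nodes, tets

def degrees_of_freedom(nodes):
    """Each node can move in x, y and z."""
    return 3 * len(nodes)
```

For example, `chop_box(2, 2, 2)` yields 27 nodes, 48 tets and 81 degrees of freedom. Passing unequal spacings such as `hx=3.0` produces elements stretched along one axis, a crude version of the non-uniform tessellation Sohal describes next for anisotropic materials like wood.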

A key aspect of FEA technology is that items can bend and then snap and break, just as objects do in the real world. In other approaches this is often handled with a cheat, but in FEA the bending and breaking are properties linked to the model and the material. The control is via the tet grid, its size and density.

“If you want to simulate wood, the cheapest way to do that computationally speaking is you create a tessellation and then you stretch the tessellation out,” notes Sohal. “So it’s not uniform – that’s how you do anisotropic materials. We’re assuming the tet density is not going to change during the course of the simulation, unless you do tet-splitting, which you can. We can do it, and we have the whole framework to do it. It’s just that tet-splitting opens a new set of problems – for example, a split-limit, because this stuff can create geometry like you wouldn’t believe.”
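Sohal’s point about needing a split limit is easy to see in code. This hypothetical sketch refines tetrahedra by longest-edge bisection, a common refinement scheme swapped in here for illustration (the article does not say how DMM splits tets). Each split replaces a tet with two halves of equal volume, and a per-tet level counter caps how many times a region can be refined. With a criterion that always asks for more detail, every extra level doubles the element count, so without `max_level` the loop would never finish. The sketch also ignores keeping neighboring tets conforming, which a real solver has to handle.

```python
import math
from itertools import combinations

def tet_volume(a, b, c, d):
    """Unsigned tet volume via the scalar triple product."""
    u = [b[i] - a[i] for i in range(3)]
    v = [c[i] - a[i] for i in range(3)]
    w = [d[i] - a[i] for i in range(3)]
    triple = (u[0] * (v[1] * w[2] - v[2] * w[1])
              - u[1] * (v[0] * w[2] - v[2] * w[0])
              + u[2] * (v[0] * w[1] - v[1] * w[0]))
    return abs(triple) / 6.0

def bisect_longest_edge(tet):
    """Split a tet (4 points) into two halves at the midpoint of its longest edge."""
    i, j = max(combinations(range(4), 2),
               key=lambda e: math.dist(tet[e[0]], tet[e[1]]))
    mid = tuple((tet[i][k] + tet[j][k]) / 2.0 for k in range(3))
    first = list(tet)
    first[i] = mid
    second = list(tet)
    second[j] = mid
    return tuple(first), tuple(second)

def refine(tets, needs_split, max_level):
    """Split tets that ask for it, never beyond max_level generations.

    tets: list of (points, level) pairs. Returns the refined list.
    """
    done, stack = [], list(tets)
    while stack:
        tet, level = stack.pop()
        if level < max_level and needs_split(tet):
            for child in bisect_longest_edge(tet):
                stack.append((child, level + 1))
        else:
            done.append((tet, level))
    return done
```

A size-based criterion such as “split while the volume exceeds a threshold” terminates on its own. A criterion driven by stress, as in a fracture sim, may keep firing, which is where the split limit earns its keep.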

The tower demo interested LucasArts, and they asked Sohal and the team for a more specific demo. To prove the software was practical in a game-like environment, the second demo had to show multiple types of materials, such as wood, steel and glass, interacting, not just rock or stone.

“They also wanted to have a stormtrooper gun,” adds Sohal. “You take these stormtroopers and just throw them at things. The funny thing about it is that if you take a human being and throw them at a stone column – we all know what’s going to happen – the column’s going to win. But they wanted to have this visceral reaction, this vision. The important thing is when you’re taking something that’s an engineering simulation and putting it in a real world of film – people have an expectation of what it’s meant to be. So we had to tweak the simulation. One way we tweaked it was to make the column a little weaker and made the stormtrooper weigh 5,000 pounds and made his body a little more rigid.”

The first demo was just one column, and four or five stormtroopers. “That was a lot of fun – people enjoyed that,” says Sohal. “The next thing we did was a whole room which looked suspiciously like a Lucas cafeteria – I don’t know why…It had wooden beams, it had glass panels in the ceiling. It had very simple lighting. At this point we were on-site at the Presidio. We kept adding things. We had rebar instead of concrete columns. We had rebar rods sticking up and waving. And we had a brick wall. As people were playing with this environment they were making up their own games. One guy was able to wear away beams by throwing stormtroopers at them. We found that people could not leave the room until they’d destroyed everything!”

The year was 2005. Then, in 2006, the team got the green light to start integrating the technology into The Force Unleashed. What attracted LucasArts in the first place was the unique gameplay made possible by the realistic behavior of a full FEA solution. While the early games had a limited number of objects resulting from, say, a shattering wall (compared with a feature film), the engine produced physically realistic output for any simulation given to it.

Examples from Star Wars: The Force Unleashed.

Still, that realism raises the bar for modeling and building the assets, or the result one gets may be accurate but surprising. Sohal recalls an example of this from a test done during this period. “I’ll tell you a funny story – we were working on a project at Lucas and they wanted us to do some liquid stuff. So we added a liquid sim to DMM. It was funny because we dropped this thing that looked like a donut into this liquid sim. It was simulating hydrostatic pressure – it was really a height field. The top of the sim was rippling like water and things would float on it, but if things would sink in it, the pressure would increase. So we’re watching this thing and the donut is getting smaller as it goes down. We realized that that was the result of a simulation – the donut was made of a floppy material, and hydrostatic pressure’s increasing from all sides and it’s going to crush it!”
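The shrinking donut follows from basic hydrostatics: gauge pressure grows linearly with depth, p = ρgh, and a soft body squeezed uniformly from all sides loses volume roughly in proportion to p/K, where K is its bulk modulus. A minimal worked sketch, assuming water density, standard gravity and a hypothetical bulk modulus for the ‘floppy’ donut material:

```python
# Hydrostatic pressure and a crude estimate of how much a soft body shrinks.
# Assumptions: fluid at rest, linear small-strain response, and a
# hypothetical bulk modulus for the soft material.

RHO_WATER = 1000.0   # kg/m^3
G = 9.81             # m/s^2

def gauge_pressure(depth, rho=RHO_WATER, g=G):
    """Pressure above the surface value at a given depth, in Pa: p = rho * g * h."""
    if depth < 0:
        raise ValueError("depth must be non-negative")
    return rho * g * depth

def volume_ratio(pressure, bulk_modulus):
    """Small-strain estimate V/V0 = 1 - p/K under uniform pressure on all sides.

    Only meaningful while p is much smaller than K.
    """
    return 1.0 - pressure / bulk_modulus

if __name__ == "__main__":
    K_FLOPPY = 1.0e5  # hypothetical bulk modulus for a very soft material, Pa
    for depth in (0.25, 0.5, 1.0):
        p = gauge_pressure(depth)
        print(f"depth {depth:4.2f} m: p = {p:7.1f} Pa, V/V0 ~ {volume_ratio(p, K_FLOPPY):.3f}")
```

The deeper the object sinks, the higher the pressure and the smaller it gets, which is exactly what the team saw. The linear estimate breaks down once p approaches K; beyond that a full nonlinear FEA solve is needed.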

The Pixelux team had an exclusive with LucasArts – DMM was exclusive to them for this period of time. “That was good because it helped us stay focused on the project. They were great partners,” Sohal says. The team did a demo at E3 to show the results. Different versions for different platforms were also required, often quite differently implemented than the PC version. “There’s all kinds of very specific platform optimizations and they’re reflected in the code now,” says Sohal. “From the outset we were focused on speed – it had to run in real-time, and faster than everything else, so they could do their physical DMM sim and then also had Euphoria and some rigid-body stuff and game engine logic. So they had all this stuff working together – and the interesting thing is that some of these things were being developed as the project was progressing.”

Peter Hirschmann, the VP of product development at Lucas, was right behind the project and how it fitted into his vision of how games and their assets should be designed. “If you build a structure and you don’t make it strong enough, it’s going to collapse. He loved that,” recalls Sohal from his time at Lucas. “It was totally in line with their core focus of this game, which was simulation-driven gameplay. Simulation in a game means that the game is never going to be the same twice. That’s reflected in the game, where you can take a Wookiee and throw him into a tree or bridge and he’ll break it differently each time.”

In 2009, DMM2 was announced with a GPU-accelerated version to be made available through a partnership with AMD, and LucasArts announced The Force Unleashed 2, again using DMM.

SIGGRAPH paper

“Our CTO Eric Parker wanted to write a SIGGRAPH paper, so he and James sat down and wrote the paper and explained what we did. There was no fear on our part that anyone was going to steal it – a lot of this stuff was really just implementation – years of optimization,” explains Sohal, talking about the pivotal SIGGRAPH paper that would bring the group’s work to the film community.

Eric G. Parker and James F. O’Brien published Real-Time Deformation and Fracture in a Game Environment in the ACM SIGGRAPH Symposium on Computer Animation (where it won best paper).

The paper describes the simulation system that had been developed to model the deformation and fracture of solid objects in a real-time gaming context. From the abstract: “The goal of this paper is to describe how these components can be combined to produce an engine that is robust to unpredictable user interactions, fast enough to model reasonable scenarios at real-time speeds, suitable for use in the design of a game level, and with appropriate controls allowing content creators to match artistic direction.”

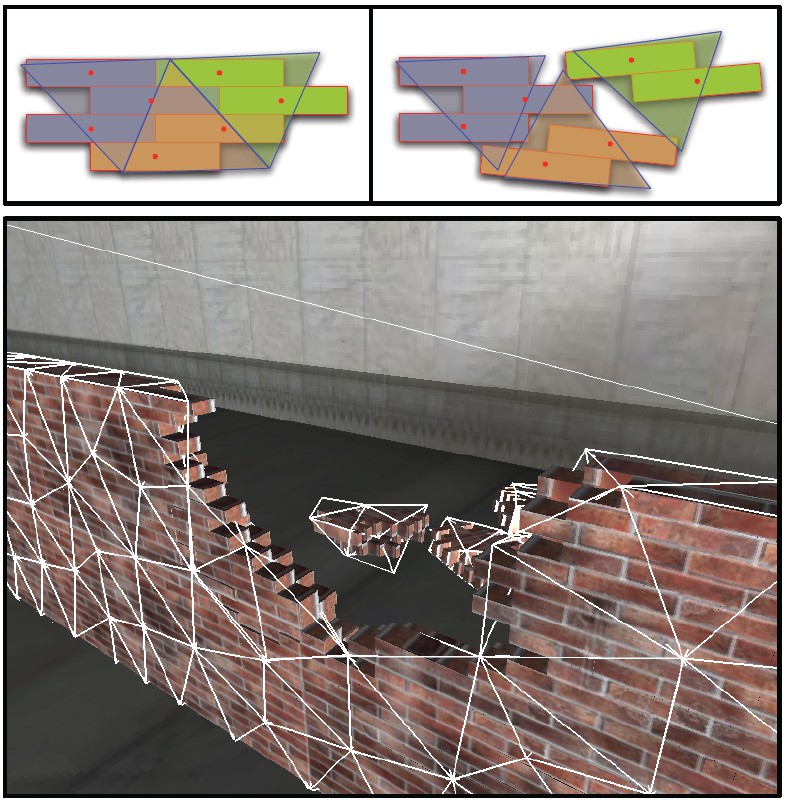

The apparent geometric detail of the simulation seen above is enhanced by the high-resolution polygon surface embedded in the tetrahedral FEA mesh.
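A common way to carry a high-resolution render surface on a coarser tet mesh, as the caption above describes, is to bind each render vertex to the tet that contains it using barycentric coordinates, then rebuild its position from the deformed tet nodes every frame. The code below is a generic sketch of that idea with hypothetical function names, not DMM’s implementation.

```python
def signed_volume(a, b, c, d):
    """Signed tet volume: (b-a) . ((c-a) x (d-a)) / 6."""
    u = [b[i] - a[i] for i in range(3)]
    v = [c[i] - a[i] for i in range(3)]
    w = [d[i] - a[i] for i in range(3)]
    return (u[0] * (v[1] * w[2] - v[2] * w[1])
            - u[1] * (v[0] * w[2] - v[2] * w[0])
            + u[2] * (v[0] * w[1] - v[1] * w[0])) / 6.0

def barycentric(p, a, b, c, d):
    """Weights (wa, wb, wc, wd) with p = wa*a + wb*b + wc*c + wd*d, summing to 1."""
    vol = signed_volume(a, b, c, d)
    if vol == 0.0:
        raise ValueError("degenerate tetrahedron")
    return (signed_volume(p, b, c, d) / vol,
            signed_volume(a, p, c, d) / vol,
            signed_volume(a, b, p, d) / vol,
            signed_volume(a, b, c, p) / vol)

def embed(render_vertices, nodes, tets, eps=1e-9):
    """Bind each render vertex to a containing tet (brute-force search)."""
    bindings = []
    for p in render_vertices:
        for t_index, tet in enumerate(tets):
            w = barycentric(p, *(nodes[n] for n in tet))
            if min(w) >= -eps:  # all weights non-negative: p lies inside this tet
                bindings.append((t_index, w))
                break
        else:
            raise ValueError(f"render vertex {p} is outside the tet mesh")
    return bindings

def deform_surface(bindings, deformed_nodes, tets):
    """Rebuild render vertex positions from the simulated (deformed) tet nodes."""
    out = []
    for t_index, w in bindings:
        corners = [deformed_nodes[n] for n in tets[t_index]]
        out.append(tuple(sum(w[k] * corners[k][axis] for k in range(4))
                         for axis in range(3)))
    return out
```

The binding is computed once at rest. Each frame only `deform_surface` runs, so the solver can work on a modest number of tets while the renderer sees a far more detailed surface.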

Sohal explains further: “To control something, there are two elements to it – there’s the authoring part and the other thing is you have to be able to direct the sim the way you want. We put things in DMM that allowed people to turn things on and off – they can turn sims off, they can force node positions in certain places.”
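The two directing controls Sohal mentions, switching a simulation off and forcing node positions, can be sketched on a toy system. This hypothetical example uses a mass-spring chain (springs stand in for finite elements to keep it short); `enabled` and `pinned` are illustrative names, not DMM parameters.

```python
import math
from dataclasses import dataclass, field

@dataclass
class ToySim:
    """Hypothetical directable mass-spring system (springs stand in for tets)."""
    pos: list                       # node positions, [[x, y, z], ...]
    vel: list                       # node velocities, same layout
    springs: list                   # (i, j, rest_length) tuples
    stiffness: float = 100.0
    mass: float = 1.0
    gravity: tuple = (0.0, -9.81, 0.0)
    enabled: bool = True            # "turn sims off"
    pinned: dict = field(default_factory=dict)  # node index -> forced position

    def step(self, dt):
        if not self.enabled:
            return  # simulation switched off: state stays frozen
        forces = [[self.mass * g for g in self.gravity] for _ in self.pos]
        for i, j, rest in self.springs:
            d = [self.pos[j][k] - self.pos[i][k] for k in range(3)]
            length = math.sqrt(sum(c * c for c in d)) or 1e-12
            f = self.stiffness * (length - rest) / length  # Hooke's law along the spring
            for k in range(3):
                forces[i][k] += f * d[k]
                forces[j][k] -= f * d[k]
        for n in range(len(self.pos)):
            if n in self.pinned:
                # Directed node: override whatever the physics says.
                self.pos[n] = list(self.pinned[n])
                self.vel[n] = [0.0, 0.0, 0.0]
                continue
            for k in range(3):
                # Semi-implicit (symplectic) Euler: update velocity, then position.
                self.vel[n][k] += dt * forces[n][k] / self.mass
                self.pos[n][k] += dt * self.vel[n][k]
```

Pinning overrides the integrator after forces are computed, so a directed node still pulls on its neighbors through the springs. That is what lets an artist drag part of an object along a chosen path while the rest responds physically.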

It should be noted that the DMM engine can be used for more than just destruction, including creatures. “It’s for simulation, so you can simulate flesh as well,” points out Sohal. “You’re simulating a continuum and adjusting parameters – you can do anything from jello to concrete with DMM. That’s the cool thing about it. You can do things you can’t even do in real life (although I think they’ve discovered a class of materials like this) – if you make Poisson’s ratio negative, for example, if you pull on something and apply stress to the tet, instead of preserving volume, it increases the volume. So you get this cartoonish effect.”
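For small strains, stretching a bar by ε along its length changes its width by −νε, so its volume changes by about (1 − 2ν)ε. At ν = 0.5 a linear-elastic material keeps its volume. A negative ν makes it thicken sideways as it is pulled, which is the auxetic, cartoonish response Sohal describes. The sketch below is a textbook isotropic linear-elasticity calculation, not anything DMM-specific. It also converts Young’s modulus E and ν into the Lamé parameters a tet FEM typically uses, and rejects ν outside the valid isotropic range (−1, 0.5).

```python
def lame_parameters(E, nu):
    """Convert Young's modulus E and Poisson's ratio nu to Lame (lambda, mu)."""
    if not -1.0 < nu < 0.5:
        raise ValueError("isotropic Poisson's ratio must lie in (-1, 0.5)")
    lam = E * nu / ((1.0 + nu) * (1.0 - 2.0 * nu))
    mu = E / (2.0 * (1.0 + nu))
    return lam, mu

def uniaxial_stretch(axial_strain, nu):
    """Small-strain response of a bar pulled along its length.

    Returns (lateral_strain, volumetric_strain).
    """
    lateral = -nu * axial_strain
    volumetric = axial_strain + 2.0 * lateral  # equals (1 - 2*nu) * axial_strain
    return lateral, volumetric
```

With ν = −0.5, a 1% stretch makes the bar 0.5% wider and grows its volume by about 2%. An ordinary ν = 0.3 material gets slightly thinner instead.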

DMM in films

DMM is expanding in its high-end use. “We are in the midst of licensing DMM to a number of large VFX houses right now. I think there are also about six high-profile films coming out this year that will use DMM in some major capacity,” Sohal told fxguide.

The first movie that used the Pixelux DMM technology was Avatar. Says Sohal: “They used it for some trees and the hovercrafts crashing into each other (by Weta Digital, using the Maya plugin). But the first big guys to put some effort into it was MPC.”

“MPC saw that paper and called me up one day and said we’ve got this project that needs a lot of destruction and particularly wood destruction, and we’ve seen your paper and the results and we think this could really do it. But we’re going to need you to make some changes to it.” The project was the shattering of the wooden beams in the temple scene for the film Sucker Punch.

“We got everything running on Linux, we made it all 64-bit, which was a big project. We augmented our tools to deal with bigger meshes,” says Sohal. “They told me what sort of meshes they were starting to play with and I realized they would regularly just create a million tet mesh – no problem, they would just do it. Their whole pipeline is structured so that the simulation runs outside of all the other parts of creating a shot. MPC are not getting any overhead from any system DMM is running under, just running DMM straight. Whatever memory or CPU resources it needs, it gets.”

Kali

MPC has the API version of DMM. It’s not exclusive: any company can license it, and Tippett Studio, for example, is currently augmenting its destruction and creature pipeline with it. But MPC has built extensively on top of the DMM engine to create its own Kali system. The way the DMM solver is used in the Maya plugin and by MPC is ‘completely different’, according to Joan Panis, Lead FX TD at MPC: rather than working the way the plugin does, Kali sits on top of DMM.

Read more about FEA and MPC’s Kali in Art of Destruction.

Currently, in the Maya version of DMM, explains Panis, “it takes the actual render mesh, chops it, does the tessellation on the render mesh and you render that object, whereas we separated all that, so we can add more on top of this. For example, we can add a noise displacement on the subdivision, something we are working on right now, and this all comes on top of DMM, but DMM is still the core solver for Kali.”

Extensive work has been done to improve MPC’s pipeline since it adopted DMM for Sucker Punch, much of it out of MPC’s Vancouver office. The example Panis mentions above adds displacement noise on the inside of the tets to improve the look of the internal structures revealed by fracture. The Vancouver team is currently experimenting with this internal 3D noise displacement because the fractured surfaces were not always detailed enough; the fractured elements are now noise-displaced, as a post-sim effect, as they are chopped with the render geometry.

MPC’s system is highly flexible and controllable. On Sherlock Holmes: A Game of Shadows, for example, MPC went out and watched a real tower collapse and then just changed the parameters to get greater realism to the simulations based on their real world observations.

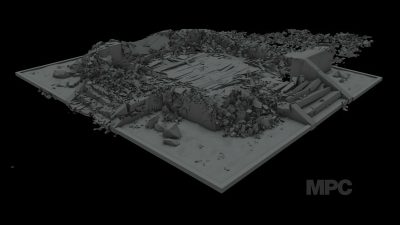

Kali has evolved and improved since Sucker Punch – most recently it was used in Prometheus. It allowed the crashing space ships to bend, buckle and then fracture and break. “This is something really great with Kali,” says Panis. “It works really well with metal, plastic or anything, so when we had the Prometheus crashing into the Engineers’ ship, we had the slow-mo shot of where the actual ship crumbles – that was done with Kali. The only thing was that the ship was very heavy (in geometry) to begin with and Kali creates additional geometry once we go throughout the whole pipeline and everything would become even heavier. So we extracted out just the front part where it would hit the ship, and just do Kali on that part, especially as it all gets engulfed in flames, and we ran it just like we did on X-Men: First Class (where Kali was used in the chain/yacht sequence). We get the materials with the plastic deformation and so it would crumble. Also the modeling was done with plates of metal so some would detach and crumble. It went really well in the end.”

Watch a break down of the Prometheus crash. VFX by MPC.

Interestingly, the crashing ships were originally scheduled to be completed by a certain date, but the deadline was moved up dramatically when MPC was asked to deliver the shot for a trailer. The shot was so complex that a plan B was put in place: for the trailer only, skip full plastic deformation with Kali and cheat the bending metal. In the end, the vast and complex simulation and rendering were completed to the new, earlier schedule, so plan B was never needed and the final shot was ready in time for the trailer.

Kali is an event-based simulation: everything can be triggered based on age or timing, which helps tremendously in triggering secondary simulations and particle generators. The ‘age’ of any tet counts up from zero, but when the tet breaks, its age resets to zero. “This means we can detect when the simulation event happens and when we see when it goes to zero we know it has broken and we can use that information to emit particles,” explains Panis. “Not only does Kali signal that the particle generator should start but it gets from the tet the position, the velocity et cetera of that actual tet and uses these to drive the particle simulations. This was used to get additional debris coming off the Prometheus ship as it collides with the other ship.”
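The age-reset trick can be sketched in a few lines. Assuming each tet reports an `age` that grows every frame and resets to zero when the tet breaks, a break shows up as the age dropping below its previous value. Comparing against the previous frame, rather than testing for exactly zero, still catches breaks that happen between samples. Each break then seeds particles from that tet’s position and velocity. The `TetSample` record and the function names are hypothetical; the article does not describe Kali’s actual interface.

```python
import random
from dataclasses import dataclass

@dataclass
class TetSample:
    """Hypothetical per-tet data read back from the solver each frame."""
    age: float
    position: tuple
    velocity: tuple

def broken_tets(prev_ages, tets):
    """Indices of tets whose age went down since last frame, i.e. reset by a break."""
    return [i for i, t in enumerate(tets) if t.age < prev_ages[i]]

def emit_debris(prev_ages, tets, per_break=3, jitter=0.1, seed=0):
    """Spawn particles at each newly broken tet, inheriting its velocity plus jitter."""
    rng = random.Random(seed)  # seeded so the debris is repeatable between sim runs
    particles = []
    for i in broken_tets(prev_ages, tets):
        tet = tets[i]
        for _ in range(per_break):
            velocity = tuple(v + rng.uniform(-jitter, jitter) for v in tet.velocity)
            particles.append({"source_tet": i,
                              "position": tet.position,
                              "velocity": velocity})
    return particles
```

Inheriting the tet’s velocity is what makes the debris read as part of the same impact rather than a separate effect layered on top.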

Kali uses DMM at its core and has a fixed size tet-mesh – this has not changed since it was adopted – but the team is now far more experienced at using the simulation tool, so the results are continuing to improve and MPC is producing stunning work. For Prometheus, the simulation team was only three people, plus those artists involved in rendering and modeling either side of the simulation.

In the area of modeling, MPC has just added a new tool for creating Kali assets. It is a tool “that the Asset (department) uses,” says Panis. “They have to have a specific guideline for a model to fit into the pipeline and go successfully through the chopping process.” Any model used in an FEA simulation needs to be built to react correctly to forces; one might say it has to be built more like a real object.

The chopping process is “like a boolean thing,” adds Panis, “every piece of the object (say the Prometheus ship) has to have thickness, it can’t have just planes. The normals have to be in the correct order, you can’t have small triangles, you can’t have soft intersections, and this was a pain on X-Men. There was so much back and forth to get it right as we are chopping. Now we have this tool – they (the Assets team) just select the model, they just click the button and it flags if there are any problems.”
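A minimal sketch of the kind of checks Panis lists, assuming a triangle mesh given as vertex positions and index triples. An edge used by only one face means the surface is open (a plane with no thickness). The same directed edge appearing in two faces means inconsistent winding (flipped normals). Tiny triangles are flagged by area. Self-intersection tests are omitted, and `check_for_chopping` is a hypothetical name, not MPC’s tool.

```python
import math
from collections import Counter

def triangle_area(a, b, c):
    """Half the length of the cross product of two edge vectors."""
    u = [b[i] - a[i] for i in range(3)]
    v = [c[i] - a[i] for i in range(3)]
    cross = (u[1] * v[2] - u[2] * v[1],
             u[2] * v[0] - u[0] * v[2],
             u[0] * v[1] - u[1] * v[0])
    return 0.5 * math.sqrt(sum(x * x for x in cross))

def check_for_chopping(vertices, faces, min_area=1e-6):
    """Return a dict of problem lists; all empty means the mesh passed these checks."""
    directed = Counter()
    undirected = Counter()
    for f in faces:
        for k in range(3):
            a, b = f[k], f[(k + 1) % 3]
            directed[(a, b)] += 1
            undirected[(min(a, b), max(a, b))] += 1
    return {
        # Used by only one face: the surface is open (no thickness).
        "open_edges": sorted(e for e, n in undirected.items() if n == 1),
        # Shared by more than two faces: not a clean solid.
        "non_manifold_edges": sorted(e for e, n in undirected.items() if n > 2),
        # Same direction in two faces: neighboring normals disagree.
        "inconsistent_winding": sorted(e for e, n in directed.items() if n > 1),
        "small_triangles": [i for i, f in enumerate(faces)
                            if triangle_area(*(vertices[j] for j in f)) < min_area],
    }
```

A closed, consistently wound surface uses every edge exactly twice, once in each direction, which is the property a boolean-style chopping step relies on to tell inside from outside.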

Read fxguide’s coverage of the visual effects in Prometheus.

It is worth noting that Kali takes in displacement modeling UVs, so if someone wants to use vector displacement in RenderMan it is slightly difficult: Kali has to work with polygons and can’t use RenderMan’s micropolygons. The team needs to subdivide the model or Kali would be missing detail, but objects can easily be tagged so that the vector displacement is converted to geometry before chopping.
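Turning displacement into real geometry starts with subdividing. This sketch does a 1-to-4 midpoint subdivision, caching each edge midpoint so that neighboring triangles share it and the surface stays watertight for chopping; a displacement, such as a converted vector-displacement map, could then be applied to the new vertices. It is a generic illustration with a hypothetical function name, not MPC’s conversion step.

```python
def subdivide(vertices, faces):
    """One level of 1-to-4 midpoint subdivision that preserves face winding."""
    verts = [tuple(v) for v in vertices]
    midpoint_of = {}  # undirected edge -> index of its shared midpoint vertex

    def midpoint(a, b):
        key = (min(a, b), max(a, b))
        if key not in midpoint_of:
            va, vb = verts[a], verts[b]
            verts.append(tuple((va[i] + vb[i]) / 2.0 for i in range(3)))
            midpoint_of[key] = len(verts) - 1
        return midpoint_of[key]

    out = []
    for a, b, c in faces:
        ab, bc, ca = midpoint(a, b), midpoint(b, c), midpoint(c, a)
        # Three corner triangles plus the center one, all wound like (a, b, c).
        out += [(a, ab, ca), (ab, b, bc), (ca, bc, c), (ab, bc, ca)]
    return verts, out
```

Each level quadruples the triangle count, so the geometry gets heavy quickly; that is the same trade-off Panis describes on Prometheus, where only the impact area was run through Kali.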

In summary, Panis says “the DMM engine is great, I have never seen anything else like it, DMM provides such great things like bending. Right now you either use rigid bodies, but then everything looks rigid, with DMM you get bending and rigid bodies, which is much more realistic. So I am really really happy we are using this solver.”

DMM in TVCs

Mark Toia directed the first commercial we are aware of to use DMM in TVC production, a spot called ‘Launch’. The spot, for General Motors/Holden, called for a CG stadium to collapse, and Toia turned to Fin Design in Sydney to provide the extensive background destruction.

Watch a breakdown of ‘Launch’ from Fin Design.

Above: the stadium destruction in isolation, from Mark White at Fin Design.

DMM for games/iPad: Efexio

While Pixelux has had a lot of success at the high end, it is also about to help launch a new product and application of its DMM software. The project is with a startup called Efexio, and it can best be described as ‘an iTunes store for pre-made visual effects’. VFX artists and teams will be able to submit VFX shots to this store, and anyone in the general public will then be able to purchase them and composite them into their own videos using a free compositing app. The potential for VFX professionals is interesting, as it would give them a revenue channel between shows that they control, while retaining ownership of the IP.

An example of a composited CG elephant in Efexio.

Efexio is a combination of clip art, canned animation and gaming. The idea is to allow artists to generate animations and sell them; each animation is stored in a stripped-down form that can be easily downloaded, so people can add it to clips or videos they shoot. Because the animation is not pre-rendered, it can be adjusted for ground plane, lighting and perspective.

The Efexio store will be going live soon and this will allow anyone to purchase and add visual effects to their home videos. The store will initially have some company generated effects (all fully 3D quality with sound effects and dynamic lighting adjustment). The effects (as well as the effects store) will be accessible from within the app on the iPad. Not long after, the store will expand to allow visual effects artists to submit effects to be sold on the Efexio store. This part of the store won’t be up initially, but will be brought online some time after the app is out.

Watch an interview with Vik Sohal about Efexio.

The Efexio app will be available on iPhones, iPads, Macs and PCs. Pixelux did all of the technology research and development, as well as some of the shots. “We expect to add many things to the system, including matchmoving, camera tracking and a lot of other stuff,” says Pixelux’s Sohal. “The potential for movie promotion is really high as well. You can imagine that before a new film comes out, that digital assets are released to allow anyone to add that visual effect to their own home movie. We have been in discussion with a number of large film studios and they are very excited by the whole prospect.”

The possibility for previs is pretty high as well. By having a library of set locations as well as DMM-simulated effects, one could imagine a director having a field day with the iPad app. “I think that the Efexio stuff really represents a seismic shift in the VFX industry,” adds Sohal. “Coming into this industry from another, I am always surprised at how the talent that produces the majority of the amazing visuals in these films we all love is, frankly, unappreciated. I am hoping that the technology we are about to release will enable all those professionals to develop some alternate sources of income by going directly to consumers, making visual effects more accessible to a wider audience.”

A dinosaur from the Efexio app.

Efexio will have its work cut out. While the rewards could be enormous, various projects like this have been tried before and have had only limited success. But if the animations are priced cheaply enough, and only the animations are exported rather than the models behind them, it could be a successful model. Instead of trying to get a few dozen people to buy a rigged model for a few hundred dollars, tens of thousands of people could buy an animation for a few dollars. The render and compositing quality of such iPad apps does not need to match a film project for the app to succeed, and as processing power and time allow, the app could produce progressively more realistic results.

It may go without saying that fxguide supports any move that allows artists more revenue and more control of their own IP.

Pixelux and DMM today

The DMM plugin update for Maya 2013 is being sent out this week, and it will be available shortly. This update will support Alembic caching, allowing users to create destruction sequences and export them in a platform-independent format for rendering.


Download the alembic file seen above (128MB)

“We continue to sell DMM,” says Sohal. “We have a deal with Autodesk so that it’s included as part of Maya 2012/2013 – that’s been very successful and lots more people are exposed to it. We’ve always been an engineering company, and the stuff we did for Lucas and DMM was a project. It resulted in a product and we market that. That’s what we do, we develop products. The team is still about five people – we have contractors we bring in too. We’re based in Sunnyvale – we’re not super-huge. The thing that makes me most happy is to see student demo reels – ‘here’s my DMM section’.”

No doubt. DMM is the most advanced and accurate tool. I love it.

https://vimeo.com/43269044

Vik Sohal always replies to queries and gives nice comments on online DMM test videos.

But I don’t know why they never replied properly when we asked about particle or fluid integration.

Although we know scripts exist and are used by many, for a simple artist who doesn’t know scripting it is still tough.

You can check their forum; many unanswered queries are there.

Sometimes I think they won’t even bother to answer. Is it their business policy? They already have big clients.

If you can share it with your friends, why not with us? Check this:

https://vimeo.com/24031834

Hi Issac,

The ICE integration wasn’t done by us, you should ask Sylvain Nouveau for that code, he has not shared it with us. All the other particle work that has been posted has been done by our customers (mostly plugin customers). There was a very nice tutorial on particle emission from surfaces that one of our users graciously posted for everyone. You can see it here:

http://www.pixelux.com/forums/showthread.php?752-Dmm-And-Maya-Particles-(-Emitting-Maya-Particles-From-Dmm-Object-)

Other users have posted work done using that technique.

As for a particle emission script that uses direct access to the DMM node we have inside of the plugin, that is still something we have to get people working code for. Right now we are focussed on the Maya 2013 build of DMM plugin and afterwards we will address this.

Best Regards,

Vik

Sir, thanks for your reply.

I think it would help if you could at least provide a way to select the tet faces exposed after simulation (maybe .outputTetFlags or inTetFlags, though I may be wrong), so we can move them to one side in UV space and do displacement mapping and particle and fluid emission. (I already did that manually in my test; soon I will upload a video.) If the mesh is high-res, doing it manually is extremely tedious; with a script it will be fast. My love for DMM forced me to learn some scripting, and I hope I will come up with a solution.

Anyways DMM is SUPER.

I am sure it is not a business policy – they are a small company!!!

DMM does not do fluid sims

Mike

Small wonder
