Marvel’s latest comic book hero to receive the big-screen treatment is Doctor Strange. The creation of artist Steve Ditko, Strange (played by Benedict Cumberbatch) is a master of ‘black magic’. Armed with magical objects and a pet cloak, he defends Earth against other dimensions.

Dr Stephen Strange is a brilliant surgeon whose career abruptly comes to an end following a tragic car accident. Unwilling to accept that his life must change, Strange becomes obsessed with healing himself. Finding no solution in science, he travels the world searching for an answer that might bring him relief. His journey takes him to the temple of the Ancient One (Tilda Swinton), where his discovery of magic begins.

The film was supervised by Stéphane Ceretti. The French-born VFX supervisor began his career as an animator and then became a VFX supervisor at BUF Compagnie in Paris. He moved to London, where he worked at Moving Picture Company and then Method Studios. Ceretti’s first adventure with Marvel Studios was as second unit supervisor on Joe Johnston’s Captain America: The First Avenger. He went on to work again as a second unit supervisor on Marvel’s Thor: The Dark World. In 2014, he worked on Guardians of the Galaxy as the Marvel Visual Effects Supervisor and, along with Nicolas Aithadi, Jonathan Fawkner, and Paul Corbould, was nominated for a 2015 VFX Academy Award.

The film was shot partly at IMAX resolution. This allowed not only for a better cinema experience but also a different, more square aspect ratio for those sequences. The IMAX aspect ratio is 1.90:1 and the normal aspect ratio for the other shots is a standard 2.35:1. For some scenes, companies such as ILM delivered two versions of a shot: a master IMAX framed for that aspect ratio and a second version with different composition that could be used for other digital and second screen releases later.
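The difference between the two framings is easy to quantify. As a minimal sketch, assuming both versions of a shot share the same pixel width (the 4096-pixel width below is purely illustrative and not a figure from the production), the 1.90:1 IMAX frame comes out roughly 24% taller than the 2.35:1 frame:

```python
def frame_height(width_px: int, aspect_ratio: float) -> int:
    """Return the frame height in pixels for a given width and width:height ratio."""
    return round(width_px / aspect_ratio)


WIDTH = 4096  # hypothetical delivery width, chosen only for illustration

imax_height = frame_height(WIDTH, 1.90)   # taller IMAX framing
scope_height = frame_height(WIDTH, 2.35)  # standard widescreen framing

# Fractional extra picture height of the IMAX frame at equal width.
extra_height = 2.35 / 1.90 - 1.0

print(imax_height, scope_height, f"{extra_height:.1%}")
```

That extra height is why a shot composed for the IMAX frame may need a separate composition for the 2.35:1 release rather than a simple crop.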

DOP Ben Davis, who shot both Guardians of the Galaxy (2014) and Avengers: Age of Ultron, lit the film and chose to use both the Arri Alexa 65 (6K) and the Arri Alexa XT Plus. For several of the high-speed sequences, such as the car crash, Love High Speed provided special complex Phantom Flex 4K rigs, shooting at up to 1000 fps. The particularly powerful final grade of the film was overseen by Senior Colorist Steve Scott.

ILM

ILM produced some of the most remarkable footage to appear in any trailer this year when the world saw glimpses of the chase through New York. The folding Manhattan is known internally as the Mirror Sequence. Although not featured much in the pre-publicity for spoiler reasons, ILM also produced the equally breathtaking reverse time fight sequence in Hong Kong at the end of the film.

Richard Bluff has already won an HPA Award for Dr Strange’s VFX work, along with Stéphane Ceretti. Bluff has worked on many films, including the brilliant small-budget Lucy. He had previously been nominated for a VES Award for Marvel’s Agent Carter (2015), which he shared with several others including Sheena Duggal, who also contributed in a smaller role with some shots in Dr Strange.

The film was in post-production for a relatively short 5 1/2 months in total, so key work often started before principal photography. Bluff and team started about 10 months before principal photography “to plan out the New York sequence but also to Lookdev a lot of the Elder Magic, and the Astral projection,” explained Bluff. The idea of an Astral projection as a controlled or willful out-of-body experience was central to many of the film’s effects sequences. The concept of an existence or consciousness as an “astral body” that is separate from the physical body has been well discussed in various forms of esotericism, but ILM still had to work out what this would actually look like on screen. As the film progressed, the Astral body work would move to other facilities and ILM would focus primarily on the vast New York and Hong Kong sequences. ILM delivered under 400 shots, with New York having fewer shots than Hong Kong (200 vs 150). Shot count is not a good metric of workload: even for sequences with only a few shots, some of the best ILM artists had to work hard to pull them off. The entire film had 1450 shots in total, considerably fewer than Guardians for example, but “every single shot was a challenge”, explains Ceretti.

For the New York Mirror sequence, the actors were almost entirely on green screen, although there were some location shots at the start of the sequence. “All we had in camera were a few walkways. These industrial walkways had several fly-away pieces so we could reconfigure it into an L shape or just a long walkway, but anytime I saw it on set I knew we would be replacing it with CG as it had to be deconstructing or reforming into something else during the shot”, explains Bluff. There were a lot of treadmills, gimbals and sliding floors “which were a ratchet system or with grips pulling on the end of a bungee rope to get the right characteristics”. There were a few dressed pieces filmed on the skidpan at Longcross Studios for the big Escher illusion shots. The art department built a section of New York with cars and newspaper stands which were filmed with extras, “but it was one of the few external sets that we used”.

Lighting was a major issue, as the light at street level in a major city such as New York is very diffuse: the sun rarely shines directly down to produce hard or dramatic lighting. One of the reasons that Richard Bluff and ILM were chosen for the New York sequence (aside from ILM’s gold standard reputation) was that Bluff had been on the team that made the Digital New York seen in The Avengers.

Bluff knew that lighting would be an issue. The flat indirect light could not be avoided for the live action shots done in New York, but once the city started to move he took every advantage of the bending and folding skyline to light the characters more dramatically. “For the majority of the day you are going to be in shadow, so we had to get some light coming through on the material we shot at Longcross studios for the believability or the whole shot would be overcast, which no one wanted. So for the majority of the time they are in shadow but then whenever we did digital double takeovers – as soon as we went to doubles we immediately started throwing light on the characters and cementing them into a more interesting background”. When the team did not have digital doubles they even used stand-in geometry with full match-moved animation so they could do some degree of relighting on the cast live action footage “to again break up that lighting” says Bluff. “That was the biggest concern I had going in… to make it interesting and not bland shadows throughout”.

ILM split their work: all of the asset development was done in San Francisco but most of the main work was done in the Vancouver office, “but it was very much a fluid workflow between the two teams” comments Bluff.

To bend and warp New York presented a large number of issues, although Stéphane Ceretti commented that “not once did ILM say they couldn’t render something”. On a film like Avengers or Transformers the effects would flow through the normal ILM pipeline, which clearly delineates and handles large rigid body destruction sequences, environments, character work and so on. On this film “there is such an enormous variety of work, one of the reasons I was tagged for this was my years running the Generalist Group at ILM… we had a pretty good idea going in how we were going to do the work, but it wasn’t until we started pulling the shots apart that it started to really be clear” Bluff says. Normal pipeline rules don’t apply when buildings are folding in on themselves as they did in New York or when all the effects had to be timed backwards as they needed to be for Hong Kong.

For each sequence, when the shots were turned over, ILM’s Post-Viz Supervisor Landis Fields did a first pass on the shot in Maya, “just working freehand” explains Bluff. “He did a lot of technical animation himself. Once we started to get a handle on what the gags were we were introducing into the shot and issues, then we would divide up the work”. Every so often this meant, for example, that the environment work had to be run through Houdini, ingested in the traditional model pipeline and then passed from Houdini to Katana and then rendered in RenderMan.

“Often when the scene became so complex we had to move the environment work over to our generalist pipeline,” Bluff explains. The Generalist Department in Vancouver gave the artists the most flexibility in fine tuning the work. “They would work very closely with the mainline pipeline artists or the effects artists so we could push the city in and out of various pipelines”. For example, on wide shots New York was 3ds Max and V-Ray (the environment pipeline) but for medium to closeups it was being handled in the main RenderMan pipeline, and some shots even ended up being rendered in Arnold.

The new Clarisse pipeline that was used on the last Star Wars (EpVII) Environment work was explored but as the massive New York assets from Avengers needed to be leveraged, they decided against using it and they preferred to stay with a normal V-Ray Environment pipeline. One of the reasons the team could be so productive is just how familiar they are with these tools.

Bluff recounts how nearly all the Escher-style New York shots were handled by just one artist: Jonathan Mitchell. “He pretty much single-handedly did all the 3D work in those shots, not only did he do the traffic, cars bending around streets, modelling every asset in there, texturing them, and the lighting – he even did the first pass of the comp before handing it over to the compositor who would do the shot… he did everything other than the digi-double takeovers”, Bluff explains. “The reason why he was able to do all that work pretty much himself was because of the V-Ray/Max pipeline that we have used over many years”.

At some points the director decided to play with the Escher ‘gag’ by using a camera projection approach. The director did this so that when the camera moves, the effect deliberately breaks down, and the audience sees that the effect itself was an optical illusion. The result is not unlike making a complex 3D ‘forced perspective’ virtual set, only to break the illusion and further mesmerize the audience. “John was able to execute this himself – he wasn’t having to go through three different departments involving say 5 different artists – he was able to do it on the fly and bang out three different versions in the space of a couple of days” adds Bluff.

For the closeups, the team had less creative flexibility in terms of shot choreography as the world needed to be driven by the performance of the actors and the effects work became “more about Mandelbrot-ing the set and doing clever duplication”. These shots used the alternative pipeline of Houdini feeding into Katana. While at the beginning of the process there were very specific fractal references, the team came to use the term Mandelbrot-ing to refer to everything from deformations, fractals, 3D kaleidoscopes, clone duplication with offsetting and even pushing deformer objects through buildings to break up surface qualities.
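To give a feel for the kaleidoscope idea in its simplest form, here is a minimal 2D sketch. It is not ILM’s implementation; the function name and the six-segment setting are assumptions made for illustration. Every point in the plane is folded into a single mirrored wedge, so whatever is sampled from that wedge repeats around the centre:

```python
import math


def kaleidoscope_fold(x: float, y: float, segments: int) -> tuple[float, float]:
    """Fold a 2D point into one mirrored wedge of a kaleidoscope.

    Points that are rotations or mirror images of each other map to the
    same folded point, so sampling an image or geometry at the folded
    coordinates gives the repeated, mirrored pattern.
    """
    wedge = 2.0 * math.pi / segments   # angular size of one segment
    radius = math.hypot(x, y)
    theta = math.atan2(y, x) % wedge   # rotate into the first wedge
    if theta > wedge / 2.0:            # mirror the second half of the wedge
        theta = wedge - theta
    return radius * math.cos(theta), radius * math.sin(theta)


# A point and its mirror image across the x-axis fold to the same place.
print(kaleidoscope_fold(1.0, 0.2, 6))
print(kaleidoscope_fold(1.0, -0.2, 6))
```

The same fold extends to 3D by applying it around an axis. Production setups would layer offsets and deformations on top of this basic symmetry.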

ILM also tapped into their own artists. Bluff explained, “we were very lucky with a few key people we had on this show, like Florian Witzel (ILM FX supervisor) who worked with me on Lucy, and Andrew Graham in Vancouver… who as a hobby, builds real world art kaleidoscopes outside of ILM. When he was put on the movie kaleidoscopes were not ‘a thing’ in the movie. It was not until we started LookDev-ing the ‘Zoetrope’ Fight arena, in New York, that he came up with a few tests”, he explains. “We threw them in front of the client along with some other ideas they had asked for, and they immediately gravitated to them, saying ‘that’s our movie! That’s the way we are going with other sequences too!’, which we did not know at that stage”.

The amount of geometry that needed to be rendered for New York was incredible, and Bluff tells a very funny story about it. Bluff did several shots himself, which he likes to do on any show he works on. When looking at one complex shot, Bluff wondered to himself if he could turn off some geometry to speed up the render. “The majority of the buildings had interiors. Some went to ridiculous levels, they actually had offices with desks” he says. “When I was looking to simplify things, just to render something quickly, I saw something called post-it notes, of which there were several thousand. I realised there was a 3D computer monitor asset with post-it notes on it, and that was duplicated in an office many times, which was then duplicated over hundreds of floors in a building in the background… it is one of the dangers of having a library of high quality assets!”

One of the other funny aspects of the New York scene was the pigeons. While in the film’s logic no one notices the chases due to the magic reality ‘bubble’ that the heroes are fighting in, early on during dailies the ILM team decided that the flocks of pigeons could notice something was wrong and react to vast bending buildings by flying out of the way. “It was a little inside joke, no one could tell what is going on apart from the Zealots, the Sorcerers, Mordo… and the Pigeons”.

Hong Kong was primarily executed in the ILM London office. Mark Bakowski supervised the London team. “One of the hardest things about it was just talking about the work. It might seem easy now, but everyone found it a challenge” explained Bluff. “For example, a shot might be part of a sequence moving backwards, so the buildings were re-forming, yet the principal actors are acting ‘forwards’, as normal in real time, yet there are extras performing with them that were acting backward, to collide with them, and then the next take of the next shot, the camera was going the opposite direction to shoot some actors running forward – who would be reversed in the comp – now everything I have just said there is utterly confusing – but that is exactly what we were dealing with!” he jokingly tries to explain.

In addition to this, the team would have to add in element shots that would need to be comped in going in reverse, and multiple layers of extras added… “so once we worked out the language and the previz, we could then go ahead and plan and execute the work, but it was a completely different headspace from New York”. The Marvel team did a large amount of testing prior to principal photography to work out how to best shoot the material. A lot of care was taken to work out what cheats could be done and how to approach the principal photography.

The team had hoped to film the Hong Kong sequence in just 15 nights but it ended up being almost 23 nights of shooting on the Hong Kong set. “It was a real challenge,” explained Ceretti. “During pre-viz we realised we were going to shoot a lot of motion control, and we worked very closely with ILM to see if we could shoot cleverly without so much motion control. We couldn’t. It was just so complicated, but we knew for some shots we were shooting with the 65, so we knew we could shoot locked off at 6K and then add movement in post”.

Due to the complexity of the Hong Kong sequence the team managed to shoot only five or six sequences a night. It was not just motion control or the problem of half the cast moving forward while the other half walked in reverse: “we also shot some of the actors at different frame rates, the background guys were moving at half the speed of our foreground actors, who were moving at normal speed. It was a gigantic puzzle and we shot it in order as we were really destroying the street as we filmed”, he explains.
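The article does not give the frame rates used on set, but the arithmetic behind such plates is simple. As a minimal sketch, assuming a 24 fps playback rate and a hypothetical 48 fps capture for the half-speed background performers, the apparent speed of a plate and the reversal of a plate can be expressed as:

```python
def apparent_speed(capture_fps: float, playback_fps: float = 24.0) -> float:
    """Speed of on-screen motion relative to real time.

    Footage captured at twice the playback rate plays back at half speed.
    The 24 fps default is an assumption, not a figure from the production.
    """
    return playback_fps / capture_fps


def reverse_plate(frames: list) -> list:
    """Return the frames of a plate in reverse order (time running backwards)."""
    return frames[::-1]


print(apparent_speed(48.0))            # hypothetical background plate
print(apparent_speed(24.0))            # foreground at normal speed
print(reverse_plate([101, 102, 103]))  # frame numbers are illustrative
```

Combining forward, reversed and retimed elements in a single shot is what made the planning so intricate: each layer has its own mapping from screen time to performance time.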

Due to the atmospheric effects in Hong Kong and all the dust, ILM used a Deep Composite pipeline for the sequence.

The complexity of the entire sequence was largely addressed by the detailed previz provided by the team at The Third Floor. Bluff felt that anytime questions came up with the actors, watching the previz would address most of their issues.

One aspect that Ceretti really enjoyed about the sequence he only discovered after the film was finished. He pointed out that when a building reforms it takes a while to work out what you are looking at, and then near the end of the reverse sequence it seems to pop into place and the audience can see that all the debris is from say a building. This last pop is something that the Audio team also emphasised, led by the film’s composer Michael Giacchino. “He thought about the music the same way,” comments Ceretti “All the attacks on the sounds are working exactly the same way our simulations are playing, they are all playing backwards in a natural way so it is a deceleration, and the music plays the same way, and I thought that was a nice parallel between our worlds” he said.

Commenting on the simulation, Bluff said that at ILM they very often had to imagine what the sound effects would sound like. “To make the effects play in reverse did need some modification to the tempo. If you watched the animators showing shots to each other you’d see a lot of people hand waving and making sucking in air sounds – just trying to tell the story… to ensure that when you arrived at the shot it wasn’t just a waterfall of debris”, he adds. Sometimes to make it work, Bluff’s team had to tweak the weight so things moved differently, particularly with the bamboo reforming in the background. “It is not logical when you look at it backwards” Bluff explains. “But it was a good challenge because it became creative and it was design led.”

“The audio team played all the effects backwards very carefully, they really did a great job” adds Ceretti. “Everything working together – sound and image – it was all thought about at many levels – which is nice”.

ILM did their Digital Doubles in RenderMan, unless they were “ants in wide vistas, which might have been done in V-Ray”, says Bluff.

While ILM produced photorealistic digi-doubles, these assets were passed to Method, which was required to work at even higher resolutions because the digital Dr Strange would both come closer to camera and end up falling through his own eyeball.

In addition to this, ILM fed some of the other teams the digital double assets that were the basis of the Digital Dr Strange. ILM needed to produce a digital double for New York and Hong Kong. Plowman Craven captured the static actor mesh and textures. The face asset scans of Benedict Cumberbatch were derived from the Medusa face rig developed by Disney Research in Zurich and, interestingly, the Swiss team provided a new type of high quality eye scan and model pioneered by Pascal Bérard (ETH Zurich/Disney Research Zurich). We will have a separate story on the technological innovations in Eye scanning later this week.

The actual eye photography as well as the body scanning of the actors and sets was supervised by Huseyin Caner. “Actually the eye was very challenging” he recalls. “We were asked to take very close up images of Benedict’s eye and this is only the second time we have been asked to do it… and we did end up working very closely with the Disney guys working it out… it is like taking a shot of a curved mirror and you don’t want to be seen in the mirror!” he explains. “You need the right tools and really know what you’re doing. Benedict was very patient especially as we were about 10cm away from his eye with a quite powerful light – he was very helpful”.

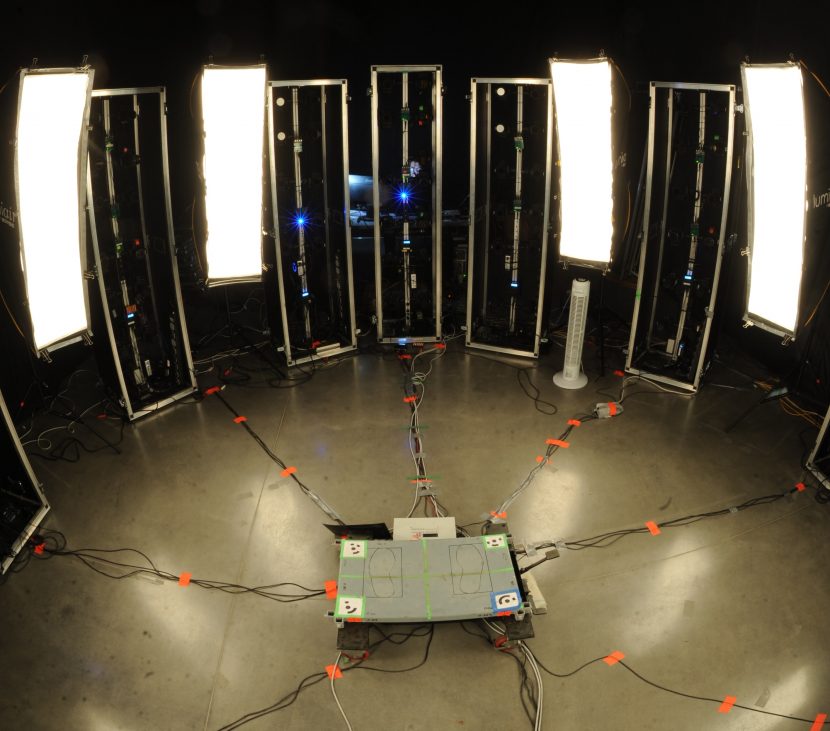

The team had a main rig set up on set of some 130 SLR cameras able to fire simultaneously. This meant that in a few seconds the team could do a full 360-degree high resolution photogrammetry capture of any actor. Over the course of the film Cumberbatch was shot 5 or 6 times in various costumes, with each session taking about 5 minutes from walking in to being done. For all the props the team did additional high resolution shoots, including weapons, cloaks and key parts of the actor’s costume.

The rig Plowman Craven used has 4 extra Canon 5D cameras with longer lenses aimed at the actor’s face. “In one shot, in a split second we get head scan, body scan and the texture photos”, explains Caner. The Plowman Craven team hand over whatever the VFX teams require, and here they handed over cleaned-up triangulated meshes, ready to be retopologized. They also provided camera positions (rig survey details), HDRs of the scanning room, textures, colour calibration shots and any special requests the teams had based on the item or actor.

Method

Director Scott Derrickson summed up, “‘Doctor Strange’ is a mind-trip action film that is bizarre, ambitious, wild and extreme. It’s filled with things that you haven’t seen before. Each set piece is an attempt to do things in ways that we haven’t seen in the past, and to give audiences fresh visuals and fun, adrenalized sequences.” This is never more true than in the sequence known internally as the MMT, or Magical Mystery Tour.

Chad Wiebe, Visual Effects Supervisor at Method Studios, who managed the Canadian Method team, commented that the MMT “was the most complex scene that we worked on”, although most of that work ended up being done by Method’s LA team. Wiebe was Method’s VFX supervisor on Avengers: Age of Ultron, and importantly Method Studios had worked on Ant-Man, led then by Greg Steele as VFX sup. The Ant-Man connection was important as some of the imagery from the MMT would connect visually with the sub-atomic end sequence in Ant-Man.

“When you go into the MMT – there is definitely a moment of it that references the quantum realm that was done in Ant-Man,” explains Ceretti. “Especially with the floating cubes and elements like that but it (MMT) was also a nod to what we had done in Guardians – especially with the saturated colours and other things like that”. The quantum scale of Ant-Man and the saturated Knowhere, located inside the decapitated head of an unidentified Celestial being, are all in the same universe as Dr Strange, and so visual tie-ins all build the richness of a Marvel Universe at a design level.

At Method it had been the plan for the work to be split 70:30 between LA and Vancouver but in the end the only major shot that was done in Canada was when Dr Strange was shot out of the room and into the Wormhole, which starts the MMT sequence. The rest of the Multi-verse imagery was supervised in LA by Doug Bloom, with Olivier Dumont working alongside as the visual effects designer. Method did all the MMT with the exception of one part that linked visually to the end Dormammu sequence. As Luma Pictures did all the Dormammu effects and Dark realm animation work, Method worked with them for this part of the multi-verse.

Actually the sequence was planned to be 7 minutes but grew to close to 10 minutes in one version of the edit. In the end, the sequence was approximately 2 minutes of on screen effects and consisted of 7 realms each nicknamed by the production team, (although many of the nicknames were not entirely accurate by the time those sequences were completed).

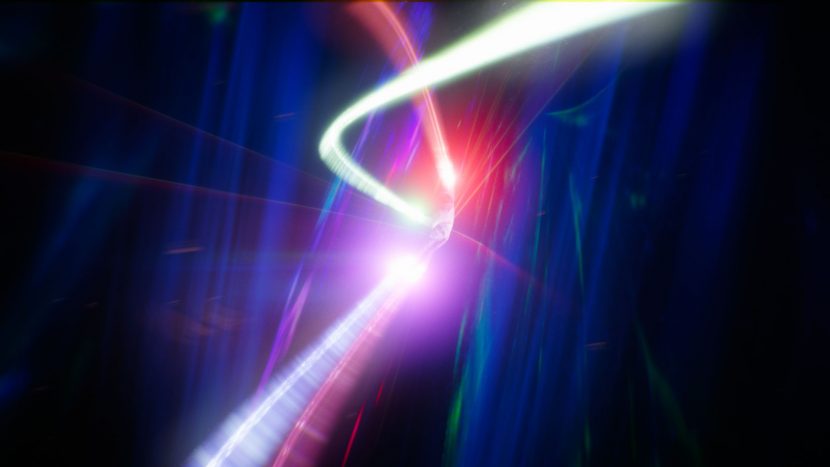

- From the Wormhole we find Dr Strange in the ‘Speaker Cone’

- He then travels to Bioluminescence world (but on screen this moved more to a blue world than the name implies)

- This leads to the fractals of ‘soft solid’ world

- Then the Quantum Realm that is a nod to Ant-Man

- Strange then has the fall through his own eye and Cosmic Scream

- The Dark Dimension Realm and finally

- Shape Shifting realm before returning to ‘earth’ in our realm or reality

This last realm did have Dr Strange morphing and changing shape, becoming cubes and all manner of different shapes, but ultimately the director decided the audience needed to see Cumberbatch and so most of the shape shifting was removed.

Doug Bloom points out that in addition to the stylistic reference to fractals, in the MMT sequence the team used actual “pure mathematical fractal geometry” which they baked down into volumetric representations which then moved through the traditional Method pipeline. They also used some fractal mathematics to place geometry, and then the team “manipulated that geometry by hand – so we broke the equation, but it still had the feel of a fractal” explains Bloom. Olivier Dumont adds that with the actual fractals the artists do not have much flexibility to manipulate them, “so you are better off to bake it out, and place it the way you want… that is the hard part with fractals, they are not really controllable”.

They referenced and used Mandelbrot equations, Mandelbulbs, full volumetric fractal surfaces and volumes “and we found what we could do reliably to control speed and frequency during our R&D period, and then it was a game of offsetting the animation until we got something that sat in the shot properly” explains Bloom.
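For readers unfamiliar with the mathematics, the classic 2D Mandelbrot set comes from repeatedly applying z → z² + c and counting how long the value stays bounded. The sketch below is a generic illustration, not Method’s code: the function names, grid size and sampling window are assumptions. It also shows the general idea of “baking” a fractal by sampling the equation once into a fixed grid, which can then be handled like any other data. The Mandelbulb is a 3D relative that uses a different iteration formula and is not shown here.

```python
def escape_time(c: complex, max_iter: int = 100) -> int:
    """Count iterations of z -> z*z + c before |z| exceeds 2.

    Returns max_iter if the point never escapes, i.e. it is treated as
    belonging to the Mandelbrot set at this iteration budget.
    """
    z = 0j
    for i in range(max_iter):
        z = z * z + c
        if abs(z) > 2.0:
            return i
    return max_iter


def bake_grid(width: int, height: int, max_iter: int = 50) -> list[list[int]]:
    """Sample the fractal once over the window [-2, 1] x [-1.5, 1.5].

    The result is a plain grid of numbers: a 'baked' representation that
    no longer depends on evaluating the equation.
    """
    grid = []
    for row in range(height):
        y = -1.5 + 3.0 * row / (height - 1)
        grid.append([
            escape_time(complex(-2.0 + 3.0 * col / (width - 1), y), max_iter)
            for col in range(width)
        ])
    return grid


baked = bake_grid(7, 5)
for row in baked:
    print(row)
```

Once baked, the values can be offset, retimed or edited by hand without touching the equation, which matches the control problem Dumont describes.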

As mentioned above, Method in LA needed to do some of the most detailed digital double work for the MMT, and while they got assets and scans from ILM and the production, Method needed to work much closer to camera. “Their CG double wasn’t going to be used as closely as ours, so we had to be a bit more precise”, explains Dumont. “We also had to transition smoothly between a real shoot of Benedict on a gimbal to a CG model without noticing anything, so we had to have all the proportions and textures just right”. The data from set included some cross polarised facial images from Plowman Craven’s work but this was extended based on Method’s “previous work on digi-doubles and really studying the reference photos – and in the end brute forcing it, rather than using an advanced acquisition approach – which is great if you have all the data, from say a lightstage but we didn’t have all that so it was a hybrid approach of reference and tweaking” explains Bloom.

Regarding Cumberbatch’s eye modeling, halfway through development Method got the high resolution eye reconstruction from Disney Research in Zurich, based on stills taken on set by Plowman Craven. “That gave us the topology of the iris, but that geometry had to be rebuilt for our pipeline, so even though it was theoretically high quality geo – it actually was used as reference as we had already started sculpting (ZBrush) based on the photography”. The eye and face of Dr Strange were all rendered in V-Ray, but without the benefit of the most recent SSS alShader.

“We are only just looking at that now, we actually referenced the (DHL) Emily project” said Bloom. “We used it as a base but we have such a solid team of Lookdev artists who have spent many years doing digi-doubles here and at other companies and they just have a really good understanding of what we needed to make that skin look and feel realistic”. The character team at Method on the project consisted of three Lookdev artists, one modeller (Maya and ZBrush) and one texture painter working in Mari (from The Foundry). The face used a FACS-based blendshape rig: “the modeller has a lot of experience with processing FACS data and hand sculpting all the blendshapes… and we actually had him sitting with the artist doing the face rigging”, explains Bloom. “The one thing that was forgiving for us was that Strange did not have to deliver any dialogue.” The hair and facial hair were all done in XGen, but by team members in Vancouver. Both grooms, of his hair and his facial hair, were developed in parallel in Canada while the LA team worked on the skin and general shaders.

The MMT came later than the initial work awarded to Method, so the Vancouver team remained focused on the sequences Method already had, and LA managed the MMT. Ceretti commented that given the complexity and visual creativity required, the company was a good match for the MMT: “Method have really good creative people there… also I had worked at Method for a few years – I know these guys very well and I know they are very creative. Obviously there is also the competitive bidding process, but we decided to go with them”.

In Vancouver, Method did many key sequences such as the car crash, the rooftop training and the key discovery that Dr Strange could control time, when he first experiments with an apple in the Library.

Method Studios completed a total shot count of 270 shots with a crew of 140 artists (including production staff).

For the time-warping apple shot, Wiebe did not want the CG apple to look like it moved from one blendshape to another. He felt this would not convey the sense of time: a blendshape animation would give the appearance of a morph, which could be interpreted as just a magical transformation. Instead, the team focused on making it look more photographic and more like a timelapse, which in turn signals the control of time and not just a magical transformation of the apple itself.

For the car crash, Wiebe’s team in Vancouver combined high-speed photography shot with Cumberbatch and some green screen car photography. “But the majority of the work from when Strange’s Lamborghini hits the truck and goes off the side of the road – is all a full CG environment, digital car, digital Dr Strange and remains fully CG until the point he hits shoreline water”, he explains.

Lamborghini lent the Dr Strange production six $237,250 Huracán LP610-4 Lamborghinis, or as Bloomberg news called them, six “10-cylinder, wedge-shaped, screaming hunk of menace”. The article also reported that the team trashed one of those six for the production.

Inside the car, there was a Phantom camera rig filming Cumberbatch in a special car set, but in the final film, while his face and hair were kept, most of the rest of the frame is digitally replaced for a more dangerous interaction with more flying glass and debris added. The team worked hard to make the CG cut seamlessly with the High speed footage.

Method simulated the car as a rigid body dynamics (RBD) simulation, with cloth sims for the airbag and Strange’s wardrobe. The car was simulated in Houdini and then rendered in a hybrid system of both Mantra and V-Ray. “The majority of the larger RBD pieces were exported to Maya as we could render them in V-Ray. A lot of the particulate, smoke and really fine detail debris was rendered in Mantra,” he explained. “The Environment was also rendered through Maya in V-Ray”. V-Ray is Method’s native renderer and Wiebe tried to do most things in V-Ray, but the studio has also rendered other major projects in Mantra. Heavy destruction films such as San Andreas were “all done in Houdini through Mantra for example, but as this show had a lot of physical rigid bodies such as the environments, we tried to stay in V-Ray as much as possible, but all the effects, or particles such as the mandala (Elder magic) were done in Mantra”.

In the film there are various types of magic that the team needed to address: visible spells from mandalas, magical rune shields, whips, stalks and aerial ‘lily pads’ that allowed the characters to jump off mandalas in the air. These were all done in Houdini.

The patterns came from the Art Department, which had done very specific research, and “a lot of it was based on sacred geometry,” explains Wiebe. “It is really a play on mathematics … there were tons of different designs of ancient mysticism combined with mathematics … the combination of those elements made it unique and not really something I think we have seen before.”

These effects were also helped by on-set contact lighting from special LED props. The spark portals that Strange and others travel through were represented by a large physical chaser light array on set, which cast not only contact lighting on the cast but also light spill onto the set and environments. The actors held LED light leads and small clear plastic LED shields for the same reason. While these required some work to remove in post, the team thought the realism of the contact lighting was more than worth the effort. Framestore and other facilities all worked on the range of magical effects shots, as these spanned almost all the effects sequences.

The magic is light-based, producing sparks and energy, and uses a fiery yellow/orange colour palette throughout. ‘As with any form of magic, the complexity of the effect came from how subjective the look is and how much we wanted it to look real’, states Alexis Wajsbrot, Framestore’s CG Supervisor. ‘When we started on the effect, the first concept used long-exposure photography. The reference was a streak of light from Strange and his magical whip; the sparks were also long, creating a curved flare which you can’t generate without CG. It just ended up looking too fantastical; so we kept making the effects shorter.’

Framestore

In terms of ‘casting’ visual effects companies for particular work, Framestore had impressed not only Ceretti but also Marvel with its character work on Guardians of the Galaxy, Rocket in particular. While this film had no such explicit CG characters, Ceretti knew there would be a lot of digital double work, and they also wanted Dr Strange’s cape (or ‘Capey’) to be very carefully animated.

Framestore worked on over 365 shots between October 2015 and September 2016, covering work that spanned environments, the complex ‘Mandelbrotting’ of sets, incredibly high resolution digi-doubles of key characters, the creation of the astral form and animation of the cape.

Ceretti describes the cape as being heavily influenced by Aladdin’s magic carpet. In the story the cape is like a horse in an old western, “a horse and a rider – trying to break in a horse, and then they start to work together and at first the horse is a bit crazy and the rider doesn’t know how to work with it – which is what happens at the beginning with Dr Strange, the cloak is doing its own thing in Sanctum sequence and he doesn’t know what it is trying to say, and then they start working together and then he wears the cape, and he can fly and at the end there is definitely a connection between them – we really tried to tell the arc of the story between them”.

The cloak was not rigged with cables, so there were at least three different cloaks. In addition to the main full costume cloak there was a collar section, which allowed the main cape to be animated later. On set for the action sequences they would sometimes film with a partial cloak and then re-film with no cloak at all so that it could be added in post by the animation team.

Not all the cloaks were by Framestore, but “all the big animation work with the cloak is done by Framestore,” Ceretti explains. At Framestore the VFX Supervisors were Jonathan Fawkner, Mark Wilson and Rob Duncan.

A particular challenge for the compositing team was developing and propagating the look of the Space Shard effect seen throughout the Sanctum attack sequence. “The space shards were created using a combination of CG animated trails whose motion vectors were treated in comp to give us an interesting distortion for fast moving weapons,” explained Compositing Supervisor Oliver Armstrong. “A system created using Nuke’s particle tools was also used to generate slower, flowing distortions for the more static shots.”
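As a rough illustration of the general idea of driving a distortion from motion vectors, the sketch below warps a small image using a per-pixel vector field. It is not Framestore's setup and does not use Nuke: the function name `distort`, the `strength` parameter and the nearest-neighbour sampling are all assumptions made to keep the example short.

```python
def distort(image, vectors, strength=1.0):
    """Warp a 2D image (list of rows) by a per-pixel (dx, dy) vector field.

    Each output pixel is fetched from the source at
    (x - dx * strength, y - dy * strength), clamped to the image bounds,
    using nearest-neighbour sampling. All names here are hypothetical.
    """
    height = len(image)
    width = len(image[0])
    out = []
    for y in range(height):
        row = []
        for x in range(width):
            dx, dy = vectors[y][x]
            # Look "backwards" along the motion vector to find the source pixel.
            sx = min(max(int(round(x - dx * strength)), 0), width - 1)
            sy = min(max(int(round(y - dy * strength)), 0), height - 1)
            row.append(image[sy][sx])
        out.append(row)
    return out


# A one-row image pushed one pixel to the right by a uniform vector field.
image = [[0, 1, 2, 3]]
vectors = [[(1.0, 0.0)] * 4]
shifted = distort(image, vectors)
```

In a production comp the vectors would come from the rendered CG trails and the lookup would be filtered rather than nearest-neighbour; the sketch only shows how a vector pass can be reused as a displacement map.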

Mandelbrotting the set

The Mandelbrot sets within the film are dream-like, kaleidoscopic interior shots which fold the environments, pulling them apart and re-configuring them into complicated patterns around the characters. ‘The effect was quite difficult to nail down, as to how far we should go with it’, says Wilson. ‘Especially when our live-action characters had to be integrated within those scenes.’

Because of the quantity of movement within the whole set, nearly everything needed to be animated; even if the team didn’t strictly need to animate a prop, they had to make it available to the animators in case surrounding elements within the scene needed to move in a different way. Led by Animation Supervisor Nathan McConnell, the team introduced a new pipeline in which the animators were given a rigging tool of their own. Says McConnell, ‘We designed a new workflow to create a toolbox for the animator, incorporating all of the levels of movement and pivots. The animators were able to rig everything themselves, and had the power to duplicate the geometry if needed.’

‘There’s the whole set bending and moulding, cloning and reconfiguring itself, but then there’s also the Mandelbrot pattern, which is the mathematical formula that creates these crazy patterns and the fractured world aspect to it’, adds Wilson. ‘Once we had animated all of these assets, our FX team then placed additional Mandelbrot sponge fractal patterns inside it, using Houdini to drive a proprietary Arnold procedural iso surface shader at render time to give us a mathematical organic growth that was really cool. That was all new to us!’

A shader writer at Framestore, Josh Bainbridge, was principally involved in the execution of the Mandelbrot shader. Bainbridge provided details regarding their work:

“Due to the complexity of the Mandelbrot geometry and detail required, we decided to extend Arnold to allow for intersection of arbitrary iso surfaces. What this meant was that a mathematical function could be chosen to define a shape, and then a ray marching technique called ‘near distance estimation’ was used to find the intersection along a ray. This allowed us to render a range of very complex and detailed structures (including the Mandelbrot fractal), without storing or looking up any volume data. It also seamlessly integrated the lighting and shading of the surfaces into our physically based rendering system.

Our solution involved every iso surface being confined to the space of a particular polygonal object. Attached to this object would be information on the mathematical function and its parameters to be used when rendering the surface. This allowed effects artists to change parameters for each object individually, and combine different iso surfaces in one scene. We then used the same polygonal object to gather other information such as texture parametrization, which allowed us to lookdev and light the fractals like any other asset.”
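The approach Bainbridge describes, marching along a ray in steps sized by a distance estimate and confining the surface to a bounding object, can be sketched in a few lines. This is only an illustration under stated assumptions and not Framestore's Arnold extension: a sphere stands in for the fractal's distance estimator, an axis-aligned box stands in for the polygonal container, and every function and parameter name is hypothetical.

```python
import math


def sphere_de(p, radius=1.0):
    """Distance estimate to a sphere at the origin (stand-in for a fractal estimator)."""
    return math.sqrt(p[0] ** 2 + p[1] ** 2 + p[2] ** 2) - radius


def box_de(p, half_extent):
    """Signed distance to an axis-aligned cube centred at the origin."""
    q = [abs(c) - half_extent for c in p]
    outside = math.sqrt(sum(max(c, 0.0) ** 2 for c in q))
    inside = min(max(q), 0.0)
    return outside + inside


def ray_march(origin, direction, shape_de, half_extent=2.0,
              max_steps=128, epsilon=1e-4, max_distance=100.0):
    """Return the ray parameter t of the first hit, or None on a miss.

    The shape is confined to the box by taking the max of the two distance
    estimates, which gives a conservative bound for their intersection.
    """
    length = math.sqrt(sum(c * c for c in direction))
    d = tuple(c / length for c in direction)
    t = 0.0
    for _ in range(max_steps):
        p = tuple(o + t * c for o, c in zip(origin, d))
        dist = max(shape_de(p), box_de(p, half_extent))
        if dist < epsilon:
            return t  # close enough to the surface to count as a hit
        t += dist  # safe step: nothing is nearer than the estimate
        if t > max_distance:
            return None
    return None


# A ray fired down the z axis at a unit sphere inside a 4-unit-wide box.
hit = ray_march((0.0, 0.0, -5.0), (0.0, 0.0, 1.0), sphere_de)
```

Swapping `sphere_de` for a fractal distance estimator changes the shape without touching the marcher, which mirrors the point in the quote that no volume data needs to be stored or looked up: the surface exists only as a function evaluated along each ray.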

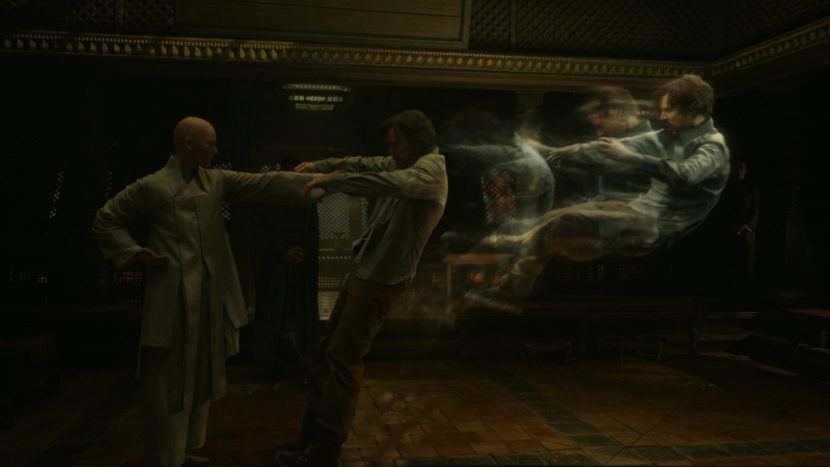

The Astral Form

One of Doctor Strange’s powers is that he can exist in another plane, what the Marvel world calls the ‘astral plane’, home of his ‘astral form’. When Strange is in his astral form he can still be in the real world, although he is not visible to humans. The idea is well established in the comics, but working out how it should look on screen was a challenge for Framestore. ‘It was one of the hardest effects we’ve had to deal with at Framestore; finding the right balance of a look that was subtle but also beautiful’, says Wajsbrot.

VFX Supervisor Jonathan Fawkner led the work on the astral projection scenes. It became clear early on that with Strange in a semi-transparent form, the background would play a crucial role. In every single shot the team therefore needed to vary the amount of transparency and the tools to make it feel as though Strange was transparent but still visible, using subtle tricks to keep the transparency coherent.

‘In the end we back-lit everything’, explains Fawkner. ‘When our characters were back-lit, you actually got a lot of negative space, which the eye perceives as contrast. It just so happens that when the character is transparent, the eye interprets both what is behind and that lack of detail at the same time. On top of that we had the FX team drive a particle system that gave the characters a slightly twinkly patina.’

It wasn’t just a case of making the characters transparent, however. Extremely high resolution CG digi-doubles were cut back-to-back with plate photography. These had to be graded so that they followed the lighting principles established for the astral form effect. Because the characters are able to fly, the team also had to find a level of gravitation that made them look naturally airborne, without straying into a zero-gravity effect. The characters also needed to illuminate our world. ‘Strange is meant to be a source of light when he is in astral form’, adds Wajsbrot. ‘We were always imitating some kind of aura around him, a volumetric emission from his clothes and skin in the same colour, which we controlled in compositing.’

At one point in the film, Strange is in his astral form and has to make himself visible to people in the real world to instruct a surgeon who is performing a complicated surgery. Framestore named it the ‘semi-astral’ state. ‘We had Strange emerge from a glassy, fractured portal’, explains Fawkner. ‘They did shoot Benedict on set but we had to adapt his body in some shots, providing CG arms and adjusting his position using our digi-double.’ This semi-astral form was less luminous and very slightly more dense: ‘It was always just a case of perception. How transparent do we make it? It was a lot of trial and error.’

‘As the film went on, we got a real feel for the world that Doctor Strange is living in, and the effects started to flow’, says Wilson. The film’s imagery and storyline belong in Marvel’s world, but the tone is unique in its use of magic and dark arts, illusion and mystery.

Lola

As well as key sequences and effects, Framestore also worked on the Crimson Bands of Cyttorak, a harness used by Strange to restrain Kaecilius. Interestingly, during this sequence a (digital) tear is shed. The tears and most of the eye work on the Zealots were done by Lola VFX.

As the Zealots are transformed by Dark Magic, the area around their eyes takes on a very distinctive look, which is based on a geode. What is remarkable is that while the effect appears to be very 3D and solid, it is all done with 2D compositing in Flame by Lola.

Makeup was applied in some shots, but most of what the audience sees in terms of shadows and highlights is detailed, hand-crafted compositing. Lola has an impressive track record with Marvel films, from ‘Skinny Steve’ to the face work on Vision.

The work was done at Lola in LA, supervised by Trent Claus.

Luma and others

Luma did the first mirror dimension in London, which sets the tone for the film, and all of the final Dormammu sequence. As already mentioned, the Dark Realm also appeared in the earlier MMT sequence. Here Luma designed the environment to link to the style of Guardians while remaining unique to this film. In the MMT there needed to be, in one shot in particular, a seamless transition from Method’s work into Luma’s work. Luma provided Method with a series of QuickTime and Maya files containing Luma’s camera. Luma’s work at this point was very much in sync with what the client wanted, so Method aimed to blend into it by extending the Luma camera backward. Method then gave back a unified camera that allowed Luma to render its sequence and have the two renders match perfectly. Luma then handed over its render for the final composite at Method. Method also provided the digital Dr Strange in this section. Once the transition was done, the remaining work was completed entirely by Luma, as those shots were stand-alone.

The only last-minute change was a grade that brought the colours of the MMT Dark Realm and the final showdown even closer together, to make sure the audience understood the link.

The Dark Realm also showed the influence of microscopic photography and tendril-like links, which were a nod to the original Marvel comics.
