Gemini Man is an action thriller film directed by Ang Lee and starring Will Smith, Mary Elizabeth Winstead, Clive Owen, and Benedict Wong. The film centers around a hitman who is targeted by a younger clone of himself. Both characters in the film are played by Will Smith, thanks to the talents of Weta Digital’s artists.

While there was a range of visual effects in the film (and other companies contributed to those VFX shots), the remarkable advance in facial work seen in Gemini Man was done entirely by Weta.

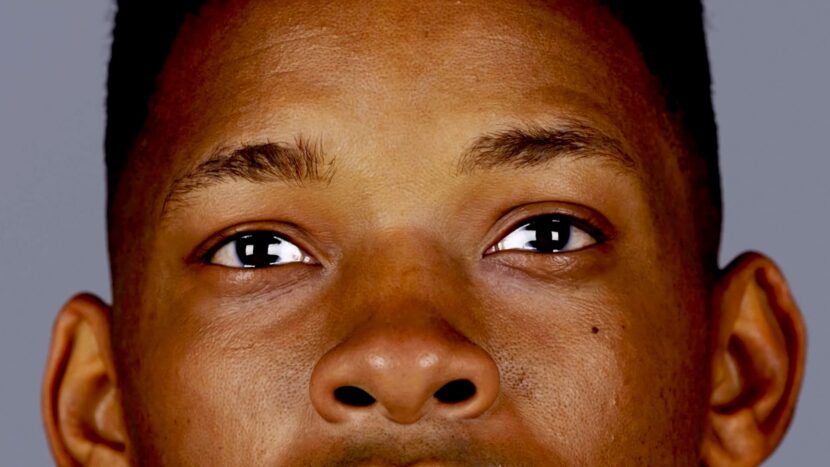

To produce the 23-year-old version of 51-year-old Will Smith, the production decided not to de-age Smith with compositing, as has been done so successfully in many Marvel films. Rather, the team decided to produce a fully digital younger Will Smith. The results represent some of the best digital human work ever done. The fidelity, rendering detail, and acting displayed by the digital character establish a new benchmark for digital humans.

The Weta team started with a USC ICT Light Stage scan of Will Smith and a series of photo sessions and turntables. Weta was not aiming to re-create Will Smith, but a younger version of Smith, referred to as Junior in the film. To achieve this, Weta first created a perfect likeness of 51-year-old Smith as a stepping stone to the 23-year-old version of the actor. The photoshoots were extensive: Weta even photographed the “backside of his teeth”, explained Guy Williams, visual effects supervisor at Weta. Williams worked with production VFX supervisor Bill Westenhofer and co-visual effects supervisor Sheldon Stopsack.

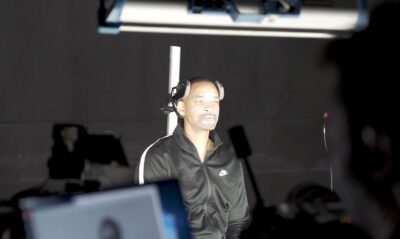

Weta photographed Will Smith on three separate occasions over the course of the film: once in early prep, again at the beginning of the shoot, and again at the end of the shoot. The team did a FACS session at the beginning of the shoot and, in addition to scanning Will Smith at USC ICT, they also scanned Chase Anthony, a young African American actor, for young skin texture reference. “Chase Anthony is a 23-year-old man that kinda has skin that looked like Will Smith when he was younger, not his facial structure, just his skin. We did a photo shoot with him and two shoots with Victor Hugo,” explained Williams. Hugo served as the on-set reference for Junior, giving Will Smith someone to act with.

The production shot with what they called an AB approach. This meant having Will Smith play the role of Henry Brogan on camera, in situ, with Victor Hugo, his acting partner, playing the role of Junior. Later the roles would be reversed. Using this approach, Will Smith had an eye line and someone to act with and react to, a partner who could give him more than just lines read back to him. “Victor Hugo is an actor and he was trying to give enough performance to anchor Will’s performance. As a result, you get this beautiful synergistic performance between the two of them”.

Later in the production, near the end of the shoot, Weta helped set up a mocap stage in Budapest. The team mocapped all the AB performances again. “We flipped the equation so that Will Smith was now playing the role of Junior and Victor was standing in as the 51-year-old Will”. The other actors, such as Benedict Wong or anyone else important in the scene, also came back and reprised their roles on the mocap stage. One of the driving factors for this production was providing the best acting environment for Will Smith. “One of the things that I said from day one to Ang was Weta’s digital performance will only ever be as good as what Will can give you”, Williams explained. Weta encouraged the production to think of the mocap not as a technical exercise but as just another day of performances. “We put a lot of effort into trying to make the mocap work as well as possible for Will and for the other actors”. The team decided against having Will shoot one role in the morning and then swap to the other character for the afternoon. Such a schedule would have been hard on the actor, and “it would have burned an hour and a half in the middle of our shooting days, with makeup and costume changes – which you can’t afford to lose”, Williams explains.

On days when they were only shooting Junior, the production would set up Will Smith with precise tracking marks on his face, and he would wear the infrared head-mounted camera rig (HMC), which was powered by a battery rig carefully strapped to the small of the actor’s back, under his clothes. The HMC used infrared light so it would not cast any visible light onto other actors, props or Junior’s costume. The infrared dots of his vest could be seen through Will’s costume. “They actually show through the clothing as discrete dots and that helped us with tracking his torso,” commented Williams.

Weta insisted that the performance was king, “and to that end, the body is part of the performance. That’s why we said we could never do a Will (Junior) head replacement onto another actor, that body would not relate to Will’s performance”. But Williams goes on to point out that if the scene had only Will performing as Junior in isolation, then that was no longer true. “All of a sudden the performance is related to the body. So now we only can replace the head”. This worked because the team was capturing the head in situ at the same time as the body: “We would mocap the shoulders so that we could do a perfect track of the head back onto the shoulders. We would call that a ‘b-side only’ shot”.

Face CGI

To build a digital 23-year-old Will Smith, the team first built a digital replica of 51-year-old Will Smith, and only when this matched the actor perfectly did they retarget his performance to the young digital character.

A FACS session is done with a range of expressions and motion. For the FACS expressions, Will Smith had white face paint and dots on his face; the white splatter paint just helped the photogrammetry have something to ‘grab’ onto. The FACS session produces a set of animated meshes of the 51-year-old Will Smith. While Weta has a system conceptually similar to the Disney Research Studio’s Medusa rig for doing temporal capture, Williams says that “you’d be surprised, we do less of the motion stuff than you would think. At the end of the day, it’s not really that helpful”. He adds, “We care more about where parts of the face go, from A to B, than how they get there. Our system is thoughtful and smart, and so we actually ‘get there’ correctly”.

On the left is a photograph from the FACS session. For the expressions to be remapped to a young Will Smith, the Weta team had to make a detailed animated face for Junior with the correct skin texture.

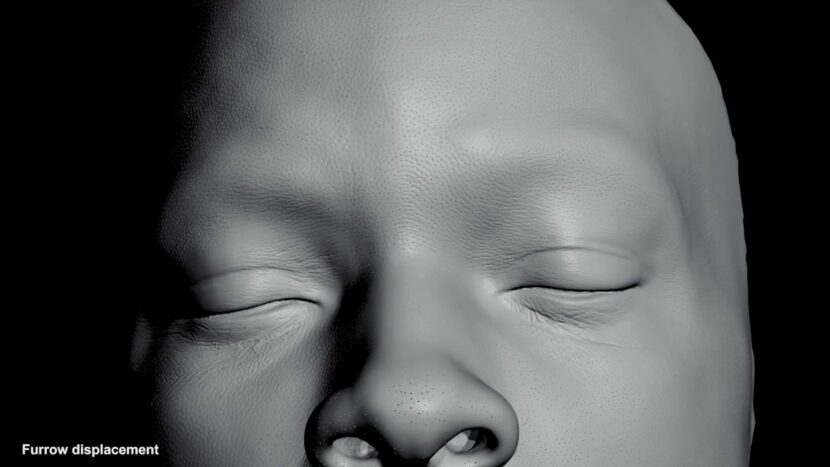

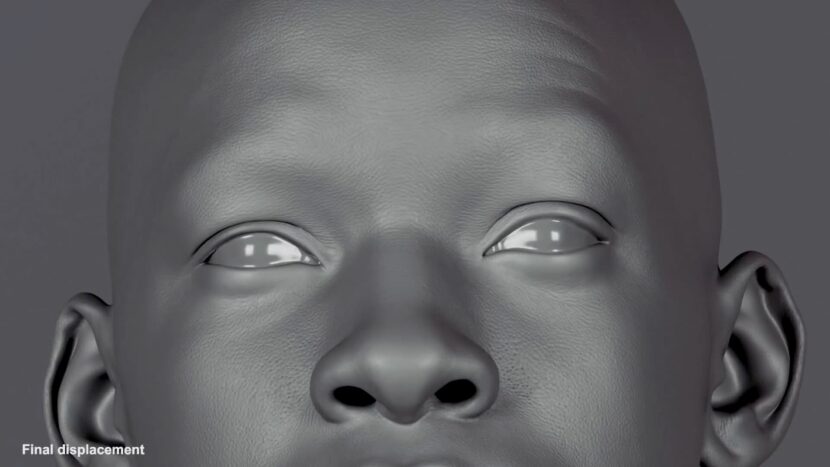

While there was scanned skin reference, the team found a new and inventive way to produce believable skin texture at the pore level, to use once the animation was approved.

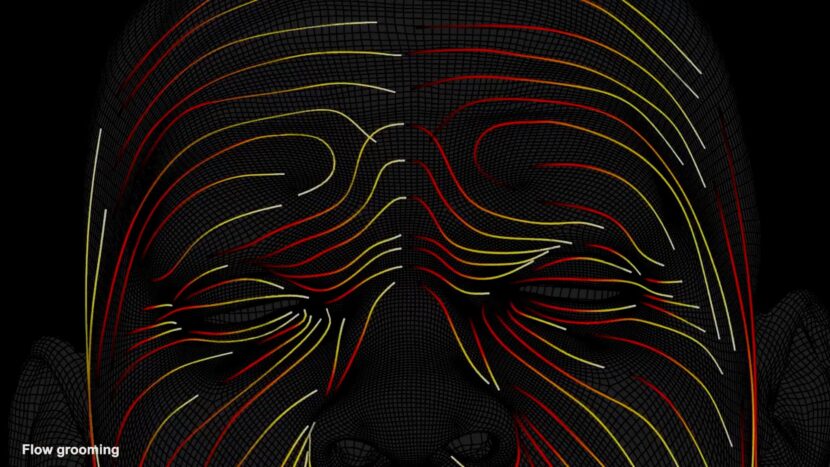

“While we sampled skin textures, one of our shader writers came up with this idea of growing the pores of Junior’s skin. He basically came up with this very complex rule set for growing pores on a face,” comments Williams, adding, “we developed a flow field that defined the flow of the young actor’s skin. Then it creates the connections between the pore sites. So you actually get little elliptical football-shaped pores”.

This new approach was important as it no longer assumed a flat 2D UV space that might be derived from a latex mask scanned from an actor’s skin. It actually grows the pores in three dimensions, and not two. “This gave us the best looking face skin that we’ve ever seen” says Williams.

In these images of Junior, the pore sites are represented with dots (above) and the lines between the pore sites create the wrinkles between the pores (see below). “You’ll notice that it biases the heavier lines along the flow direction”, says Williams. “You actually can see the flow direction in the wrinkle pass. The heavier lines are deeper, so the football shapes come from the heavier lines”. But he adds that the smaller lines are still important “because your face can fold in multiple ways.”
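The flow-biased linking Williams describes can be illustrated with a toy sketch. This is not Weta’s rule set, which has not been published; the function names, the constant flow field, the neighbour radius, and the weighting formula are all assumptions made purely for illustration. The sketch links nearby pore sites in 2D and gives heavier weight to links that run along a local flow direction, while keeping lighter cross-flow links.

```python
import math

def flow_direction(x, y):
    """Hypothetical flow field: a constant unit vector along the x axis."""
    return (1.0, 0.0)

def link_weight(a, b, flow=flow_direction):
    """Weight of the wrinkle line between pore sites a and b.

    Returns a value in [0.2, 1.0]: 1.0 when the link runs along the flow
    direction, 0.2 when it runs across it. The 0.2 floor (an assumed
    value) keeps cross-flow lines present, since a face can fold in
    more than one direction.
    """
    dx, dy = b[0] - a[0], b[1] - a[1]
    length = math.hypot(dx, dy)
    if length == 0.0:
        raise ValueError("pore sites must be distinct")
    # Sample the flow field at the midpoint of the link.
    fx, fy = flow((a[0] + b[0]) / 2, (a[1] + b[1]) / 2)
    alignment = abs(dx * fx + dy * fy) / length  # |cos(angle to flow)|
    return 0.2 + 0.8 * alignment

def connect_pores(sites, radius):
    """Link every pair of sites no farther apart than radius.

    Returns a list of (i, j, weight) tuples. Brute force, which is fine
    for a sketch but not for a million-site mesh.
    """
    links = []
    for i in range(len(sites)):
        for j in range(i + 1, len(sites)):
            if math.dist(sites[i], sites[j]) <= radius:
                links.append((i, j, link_weight(sites[i], sites[j])))
    return links

sites = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
links = connect_pores(sites, radius=1.1)
```

With the flow running along x, the link between the first two sites gets the heaviest weight and the link running across the flow gets the lightest, which is the kind of directional bias visible in the wrinkle pass.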

“The fact that we have this million+ polygon mesh of all the pores on a person’s face at high resolution is great, but then we decided we could go even a step further,” says Williams. “We can run that through a tetrahedral simulation”. “And as the face moves, contracts, compresses, and stretches, the pores buckle along the proper flow lines, because there’s a ‘grain’ built into the face. This means that all the micro wrinkles that occur as Junior’s pores start to collapse are being done correctly”.

While this sequence shows the build-up of the face, the animation is transferred first and the pores are done after the animation is approved. The facial movement is defined from the FACS session, which goes into Weta’s advanced facial solver. The Weta face solver is rarely discussed in detail, but it is thought to involve machine learning. This then drives the facial puppet. “The FACS session tells you how every muscle moves on the face, but it also tells you how the skin, with its differing densities of fascia, reflects that movement”, says Williams.

Digital Eyes

Weta also advanced their already complex and impressive digital eye pipeline. “We studied Will’s eyes to a pedantic level,” joked Williams. Weta took a lot of macro photographs of people’s eyes. “We had six people sit in a chair for a day, and we were just orbiting around them, taking as many photos of their eye, pulling the lids around etc”. Williams wanted to make sure there was life in the eyes and that the digital eyes did not look like a doll’s eyes. “CG eyes always seem a little bit like a doll’s eyes. I wondered why that was?” he asks. After extensive research, the team decided that it had to do with the way the eyelid and the eye interact with each other. “There’s a merging of the two and if you don’t treat that right, and get the science right, then the eyes are always going to look like they are from a doll”.

Weta changed their assumptions about an eyelid and the way it ‘merges’ with the surface of the eye. Williams points out that the way it contacts the surface of the eye, causes the lid to almost act like a single surface with the eyeball. “Obviously the two are separate surfaces but we found that the backside of the eyelid is actually very soft. As such it sort of fillets up onto the eye. It squishes the eye and pushes out against the eyelid. The eyelid thus bulges up a little bit and you get this very small little ‘fillet’. That’s hugely important”.

Weta’s complex existing approach already modeled the cornea and the corneal bulge of the eye, along with the sclera “and the iris, which are kind of obvious, but now we also matched the choroid”, explained Williams. The choroid, also known as the choroid coat, is the vascular layer of the eye, containing connective tissues, that lies between the retina and the sclera. The human choroid is thickest at the far extreme rear of the eye (at 0.2 mm), while in the outlying areas it narrows to 0.1 mm. “The choroid is the inside surface of the eye”, Williams explains. “If you were to cut a person’s eye open, you’d see that there’s this black material on the inside of the eyeball. It is a sort of an inky black thin little film that sits inside your eye to keep your eye from seeing a lot of white reflection that might otherwise just bounce around on the white surface of the inside of your eye”. While common wisdom in simple medical texts describes the choroid coat as black, Weta discovered something slightly different. “The trick is the choroid is actually not black. It’s a very, very dark blue. The reason that this is important is that as your sclera (the white of the eye) gets thinner, the whites of your eyes go a little bit bluer”. Different cultures and different races all have different melanin contents. Melanin is a dark brown to black pigment occurring in the hair, skin, and also the iris of the eye. “We had to model all this stuff to get his eyes right,” says Williams.

Another important step was to adjust the eyeball shape. “We abandoned ‘spherical’ eyes on this show,” Williams continues. Previously, Weta typically assumed spherical eyes. “But we realized that when you push an eye into an eye socket, the spherical shape deforms to fit the eye socket and the eye socket is not perfectly spherical”. This means that the eyeball pushes out at the corners, which helps fill in the corners of someone’s eyes. “It’s all these little things that we found out about that helped the eyes sit into the head better”.

The team also modeled the conjunctiva, which is the thin film that sits on top of the sclera. The conjunctiva goes all the way up to the edge of the corneal bulge and connects. It is one of the membranes that helps keep one’s eyes moist. It is part of the reason that blood vessels in the eye are actually on two layers and not one. “The little blood vessels on their eye that you can see produce parallax with each other, which wouldn’t make sense if they were a single surface.” While this fact had been somewhat widely discussed in CGI, Weta found that the slight yellowing they saw in the corner of Will Smith’s eyes wasn’t occurring on the sclera. It was happening on the conjunctiva. As this gets thicker around the edge of the eye, one can start to perceive a color influence of this relatively clear material. “The reason that’s important is if you map it directly onto the eye, then as the eye turns one direction, you would see it slide with the eye. But in reality, it doesn’t slide with the eye. It stays in the corner of the eye,” Williams points out. Only when these complex and seemingly tiny features were addressed did the team feel like “the eyes felt like they were alive and sitting inside his head”.

Digital Teeth

As good as the digital Junior looked, the team still kept questioning itself to improve the final image. Early on, the team decided that while the teeth were modeled to match Will Smith’s teeth based on impressions and photography, they still did not look correct. Some of the photographs suggested that the color was not quite right. “We basically had Will open his mouth as wide as it could. We then took a Maglite and just rolled it from right to left and took 30 photos of his teeth so we could see how light transmitted around the teeth,” explained Williams. “We realized that there was this interesting color issue! Basically your teeth go from yellow to blue-ish. And so we tried to figure out why would your teeth do that?”

It turns out, as no doubt all dentists know, that teeth are made of two layers: the enamel and the dentine. Prior to Gemini Man, Weta had complex teeth models, but they had never before modeled the internals of an actor’s teeth!

Weta researched and discovered that the dentine inside a tooth is quite yellow and the enamel is blue. “And they are very transmissive materials,” explains Williams. “Their combination averages towards white. When you look at people’s teeth, you kind of ‘see’ white, but if you’re looking up at the thinnest part of the top of the tooth, there’s not very much dentine reaching up into there, as it is very thin. So you’re seeing more enamel than dentine. Thus it tilts towards blue. Towards the center of the tooth, there’s a lot more dentine than enamel so it tilts towards yellow”. It wasn’t until Weta modeled the two layers of the actor’s teeth and gave them the proper materials that Weta felt like they started to get a suitably complex relationship of color that looked realistic in the closeups.
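The yellow-to-blue shift Williams describes can be illustrated with a very rough thickness-weighted blend. This is only a toy model, not how a production renderer handles transmissive materials (that would involve simulating light transport through both layers); the RGB tints and thicknesses below are invented values chosen to make the effect visible.

```python
# Hypothetical linear RGB tints for each layer (assumed, not measured).
ENAMEL_TINT = (0.85, 0.90, 1.00)   # slightly blue
DENTINE_TINT = (1.00, 0.92, 0.70)  # slightly yellow

def tooth_tint(enamel_mm, dentine_mm):
    """Blend the two layer tints by their share of the total thickness."""
    total = enamel_mm + dentine_mm
    if total <= 0:
        raise ValueError("total thickness must be positive")
    d = dentine_mm / total  # fraction of the light path inside dentine
    return tuple((1 - d) * e + d * t
                 for e, t in zip(ENAMEL_TINT, DENTINE_TINT))

# Near the biting edge there is little dentine; mid-tooth there is a lot.
tip = tooth_tint(enamel_mm=0.9, dentine_mm=0.1)
center = tooth_tint(enamel_mm=0.5, dentine_mm=1.5)
```

In this sketch `tip` has more blue than red and `center` has more red than blue, matching the direction of the shift described above, even though both read as roughly white.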

Lighting

Every light on set was scanned and captured to get the spectral information needed for Weta’s spectral Manuka pipeline. By using filters, the team did a double-bracketed set of HDRs, extending the standard 12 stops of light range in their HDRs to closer to 24 stops.
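To put those numbers in perspective: each stop is a doubling of light, so the range grows exponentially with the stop count. The short sketch below works through that arithmetic. The helper names are made up for illustration, and the assumption that the second bracket set is shifted by a filter of a given strength with no gap between the two sets is ours, not a description of Weta’s capture procedure.

```python
def stops_to_contrast_ratio(stops):
    """Each stop doubles the light, so n stops span a 2**n : 1 ratio."""
    return 2.0 ** stops

def combined_range_stops(bracket_stops, filter_stops):
    """Range covered by two bracket sets, the second shot through a filter.

    Assumes each set spans bracket_stops and the filter shifts the second
    set by filter_stops. The shift must not exceed the span of one set,
    otherwise there would be a gap between the two sets.
    """
    if not 0 <= filter_stops <= bracket_stops:
        raise ValueError("filter shift must be between 0 and bracket_stops")
    return bracket_stops + filter_stops

single = stops_to_contrast_ratio(12)                        # 4096.0
double = stops_to_contrast_ratio(combined_range_stops(12, 12))  # 16777216.0
```

Going from 12 to 24 stops therefore multiplies the representable brightness ratio by another factor of 4096; any overlap between the two bracket sets would reduce the total, which may be why the figure is given as “closer to 24”.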

The team did not do a spectral sampling session in the ICT Light Stage as “we actually build the face out of melanin components. Our renderer basically says, when light enters the skin, where’s the density of melanin and what are the characteristics of the melanin? And then it does the spectral math against the melanin as if it’s contributing a pigment as opposed to just a color map”. This meant that Weta didn’t have a color map of Will’s face that included spectral information; rather, they had density maps and property maps of melanin that resulted in the facial rendering we see in the film. This was coupled with hemoglobin redistribution, so the team could effectively increase the blood flow to Junior’s face when he confronts his father. “Not only did that affect the overall color of the face, but we did the nose and the eyes even more heavily,” he explains. “We treated them with even more blood so that you start to see an effect as he is tearing up. We had the same kind of setup for his eyes”. The team made the blood vessels more apparent and this actually changed the apparent color of the eyes.
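The difference between a pigment density and a color map can be sketched with the Beer-Lambert law: instead of storing an RGB value, the renderer stores how much pigment is present and computes how much light survives at each wavelength. The snippet below is a minimal illustration, not Manuka’s skin model. The power-law absorption curve is a commonly quoted approximation for melanosome absorption and is used here as an assumption, as are the layer thickness and the melanin fractions.

```python
import math

def melanin_absorption_per_cm(wavelength_nm):
    """Assumed power-law fit for melanosome absorption, in 1/cm."""
    return 6.6e11 * wavelength_nm ** -3.33

def transmittance(wavelength_nm, melanin_fraction, path_cm):
    """Beer-Lambert transmittance through a pigmented layer.

    melanin_fraction is the volume fraction of the layer occupied by
    melanin-bearing material; path_cm is the distance travelled.
    """
    mu = melanin_absorption_per_cm(wavelength_nm) * melanin_fraction
    return math.exp(-mu * path_cm)

WAVELENGTHS_NM = (450, 550, 650)  # rough blue, green, red samples

def layer_tint(melanin_fraction, path_cm=0.006):
    """Per-wavelength transmittance for an assumed 60 micron layer."""
    return [transmittance(w, melanin_fraction, path_cm)
            for w in WAVELENGTHS_NM]

light = layer_tint(0.03)  # low melanin density
dark = layer_tint(0.30)   # high melanin density
```

Because absorption falls with wavelength, blue is attenuated more than red at any density, and raising the density darkens all three samples without anyone painting a new color; the hue emerges from the pigment math rather than from a stored map.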

Weta Digital also developed a new technology called Deep Shapes, which allowed their animators to essentially change the reading of an expression from a deep facial layer and see the effect on the top level of the skin. “The concept is that as the muscles move in the face, they do so at different depths. In other words, if a muscle’s effect is deep inside the fascia, then you see a sort of broad effect change on the surface of the face”.

Gemini Man may not be finding favor with some critics, but the facial technology and digital character work are remarkable, and the film has significantly advanced the level of realism for fully digital characters.