Lately at fxguide we’ve been investigating facial and performance motion capture in-depth. So when we heard about Faceware Technologies’ GoPro Headcam setup, we wanted to take a closer look. Faceware sent us their latest GoPro HERO4 kit. Here’s our road test.

Faceware is actually a collection of both software and hardware. In fact, the company not only sells the process but also offers services for hire such as rigging and mocap in general. For this test drive we were looking at using the system in a simple previs style application, but the process has been used very successfully at the high end. Of the top ten games of last year, Faceware was used in four, including Destiny, Grand Theft Auto 5, NBA 2K15 and, most notably, Call of Duty: Advanced Warfare.

https://player.vimeo.com/video/121111740

– Watch Faceware’s GDC 2015 demo reel.

This last title is well known for its advanced rendering of a digital Kevin Spacey by DIGIC Pictures for game studio Sledgehammer and Activision. Spacey plays the role of Irons, chief executive of the Atlas Corporation, a powerful private military corporation with ambitions to restore order to the world in 2054 after a devastating attack that has wiped out much of the traditional forces.

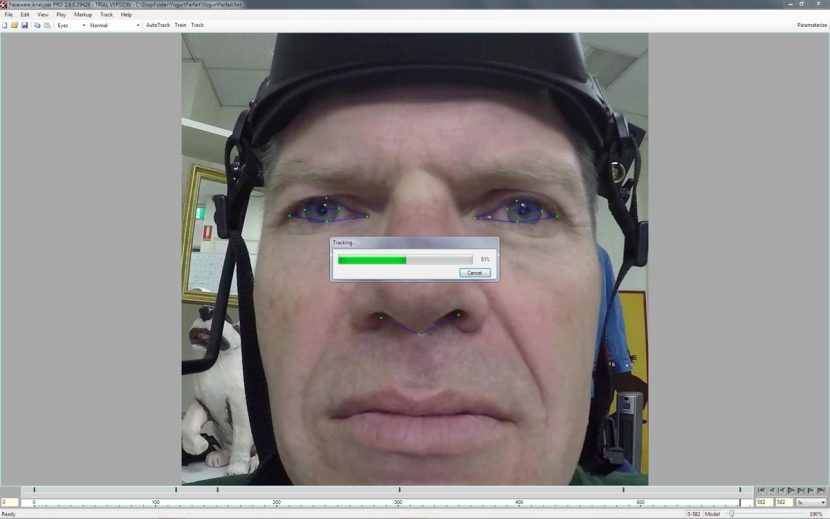

Of course, these high end games require extensive pipelines. For many users, however, a tool such as Faceware is ideal in providing 80% of what is needed, and thus enables rapid prototyping of ideas and mock-ups. The system at the mock-up level is only tracking major parts of the face, such as the eyebrows, mouth etc. There is an Analyzer that accepts clips captured with the company’s head rig (using a GoPro HERO4) or via a locked off camera. The idea is to bring in footage and then either manually or automatically run a tracking program. Actually, there is a third option which is a combination of manual and automatic, and this is what you are most likely to end up doing. The system can learn from a series of manual adjustments to the key feature tracking markers to improve the overall track. The primary tracks are the:

- eyebrows

- eyes

- nose

- mouth

But additionally manual tracking can be done for the chin or jaw and the cheeks (really the top part of the cheek bone). We tested the autotracker on both Caucasian and Asian faces and both worked well, but the weakest track was the eyebrows, no doubt due to the hairy/ill defined nature of eyebrows. But with some manual adjustments and some cut and paste we quickly got the higher frequency jitter removed and the tracks dramatically improved.
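To illustrate the kind of high frequency jitter we were cleaning up, here is a minimal sketch of a median filter applied to a single landmark coordinate over time. This is not how the Analyzer works internally, and it is not what we did by hand; the function name `smooth_track`, the `window` parameter and the sample eyebrow values are all hypothetical, chosen only to show the idea on exported per-frame data.

```python
from statistics import median


def smooth_track(values, window=5):
    """Return a median-filtered copy of a 1D landmark track.

    `values` is a list of per-frame coordinates, for example the vertical
    position of one eyebrow landmark. `window` must be a positive odd
    integer; near the ends of the clip the window simply shrinks.
    """
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd integer")
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        start = max(0, i - half)
        end = min(len(values), i + half + 1)
        smoothed.append(median(values[start:end]))
    return smoothed


# Hypothetical eyebrow track: a slow raise with a one-frame spike at frame 3.
track = [10.0, 10.5, 11.0, 18.0, 12.0, 12.5, 13.0]
smoothed = smooth_track(track, window=3)
print(smoothed)
```

A median filter is used here rather than a simple average because it removes isolated spikes without dragging the neighbouring frames towards them, which matters when a landmark briefly jumps off a poorly defined edge.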

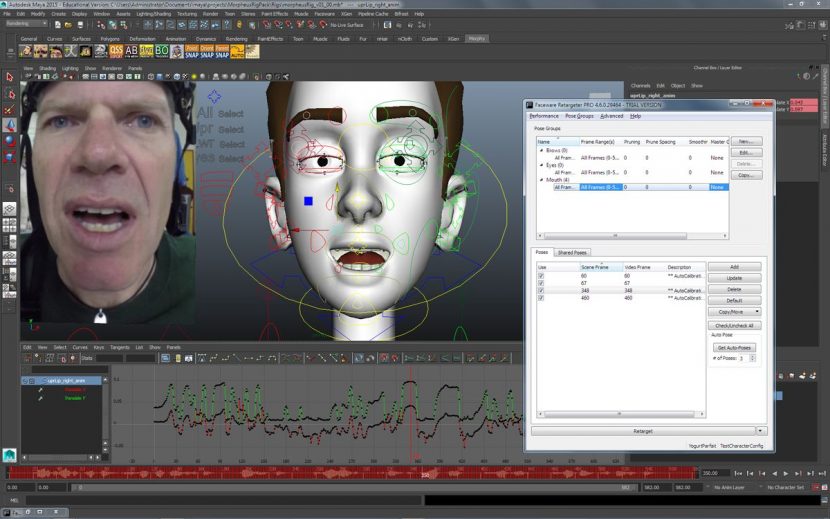

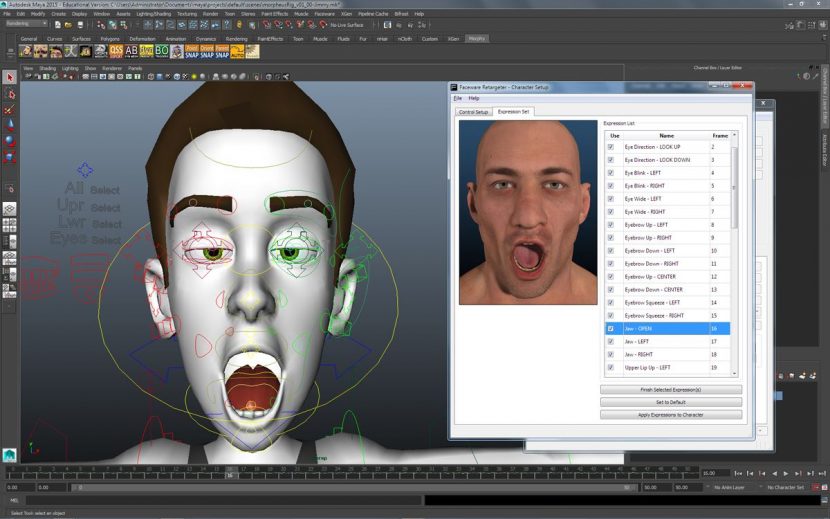

Once you have the track solved, the process of applying the data to your target face begins. The software is structured to allow you to align your subject’s data with your model. This has to be done only once, and it does take some time as you match the rig to a set of 50 example expressions. For example, you need to move your rig’s eyes left to match the eyes left keyframe, then adjust eyes right for the next keyframe etc. This range of facial poses gives the character their range of expressive emotion. If you exaggerate the face during this alignment calibration then of course when the system ‘sees’ an eyes left in the tracked data, that exaggerated pose is exactly what you will get.

Most previs would be more concerned with having the eyes, forehead and lip sync strong enough to have life but not so strong that they seem distracting. In our test, once this was combined with the data, our central concern was getting the lips to close enough to avoid that gamey, vacant look characters often get.

If you are just getting started there are free rigs you can download to re-target with and practice with. Faceware even has a service to help with rigging, but this is a bespoke service we did not test drive.

We were using the latest ReTargeter PRO 4.6 and it works well. Having matched the 50 keyframes, and thus set the rig up, you can start to look at actual data. We imported our track and it effortlessly animated the rigged character. Eye line is very likely to not be correct, of course, as your character will need to look in their scene and make the correct eyelines, and this will need to be adjusted – as anything can be, later in the process.

fxguide’s review

Watch our demo.

The head rig

We reviewed the newest Faceware setup, which utilizes the GoPro HERO4 camera. Since the camera now has a touchscreen on the back, this aids in setting up the head rig very quickly. Also, the rig arrived in a convenient Pelican-like hard case which would be easy to bring on set and transport.

With regards to the hardware we found the head rig was solid and comfortable, but you will want to combine the head data with body data. One of the nice things about not using a head mounted rig is that you get head and shoulder movement from the source camera, whereas the helmet camera is by definition locked to the subject. A second consideration is the correct height of the camera relative to the face. This takes a little getting used to and experimentation, and much more serious detailed work requires care, as the jaw and lower lip can be forward or back along the line of the camera and look very similar, while in a side view they are clearly quite different. But overall it’s best to place the camera where it can see the mouth more front on; otherwise it would be hard to define the edge of the inner lower lip, and this is a key tracking aspect.

The helmet is adjustable in terms of its width at the top and back and has extra foam padding. There are three mounting arms: left and right side camera bars and a hoop bar. We found the side camera bars a little short for positioning the camera at the center of the face, unless one only pushes the camera bar partially through the bracket.

The GoPro HERO4 camera cage is a good design with a very snug fit: tight enough that the camera doesn’t rattle, loose enough to get the camera in and out of the cage easily. Adjusting the camera position up/down is easy, but sideways is hard with the side camera bar. But once you have found the right position, the camera is safe and stable. There is a helmet strap that could go around the performer’s chin, but it is too tight for a performance and would restrict jaw movement. It is really just a safety strap in case there is a fight scene or some other rapid movement. If the rig is so loose that the camera has give in it, then you need to adjust the helmet rather than rely on the strap. Overall, it’s quite comfortable to wear. Any fast head turn will cause the camera to sway a little but shouldn’t cause any problem for tracking.

Software

To use the system you need to be on a PC, not a Mac, and before installation you will need to install the Matlab runtime. The software licensing is through Codemeter, which is part of the Faceware software install. It required the latest update to run when we were testing.

Analyzer

The Analyzer is the tracking software – it tracks the face and exports the data to a format the Retargeter can use to drive a pre-rigged face in Maya or 3ds Max. The Analyzer tracks three features into three groups of data: the eyebrows, the eyes (pupil movement and eyelid movement) and the mouth (lip movement). If the team get the chin and cheeks to work with AutoTrack (which is part of the Analyzer software) then the solve would be amazing. At the moment the AutoTrack feature works great. It will get you most of the way there if the video is shot properly, and just getting say 80% of the base work done is a huge plus. If you need to correct or add tracks by hand, the manual tracking is also fairly simple. There are 51 tracking points, which are called landmarks. You can manually place them on the face at key poses and ‘train’ the software to produce a better track.

The eyebrows are the hardest to track, depending on the hair color of the person. There is quite a bit of jitter in the tracks we did, so depending on your actor it may help to draw a line along the edge of the eyebrow with makeup. You should export a neutral frame to help with the retargeting. This basically involves finding a frame in the video where the muscles are most relaxed. Once we knew this, we established a neutral pose at the start of each session with the actor.

Once everything is tracked, the data needs to be parametrized so the track can be used in retargeting. Installation is fairly straightforward, though we did get a license error. But it was resolved after updating Codemeter, as mentioned above.

Retargeter

This runs as a plugin for either Maya or 3ds Max. Before you can retarget the tracking data to a 3D face, the Retargeter needs to know how the 3D face is controlled by the rigging.

The Retargeter needs to ‘learn’ how the face is posed by these controls. This can be achieved by manually posing the face to match the 50 or so pre-determined poses in the expression set. You only need to do that once for each face model and the expression set can be re-used for the same character on different shots.
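To make the idea of ‘learning’ from example poses more concrete, here is a minimal sketch of example-based retargeting in general. It is our own illustration and not Faceware’s actual solver, which we have no visibility into. Each example pairs a tracked feature vector with the rig control values the artist set for that expression, and a new frame is mapped to a blend of those controls weighted by inverse distance. The function `retarget_frame`, the control name `eye_lr` and the numbers are all hypothetical.

```python
import math


def retarget_frame(features, examples, eps=1e-9):
    """Blend rig control values from example poses.

    `features` is the tracked feature vector for one frame.
    `examples` is a list of (feature_vector, controls) pairs, where
    `controls` maps a rig control name to the value posed by the artist.
    All examples are assumed to use the same control names.
    Weights fall off with the squared distance to each example.
    """
    weights = []
    for example_features, controls in examples:
        dist = math.dist(features, example_features)
        if dist < eps:
            # The frame matches an example exactly: return that pose as posed.
            return dict(controls)
        weights.append(1.0 / dist ** 2)

    total = sum(weights)
    blended = {}
    for weight, (_, controls) in zip(weights, examples):
        for name, value in controls.items():
            blended[name] = blended.get(name, 0.0) + (weight / total) * value
    return blended


# Hypothetical one-dimensional feature: horizontal pupil offset.
examples = [
    ([0.0], {"eye_lr": 0.0}),    # neutral
    ([-1.0], {"eye_lr": -1.0}),  # eyes left
    ([1.0], {"eye_lr": 1.0}),    # eyes right
]
exact = retarget_frame([1.0], examples)
partial = retarget_frame([0.5], examples)
print(exact, partial)
```

The sketch also shows why the posing step matters so much: if the ‘eyes right’ example had been posed with an exaggerated control value, every frame near that expression would inherit the exaggeration.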

Now it’s time to bring in the previously exported tracking data from Analyzer. You can also choose to bring in the original video clip to compare with the animated face. After the tracking data is brought in, it can be retargeted to the face through the Retargeter’s solve, either automatically or with manual tuning. Once retargeted, the 3D face should move in sync with the original video and audio. You can play them side by side to compare. If at some point in the video you find expressions do not match, you can always add specific poses. Retargeter can auto select poses for you or you can add your own. You can also add your own keyframes on top of the mocap data. For example, the movement of the tongue can’t be mocapped, so you’d have to animate it by hand. Also, the lips can move independently of the jaw, and the 26-point capture around the lips is not going to give you that information.

Overall it’s quite easy to use and very efficient. It should be extremely useful for projects where you want lip synced characters, and having Faceware will save you a lot of time. But it is not perfect. Since the Analyzer is not tracking the entire face, the final result will vary depending on how the model is rigged. But it does give you a great starting point for facial animation. Overall it is a great and very inexpensive tool for an animation pipeline and we would recommend the rig and software.

You can find out more about Faceware at their website.