It’s amazing where inspiration can come from. When axisVFX was called upon to help create a race of two-dimensional-like creatures called the Boneless in the Doctor Who ‘Flatline’ episode, they drew inspiration from, surprisingly, glitchy and failed 3D printed objects as well as sea slugs. fxguide talks to axisVFX visual effects supervisor Grant Hewlett, creative director Stuart Aitken and lead effects artist Joe Thornley-Heard about their work for the BBC show.

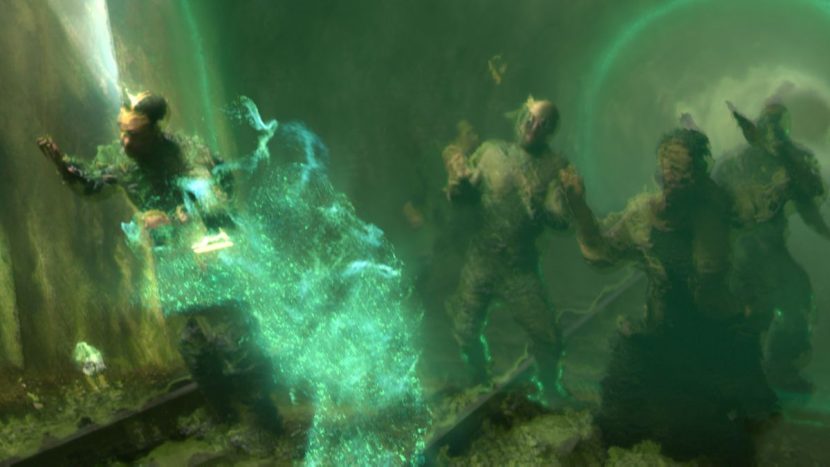

The Boneless first appear as purely two-dimensional creations that distort the surfaces they move over, and only later become three-dimensional beings. Each form required a different visual effects approach. Here’s a look at how each Boneless effect was created.

The 3D Boneless

Dubbed ‘distortion zombies’, the Boneless in their human-like forms went through several design incarnations while axisVFX collaborated with director Douglas Mackinnon and VFX art director Ste Dalton on the look. “One of the early bits of reference we looked at was 3D printing that had gone a bit wrong,” relates axisVFX visual effects supervisor Grant Hewlett. “It was tied in to the idea that they would be permanently connected to the place they were born – so it suited the story that they couldn’t just suddenly chase after people and get to them very quickly. So they had to constantly re-draw themselves, but they weren’t very good at that so that’s where the distortion and pieces missing came from.”

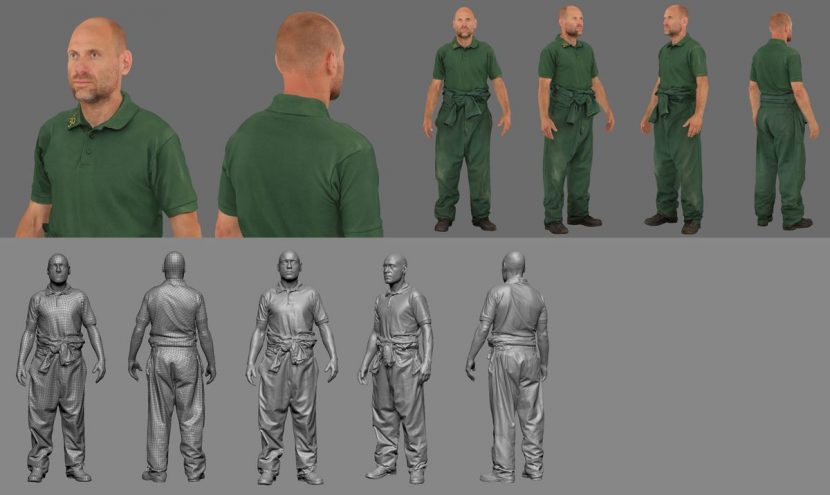

An early proof of concept was also devised to check that the visual effects team could deliver the zombies on the necessary TV schedule. “We had some mocap left over from a previous game project at Axis,” says Hewlett, “and we put that on a scanned character and ran it through the Houdini pipe. We shot that through a few different cameras and proposed that as generally how it was going to look.”

axisVFX effects lead Joe Thornley-Heard devised the Houdini workflow used to realize the creatures, beginning with an understanding of how 3D printers produce those glitchy pieces. “I think having such good reference of those broken 3D printing models and also having a good idea of how those 3D models are created really helped,” says Thornley-Heard. “It’s got a nozzle that lays out liquid plastic in a spiral, so I was able to take a mesh in a rest pose for the character, and scatter points on it and add a curve up to the height of the model. Then I used the OpenVDB toolset in Houdini to go from that rest pose mesh to the next step. It’s really easy to stick points to the surface, move points around using that VDB representation of the mesh, and then it’s also really good for compositing.”
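To picture the spiral that Thornley-Heard describes, here is a minimal sketch in plain Python rather than Houdini. It only generates a helix that climbs to the height of a model, roughly the way a printer nozzle lays down material layer by layer. The function name `spiral_path` and all of its parameters are hypothetical, chosen for illustration, and nothing here reflects the actual axisVFX setup.

```python
import math

def spiral_path(radius, height, layer_height, points_per_turn=32):
    """Return (x, y, z) points on a helix that rises `layer_height` per turn.

    Illustrative stand-in for a printer nozzle path; not the production setup.
    """
    turns = height / layer_height
    count = int(round(turns * points_per_turn))
    points = []
    for i in range(count + 1):
        t = i / points_per_turn              # turns completed so far
        angle = 2.0 * math.pi * t
        points.append((radius * math.cos(angle),
                       radius * math.sin(angle),
                       t * layer_height))
    return points

# A curve wrapped around a figure roughly 1.8 units tall
path = spiral_path(radius=0.5, height=1.8, layer_height=0.1)
```

In a real setup a curve like this would presumably be conformed to the character mesh instead of a fixed radius; the constant radius here is only there to keep the example self-contained.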

Here, the idea was to turn the curves into surfaces and combine them into the original mesh. “Because it’s all just volume data I could apply a sort of ‘iDistort’ in 3D to the level set using a sort of cellular noise as a look-up to do the actual glitching distortion,” explains Thornley-Heard. “We put some animation through that and you’ll get all of the head jumping a few units to the left or the right on a particular frame. So the combination of adding that spiraling curve as a texture meant I could take that rest pose mesh, stick it all to the animated character and output a hi-res level set mesh with UV attributes for shading for rendering and lighting.”
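The ‘iDistort in 3D’ idea can be sketched outside Houdini as well. In the hedged example below, a level set is just a signed distance function, and the glitch comes from sampling it at a position shifted sideways by an offset that is constant within each block of space and changes per frame. The blocky grid hash is a simple stand-in for cellular noise, the sphere is a stand-in for the character, and every name and parameter (`cell_offset`, `glitched_sdf`, `cell_size`, `amount`, `chance`) is an assumption made for illustration.

```python
import math
import random

def sphere_sdf(p, radius=1.0):
    """Signed distance from point p to a sphere centred on the origin."""
    return math.sqrt(p[0] ** 2 + p[1] ** 2 + p[2] ** 2) - radius

def cell_offset(p, frame, cell_size=0.5, amount=0.3, chance=0.25):
    """Sideways jump shared by every point in the same cell on this frame."""
    cell = tuple(math.floor(c / cell_size) for c in p)
    rng = random.Random(repr((cell, frame)))  # same cell + frame -> same value
    if rng.random() >= chance:
        return 0.0                            # most cells stay put
    return rng.uniform(-amount, amount)

def glitched_sdf(p, frame, **noise_args):
    """Sample the level set at a position displaced by the per-cell offset."""
    dx = cell_offset(p, frame, **noise_args)
    return sphere_sdf((p[0] + dx, p[1], p[2]))
```

Because the offset is looked up per cell and per frame, whole chunks of the surface jump left or right together on some frames, which is the behavior the quote describes for the head.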

To capture the likeness of the actors themselves, axisVFX had initially considered a traditional 3D character approach of cyberscans and modeling, but ultimately decided it would be too time-consuming and expensive. “We thought,” says axisVFX creative director Stuart Aitken, “given we were using this kind of aesthetic anyway, and we knew they were going to be distorted, we didn’t want them to look like CG characters.”

Instead, the actors were scanned at Ten24 with a handheld Artec scanner and simultaneously photographed by a camera array to capture textures that could be applied with photogrammetry techniques. “What we got out of that,” says Aitken, “was a pretty nice 3D scan that we needed to clean up a little, and photo ref. The guys did an awesome job of sticking these two things together. You don’t get the textures aligned to the scan – you literally have to take the photographs and re-project them onto the mesh.” A motion capture shoot also took place, with Mackinnon able to direct the performances for the zombies himself. axisVFX then followed a traditional lighting pipeline led by CG Supervisor Sergio Caires, with final compositing for the shots carried out in NUKE and overseen by VFX Supervisor Howard Jones.

Boneless in 2D

A similar distorted aesthetic was behind the 2D look for the Boneless beings as they first emerge and literally drag the textures of the world around them. This time, sea slugs became the unlikely source of inspiration. “We spent a long time working out how these creatures would drag textures before they became 3D,” outlines Hewlett. “It was really hard to do that, and not have traditional cues from specular highlights and shadows to be able to get that kind of movement.”

The Boneless are seen first as wall murals, shots accomplished by filming actors turning around in various ways, and layering their movements in Photoshop. For one shot zooming close into a line on a wall, axisVFX had to re-create the scene with a virtual camera due to physical constraints on set. “The reference for that,” explains Aitken, “was Holbein’s skull, a famous optical illusion where something’s really stretched at one point and once you get to the right angle it all forms. Because we had to take this line and turn it into a head, we had to get the camera millimeters away from the wall to make it work. We added an extension to the camera in Maya and the guys did that in NUKE.”

– See the mural versions of the Boneless in this preview.

“It was probably one of the most difficult things,” adds Hewlett, “partly because it was the very first shot. We were in this tiny flat in Cardiff and the camera crew are scratching their heads looking at our previs saying, ‘What the hell is this?’ We just had to shoot about six different takes and hope that we could put it back together again.”

An aspect that helped axisVFX in smearing the textures was working with the art department early on to ensure walls and props were ‘texture-decorated’ to begin with. “If it was all clean,” notes Aitken, “we wouldn’t be able to drag the textures around and you wouldn’t be able to see the things. So that was one of the reasons for having the stripey carpet and 60s wallpaper and that stuff and lots of patterns everywhere.”

The Boneless are further revealed when PC Forrest suddenly becomes absorbed into the carpet, providing a glimpse of the distorted textures. An idea for the final distortion look came from director Mackinnon who referenced a sea slug and asked axisVFX to devise their effects based on that. “It was more like a leech which has this stretch and compression movement,” says Thornley-Heard. “We had to follow the locomotion of a worm or a leech that has sections that stretch and squash, and it uses the squash section as a foot and extends its body forwards from that. It’s got a very creepy feeling and that was the kind of textural quality we gave to the individual creatures. They also had a flocking behavior, like starlings. So the combination of those made a mass of these writhing things that gave it the creepiness.”
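The stretch-and-squash locomotion described here can be reduced to a one-dimensional toy model. In this sketch, assuming a body that is just a tail position and a head position, the tail acts as the anchored foot while the head reaches forward, then the head anchors while the tail is drawn up behind it. The function `leech_pose` and its parameters are hypothetical and are not taken from the production rig.

```python
def leech_pose(time, rest_len=1.0, stretch=0.5, period=1.0):
    """Return (tail, head) positions of a 1D leech at the given time."""
    cycles, phase = divmod(time / period, 1.0)
    base = cycles * stretch                # ground gained in completed cycles
    if phase < 0.5:
        # Tail is the anchored foot; the head extends forward from it.
        s = phase / 0.5
        tail = base
        head = base + rest_len + stretch * s
    else:
        # Head is anchored; the tail squashes up behind it.
        s = (phase - 0.5) / 0.5
        head = base + rest_len + stretch
        tail = base + stretch * s
    return tail, head
```

Each full cycle moves the body forward by `stretch`, and the body length oscillates between `rest_len` and `rest_len + stretch`, which is the squash-and-stretch rhythm a mesh generator could be driven by.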

Thornley-Heard built a 3D representation of the sea slug in Houdini that would then be applied to the plates inside NUKE. “That’s where a lot of the experimentation came,” he says, “because a lot of the movement of each individual creature was based on its texture and surface quality. The problem was you couldn’t represent that because that gave it too much shading. Some of the creepiness of the leeches is their sheen or stickiness and the specular.”

“What it ended up being was I had a leech rig for a single leech that was derived from a single point,” adds Thornley-Heard. “That just handled the squash and stretch and generated the mesh. So I could feed in a bunch of points with the flocking behavior, which meant a particle sim with some fluid motion could be turned into a crowd of leeches. That was able to generate some orthographic elements that could then be re-projected in NUKE. That got a little bit complicated because we were using the STmap UV lookup in NUKE but through the re-projection we had to then use the camera transform to transform those UV values in order for it to pick up the right part of the projected texture.”
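For readers unfamiliar with ST maps, the following sketch shows the general idea in plain Python, not NUKE. An ST map stores, for every output pixel, the normalized (u, v) position to read from a source image; transforming those UV values with a matrix before the lookup changes which part of the texture is picked up. The 3x3 matrix here is a generic 2D stand-in for the camera transform mentioned in the quote, row 0 is treated as v = 0 purely as a convention for this example, and all function names are hypothetical.

```python
import math

def apply_matrix(m, u, v):
    """Apply a 3x3 homogeneous matrix to a 2D UV coordinate."""
    x = m[0][0] * u + m[0][1] * v + m[0][2]
    y = m[1][0] * u + m[1][1] * v + m[1][2]
    w = m[2][0] * u + m[2][1] * v + m[2][2]
    return x / w, y / w

def st_lookup(source, stmap, matrix=None):
    """Build an image by reading `source` at the UVs stored in `stmap`."""
    height, width = len(source), len(source[0])
    out = []
    for row in stmap:
        out_row = []
        for u, v in row:
            if matrix is not None:
                u, v = apply_matrix(matrix, u, v)   # transform UVs first
            # Nearest-neighbour sample, clamped to the image bounds
            x = min(max(math.floor(u * width), 0), width - 1)
            y = min(max(math.floor(v * height), 0), height - 1)
            out_row.append(source[y][x])
        out.append(out_row)
    return out

source = [[1, 2], [3, 4]]
identity_st = [[(0.25, 0.25), (0.75, 0.25)],
               [(0.25, 0.75), (0.75, 0.75)]]
shift_left = [[1, 0, -0.5], [0, 1, 0], [0, 0, 1]]
```

With `identity_st` the lookup returns the source unchanged, while passing `shift_left` makes every pixel read from further left in the texture, so the same ST map pulls a different region of the image.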

axisVFX relied on C44Matrix from Nukepedia for the transform of the color data. “With extra secondaries like UV maps, ramp ups and other sorts of things,” says Thornley-Heard, “we were able to drag textures, smear it and use time echoes in NUKE to get a really kind of painterly water color smear as well. It had to look like they were tasting the world, sampling it and digesting it in a way that looked like they were understanding it. That was the story point.”

The Boneless shots were the primary effects created by axisVFX on the show, but others included flattening objects such as a couch and even people. The studio also crafted energy fields and blasts and a shape-shifting TARDIS, working for around six weeks on the show between their Bristol and Glasgow offices and delivering a total of 66 shots.