Artists are often asked to produce images of things never seen before, and often asked to make them look real when no one is quite sure how such things would actually exist. In Christopher Nolan’s Interstellar, visual effects supervisor Paul Franklin and the team at Double Negative were asked to produce images of things that aren’t even in our dimension, and to make them consistent not only with quantum physics and the laws of relativity but also with our best understanding (or guess) of quantum gravity.

Luckily, the key team at Dneg included chief scientist Oliver James. James holds a first-class degree from Oxford in optics and atomic physics and a thorough understanding of Einstein’s theories of relativity. He worked, as did Franklin, with the film’s executive producer and scientific advisor Kip Thorne. Thorne would work out complex equations in Mathematica and send them to James to recode into IMAX-quality renderings. To meet the needs of the film and solve the visual problems involved, James had to visualize not only equations describing the arcing, bending trajectories of light but also equations describing how the cross section of a beam of light changes size and shape during its journey past the black hole.

Even then, James’ code was only part of the solution. He worked hand in hand with the artistic team led by CG supervisor Eugenie von Tunzelmann, which added elements such as the accretion disc and created the background galaxy, with all its stars and nebulae, whose light is warped as it bends past the black hole. But as complex as it was to show a black hole scientifically correctly in a film for the first time, the team also had to show someone entering a four-dimensional tesseract that extrudes, or casts shadows, into the three dimensions of a little girl’s bedroom, all in a way an audience could follow.

In this article we describe some of the key sequences created by Double Negative, and the scientific research behind them.

Building a black hole

Perhaps the single most stunning result of Nolan’s quest for realism in the film is the depiction of the black hole Gargantua. With input from Thorne, the filmmakers strove to show the behavior of a black hole and a wormhole properly, right down to the lighting, or lack of it. For Double Negative, this even required writing an entirely new, physically rigorous renderer.

Above: view from a camera in a circular, equatorial orbit around a black hole that spins at 0.999 of its maximum possible rate. The camera is at radius r = 6.03 GM/c^2, where M is the black hole’s mass, and G and c are Newton’s gravitational constant and the speed of light. The black hole’s event horizon is at radius r = 1.045 GM/c^2.
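The quoted horizon radius is consistent with the standard Kerr-metric result r+ = (GM/c^2)(1 + sqrt(1 - a*^2)), where a* is the dimensionless spin (1 at the maximum possible rate). A minimal check in Python, assuming that textbook formula; the function name is ours, not Double Negative’s:

```python
import math

def kerr_horizon_radius(spin: float) -> float:
    """Outer event-horizon radius of a spinning (Kerr) black hole, in units of GM/c^2.

    spin is the dimensionless spin a* (0 = not spinning, 1 = maximum rate).
    Assumes the standard Kerr formula r+ = 1 + sqrt(1 - a*^2).
    """
    if not 0.0 <= spin <= 1.0:
        raise ValueError("spin must lie between 0 and 1")
    return 1.0 + math.sqrt(1.0 - spin * spin)

print(round(kerr_horizon_radius(0.999), 3))  # 1.045, matching the caption
print(kerr_horizon_radius(0.0))              # 2.0, the non-spinning case
```

Spinning the hole up shrinks its horizon from 2 GM/c^2 toward 1 GM/c^2, which is why a camera orbit as tight as r = 6.03 GM/c^2 (or, later, 2.60 GM/c^2) is possible at all.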

“Kip was explaining to me the relativistic warping in space around a black hole,” recalls Paul Franklin. “The gravity being bent in space/time deviated the light around it producing this thing called the Einstein lens which is this gravitational lens all around the black hole. I was thinking about how we might go about creating that image and I was thinking about ways or references we could look at and see if there was an existing VFX process.”

“I saw some very basic simulations that had been done by the scientific community,” adds Franklin, “and I thought, well, the movement of this thing is so complex, maybe there’s something we can do ourselves and implement our own version of this. Kip then worked very closely with the R&D at Double Negative, particularly with Oliver James, our chief scientist, to take Kip’s equations that he’d worked out to calculate all of the light paths, the ray tracing paths around the black hole and then Oliver worked out how to implement that in a new renderer we called DnGR which stands for Double Negative General Relativity.”
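DnGR traced light through the geometry of a spinning black hole and followed whole ray bundles, which is far beyond a short example. The basic idea of computing a bent light path can still be sketched for the simpler non-spinning (Schwarzschild) case, where a photon’s orbit obeys d^2u/dphi^2 + u = 3GMu^2/c^2 with u = 1/r. The sketch below is an illustration under those assumptions, not Double Negative’s code: it works in units G = c = 1, uses a hand-written fourth-order Runge-Kutta integrator, and the function name and step size are hypothetical.

```python
import math

def deflection_angle(b, M=1.0, h=1e-4):
    """Bending angle (radians) of a light ray passing a non-spinning black hole.

    Units G = c = 1, so lengths are in units of M; b is the ray's impact parameter.
    Returns None if the ray crosses the event horizon at r = 2M (captured).
    """
    def deriv(u, w):
        # Schwarzschild photon orbit equation with u = 1/r: u'' = 3 M u^2 - u
        return w, 3.0 * M * u * u - u

    u, w, phi = 0.0, 1.0 / b, 0.0   # start at infinity (u = 0), heading inward
    while True:
        # One classic fourth-order Runge-Kutta step in phi
        k1u, k1w = deriv(u, w)
        k2u, k2w = deriv(u + 0.5 * h * k1u, w + 0.5 * h * k1w)
        k3u, k3w = deriv(u + 0.5 * h * k2u, w + 0.5 * h * k2w)
        k4u, k4w = deriv(u + h * k3u, w + h * k3w)
        u_next = u + h / 6.0 * (k1u + 2 * k2u + 2 * k3u + k4u)
        w_next = w + h / 6.0 * (k1w + 2 * k2w + 2 * k3w + k4w)
        if u_next > 1.0 / (2.0 * M):
            return None                     # inside the horizon: the ray is captured
        if u_next < 0.0:
            # Back out at infinity: interpolate where u crossed zero.
            phi += h * u / (u - u_next)
            return phi - math.pi            # an unbent straight line sweeps exactly pi
        u, w, phi = u_next, w_next, phi + h

print(deflection_angle(100.0))   # close to the weak-field estimate 4M/b = 0.04
print(deflection_angle(5.0))     # None: below the critical impact parameter 3*sqrt(3) M
```

Rays that pass far away are bent only slightly, while rays aimed inside roughly 5.2 M never come back; that sharp boundary is what outlines the black hole’s shadow in the renders.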

That approach allowed Double Negative to set all the necessary parameters for their digital black hole. “We could set its rate of spin, its mass and its diameter,” explains Franklin. “Really, those are the only three parameters you have to play with a black hole – that’s all we can have to measure with black holes. They spent a lot of time working out how to calculate the paths of ray bundles around the black hole. It was pretty intense – it was a good six months of work – those guys putting the software together. We had an early version of it running by the time we finished pre-production on the movie.”

Above: The black hole, initially non-spinning, gets spun up to 0.999 of the maximum; then the camera zooms in from radius 10 GM/c^2 to near the black hole, r=2.60 GM/c^2, and then moves along a circular equatorial orbit. The hole’s enormous shadow is distorted into a boxy shape due to mapping the camera’s spherical sky onto a flat display.
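The caption’s point about mapping a spherical sky onto a flat display can be illustrated with an equirectangular projection, one common way of unwrapping every view direction into a rectangle. The caption does not say which mapping was used, so this is an assumed example, and the function name and axis conventions are hypothetical.

```python
import math

def direction_to_pixel(x, y, z, width, height):
    """Map a view direction to equirectangular pixel coordinates.

    Assumed convention: the camera looks along +z, +y is up, +x is right.
    Longitude spans the image width and latitude spans its height.
    """
    length = math.sqrt(x * x + y * y + z * z)
    x, y, z = x / length, y / length, z / length
    longitude = math.atan2(x, z)   # -pi..pi, 0 straight ahead
    latitude = math.asin(y)        # -pi/2..pi/2, 0 on the horizon
    u = (longitude / (2 * math.pi) + 0.5) * width
    v = (0.5 - latitude / math.pi) * height
    return u, v

print(direction_to_pixel(0.0, 0.0, 1.0, 2048, 1024))  # (1024.0, 512.0): straight ahead lands mid-frame
```

Near the top and bottom of the sphere, directions that are very close together still spread across the full image width, so anything that covers a large part of the sky, such as the hole’s enormous shadow, is stretched into a non-circular outline.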

That early imagery was in fact used on set as projections outside the windows of the spacecraft onto giant screens, providing actors with something to look at while filming. No greenscreen was used during production on Interstellar. Later, Double Negative would replace selected views and also fix up some star fields. “Quite a lot of the stuff you see in the finished film where you’re looking over the shoulder of an astronaut and looking out the window,” notes Franklin, “quite a lot of that is straight in-camera. We had a whole bunch of shots which don’t get into the VFX shot count but there’s a whole bunch of stuff which is in-camera visual effects done in this way.”

Those in-camera shots were made possible by a collaboration between Dneg, DOP Hoyte van Hoytema and LA-based Background Images, which used new 40,000-lumen projectors on set. “We had two of them converged onto the space,” says Franklin, “so we were overlaying the images one on top of the other to boost the exposure just a stop. We found that we had to be really careful not to make the images too large otherwise we lost exposure.”
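The arithmetic behind both remarks is that one photographic stop is a factor of two in light, and screen brightness scales roughly with projector output divided by image area. A minimal sketch, assuming an idealized screen (no gain, losses or falloff) and a hypothetical helper name:

```python
import math

def exposure_change_in_stops(lumens_a, area_a, lumens_b, area_b):
    """Stops gained going from setup A to setup B.

    Assumes screen illuminance is simply lumens / image area (idealized screen).
    """
    return math.log2((lumens_b / area_b) / (lumens_a / area_a))

single = (40_000, 1.0)        # one 40,000-lumen projector, image area normalised to 1
doubled = (2 * 40_000, 1.0)   # two projectors converged on the same image

print(exposure_change_in_stops(*single, *doubled))          # 1.0: "just a stop"
print(exposure_change_in_stops(*doubled, 2 * 40_000, 4.0))  # -2.0: image twice as wide and tall
```

Doubling the projector count buys exactly one stop, while doubling the image’s width and height spreads the light over four times the area and loses two, which is why the image size had to be watched so carefully.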

Above: Close up of this same simulation, showing the complex fingerprint-like structure of gravitationally lensed starlight near the left edge of the black hole’s shadow, the edge at which the hole’s horizon is moving toward us at near light speed due to its spin.

“We had to re-position and re-converge the projectors from setup to setup,” continues Franklin. “Normally the guys like to have a good week to get a projector in place, converge it properly, get it all finely tuned. But we got that process down to in some cases 15 minutes. They were working so hard. The projectors are big hunky objects – each one weighs 600 pounds. We had two in a specially built cage mounted on a big heavy duty reach lift, with a special pan and tilt head so we could basically use this thing to position the projectors. I’d be on the radio directing content, getting playback working, talking to the projector guys to calibrate the projectors, and also talking to the teamster who was driving our forklift to dance this thing around an extremely crowded stage.”

Making waves

In the film, Cooper (Matthew McConaughey), Amelia (Anne Hathaway), Doyle (Wes Bentley) and the AI robot CASE visit a water-covered planet that experiences enormous tidal waves because of its proximity to the massive black hole Gargantua. Film audiences may be used to seeing waves a few hundred feet tall, but the story demanded far more: the waves needed to be 4,000 feet tall. To help sell that scale, Double Negative had to rethink the usual approach to making water. “When you take something that large,” explains Franklin, “all of the characteristics you associate with a wave like breakers and a big curl at the top, they just go away because they’re tiny in relation to the mass of water, because it’s more like a moving mountain of water. So we spent a lot of time in previs working out how we can use the one scaled reference we did have which is the Ranger spacecraft, the white shuttle that gets swept up. The key moment of that sequence is when the wave hits the Ranger and sweeps it up the face of the wave. And you see it travel up and become lost and it becomes a tiny little speck and disappears in the face of the wave. That was a key moment for the scale.”

Double Negative artists controlled the waves with animation deformers, sculpting them effectively with keyframes. “That gave us the basic shape of the wave,” says Franklin, “but then obviously to sell it as real you’ve got to create the surface foam, interactive spray, wavelets and tiny breakers on the surface. For that we used an in-house tool called Squirt Ocean. It’s been in development for quite a while, and then there was a lot of additional Houdini work over the top of that.”
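Squirt Ocean and the Houdini setups are proprietary, so the sketch below only illustrates the general layering Franklin describes: a large base shape driven by keyframed parameters, with small-scale wavelets added on top. Everything here is hypothetical, including the keyframe values, the Gaussian profile and the wavelet amplitudes; it is not Double Negative’s tool.

```python
import bisect
import math

# Hypothetical keyframes: (frame, crest_height_ft, crest_position_ft)
KEYS = [(0, 0.0, 0.0), (48, 2500.0, 3000.0), (96, 4000.0, 6000.0)]

def interpolate_keys(frame):
    """Linearly interpolate crest height and position between keyframes."""
    frames = [k[0] for k in KEYS]
    if frame <= frames[0]:
        return KEYS[0][1:]
    if frame >= frames[-1]:
        return KEYS[-1][1:]
    i = bisect.bisect_right(frames, frame)
    (f0, h0, p0), (f1, h1, p1) = KEYS[i - 1], KEYS[i]
    t = (frame - f0) / (f1 - f0)
    return h0 + t * (h1 - h0), p0 + t * (p1 - p0)

def surface_height(x, frame, width=1500.0):
    """Keyframed 'moving mountain' base profile plus a few small wavelets (feet)."""
    crest, pos = interpolate_keys(frame)
    base = crest * math.exp(-((x - pos) / width) ** 2)
    wavelets = sum(amp * math.sin(2 * math.pi * x / wavelength + 0.3 * frame)
                   for amp, wavelength in [(3.0, 40.0), (1.5, 17.0), (0.7, 6.0)])
    return base + wavelets

print(interpolate_keys(72))   # (3250.0, 4500.0): halfway between the last two keys
```

The split mirrors the workflow in the quote: animators control the silhouette directly through a handful of keys, while the detail layer, tiny relative to a 4,000-foot wave, carries the surface texture that reads as real water.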

The shots were rendered at a resolution high enough for IMAX, a requirement that limited how many iterations Double Negative could do. “I would see the layout of the wave sequence and say great let’s get the wavelets onto it and everything else,” says Franklin, “and I’d have to wait about a month and a half to actually see this stuff come back – it was that long a process since we were doing all this IMAX resolution. So we didn’t have that many goes at it. Normally you would expect to have multiple iterations but we really only had three goes at it.”

CASE ultimately rescues Amelia from the tidal wave. CASE and its counterpart TARS were actually 200-pound metal rod puppets operated on set in Iceland by actor Bill Irwin, again the result of Nolan’s push to have as many practical elements as possible. Double Negative carried out performer and rod removal for many shots. “The early shots in that sequence consist of the CASE robot walking through the water,” explains Franklin. “That’s the physical puppet and we just removed the performer from behind it.”


For the shot in which CASE reconfigures himself into a water wheel, spins his way through the water, picks up Amelia and runs off with her, the team combined practical and digital solutions. “What special effects gave us was,” says Franklin, “they built a little water rig attached to a quad bike we could drive through the water and derive interactive splashing. We had another rig again built off a quad bike which essentially had a forklift on the front of it, so that carried our stunt performer. We had the arms of the robot holding the stunt double for Anne Hathaway. The thing would churn up the water and then we got rid of the quad bike and then replaced it with the digital robot.”

Double Negative limited digital robot moments to those of TARS or CASE doing ‘extraordinary things’, such as running through the water, climbing up into the spaceship at the end of the water sequence, running across the ice and some zero gravity freefall shots. “What we’ve always found with these things is that you can really make that digital moment work if you bookend with reality,” suggests Franklin. “So that sequence he runs up to the spacecraft and climbs inside it, as he comes up through the hatchway inside the spacecraft, that’s the practical robot – the physical prop. It finishes it off with a moment of reality and it helps sell all the digital parts of the sequence.”

Inside the tesseract

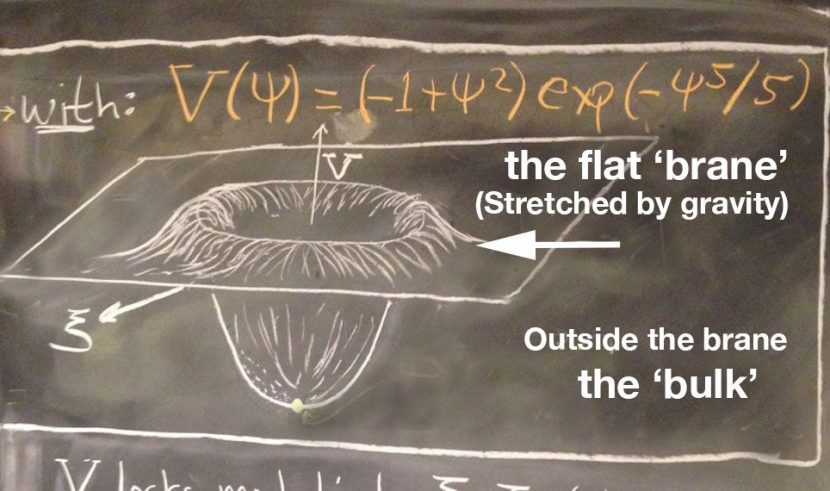

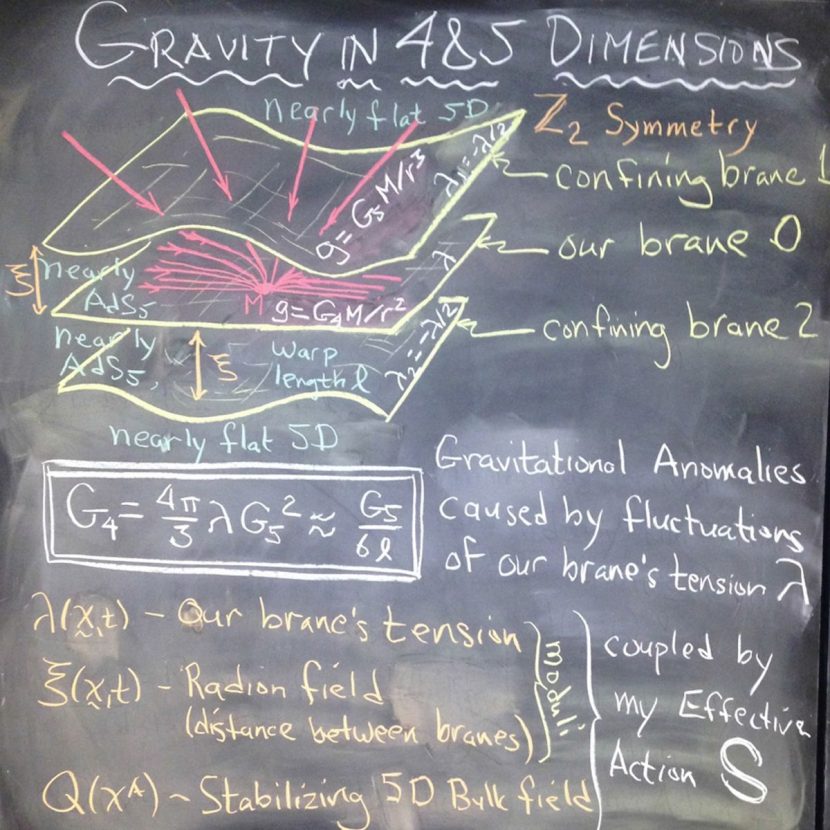

In the film, the mysterious ‘they’ turn out to be us, advanced enough to help Cooper communicate with his daughter on Earth, years earlier in her life. As time travel is impossible in an Einsteinian universe governed by quantum and relativistic laws, the story solved this by having Cooper leave our dimensions and move into the ‘bulk’, the hyperspace of higher dimensions. If our universe were depicted as a 2D disc or membrane, the bulk would be the box surrounding it in three dimensions. One way to think of this is that every dimension is dropped by one so that it can be pictured. Thus 3D space is drawn as a 2D disc (sagging around the black hole), and the 3D environment around this disc, or brane (physicists say ‘brane’ rather than ‘membrane’), represents the higher dimension: the bulk.

In the film, Michael Caine’s character Professor Brand is trying to understand gravitational anomalies. The blackboards in the film clearly show him trying to solve gravity in four and five dimensions, with diagrams of our brane in the bulk. The film says that if Brand can understand these anomalies, they could be used to change gravity on Earth and lift huge, mankind-saving craft into space.

While leaving our three dimensions and entering the fourth does not solve the time travel issue, in the film it does allow Cooper to send gravity waves back in time. He can see all of time, but he can only cause ripples in those threads of time: gravity ripples that Cooper’s daughter, Murphy, comes to understand as vital information.

The job of Dneg’s team was to show a four-dimensional tesseract, which some future ‘us’ provides so that Cooper can cause these gravity waves. This could have been done symbolically, or as some white dream sequence, but instead Dneg set out to visualize the four-dimensional tesseract in a meaningful way, bearing in mind, of course, that such concepts are only educated speculation. Here Thorne was again involved. For him, such speculations “will spring from real science, from ideas that at least some ‘respectable’ scientists regard as possible,” to quote from his new book The Science of Interstellar.

To understand the Dneg solution, one has to understand the nature of higher dimensions. Take a ball resting on a flat table: to the table, the ball is just a dot where the two touch. If the ball could move through the table, much like an apple being sliced thinly, the table would see a circle that grows bigger and then shrinks until the ball has passed all the way through. From the flat surface’s point of view the circle makes sense, and a circle is not a bad stand-in for a ball drawn on paper. This example moves from 3D to 2D. But how do we move to 4D and beyond? One idea, common even in everyday CGI, is to think of the fourth dimension as time. A bouncing ball is seen as a ball only at a single instant; over time, its path defines a tube. From a 4D view the ball is a tube, and the sphere we know is just a 3D slice of that 4D world. Treating time as the fourth dimension is open to question, but if we assume it, then the fifth dimension is the bulk, the space outside our universe.
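The growing and shrinking circles follow directly from Pythagoras: a plane at distance d from the center of a ball of radius R cuts it in a circle of radius sqrt(R^2 - d^2). The same formula works one dimension up, where a 4D hypersphere sliced by our 3D space would appear as a sphere that swells and shrinks. A minimal sketch (the function name is ours):

```python
import math

def slice_radius(radius, offset):
    """Radius of the cross section where a flat slice at distance `offset`
    from the center cuts a ball (or, one dimension up, a hypersphere).

    Returns None when the slice misses the object entirely.
    """
    if abs(offset) > radius:
        return None
    return math.sqrt(radius * radius - offset * offset)

# A ball of radius 1 passing down through the table: the table "sees"
# a dot, a growing circle, a full-size circle, a shrinking circle, a dot.
for offset in [-1.0, -0.6, 0.0, 0.6, 1.0]:
    print(offset, slice_radius(1.0, offset))
```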

If a 4D/5D tesseract were built far in the future, it would represent a box that grows into a bigger box and can animate and change ‘form’, as seen in the short video below.

The video shows a box whose timeline is varied over time. By the film’s logic, if you entered the tesseract where it touches 3D space, you would see all the timelines (4D extrusions of objects) and all the paths forward and backward in time. Furthermore, as some current theories in physics suggest that there are a vast number of separate realities (sandwiching our flat brane), you would see these lines shooting off in multiple directions. It is in this conceptual space that one can start to see the graphical solution DNeg came up with, following Nolan’s direction. The ‘threads’ of time that Cooper seems to influence are like strings, and his attempts at hitting them cause vibrations that ripple back up the timeline and so communicate with his daughter Murphy. It really is a brilliant piece of artistic scientific visualization.

But how to film it?

Nolan’s determination to give the actors something physical to interact with applied also to the tesseract sequence. Cooper enters a black hole and emerges inside a multi-dimensional space in which he can see objects and their timelines, including the childhood bedroom of Murphy. “Chris said,” relates Franklin, “‘It was a very abstract concept, but I’d really love if we could build something that we could film. I want to see Matthew interacting physically with these timelines. I want to see him in that space, I don’t want to see him hanging against a greenscreen – that feels like a cop-out compared to everything else we were planning to do in-camera.’”

This led Franklin to consider how the tesseract should look. “I spent a bunch of time thinking about how to make time visible as a physical dimension,” he says, “how to show timelines of all the objects in the room in a physical way that would be comprehensible. Because the danger was it would get so cluttered that all you would see is these timelines and you would have to work out what they came from. Also, it was important from a story point of view that Cooper see the timelines and see the way it’s affecting the objects back in the room and would be interacting with what is going on inside the room.”

The final ‘open lattice’ look was, notes Franklin, inspired by the concept of a tesseract itself. “A tesseract is a three-dimensional shadow of a four-dimensional hypercube. It has this beautiful lattice-like structure, so that partially informed what we were doing there with that. I also spent a lot of time looking at slit-scan photography and the way that slit-scan allows you to record a single point in space across a whole range of moments in time. So the photograph itself turns time into an axis in the final image. A combination of those two things together gave us these physically extruded three-dimensional timelines, streaming off from the object. The rooms are snapshots, moments in time, embedded in this lattice of timelines that Cooper can then navigate backwards and forwards along the timelines to find specific moments in space.”
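The slit-scan idea Franklin mentions, recording one fixed line of space across many moments so that time becomes an image axis, can be sketched in a few lines. This is a generic illustration of the photographic technique, not Double Negative’s pipeline; images are plain nested lists and the function name is hypothetical.

```python
def slit_scan(frames, column):
    """Build a slit-scan image from a sequence of frames.

    frames: list of 2D images, each a list of rows (lists of pixel values).
    column: x position of the fixed slit sampled in every frame.
    Returns an image whose x axis is time: output column t is frame t's slit.
    """
    height = len(frames[0])
    return [[frame[row][column] for frame in frames] for row in range(height)]

# Toy example: a bright dot (value 9) moves down one row per frame past slit column 1.
frames = [[[9 if (r == t and c == 1) else 0 for c in range(3)] for r in range(4)]
          for t in range(4)]
for row in slit_scan(frames, column=1):
    print(row)   # the dot's motion becomes a diagonal streak; time runs left to right
```

The moving dot, which only ever occupies one point per frame, turns into a continuous line in the output, which is the sense in which slit-scan “extrudes” an object’s history into a visible timeline.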

Since Nolan wanted some kind of physical presence for the tesseract to shoot with, the visual effects and art departments exchanged models, lighting designs and studies. “We ended up building one section of that as a physical set with four rooms around it,” states Franklin. “Then digitally we extended that off into infinity so everywhere you look it’s going off forever. We also used a lot of in-camera projection on set. We overlaid the active timelines onto the physical set using the projectors. That gave us a sense of trembling, febrile energy – all the information streaming along the timelines in and out of the rooms. But every single image of the final sequence has huge amounts of digital work overlaid onto it. A very fine lattice of threads that Cooper encounters when he is actually trying to push up against it – he can’t penetrate the rooms. Every object in the room had to be connected with its own faint moving timeline threads that went in and out of the objects.”

Still, certain moments required fully digital Double Negative backgrounds such as when Cooper moves through the ‘tunnels’ of the tesseract. “We didn’t have enough sets to do that travel,” says Franklin, “so we shot Matthew against a projection screen and we projected the previs of those sequences onto the screens around him, so he had something to ground himself against. The actors all loved that because they had something to look at rather than just having greenscreens and working it out later on. We then replaced that material with the more developed version of the tesseract. There are a couple of moments where we kept the previs because the depth of field was so shallow that the background was out of focus.”

Franklin also notes that layers of digital work, and significant amounts of roto and rig removal, were required to complete the scenes. There were some challenging CG requirements too, including the moment when the tesseract closes and begins to break down. “We actually took the CG geometry of the tesseract and put it through a hypercube rotation. The guys worked out how to implement the transforms for the hypercube rotation and apply it directly to the geometry of the tesseract set that we’d actually created. That’s a particularly special moment for me. When I saw what the guys had done I thought that’s just perfect, that’s exactly what I want.”
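A hypercube rotation of this kind can be sketched on the simplest possible geometry: the 16 corners of a 4D cube, rotated in a plane that involves the fourth axis, then perspective-projected into 3D to give the familiar cube-within-a-cube “shadow”. This is an assumed, minimal illustration using a plain unit hypercube rather than the actual set geometry; the function names and the viewer distance are hypothetical.

```python
import itertools
import math

def hypercube_vertices(size=1.0):
    """The 16 vertices of a 4D hypercube centred on the origin."""
    h = size / 2
    return [tuple(h * s for s in signs) for signs in itertools.product((-1, 1), repeat=4)]

def hypercube_edges(vertices):
    """Index pairs of vertices that differ in exactly one coordinate (32 edges)."""
    return [(i, j) for i, j in itertools.combinations(range(len(vertices)), 2)
            if sum(a != b for a, b in zip(vertices[i], vertices[j])) == 1]

def rotate_xw(point, angle):
    """Rotate a 4D point in the x-w plane; y and z are left unchanged."""
    x, y, z, w = point
    c, s = math.cos(angle), math.sin(angle)
    return (c * x - s * w, y, z, s * x + c * w)

def project_to_3d(point, viewer_w=2.0):
    """Perspective-project a 4D point into 3D from a viewer on the w axis."""
    x, y, z, w = point
    scale = viewer_w / (viewer_w - w)   # points nearer the viewer in w appear larger
    return (x * scale, y * scale, z * scale)

verts = hypercube_vertices()
edges = hypercube_edges(verts)
# One animation frame: rotate every vertex 30 degrees, then cast its 3D "shadow".
frame = [project_to_3d(rotate_xw(v, math.radians(30))) for v in verts]
print(len(verts), len(edges))   # 16 32
```

Stepping the angle over successive frames makes the inner and outer cubes appear to turn inside out through each other, even though the 4D object is only rigidly rotating; because the rotation matrix is orthogonal, every edge keeps its length in 4D.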

Another challenging section, says Franklin, involved the moment when Cooper interacts with the dust and draws out a binary pattern on the floor during the dust storm. “We had to work out the moves that Matthew was miming on set and make that work with something that could actually drive those shapes appearing on the floor of the room below him.”
