Have you been playing Halo 5: Guardians this week? If so, you’ll have experienced the incredible tumbling, freefalling action in the opening cinematic. We find out from the team at developer 343 Industries and animation studio Axis Animation how the almost single-shot cinematic for Microsoft’s newest game in the Halo franchise was planned out, animated and rendered.

fxg: Can you talk about the ideas behind the cinematic and what you hoped it would achieve?

Brien Goodrich (Cinematic Director, 343 Industries): From a nuts and bolts perspective, we knew that we needed to introduce Fireteam Osiris, deliver a mission briefing and get the player into gameplay – those were the basic ‘must haves’. The challenge then was really about how do we check those boxes, but do so in a way that really grabs the viewer and pulls them into the world and characters of Halo 5: Guardians. It’s the first thing you experience so we definitely wanted it to be epic and crazy and face-melting, but also wanted to have some subtle character moments like the moment between Buck and Locke. We left the tactical data to Lasky and Palmer and tried to keep the chatter to a minimum with Fireteam Osiris – in many ways, we wanted Fireteam Osiris’s reaction to the events and to one another to begin to shape the viewer’s experience with the team.

We also wanted the viewer to feel this sense of ‘embedded reporter’ as if they were right there watching this moment unfold. It was incredibly important that the camera language during the briefing moments establish and underscore that idea and connection. Once the team jumps out of the Pelican, again we kept the com chatter to a minimum and let the Spartans’ actions illustrate and define them: we see Buck land with a roll and come up shotgun blasting. Tanaka smashes directly through a rock instead of jumping over it or navigating around it. Vale elegantly leaps and bounds over vehicles as she takes out Elites, Jackals and Grunts. Locke leads us in and out of the sequence as he demonstrates his incredibly tactical and precise combat style. More than anything we wanted it to be fun. We wanted fans to stand up and cheer as it launched them literally into gameplay.

Stu Aitken (Creative Director, Axis): Although the direction for these particular sequences had largely been set by the time we started work on the project with 343 Industries, it was still important for us to understand what 343 Industries was trying to do with the opening – the initial scene in the Pelican performs several key creative functions – it sets up the atmosphere and sense of anticipation for what’s to come, it introduces Fireteam Osiris to the player, giving each of the four Spartans a moment in the spotlight so we start to get some sense of their various characters, and it’s also a detailed mission briefing for the goal of the upcoming mission.

The downhill sequence on the other hand is all about really trying to sell the scale and excitement of playing the game – I got the distinct impression that this was the kind of sequence 343 Industries had wanted to do for a long time – to really sell the abilities of the Spartans and really sell the impression of you being in amongst this huge battle raging all around. Above all it had to make a statement about what the player could expect in the latest installment.

fxg: How was the single shot action planned out in terms of concepts or previs/animatics? How did you determine places that might work as invisible cuts?

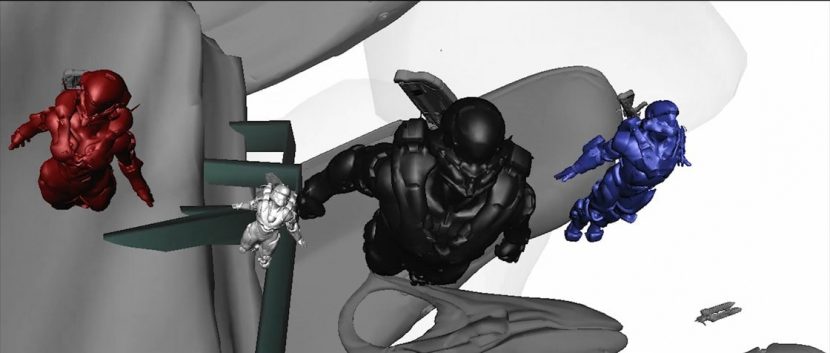

Brien Goodrich: Greg Towner, the animator on the piece, has an amazing talent for previs and animatics. He previs’d the entire sequence using low-res 3D cube Spartans. It allowed us to conceptualize, understand and really shape the single shot before committing significant resources. This was critical, because we knew that once we locked down the previs, it would be almost impossible to alter the course of action. It needed to be a very clear production blueprint for both 343 Industries and Axis. Axis also provided some beautiful concept art to further visualize the look. But the visuals and action planning are really only part of it. The single camera shot presents some incredible production challenges. How do you render a single sequence of this length and complexity? Luckily, our friends at Axis have solid experience in this area – I’ll let them speak to the logistics of that process.

Sergio Caires (CG Supervisor, Axis): 343 Industries did all of the previs/animatics/animation on these scenes, though we followed that up with 2D painted color scripts so that we were all on the same page about the feel of what we were doing. Having a client deliver the animation to a final stage is not the same as when the animation team is sitting across the room reacting in real time to whatever issues or questions may come up. So to get around that complication, we agreed on a process and delivery spec for handing work over, and the feedback process and system of regular deliveries worked out fine, even when there were surprise additions which we needed to accommodate.

I’d say the key was preparing the tools and means so we were ready to react to whatever came in from 343 Industries. We have a bunch of very talented FX and pipeline people who approached the challenge in a procedural way, so new changes would automatically filter into our lighting and FX pipeline, which made it easier for both us and 343 Industries to complete our tasks smoothly within the highly compressed schedule.

With regards to the downhill sequence I can say that there were no cuts, visible or invisible. The breakdown followed three basic groups, one was the characters, another everything they interact with, and the others were geographical. This is the most logical approach in something like this, so we were happy to find that 343 Industries’ approach matched how we would have done it ourselves.

fxg: Specifically, how was that environment that they freefall into and the path they would take mapped out?

Sergio Caires: 343 Industries took the mountainous background from the game level and gradually evolved/modified the environment from there. When we took delivery of this real time mesh it was already in pretty much its final shape though we significantly enhanced the detail procedurally from there.

fxg: What performance capture was acquired, both for the in-ship scenes and then the much heavier action? Can you talk about the tech setup for this capture?

Brien Goodrich: The entire sequence in the rear of the Pelican is performance capture. We brought in the full Fireteam Osiris team plus Lasky and Palmer – face capture, body capture and voice. That meant 6 face cam set ups, 6 mics and 6 actors all in the capture volume at the same time. Additionally, all the characters have some critical timing and choreography beats to hit throughout the sequence, so the logistics and tech involved in capturing a scene like that are quite challenging. Due to scheduling, we also had to bring Nathan Fillion in on a separate day, so we actually shot the scene twice. We wanted to get everything in a single take to keep the implementation as seamless as possible. It was a heck of a challenge on the day, but in the end we used what is essentially a single take with Nathan’s performance integrated into that take. Once Osiris jumps out the back of the Pelican, the sequence shifts to keyframe animation; however, we used both live action and motion capture stunt reference for many of the specific action moments.

fxg: What approach did you take to CG facial animation – in terms of going from performance capture and replicating the likenesses of actors such as Fillion?

Brien Goodrich: We brought all of Fireteam Osiris to 343 Industries to capture their likeness. 343 Industries’ character team then worked incredibly hard creating the models, face shapes and texture maps. Axis and 343 Industries shared those assets throughout the production, although each team had to implement them in slightly different ways depending on the output (pre-render vs. real-time). We captured and solved the performance capture data with Profile Studios and then it was up to Greg Towner to work with the capture data and get the performances just right. In the end it’s really a combination of capture technology (performance and scan data) and the artistic work of the character teams and the animator that makes the ‘likeness’ of a character work. This is especially challenging with someone like Fillion because we all know what he looks like. We’re incredibly pleased with the collaborative process and ultimately the final look.

Stu Aitken: We directly used the pipeline which had been established for the in-game cutscenes – stereo head mounted cameras were worn by the actors during the performance capture shoot, and Giant would then take that data and work out correspondences between the captured face data from the stereo rig and the actor rigs (which they also set up, but with various blend shapes input into the rig from 343 Industries and in some cases Axis). The actual takes were then put through custom solvers and then adjusted as necessary. It was quite intensive work for Giant, but the clean up and final polish on our side was fairly minimal. Using the actual actor likenesses was probably a big help there of course.

fxg: How was animation carried out for the various ships and creatures, plus the team – what were some of the challenges of this with such frenetic action and a moving camera?

Stu Aitken: Again 343 Industries were largely responsible for this, though there were some feedback loops where we requested various things be tweaked a bit based on how the timing was looking once FX started to go in. In a few places we asked to move the odd ship for lighting or compositional reasons as well – maybe just to make something clearer or less ‘blocked in’.

The constantly moving camera just meant that we had to pay more than usual attention to readability and making sure that the audience could clearly follow the action despite the speed we were moving down the hill and with so much going on!

Lighting can help here though and we were constantly looking at how the various layers worked together in shot so that while the audience would be rewarded for repeat viewing we could still have a clear and readable focus all the time.

Sergio Caires: One particular challenge was due to the fact all the foot contacts were done to an un-subdivided low res mesh, so we waited as long as possible for animation to stabilize before proceeding with adjusting the terrain to match their feet and let the FX procedurally update itself. Naturally the simulated results can be unpredictable given changing initial conditions but we were able to quickly adjust as needed.

fxg: How were the aerial environments, including clouds, and also the mountain environments built?

Sergio Caires: Prior to the project coming in the door, and for no reason other than to see if it was possible to overcome all the potential technical issues, I had been playing with a planetary/atmospheric rendering system that would allow the camera to go all the way from the ground, through procedurally detailed clouds, to space, where the entire cloud system is visible and the overall structure is recognizably real (using the highest resolution NASA Blue Marble data). It includes realistic, recognizable visual phenomena, such as sunset colors when the Sun is low, the rainbow effect when looking at the atmosphere terminator at a grazing angle, the shadow of the Earth onto the atmosphere, and so on.

The densities for the planetary atmosphere and clouds were created with a CVEX volume procedural shader in Houdini, which meant these details existed in full only at render time, but the same shader is also used to visualize cloud placement and so on at low resolution in the viewport.

The planetary clouds are basically a spherical projection. This gives a sort of extruded cloud layer as a starting point, then height ramps are used to shift ranges and invent the cloud profile shape. Various noises, pyroclastic and so on, are used to disturb the data at various points in order to create detail and billowing effects at and below the cloud map pixel scale.

To make the atmospheric phenomena work I had to figure out how to make a volume surface shader with spectral opacity, which would mean that if the densities/scales are right, then the sky would react naturally to the Sun and camera position. This part was actually really simple to do in Mantra, which can render just about anything you can imagine through custom shader networks, without necessarily having to be a high level programmer.

If you do the density to opacity calculation for each RGB opacity channel separately, where each channel is respectively multiplied by the sky color parameter, then each channel will have different density/opacity attenuation rates and so the resulting volume rendering integration in Mantra will exhibit the hue color shifts we see in nature.
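The per-channel idea can be illustrated with a minimal Python sketch. This is not the Mantra shader used on the production; it is a simple front-to-back ray march under assumed values, where `sky_color`, the densities and the step length are all hypothetical numbers chosen for illustration. Each channel gets its own attenuation rate because the density is multiplied by that channel's sky color before the opacity is computed.

```python
import math

def channel_opacity(density, step_length, sky_color):
    """Per-channel opacity for one ray-march step (Beer-Lambert).

    The density is scaled by each sky color channel, so every channel
    attenuates at a different rate.
    """
    return tuple(1.0 - math.exp(-density * c * step_length) for c in sky_color)

def march(densities, step_length, sky_color, light=(1.0, 1.0, 1.0)):
    """Front-to-back integration along a ray.

    Returns (scattered_rgb, transmittance_rgb).
    """
    transmittance = [1.0, 1.0, 1.0]
    scattered = [0.0, 0.0, 0.0]
    for density in densities:
        alpha = channel_opacity(density, step_length, sky_color)
        for i in range(3):
            scattered[i] += transmittance[i] * alpha[i] * light[i]
            transmittance[i] *= 1.0 - alpha[i]
    return tuple(scattered), tuple(transmittance)

# Hypothetical blue-weighted sky color parameter.
SKY = (0.2, 0.5, 1.0)

# Short path through thin air: scattered light leans blue.
short_scatter, _ = march([0.1] * 10, 1.0, SKY)

# Long path (Sun near the horizon): what survives leans red.
_, long_transmit = march([0.1] * 200, 1.0, SKY)
```

With these assumed numbers, the short path scatters mostly blue while the long path transmits mostly red, which is the hue shift described above.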

The downhill sequence takes place within a similar “virtual planet”, but all the low/near level clouds are an addition to this because they needed to be more art-directable. To that end they are also render-time CVEX constructions that either take SDF representations of basic polygon shapes as input, or are mostly procedural with input SDFs used to erase volume. These fields are added to/subtracted from by various custom noises and deformed to mimic certain cloud features, such as flattening and dragging cloud bases.
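As a rough illustration of building a cloud density from an SDF, here is a small Python sketch. It is not the CVEX code from the production: `pseudo_noise` is a cheap stand-in for a real noise function, and `sphere_sdf`, `cloud_density`, `noise_amp` and `falloff` are hypothetical names and parameters. The shape SDF is perturbed by noise and turned into a density, and a second SDF is used to erase volume.

```python
import math

def sphere_sdf(p, center, radius):
    """Signed distance to a sphere: negative inside, positive outside."""
    return math.dist(p, center) - radius

def pseudo_noise(p, frequency=1.0):
    """Deterministic stand-in for a proper noise function, in [-1, 1]."""
    x, y, z = (c * frequency for c in p)
    return math.sin(x * 1.7 + math.sin(y * 2.3)) * math.cos(z * 1.3 + x * 0.7)

def smoothstep(edge0, edge1, x):
    t = min(1.0, max(0.0, (x - edge0) / (edge1 - edge0)))
    return t * t * (3.0 - 2.0 * t)

def cloud_density(p, shape_sdf, eraser_sdf=None, noise_amp=0.3, falloff=0.5):
    """Density in [0, 1] from a noise-perturbed SDF, optionally erased."""
    # Noise is added to the distance field before converting to density.
    d = shape_sdf(p) + noise_amp * pseudo_noise(p, frequency=2.0)
    # 1 deep inside the shape, fading to 0 at the perturbed surface.
    density = 1.0 - smoothstep(-falloff, 0.0, d)
    if eraser_sdf is not None:
        # 0 inside the eraser shape, fading back to 1 outside it.
        density *= smoothstep(0.0, falloff, eraser_sdf(p))
    return density

shape = lambda p: sphere_sdf(p, (0.0, 0.0, 0.0), 2.0)
hole = lambda p: sphere_sdf(p, (0.0, 0.0, 0.0), 1.0)
```

Evaluating `cloud_density` on a grid or along render rays would give a cloud with a noisy boundary and a carved-out core; features such as flattened cloud bases would need an extra deformation of `p` before the SDF lookup.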

All of this – and everything else in the scene for that matter – is affected by light passing through the atmosphere so that everything is appropriately and automatically tinted at different altitudes.

The terrain also leveraged entirely procedural approaches in order to provide the necessary coverage from any possible viewpoint we might be confronted with in the highly parallel production. The low res terrain was subdivided then converted into VDB volume representations that could be modified by various custom procedural noises (mostly with a Voronoi basis) to add rocky shapes, overhangs and caves. These were masked based on the obvious things like slope/height, as well as procedurally generated masks of the paths of animated assets, so that characters/ships were not running through stuff they did not intend to destroy.

The output of this was a fairly high resolution mesh, but still without enough detail to really stand up in close up, so the very same noises that carve out the landscape are also used as render time displacements.
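The masking of the terrain noise described above can be sketched in Python as a product of three weights. This is only an illustration under assumed thresholds: `noise_mask`, `slope_range`, `height_range` and `clearance` are hypothetical names and values, the Y axis is assumed to be up, and the production worked on VDB volumes rather than on single points.

```python
import math

def smoothstep(edge0, edge1, x):
    t = min(1.0, max(0.0, (x - edge0) / (edge1 - edge0)))
    return t * t * (3.0 - 2.0 * t)

def point_segment_distance(p, a, b):
    """Shortest distance from point p to the segment a-b."""
    ab = [b[i] - a[i] for i in range(3)]
    ap = [p[i] - a[i] for i in range(3)]
    denom = sum(c * c for c in ab)
    t = 0.0 if denom == 0.0 else sum(ap[i] * ab[i] for i in range(3)) / denom
    t = max(0.0, min(1.0, t))
    closest = [a[i] + t * ab[i] for i in range(3)]
    return math.dist(p, closest)

def distance_to_path(p, path):
    """Shortest distance from p to a polyline given as a list of points."""
    return min(point_segment_distance(p, a, b) for a, b in zip(path, path[1:]))

def noise_mask(p, slope_deg, path,
               slope_range=(20.0, 50.0),
               height_range=(0.0, 100.0),
               clearance=2.0):
    """Weight in [0, 1] for how much rocky noise to apply at point p."""
    slope_w = smoothstep(slope_range[0], slope_range[1], slope_deg)
    height_w = smoothstep(height_range[0], height_range[1], p[1])
    # Fade noise out near the animated path so nothing blocks the characters.
    path_w = smoothstep(clearance, 2.0 * clearance, distance_to_path(p, path))
    return slope_w * height_w * path_w

# Hypothetical character path running along X at height 100.
run_path = [(0.0, 100.0, 0.0), (10.0, 100.0, 0.0)]
```

A point on the path gets a mask of zero, so the terrain there stays as animated against, while a steep, high point well away from the path receives the full noise.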

fxg: There’s such great effects work in the cinematic, from exploding ships to smoke, snow, pieces of ground and fire – can you discuss your technical approach to these different elements?

Sergio Caires: I’ll talk a bit about the workflow aspect and leave the specific FX details to Jayden our Lead FX artist.

We operated with a two-way asset system. I continually updated the environment asset, while the FX guys referenced this procedurally/live in their work and for testing integration. In return they would produce lightweight assets containing just the delayed load geometry/shaders/FX lights, which I would in turn bring back into the main render file and, if need be, tweak shader colors/densities to better integrate with the hard surface terrain.

Jayden Paterson (FX Supervisor, Axis): From the start we decided to take a much more physically based approach than usual, where we wanted to get as many of the FX elements as possible lit and rendered in the actual lighting scene. For this reason we really treated every effect as a separate asset.

Doing this meant we could start working on a basic version of a snow explosion, for example, then create the asset for the lighting scene. From that point forward, as we iterated on and approached the final look of the effect itself, this asset would be constantly updating and affecting the way the master scene was being pulled together, with properly cast shadows, GI etc. And this was true for almost all of the FX in the shot.

Another challenge was choreographing all of the FX into one long sequence. The environment and track that the characters run through is huge, so pinpointing and timing viewable FX was important, while still trying to give a sense that the whole environment was a war zone. By the time we were starting to fill up the camera space with as many separate FX as possible, we were largely at the point where we could paste and manipulate all of these assets much faster than if we had gone with the more traditional FX creation process.

This was also the first time we had a chance to test out Houdini’s new Grain (PBD) particle system, which we used to drive the bulk of the snow simulation work. So again, a much more physically accurate approach to simulations where there would usually be a lot more cheating. This involved finalizing a base simulation with position based dynamics, which we pushed as high as we could in terms of point count, then running several other sims driven by this base: thick smoke, fine spray smoke, dumb particles etc., all driven by the initial granular motion. The important thing with all this heavy simulation work is to know exactly where and for how long it would be viewable. If snow was obscured by a rock halfway through, we could stop simulating and potentially even delete snow after that frame. As one can imagine, with all of these assets eventually being forced into one big scene file, we couldn’t waste memory loading particles you wouldn’t see for more than 100 frames!
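The bookkeeping behind that visibility trimming can be sketched in a few lines of Python. This is a hypothetical helper, not Axis's pipeline code: it assumes a per-frame visibility flag is already available for each effect (for example from a camera frustum or occlusion test) and derives which frames need simulating and which need loading at render time.

```python
def visible_window(visible_by_frame):
    """Return (first, last) visible frame, or None if never visible."""
    frames = sorted(f for f, seen in visible_by_frame.items() if seen)
    if not frames:
        return None
    return frames[0], frames[-1]

def frames_to_simulate(sim_start, visible_by_frame):
    """A sim depends on its earlier state, so it must run from its start,
    but it can stop after the last frame in which it is seen."""
    window = visible_window(visible_by_frame)
    if window is None:
        return range(0)
    return range(sim_start, window[1] + 1)

def frames_to_load(visible_by_frame):
    """Cached particles only need loading while they are on screen."""
    window = visible_window(visible_by_frame)
    if window is None:
        return range(0)
    return range(window[0], window[1] + 1)

# Hypothetical effect: simulated from frame 100, visible on frames 120-180.
visibility = {f: 120 <= f <= 180 for f in range(100, 301)}
sim_frames = frames_to_simulate(100, visibility)
load_frames = frames_to_load(visibility)
```

In this made-up case the simulation stops at frame 180 instead of 300, and the render scene only loads the cache for the 61 frames where the effect is actually in view.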

fxg: What’s really nice are some of the in-camera aspects such as flares and pans to ‘find’ the action – can you talk about the virtual cinematography, including the approach to lighting?

Brien Goodrich: We really wanted the piece to feel photographed. As I mentioned earlier, it was very important to sense and feel the camera operator’s presence to help connect and embed the audience with Fireteam Osiris. Once we leap out the back of the Pelican, the single camera move is quite fantastical. When you do something like that, you run the risk of breaking the immersion that you’ve just constructed. So, we tried to emphasize certain elements to help ground the camera – the weight, the motion, vibration, bumps, the lens flares and artifacts – all the while, we still wanted to feel that operator presence. We imagined a super-Spartan camera operator diving out of the Pelican with the team and following them into action. Further, the overall lighting look and feel plays a huge role in maintaining that immersion. The piece would fall flat without the lighting.

Sergio Caires: I suppose the lighting approach has been somewhat outlined above, but in addition to the physically based sky/atmos approach, some mountains were removed/added in order to provide the right amount of light and shadow beats as they go down the mountain. The time of day and so on also had to reasonably tie in with the game world that the player is jumping into right after the intro.

fxg: How was the cinematic rendered – what challenges did the single shot aspect bring?

Sergio Caires: There were no shot splits per se at any time; it was always one single render file and shot entity that everything was funnelling into, but the sheer size and rendering schedule demanded milestones. To that end the rendering was split into start, middle and end sections, with FX resources and client animation/review/approval prioritized to this schedule.

The rendering was split into two main expensive passes and a few cheap passes.

The main ones were the environment (with everything else as phantom objects) and, in the other, everything else (atmos/clouds/assets/fx) with the environment as a holdout. In addition to this there were a few more splits: a lighting pass from muzzle flashes/tracers, the tracers themselves, and the Spartan suit thrusters. This kind of thing was split off because these elements are very likely to update, and because they are so cheap to render that it would have been crazy to have to re-render expensive passes along with them. Their luminous nature also makes them relatively easy to integrate into an existing plate where masks for everything exist, as well as numerous utility AOVs.

There were various factors in play which steered the above rendering approach. The main one being that we knew there would be many volumes inside other volumes all over the place, as well as motion blurred assets, and that we could not for various reasons use deep compositing to negate these issues and allow us to render them in more isolation. In terms of post main render fixes, the main passes become the equivalent of working on a live action plate. For some fixes we re-rendered frame ranges as well as regions in the plate.

Of course there can be some loss of re-rendering economy doing it in as few passes as this if a lot of fixing is required, but given all the particulars this solution fit the problem best. I find in practice that the re-render economy argument can be sort of moot, given that the components are interacting with each other in any case, be it shadows and/or holdouts, and therefore they all need to be re-rendered if there is any change beyond shading/textures. If a shading/texture change to, say, a character was to occur, it would be relatively straightforward to implement without re-rendering volumes by using pre-existing mask passes.

I think based on this and other past experiences with unbroken cameras, the best approach is to try to keep it as simple and manageable as possible, mostly by not splitting it into a million pieces that have to be brought back together in a monstrous comp (which tends to create an expectation that it can be fixed in post) and by not having too many hands in the basket. We set ourselves an objective to create well integrated FX to start with, rather than spending a lot of time creating monstrous complexity to support fixing it later.

I think it’s a significant testament to Mantra and Houdini that all the volumes, all FX, and all animated assets occupy the same file and the same 3D space, shadow/reflect each other, and render in a very reasonable amount of time on a fairly modest renderfarm allocation, and that it can do this while providing the flexibility and low level access to create this stuff in the first place.

I also want to make clear it was not all physically based brute force rendering! We don’t have anything like a Weta-sized renderfarm, so there had to be various optimizations to make it render in time. For example, the assets did not trace indirect sky light from the atmospheric volumes; a standard physical sky light was used instead. In other words, some less significant light interactions were either culled or replaced with approximations.

fxg: Can you also discuss the sound design and music, which really helped add to the total immersion of the cinematic?

Brien Goodrich: We originally previs’d the sequence using a piece of music from Halo 4 which helped give us a general sense of feel and pacing. Our composer, Kazuma Jinnouchi, then wrote a custom piece which just turned out amazing. I can’t even begin to articulate his process in a meaningful way – I find that he has a remarkable instinct for how to describe both big and subtle moments with music – I’m blown away every time I hear one of his scores. Our Audio Director, Sotaro Tojima, set to sound effects design using both the internal 343 Industries team as well as one of our external audio partners, Brian Watkins. I’m grateful for all of their contributions to the piece. The sound design and music really are the backbone of the immersion experience for this piece.