In this fxpodcast, we get an update on Toxik with product manager Chris Vienneau. He also talks about some of the image processing requirements, forming the basis of the 32-bit HDR pipeline. Our feature article examines image processing in compositing apps, with tips from our own experiences as well as from the manufacturers.

During the development of toxik and other compositing applications, numerous tests are done to ensure that the quality of rendered results is up to par. Some deal with color purity issues, some scaling and resizing issues, while others take a look at image degradation over multiple transformations. For example, as toxik was being coded, multiple film facilities were consulted for what tests they do to check the quality of rendered images. Autodesk isn’t alone obviously as Shake, Eyeon and others rely on (possibly the same) film houses to provide guidance on how to improve their software from an image processing standpoint.

To this end, our article takes a look at several tests which help demonstrate how imagery becomes degraded in various places within software apps and how to avoid this. All of us here at fxguide have seen workflows by artists which unnecessarily degrade imagery, such as turning samples up to 64 on a flame Action render thinking the results will always be better, or not linking transforms in a row in Shake and Fusion.

One test we used to show degradation was actually used in the development of Toxik to check its rendered results; it was suggested to Autodesk by several film facilities. The test checks how an image holds up when rotation is applied and then removed, and involves the following:

1. Start with an image

2. Rotate 45 degrees counter-clockwise

3. Process the image to a file

4. Take this resulting image and rotate it back 45 degrees clockwise

5. Process the image to a file

6. Compare this final result back to the original using your eye as well as difference matting techniques
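The six steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: a small synthetic checkerboard stands in for the test images, the rotation uses a plain bilinear filter, and the helper names (`bilinear_sample`, `rotate`, `mean_abs_difference`) are hypothetical rather than taken from any of the applications tested.

```python
import math

def bilinear_sample(img, x, y):
    """Weighted average of the 4 pixels around (x, y); 0.0 outside the image."""
    h, w = len(img), len(img[0])
    x0, y0 = math.floor(x), math.floor(y)
    fx, fy = x - x0, y - y0

    def px(ix, iy):
        return img[iy][ix] if 0 <= ix < w and 0 <= iy < h else 0.0

    top = px(x0, y0) * (1 - fx) + px(x0 + 1, y0) * fx
    bottom = px(x0, y0 + 1) * (1 - fx) + px(x0 + 1, y0 + 1) * fx
    return top * (1 - fy) + bottom * fy

def rotate(img, degrees):
    """Rotate about the image centre, filtering every output pixel once."""
    h, w = len(img), len(img[0])
    cx, cy = (w - 1) / 2, (h - 1) / 2
    c = math.cos(math.radians(degrees))
    s = math.sin(math.radians(degrees))
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            dx, dy = x - cx, y - cy
            # Inverse mapping: where in the source does this output pixel come from?
            row.append(bilinear_sample(img, c * dx + s * dy + cx, -s * dx + c * dy + cy))
        out.append(row)
    return out

def mean_abs_difference(a, b, radius):
    """Average |a - b| over pixels within `radius` of the centre (a crude difference matte)."""
    h, w = len(a), len(a[0])
    cx, cy = (w - 1) / 2, (h - 1) / 2
    diffs = [abs(a[y][x] - b[y][x])
             for y in range(h) for x in range(w)
             if math.hypot(x - cx, y - cy) <= radius]
    return sum(diffs) / len(diffs)

SIZE = 32
original = [[float((x // 4 + y // 4) % 2) for x in range(SIZE)] for y in range(SIZE)]
there = rotate(original, 45)    # steps 2-3: rotate and "render"
back = rotate(there, -45)       # steps 4-5: rotate back and "render" again
difference = mean_abs_difference(original, back, radius=10)
print(f"mean difference after the round trip: {difference:.4f}")
```

The comparison is limited to a central disc because the corners of a square image leave the frame during a 45 degree rotation. Any non-zero difference inside the disc comes purely from the image being filtered twice.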

We were curious about doing this ourselves, so we ran several images through this process using the following products:

– Discreet flint on Linux

– Discreet inferno on Onyx2

– After Effects 6.5 OSX

– Shake 4 OSX

– Adobe Photoshop

– Autodesk Toxik 1.1

– Autodesk Combustion 4 PC

– Fusion 5

While we used what would be considered “default” settings, the fact is that one facility’s default is certainly not another’s default. The results which you obtain from a render can vary dramatically based upon the minutiae of settings which are available when creating a composite. For instance, toxik has 10 different filtering settings for the output of its Reaction 3D compositing node. What about bit depth of the source image? Anti-aliasing? Sometimes you’ll want a high number of anti-aliasing samples and other times a low number. Individual materials might have different UV filtering options for textures. Where did the source material come from: scan, texture, digital? The list goes on and on.

To the right is only a sample of some of the tests we did. We have a .zip archive with the original images as well as a layered Photoshop document which allows you to easily turn on and off layers to compare the originals vs. rendered images as well as do your own tests. In the image which is viewable online here the top row is the original, the middle the rendered result, and the bottom a difference between the two. You can download the .zip archive here.

We took each original image (rue.tif and dlad.tif) and ran it through steps 1 through 6 listed above. In order to view the results, we created a tiff file with the same vertical slice from the result images shifted horizontally (this horizontal shifting in 1 pixel increments does not change the quality of the render). We then took the same image area slice from the original reference image and duplicated it across to match the result renders file. These two images were next taken into the flame difference module to generate a difference matte (YUV, Tolerance 0, Softness 125) where we could view the results.

Scaling Transformations

Another consideration to make when compositing is what type of filtering to choose when resizing objects in a composite. Most applications have various filtering algorithms which can be chosen on an individual transform basis (as in Fusion) or for an entire node (as in Toxik). In addition to transform nodes, resizing nodes also have various options for filtering. We didn’t do side by side comparisons of the algorithms between applications because most of the algorithms are mathematical and in the public domain. However, we did do some tests within the Toxik application, which showcase differences in the choices and give you an idea of how different techniques affect the end result. You can download the test images and results here.

The zoneplate graphic is a great way to test resizing algorithms. This graphic can be downloaded from fxguide, or you can generate it directly within Shake with the following command:

shake -zoneplate -fo zoneplate.tif

These filtering options are generally non-adaptive filters, which use the color of adjacent pixels to arrive at a result. The algorithm could sample anywhere from 0 to 256 (or more) surrounding pixels depending upon the method. Content of the scene is not taken into account: “x” pixels are always examined.

Another class of filters is called “adaptive”; these are generally proprietary and not found in motion compositing programs. They vary the method used on a pixel by pixel basis depending upon the content. For instance, a different algorithm might be used for a pixel which is determined to be an edge. This type of approach can be found in applications such as Genuine Fractals, Qimage, and B-Spline Pro on the Mac. The recent release of Shake included new motion estimation technology to help with resizing imagery, and in many situations the improvement can be quite dramatic (we show examples later in the story).

Which one do you pick? There are some general guidelines to follow, but in the end as an artist you need to balance the technical knowledge of what is happening behind the scenes with the end result. There are tradeoffs between the various algorithms. Non-adaptive filters inherently end up with three types of artifacts: aliasing, blurring, and edge-halo. Decreasing one of these artifacts increases the other two. In general, bicubic, b-spline, or sinc are good options for enlarging imagery. As far as reducing imagery in size, it really depends upon the content of the scene. You can often resize an image with an algorithm that produces a sharp result. However, when this clip goes into motion, the sharpness could easily create jumping of lines and boiling of edges. Processing time can also increase dramatically based upon which algorithm you choose, without providing an end result which is any better than that of a faster algorithm.

You’ll also want to pay particular attention to grain when resizing. With most size reductions, you’ll lose all of your grain structure and want to add grain back into the scene. The same holds true for increasing the size of imagery, but you have the extra problem of having increased the size of grain in the scene. Useful techniques for working around this include reducing grain *before* resizing or averaging frames if you don’t have much movement in the scene.

Some notes on algorithms (sample sizes are defaults — some applications allow the artist to adjust pixel sampling area):

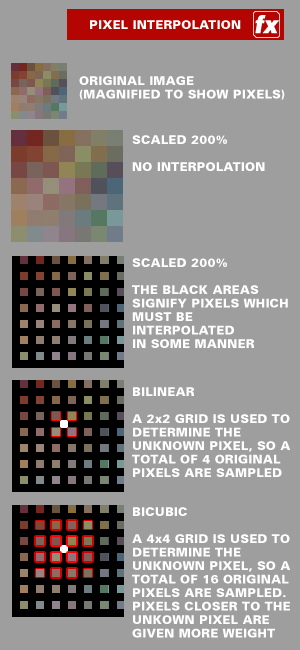

Nearest Neighbor/Impulse/Dirac: This algorithm samples only 1 pixel…the closest one to the original point. In the end, if you’re scaling upwards this simply ends up making the pixels bigger. If you’re doing an effect where you want the image to appear pixellated, this is the one for you. If you’re scaling down, the image will be very sharp yet pixels will boil and jump between frames in many situations.

Box: A 1×1 square is used for interpolation. As the name suggests, this gives a boxy look and in most cases is not useable for scaling up.

Bilinear: A 2×2 square is considered when interpolating imagery, ending up with a weighted average of the four pixels. This results in imagery which is far smoother than nearest neighbor.

Bicubic (“cubic” in Nuke): An even larger area, 4×4, is considered for bicubic interpolation, so you end up sampling a total of 16 pixels. Pixels which are closer to the original point have more of an impact on the end result (aka “weighted” higher). Imagery is sharper than Bilinear, yet smoother than nearest neighbor.

Spline, Sinc, and others: Even larger areas are considered for these interpolations. They retain even more image detail than Bilinear or Bicubic when uprezzing or rotating, so if you’re doing multiple rotations which are not concatenated, they are generally a better way to go. However, these types of calculations are much more processor intensive and take a longer time to render. If you don’t need this extra detail — as mentioned above, this can depend upon your imagery — you might be able to get by with other interpolation methods.

Sinc: Keeps small details when scaling down, with good control of aliasing. Ringing problems make it a questionable choice for scaling up. Default size is 4 x 4. It can also deliver negative values, which can be interesting when working in float/channel bit depth. It is one of the best methods for scaling down.

Mitchell / Catmull-Rom: A good balance between sharpness and ringing, and so a good choice for scaling up. Sometimes Mitchell can produce a better result with details, but it depends upon the content. Default size is 4 x 4.

Lanczos: Similar to the Sinc filter, but with less sharpness and ringing. Most likely better quality than Mitchell / Catmull-Rom.

Gaussian: Resizing is generally soft, but is good with ringing and aliasing.

Jinc (Autodesk Proprietary): Found in toxik, this resizing algorithm compares to a Lanczos filter in quality, with very good results maintaining details without softening.
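To make the notes above more concrete, here is a minimal Python sketch of three of these kernels in one dimension. It assumes the textbook definitions of the triangle (bilinear), Catmull-Rom, and two-lobe Lanczos kernels; the applications discussed here may use different parameters, and the function names are ours. It resamples a hard edge halfway between two pixels to show where overshoot (ringing) and the negative values mentioned for Sinc come from.

```python
import math

def triangle(x):
    """Bilinear weight: falls off linearly over one pixel."""
    return max(0.0, 1.0 - abs(x))

def catmull_rom(x):
    """Catmull-Rom cubic: 4 taps, slightly negative lobes beyond one pixel."""
    x = abs(x)
    if x < 1:
        return 1.5 * x**3 - 2.5 * x**2 + 1
    if x < 2:
        return -0.5 * x**3 + 2.5 * x**2 - 4 * x + 2
    return 0.0

def lanczos2(x):
    """Two-lobe Lanczos: a sinc windowed by a wider sinc."""
    if x == 0:
        return 1.0
    if abs(x) >= 2:
        return 0.0
    px = math.pi * x
    return 2 * math.sin(px) * math.sin(px / 2) / (px * px)

def resample(signal, pos, kernel, radius):
    """Evaluate the signal at fractional position `pos` using 2 * radius taps."""
    first = math.floor(pos) - radius + 1
    taps = range(first, first + 2 * radius)
    weights = [kernel(pos - i) for i in taps]
    last = len(signal) - 1
    total = sum(w * signal[min(max(i, 0), last)] for w, i in zip(weights, taps))
    return total / sum(weights)  # normalise so flat areas stay flat

edge = [0.0, 0.0, 0.0, 0.0, 1.0, 1.0, 1.0, 1.0]
for name, kernel, radius in [("triangle", triangle, 1),
                             ("catmull-rom", catmull_rom, 2),
                             ("lanczos", lanczos2, 2)]:
    dark = resample(edge, 2.5, kernel, radius)    # just before the edge
    bright = resample(edge, 4.5, kernel, radius)  # just after the edge
    print(f"{name:12s} dark side {dark:+.4f}   bright side {bright:+.4f}")
```

The triangle filter stays within the 0 to 1 range, while the two sharper kernels dip below 0 on the dark side of the edge and rise above 1 on the bright side. An integer pipeline has to clip those values; a float pipeline can carry them along.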

Processing Bit Depth

Another critical region in pixel processing is the bit depth in which you are working. In 16 bit half float or 32 bit float processing pipelines you have a much higher level of headroom in which you can maintain information when color correcting. For instance, early in the pipeline you can blow out highlights in an image (as displayed on a monitor) only to bring them back later in the pipeline. Of course it is possible to hit a wall in this operation if you go too far, but you have a much greater range than with 8, 10, or 12-bit imagery.
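A small Python sketch of the headroom idea, assuming a simple gain operation and 8-bit integer storage as the point of comparison (the function names are hypothetical). Highlights are pushed up by a gain of 2 early in the pipeline and pulled back by 0.5 later.

```python
def grade_8bit(value, gain):
    """Apply a gain to an 8-bit code value, rounding and clipping to 0..255."""
    return max(0, min(255, int(value * gain + 0.5)))

def grade_float(value, gain):
    """Apply a gain in floating point; nothing is clipped."""
    return value * gain

highlights = [200, 225, 250]  # three distinct bright 8-bit code values

# Blow the highlights out, then bring them back down.
eight_bit = [grade_8bit(grade_8bit(v, 2.0), 0.5) for v in highlights]
floating = [grade_float(grade_float(v / 255, 2.0), 0.5) * 255 for v in highlights]

print("8-bit:", eight_bit)
print("float:", [round(v, 3) for v in floating])
```

In the 8-bit path all three values hit the 255 ceiling and come back as the same grey, so the highlight detail is gone. The float path returns the original values.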

16 bit half float OpenEXR pipelines are also ideal for working with imagery generated from 3D applications. While not directly related to colorspace, the integral support of multi-channel OpenEXR files in Nuke provides an efficient way of dealing with multipass 3D renders. Shake can also handle multi-channel OpenEXR files, but you can only assign 5 of these auxiliary channels to RGBAZ channels for each FileIn node; to access more than that you must have additional FileIn nodes. The Shake Z channel can handle unsigned integer channels (such as material ID or object ID) in the OpenEXR files and pass this along to plugins in the pipeline.

The ability to mix color resolutions within the same graph is also quite useful in many instances. Not only is this workflow flexible, but switching to lower bit depths when higher ones are not needed can speed up processing time. As Gary Meyer of D2 Software points out later in the story, they decided to convert all images to 32 bit float upon input in order to optimize their processing pipeline instead of supporting switching back and forth within a single setup.

All this being said, there are numerous facilities successfully working on feature films in pipelines optimized for lower bit depths. For television and broadcast work, 8-bit and 10-bit work can be more than adequate since the final result needs to be at this bit depth. You do, however, lose the flexibility of the increased color correction headroom of higher bit depths.

Conclusions and Application-Specific Notes

Before we begin, take our results with a grain of salt and do your own testing with the images we used or images you’re familiar with. The important thing is to do your own tests and know how to get the results you want from your imagery. What works really well in one application might not work in another.

First off, other than Photoshop (explained below), there really isn’t a clear “winner” for this particular test. As you can see from our test results, all apps obviously have an impact on the imagery. There are certainly ways to fix the tests and make one app look better than another, but what we tried to do is run the imagery through the same type of process in each application to see if a particular app was inherently better than another. The key is to know that degradation of imagery generally takes place every time you process a setup which has image transformation — and to know within your app how to minimize this processing.

Where this degradation occurs is not always clear as it can come at different steps in the process. Take flame, for instance. You’re not necessarily rendering imagery once in a batch setup. Each time that you have an action node in batch, it is possible you’re doing a form of rendering/pixel resampling and processing. I’ve seen batch setups with action node after action node feeding into the next. This can lead to the imagery being processed multiple times and really lead to a loss of image quality. This is certainly not a flame-only “feature” as the same holds true for other compositing applications. For instance, if you have transformation nodes separated from one another in either Shake or Fusion, processing can also occur multiple times within the same process tree.

Shake, Fusion, and others with a nodal approach can be clever about concatenating transformations through a 2D process tree. If you rotate an image 45 degrees CCW with a transform and then rotate it back 45 degrees later in the graph before other image processing occurs, the applications are intelligent enough to add up the transformations and process the image once, resulting in no rotation at all. For our tests, had we done all of the rotations within one process tree, you would have seen no loss of image quality.
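The idea behind concatenation can be sketched with ordinary 3×3 transform matrices. This is a conceptual illustration in Python, not a description of how Shake or Fusion implement it internally; the helper names and the multiplication order are our assumptions.

```python
import math

def rotation(degrees):
    c, s = math.cos(math.radians(degrees)), math.sin(math.radians(degrees))
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def translation(tx, ty):
    return [[1.0, 0.0, tx], [0.0, 1.0, ty], [0.0, 0.0, 1.0]]

def concatenate(*matrices):
    """Multiply 3x3 matrices left to right into a single transform."""
    result = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    for m in matrices:
        result = [[sum(result[i][k] * m[k][j] for k in range(3)) for j in range(3)]
                  for i in range(3)]
    return result

def needs_filtering(m, tol=1e-9):
    """True unless the matrix is nothing more than a whole-pixel translation."""
    linear_is_identity = (abs(m[0][0] - 1) < tol and abs(m[1][1] - 1) < tol
                          and abs(m[0][1]) < tol and abs(m[1][0]) < tol)
    whole_pixels = all(abs(t - round(t)) < tol for t in (m[0][2], m[1][2]))
    return not (linear_is_identity and whole_pixels)

# Rendered separately, each rotation resamples the image: two filter passes.
separate_passes = sum(needs_filtering(m) for m in (rotation(45), rotation(-45)))

# Concatenated first, the two rotations collapse to the identity: no filtering.
combined = concatenate(rotation(45), rotation(-45))
print("filter passes when separate:", separate_passes)
print("filtering needed when concatenated:", needs_filtering(combined))
```

The same reasoning applies to a chain such as `translation(0.3, 0)` followed by `translation(0.7, 0)`: each step alone lands between pixels, but the concatenated result is a clean one-pixel move.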

The concatenation also breaks down with the addition of 3D nodes in these apps, such as multi-plane in Shake, and Renderer3D in Fusion. Transforms are concatenated in these apps either immediately before or after the node. Each of these nodes renders the imagery and passes on a 2D image to the pipeline, an additional way of rendering images multiple times within a single graph. Because it is not found within a batch process tree, transformation concatenation is often not thought of as occurring in flame. This really isn’t true, since if you link multiple axes together within a single action setup, their transforms are essentially being concatenated.

Photoshop is an interesting case, as we could find no way to get the processed image to line up with the original image. You can see in the result of the difference matte, that the Photoshop result is easily the worst at not matching the original. The image is shifted less than a pixel, resulting in the poor result since I was unable to line it up. However, in looking at the result with the naked eye, it is not nearly as soft as the imagery coming from the compositing applications. While there are certainly some artifacts, the result could be considered superior in certain situations.

As far as general hints applicable across all applications, you might consider adding a blur to imagery before reducing in size. This reduces information in the imagery — information which you know would not be visible after a resize anyway. This can help greatly, especially in applications such as Flame and Toxik where you might be tempted to turn up Samples — which can soften the imagery. Also, a slight bit of sharpening of the imagery after rendering can be useful in many situations…and in many cases is actually preferred. Be careful to not over-sharpen as this can lead to some vibration and jittering of the images inter-frame over time. A slight touch is best.
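Why a blur before a reduction helps can be shown with a one-dimensional Python sketch. It assumes the simplest possible case, a half-size reduction of one-pixel-wide lines, with a two-pixel box average standing in for the blur; the function names are ours.

```python
def decimate_nearest(signal, factor):
    """Keep every Nth pixel with no filtering at all."""
    return signal[::factor]

def decimate_box(signal, factor):
    """Average each group of N pixels (a small blur) before keeping one value."""
    return [sum(signal[i:i + factor]) / factor
            for i in range(0, len(signal) - factor + 1, factor)]

fine_lines = [0.0, 1.0] * 8                   # one-pixel-wide lines
frame_a = fine_lines
frame_b = fine_lines[1:] + fine_lines[:1]     # same detail, moved by one pixel

print("nearest, frame A:", decimate_nearest(frame_a, 2))
print("nearest, frame B:", decimate_nearest(frame_b, 2))
print("box,     frame A:", decimate_box(frame_a, 2))
print("box,     frame B:", decimate_box(frame_b, 2))
```

Without the pre-filter the reduced image flips from black to white when the source moves by a single pixel, which is the boiling described earlier. With the pre-filter both frames settle on the same mid grey: the detail was never going to survive the resize, so removing it first costs nothing.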

What follows are application specific tips and notes.

tips for flame

Action is the location where degradation is going to occur but you can do things to minimize this softening. The Anti Aliasing setting is the item which can cause the most softening in imagery, so use 1 (or as few samples as possible). This setting is used to anti-alias edges on layers and geometry as well as further resample images which have been reduced in size.

According to Martin Helie, Product Specialist for Flame, “what happens under the hood with anti aliasing is that the camera is ‘exposing’ the scene n times (where n is from 1 to 64 samples) at slightly different XY positions (distance based on Softness) and blends those together.” Helie continues, “Although this will smooth out edges, it WILL also smooth out pictures (read: subtle blur). The default softness is 1; you may be able to get away with a value of .5 for Softness.” To avoid this it is quite simple to pass the imagery through as the background layer in the action setup, or to use the fill and matte of the geometry later in the processing pipeline.
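Helie’s description can be illustrated with a one-dimensional Python sketch. The spacing of the sample offsets here is purely our assumption (evenly spread across a span of `softness` pixels); flame’s actual sample pattern is not documented in this article, and the function names are hypothetical.

```python
import math

def sample_linear(signal, x):
    """Read the signal at fractional position x with linear filtering."""
    x0 = math.floor(x)
    f = x - x0

    def at(i):
        return signal[i] if 0 <= i < len(signal) else 0.0

    return at(x0) * (1 - f) + at(x0 + 1) * f

def multisample(signal, samples, softness=1.0):
    """'Expose' the signal several times at slightly shifted positions and blend."""
    # Assumed pattern: offsets spread evenly over a span of `softness` pixels.
    offsets = [((k + 0.5) / samples - 0.5) * softness for k in range(samples)]
    return [sum(sample_linear(signal, i + o) for o in offsets) / samples
            for i in range(len(signal))]

thin_line = [0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0]   # a single bright pixel
for samples, softness in [(1, 1.0), (4, 1.0), (4, 0.5)]:
    peak = max(multisample(thin_line, samples, softness))
    print(f"samples={samples} softness={softness}: peak value {peak:.3f}")
```

With one sample the line passes through untouched. With four samples the peak drops as the line spreads into its neighbours, and halving the softness recovers part of that loss.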

Bottom line: Use 1 Sample — or as few samples as you can get away with for clean edges.

A second item which can help clean up imagery is to turn surface Filtering OFF, bypassing the OpenGL bilinear filtering for an image during renders. This is really most useful for images which are full frame and/or repositioned and not animated. This is due to the fact that with filtering off the image is filtered using nearest (dirac/impulse) filtering, so moving in less than 1 pixel increments will basically bump it to the next pixel. For this reason, I generally like repositioning the image in whole number values just to make this clearer when working. Always turn Filtering off for full frame imagery; a good reminder that filtering is off for an item is to delete the axis which controls it. Do not resize objects which have Filtering off, as it won’t look good.

Bottom line: If you need to do a “simple” reposition and don’t want to degrade the image: Anti-Aliasing to 1 and turn Filtering off for the surface you are moving.

If you are working at NTSC or PAL resolution and are not moving your image, turn Filtering OFF to help keep image detail. You can then set anti-aliasing sampling to anything and your image will not be dramatically resampled and will remain clean. This is because the anti-aliasing method explained before has much less of an impact on setups outputting at NTSC/PAL size. When working in HD and higher resolutions, you will still get softening even with Filtering OFF. As mentioned above, turning softness to a value of .5 will further help to keep imagery clean.

When working in batch, use the Modular Keyer, Master Keyer, GMasks, Sparks, and combine items using Logic Ops whenever possible. This is because there is no filtering which needs to be done, since there are no transformations in 2D or 3D space. When you need to use transforms, feed the imagery into an Action node for final rendering and follow the tips above.

Finally, much is made of concatenation in other applications as an improvement over flame. In reality, parenting axes in action is almost identical, since as long as you apply the transforms before rendering, transforms will be concatenated. This is similar to having several transforms in a row in Shake (equal to parented axes in action) followed by an image processing node that breaks concatenation (equal to process action).

tips for Shake

One thing that is very useful to be aware of when working in Shake is the idea of concatenation of transformations. When transformations within a Shake script are linked immediately one after the other, the software is intelligent enough to add/subtract these values between the various transform nodes and provide one “rendered solution”.

Click on the image to the right to see an example we created to illustrate this workflow. The left side shows three viewers of imagery at different points in the process tree. The top image is the original image. The middle image shows the result of what happens when you link two rotations together one after another. In this case they cancel out, leaving a result image which is identical to the original. The bottom image is the last node in the right side flow. Note that there is a color correct node (essentially a pass-through with no change in values) in-between the two rotations. Because there is a node between the two rotations, no concatenation is possible and the image is processed twice.

The Move2D node has filtering options for transformations and scaling, which were chosen based upon experiences with visual effects artists and doing tests of filters. “This means that we’ve chosen the filters that were judged to work best with the following criteria: sharpness-to-softness trade-off, and animation,” says Dion Scoppettuolo, Senior Product Manager of Shake. “Some filters look sharper than our default ones, but don’t necessarily animate very well,” Scoppettuolo adds. “This also applies to our Blur node – it’s been specially tweaked to avoid pops during animation – animate a Blur on a Checkerboard to verify. Our transform filters are also axis-independent – if you expand in X but shrink in Y, you get two different filters simultaneously. This is also adaptively applied during a CornerPin, but that’s less conceptually obvious.” Filtering options in Shake include box, dirac, gaussian, impulse, lanczos, mitchell, quad, sinc, and triangle.

If images are transformed in integer values, there is no filtering done…this happens automatically within Shake. The same holds true for 90 degree rotation and -1 scales done within the process tree.

Bottom line: Transform/Stabilize/Adjust operations should be done in one continuous chain whenever possible.

Finally, Scoppettuolo points out that “the new Adaptive tools for retiming in Shake 4 are opening up a new area for us, which is re-mastering, which is changing the speed, interlacing, and, germane to this conversation, image size.” The optical flow analysis allows the software to achieve extremely good quality when resizing entire images in a re-mastering scenario, as opposed to general fx transforms like match-moving. In the comparison to the right, note the white diagonal strut in all 3 images and compare the text under the wing – it’s largely illegible in the first 2 versions. To get access to this scaling, use the FileIn’s Timing subtab and set reTiming to ‘Convert’.

tips for fusion

Fusion’s workspace will take into account adjacent transforms and concatenate values whenever possible. As in Shake, it’s important to keep in mind that processing nodes in-between transforms break this capability. “Whenever I start out teaching Fusion, or even with experienced artists,” says Eyeon’s Isaac Guenard, “I emphasize that it is best to do your color corrections in a row and then apply transformations.”

Filtering options for the Scale node in Fusion include Box, Bi-Linear, Bi-Cubic, B-Spline, Catmull-Rom, Gaussian, Mitchell, Lanczos, Sinc, and Bessel. An important caveat is that the Scale functions will not concatenate between nodes, so scale once and be done or use the Transform node to do scaling. Of course, if you use the Transform node you lose the node by node filtering options, but this is a call you need to make depending upon your scene and imagery.

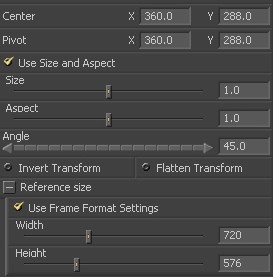

As in other applications, moving imagery in one pixel increments does not resample an image since pixels line up. In previous versions of Fusion this wasn’t very straightforward — users needed to use functions or formulas to determine how far to move a layer since the coordinate space is based on zero to one. In Fusion 5, there is an option in the Transform menu to turn on “Frame Format”. With this item checked, the display of positioning and pivot information switches to coordinates which match the image you’re moving.
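For anyone still working out whole-pixel moves by hand in a zero-to-one coordinate space, the arithmetic is short. This Python sketch assumes only that a full image width (or height) corresponds to a normalized distance of 1.0; the function names are hypothetical and not part of Fusion.

```python
def pixels_to_normalized(pixels, image_size):
    """Convert an offset in pixels to an offset in zero-to-one coordinates."""
    return pixels / image_size

def snap_offset_to_pixels(normalized_offset, image_size):
    """Round a normalized offset to the nearest whole-pixel offset."""
    return round(normalized_offset * image_size) / image_size

WIDTH = 2048  # assumed image width for the example

three_pixels = pixels_to_normalized(3, WIDTH)
print("3 pixels in normalized units:", three_pixels)

typed_by_hand = 0.0015  # looks close, but is really 3.072 pixels
print("0.0015 in pixels:", typed_by_hand * WIDTH)
print("snapped to the pixel grid:", snap_offset_to_pixels(typed_by_hand, WIDTH))
```

A value typed by eye, such as 0.0015, lands between pixels and so triggers filtering, while the snapped value does not.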

Be aware of the fact that the new Renderer3D does what it says — and renders an image. So avoid passing the same imagery through these nodes or using 3D transforms to do simple 2D transformations.

There is a “high quality” setting [HQ] which is on by default in the render setup dialog box. Make sure this is on.

tips for toxik

There are many similarities between Action and Reaction in that passing imagery through multiple Reaction nodes can lead to image degradation. Parented Axis and Layer transformations within a Reaction node are concatenated similarly to the way they are concatenated in flame’s Action module.

Filippo Tampieri of Autodesk recommends using the output filter options only when you are working with a lot of geometry. “Basically, advanced 3D renderers (including Reaction) actually render geometry at a much higher resolution than the one you are asking for and then filter it down just before output,” says Tampieri. “The filter menu options you see in the output panel of Reaction allows you to choose which filter to use for that output stage. However, compositions are typically done with relatively simple geometry and often with layers that have an alpha channel that cuts out a piece of the rectangular image that is mapped on a layer; for this case, using the filter options on the output will not really improve perceived image quality; rather, they risk of making the result blurrier.”

There are UV filtering options for each and every material in a Reaction node. While “Best” is generally the way to go, a value of “Medium” filtering can sometimes lead to a result image which is sharper than “Best”, but well filtered. It is useful that this setting travels with each material, as your results may vary. You should change this setting to “Off” if you are repositioning items in one pixel increments; this way your image will not be degraded. This is the only way to avoid degradation: if you move in 1 pixel increments but have the material filtering setting on “Medium” or “Best”, your image will be subject to filtering.

Filter settings for the entire Reaction node (Reaction Output->Rendering->Filter) are only used when the Anti-Aliasing button is on. When this button is on, Filter=Box will keep your imagery clean.

Samples is used for either Depth of Field or Motion Blur, and is only active when one of these items is turned on. When active, the result is rendered “number of Samples” times and the results are averaged together. While they have the same name, this is unlike flame’s Action Samples setting, which does affect Anti-Aliasing.

Image Filtering (Reaction Output->Rendering->Image Filtering) turns on/off the filtering of the textures (not of the geometry). This is a global control which impacts all materials in the scene. When this is off, it does not matter what you selected at the Material level; all image sampling will be nearest sample at that point.

So, to keep things as sharp as possible, but high quality: Filter=Box, Anti-Aliasing=ON, Image Filtering=ON. Then control the texture filtering on the material to get the best results possible.

One final note. The background image in Reaction is processed based upon the filtering and Samples settings for the node. This is unlike flame or inferno where the background image is passed through without filtering. If you want to pass a background image through cleanly, set Output->Rendering->Samples to 1 and keep Output->Rendering->Filter set to Box. If you are unable to do this for image quality reasons, do your composite *after* reaction using a Blend & Comp node.

Panner, Orient, and Flip are 2D transform nodes in the Toxik procedural schematic which can reposition the image without degradation. However, they are not designed to be animated.

tips for Nuke

Nuke takes a slightly different approach to image processing, in that Nuke takes every input file and converts to linear 32 bit float. According to Gary Meyer of D2 Software, the reason for this is straightforward: working all the time in linear 32 bit float ensures proper calculations throughout a script. “D2 Software is less concerned about having the ability to switch between bit depths,” says Meyer, “preferring to maintain image quality and relying on Nuke’s speed to minimize any negative effects of this practice”.

Nuke follows the same convention as other desktop compositing apps in that inserting operators other than transforms will break transform concatenation (see the discussions above in the Shake and Fusion sections). Nuke also has a selection of filters to choose from (Impulse, Cubic (default), Keys, Simon, Rifman, Mitchell, Parzen and Notch), giving artists flexibility depending on the type of movement or image in the shot. One thing to watch out for is that Mitchell, Parzen and Notch are not interpolative, so the image will change even if there is no movement of the image.
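What “not interpolative” means can be seen from the kernel weights alone. The Python sketch below assumes the standard Mitchell-Netravali family of cubic filters, where B = C = 1/3 gives the usual Mitchell filter and B = 0, C = 0.5 gives Catmull-Rom; whether Nuke’s filters use exactly these parameters is not something this article confirms, and the function names are ours.

```python
def bc_cubic(x, b, c):
    """Mitchell-Netravali cubic kernel for parameters (b, c)."""
    x = abs(x)
    if x < 1:
        return ((12 - 9 * b - 6 * c) * x**3
                + (-18 + 12 * b + 6 * c) * x**2
                + (6 - 2 * b)) / 6
    if x < 2:
        return ((-b - 6 * c) * x**3
                + (6 * b + 30 * c) * x**2
                + (-12 * b - 48 * c) * x
                + (8 * b + 24 * c)) / 6
    return 0.0

def filter_in_place(signal, b, c):
    """Resample with zero offset: every output pixel sits exactly on an input pixel."""
    last = len(signal) - 1
    return [sum(bc_cubic(i - j, b, c) * signal[min(max(j, 0), last)]
                for j in range(i - 2, i + 3))
            for i in range(len(signal))]

dot = [0.0, 0.0, 1.0, 0.0, 0.0]
catmull_rom = filter_in_place(dot, 0.0, 0.5)
mitchell = filter_in_place(dot, 1 / 3, 1 / 3)
print("Catmull-Rom, no movement:", catmull_rom)
print("Mitchell,    no movement:", [round(v, 4) for v in mitchell])
```

The interpolating kernel has a weight of exactly 1 at the pixel itself and 0 at its neighbours, so an unmoved image comes back unchanged. The Mitchell kernel weights the centre at 8/9 and each neighbour at 1/18, which softens the image slightly even with no transform applied.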

It is also worth noting that in the upcoming version of Nuke, when working in 3D space there are now separate transform operators, as opposed to transforms being part of the geometry that is imported. These transforms also concatenate exactly as they do in 2D space, which is important to keep in mind as you apply your materials and shaders; they will break the concatenation, exactly as color ops will in 2D space. Also worth noting is that the color operation-based geometry modifiers in Nuke’s 3D space do not concatenate at all. And a scanline render inserted will of course process the image at that point.

tips for Combustion

In combustion, every operator produces its own output; there is no grouping of the processing. Operators that do not make any transforms will not degrade the image (Keyer, CC, Edit, Timewarp, paint, …).

Repositioning in increments of “1” will not resample the image. According to Eric Brown of Autodesk, “If you are doing two 45 degree rotations and you want to have the least degradation, make sure your aspect ratios are all square, in the footage, and the composite. The aspect ratio will not rotate with the image giving you the same effect as a resize. If you comp a layer that has an odd dimension into a comp with an even dimension and vice versa (720×480 with 721×481) you will get some filtering since the layer will be centered on a half pixel giving you the same effect as a pan of 0.5.”
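The half-pixel case Brown describes is simple arithmetic, sketched here in Python under the assumption that a layer is centred by splitting the size difference evenly on both sides (the function names are hypothetical).

```python
def centering_offset(layer_size, comp_size):
    """Distance from the comp edge to the layer edge when the layer is centred."""
    return (comp_size - layer_size) / 2

def lands_on_half_pixel(layer_size, comp_size):
    """True when one dimension is odd and the other even."""
    return (comp_size - layer_size) % 2 == 1

for layer, comp in [(720, 721), (480, 481), (720, 1920), (481, 721)]:
    offset = centering_offset(layer, comp)
    note = "filtered (half pixel)" if lands_on_half_pixel(layer, comp) else "clean"
    print(f"layer {layer} in comp {comp}: offset {offset} -> {note}")
```

Matching the parity of layer and composite dimensions (both odd or both even) keeps the centred layer on the pixel grid.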

tips for After Effects

The important thing to remember is that a pre-comp is exactly that — a pre-processed composite. If you want to avoid unnecessary image degradation, limit the nesting of composites within composites.

If you want to avoid resampling an image in After Effects, you can move the layer in 1 pixel increments.

notes

The following are notes regarding some of the application setups for these tests. Again — the idea here is for you to try this on imagery you are familiar with, using your ideal settings. Your results may vary dramatically:

For processing on the flint and flame systems, rendering was done both with Texture On and Texture Off. Anti-aliasing Samples were set to 1.

For Photoshop, the final image did not line up exactly with the original and instead was shifted slightly. We tried the test several times but obtained the same results.

For After Effects “best” settings were used.

The Digital LAD test image is a Kodak test image used for setting up film workflows. You can find more details about this image by visiting the Kodak web page.

The image rue.tif was transferred on a Spirit 4k with a DaVinci 2K color corrector to D5. It is part of the StEM test footage jointly developed by the American Society of Cinematographers Technology Committee in partnership with the Digital Cinema Initiative. You can find out more information here.

Footnotes:

(1) Shake4 User Manual, © 2005 Apple Computer, Inc. All rights reserved.