We are proud to discuss the latest work from the ICT in LA. If you loved our Art of HDR story, this is the latest research, which will be shown at Siggraph on 30th July. We speak to Per Einarsson and Sebastian Sylwan, who worked with Paul Debevec and the rest of the team at ICT to take the HDR Lightprobe / light stage to the next level. Jeff Heusser and Mike Seymour from fxguide were lucky enough to tour LS6 just after it first became operational.

A short while ago, while the project was still under wraps, Jeff Heusser and Mike Seymour toured the research labs of ICT in LA. Paul Debevec showed the fxg crew around the massive new LS6. At the time the rig was only just operational and the team were madly finalising their research for a Siggraph submission. Since then the research has continued, and this week we spoke to Per Einarsson about his role in LS6 and the areas of research the team explored. This is coupled with a high-resolution video showing LS6 in action.

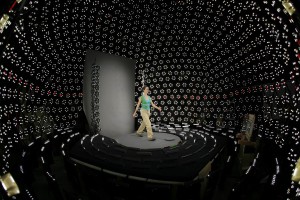

LS5 could record an actor from the waist up delivering a short performance and then relight the performance in postproduction to match any lighting setup, since the performance was shot with a vast array of lighting combinations per second. LS6 goes much further. In LS6 actors can now walk or run, and this is captured not only with a vast array of lighting combinations per second but also by multiple cameras, with the actor on a turning treadmill.

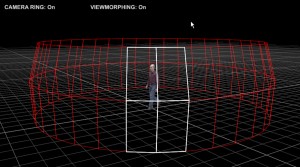

The result is that you can dial up in post ANY lighting and virtually ANY camera height or ANY camera angle on the performance! Again, like LS5, this is a research project and not intended as a flawless practical stage. Rather, the team are solving the issues needed to advance the research to places not tried before. Work from LS2 was actually used in Superman Returns, released this month, but the team’s focus is on research, not direct production.

fxg: Per Einarsson and Sebastian Sylwan, thanks for talking with us. Firstly, LS6 is amazingly impressive; just walking around on the stage is an amazing experience. What was each of your involvement with Light Stage Six (LS6)?

SS: Paul and I had discussed the possibility of a larger light stage from the moment we met. When I decided to quit my job in Italy to move to California, it was to help Paul make this come true. I was called in to coordinate the efforts at the beginning and to make the Light Stage 6 project happen. I took care of the design of most parts of the light stage, in close collaboration with Paul. Basically I was taking Paul’s specifications and wishes and making them come true whenever possible, on time and on budget.

PE: I was leading the software research for processing and rendering the data that we captured in LS6.

fxg: Just what are the dimensions of the final LS6?

SS: It is 8 meters wide and 5.60 m high.

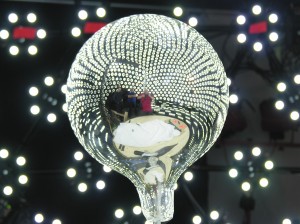

PE: Yes, it’s the top 2/3 of an 8 meter geodesic dome.

fxg: How has LS6 advanced the art from the work you and the team published on LS5?

PE: For the first time we can capture full-body human performances with time-multiplexed illumination and high-speed photography. This gives us the ability to modify a performance in post-production and view it under any desired lighting condition and from any desired camera view point. We call this post-production control of viewpoint and illumination, and it’s a step towards being able to simulate truly photorealistic humans in computer graphics.

SS: I guess that’s 2 different questions. One is on the engineering side. I think there are very few CG research projects that have a system of this complexity.

On the research side, I believe we put together many techniques, not necessarily innovative in themselves, that were tied together for the first time to produce a great result. Have you seen the movie? You’re the artist, you should answer this 😉

fxg: So the actor is walking and being rotated, and filmed with simultaneous cameras. How complex was it to have multiple cameras at multiple heights this time and move between them?
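A note on why time-multiplexed illumination allows relighting in post: light adds linearly, so an image under any lighting can be approximated as a weighted sum of images shot under individual basis lighting conditions. The sketch below illustrates only that general principle. The function name `relight`, the flat pixel lists and the weights are hypothetical, and this is not a description of the ICT team's actual software.

```python
def relight(basis_images, light_weights):
    """Return the weighted sum of basis images (one image per lighting condition).

    basis_images: list of images, each a flat list of pixel intensities.
    light_weights: one weight per basis image, describing how strongly that
    lighting condition contributes to the target lighting (hypothetical values).
    """
    if len(basis_images) != len(light_weights):
        raise ValueError("need exactly one weight per basis image")
    relit = [0.0] * len(basis_images[0])
    for image, weight in zip(basis_images, light_weights):
        for i, value in enumerate(image):
            relit[i] += weight * value
    return relit


# Two toy 3-pixel images of the same pose, each lit by a different light.
basis = [
    [1.0, 0.0, 0.5],  # pose lit only by light A
    [0.0, 1.0, 0.5],  # pose lit only by light B
]
# Target lighting: light A at 25% intensity, light B at 75%.
relit = relight(basis, [0.25, 0.75])
```

In practice the weights would come from sampling the desired lighting environment, for example an HDR light probe, in the direction of each light source.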

PE: Not very complicated. We simply place cameras on tripods just outside the stage or attach them to the stage directly if we want them very high up. All three cameras are synced to the stage so that they automatically expose at the same rate as the light sources in the stage.

fxg: How did the LEDs differ over the previous, much smaller LS5?

SS: They’re the same kind. There’s a lot of them. They’re overdriven almost 3 times. They have a single trigger/power regulator per light. They have a much larger heatsink. There’s more of them per angle unit (i.e. potentially more resolution). “The smarts”, or the microcontrollers, are distributed (in LS5 there was 1, there are 76 in LS6). They’re faster.

fxg: Did people find the turntable/treadmill hard, as you needed them to be as cyclic as possible in their performance?

PE: It took a little practice, but after a while people found it relatively easy. To help the actor walk as repeatably as possible we played a metronome sound so they could keep their pace steady. We also put tactile grooves under the treadmill belt so they could feel where they were on the treadmill without having to look down.

fxg: How difficult was it to close the loop and make a continuous walking cycle, or did you not end up with a walk cycle in some cases and just use continuous footage?

PE: We close the loop of the walk cycle. Every single frame that we render is from an arbitrary 3D camera position, which means that we can view any “pose” of a locomotion cycle from any viewpoint, so rendering a continuous locomotion cycle follows naturally from that.

SS: It was definitely one of the biggest challenges. I believe by the end we managed to find small solutions that allowed the performers to feel comfortable even at 4am, with no sleep the night before, and walking in this thing.

fxg: Optical flow was part of the published work for LS5. Were there any optical flow advances in LS6?

PE: We used known optical flow techniques in this work. We do, however, have experience with using the lighting information that we captured in LS6 in the optical flow algorithm, and we see great potential in that for improving the robustness of optical flow.

fxg: And how many LEDs or lights are used?

PE: Each light source consists of 6 LEDs. There are 901 light sources on the stage itself that simulate distant illumination from the upper hemisphere. Then there are another 140 light sources at the virtual floor level of the sphere that simulate the light distribution from a diffuse ground plane, which means that they cast very little light toward the subject’s feet and then increasingly more light towards the upper body and the head, just like the ground would do in real life.
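As a quick tally of the figures Per quotes, the totals can be worked out as below. The variable names are ours, and the totals assume that the 140 floor-level sources also use 6 LEDs each, which is how we read his answer.

```python
LEDS_PER_SOURCE = 6   # "Each light source consists of 6 LEDs"
DOME_SOURCES = 901    # sources on the stage itself (upper hemisphere)
FLOOR_SOURCES = 140   # sources at the virtual floor level

# Assumption: floor-level sources are built like the dome sources.
total_sources = DOME_SOURCES + FLOOR_SOURCES
total_leds = total_sources * LEDS_PER_SOURCE

print(f"{total_sources} light sources, {total_leds} LEDs")
```

That comes to 1,041 light sources and 6,246 LEDs.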

fxg: What was the maximum capture resolution of the cameras on LS6?

PE: The cameras that we use now can capture images at 800×600 resolution, but we are just about to incorporate new high-speed cameras from Vision Research which can capture at high speed with HD resolution.

fxg: What was the cameras’ frame rate for capture?

PE: Approximately 1000 fps. It gave us an effective (relightable) frame rate of 30 fps.
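Those two numbers imply a budget of roughly 33 captured frames for each relightable output frame. The sketch below is just that division. Both rates are approximate, and the interview does not say how the captured frames are divided up within each output frame, so treat the result as an upper bound on lighting conditions per frame rather than a published figure.

```python
capture_fps = 1000     # "approximately 1000 fps"
relightable_fps = 30   # effective relightable frame rate

# Captured frames available for each relightable output frame.
frames_per_output = capture_fps / relightable_fps
whole_frames_per_output = capture_fps // relightable_fps

print(f"about {frames_per_output:.1f} captured frames per relightable frame")
```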

fxg: What next for your research?

PE: We would like to extend our rendering technique to deal with more general motions. So far we have only experimented with cyclic motions such as people walking or running. That gave us an early opportunity to explore 7-dimensional data for the first time with variable time, lighting and viewpoint. It would be great to extend this to any arbitrary human performance.

Thanks so much for talking to us.

fxg.

NB: Sebastian Sylwan is now at Digital Domain

You can download the first LS6 paper and video here

movielink(06Jul/ls6/lightstage6.mov, Download a nice QuickTime overview of LS6)