In the new Disney film Maleficent, a once-good fairy becomes the ‘Mistress of All Evil’ and curses the infant Princess Aurora (Sleeping Beauty). Directed by Robert Stromberg, the film moves between the human and ‘faerie’ worlds, and its visual effects relied on complex facial capture and animation work, along with detailed digital characters and environments. Overall visual effects supervisor Carey Villegas recruited Digital Domain and MPC to complete the lion’s share of the effects work, assisted by Method Studios, The Senate and an in-house team. The Third Floor delivered previs and postvis for the film.

Above: watch a breakdown of DD’s flower pixies effects work in this video produced by fxguide in collaboration with our media partners at WIRED.

Digital Domain’s facial features

Alongside impressive digital shots of Maleficent herself, environments and other work, visual effects house Digital Domain (DD) was tasked with producing believable flower pixies played by Imelda Staunton, Lesley Manville and Juno Temple. In the film the pixies appear both as normal-sized human characters and as smaller versions of themselves in more traditional ‘faerie’ proportions.

Making natural-looking faeries was all the harder because the audience sees the actresses at normal size as well as in their smaller faerie forms. The skin texturing, eyes and facial animation therefore had to be exceptional, as the audience had a very immediate visual reference to compare against.

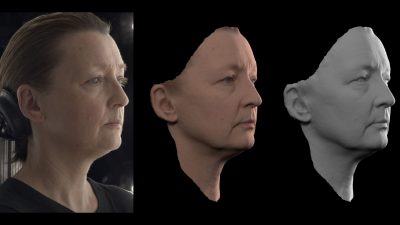

DD breakdown for the character of Thistlewit.

The pipeline solution that DD constructed, partly driven by schedule and informed by years of experience, was innovative for its seeming redundancy and its effectiveness in problem solving. Instead of building the digital models of the faeries from the real actresses and then animating and lighting them, as one might traditionally, DD built, animated and lit digital replicas of the actresses, looking as they do in real life. Then, once those faces looked believable, the animated faces were re-targeted to the new proportions of the faeries.

To be clear, no audience member will ever see the digital replicas of the actresses, only the final re-targeted faeries. The genius of the approach lay in how it helped with fault finding and accommodated last-minute changes to the faerie designs. Had the animation and lighting been done directly on the faeries from the start, it would have been hard to work out why a face was not working in any given scene, and any change to the faerie design could have meant redoing large amounts of animation. With this approach, the team could make sure the faces worked first, with no re-targeting involved, and then compare the digital faces, lit using image based lighting, side by side with real shots of the actresses.

Re-targeting happened only once any issues with the digital faces had been sorted out, so a problem with realism could be traced much more easily to either the original matching or the re-targeting. The approach was born of a schedule that simply did not have a final faerie design when the DD team started work. This pushed the team into what could be called a redundant intermediate step of animated natural faces, except that the step proved to be a time-saving innovation: any late design change required only a new re-targeting, not the loss of the animation and lighting work already done.

Villegas, who had previously worked at SPI and Digital Domain, had a lot of experience with complex characters from films such as Alice in Wonderland. He explored the idea of using real faces and distorting them for the flower pixies, an approach that had worked extremely well for him on Alice, but opted instead for a more advanced 3D solution with Digital Domain. He was instrumental in pushing the subsurface scattering (SSS) and pose-to-pose accuracy that led to the advances seen in Maleficent. Villegas joined the project before it was officially greenlit, and worked closely with Robert Stromberg, a former VFX designer and art director.

Character study.

On character design and how to realize the characters on screen, Villegas told fxguide that with “Robert being such a visual person, he came up with a lot of the designs for the characters himself, or at least the first pass.” This was Stromberg’s first film as director, and he worked with Dylan Cole on the designs. “For the concept art,” adds Villegas, “you are not talking about pencil sketches – you are talking about arguably two of the best matte painters in the world, they were just under photoreal renditions!”

Prior to this project, Villegas had been working at DD on Paradise Lost, the now cancelled Alex Proyas project. Before it was halted, that film was aiming to reset the bar for believable facial animation of digital characters. “With Alex it was all about taking facial animation to the next level,” says Villegas, “so for the last eight months prior to Maleficent that’s what I was focused on.” With that momentum, it was only natural that Villegas would want DD to handle the digital facial animation in Maleficent. Kelly Port was the visual effects supervisor for Digital Domain, heading up a team that built on DD’s earlier work in films such as The Curious Case of Benjamin Button and TRON: Legacy.

Stage 1: Scanning at USC ICT

DD has significant experience in creating CG humans, but the 21-inch-high flower pixies in Maleficent required a more complex facial range and considerably more dialogue than any character DD had previously attempted. “We knew from the beginning of this project that there would be intense scrutiny on ensuring the likenesses of the actors in their pixie forms,” explained Port. “To overcome this we embarked on building CG versions of the actors’ faces that were indistinguishable from the real actors before we even started building the pixies. These CG versions of the actors were detailed down to the finest wrinkle and pore. We also did extensive analysis on the actors’ skin under over 300 light positions and ensured that our CG actor skin matched at every level.”

DD has a long-standing creative and technical partnership with USC’s Institute for Creative Technologies (ICT) and Dr Paul Debevec’s team there. Maleficent represents one of the largest projects ICT has worked on: the team scanned many actors for the film in addition to the three flower pixies. Imelda Staunton, who plays Knotgrass, was quite the personality, and charmed the technical crew during production and scanning. She is a veteran of visual effects, having co-starred in the Harry Potter films as the evil but very pleasant Dolores Umbridge. Several VFX professionals commented on the complexity of her skin, and as she was one of the first of the flower pixies to be scanned (along with Lesley Manville as Flittle), she acted as a proof of concept for many of the techniques Villegas was keen to explore.

ICT did not use its newest micro-geometry capture techniques, as the original scanning was done in 2012, but in addition to generating pore-level scan data, the Light Stage scanning process produced light field data for an image based lighting solution, which would become key later in the process. This initial work gave DD a more indirect but much more accurate technical pipeline. Each principal actor had approximately 40 FACS poses scanned in the Light Stage, and the neutral and FACS poses fed into a face shape network.
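The article does not detail how ICT turns its Light Stage captures into relit imagery, but the general principle behind this kind of image based relighting is well established: because light transport is linear, a face photographed under each light position in turn can be relit for a new environment by taking a weighted sum of those one-light-at-a-time images. The Python sketch below illustrates only that principle. The function name `relight`, the tiny two-by-two "images" and the weights are hypothetical, and a production pipeline would work on calibrated high dynamic range colour data from hundreds of light positions rather than grayscale lists.

```python
from typing import List, Sequence

# A tiny grayscale "image": rows of pixel values.
Image = List[List[float]]


def relight(basis: Sequence[Image], weights: Sequence[float]) -> Image:
    """Relight a subject from one-light-at-a-time (OLAT) basis images.

    basis[i] is the subject photographed with only light i switched on.
    weights[i] is how bright the target environment is in the direction
    of light i. Light transport is linear, so the relit image is the
    weighted sum of the basis images.
    """
    if not basis or len(basis) != len(weights):
        raise ValueError("need at least one basis image and one weight per image")
    rows, cols = len(basis[0]), len(basis[0][0])
    out = [[0.0] * cols for _ in range(rows)]
    for image, w in zip(basis, weights):
        for r in range(rows):
            for c in range(cols):
                out[r][c] += w * image[r][c]
    return out


# Two hypothetical 2x2 captures: key light from the left, then from the right.
lit_left = [[1.0, 0.2], [0.8, 0.1]]
lit_right = [[0.1, 0.9], [0.2, 0.7]]

# A target environment that is dim on the left and bright on the right.
print(relight([lit_left, lit_right], [0.5, 2.0]))
```

Because each captured image is simply scaled and summed, the same captures can be reused for any number of lighting environments, which is what makes a side-by-side comparison under matched lighting practical.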

“The way we did that was having an apples to apples comparison,” says Villegas, “from actor to their digital counterpart. This was really key for me. We built each of the performer’s facial shapes 1 to 1 with the real actor, so we did not go directly to an off-proportioned pixie; it was really important to me that we modelled each actor perfectly. So we made a digital Imelda that looked just like Imelda and we did not go straight to some translation. And even more so – we did all the animation on this digital Imelda first. Only when that worked could we go to the differently proportioned character. I got some ‘stick’ for doing this, but it was really important to me.”

Interestingly, Villegas says he learnt this approach of side-by-side matching before re-targeting from his mentor, VFX legend Rob Legato. When Villegas was working for Legato on Bad Boys II, they needed to prove to director Michael Bay that they could produce photorealistic digital cars. Legato arranged a test using Bay’s own white Ferrari with a stunt driver at Santa Monica airport. The two then matched the car and put a digital Ferrari on screen side by side with the original. Bay greenlit the approach when he could not tell which car was real, i.e., which car was his! “Of course,” says Villegas, “this is a few years ago and it wasn’t just the look – it is the animation and timing – everything that you needed to do.” Much the same is true today. Villegas did not just match the actresses with stills of their digital selves; he animated the digital faces to match the source clips of the actresses perfectly before moving to the smaller re-targeted pixies. “It just eliminates guess work,” he states.

Stage 2: On set capture

The ICT data dovetailed with a new head-mounted camera rig used to film the actresses on location in London. The production built a motion capture stage in London, and worked with Disney Research Zurich to gather additional key facial data using the Disney Medusa facial capture rig. On set, the actors were filmed by four head-mounted cameras as they delivered their lines, even when they were in flying harness rigs. They would then come down and deliver the same lines again on the ground, allowing a second capture that mapped the face more accurately between the key expressions. The head rig used new higher resolution HD cameras running at 60Hz for greatly improved results. The Medusa rig was an “excellent reference as to how particular FACS shapes transition from one face shape into another,” says Digital Domain virtual production supervisor Gary Roberts. “With so many muscles in the face relaxing and compressing with overlapping time frames, the transition from shape A to shape B does not happen in linear fashion, so it is enormously helpful to see a 3D record of that transition in the form of a geometric mesh.”

This video shows the on-set performance capture and build for Flittle.

The Medusa rig, a stereo photogrammetry setup that does not require dots or markers, allowed DD to get a coherent moving mesh, which proved invaluable. Explains Port: “It is one thing to go from FACS pose one to FACS pose two, but it is an entirely new reference to be able to see how that transition happens, because not all muscles fire at once. They are offset from one another. This is information that prior to this had not really been known. That reference was extremely helpful in seeing how poses blended one to another.”
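To make the idea of offset muscle timing concrete, here is a minimal Python sketch contrasting it with a straight linear blend. It assumes, hypothetically, that each vertex belongs to a named facial region and that each region eases from shape A to shape B with its own delay and duration. The region names, timings and the `blend_offset` function are invented for illustration; DD's actual rig was informed by the Medusa mesh data rather than hand-set curves like these.

```python
from typing import Dict, List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def smoothstep(x: float) -> float:
    """Ease-in/ease-out curve, clamped so it stays in [0, 1]."""
    x = min(max(x, 0.0), 1.0)
    return x * x * (3.0 - 2.0 * x)


def blend_offset(shape_a: Sequence[Vec3], shape_b: Sequence[Vec3],
                 vertex_region: Sequence[str],
                 timing: Dict[str, Tuple[float, float]],
                 t: float) -> List[Vec3]:
    """Blend from shape A to shape B with per-region timing.

    t runs from 0 to 1 over the transition. timing[region] is a
    (delay, duration) pair, so each region starts moving at its own time
    instead of every vertex following the same linear weight.
    """
    out: List[Vec3] = []
    for a, b, region in zip(shape_a, shape_b, vertex_region):
        delay, duration = timing[region]
        w = smoothstep((t - delay) / duration)
        out.append(tuple(pa + w * (pb - pa) for pa, pb in zip(a, b)))
    return out


# Hypothetical two-vertex face: one brow vertex, one mouth-corner vertex.
neutral = [(0.0, 0.0, 0.0), (0.0, 0.0, 0.0)]
surprise = [(0.0, 1.0, 0.0), (0.0, -2.0, 0.0)]
regions = ["brow", "mouth"]
timing = {"brow": (0.0, 0.4), "mouth": (0.3, 0.7)}  # brow leads, mouth follows

for t in (0.0, 0.2, 0.5, 1.0):
    print(t, blend_offset(neutral, surprise, regions, timing, t))
```

At t = 0.2 the brow is already halfway to its raised position while the mouth corner has not started to move; a single linear weight would have moved both by the same fraction.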

This information fed into the facial rig system driven by the four-camera helmet rig. The motion capture system on the mocap stage could record all three flower pixie actresses simultaneously, which made for strong performances and accurate timing.

The team used the captured FACS shapes from each of the flower pixie actresses to build very accurate 1:1 actor facial animation rigs and a closely matching flower pixie character rig for each character. “This ensured we could map the actor’s performance from the performance capture session (utilizing our 4 camera head mounted camera system) to the facial animation rig to an extremely high level of accuracy,” says Roberts. “The face shapes from the Medusa system (used on the ground) allowed the team to build highly complex and very detailed statistical models of the actor’s faces and necks which were also used to help process the video data from our head mounted camera systems from the first video capture performance (used when the actresses were first captured) in flying rigs etc.” The Medusa system also gave DD very accurate surface information for the necks as well as the faces, which is so important for believable facial animation and performance from the motion capture sessions.

From capture to final.

The key to both the ICT stage and the on-set motion capture process is not just to get great data on key FACS poses but to get the mesh moving coherently between poses. The team needed to see how each transition happens, since not all the muscles move at once, so the timing, exactly how the shapes blend, and how the pores of the skin stretch between key poses were a real focus for this project.

Stage 3: Modelling and rigging

The normal pipeline for a digital character is often to take the motion capture data and re-target it to the digital character, adjusting from the proportions of the real actor to the new proportions of the character. Here Digital Domain decided not to re-target directly. Instead, the team built a fully lifelike replica of each actor and rigged that digital clone before re-targeting.

“We were also able to capture the way the different layers of the actor’s skin looked,” says Port, “and evolve our skin shaders so that our CG actor’s skin had the same layer makeup and therefore looked exactly like the actors. Not only did these CG versions look like the real actors but also they had to perform exactly like them.”

Asset development.

The human face is capable of a huge number of shapes and expressions, so the team had to ensure that the CG versions of the actors matched the real actors’ facial range. “We were also extremely lucky or unlucky in that our actors had very malleable faces,” adds Port, “which required a far greater facial range and a greater level of control than we had ever previously attempted.”

All this work was crucial to building a foundation, even though these digital versions of the actors would never be used directly in the movie. “This also helped to build a level of trust with the studio to ensure that there was no doubt our pixies matched the actors,” notes Port. “In every aspect of the digital actor build we leveraged and developed upon the large body of virtual human development previously done at DD.”

The team built, lit and animated perfect replicas of each of the flower pixie actresses. Only once these digital clones worked did the team re-target them to the smaller pixie versions. This had two distinct advantages:

1. The side-by-side comparison between each actress and her digital replica, lit with the ICT image based relighting, allowed the team to perfect the skin, modeling and rigging before dealing with the added complexity of re-targeting, and

2. If the pixie model changed, as it did until quite late in production, the team could simply re-target, re-transfer the animation data and continue without having to start over or lose time.

A side by side showing the real actress and her digital counterpart.

This combination of flexibility and visual accuracy more than justified the cost of a large extra workflow step in the middle of the pipeline, one that others might have chosen to avoid. Far from slowing down the production, the extra stage allowed the DD team to do detailed work before the look of the final flower pixies was even known, and to produce much more realistic animation. Finally, if the director did have a problem, it was easy to step back through the stages and determine whether the issue lay in the re-targeting, the animation, the skin shaders, or simply an original take that was not working.

ZBrush and Mudbox were used for modeling and sculpting beyond the scan data, while the rest of the modeling, rigging, animation and cloth simulation was done in Maya. DD used its proprietary in-house tool Samson for hair grooming. This was quite complex because one of the pixies, Thistlewit, has very prominent blonde hair, which not only affects lighting and shading but also needed to react properly to gravity and momentum in flight while still looking stylish and pleasing.

One aspect that could not be scanned, and instead relied on experienced modelling and lighting, was the actors’ eyes. The head rig motion capture cameras record the position of the eyes, but the eyes themselves do not scan well, so most of the craft of producing believable eyes falls to the artists who model and light them. The eyes are also one of the most important aspects of a performance, and a key area that can let down a digital double or digital character.

Eye study.

“We knew the eyes would be crucial to conveying any type of believable actor performance. We were fortunate to have special sessions with the actors in which we could capture extremely detailed material of the unique ways their eyes moved and worked,” says Port. The team then analyzed in minute detail how the actors’ eyes worked and tried to replicate this in CG. To capture all the detail, they created a physically based model complete with connective tissue layers and layers of fluid between the eyeballs and lids. “We even went so far as to track the exact path the eyelid makes during a blink, which, contrary to what most people think, is not simply vertically up and down,” points out Port. “We also created extremely close-up eye animation test shots, much closer than any of our shots in the movie, to test the CG eyes. We knew if our CG eyes were believable extremely close up, they would be believable in the shots.”

Stage 4: Animating and lighting

For subsurface scattering, the DD Maleficent team used V-Ray’s native low-level SSS BRDF shader, “not the wrapper that you normally see in Maya,” notes Digital Domain CG supervisor Jonathan Litt. “We used it in a custom skin shader network which handled other aspects of skin beyond the subsurface scattering.” On the hair, Chaos Group, the makers of V-Ray, did quite a bit of new development to optimize their hair rendering for DD. “This turned out to be vital since Thistlewit had a large and complex groom of curly blonde hair,” says Litt. “Everything was ray traced, including hair translucency and global illumination in the hair volume.” Global illumination was a key component of the look of the hair, especially the blonde hair, since light bounces through and around blonde hair much more than dark hair. The hair groom was fed to V-Ray via custom rendering plugins. “We also wrote a number of custom BRDF shaders for Maleficent’s wings and costumes,” adds Litt.

Key to getting the color of the face correct is dynamic blood flow. The hue and saturation of the SSS are strongly affected by the blood, but blood flow has a different timing from the posing. It is possible to have two correct static poses scanned and recorded, but while an actor can move between expressions very quickly, it can take up to 6 seconds for the blood flow to ‘catch up’ and equalize. Furthermore, it is very hard for people to read blood flow on a normally shaded skin animation.

Blood flow study.

As Paul Debevec has explained, drawing on ICT research, the human perceptual system is such that we find it very hard to ‘see’ the color of a face. DD’s solution was to do its blood flow tests on a blue-faced character, which made it possible to visually isolate the blood flow component of the facial coloring and understand what the render was showing. Once the artists were happy with the accuracy of the simulation, it was placed back into the pipeline, where it informed the SSS renders in a very tangible but subtle way. Dynamic blood flow is one of many aspects of digital faces that experience has taught DD to model: without it a face can fall into the Uncanny Valley, yet most people find it very hard to articulate why the face looks unreal or fake.

“We analyzed how the blood flow changed in the actors’ faces in various extreme facial expressions, all under specialized lighting conditions,” says Port. “We derived maps for all these dynamic facial color changes, which were integrated into our facial rigs and were automatically driven based on the characters’ skin tension and facial expression.” All of the dynamic color changes were driven automatically from the characters’ facial animation, without any input needed from DD’s animators. “These dynamic skin color changes gave the pixies’ faces an additional level of realism we had not previously been able to do,” adds Port. For example, the blood flow system took into account how long an expression was held, so the longer a character held a tense expression, the redder her face would get and the longer it would take to return to normal.
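The behaviour Port describes, where a longer-held tense expression reddens more and takes longer to fade, can be approximated with a simple first-order lag. The Python sketch below is an assumption-laden illustration, not DD's system: a hypothetical `simulate_flush` function tracks a 0 to 1 "flush" value that chases per-frame facial tension, with a rise time constant of 2 seconds chosen so the flush gets about 95% of the way to its target in roughly 6 seconds, echoing the catch-up time mentioned earlier. The fall time constant, the threshold and the helper `frames_to_recover` are likewise invented. In a rig, a value like this might scale the blood flow colour maps.

```python
import math
from typing import List


def simulate_flush(tension: List[float], dt: float,
                   rise_tau: float = 2.0, fall_tau: float = 1.5) -> List[float]:
    """Lagged blood-flow 'flush' driven by per-frame facial tension (0 to 1).

    Each frame, flush moves toward the current tension by a first-order
    lag. rise_tau and fall_tau are hypothetical time constants in seconds:
    with rise_tau = 2.0, flush reaches about 95% of a held target after
    roughly 6 seconds (three time constants).
    """
    flush, curve = 0.0, []
    for target in tension:
        tau = rise_tau if target > flush else fall_tau
        flush += (target - flush) * (1.0 - math.exp(-dt / tau))
        curve.append(flush)
    return curve


def frames_to_recover(curve: List[float], start: int, threshold: float = 0.05) -> int:
    """Frames after `start` until flush drops below `threshold` (-1 if never)."""
    for i in range(start, len(curve)):
        if curve[i] < threshold:
            return i - start
    return -1


fps = 24
dt = 1.0 / fps
short_hold = [1.0] * (1 * fps) + [0.0] * (10 * fps)  # tense for 1 s, then relax
long_hold = [1.0] * (4 * fps) + [0.0] * (10 * fps)   # tense for 4 s, then relax

for name, hold_s, tension in (("short", 1, short_hold), ("long", 4, long_hold)):
    curve = simulate_flush(tension, dt)
    print(name, round(max(curve), 3), frames_to_recover(curve, hold_s * fps))
```

Running it, the four-second hold peaks noticeably higher than the one-second hold and takes more frames to fall back below the threshold, which is the qualitative behaviour described above, and no animator input is needed beyond the tension curve itself.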

Stage 5: Re-targeting

Finally, once the director has approved the rigged and animated faces, they are re-targeted to the pixie models. These faces are rounder, with fewer wrinkles and bigger eyes.

All the development for the flower pixies was focused around building lifelike articulated actor faces as a foundation and then transferring this information to the characters. “From the beginning of the show we were fortunate to have beautiful pixie artwork supplied by the director,” says Port. “We used actor’s faces as a base and slowly started modifying their facial proportions to pixiefy their faces and transform them into their final characters.”

Port and the team paid careful attention to the bone structure and physicality of each actor’s face to ensure the likeness stayed intact in the pixie faces, so that when the pixies finally moved they would feel as natural as the actors. “Our entire workflow for the faces hinged on a key idea that we could build photoreal articulated actor faces and transfer all of this to our pixie designs, something we had never attempted before,” says Port. A key tool was DD’s proprietary facial transfer toolkit, which allowed the team to transfer face shapes, rigs and so on from actor to character. The tool takes into account the differences in bone structure, skin and other characteristics between actor and character, and rebuilds each of the face shapes (around 3,000) one at a time, translating them from how they look on the actor to how they would look on the actor’s pixie form.
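DD's facial transfer toolkit is proprietary, and the article only describes what it does. As a much simpler stand-in, the Python sketch below shows naive delta transfer: each actor face shape is stored as an offset from the actor's neutral face, scaled per vertex to account for changed proportions, and added to the character's neutral face. It assumes the actor and pixie meshes share topology, and the `local_scale` factors, like the function names, are a hypothetical substitute for the bone and skin analysis the real tool performs.

```python
from typing import Dict, List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def transfer_shape(actor_neutral: Sequence[Vec3], actor_shape: Sequence[Vec3],
                   char_neutral: Sequence[Vec3],
                   local_scale: Sequence[Vec3]) -> List[Vec3]:
    """Rebuild one actor face shape on a character face by naive delta transfer.

    Assumes every mesh shares topology (vertex i corresponds across meshes).
    The actor's per-vertex offset from neutral is scaled per axis by
    local_scale, a hypothetical stand-in for proportion differences, and
    added to the character's neutral.
    """
    n = len(actor_neutral)
    if not (len(actor_shape) == len(char_neutral) == len(local_scale) == n):
        raise ValueError("meshes must share topology")
    out: List[Vec3] = []
    for an, ash, cn, s in zip(actor_neutral, actor_shape, char_neutral, local_scale):
        out.append(tuple(c + k * (x - x0) for x0, x, c, k in zip(an, ash, cn, s)))
    return out


def transfer_library(actor_neutral: Sequence[Vec3],
                     shapes: Dict[str, Sequence[Vec3]],
                     char_neutral: Sequence[Vec3],
                     local_scale: Sequence[Vec3]) -> Dict[str, List[Vec3]]:
    """Rebuild every named face shape, one at a time, for a character design."""
    return {name: transfer_shape(actor_neutral, shape, char_neutral, local_scale)
            for name, shape in shapes.items()}


# Hypothetical two-vertex example: a cheek vertex and a mouth-corner vertex.
actor_neutral = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
actor_shapes = {"smile": [(0.0, 0.5, 0.0), (1.0, 0.2, 0.0)]}
pixie_neutral = [(0.0, 0.0, 1.0), (2.0, 0.0, 1.0)]   # rounder, wider face
pixie_scale = [(1.0, 2.0, 1.0), (1.0, 1.0, 1.0)]     # exaggerate cheek lift

print(transfer_library(actor_neutral, actor_shapes, pixie_neutral, pixie_scale))
# {'smile': [(0.0, 1.0, 1.0), (2.0, 0.2, 1.0)]}
```

Because every shape is rebuilt from the actor version, a new pixie design in this sketch only requires re-running the transfer with a new character neutral; animation keyed to shape names such as `"smile"` carries over unchanged, which mirrors the robustness to late design changes that Port describes.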

“The character designs on this show were very fluid and required us to regularly rebuild the entire pixie articulated face on a new character design,” adds Port. “The transfer process proved so robust in the end that we could change the pixie face shape and rebuild the entire face complete with thousands of new face shapes conformed to the new bone and facial anatomy and have it seamlessly delivered to the animators without losing any work.” The pixies also wore complex, multi-layered dynamic wardrobes made of flower petals, hairy thistles, leaves and twigs. These wardrobes required multiple free-flowing cloth simulations with special localised controls to look good through very dynamic actions such as flying and landing.

Digital Domain also produced Maleficent’s wings, which were seen extremely close up. DD modeled and rigged each feather individually. The wings were almost a separate character in the movie and had to conform to almost any shape without any of the thousands of feathers ever appearing to intersect one another.

A breakdown of DD’s work for the character of Maleficent.

The final character renders were combined with complex effects animation such as clouds, water and mist, most of which was done in Houdini. All the imagery was composited in NUKE.

Villegas also paid attention to a range of other fine facial animation details, including sticky lips, tongue animation, lip compression and many other very subtle but important refinements.

After the film was finished, fxguide asked Villegas whether he felt the new techniques were finally at a point where a film’s lead character could be fully digital and effective: had the industry reached the point where a fully digital, believable, normal actor was possible? “I don’t know but I’d like to try,” he responded, laughing.

MPC makes some magical Maleficent effects

We take a look at just some of the visual effects shots crafted by MPC for Maleficent.

The faerie world

MPC handled many shots showing the faerie world, building numerous characters and environments. Many of the backgrounds came directly from paintings by Stromberg and production designer Dylan Cole. “They had designed a gorgeous and graphic art bible,” says MPC visual effects supervisor Adam Valdez, “and it was a very stylized graphic intention on the way in. Once the photography came back it was somewhat stage-bound and hardlit, so we had to figure out ways to combine the more classic Hollywood hard light with these more open air natural light concept art and matte paintings for the backgrounds. It took a little while in post to assemble enough critical mass on the screen so that everyone could see how the storytelling was working.”

To help that process, MPC began building a mass of digital foliage. Valdez also supervised a two-week additional greens shoot at Pinewood. “We got a big list of all of the plant types that were used on set from all the greens teams so that we knew what materials we needed to complete the shot,” he says. “I shot with a small unit getting all kinds of different foliage cards and elements. We had oak branches and flats made up and we shot the plants at different heights and with different wind speeds. So we had lots of material that in NUKE we could use as cards to be layered to extend environments. So in the end, every scene is a hybrid of set work, 3D and environments.”

To help sell the distinction between the faerie and human world, MPC incorporated very vertical mountains into the faerie environments, as well as atmospherics. “One of the things Rob wanted from the beginning was atmospheric particles in the air and a sense of bug life and pollen,” explains Valdez. “That was achieved by a library of things created in the effects department – they created simulations of pollen and bugs and little sprites to give it that look – fantastical and natural movements. These things would be used as library elements as cards by compositors. If the camera was doing a full 3D movement through space, the effects team would simulate something special for that shot.”

Dark battle

For an opening battle sequence, MPC contributed several ‘dark faeries’ – dark skeletal-like riders atop boars, wood trolls and dark serpents – who assist Maleficent against a human army.

Live action for the battle was filmed in a field near Pinewood Studios, with action scenes handled by second unit director Simon Crane. “Being an accomplished stuntman himself,” relates Valdez, “he always tries to go for it with elaborate rigs and wire pulls. They had a huge overhead scaffolding and high powered cable systems to fling people into the air. They also had some underground rigs that disturbed the earth to mimic where the serpents would leap out of the earth and grab somebody and pull them back underneath.”

“You get a whole plate of stuff that’s physically real and you can match to,” adds Valdez. “And sometimes the stunt performers just have a violence and reality to them that some CG folks might not go to. Then you get a mix of real takes, characters being taken over. You go in there, you have a lot of bluescreen to replace, battlefield to extend, and new characters to insert.”

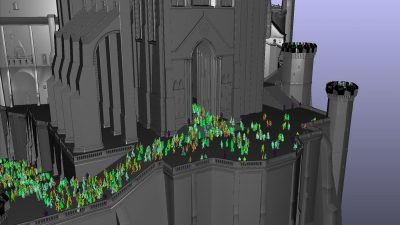

MPC utilized its crowd sim software ALICE and layers of keyframing and choreographed placement to help orchestrate the battle sequences. “We do some pure simulation and have an AI approach,” says Valdez, “but we continue to find that you need to give a crowd TD quite a lot of control, so they do a lot of plotting where and when events happen in the crowds. We work really well hand-in-hand with the animation department to really tell the story. So if a shot is two armies about to collide, you need to build in the tension to the next shot. It just continues to be the case that there’s a lot of hand work involved.”

The dark faeries were sculpted in ZBrush with tree and root references, and rigged to also mimic their plant-like origins. “The fun part was all the mosses and fine foliage that grows all over them,” comments Valdez, “which was a combination of straight modeling approach and our hair tool Furtility. We used it to grow moss but also fine twiggy plants that were almost like little tiny pebbles.”

“Then we were using RenderMan,” continues Valdez, “and a raytracing approach to render the faeries out. We did also use V-Ray for certain environments because it proved really useful at managing really large datasets. The software guys created some import tools to allow our standard pipeline to be used to import all of the plants and trees into the V-Ray pipeline.”

Dragon fire

The film’s conclusion includes a dramatic battle involving the character Diaval, transformed by Maleficent into a fire-breathing dragon. To connect the dragon to Diaval, MPC maintained certain characteristics present in the different forms he took throughout the film, from a pointy nose to black feathers. The feathers also helped the director distinguish this dragon from others in recent films.

“The animators did a great job with him – he’s got a lot of ferocity,” says Valdez. “Then the tech animation team did great simulation and muscle work – they spent a lot of time to get the shaking right when he puts his fist down or when he gets knocked over – and all the dynamics of the skin and wings. It was important to show the fabric quality of the wings with folds. It’s always hard to try to convey large creatures because they need to be able to move well but they also have to have scale.”

For the dragon’s fire, Stromberg requested a ‘drippy’ and ‘liquid’ flamethrower effect, for which MPC used its Flowline fluid sim pipeline. “It gives us a lot of really nice elaborate weighty feel,” notes Valdez.

As the battle consumes the castle’s Great Hall, smoke from the dragon fire and the surrounding oil chandeliers fills the room. “The idea,” explains Valdez, “was that room was turning into a smokey whipped up cauldron of hot fire effects. Our comp team developed ways of using particles in NUKE to create embers and also layering smoke elements with 3D smoke we generated for some wide shots, and then also using some photographic elements of fire.”

Logo intro

Late in post-production, MPC was called upon to add a unique introduction to the film featuring the Disney castle and logo that also helped sell the idea of the magical faerie kingdom that borders the human one. “The idea was,” says Valdez, “what if we actually ‘use’ the logo as the start of a sequence that helps the audience understand the two worlds that divide the characters. It’s a very long shot where we come down on the traditional Disney logo, we find out our new castle for Maleficent, and we push back over the castle and we start to see the human world with the faerie world beyond and that initiates a series of shots that helps the audience understand how far apart they are.”

Although MPC could not use the 3D files from the existing Disney logo animation, they were able to re-create, matchmove and match effects such as the fireworks, while adding in a CG Sleeping Beauty castle to replace the Cinderella style one. “Then we transition into a more photoreal representation of that same look,” describes Valdez. “We even had to create a brand new flying logo for the Disney world itself. There are guidelines for colors, shapes, how long it’s on the screen. We also had to hybrid the lens because our castle’s quite different than the original Disney castle – the scales are very different. We had to do a dissolve between the original Disney logo lens and our own and re-project some of the terrain from the original logo onto our own terrain.”

Finally, MPC constructed the world seen beyond the castle as the camera pushes over. “It was full 3D terrain laid out with 3D trees, a human village, boats in the water, crowds in the village,” explains Valdez. “That sequence was done at the end of the schedule, so luckily for us by then we had a lot of 3D foliage and a library of stuff we could pull from to do that work.”

Previs’ing Maleficent

With so many magical creatures and wondrous environments, director Robert Stromberg sought out the services of The Third Floor for previs, postvis, techvis and even pitchvis services on the film. Here’s a look at some of their key work.

Pitchvis: This involved helping Stromberg show his vision of the film to Disney. One pitchvis scene featured a young Maleficent trying to control her wings. “You’re not concerned as much about the story at this point,” says The Third Floor previs/postvis supervisor Mark Nelson, “but it’s kind of a sizzle piece to really get people interested in it.”

Previs: One sequence The Third Floor worked heavily on in terms of previs was the opening dark faerie battle against the human army. “It was roughly storyboarded with Rob’s help and we took it from there,” notes Nelson. “We started using rough characters and built generic evil demon characters – some based on preliminary character designs – then we would keep building low res previs versions and keep updating them. We’d keyframe or use the Xsens MVN suit or a mocap stage at Framestore to do some mocap for the action.”

“Later on we would actually start putting in matte painting backgrounds that Dylan Cole and Rob had done,” adds Nelson. “We’d put pretty close to finalized matte paintings and then as the second unit shoot footage came through we’d jump into postvis before handing things over to MPC.”

Techvis: For a scene in which Maleficent puts Aurora to sleep, causing her to then float in the air, visual effects supervisor Carey Villegas asked The Third Floor to previs and techvis the shots. “Carey gave us one single image of this woman floating from a fashion shoot,” says Nelson. “I’m not sure if she was underwater, but we needed to match that look. So we previs’d her floating really slowly, even though she’s moving through space quickly. After Rob approved the shots based on the story, the idea was to shoot all of it super slow mo with a Phantom camera.”

“So we plotted everything,” adds Nelson. “Elle Fanning was put on a rig with wind blowing and so when slowed down it would match camera moves. We translated all the info to the camera so the actor would appear slow, and put that into the camera where it would [be] static but she would be moving and rotating. The idea was that she rotated in the rig really fast with the Phantom shooting, but when they slowed it down they could have her come towards camera and move away from camera.”
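The timing logic behind the Phantom approach can be expressed as a short worked equation. The article does not say which frame rates were used on set; the values below (capture at 1,000 fps, playback at 24 fps) are purely illustrative assumptions, not production figures.

```latex
% Slow-motion factor: how much slower motion appears on screen than on set
s = \frac{f_{\text{capture}}}{f_{\text{playback}}}, \qquad
t_{\text{set}} = \frac{t_{\text{screen}}}{s}

% Illustrative values only (assumed): f_capture = 1000 fps, f_playback = 24 fps
s = \frac{1000}{24} \approx 41.7, \qquad
t_{\text{set}} = 4\,\text{s} \times \frac{24}{1000} = 0.096\,\text{s}
```

Under these assumptions, a move that should read as a slow, four-second drift on screen has to happen in under a tenth of a second on the rig, which is consistent with Nelson’s description of Fanning rotating quickly while the Phantom was shooting.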

Postvis: Nelson says that postvis work typically consisted of ‘slap comps’ to aid the editorial process. It was used heavily in a sequence dubbed ‘Maleficent’s rampage’, which follows the removal of her wings. “There was a lot of stuff of Angelina Jolie walking through a field with greenscreen in the background,” recalls Nelson, “and at one point she lifts up a rock wall and throws it at some farmers. We’d track in backgrounds that Rob or Dylan would do or put in the previs backgrounds we’d made earlier.”
