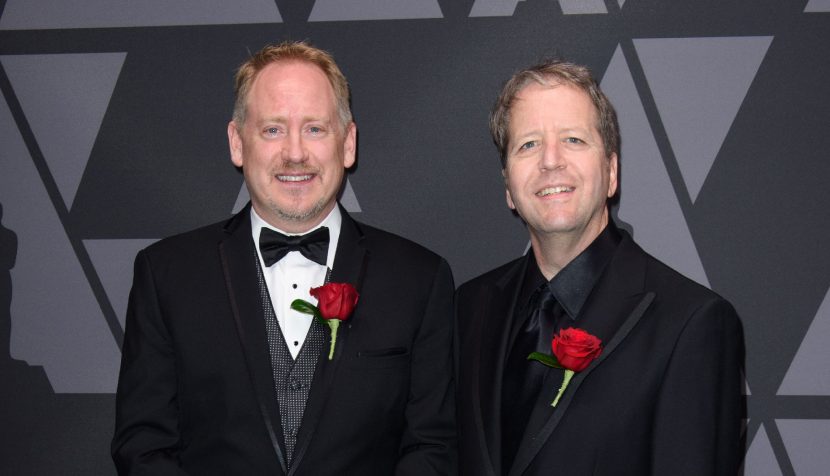

This weekend the Nuke Compositing Package will be honored twice at the Oscar Sci-Tech awards. The first award goes to Bill Spitzak and Jonathan Egstad for the visionary design, development and stewardship of the Nuke compositing system. The second award goes to Abigail Brady, Jon Wadelton and Jerry Huxtable for their significant contributions to the architecture.

In the beginning

Nuke started life at Digital Domain (DD) and then moved to The Foundry. The awards honor both the early work and the continuing R&D that has made this software the cornerstone of so many serious pipelines. Today, Foundry’s Nuke is a ubiquitous tool across the motion picture and visual effects industries. Its nodal approach to compositing and effects has enabled novel and sophisticated workflows at an unprecedented scale.

Expanded as a commercial product at the Foundry, Nuke is a comprehensive, versatile and stable system that has established itself as the backbone of compositing and image processing pipelines. Read our original story from 2007, when the Foundry first bought Nuke from D2 Software, here. Bill Collis, then CEO (now President) of The Foundry, stated: “The really important thing about Nuke is that it has been developed by artists for artists. It has very many good features, and one of those is that it is very fast, and it is really production proven… the core product is just very very good.”

The Foundry is currently regrouping, and we will be following up with an in-depth interview with new CEO Craig Rodgerson about the company’s new directions. With respect to Nuke, Rodgerson’s view is very strongly that “we are concentrating on the core DNA of the organisation, which means reinvestment in Nuke. This translates to extending our R&D, as we look to the next generation of solutions.” As we will discuss in our next Foundry story, Nuke remains the core of the company and Rodgerson is doubling down on the importance of the product. As Rodgerson comes from an engineering background, his commitment comes with a very strong R&D intent, something echoed by Foundry co-founder and Chief Scientist Simon Robinson: “It is safe to say the Nuke team is the biggest it’s ever been and growing.”

Five individuals have been singled out to represent the team that has developed Nuke over the years. “Top of that list has to be Bill Spitzak and Jonathan Egstad,” comments fellow award winner and CTO Jon Wadelton. “They are the real founders of Nuke. Spitzak wrote the architecture of it. He took something that was a command line tool, around Nuke v2, actually it may not have even been called Nuke at that point… he provided the real architecture.”

The first version of Nuke was actually called the Nukerizer. At DD, the creative team were using a command-line, script-based compositor to handle the simple work, alongside their huge set of SGI-based Flame and Inferno systems. Spitzak was a graduate of both the Computer Science program at MIT and the USC film school, and had several years of software development experience. In 1993, Spitzak started to develop a visual, node-based version of their simple compositing system, and Nuke was born. It was first used on films such as True Lies, Apollo 13 and Titanic.

Jonathan Egstad ended up leaving DD and is now at DreamWorks Animation. As the other primary author of Nuke while at Digital Domain, Egstad was a key inventor and developer of Nuke’s multi-channel and 3D systems.

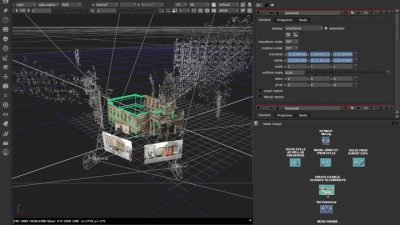

“Jonathan’s big legacy in Nuke was adding the 3D system,” comments Robinson, “and that is one of the biggest advantages of Nuke.” Nuke’s 3D core is integrated into the system. Unlike Shake, which one could argue was two-and-a-half D, Nuke understood 3D, and this foundational aspect is why Nuke is still successful today and so much more than just a compositing tool.

“He did the Scanline renderer, the 3D system, the integration into the 2D sub-system to meld the two together… and we still have pretty much the same system today,” comments Wadelton. He jokingly adds, “much to Jonathan’s displeasure – as he really wishes we’d update it… and that is one of the things we have looking forward – to replace that rendering system… but it is still there now, and a lot of people love it. It is one of the strongest points of Nuke that the system allows you to mix 2D and 3D together freely.”

This is a point Egstad himself very much agrees with. “The name itself should be a hint that the ScanlineRender node was not intended to be the one and only renderer that was implemented in Nuke,” comments Egstad. His original intention was to have at least ray-tracing, UV and volumetric renderers available, but by the time Foundry took over the code in 2007, “I was hard pressed to wrap up what I could,” says Egstad. “After leaving Digital Domain in 2008 I implemented a volumetric and RIB (PRMan) renderer for Nuke at ImageMovers Digital, and in 2011 a ray-tracer/volumetric renderer at DreamWorks Animation.”

It is worth noting that Egstad was still a vfx artist when he wrote ScanlineRender. “I’m amazed at what people are still able to pull off with it 15 years later, because it really was designed with lightweight OpenGL-ish scenes in mind, and these days people are routinely throwing heavy production assets at it,” Egstad relates.

The core reason for Nuke having 3D was to composite shots better. Nuke never wanted to be a 3D package, but key compositing techniques, such as camera projection for rig removal, often involved 3D. Nuke blurred the arbitrary line that many facilities had drawn between 2D and 3D at that time. Nuke fully embraced anything that would make comp better, and that increasingly required an understanding of high-end 3D.

“I always thought from a research perspective that Nuke was mind blowing,” comments Robinson. “It just made sense to me that anyone working on 2D imagery from the real world would want to continuously re-inflate that 2D back into 3D… and that fundamentally changed, from the research team’s perspective, our view of our research from pushing 2D pixels around to starting to understand the 3D world.”

“Nuke’s 3D workflow evolved out of compositors like myself pushing Nuke v2 to its limits on Titanic and not being able to efficiently produce 3D tracking enhancement elements,” comments Egstad. He felt that Flame’s simple 3D environment was often just a little too simple. For example, “it was difficult and awkward to load and align production 3D cameras and to import line-up objects. On the other hand most 3D packages of the day such as Maya, Houdini or Softimage were anything but simple and had steep learning curves,” he adds.

In 2001-2, Spitzak and Egstad, along with Paul Van Camp and Price Pethel, received their first Sci-Tech award for their pioneering work on the compositing software. At the time, it was noted that one of the great benefits of Nuke was that it no longer required a giant SGI Onyx supercomputer. The official award citation read: “Nuke-2D compositing software allows for the creation of complex interactive digital composites using relatively modest computing hardware.”

This first Sci-Tech was really for the initial work of adding a GUI to the command-line version of Nuke. “The original award, I believe, was for the original in-house version of Nuke (version 2),” comments Egstad. “The current Nuke v3 architecture, first used on Time Machine in 2000, was a dramatic evolution in architecture and workflow. I am not complaining though; I’m very much appreciative of that original recognition, but I do think this second award addresses that issue and recognizes the role Bill Spitzak and I had in the v3 redesign and its subsequent industry adoption.”

At the time of the Nuke sale, the DD team was very focused on making Nuke work and be as fast as possible. When fxguide visited D2 Software at DD back in 2006, the team remarked of the aliased fonts and ‘ugly’ node graphics that whenever they offered the DD compositors a choice between new features and a cleaned-up UI, the compositors universally wanted new features. Thus, in 2006, the polish of Nuke’s early icons and logos still took a backseat to image processing and efficiency.

Nuke at DD was very much artist-driven, and many of the seemingly obvious aspects of its design that people now take for granted were anything but obvious twenty years ago. Even at Digital Domain, some questioned why a 2D compositing application needed an integrated 3D geometry system and renderer.

“Many of those design ideas would likely have never seen the light of day had Nuke started life as a commercial product, because they would have been either deemed too costly, difficult to develop, or the target audience deemed too small,” reflects Egstad. “The passion and persistence of that original Digital Domain compositing crew drove a sea change in the way that visual effects are produced, and I’m very proud to have played a part in inventing that future, and it’s why I got into this crazy business in the first place!” he adds.

The Foundry Transition

When The Foundry acquired Nuke from DD in 2007, Spitzak moved over too and joined the Foundry team. “We’d all been aware of Nuke for a while, and knew it was highly regarded,” recalls Robinson. “It looked like a fun way to expand what we did, and to further our interest in continuing to do ‘more stuff’.”

Over the next few years, Nuke improved in leaps and bounds as the Foundry added hundreds of new features, including a built-in camera tracker, denoise, deep compositing and stereo tools, and extended its core with Python, Qt, 64-bit and multi-platform support. It was soon a standard fixture in film pipelines across the globe.

As Apple began winding down Shake, Nuke’s main rival was Discreet’s (later Autodesk’s) Flame. Beyond the underlying computing architecture, the core difference between the two in the early days was Nuke’s nodal flow diagram versus the relational diagram of Flame’s Action. While Flame would later add Batch as a flow representation, the early award-winning Flame work was all done in Action, where the graph showed how images and nodes related to each other, but not a flow of imagery from one node to another.

“The thing about Nuke is the node graph, and the moment you split the node into sub-systems you effectively go ‘off piste’ from what the system should be,” explains Wadelton. “Let me give you an example. When we recently added CaraVR, we could have done a stitching solution like others out there, where you just put all the cameras and images in and you get an automatic stitched result. But what the team did was add it in a very modular way, so there are a bunch of nodes for each individual camera feed so you can correct them individually, and a bunch of nodes for analysis, etc. It is all broken into steps, and that is the key thing that gives the compositor control.”

For the second Sci-Tech honor this year, the three people singled out at the Foundry by the investigating Sci-Tech Committee are Abigail Brady, Jon Wadelton and Jerry Huxtable.

Jerry Huxtable was the UI lead who took Nuke v4.6 and did the major UI upgrade in 2008. He also removed Nuke’s dependence on FLTK, the UI toolkit that came from DD and was later open sourced. FLTK, the Fast Light Toolkit, was Bill Spitzak’s creation, and it was always his belief that modern UIs were too heavy and taxed a system. While Spitzak is no longer actively developing FLTK, he is still known to contribute to the open-source project in his free time. “Jerry is just very switched on when it comes to UI, pulling things together and making things usable,” explains Wadelton. Huxtable is still at the Foundry, and at an engineering level, “if you’ve got a really hard problem – just go see Jerry,” Wadelton adds. Huxtable also did Nuke’s particle system and the UI for roto and paint.

Abigail Brady joined at the same time as Huxtable. At the Foundry, “Abby sort of ‘mind melded’ with Bill Spitzak quite early. Bill is a very smart guy, but hard to follow sometimes; Abigail was just as switched on as him and she decoded the architecture of Nuke quite quickly. She was almost Bill’s protégé. She did things very quickly – things that took the rest of us 5 years to work out how to do,” explains Wadelton admiringly. For example, Brady added the multi-view support that provided stereo in v5.0, which enabled Nuke’s use on Avatar. Adding stereo as extensively as Nuke did was not easy, but its comprehensive implementation proved important for a host of later innovations. Around the same time she also added the metadata pipeline, and later she wrote key parts of Nuke’s Deep compositing sub-system along with Wadelton.

The first product manager for Nuke was Matt Plec, formerly of Sony Pictures Imageworks and the Shake team. In 2010, Jon Wadelton, who had joined the Foundry in 2007 and is now CTO, became Nuke’s product manager. That same year, Nuke expanded its range to include NukeX, which combined the core functionality of Nuke with an out-of-the-box toolkit of exclusive features. Many of these features drew on the Foundry’s core image-processing expertise, which had proven so valuable in the plug-in market, including the Foundry’s own Academy Award-winning Furnace.

Wadelton became the face of Nuke and one of the most visible and respected members of the Foundry’s team. He did far more than the traditional role of a product manager, at times contributing directly to Nuke’s code base. Wadelton also initially ran the engineering team and was very involved in everything from writing code for the Deep implementation to GPU acceleration. But he is perhaps best known for setting the agenda for Nuke over the last decade. Wadelton became very much known as ‘Mr Nuke’, leading a product shake-up that resulted in Nuke, NukeX and Nuke Studio, and steering them to market. “Jon has an uncanny ability to see emerging technologies and trends and apply them in ways that anticipate the increasingly sophisticated and complex needs of our customers,” comments Collis.

Moving forward

Nuke is not finished yet; the product is very much alive and still being developed. Recent advances have included work on embracing light field data within Nuke. The team has also been streaming out of Nuke to game engines such as Unity, opening the product up to live and real-time applications. This is leading to new editing approaches to storytelling from within Nuke.

One of the company’s R&D priorities is moving away from purely shot-focused vfx. “We want to go to a world of sequence based compositing not shot based compositing… it could potentially change the way you work,” comments Wadelton. In colour grading, the concept of grading by scene rather than by shot is well established, but in visual effects most shots are still data islands: lessons learnt in compositing one shot seldom automatically inform the comps of other shots in the same sequence.

From his perspective, Egstad would like to see the focus put back on the core architecture, which, in his opinion, “after twenty years is in dire need of refresh”. He adds that “much has changed in the intervening years (memory size, # of CPUs, shot complexity, image size, etc.) and it’s a testament to the solid design of the v3 architecture that it’s still working today with relatively little change. It’s very exciting to see new developments and workflows added to Nuke, but not at the expense of the core workflows. I believe it would be a win-win because improving the underlying architecture will enable new technologies to better fit into Nuke’s design, whereas some of the previous extensions like particles and deep feel a bit tacked on.”

Egstad would like to see further R&D into a mixed 2D/3D world of deep/volumetric image data, but done in a homogeneous ‘Nuke’ way. He remains very fond of Nuke and comments that he “would love to be as involved in that process as I possibly can be.”

The team are very interested in the broader issues surrounding gathering and using volumetric data. When Foundry discusses volumetric effects, it is not referring to rendering 3D particle or smoke simulations; for Foundry, volumetric understanding is about understanding the film set: ‘the whole volume’, or the world in which one is telling the story. “That is the future of captured content I think… moving forward, more multi-camera capture is good for just regular storytelling, away from even VR or AR type applications,” concludes Wadelton. “Nuke is focused on more data in the hands of the compositors so they can manipulate the scene in more ways.”

Simon Robinson aptly sums up much of the team’s feeling about the development work that has gone into Nuke over the years. “All of that work Jon mentions plays into the company’s strength in optical flow, image processing, set reconstruction – and in many ways the rise of 360 video has been a great excuse to exploit that new way of working,” says Robinson. “But it is fantastic that Nuke’s architecture, designed all those years ago, has proven such a strong, flexible platform to explore all these new approaches, never imagined before. Those early architecture designs are what we still fundamentally rely on today, and they still allow us to adapt and find new ways to make better movies.”