Previs: A close relationship between The Third Floor, production, and ILM

Artists from The Third Floor in LA and London produced previs for Rogue One, collaborating with the director, producers, the art department and the visual effects team at ILM to visualize story ideas and flow of action for the box office hit. Barry Howell was the Previs Supervisor at The Third Floor on Rogue One. He originally worked on George Lucas’ team pre-visualizing Star Wars Episode III: Revenge of the Sith, where he met the other future founders of The Third Floor.

“Our teams from The Third Floor Los Angeles and London created previs, techvis and postvis across the movie,” says Howell. “I, along with our Asset Lead, Motoki Nishii, started within the art department in late 2014, working with Production Designer Doug Chiang. We moved with production to the UK, where we joined with artists from The Third Floor’s London office, led by my co-supervisor Margaux Durand-Rival.”

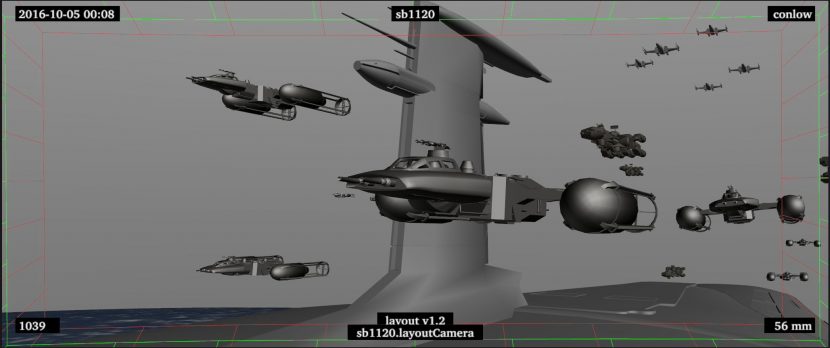

The Third Floor started with a team of just nine for this film, including Howell. “This consisted of full-time asset builders tasked with building custom sets, vehicles, creatures, props and characters for previs. They also incorporated existing ILM assets such as the Star Destroyers and prepped these to work within our system.”

Craig Skerry, Third Floor’s coordinator, stayed in touch with each different department to keep the team up to date, constantly feeding editorial with the latest previs shots and making sure that Edwards had what he needed. “Our other five artists were rock star previs shot creators, producing and animating previs shots to feed previs sequence-building. In the beginning, their work also included creating generic cycles for use later in populating the big battles or doing look development tests.”

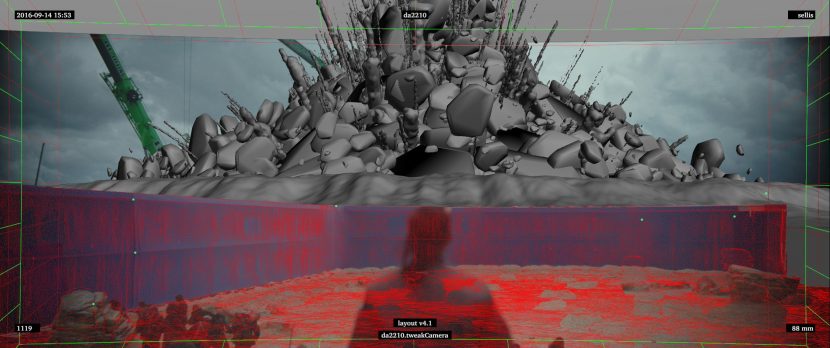

The team had worked with Director Gareth Edwards on Godzilla and they had a good understanding of his creative storytelling and visual style. The scenes with the Death Star and Jedha proved to be the most difficult, because “it was very challenging to conceptualize and visualize what the wave of destruction should look like,” says Howell. “We iterated multiple different ideas, playing out in multiple different scenarios.”

“In general, we created previs for shots based on storyboards or we would iterate ideas from descriptions or scene outlines,” Howell explained. “We touched most of the action sequences in the film, including key scenes in Act 1 and 2 on Eadu and Jedha, and the entirety of Act 3.” The third act of the film changed dramatically, as numerous fan sites have pointed out after finding a set of shots in the trailers that were clearly not in the film.

The Third Floor team also worked extensively on postvis. The boundaries between pre-production, production and post are all but erased in such a visual effects-heavy, modern approach to filmmaking. “As you can imagine, with a large number of vfx and bluescreen shots, it really helps to have a more complete picture of shots as soon as possible,” adds Howell. “We also assisted editorial by providing our assets on greenscreen turntables so they could quickly comp them in to test ideas.”

A lot of the technical work that Third Floor did focused on size concepts and relationships. The team helped work out what different levels of destruction from the Death Star might look like and how that would appear from various angles, on a planet’s surface or from space. “We also did technical size mockups for vehicles like the AT-ATs for incarnations specific to this film, and diagrams — particularly for the Scarif base, to map out the key locations and what parts of the action would be happening where,” Howell comments.

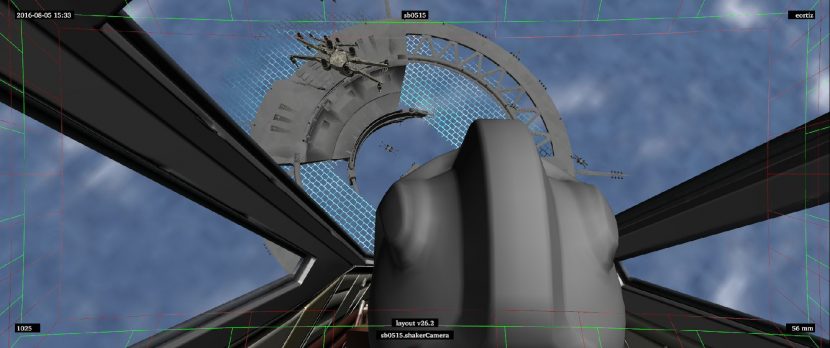

One thing that Edwards was always interested in was finding new and interesting viewpoints on specific action beats. To help with this, The Third Floor developed a new tool that they called the Random Cam. Howell explained that it allowed the team to select the major points of interest in a shot and set up various parameters, such as the importance of each and the boundary area for the camera. “We could use this tool in any scene and it would auto-generate hundreds of different views,” says Howell. “Gareth would review these and often discover angles he liked that would be the inspiration for further development.”
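The Third Floor's actual Random Cam implementation is not described beyond the parameters above, so the following is only a minimal sketch of the general idea. It assumes cameras are scattered uniformly inside a box-shaped boundary area and aimed at the importance-weighted centroid of the points of interest; the function names, the weighting scheme and the box-shaped boundary are all hypothetical.

```python
import math
import random


def random_cameras(points, weights, bounds, count, seed=0):
    """Return `count` (position, target) pairs.

    points  -- list of (x, y, z) points of interest in the shot
    weights -- relative importance of each point (hypothetical parameter)
    bounds  -- ((xmin, xmax), (ymin, ymax), (zmin, zmax)) camera boundary area
    """
    rng = random.Random(seed)
    total = sum(weights)
    # Assumption: every camera aims at the importance-weighted centroid.
    target = tuple(
        sum(w * p[axis] for p, w in zip(points, weights)) / total
        for axis in range(3)
    )
    cameras = []
    for _ in range(count):
        # Scatter the camera position uniformly inside the boundary box.
        position = tuple(rng.uniform(lo, hi) for lo, hi in bounds)
        cameras.append((position, target))
    return cameras


def look_direction(position, target):
    """Unit vector pointing from the camera position toward the target."""
    delta = [t - p for p, t in zip(position, target)]
    length = math.sqrt(sum(d * d for d in delta))
    if length == 0.0:
        raise ValueError("camera position coincides with its target")
    return tuple(d / length for d in delta)


# Two points of interest, the first three times as important as the second.
views = random_cameras(
    points=[(0.0, 0.0, 0.0), (10.0, 0.0, 0.0)],
    weights=[3.0, 1.0],
    bounds=((-20.0, 20.0), (1.0, 5.0), (-20.0, 20.0)),
    count=200,
)
```

A production tool would presumably also vary lens choice and reject views where the subject is occluded; this sketch only covers placement and aim.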

Gareth Edwards did not tightly previs or storyboard out sequences. The director preferred to walk the set with the actors and just the DOP, exploring the space and finding camera angles. “Gareth was interested in using the previs to map out the story action and to determine the best way to get the characters from point A to B. He wanted each shot to serve a purpose, with each one leading to the next, offering the viewer a bit more information than the last one did. He didn’t want to give too much away too soon, but also didn’t want to bring the viewer along without giving them something to chew on.” Edwards liked exploring the frame so much that he often operated the camera himself, according to ILM VFX Supervisor Mohen Leo. He would personally operate Steadicam or Easyrig on set.

“For the most part, he would provide a quick synopsis of what he wanted, set the parameters of the sandbox that we would work in and then let us work from there,” adds Howell. “It was quite fun to help develop what Jyn (Felicity Jones) and her team would do in between the iconic mood boards and concept art that Lead Concept Artist Matt Allsopp and team provided.”

For stylistic reference in lensing and framing, the team drew on multiple sources, as Edwards especially loves any movie that portrays a very moody and atmospheric landscape. “For the Eadu sequence, he really liked the lighting and overall aesthetic Ridley Scott and James Cameron had achieved on Alien and Aliens, with scenes that used backlighting very effectively, kept much of the shot in the shadows and hinted at what might be there,” explains Howell. “He wanted us to incorporate that type of a look, but at the same time he wanted this movie to mesh with A New Hope, which was often brightly lit, so it was a blending of styles.”

Much of Rogue One needed to dovetail with the very start of A New Hope, but not all the technology and ships were drawn from the feature film universe. It was John Knoll’s idea to see inside the ships as they ripped apart after a Hammerhead Corvette pushes one Star Destroyer into another. Normally such detail is lost in a vast explosion, but Knoll suggested not losing the action in a fireball. He wanted to let the audience see the internal structure of the ship as it is being destroyed.

To give the digital ships a model feel, John Knoll came up with an ingenious plan. As all the original ships and the Death Star were made from bits of various commercial model kits, the new team bought large quantities of new model kits and scanned the pieces. When building any new ship, the modelling team started with scanned pieces of actual kits, so while there are no actual models used in the film, the new digital ships are in some roundabout way linked to the older model ships.

The destruction sequence with the Star Destroyer was handed off to Animator Euisung Lee. “He is just a really talented guy, he made a mini movie out of it,” explained Hal Hickel, ILM Character Animation Supervisor. “What was cool about it was that Euisung animated the whole thing as one continuous piece of action, all the way from the Corvette coming in and hitting the side, pushing the Destroyers together, the collision etc.”

The team then put this into the ILM V-Cam setup on the motion capture stage, “where Gareth could view it in 3D, from an iPad-like controller he holds in his hands, and he can walk around this long looping 30 – 40 second piece of action – and hunt for angles,” explains Hickel. “Which is great because that is how he works with live action, walking with a camera hunting for angles on the action that they want.”

This approach was used for a number of scenes in the space battle. Not only could Gareth film the action in real time, as it was all rendered in real time, but he could instantly ask to “attach the camera to the back of Hammerhead – I want to ride along with it as it heads towards the Star Destroyer and just give me pan and tilt control,” recalls Hickel.

The result is that some of the virtual cinematography is from Lee and much is Edwards filming Lee’s detailed animation with the V-Cam. Edwards really embraced this approach to digital production, according to Hickel. “That’s just his process,” Hickel relates. “He just likes to walk around on set with the camera and find stuff, and we just wanted to give him the same opportunity with the digital scenes, so those shots had the same feeling of cinematography as the set pieces.”

The Hammerhead Corvette was first seen in the animated Star Wars TV series. In Rogue One, John Knoll suggested having the rebels use a Hammerhead Corvette during the Battle of Scarif. This was not the only thing the team referenced from the animated series: the Y-Wings also used proton bombs during the battle, a reference to the bombs from the series.

Giant LED light box

The Director was very keen to film as much as he could in camera, and this extended to filming in the spaceships. The production built huge computer-controlled gimbal platforms. Outside these sets were huge LED screens which provided both an accurate exterior in camera and most of the external lighting needed for the shots. While a typical deep space shot has very little light, when the team were close to the Shield Gate there was a lot of bounce light in addition to the strong unfiltered direct light from the nearby Sun.

The Third Floor worked very closely with ILM, including ILM VFX Producer Nina Fallon and ILM VFX Supervisors John Knoll and Mohen Leo, creating content for the LED screens used on set. The images on the large LED surfaces served two purposes: they gave the actors something to react to, and they lit the set more realistically. “Gareth wanted it to be an immersive experience for them so it felt real, and a way to provide a more realistic lighting setup to help illuminate the actors and the practical set with an intensity and ambient color as if they had been shot on location,” explained Howell.

The Third Floor delivered spherical renders for automatic projection to each screen. “We started by taking the previs environments and adding more detail to the texture and geometry. Then we lit them based on what was needed for each setup. We used a plugin that allowed us to render out large-scale 360 spherical images. These were not actual renders, but rather playblasts straight out of the Viewport 2.0 display. Most of them were relatively quick, taking only an hour or two, which allowed us to provide something to test on set with time to make any necessary changes for the shoot,” explains Howell.

From there, ILM would ingest the material into TouchDesigner and calibrate the images to optimize for brightness. “Most of the time this lit the exterior, but if there was strong direct sunlight we would augment it with an additional studio spotlight,” explains Leo.

In some instances, the team rendered the scenes using several lighting setups so they could dissolve between or combine different versions to imitate weather effects such as lightning. “It was great seeing the previs light up the sound stage and it really put you in the middle of the action,” commented Howell. The advantage of the TouchDesigner user interface, explained Leo, was that it allowed the lighting to be triggered as needed. For example, he explained how the DOP would stand beside someone with a TouchDesigner iPad who would trigger the lighting changes to the LED when the DOP indicated the moment they wanted the ship to jump to hyperspace.
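The dissolve between lighting setups can be illustrated with a plain linear blend. This is a minimal sketch, not how the on-set TouchDesigner network was built: it assumes each lighting setup is a frame of single-channel brightness values in the range 0 to 1, and both `dissolve` and `lightning_flash` are hypothetical helper names.

```python
def dissolve(frame_a, frame_b, mix):
    """Linearly blend two equally sized frames.

    mix = 0.0 returns frame_a, mix = 1.0 returns frame_b.
    Frames are lists of rows of brightness values (an assumption).
    """
    if not 0.0 <= mix <= 1.0:
        raise ValueError("mix must be between 0 and 1")
    return [
        [(1.0 - mix) * a + mix * b for a, b in zip(row_a, row_b)]
        for row_a, row_b in zip(frame_a, frame_b)
    ]


def lightning_flash(step, steps):
    """Mix weight for a triggered flash: full at step 0, fading linearly to 0."""
    return max(0.0, 1.0 - step / steps)


# Hypothetical 1x2 frames: an overcast setup and a lightning-lit setup.
overcast = [[0.25, 0.25]]
lightning = [[1.0, 0.75]]

# Frames shown after the operator triggers the flash.
flash_frames = [
    dissolve(overcast, lightning, lightning_flash(step, 4))
    for step in range(5)
]
```

Triggering the flash on a cue, as the DOP did for the hyperspace jump, then amounts to starting the `step` counter at the chosen moment.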

For the vast majority of the final rendering, Leo explained that they used “RenderMan with some Mantra renders for some of the Houdini elements.” ILM used the new RIS technology in RenderMan, which completes the transition from the older REYES approach that worked so well for so long. ILM completed about 1700 shots for the film.

Mohen Leo was on set in London, supervising for part of the principal photography. Interestingly, he had personally worked on Star Wars Episode 1 under John Knoll. On that film Knoll was responsible for the pod race and Leo had been on the pod race effects team focused on the crashes. “I watched so many reference videos with titles such as And they managed to walk away,” he jokingly recalls. He described this latest Star Wars film as “surprisingly less about the technology,” compared to Episode 1, since the team today has much greater technological mastery of the problems of just getting shots done.

Leo is now taking a break after the film and setting himself the task of learning more about game engines and UE4 in particular. “I had thought game engines were all programming – but they are just as creative as films,” Leo says. “And while each gain now in vfx is very hard earned, in games it feels like it was 10 years ago, with big gains possible all the time.” Incidentally, game assets were used in Rogue One. Lucasfilm has a commercial arrangement with Electronic Arts (EA), and ILM used an imported game asset as the Viper Droid in the film.

One aspect that helped link the film’s visuals to the original Star Wars: A New Hope was a key internal lecture given by Dennis Muren to the ILM effects team. Muren explained to the team, some of whom were not born when the first film came out, about the range of movement that was possible for the original film cameras. He also spoke to the team about “the need for some simplicity due to complexity of the original process,” recalled Leo.

This guidance informed how the team produced, for example, the All-Terrain Armored Transport or AT-AT. The walking artillery pieces were slightly different from those in earlier films: these were designed for cargo and had a slightly different range of movement. The original AT-ATs were filmed with the then-revolutionary Go-motion technique, so the Rogue One team not only stayed aware of the camera movements on the walkers but also introduced a slight stop-motion feel to their motion to dovetail these units into the existing Star Wars world.

Hal Hickel, head of ILM’s character animation team, animated the first AT-ACT (All Terrain Armored Cargo Transport) at Scarif himself.

“I just really wanted to do that myself,” says Hickel. “And the first thing I did was look carefully, frame by frame, at the AT-AT from Empire and Jedi. The first version I did was a very close match in terms of timing… how much the feet lift, how they moved. I took that and time stretched it a little bit to slow it down,” he explains.

“It felt right for our Walkers as they are a little bit longer legged than the original Walkers, and a slightly different design,” Hickel says. “But other than timing, the order the feet lift, the mechanisms, the timing – it should be a close match to what Phil Tippett, Jon Berg and Tom St. Amand (and Doug Beswick) did in Empire… I am friends with Phil, and I have not heard from him yet and I am very keen to hear if he feels like we did his team’s work justice. It was very important to me that it feel like the original one but with modern technology.”
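The time stretch Hickel describes, matching the original timing and then slowing it slightly, comes down to scaling keyframe times while leaving the poses untouched. The sketch below assumes keyframes are `(frame, value)` pairs; the foot-lift curve and the 1.25 factor are purely illustrative, since the article does not give the real amount.

```python
def time_stretch(keys, factor, pivot=0.0):
    """Scale keyframe times about `pivot`; a factor above 1 slows the motion.

    keys -- list of (frame, value) pairs (an assumed keyframe layout)
    """
    if factor <= 0:
        raise ValueError("factor must be positive")
    return [(pivot + (frame - pivot) * factor, value) for frame, value in keys]


# Hypothetical foot-lift curve: down, up, down over 24 frames.
foot_lift = [(0, 0.0), (12, 1.0), (24, 0.0)]

# Slow the step by an illustrative 25 percent; poses stay identical.
slower = time_stretch(foot_lift, 1.25)
```

Because only the timing changes, the order in which the feet lift and the height of each step still match the reference animation.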

Read more about the character animation in our upcoming Rogue One part 2 story.

The Third Floor used materials from Doug Chiang and his team whenever possible, whether it was concept art or models. “If designs were not completed yet, we would ask Matt or Gareth for a quick sketch to work from,” explains Howell. “As the production continued, we would get updated models and swap them out in our previs so that it represented the latest and greatest.”

After they finished a sequence, they would rendezvous with Doug Chiang and Neil Lamont so Howell could get a more complete visualization of what the director was wanting in terms of the shoot. “It was interesting to see their reactions to the previs, as it helped answer a lot of their questions regarding how much of the set was actually going to be seen and what needed to be built,” says Howell. “Fortunately for us, no Bothans died getting those plans to the art department!”